Applying Visual Domain Style Transfer and Texture Synthesis Techniques to Audio - Insights and Challenges

Style transfer is a technique for combining two images based on the activations and feature statistics in a deep learning neural network architecture. This paper studies the analogous task in the audio domain and takes a critical look at the problems…

Authors: M. Huzaifah, L. Wyse

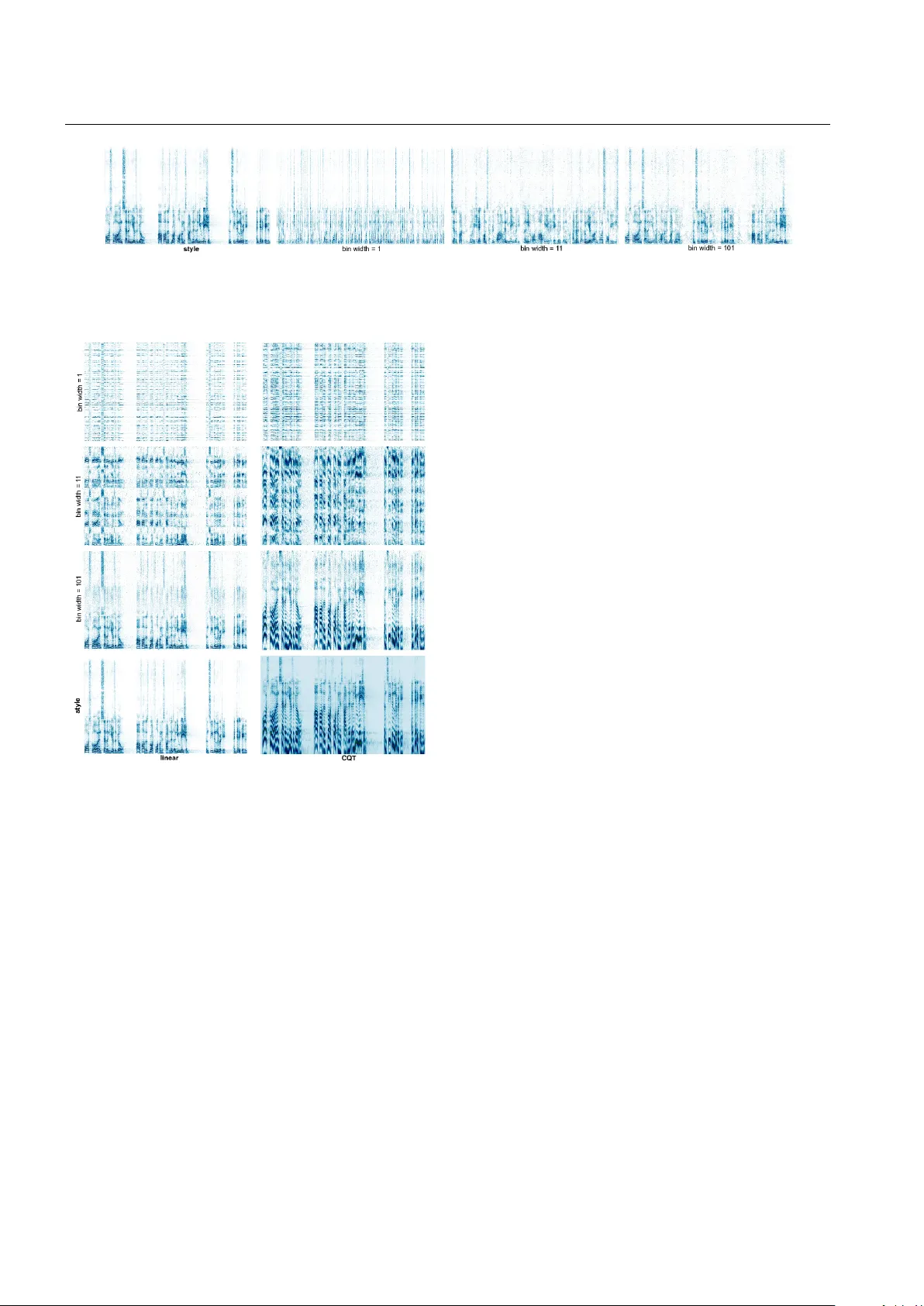

This is a post-peer-review, pre-copyedit version of an article published in Neural Computing and Applications. The final authenticated version is available online at: http://dx.doi.org/10.1007/s00521-019-04053-8

Abstract Style transfer is a technique for combining two images based on the activations and feature statistics in a deep learning neural network architecture. This paper studies the analogous task in the audio domain and takes a critical look at the problems that arise when adapting the original vision-based framework to handle spectrogram representations. We conclude that CNN architectures with features based on 2D representations and convolutions are better suited for visual images than for time-frequency representations of audio. Despite the awkward fit, experiments show that the Gram matrix determined style for audio is more closely aligned with timbral signatures without temporal structure, whereas network layer activity determining audio content seems to capture more of the pitch and rhythmic structures. We shed light on several reasons for the domain differences with illustrative examples. We motivate the use of several types of one-dimensional CNNs that generate results that are better aligned with intuitive notions of audio texture than those based on existing architectures built for images. These ideas also prompt an exploration of audio texture synthesis with architectural variants for extensions to infinite textures, multi-textures, parametric control of receptive fields and the constant-Q transform as an alternative frequency scaling for the spectrogram.

Keywords style transfer · texture synthesis · sound modeling · convolutional neural networks

This research was supported by an NVIDIA Corporation Academic Programs GPU grant.

Muhammad Huzaifah bin Md Shahrin
National University of Singapore, NUS Graduate School for Integrative Sciences and Engineering
ORCID: 0000-0002-7188-3600
E-mail: E0029863@u.nus.edu

Lonce Wyse
National University of Singapore, Communications and New Media Department
ORCID: 0000-0002-9200-1048
E-mail: cnmwll@nus.edu.sg

1 Introduction and related work

If articulating the intuitive distinction between style and content for images is difficult, it is even more so for sound, in particular non-speech sound. The recent use of neural network-derived statistics to describe such perceptual qualities for texture synthesis [14] and style transfer [13] offers a fresh computational outlook on the subject. This paper examines several issues with these image-based techniques when adapting them for the audio domain.

Belonging to a class of problems associated with generative applications, style transfer as a concept within deep learning was popularized with the pioneering work of Gatys et al. [13, 14]. Their core approach leverages the convolutional neural network (CNN) to extract high-level feature representations that correspond to certain perceptual dimensions of an image. In texture synthesis [14] image statistics were extracted from a single reference texture with the objective of generating further examples of the same texture. In style transfer [13] the goal was to simultaneously match the textural properties of one image (as a representation of artistic style) with the visual content of another, thus allowing the original style to be swapped with that of another image while still preserving its overall semantic content.
Both tasks are framed as optimization problems, whereby the statistics of feature maps are used as measures to formulate a loss function between the reference images and the newly generated image. Many authors have extended Gatys’ original approach in terms of speed [22, 35], quality [27, 36], diversity [37] and control [11, 15, 16]. For a more thorough review of the historical developments in image style transfer, we refer the reader to Jing et al. [21] and the individual papers.

To build on existing mechanisms that were developed in the context of the visual domain and carry out the analogous tasks in the audio domain, several exploratory studies have replaced images (in the sense of photographs/pictures) with a time-frequency representation of an audio signal in the form of a spectrogram. 2-dimensional (2D) spectral images have previously been utilized as input representations for CNNs in a range of audio domain tasks including speech recognition [8], environmental sound classification [31] and music information retrieval [9]. In empirical terms the pairing of spectrograms with CNNs has demonstrated consistently superior pattern learning ability and discriminative power over more traditional machine learning methods such as the hidden Markov model (HMM), and lossy representations like Mel-frequency cepstral coefficients (MFCCs) [24].

There have been several preliminary attempts at audio style transfer with varying experimental focus. Ulyanov and Lebedev, in a blog post [34], outlined several recommendations for audio style transfer including using shallow, random networks instead of the deep, pretrained networks common for the image task.
Importantly, while the spectrogram is a 2D representation, in Ulyanov’s framework it is still processed as a 1D signal by replacing the colour channel in the image case with the spectrogram’s frequency dimension, therefore convolving only across the time dimension. Subsequent work in this field provided a more thorough treatment of the network’s effect on the derived statistics. Wyse [40] looked at network pretraining and input initialization, while Grinstein et al. [18] focused on the impact of different network architectures on style transfer. In the latter study, the deeper networks, specifically VGG-19 and SoundNet, were contrasted with Ulyanov’s shallow-random network and a hand-crafted auditory processing model based upon McDermott and Simoncelli’s earlier audio texture work [26]. They concluded that the shallow-random network and auditory model showed more promising results than the deeper models. Verma [39], in contrast to the others, maintained the 2D convolutions and the pretrained network. They found that small convolution kernels are effective for signals with steady-state pitches for the reference style, but provide a limited number of examples which are only presented visually, making interpretation difficult. There was also an attempt to isolate prosodic speech using the style transfer approach by Perez, Proctor and Jain [28], although only low-level textural properties of the voice were successfully transferred.

While all the related works studied certain aspects of the problem, none go into detail on the challenges posed by the nature of sound and how it is represented, especially in relation to what is essentially a vision-inspired model in the CNN. Hence, the focus of this paper is not a presentation of state-of-the-art audio style transfer but an analysis of the issues involved in adopting existing style transfer and texture synthesis mechanisms for audio.
In paintings, Gatys’ style formulation preserves brush strokes, including, to a degree, texture, direction, and colour information, leading up to larger spatial scale motifs (such as the swirls in the sky in The Starry Night, see Fig. 1 in [13]) as the receptive field grows larger deeper in the network. How these style concepts translate to style in the audio domain is not straightforward and forms part of the analysis here.

Our main contributions are threefold, and relate to the overall goal of elucidating the possibilities and limitations of existing vision-focused style transfer techniques when applied to the audio domain. Firstly, we distil the issues involved in directly applying CNN-based style transfer to audio. The problems highlighted serve as a basis for the improvements suggested here and elsewhere. Secondly, we provide further insight into possible characterizations of style and content within a style transfer framework given a spectrogram input, as well as its connection to audio texture. Thirdly, we strengthen the link to audio textures by developing a novel use of descriptive statistics taken from deep neural networks for audio texture generation.

The rest of the paper is organized as follows: in the first half we discuss the theoretical issues that arise when applying the CNN architecture, largely designed to process 2D images, to the domain of audio. The second half documents several experiments that further expound the issues raised in the first half. Here we also show, through an exploration of the relevant architectures, how the style transfer framework may in fact align nicely with the conventional description of audio texture.

To further illustrate the concepts introduced, audio samples are interspersed throughout the paper.
They can be heard, together with additional examples, on the companion website at the following address: http://animatedsound.com/research/2018.02_texturecnn/

2 The style transfer algorithm

Given a reference content image $c$ and a reference style image $s$, the aim of style transfer is to synthesize a new image $x$ matching the content of $c$ and the style features drawn from $s$. Gatys et al. [13] provide precise definitions of content and style for use in the neural network models that produce images that correlate remarkably well with our intuitive understanding of these terms. Style is defined in terms of a Gram matrix (Eq. 1) used to compute feature correlations as second-order statistics for a given input.

We start by reshaping the tensor representing the set of filter responses $\phi$ extracted in layer $n$ of a CNN to $(B H_n W_n, C_n)$, where $B$, $H_n$, $W_n$ and $C_n$ are batch size, height, width and number of distinct filters respectively. The Gram matrix is then obtained by taking the inner product of the $i$th and $j$th feature maps over all positions $k$, additionally normalizing by $U_n = B H_n W_n$.

$$G = \frac{1}{U_n} \phi^T \phi, \qquad G_{ij} = \frac{1}{U_n} \sum_k \phi_{ki} \phi_{kj} \tag{1}$$

Given a set of style layers $S$, the contribution of each layer $n$ to the style loss function is defined as the squared Frobenius norm of the difference between the style representations of the original style image and the generated image.

$$L_{style}(s, x) = \sum_{n \in S} \frac{1}{C_n} \lVert G(\phi_n(s)) - G(\phi_n(x)) \rVert_F^2 \tag{2}$$

In contrast, content features are taken directly from the filter responses, yielding the corresponding content loss function between the content representations of the original content image and the generated image in content layers $C$.
$$L_{content}(c, x) = \sum_{m \in C} \frac{1}{C_m} \lVert \phi_m(c) - \phi_m(x) \rVert_2^2 \tag{3}$$

The total loss used to drive the updating of the hybrid image is a linear combination of the style and content loss functions at the desired layers $S$ and $C$ of the CNN. Starting from an input image $x$ of random noise (or alternatively a clone of the content image $c$), this objective function is then minimized by gradually changing $x$ based on gradient descent with backpropagation through a CNN with fixed weights to obtain an image of similar textural properties as $s$ mixed with the high-level visual content of $c$. The balance of influence between content and style representations can be controlled by altering the weighting hyperparameters $\alpha$ and $\beta$ respectively. $\alpha$ can be set to 0 to completely remove the contribution of content (alternatively, the content operations can be omitted to save compute time), resulting in pure texture synthesis (in which case the input image is initialized with random noise).

In practice we also introduce an additional regularization term in the loss to constrain the optimization of $x$ to pixel values between 0 and 1. Preliminary experiments showed that imposing a hard bound through clipping the spectrogram values resulted in the saturation of many pixels at 0 or 1, negatively impacting the perceived quality of the generated sounds despite a similar approach working reasonably well for images. Instead, the regularization term penalizes values to the extent that they are outside the interval $(0, 1)$, and is weighted by $\gamma$.
$$U = \max\{x - \mathbf{1}, \mathbf{0}\} \tag{4}$$

$$D = \max\{\mathbf{0} - x, \mathbf{0}\} \tag{5}$$

where $\mathbf{0}$ and $\mathbf{1}$ are matrices of 0s and 1s the same size as $x$.

$$x = \operatorname*{argmin}_{x} L_{total}(c, s, x) = \operatorname*{argmin}_{x} \; \alpha L_{content} + \beta L_{style} + \gamma \lVert U + D \rVert_2 \tag{6}$$

3 Stylization with spectrograms

3.1 The spectrogram representation

For many classification tasks as well as for style transfer, images are represented as a 2D array of pixel values with 3 channels (red, green and blue) for colour or 1 channel for greyscale. Convolutional neural networks have achieved their well-known level of performance on tasks in the visual domain based on this input representation. The traditional 2D-CNN working on visual tasks uses kernels that span a small number of pixels in both spatial dimensions, and completely across the channel dimension. One of the defining characteristics of convolutional networks is that kernels at different spatial locations in each layer share weights. This architecture drastically reduces the number of parameters compared to fully connected layers, making networks more efficient and less prone to overfitting. It also aligns with the intuition that image objects have translational invariance, that is, that the appearance of objects does not change with their spatial location. In this sense, the CNN architecture is domain-dependent.

As a starting point for using existing style-transfer machinery to explore audio style transfer, audio can be represented as a 2D image through a variety of transformations of the time domain signal. One such representation is the spectrogram, which is produced by taking a sequence of overlapping frames from the raw audio sample stream, performing a discrete Fourier transform on each, and then taking the magnitude of the complex number in each frequency bin.
$$X(n, k) = \sum_{m=0}^{L-1} x(m)\, w(n - m)\, e^{-i 2 \pi \frac{k}{N} m} \tag{7}$$

Explicitly, the discrete Fourier transform with a sliding window function $w(n)$ of length $L$ is applied to overlapping segments of the signal $x$ as shown in Eq. 7. The overall process is known as the short-time Fourier transform (STFT).

The spectrogram consists of a time-ordered sequence of magnitude vectors, with frequency along the y-axis and time along the x-axis, an example of which is seen in Fig. 1.

Fig. 1 Reference style image $s$ in the form of a spectrogram (left) and synthesized texture $x$ processed using a 2D-CNN model (right), matching the style statistics of $s$. Texture synthesis done with a 2D convolutional kernel results in visual patterns that are similar to those for image style transfer but does not make much perceptual sense in the audio space, as can be heard in the accompanying audio clip. This study makes several suggestions to better align style transfer to the audio domain.

Where colour images have a separate channel for each colour, this audio representation has just one for the magnitudes of each time-frequency location. This audio-as-image representation can be used directly with the same architectures that have been developed for image classification and style transfer, and has been shown to be useful for various purposes in several previous studies [8, 9, 31, 34, 40].

The magnitude spectrogram does not contain phase information. To invert magnitude spectra, reasonable phases for each time-frequency point must be estimated, but there are well-known techniques for doing this that produce reasonably high-quality audio reconstructions [2, 17].

3.2 Objects, Content, and Style

What we mean by content and style is necessarily domain dependent. In the visual domain, content is commonly associated with the identity of an object. The identity of an object is invariant to the different appearances it may take.
In the audio domain, psychologists and auditory physiologists speak of “auditory objects” that are groupings of information across time and frequency which may or may not correspond to a physical source [3]. The invariance of such objects across different sonic manifestations is a central concept in both domains. However, the relationship between sound and an “object” is more tenuous than it is for images. Sounds are more closely tied to events, sonic characteristics are the result of the interaction between multiple objects and their physical characteristics, and sounds often carry less information about the identity of their physical sources than images.

When we consider abstract representations where sense-making is based on the interplay of relationships between patterns rather than on references to real-world objects, then the distinction between content and style becomes even more fluid. The vast majority of images used in the literature on style transfer are not abstract, but are of identifiable worldly objects and scenes. On the other hand, abstract material, like music, occupies a huge part of our sonic experience, and this requires a different understanding of content. The question for this paper is, what are the domain-specific implications for a computational architecture for separating style and content?

There is a conventional use of the term “style” in music that, to a first approximation, refers to everything except the sequence of intervals between the pitches of notes. We can play a piece of music slow or fast, on a piano or with a string quartet, without changing the identity of the piece. If we consider content to be auditory objects corresponding to time-frequency structures of pitches, then how appropriate are 2D CNNs and the Gram matrix metric for separating style from content, and how does the representation of audio interact with the computational architecture?
“Pitch” is a subjective quality we associate with sounds such as notes played on a musical instrument or spoken vowels. To a first approximation, pitched sounds have energy around integer multiples (“harmonics”) of a fundamental frequency. The different components are perceptually grouped and heard as a single source rather than separately. The relative amplitude of the harmonics contributes to the “timbre” of the sound, which allows us to distinguish between, for example, different instruments producing the same note or different vowels sung at the same pitch. For this reason, we can think of a pitched sound as an auditory “object” that has characteristics including pitch, loudness and timbre. There are fundamental differences between the way these auditory objects are represented in spectrograms and the way physical objects are represented in visual images that, if unaccounted for, may impact the efficacy of spectrograms as a viable data representation for CNN-based tasks.

Objects in images tend to be represented by contiguous pixels, whereas in the audio domain the energy is in general not contiguous in the frequency dimension. CNN kernels learn patterns within continuous receptive fields, which may therefore be less useful in the audio domain. Furthermore, in the visual domain, individual image pixels tend to be associated with one and only one object, and transparency is rare. In the audio domain, transparency is the norm and sounds emanating from different physical objects commonly overlap in frequency. The hearing brain routinely assigns energy from one time-frequency location to multiple objects in a process known as “duplex perception” [30].

One of the implications of the distinctions between the 2D auditory and visual representations for neural network modeling appears in how we approach data augmentation.
Dilations, shifts, rotations, and mirroring operations are common techniques in the visual domain because we do not consider the operations to alter the class of the objects. These operations are clearly domain-specific since they can completely alter how a sound is perceived when performed in the time and/or frequency domains. Such operations when done in the Fourier space may inadvertently destroy the underlying time domain information, with the recovered audio often losing any discernible structure relating to the original.

Perhaps the most pertinent issue in using spectrograms in place of images is the inherent asymmetry of the axes. For a linear-frequency spectrogram, translational invariance for a sound only holds true in the x (time) dimension, but not in the y (frequency) dimension. Raising the pitch of a sound not only shifts the fundamental frequency but also changes the spatial extent of its multiplicatively related harmonics. In other words, linearly moving a section of the frequency spectrum along the y-axis would change not only its pitch but also affect its timbre, thereby altering the original characteristics of the sound. In contrast, shifting a sound in time does not change its perceptual identity. Because of the difference between the semantics of space versus time and frequency, as well as the asymmetry of the x and y-axes in the audio representation, we might expect a 2D kernel with a local receptive field (like those used in the natural image domain) that treats both frequency and time in the same way to be problematic when applied to a spectrogram representation (see Fig. 1).

To address this problem, an adjustment can be made to either the way frequency is scaled or the way the model architecture treats the dimensions.
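Before turning to those adjustments, the asymmetry of the frequency axis can be made concrete with a small numerical sketch. It is an illustration only and not part of the experiments: the fundamentals of 220 Hz and 440 Hz and the helper names `harmonics` and `gaps` are assumptions chosen for the example. On a linear axis the gap between neighbouring harmonics equals the fundamental, so raising the pitch by an octave doubles the spacing, whereas on a logarithmic axis the gaps depend only on the harmonic number.

```python
import math


def harmonics(f0, count):
    """Frequencies (Hz) of the first `count` harmonics of fundamental f0."""
    return [f0 * k for k in range(1, count + 1)]


def gaps(values):
    """Differences between consecutive entries of a list."""
    return [b - a for a, b in zip(values, values[1:])]


low = harmonics(220.0, 4)    # hypothetical pitched sound at 220 Hz
high = harmonics(440.0, 4)   # the same sound raised by one octave

# Linear frequency axis: the spacing of the harmonic pattern changes with pitch.
linear_low, linear_high = gaps(low), gaps(high)

# Log frequency axis (in octaves): the pattern keeps its shape and only shifts.
log_low = gaps([math.log2(f) for f in low])
log_high = gaps([math.log2(f) for f in high])

print("linear gaps:", linear_low, linear_high)
print("log2 gaps:  ", [round(g, 3) for g in log_low],
      [round(g, 3) for g in log_high])
```

A kernel with a fixed extent along a linear frequency axis would therefore see a differently shaped pattern for each pitch, while along a logarithmic axis the same pattern reappears at a different position.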
One possible adjustment is to use a log-frequency scaling such as the Mel-frequency scale or the constant-Q transform (CQT), which has been empirically shown by Huzaifah [20] to improve classification performance. This is at least suggestive of the influence of input scaling on the pattern learning ability of the CNN, of which translational invariance is a central idea. The CQT is of particular interest as it preserves harmonic structure, keeping the relative positions of the harmonics the same even when the fundamental frequency is changed, preserving some degree of invariance in translation. Sounds that have the same relative harmonic amplitude pattern would then activate the same set of filters independent of any shift in the pitch of the sound.

Alternatively, we can alter the architecture typically used in visual applications. Recent work in the audio domain has turned to the use of 1D-CNNs [34], where we re-orientate the axes: the spectrogram’s frequency components are moved into the channel dimension, and are thereby treated analogously to colour in visual applications. Of course, the number of audio channels is thus much greater than the 3 typically used for colour. The time axis remains as the width as in the 2D-CNN, and is now treated as the 1-dimensional “spatial” input to the network (Fig. 2, right). As in image applications, the convolution kernel still spans the entire channel dimension and has a limited extent of several pixels along the width. We take advantage of this unequal treatment of the dimensions to allow the CNN to learn patterns along the full spectrum that are still local in time. Moreover, by allowing kernel shifts and pooling operations only over the time dimension, this re-orientation of the axes reinforces the idea of kernels capturing translational invariance over time but not over frequency.
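The re-orientation of the axes can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the network used in the experiments: the function names `conv1d_valid`, `transpose` and `toy_spectrogram`, the 3-bin toy spectrogram and the kernel values are all hypothetical. The kernel covers every channel at once and slides along the width only, so delaying an event in time simply delays the response.

```python
def conv1d_valid(signal, kernel):
    """Cross-correlate a [channels][width] input with a [channels][k] kernel.

    The kernel spans all channels and slides along the width only, giving
    one feature map of length width - k + 1 (no padding).
    """
    width = len(signal[0])
    k = len(kernel[0])
    return [
        sum(
            signal[c][t + j] * kernel[c][j]
            for c in range(len(signal))
            for j in range(k)
        )
        for t in range(width - k + 1)
    ]


def transpose(matrix):
    """Swap the two axes of a rectangular list of lists."""
    return [list(row) for row in zip(*matrix)]


def toy_spectrogram(event_frame, n_frames=7):
    """3 frequency bins x n_frames, silent except for one 'event' frame."""
    event = [1, 2, 3]  # hypothetical magnitudes in the three bins
    return [[event[f] if t == event_frame else 0 for t in range(n_frames)]
            for f in range(3)]


kernel = [[1, 0, -1], [2, 1, 0], [0, 1, 1]]  # arbitrary: 3 bins x 3 frames

# Frequency bins as channels: the output is indexed by time.
early = conv1d_valid(toy_spectrogram(2), kernel)
late = conv1d_valid(toy_spectrogram(3), kernel)
print(early, late)  # the response to the later event is the same, one frame later

# Time bins as channels: transpose first, so the kernel slides over frequency.
by_freq = transpose(toy_spectrogram(2))       # 7 time bins x 3 frequency bins
freq_kernel = [[1, -1] for _ in range(7)]     # arbitrary: 7 channels x 2 bins
print(conv1d_valid(by_freq, freq_kernel))     # indexed by frequency position
```

In a deep learning framework the same arrangement would typically be obtained from a 1D convolution layer whose input channel count equals the number of frequency bins.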
Equally, one can think of the 1D-CNN arrangement as a 2D convolutional kernel that spans the entire frequency spectrum, in the manner of “valid” padding along that edge, while being shifted only across the time axis.

Interestingly, there is also the possibility of transposing the two dimensions, and instead of frequency we can treat time bins as channels. Visually this operation can be pictured as a 90° rotation of the spectrogram about an axis perpendicular to its plane in the 1D-CNN in Fig. 2. In this representation, the width over which the kernel slides is now occupied by frequency. This arrangement was not designed to mitigate any issues with the audio representation, and in fact undoes the earlier advantages of the 1D-CNN when it comes to imposing translational invariance only over time (and not frequency), but we use it to explore additional possibilities in texture synthesis. Having the global temporal structure preserved in the channels also allows us to isolate any effects on the frequencies due to style transfer. This second model was therefore adopted for a comparison between linear-scaled STFT and CQT input spectrograms¹ that have different treatment for frequencies but do not alter things temporally.

4 Concerning the Gram matrix and audio textures

The empirical observation that deeper levels in the network hierarchy capture higher level semantic content motivated the use of a content loss based directly on these features in Mahendran [25] and later, Gatys [13]. Visualization experiments to help interpret the learned weights have shown that individual neurons, and indeed whole layers, evolve during training to specialize in picking out edges, colour and other patterns, leading up to more complex structures.

Similar attempts have been made to interpret spectrogram inputs to the network. Choi et al.
[4] reconstructed an audio signal from “deconvolved” [41] spectrograms (actually a visualization of the network activations in a layer obtained through strided convolutions and unpooling) by inverting their magnitude spectra. They found that filters in their trained networks were responding as onset detectors and harmonic frequency selectors in the shallow layers that are analogous to low-level visual features such as vertical and horizontal edge detectors in image recognition. For the 1D-CNN used in this paper, convolution kernels have temporal extent, but just as with image processing networks, the kernels extend along the entirety of the channel depth. As a result, the spectral information occupying this dimension is left mostly intact in feature space. This makes the derived features more easily interpretable, especially in the shallower layers.

¹ See Section 5.3.4, Frequency scaling.

Fig. 2 2D (left) and 1D (right) variations of the 3-layer CNN that was used in this study. Only convolutional layers are shown, with the kernels depicted in blue and the numbers representing the size of each dimension. Note the kernel is shifted only across width in the 1D case.

As for style, Gatys observed that it can be interpreted as a visual texture that is characterised by global homogeneity and regularity but is variable and random at a more local level [6]. As first conjectured by Julesz in 1962 [23], low order statistics are generally sufficient to parametrically capture these properties. In Gatys’ formulation of the style transfer problem, this takes the form of a Gram matrix of feature representations provided by the network.
In the standard orientation of a 1D-CNN with frequency bins as channels, the Gram matrix operation transforms a matrix of feature maps indexed by time into a square matrix of correlations, with each correlation value between a pair of feature maps capturing information on features that tend to be activated together. Further, each entry of the Gram matrix is the sum total of a feature correlation pair across all time. This folding in of the time dimension results in discarding information relating to specific time bins (just as this metric of style discards global spatial structure in visual images). We are thus left with a global summary of the relationship between feature maps abstracted from any localized point of time. We would therefore expect the optimization over style statistics to generally distribute random rearrangements of sonic patterns or events over time. In this case, full-range spectral features over short (kernel-length) time spans are texture elements that are statistically distributed through time to create a texture.

The second variant of the 1D-CNN, referred to in the preceding section, has time bins as channels. In this orientation feature maps would largely retain temporal information while being indexed by frequency. This creates a different kind of texture model where features localized in frequency but with long-term temporal features are statistically distributed across frequency to create a texture.

With this insight, parallels can be drawn to the conventional idea of audio textures which are, like their visual namesakes, consistent at some scale, that scale being some duration in time for the first case and some range in frequency for the second. Informally, audio textures are sounds that have stable characteristics within an adequately large window of time. Common examples include fire crackling, waves crashing and birds chirping.
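The claim made earlier in this section, that the Gram matrix discards information about specific time bins, can be checked numerically. The following is a minimal sketch rather than the implementation used in the experiments: the function name `gram_matrix`, the random integer activations and the sizes (50 time frames, 4 feature maps) are assumptions for illustration. It computes Eq. 1 for the 1D case and confirms that scrambling the order of the time frames leaves the statistics unchanged.

```python
import random


def gram_matrix(features):
    """Gram matrix of a [time][channel] array of activations.

    Entry (i, j) is the product of channels i and j summed over all time
    frames and divided by the number of frames (Eq. 1 with B = H = 1, W = T).
    """
    n_frames = len(features)
    n_channels = len(features[0])
    return [
        [sum(frame[i] * frame[j] for frame in features) / n_frames
         for j in range(n_channels)]
        for i in range(n_channels)
    ]


rng = random.Random(0)
# Hypothetical activations, integer-valued so that the sums are exact.
features = [[rng.randint(0, 9) for _ in range(4)] for _ in range(50)]

shuffled = features[:]
rng.shuffle(shuffled)  # scramble the temporal order of the frames

same_statistics = gram_matrix(features) == gram_matrix(shuffled)
print(same_statistics)
```

Any two feature sequences that are rearrangements of one another in time thus incur zero style loss with respect to each other, which is consistent with the expectation that optimizing over style statistics redistributes short sonic events over time.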
There have been a variety of approaches to modeling audio textures, including those by Athineos and Ellis [1], Dubnov et al. [10], Hoskinson and Pai [19], and Schwarz and Schnell [32]. These texture models are all characterized by the distinction between processes at different time scales, one with fast changes and the other static or slowly varying, and provide representations that can support perceptually similar re-synthesis, temporal extension, and interactive control over audio characteristics.

Neural networks based on style transfer mechanisms from the visual [13, 14] and audio [34] domains offer a new approach to texture modeling. Under this paradigm, texture corresponds to the style definition, while content is trivial given the absence of any large-scale temporal structure. We will explore style transfer architectures further for additional insights and propose several extensions to the canonical system to allow for greater flexibility and a degree of expressive control over the audio texture output.

5 Experiments

The rest of the paper documents experiments that probe the issues discussed previously, and show both the working aspects and limitations of CNN-based audio style transfer (Section 5.2) and texture synthesis (Section 5.3).

5.1 Technical details

To utilize the feature space learned by a pretrained CNN, many previous studies have used VGG-19 [33] trained on ImageNet [7]. Learned filters were initially noted by Gatys to be important to the success of texture generation [14] and by extension style transfer. However, this assessment was revised in a more recent work by Ustyuzhaninov, Brendel, Gatys and Bethge [38] that suggests that random, shallow networks may work just as well in many cases.
Network weights certainly have some influence on the extracted features and perceptual quality of synthesis, as Wyse [40] reported that audible artefacts were introduced when using an image-trained network (i.e. VGG-19) for the audio style transfer task. To further probe this effect in the audio domain, we compare a network with random weights against one trained on the audio-based ESC-50 dataset [29].

For the audio-trained network, ESC-50 audio clips were pre-processed prior to training as follows: all clips were first sampled at 22050 Hz and clipped/padded to a standard duration of 5 seconds. Spectrograms were generated via short-time Fourier transform (STFT), using a window length of 1024 samples (∼46.4 ms) and a hop size of 256 samples (∼11.6 ms). STFT magnitudes were normalized through a natural log function, log(1 + |magnitude|), and then scaled to (0, 255) to be saved as 8-bit greyscale PNG images. The samples used for style transfer underwent the same processing without the initial clipping/padding.

For a comparison, a second dataset was prepared containing CQT-scaled spectrograms that were converted from the previous linear-scaled STFT spectrograms using the algorithm adapted from Ellis [12], which is invertible, though mildly lossy. Invertibility is required after the style transfer process to recover audio from the new image using a variant of the Griffin-Lim algorithm [2, 17].

Fig. 3 Overview of the network layers used in this study.

The network architecture consists of 3 stacks; within each stack a convolutional layer is followed in sequence by a batch normalization layer, ReLU and max pooling (Fig. 3). Our initial investigations and prior work by Ulyanov and Lebedev [34], and Wyse [40] influenced the design of the CNN in an important way. A very large channel depth was required to compensate for the reduction in network parameters for a 1D-CNN, and this generally led to superior style transfer results.
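How the parameter count of a 1D convolutional stack grows with channel depth can be sketched with simple arithmetic. The figures below are illustrative assumptions rather than reported values: the 513 input channels assume an FFT size equal to the 1024-sample window, bias terms are assumed present, batch normalization parameters are ignored, and the depths of 64 and 512 are hypothetical comparison points alongside the 4096 channels and kernel size of 11 used for this network. The helper names `conv1d_params` and `stack_params` are likewise made up for the example.

```python
def conv1d_params(in_channels, out_channels, kernel_size, bias=True):
    """Number of learnable parameters in one 1D convolutional layer."""
    weights = in_channels * out_channels * kernel_size
    return weights + (out_channels if bias else 0)


def stack_params(in_channels, hidden_channels, kernel_size, n_layers=3):
    """Total convolution parameters for n_layers layers of equal width."""
    total = conv1d_params(in_channels, hidden_channels, kernel_size)
    for _ in range(n_layers - 1):
        total += conv1d_params(hidden_channels, hidden_channels, kernel_size)
    return total


# Assumption: a 1024-sample window with an equal FFT size gives 513 bins.
freq_bins = 1024 // 2 + 1

for hidden in (64, 512, 4096):  # 64 and 512 are hypothetical comparisons
    print(hidden, stack_params(freq_bins, hidden, kernel_size=11))
```

Because the layers after the first have as many input as output channels, their parameter count grows with the square of the channel depth, which is why a wide 1D network can reach a parameter budget comparable to that of a 2D network.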
This is corroborated by Gatys in [14], in which he demonstrated that increasing the number of parameters led to better quality synthesized textures. In each of the convolutional layers we used 4096 channels, much larger than the typical channel depth in image processing networks. Meanwhile, the number of channels in the 2D-CNN was heuristically chosen to roughly match the number of network parameters in the 1D-CNN and hence was much smaller (see Fig. 2).

In addition to the 2D-CNN, two variants of the 1D-CNN were used in the experiments: 1D with frequency bins as channels and 1D with time bins as channels. Unless otherwise stated, all experiments were done with the 1D-CNN with frequency bins as channels and a kernel size of 11. Also, for all experiments (footnote 2), C = relu3 and S = relu1, relu2. Further information on hyperparameters for the classification of ESC-50 and for style transfer is provided in the Appendix. A PyTorch implementation of audio style transfer as used in our experiments is also available on GitHub (footnote 3).

5.2 Audio style transfer

We attempt audio style transfer with the 1D-CNN described previously, and characterize the output in terms of its perceptual properties and the CNN architecture. Several technical aspects that affect the result are also investigated.

5.2.1 Examples of audio style transfer

Several examples of audio style transfer were generated, and can be heard on the companion website. The content sound (English male speech) was stylized with a variety of different audio clips including Japanese female speech, piano (“River flows in you”), a crowing rooster, and a section of “Imperial March”.

Though the style examples came from diverse sources (speech, music and environmental sound) and so had very different audio properties, the style transfer algorithm processed them similarly. “Content” was observed to mainly preserve sonic objects (pitch), along with their rhythmic structure.
Meanwhile, “style” mostly manifests itself as timbre signatures without clear temporal structure. The mix seemed to be most strongly activated at places where the dynamics of the style and content references coincided. Furthermore, even though the speech does take on the timbre of the style reference, content and style features are not disentangled completely, and the original voice can still be faintly heard in the background. For a contrast, we switched to using piano as the content and speech as the style for synthesis. As before, the interplay between style and content characterizations can be clearly heard. As “style”, the human voice follows the rhythm of the piano content, without actually forming coherent words. The original piano content was however more prominent than in the previous speech examples and so may sound less convincing as an example of style transfer to the reader.

Footnote 2: When referring to layers, the nomenclature used in this paper is as follows: relu1 refers to the ReLU layer within the 1st stack, conv3 refers to the convolutional layer in the 3rd stack, etc.
Footnote 3: https://github.com/muhdhuz/Audio_NeuralStyle

Fig. 4 The columns show different relative weightings between style and content given by β/α, with s on the left and c on the right. Samples can be heard here.

While audio style transfer has been shown here to succeed on a wide variety of sources, it should be noted that individual tuning was required to obtain a subjectively “good” balance of content and audio features. It is perhaps the case that style and content references with similar feature activations would lead to smoother optimization and a better meshing during style transfer, but this would limit workable content/style combinations in a way that is not necessary in visual style transfer.
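
The balance between content and style discussed above can be made concrete with a small sketch. The code below is not the implementation used in the experiments. It assumes the standard formulation in which the objective is a weighted sum of a content term (weight α) and a style term (weight β), with the content term taken as a squared Frobenius distance between feature activations and the style term as the same distance between position-averaged Gram matrices. For brevity it uses one toy feature map for both terms and leaves out the γ regularization term; the names `gram`, `sq_dist` and `total_loss` and all numerical values are illustrative only.

```python
def gram(features):
    """Gram matrix of a (positions x channels) feature map, averaged over positions."""
    n_pos, n_ch = len(features), len(features[0])
    return [[sum(row[i] * row[j] for row in features) / n_pos
             for j in range(n_ch)]
            for i in range(n_ch)]


def sq_dist(a, b):
    """Squared Frobenius distance between two matrices of equal shape."""
    return sum((x - y) ** 2 for ra, rb in zip(a, b) for x, y in zip(ra, rb))


def total_loss(fx, fc, fs, alpha, beta):
    """Assumed objective: alpha * content term + beta * style term."""
    content_term = sq_dist(fx, fc)              # direct feature activations
    style_term = sq_dist(gram(fx), gram(fs))    # feature correlations
    return alpha * content_term + beta * style_term


# Toy feature maps (rows = time steps, columns = channels); values are made up.
f_content = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
f_style = [[2.0, 0.0], [2.0, 0.0]]

# Starting from a copy of the content, only the style term contributes,
# so the initial objective scales directly with beta.
for beta in (1e5, 1e7, 1e9):
    print(beta, total_loss(f_content, f_content, f_style, alpha=1.0, beta=beta))
```

Note that the style feature map here has a different number of time steps from the content map; the Gram matrices are still comparable because their size depends only on the number of channels.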
5.2.2 Varying influence of style and content

The preceding examples were synthesized with careful tuning of the weighting hyperparameters to obtain perceptually satisfying examples of style transfer. To further illustrate the effect of mixing different amounts of style and content features, we progressively varied the ratio of weighting hyperparameters α and β as shown in Fig. 4. The content target here was speech, while the style target was a crowing rooster.

For β/α = 1e5, the original speech can be generally heard clearly in the presence of some minor distortions and audio artefacts. From the spectrogram it becomes clear that the distortions originate from the faint smearing of the dominant frequencies in the reference style across time. The mixing of the dominant frequencies for both the speech and rooster sounds becomes more apparent when β/α = 1e7. At this ratio, the original speech can still be heard albeit with less prominence, while it starts to take on the timbre of the rooster. Further increasing the influence of style at β/α = 1e9 results in the output completely taking on the timbre of the rooster. It is interesting to note that the rhythm of the speech at this ratio is still retained.

Using the characterization of style as timbre and content as pitch and rhythm as before, we conclude that the style transfer at β/α = 1e9 generates the best result among the examples here, although more fine tuning (as was the case in the preceding section) would arguably generate better results.

5.2.3 Network weights and input initialization

Stylized spectrograms were generated with different input conditions and network weights as shown in Fig. 6. The synthesized image x was either initialized as random noise or a clone of the content reference spectrogram c. For stylization with a trained network, the 1D-CNN was pretrained for the classification of the ESC-50 dataset for 30 epochs.
The content target was speech, while the style target was “Imperial March” (Fig. 5).

Fig. 5 Reference content image c (left) and reference style image s (right). Listen to them here.

Fig. 6 Synthesized hybrid image x with different input and weight conditions. Clockwise from top left: (1) With random weights and initialization, the speech is clear with the style timbre sitting slightly more recessed in the background. (2) It was still fairly problematic to get good results for trained weights and a random input. Hints of the associated style and content timbres could be picked up and seem well integrated but are very noisy. The output also loses any clear long term temporal structure. (3) Trained weights and input content generated a sample sound fairly similar to (1) with a greater presence of noise and artefacts. (4) Random weights plus input content seem to result in the most integrated features of both style and content.

Among the four variations tested, the combination of random network weights and content initialization brought about the most stable style transfer. Trained weights generated much higher content and style losses during the optimization process as compared to random weights, regardless of whether the sounds used for style transfer were previously exposed to the network as part of the ESC-50 dataset. A possible reason for this observation may be the greater discriminative power of a network pretrained for classification, resulting in a high Frobenius norm between features derived from different inputs. The high initial losses with trained weights had a negative impact on the optimization, leading it to fall into a local minimum (Fig. 7) and an ensuing noisy-sounding synthesis from the network (Fig. 6, right).
Indeed, a more aggressive regularization penalty in the form of γ had to be used for the optimization to proceed smoothly. However, the optimization took much longer to converge.

As expected, initializing with a clone of the content reference spectrogram resulted in very strong content features in the output, with the style features normally less pronounced (Fig. 6, bottom). In contrast, starting from a noisy image yielded more mixed results. In Fig. 6 (top left), the output sounds more like a mix of content and style rather than stylized content. With pretraining (Fig. 6, top right), the output sounds heavily distorted on both the content and style.

Interestingly, with some hyperparameter tuning (α, β and γ), all four variants, at least by visual inspection of the spectrograms, look to be plausible results of style transfer. All outputs generally display the style frequencies overlaying those of the content, while still retaining the onset markers of the content sound. Nevertheless, not all of them are of the same sonic quality, and listening to the corresponding audio clips shows a significant difference between them. Using a random network with content initialization consistently rendered more integrated style and content features than the others. The observation that the expectations of style transfer in images and audio do not always align may be indicative of a greater sensitivity in the audio domain to what constitutes “successful” style transfer in comparison to vision, suggesting that the manifold of such examples exists in a narrower range of data space than for visual data.

Overall, our results show that trained weights are not essential for successful style transfer, and the particular combination of hyperparameters had a greater impact on the output, therefore validating Gatys’ revised conclusion in [38].
On the other hand, random weights seemed to be more tolerant to changes in the hyperparameters, as a wider range and more combinations of hyperparameters led to successful style transfer. Samples generated from random weights were also generally found to be cleaner in comparison to trained weights, which slightly coloured the output with audio artefacts that do not appear to be from the reference style or content. This final result is in accordance with Ulyanov [34] and Grinstein et al. [18], who observed that random networks worked at least as well as or better than pretrained networks for audio style transfer, suggesting that this behaviour may be even more pronounced for the audio domain.

5.3 Audio texture synthesis

The previous sections demonstrate how a degree of style transfer is possible with the CNN-based framework, although limitations exist in obtaining expressions of audio content and style as lucid as those seen in image work. However, if we leave the task of disentangling and recombining the divergent aspects of content and style behind, and consider only a single perceptual factor (i.e. style as texture), we find that the Gram matrix description of audio texture is a fairly representative summary statistic when applied to either one of frequency or time. In this section, several aspects of audio texture synthesis by the style transfer schema are discussed further.

5.3.1 Infinite textures

Important to the versatility of the technique for texture synthesis is the possibility of continuous generation, i.e. infinite synthesis. This is achieved by appreciating the fact that the entries of the Gram matrix divided by $U_n$ (Eq. 1) represent a mean value in time for the normally oriented spectrogram images (the aforementioned abstraction from time). The time axis given by the width of the spectrogram can therefore be arbitrarily extended while still keeping the number of terms in $G(\phi_n(s))$ and $G(\phi_n(x))$ consistent.
Consequently, the generated image x need not match the width of the reference style image s. This implies that audio textures can be synthesized ad infinitum (or conversely shortened).

Fig. 7 Losses from the optimization process comparing the difference between random weights depicted as solid lines and trained weights depicted as dashed lines. Tuning the hyperparameters led to generally better convergence for both random and trained weights, and more integrated style and content features. Despite this, losses were still very large for trained weights, resulting in a much slower convergence.

Several samples were synthesized up to 3 times the original input duration to demonstrate this effect. The same style statistics hold for the full duration of x, so textural elements are uniquely distributed throughout the specified output length and are not merely repeats of the original duration.

5.3.2 Multi-texture synthesis

Textures from multiple sources can be blended together by utilizing the batch dimension of the input, a different mechanism from what has been proposed for multi-style transfer in the literature so far. Previous approaches have included using an aggregated style loss function with contributions from multiple networks trained on distinctive styles [5]; or combining two styles of distinct spatial structure (e.g. coarse and fine brushstrokes) by treating one as an input image and the other as the reference style [16], afterwards using the new blended output as the reference style for subsequent style transfer. Dumoulin et al. [11] introduced a “conditional instance normalization” network layer that can not only learn multiple styles but also interpolate between styles by tuning certain hyperparameters.

A single style image s is represented by a 4-dimensional tensor (B, C, H, W), or (1, 513, 1, 431) substituting the actual numerical values used in our experiments for a 1D-CNN.
In our approach to texture mixing, a set of Σ style images is fed into the network as (Σ, 513, 1, 431). Convolution is done separately on each batch member in the forward pass, resulting in distinct feature maps for each style. Next the batch dimension is flattened and concatenated into the 1st dimension as part of reshaping $\phi$ to (BHW, C) prior to calculating the Gram matrix. As a result, derivative style features are correlated not only within a style image but across multiple styles, producing a convincing amalgamation of textures from several sources. In this way, more complex environmental sounds can be built up from their components, although we observe that using sources that are similar to each other creates a more cogent overall texture. To demonstrate this, a multi-texture output was generated from a combination of 3 distinct bird chirping sounds of 5 seconds each taken from the ESC-50 dataset (Fig. 8). The synthesized output was extended to a length of 20 seconds using the technique outlined in Section 5.3.1. As per the description for texture, chirps from all 3 clips do not merely appear periodically but occur randomly and even overlap at times throughout the output.

For a better intuition of the meshing of multi-textures we also generated a clip made up of 3 very contrasting sounds from speech, piano, and a crowing rooster (Fig. 9). For this example, there is usually one dominant texture (drawn from just one of the original images) at any given time. It is likely that in this case very few correlations in the Gram matrix are found between sounds from the different sources, leading to the lack of hybrid combinations, but the example still provides insight into the time scales and process of reconstruction.

Fig. 8 The extended multi-texture bird sound sample that was synthesized (bottom) from its 3 constituent clips (top).
Prominent spectral structures from the component sounds can be seen in the output spectrogram although there are overlaps with other frequencies.

The technique is not without its drawbacks. Since we use the entirety of the reference textures, unwanted “background” sounds from the sources also appear in the reconstructed output, such as the low rumbling noise from one of the chirp sounds that is intermittently heard in the multi-texture bird sound example. The naive remedy to this is to essentially make sure the entirety of the reference clip is desirable, although more fine-tuned control of certain aspects of the feature space similar to those currently being developed in image style transfer constitutes future work.

Fig. 9 The multi-texture output (bottom) from 3 distinct sounds (top) that may be described as uncharacteristic for textures since they are fairly dynamic in the long term. In contrast to Fig. 6a, the synthesis is not as integrated largely because the constituent sounds have very different spectral properties, resulting in stark transitions between them.

5.3.3 Controlling time and frequency ranges of textural elements

The range in time or frequency captured by each textural element can be altered by varying the kernel width hyperparameter in the 1D-CNN, which in turn changes the effective receptive field of neural units in the CNN. This offers us a simple parametric measure to change the desired output.

The effect is most obvious for a 1D-CNN with frequency as channels, in which we control the duration of individual textural elements through the width of the kernel. Several clips derived from speech were synthesized with kernel widths of 1, 11 and 101 bins (Fig. 10). The shortest width of 1 only picks up parts of single words, reminiscent of phonemes, and produces babble.
As we lengthen the time window, whole words and eventually phrases are captured. Further examples of the increased temporal receptive field can be found in the piano samples. The piano attack is also audibly not as crisp as the original clip due to a degree of temporal blur induced by convolution and pooling.

In the alternative orientation with time bins as channels, the kernel width has control over the range in frequency. Using a short width (bin width = 1), local frequency patterns are captured and translated to the entire spectrum, resulting in a sharp, high-pitched version of the original voice, as one would get with sounds containing many extra high frequency harmonics. Nevertheless, the onset of each word is evident enough to conclude that long term time structure is preserved. Using progressively longer kernels (bin width = 11, 101) leads to the output voice becoming closer and closer to its original form as illustrated in Fig. 11 (left). This same trend appears for the piano samples, where the translated high frequency harmonics result in the piano timbre taking on a bell-like quality (bin width = 1, 11) that gets closer to the original with a longer kernel (bin width = 101).

5.3.4 Frequency scaling

The linearly-scaled STFT spectrograms in the previous experiment were replaced with CQT spectrograms while keeping the kernel width variation to the same 1, 11 and 101 bins for speech (Fig. 11, right) and for piano. Specifically, we want to test the hypothesis discussed above that the CQT is better suited for translational invariance in the audio frequency dimension. Unlike linear scaling, the CQT preserves harmonic structure as pitch shifts. Conversely, any shift along the frequency dimension in a CQT spectrogram image only affects the pitch and leaves the timbre, or more precisely, the relative amplitudes of harmonically-related components, intact.
For this experiment the 1D-CNN with time bins as channels was used since it preserves temporal structure, thus isolating any effects due to the shifting shared-weight kernels to just the frequencies, allowing for a clearer analysis.

Similar to the linear spectrograms, extending the kernels resulted in the capture of a bigger portion of the spectrum. The two transformations however modified the sounds in distinct ways. One reason is simply that the CQT samples lower frequencies more densely than the linear scaling, and higher frequencies less densely.

Learning localized frequency patterns over the linear-STFT resulted in a single synthesized voice that sounded as if it contained many additional harmonics. In contrast, the CQT generated something that seemingly contained multiple voices at different pitches. An explanation of this phenomenon may lie in the different effect each transformation has on harmonic relationships and how we perceive these relationships. Within the kernel's receptive field, the CQT maintains harmonic structure and thus sounds are perceptually grouped to sound as part of the same source. Multiple instances of these harmonic groups appear in the synthesis, leading to the presence of multiple layered voices. Meanwhile, the linear translation does not preserve the multiplicative relationship between the components and thus the harmonic relationship of the sound breaks. As a result, voices and instruments are heard as a plethora of different individual localized frequency sources rather than as a smaller number of rich timbral sources. In that sense, the CQT achieves translational invariance to a certain degree within the receptive field of the convolution kernel, although timbre as a whole is not preserved because only parts of the full spectrum are being translated. We note, however, that the Gram matrix represents correlations across filters at the same location.
In the case of the rotated spectrogram orientation, these are temporally-extended filters located at the same local frequency region. The distributed nature of pitched sound objects that have energy at multiples of a fundamental frequency means that the Gram matrix “style” measure is less sensitive to patterns in audio representations than to patterns in visual images, irrespective of the frequency scaling.

Fig. 10 The corresponding spectrogram outputs for an increasing kernel width over time bins and the style reference on the left. The effect of the varying kernel width is apparent from the spectrograms as bigger slices of the original signal are captured in time with a larger receptive field. Samples are found here.

Fig. 11 Synthesized textures generated from linearly-scaled STFT spectrograms (left column) and CQT spectrograms (right column). The style references are shown at the bottom. Gradually lengthening the kernel width (1, 11, 101 bins) in the re-oriented 1D-CNN results in capturing more of the frequency spectrum within the kernel for both the linear-STFT and CQT spectrograms, although there are subtle differences in the corresponding audio reconstructions.

6 Discussion

In this paper we took a critical look at some of the problems with the canonical style transfer mechanism, which relies on image statistics extracted by a CNN, when adapting it for style transfer in the audio domain. Some key issues that were raised include the asymmetry of the time and frequency dimensions in a spectrogram representation, the non-linearity and lack of translational invariance of the frequency spectrum, and the loose definitions of style and content in audio. This served as motivation to explore how style transfer may work for audio, leading to the implementation of several tweaks based around the use of a 1D-CNN similar to the one introduced by Ulyanov and Lebedev [34].
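
The lack of translational invariance along a linear frequency axis, listed among the key issues above, can be illustrated with a few lines of arithmetic. The sketch below assumes an idealized harmonic tone with partials at integer multiples of a fundamental, and compares the spacing between adjacent partials on a linear axis (as in an STFT) with the spacing on a log2 axis (as with the geometrically spaced bins of a CQT). The function names and the two fundamentals are illustrative choices, not values from the experiments.

```python
import math


def harmonic_positions(f0, n_harmonics, scale):
    """Positions of the first n harmonics of f0 on a linear (Hz) or log2 (octave) axis."""
    freqs = [f0 * k for k in range(1, n_harmonics + 1)]
    if scale == "linear":
        return freqs
    if scale == "log":
        return [math.log2(f) for f in freqs]
    raise ValueError(f"unknown scale: {scale}")


def spacing(positions):
    """Distances between adjacent harmonics along the chosen axis."""
    return [b - a for a, b in zip(positions, positions[1:])]


for f0 in (110.0, 220.0):  # the same tone, one octave apart
    lin = spacing(harmonic_positions(f0, 4, "linear"))
    log = [round(d, 3) for d in spacing(harmonic_positions(f0, 4, "log"))]
    print(f"f0={f0}: linear spacing {lin}, log spacing {log}")
```

On the linear axis the harmonic pattern stretches as the pitch rises, so a fixed kernel shifted along frequency no longer matches it. On the log axis the pattern keeps its shape and is merely displaced, which is the sense in which a shift in a CQT image changes pitch while leaving the relative arrangement of harmonics intact.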
While changes were made to the model architecture and input representations to better align the method with our perceptual understanding of audio, we retained the core idea of using descriptive statistics derived from CNN feature representations to embody notions of content and style. Moreover, how content is distinguished from style, namely direct feature activations versus feature correlations via the Gram matrix (or equivalently first and second-order treatment of network features), was similarly adopted unchanged. A major goal was therefore to relate what these metrics mean in terms of audio, and whether they agree with some of our preconceived sentiments of what these concepts represent.

For audio, everyday notions of “style” and “content” are even harder to articulate than for images. In addition to the technical issues examined experimentally, they tend to be dependent on context and level of abstraction. For example, in music, at a very high level of abstraction, style may be a term for genre and content may refer to the lyrics. At a lower level, style can refer to instrumentational timbres and content to the actual notes and rhythm. On the other hand, in speech, style may relate to aspects of prosody such as intonation, accent, timing patterns, or emotion while content relates to the phonemes building up to the words being spoken.

Gatys et al. [13] found that first and second-order treatment of network features relates remarkably well to what we visually perceive as content and style. In a few words, “style” is described as a multi-scale, spatially invariant product of intra-patch statistics that emerges as a texture, and “content” is the high-level structure, encompassing its semantics and global arrangement. In the audio realm, we have shown through various examples that this spatial structure makes the most perceptual sense when extended to the dimensions of frequency or time separately.
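
The sense in which the second-order (Gram) statistic is invariant along the averaged dimension can be checked numerically. The sketch below is an illustration under stated assumptions rather than the experimental code: a feature map is modelled as a plain list of time steps by channels, the Gram matrix is divided by the number of time steps, and the helper names `gram` and `max_abs_diff` are hypothetical. Tiling the map three times is only the simplest way to build a longer map with identical statistics; it is not what the optimization produces.

```python
import random


def gram(features):
    """Gram matrix of a (time steps x channels) feature map, averaged over time."""
    n_pos, n_ch = len(features), len(features[0])
    return [[sum(row[i] * row[j] for row in features) / n_pos
             for j in range(n_ch)]
            for i in range(n_ch)]


def max_abs_diff(a, b):
    """Largest element-wise difference between two matrices of equal shape."""
    return max(abs(x - y) for ra, rb in zip(a, b) for x, y in zip(ra, rb))


random.seed(0)
style = [[random.random() for _ in range(4)] for _ in range(50)]  # 50 steps, 4 channels

shuffled = style[:]          # same frames, temporal order destroyed
random.shuffle(shuffled)
extended = style * 3         # three times the original duration

g_ref = gram(style)
print("Gram shape:", len(g_ref), "x", len(g_ref[0]))
print("unchanged by reordering:", max_abs_diff(g_ref, gram(shuffled)) < 1e-9)
print("unchanged by extension: ", max_abs_diff(g_ref, gram(extended)) < 1e-9)
```

The Gram matrix keeps its channels-by-channels shape regardless of duration and ignores the order of time steps, which is consistent both with style being heard as timbre without temporal structure and with the output width being free to differ from that of the style reference.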
Perceptually we found that with the style transfer architecture “content” strongly captures the global rhythmic structure and pitch while “style” characterizes more of the timbral characteristics. Style transfer for audio in this context can hence be construed as a kind of timbre transfer. As a whole however, there is less obvious disentanglement between style and content in comparison to image style transfer, and when their features are combined, they are not as seamless. Consequently, results heard in the audio clips are generally less satisfying and aesthetically appealing than those produced in the image domain.

Despite this, an analysis of the Gram matrix of feature correlations reveals that this particular aspect of the style transfer formulation bears many similarities to the concept of audio textures. Generating new textures of the same type as the reference in this paradigm is a case of rearranging, at a certain scale (largely dictated by the kernel width), either the feature maps of the frequency spectrum or that of temporal information, while leaving the other dimension relatively intact. These ideas were demonstrated with numerous synthesized examples of audio texture, including ones of multi-source textures and the constant-Q representation of audio.

It is evident that the approach borrowed from the vision domain is far from a complete solution when it comes to imposing a given style on a section of audio, even if we narrow the scope of “style” to timbre. Our intuitions about audio style and content are not well captured by the CNN/Gram matrix computational architecture and how it works with time-frequency representations of audio.
Furthermore, the finding that random networks were better than trained net- works at the audio style transfer task ev en puts to question the central role of feature representation in a CNN-based style transfer frame work. Future work needs to instead de- velop a better understanding of what constitutes “style” and “content” in audio. That insight can then be used to fur- ther inv estigate audio representations and model architec- tures that are a better fit and more specifically suited for au- dio generativ e tasks than the visual domain-specific CNN style transfer networks. References 1. Athineos M, Ellis D (2003) Sound texture modelling with linear prediction in both time and frequency do- mains. In: 2003 IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP), IEEE, vol 5, pp V –648 2. Beauregard GT , Harish M, W yse L (2015) Single pass spectrogram in version. In: 2015 IEEE International Conference on Digital Signal Processing (DSP), IEEE, pp 427–431 3. Bizley JK, Cohen YE (2013) The what, where and ho w of auditory-object perception. Nature Revie ws Neuro- science 14(10):693 4. Choi K, Fazekas G, Sandler M (2016) Explaining deep con volutional neural networks on music classification. arXiv preprint arXi v:160702444 5. Cui B, Qi C, W ang A (2017) Multi-style transfer: Gen- eralizing fast style transfer to se veral genres 6. Davies ER (2008) Handbook of texture analysis. Impe- rial College Press, London, UK, chap Introduction to texture analysis, pp 1–31 7. Deng J, Dong W , Socher R, Li LJ, Li K, Fei-Fei L (2009) Imagenet: A large-scale hierarchical image database. In: Computer V ision and Pattern Recognition, 2009, IEEE, pp 248–255 8. Deng L, Abdel-Hamid O, Y u D (2013) A deep con- volutional neural network using heterogeneous pool- ing for trading acoustic in variance with phonetic confu- sion. In: 2013 IEEE International Conference on Acous- tics, Speech and Signal Processing (ICASSP), IEEE, pp 6669–6673 9. 
Dieleman S, Schrauwen B (2014) End-to-end learning for music audio. In: 2014 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), IEEE, pp 6964–6968
10. Dubnov S, Bar-Joseph Z, El-Yaniv R, Lischinski D, Werman M (2002) Synthesizing sound textures through wavelet tree learning. IEEE Computer Graphics and Applications (4):38–48
11. Dumoulin V, Shlens J, Kudlur M (2017) A learned representation for artistic style. Proceedings of ICLR
12. Ellis D (2013) Spectrograms: Constant-q (log-frequency) and conventional (linear). URL http://www.ee.columbia.edu/ln/rosa/matlab/sgram/
13. Gatys LA, Ecker AS, Bethge M (2015) A neural algorithm of artistic style. arXiv preprint arXiv:150806576
14. Gatys LA, Ecker AS, Bethge M (2015) Texture synthesis using convolutional neural networks. In: Advances in Neural Information Processing Systems, pp 262–270
15. Gatys LA, Bethge M, Hertzmann A, Shechtman E (2016) Preserving color in neural artistic style transfer. arXiv preprint arXiv:160605897
16. Gatys LA, Ecker AS, Bethge M, Hertzmann A, Shechtman E (2017) Controlling perceptual factors in neural style transfer. In: 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR)
17. Griffin D, Lim J (1984) Signal estimation from modified short-time fourier transform. IEEE Transactions on Acoustics, Speech, and Signal Processing 32(2):236–243
18. Grinstein E, Duong N, Ozerov A, Perez P (2017) Audio style transfer. arXiv preprint arXiv:171011385
19. Hoskinson R, Pai D (2001) Manipulation and resynthesis with natural grains. In: Proceedings of the 2001 International Computer Music Conference, ICMC
20. Huzaifah M (2017) Comparison of time-frequency representations for environmental sound classification using convolutional neural networks. arXiv preprint arXiv:170607156
21. Jing Y, Yang Y, Feng Z, Ye J, Song M (2017) Neural style transfer: A review. arXiv preprint arXiv:170504058
22. Johnson J, Alahi A, Fei-Fei L (2016) Perceptual losses for real-time style transfer and super-resolution. In: European Conference on Computer Vision, Springer, pp 694–711
23. Julesz B (1962) Visual pattern discrimination. IRE Transactions on Information Theory 8(2):84–92
24. Ling ZH, Kang SY, Zen H, Senior A, Schuster M, Qian XJ, Meng HM, Deng L (2015) Deep learning for acoustic modeling in parametric speech generation: A systematic review of existing techniques and future trends. IEEE Signal Processing Magazine 32(3):35–52
25. Mahendran A, Vedaldi A (2015) Understanding deep image representations by inverting them. In: 2015 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pp 5188–5196
26. McDermott JH, Simoncelli EP (2011) Sound texture perception via statistics of the auditory periphery: evidence from sound synthesis. Neuron 71(5):926–940
27. Novak R, Nikulin Y (2016) Improving the neural algorithm of artistic style. arXiv preprint arXiv:160504603
28. Perez A, Proctor C, Jain A (2017) Style transfer for prosodic speech. Tech. rep., Stanford University
29. Piczak KJ (2015) Esc: Dataset for environmental sound classification. In: Proceedings of the 23rd ACM international conference on Multimedia, ACM, pp 1015–1018
30. Rand TC (1974) Dichotic release from masking for speech. The Journal of the Acoustical Society of America 55(3):678–680
31. Salamon J, Bello JP (2017) Deep convolutional neural networks and data augmentation for environmental sound classification. IEEE Signal Processing Letters 24(3):279–283
32. Schwarz D, Schnell N (2010) Descriptor-based sound texture sampling. In: Sound and Music Computing (SMC), pp 510–515
33. Simonyan K, Zisserman A (2014) Very deep convolutional networks for large-scale image recognition. arXiv preprint arXiv:14091556
34. Ulyanov D, Lebedev V (2016) Audio texture synthesis and style transfer. URL https://dmitryulyanov.github.io/audio-texture-synthesis-and-style-transfer/
35. Ulyanov D, Lebedev V, Vedaldi A, Lempitsky VS (2016) Texture networks: Feed-forward synthesis of textures and stylized images. In: ICML, pp 1349–1357
36. Ulyanov D, Vedaldi A, Lempitsky VS (2016) Instance normalization: The missing ingredient for fast stylization. arXiv preprint arXiv:160708022
37. Ulyanov D, Vedaldi A, Lempitsky VS (2017) Improved texture networks: Maximizing quality and diversity in feed-forward stylization and texture synthesis. In: 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), vol 1, p 3
38. Ustyuzhaninov I, Brendel W, Gatys LA, Bethge M (2016) Texture synthesis using shallow convolutional networks with random filters. arXiv preprint arXiv:160600021
39. Verma P, Smith JO (2018) Neural style transfer for audio spectograms. arXiv preprint arXiv:180101589
40. Wyse L (2017) Audio spectrogram representations for processing with convolutional neural networks. In: Proceedings of the First International Workshop on Deep Learning and Music joint with IJCNN, pp 37–41
41. Zeiler MD, Fergus R (2014) Visualizing and understanding convolutional networks. In: European conference on computer vision, Springer, pp 818–833

Appendix

All models implemented in PyTorch.

Model parameters:
– Max pooling: 1x2, stride 1x2 (1D-CNN) / 2x2, stride 2x2 (2D-CNN)
– Padding: "Same" padding used on all convolutional layers
– Batchnorm: Default PyTorch parameters used on all batch normalization layers

Classification hyperparameters:
– Dataset: ESC-50 (fold 1 used for validation, folds 2-5 used for training)
– Optimizer: Adam (lr = 1e-3, β1 = 0.9, β2 = 0.999, eps = 1e-8)
– Loss criterion: Cross Entropy Loss
– Batch size: 20
– Training epochs: 30

Style transfer hyperparameters:
– Optimizer: L-BFGS (with default PyTorch parameters)
– No. of iterations: 500

Section 5.2.2:
– Random network/content input: α = 1, β = 1e5/1e7/1e9, γ = 1e-3

Section 5.2.3:
– Pretrained network/random input: α = 1, β = 1e3, γ = 1
– Random network/random input: α = 1e1, β = 1e7, γ = 1e-3
– Pretrained network/content input: α = 1, β = 1e5, γ = 1e1
– Random network/content input: α = 1, β = 1e8, γ = 1e-3

Section 5.3:
– Random network/random input: α = 0, β = 1e9, γ = 1e-3
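
For readers who want to reproduce the setup, the appendix settings can be collected into a small configuration. The following is a minimal sketch in plain Python, not the authors' code: the names `LossWeights`, `SECTION_5_2_3`, `esc50_split` and `pooled_shape` are hypothetical, and the 1 x 862 and 128 x 862 input sizes in the usage lines are assumed purely for illustration. Only the numeric values are taken from the appendix. The pooling helper assumes unpadded pooling with floor rounding.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LossWeights:
    """Weights alpha, beta, gamma as listed in the appendix."""
    alpha: float
    beta: float
    gamma: float


# Values copied from the Section 5.2.3 list; the dictionary keys are
# hypothetical labels of the form "network/input".
SECTION_5_2_3 = {
    "pretrained/random": LossWeights(alpha=1.0, beta=1e3, gamma=1.0),
    "random/random": LossWeights(alpha=1e1, beta=1e7, gamma=1e-3),
    "pretrained/content": LossWeights(alpha=1.0, beta=1e5, gamma=1e1),
    "random/content": LossWeights(alpha=1.0, beta=1e8, gamma=1e-3),
}


def esc50_split(validation_fold=1, n_folds=5):
    """Return (training_folds, validation_folds) for a fold-based split."""
    train = [f for f in range(1, n_folds + 1) if f != validation_fold]
    return train, [validation_fold]


def pooled_shape(height, width, kernel, stride):
    """Output (height, width) of unpadded max pooling with floor rounding."""
    k_h, k_w = kernel
    s_h, s_w = stride
    return ((height - k_h) // s_h + 1, (width - k_w) // s_w + 1)


# Usage: fold 1 for validation, folds 2-5 for training, as in the appendix.
train_folds, val_folds = esc50_split()

# 1x2 pooling with stride 1x2 (1D-CNN) halves only the second dimension;
# 2x2 pooling with stride 2x2 (2D-CNN) halves both. Input sizes are assumed.
shape_1d = pooled_shape(1, 862, kernel=(1, 2), stride=(1, 2))
shape_2d = pooled_shape(128, 862, kernel=(2, 2), stride=(2, 2))
```

Keeping the weights in one immutable structure makes it easy to see that the style weight β varies by several orders of magnitude across the conditions, which is the main difference between the listed experiments.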
