Graph Learning Under Partial Observability

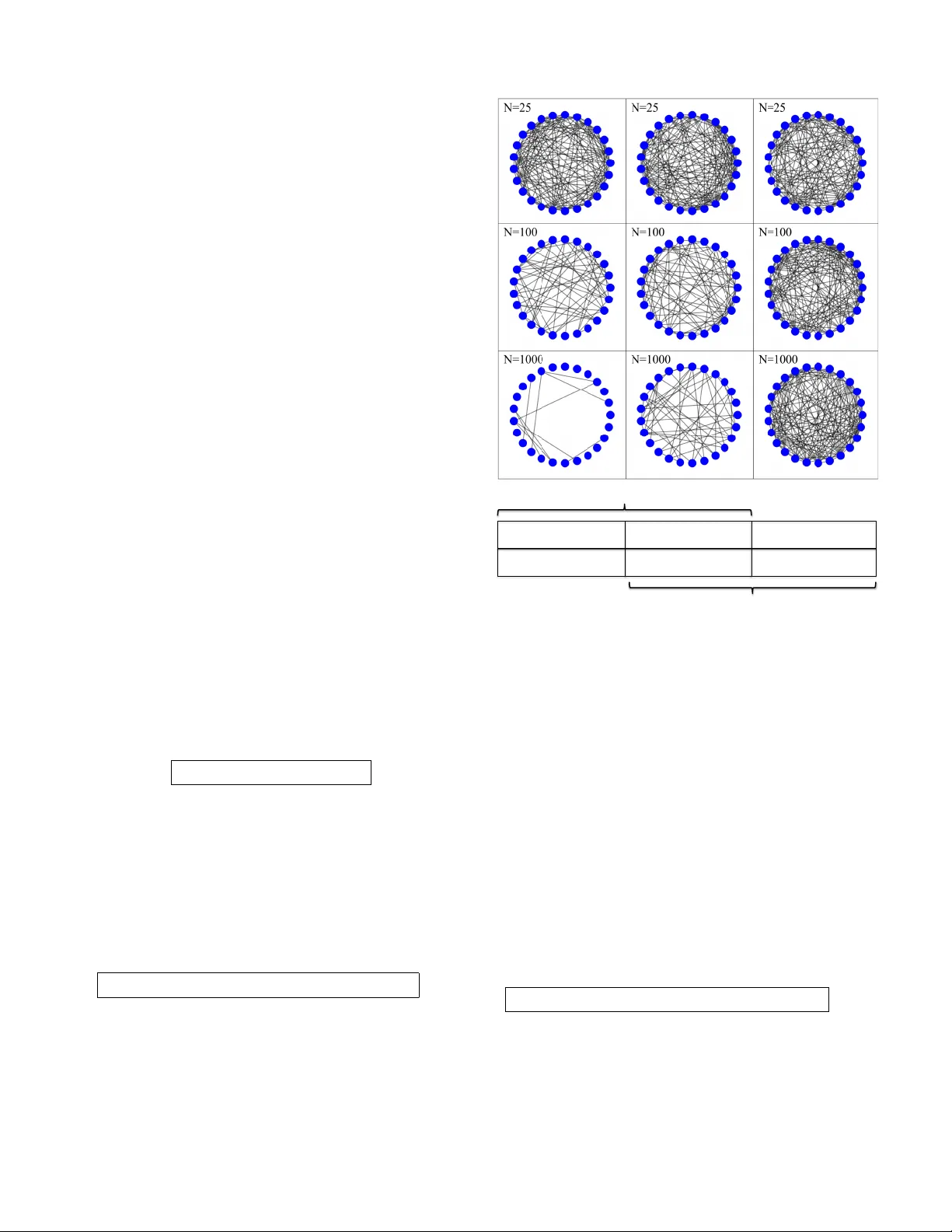

Many optimization, inference, and learning tasks can be accomplished efficiently by decentralized processing algorithms, in which the network topology (i.e., the graph) plays a critical role in enabling interactions among neighboring nodes. T…

Authors: Vincenzo Matta, Augusto Santos, Ali H. Sayed