Hide and Speak: Towards Deep Neural Networks for Speech Steganography

Steganography is the science of hiding a secret message within an ordinary public message, which is referred to as Carrier. Traditionally, digital signal processing techniques, such as least significant bit encoding, were used for hiding messages. In…

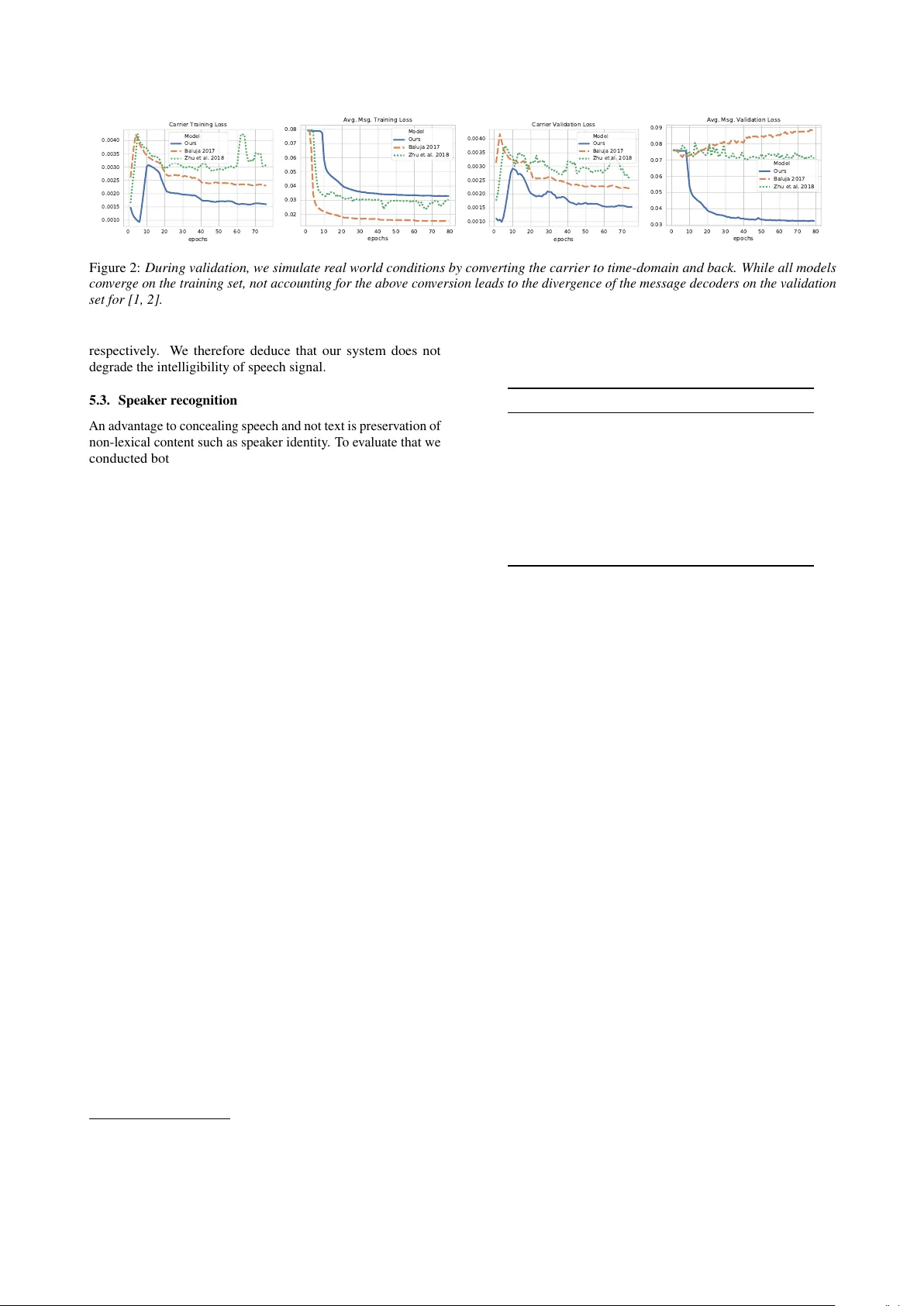

Authors: Felix Kreuk, Yossi Adi, Bhiksha Raj, Rita Singh, Joseph Keshet

Felix Kreuk¹, Yossi Adi², Bhiksha Raj³, Rita Singh³, Joseph Keshet¹

¹Bar-Ilan University, ²Facebook AI Research, ³Carnegie Mellon University

felix.kreuk@gmail.com

Abstract

Steganography is the science of hiding a secret message within an ordinary public message, which is referred to as the carrier. Traditionally, digital signal processing techniques, such as least significant bit encoding, were used for hiding messages. In this paper, we explore the use of deep neural networks as steganographic functions for speech data. We show that steganography models proposed for vision are less suitable for speech, and propose a new model that includes the short-time Fourier transform and inverse short-time Fourier transform as differentiable layers within the network, thus imposing a vital constraint on the network outputs. We empirically demonstrate the effectiveness of the proposed method compared with deep-learning-based baselines on several speech datasets, and analyze the results quantitatively and qualitatively. Moreover, we show that the proposed approach can be applied to conceal multiple messages in a single carrier using multiple decoders or a single conditional decoder. Lastly, we evaluate our model under different channel distortions. Qualitative experiments suggest that modifications to the carrier are unnoticeable by human listeners and that the decoded messages are highly intelligible.

1. Introduction

Steganography (from "steganos", concealed or covered, and "graphein", writing) is the science of concealing messages inside other messages. It is generally used to convey concealed "secret" messages to recipients who are aware of their presence, while keeping even their existence hidden from other unaware parties who only see the "public" or "carrier" message.
Recently, [1, 2] proposed using deep neural networks as a steganographic function for hiding an image inside another image. Unlike traditional steganography methods [3, 4], in this line of work the network learns to conceal a hidden message inside the carrier without manually specifying a particular redundancy to exploit.

Although these studies presented impressive results on image data, the applicability of such models to speech data was not explored. As opposed to working with raw images in the domain of vision processing, the common approach when learning from speech data is to work in the frequency domain, and specifically to use the short-time Fourier transform (STFT) to capture the spectral changes over time. The STFT output is a complex matrix composed of the Fourier transforms of different time frames. The common practice is to use the absolute values (magnitudes) of the STFT measurements, and to maintain a substantial overlap between adjacent frames [5]. Consequently, the original signal cannot be losslessly recovered from the STFT. Moreover, as only the magnitude is considered, the phase needs to be recovered. This process complicates the restoration of the time-domain signal even further.

In this study, we show that steganography models proposed for vision are less suitable for speech. We build on the work of [1, 2] and propose a new model that includes the STFT and inverse STFT as differentiable layers within the network, thus imposing a vital constraint on the network outputs.

Although one can simply hide written text inside audio files and convey the same lexical content, concealing audio inside audio preserves additional features. For instance, the secret message may convey the speaker identity, the sentiment of the speaker, prosody, etc. These features can be used for later identification and authentication of the message.

Similarly to [1, 2], the proposed model is composed of three parts.
The first learns to encode a hidden message inside the carrier. The second consists of differentiable STFT and inverse-STFT layers that simulate transformations between the frequency and time domains. Lastly, the third component learns to decode a hidden message from a generated carrier. Additionally, we demonstrate for the first time that the above scheme permits us to hide multiple secret messages in a single carrier, each potentially with a different intended recipient who is the only person who can recover it.

Further analysis shows that the addition of STFT layers yields a method that is robust to various channel distortions and compression methods, such as MP3 encoding, Additive White Gaussian Noise, sample rate reduction, etc. Qualitative experiments suggest that modifications to the carrier are unnoticeable by human listeners and that the decoded messages are highly intelligible and preserve other semantic content, such as speaker identity.

Our contribution:

- We empirically show that steganographic vision-oriented models are less suitable for the audio domain.
- We augment vision-oriented models with differentiable STFT/inverse-STFT layers during training to account for noise introduced when converting signals from the frequency domain to the time domain and back.
- We embed multiple speech messages in a single speech carrier.
- We provide extensive empirical and subjective analysis of the reconstructed signals and show that the produced carriers are indistinguishable from the original carriers, while keeping the decoded messages highly intelligible.

The paper is organized as follows. Section 2 formulates the notation we use throughout the paper. In Section 3 we describe the proposed model. Section 4 and Section 5 present the results together with objective and subjective analysis. Section 6 summarizes the related work. We conclude the paper in Section 7 with a discussion and future work.

2. Notation and representation

In this section, we rigorously set the notation we use throughout the paper.

Figure 1: Model overview: the encoder $E$ gets as input the carrier $C$; its output is then concatenated with $C$ and $M$ to create $H$. Then, the carrier decoder $D_c$ generates the new embedded carrier, from which the message decoder $D_m$ decodes the message $\hat{M}$. Sub-figure (1A) depicts the baseline model; (1B) depicts our proposed model.

Steganography notations. Recall that in steganography the goal is to conceal a hidden message within a carrier segment. Specifically, the steganography system is a function that gets as input a carrier utterance, denoted by $c$, and a hidden message, denoted by $m$. The outputs of the system are the embedded carrier $\hat{c}$, and consequently the recovered message $\hat{m}$, such that the following constraints are satisfied: (i) both $\hat{c}$ and $\hat{m}$ should be perceptually similar to $c$ and $m$, respectively, as judged by a human evaluator; (ii) the message $\hat{m}$ should be recoverable from the carrier $\hat{c}$ and should be intelligible; and lastly (iii) a human evaluator should not be able to detect the presence of a hidden message embedded in $\hat{c}$.

Audio notations. Let $x = (x[0], x[1], \ldots, x[N-1])$ be a speech signal that is composed of $N$ samples. The spectral content of the signal changes over time, therefore it is often represented by the short-time Fourier transform, commonly known as the spectrogram, rather than by the Fourier transform. The STFT, $\mathcal{X}$, is a matrix of complex numbers: each column is the Fourier transform of a given time frame, so columns are indexed by frame and rows by frequency. In speech processing we are often interested in the absolute value of the STFT, or the magnitude, which is denoted by $X = |\mathcal{X}|$. Similarly, we denote the phase of the STFT by $\angle\mathcal{X} = \arctan(\mathrm{Im}(\mathcal{X}) / \mathrm{Re}(\mathcal{X}))$.
Furthermore, we denote by $S$ the operator that gets as input a real signal and outputs the magnitude matrix of its STFT, $X = S(x)$, and denote by $S^{\dagger}$ the operator that gets as input the magnitude and phase matrices of the STFT and returns a recovered version of the speech waveform, $x = S^{\dagger}(X, \angle\mathcal{X})$, where $\angle\mathcal{X}$ is the phase of the complex STFT $\mathcal{X}$. Here $S^{\dagger}$ is computed by taking the inverse Fourier transform of each column of $\mathcal{X}$, and then reconstructing the waveform by combining the outputs with the overlap-and-add method. Note that this reconstruction is imperfect, since there is a substantial overlap between adjacent windows when using the STFT in speech processing, hence part of the signal at each window is lost [6].

3. Model

Similarly to the models proposed in [1, 2], our architecture is composed of the following components: (i) an Encoder Network, denoted $E$; (ii) a Carrier Decoder Network, denoted $D_c$; and (iii) a Message Decoder Network, denoted $D_m$. The model is schematically depicted in Figure 1A.

The Encoder Network $E$ gets as input a carrier $C$ and outputs a latent representation of the carrier, $E(C)$. Then, we compose a joint representation of the encoded carrier $E(C)$, the message $M$, and the original carrier $C$ by concatenating all three along the convolutional channel axis, $H = [E(C); C; M]$, as proposed in [2], where we denote the concatenation operator by ";".

The Carrier Decoder Network $D_c$ gets as input the aforementioned representation and outputs $\hat{C}$, the carrier embedded with the hidden message. Lastly, the Message Decoder Network $D_m$ gets as input $\hat{C}$ and outputs $\hat{M}$, the reconstructed hidden message. Each of the above components is a neural network, whose parameters are found by minimizing the absolute error between the carrier and the embedded carrier and between the original message and the reconstructed message.

At this point our architecture diverges from the one proposed in [1] by the addition of differentiable STFT layers.
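The operators $S$ and $S^{\dagger}$ defined in Section 2 can be illustrated with a small pure-Python sketch. It is not the implementation used in the paper: the naive DFT, the periodic Hann window, the toy sizes `W = 8` and `L = 4`, the frame-major layout, and the helper names `hann`, `stft`, `phase_of`, and `S_dagger` are all assumptions chosen for readability. The last lines show why a magnitude that has been edited in isolation is generally not preserved by a round trip through the time domain.

```python
import cmath
import math

def hann(W):
    # Periodic Hann window; with hop W // 2 its shifted copies sum to 1.
    return [0.5 - 0.5 * math.cos(2 * math.pi * n / W) for n in range(W)]

def stft(x, W=8, L=4):
    """Complex STFT stored frame-major: frames[t][k] is bin k of frame t."""
    win = hann(W)
    frames = []
    for start in range(0, len(x) - W + 1, L):
        seg = [x[start + n] * win[n] for n in range(W)]
        frames.append([
            sum(seg[n] * cmath.exp(-2j * math.pi * k * n / W) for n in range(W))
            for k in range(W)
        ])
    return frames

def S(x, W=8, L=4):
    """Magnitude-only operator S (assumed toy version)."""
    return [[abs(v) for v in frame] for frame in stft(x, W, L)]

def phase_of(x, W=8, L=4):
    """Phase matrix of the complex STFT."""
    return [[cmath.phase(v) for v in frame] for frame in stft(x, W, L)]

def S_dagger(mag, phase, W=8, L=4):
    """Inverse DFT of every frame followed by overlap-and-add."""
    out = [0.0] * (L * (len(mag) - 1) + W)
    for t, (m_row, p_row) in enumerate(zip(mag, phase)):
        spec = [m * cmath.exp(1j * p) for m, p in zip(m_row, p_row)]
        for n in range(W):
            sample = sum(spec[k] * cmath.exp(2j * math.pi * k * n / W)
                         for k in range(W)) / W
            out[t * L + n] += sample.real  # keep the real part only
    return out

# Toy "carrier" waveform and its magnitude / phase.
x = [math.sin(0.3 * n) + 0.5 * math.sin(1.1 * n) for n in range(32)]
C, phase_C = S(x), phase_of(x)

# With the true phase, samples covered by two frames are recovered.
c_rec = S_dagger(C, phase_C)

# Edit one magnitude bin (a stand-in for an embedded carrier), go to the
# time domain with the ORIGINAL phase, and analyse again.
C_hat = [row[:] for row in C]
C_hat[3][1] += 0.5
C_tilde = S(S_dagger(C_hat, phase_C))
```

In this toy setting `c_rec` matches `x` wherever two frames overlap, while `C_tilde[3][1]` no longer equals `C_hat[3][1]`: part of the edit is lost in the conversion, which is the effect the model has to account for during training.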
Recall that our goal is to transmit $\hat{c}$, which means we need to recover the time-domain waveform from the magnitude $\hat{C}$. Unfortunately, recovering $\hat{c}$ from the STFT magnitude alone is an ill-posed problem in general [7, 6]. Ideally, we would like to reconstruct $\hat{c}$ using $S^{\dagger}(\hat{C}, \angle\hat{C})$. However, the phase $\angle\hat{C}$ is unknown and therefore must be approximated.

One way to overcome this phase recovery obstacle is to use the classical alternating projection algorithm of Griffin and Lim [8]. Unfortunately, this method produces a carrier with noticeable artifacts. The message, however, can be recovered that way and is intelligible.

Another way to reconstruct the time-domain signal is to use the magnitude of the embedded carrier, $\hat{C}$, and the phase of the original carrier, $\angle C$. In subjective tests we found that the reconstructed carrier, denoted as $\tilde{c} = S^{\dagger}(\hat{C}, \angle C)$, sounds acoustically similar to the original carrier $c$. However, when recovering the hidden message we get unintelligible output. This is due to the fact that we used a mismatched phase.

To mitigate that, we turn to a third solution, where we constrain the loss function by $S$ and $S^{\dagger}$. Formally, we minimize

$$\mathcal{L}(C, M) = \lambda_c \|C - \hat{C}\|_1 + \lambda_m \|M - \tilde{M}\|_1,$$

where $\hat{C} = D_c(H)$, $\tilde{C} = S(S^{\dagger}(\hat{C}, \angle C))$, and $\tilde{M} = D_m(\tilde{C})$. Practically, we added the $S$ and $S^{\dagger}$ operators as differentiable 1D-convolution layers, as illustrated in Figure 1B. In words, we jointly optimize the model to generate a $\hat{C}$ that preserves the hidden message after $S \circ S^{\dagger}$ and also resembles $C$.

The above approach can be naturally extended to conceal multiple messages. In that case, the model is provided with a single carrier $C$ and a set of $k$ messages, $\{M_i\}_{i=1}^{k}$, where $k > 1$.
We explored two settings: (i) multiple message decoders, in which we use $k$ different message decoders denoted by $D_{m,i}$ where $1 \le i \le k$, one for each message; and (ii) a single conditional decoder, in which we condition a single decoder $D_m$ on a set of codes $\{q_i\}_{i=1}^{k}$. Each code $q_i$ is represented as a one-hot vector of size $k$.

4. Experimental results

We evaluated our approach on the TIMIT [9] and YOHO [10] datasets using the standard train/val/test splits. Using both datasets lets us assess the model under various recording conditions. Each utterance was sampled at 16 kHz and represented as its power spectrum by applying the STFT with $W = 256$ FFT frequency bins and a sliding window with a shift of $L = 128$.

Training examples were generated by randomly selecting one utterance as the carrier and $k$ other utterances as messages, for $k \in \{1, 3, 5\}$. Thus, the matching of carrier and message is completely arbitrary and not fixed. Further, they may originate from different speakers.

Table 1: Absolute Error (lower is better) and Signal to Noise Ratio (higher is better) for both carrier and message using single message embedding. Results are reported for both TIMIT and YOHO datasets.

| Dataset | Model | Car. loss | Car. SNR | Msg. loss | Msg. SNR |
|---|---|---|---|---|---|
| TIMIT | Freq. Chop | 0.0770 | 0.22 | 0.046 | 6.85 |
| TIMIT | Baluja et al. [1] | 0.0023 | 27.11 | 0.096 | 0.14 |
| TIMIT | Zhu et al. [2] | 0.0027 | 32.70 | 0.078 | 0.71 |
| TIMIT | Ours | 0.0016 | 28.27 | 0.035 | 8.76 |
| TIMIT | Ours + Adv. | 0.0022 | 34.54 | 0.051 | 4.02 |
| YOHO | Freq. Chop | 0.0550 | 0.24 | 0.038 | 7.08 |
| YOHO | Baluja et al. [1] | 0.0021 | 26.35 | 0.072 | 0.53 |
| YOHO | Zhu et al. [2] | 0.0047 | 27.99 | 0.066 | 1.05 |
| YOHO | Ours | 0.0016 | 27.86 | 0.028 | 8.16 |
| YOHO | Ours + Adv. | 0.0016 | 31.18 | 0.033 | 6.00 |

All models were trained using Adam for 80 epochs with an initial learning rate of $10^{-3}$ and a decaying factor of 10 every 20 epochs. We balanced between the carrier and message reconstruction losses using $\lambda_c = 3$, $\lambda_m = 1$.
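The weighted objective from Section 3 and the training schedule described above can be written down in a few lines. This is a minimal sketch under stated assumptions rather than the training code: the absolute error is taken as a mean over spectrogram entries, the "decaying factor of 10 every 20 epochs" is read as dividing the learning rate by 10 at epochs 20, 40, and 60, and the function names `l1`, `steganography_loss`, and `learning_rate` are hypothetical.

```python
def l1(a, b):
    """Mean absolute error between two equally shaped 2-D lists (assumed reduction)."""
    total = sum(abs(x - y) for row_a, row_b in zip(a, b) for x, y in zip(row_a, row_b))
    count = sum(len(row) for row in a)
    return total / count

def steganography_loss(C, C_hat, M, M_tilde, lambda_c=3.0, lambda_m=1.0):
    """lambda_c * |C - C_hat|_1 + lambda_m * |M - M_tilde|_1 with the reported weights."""
    return lambda_c * l1(C, C_hat) + lambda_m * l1(M, M_tilde)

def learning_rate(epoch, base_lr=1e-3, decay=10.0, step=20):
    """Step schedule: divide the rate by `decay` every `step` epochs (assumed reading)."""
    return base_lr / decay ** (epoch // step)

# Learning rate at the start of each 20-epoch stage of an 80-epoch run.
schedule = [learning_rate(epoch) for epoch in (0, 20, 40, 60)]
```

With $\lambda_c = 3$ the carrier term is weighted three times as heavily as the message term, so in this formulation an equal absolute error costs more on the carrier than on the message.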
Each component in our model is implemented as a Gated Convolutional Neural Network as proposed by [11]. Specifically, $E$ is composed of three blocks of gated convolutions, $D_c$ is composed of four blocks of gated convolutions, and $D_m$ is composed of six blocks of gated convolutions. Each block contains 64 kernels of size 3 × 3. Sample waveforms of different models and experiments as well as the source code are available at http://hyperurl.co/ab7c3g.

We report results for the proposed approach together with [1, 2]. Additionally, we included a naive baseline, denoted by Frequency Chop, in which we concatenated the lower half of the frequencies of $M$ above the lower half of the frequencies of $C$ to form $\hat{C}$. Message decoding was performed by extracting the upper half of the frequencies from $\hat{C}$ and zero-padding to the original size.

Results for concealing a single message are reported in Table 1: the Absolute Error (AE) and Signal-to-Noise Ratio (SNR) for both carrier and message of all baselines and the proposed models on TIMIT and YOHO.

Notice that while both [1] and [2] yield low carrier errors, their direct application to speech data produced unintelligible messages with a low SNR. This is due to the fact that these models were not constrained to retain the same carrier content after the conversion to the time domain and back. Figure 2 depicts the training process of the proposed model and baselines. It can be seen that without any constraints, the baseline message decoders diverge.

Lastly, Frequency Chop retains much of the message content after decoding, but creates a carrier with noticeable artifacts. This is due to the fact that the hidden message is audible, as it resides in the carrier's high frequencies.

Moreover, we explored adding adversarial loss terms between $C$ and $\tilde{C}$ to the optimization problem, as suggested by [2].
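The Frequency Chop baseline described above is simple enough to sketch directly. The code below is an illustration under assumptions, not the authors' implementation: spectrograms are stored as lists of frames, each frame lists its frequency bins from low to high, the number of bins is even, both inputs have the same shape, and the function names are hypothetical.

```python
def frequency_chop_encode(C, M):
    """Keep the lower half of the carrier bins and place the lower half of
    the message bins above them, frame by frame."""
    half = len(C[0]) // 2
    return [c_frame[:half] + m_frame[:half] for c_frame, m_frame in zip(C, M)]

def frequency_chop_decode(C_hat):
    """Read the message back from the upper half of the embedded carrier and
    zero-pad it to the original number of bins."""
    half = len(C_hat[0]) // 2
    decoded = []
    for frame in C_hat:
        low = frame[half:]
        decoded.append(low + [0.0] * (len(frame) - len(low)))
    return decoded

# Two frames with four frequency bins each.
C = [[1.0, 2.0, 3.0, 4.0], [5.0, 6.0, 7.0, 8.0]]
M = [[9.0, 10.0, 11.0, 12.0], [13.0, 14.0, 15.0, 16.0]]
C_hat = frequency_chop_encode(C, M)
M_hat = frequency_chop_decode(C_hat)
```

The sketch makes the trade-off visible: the lower half of the message survives exactly, but the upper halves of both carrier and message are discarded, and the message content sits in the carrier's high-frequency band where it can be heard.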
Similarly to the effect on images, incorporating the adversarial loss improved the carrier quality and reduced artifacts; however, this comes at the cost of less accurate message reconstruction.

Overall, the above results highlight the importance of modeling the time-frequency transformations in the context of steganographic models for the audio domain.

Multiple messages. Next, we further explore the capability of the proposed model for concealing several hidden messages. We analyzed the two settings described in Section 3, namely multiple decoders and a single conditional decoder. Table 2 summarizes the results. The reported loss and SNR are averaged over the $k$ messages. Interestingly, both settings achieved comparable results for embedding 3 and 5 messages in a single carrier. An increase in the number of messages translates to higher loss values both for the carrier and for the messages. These results are to be expected, as the model is forced to work at higher compression rates due to concealing and recovering more messages while keeping the carrier dimension the same.

Table 2: AE and SNR for both carrier and message when concealing 3 and 5 messages using either multiple decoders or one conditional decoder. Results are reported for both TIMIT and YOHO datasets.

| Dataset | Model | Carrier loss | Carrier SNR | Message loss | Message SNR |
|---|---|---|---|---|---|
| TIMIT | multi-3 | 0.0042 | 25.13 | 0.0458 | 6.16 |
| TIMIT | cond-3 | 0.0043 | 24.08 | 0.0463 | 6.08 |
| TIMIT | multi-5 | 0.0058 | 23.64 | 0.0550 | 4.42 |
| TIMIT | cond-5 | 0.0063 | 22.70 | 0.0516 | 4.87 |
| YOHO | multi-3 | 0.0042 | 23.80 | 0.0349 | 6.29 |
| YOHO | cond-3 | 0.0038 | 23.53 | 0.0344 | 6.49 |
| YOHO | multi-5 | 0.0046 | 23.33 | 0.0428 | 4.17 |
| YOHO | cond-5 | 0.0051 | 22.30 | 0.0392 | 4.85 |

5. Analysis

In this section we provide several evaluations of the quality of the embedded carrier and the recovered message. We start with a subjective analysis of the resulting waveforms.

5.1. Carrier ABX testing

To validate that the difference between $c$ and $\tilde{c}$ is not detectable by humans, we performed ABX testing.
Each participant is presented with two audio samples, A and B. Each of these two samples is either the original carrier or the carrier embedded with a hidden message. These two samples are followed by a third sample, X, randomly selected to be either A or B. Next, the participant must choose whether X is the same as A or B. We generated 50 audio samples (25 from TIMIT and 25 from YOHO), and for each audio sample we recorded 20 answers from Amazon Mechanical Turk (AMT), 1000 answers overall. Only 51.2% (48.8% for TIMIT and 53.6% for YOHO) of the carriers embedded with hidden messages could be distinguished from the original ones by humans (the optimal ratio is 50%). Therefore we conclude that the modifications made by the steganographic function are not detectable by the human ear.

5.2. Message intelligibility

A major metric in evaluating a speech steganography system is the intelligibility of the reconstructed messages. To quantify this measure we conducted an additional subjective experiment on AMT. We generated 40 samples from the TIMIT dataset: 20 original messages and 20 messages reconstructed by our model. We used TIMIT for this task since it contains a richer vocabulary than YOHO. We recorded 20 answers for each sample (800 answers overall). The participants were instructed to transcribe the presented samples, and the Word Error Rate (WER) and Character Error Rate (CER) were measured. While WER is a coarse measure, CER provides a finer evaluation of transcription error. The CER/WER measured on original and reconstructed messages were 5.1%/2.86% and 5.15%/2.78%, respectively. We therefore deduce that our system does not degrade the intelligibility of the speech signal.

[Figure 2 plots, four panels over training epochs: Carrier Training Loss, Avg. Msg. Training Loss, Carrier Validation Loss, Avg. Msg. Validation Loss. Curves: Ours, Baluja 2017, Zhu et al. 2018.]

Figure 2: During validation, we simulate real-world conditions by converting the carrier to the time domain and back. While all models converge on the training set, not accounting for the above conversion leads to the divergence of the message decoders on the validation set for [1, 2].

5.3. Speaker recognition

An advantage of concealing speech rather than text is the preservation of non-lexical content such as speaker identity. To evaluate that, we conducted both human and automatic evaluations, adhering to the Speaker Verification Protocol [12]. Given 4 speech segments, the first three were uttered by a single speaker and the fourth was uttered by either the same speaker or a different one; the goal is to verify whether the speaker in the fourth sample is the same as in the first three¹. For the human evaluation, we recorded 400 human answers; in 82% of cases, listeners were able to distinguish whether the speaker in the fourth sample matched the speaker in the first three. In the automatic evaluation setup, we used the automatic speaker verification system proposed by [12]. The Equal Error Rate (EER) of the system is 18% (82% accuracy) on the generated messages, and 15% (85% accuracy) on the original messages. Hence, we deduce that much of the speaker identity information is preserved in the generated messages.

5.4. Robustness to channel distortion

Another critical evaluation is performance under noisy conditions. To explore that, we applied different channel distortion and compression techniques to the reconstructed carrier $\tilde{c}$.
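Two of the distortions examined in this section, additive white Gaussian noise and a reduction from 16-bit to 8-bit precision, are easy to reproduce on a toy waveform. The sketch below is illustrative only. It assumes samples normalized to [-1, 1), uses $\sigma$ as the per-sample standard deviation of the noise (which may differ from the "norm of the added noise" used in Table 3), and defines SNR as signal energy over error energy in decibels, since the paper does not spell out its SNR formula. All function names are hypothetical.

```python
import math
import random

def snr_db(clean, distorted):
    """10 * log10(signal energy / error energy); assumed SNR definition."""
    signal = sum(s * s for s in clean)
    noise = sum((s - d) ** 2 for s, d in zip(clean, distorted))
    return float("inf") if noise == 0 else 10 * math.log10(signal / noise)

def add_awgn(x, sigma, seed=0):
    """Add zero-mean Gaussian noise with standard deviation sigma."""
    rng = random.Random(seed)
    return [s + rng.gauss(0.0, sigma) for s in x]

def reduce_precision(x, bits=8):
    """Quantize samples in [-1, 1) to signed integers with `bits` bits."""
    levels = 2 ** (bits - 1)
    return [max(-levels, min(levels - 1, round(s * levels))) / levels for s in x]

# A toy carrier: a low-amplitude sinusoid.
carrier = [0.5 * math.sin(0.1 * n) for n in range(1000)]

snr_awgn = snr_db(carrier, add_awgn(carrier, sigma=0.01))
snr_16bit = snr_db(carrier, reduce_precision(carrier, bits=16))
snr_8bit = snr_db(carrier, reduce_precision(carrier, bits=8))
```

At 8 bits the quantization step is 1/128 of full scale, so any carrier modification smaller than roughly half that step can be rounded away entirely, whereas at 16 bits the step is 256 times finer.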
In Table 3 we describe message reconstruction results after distorting the carrier using: 16 kHz to 8 kHz down-sampling, MP3 compression (using different bit rates), 16-bit to 8-bit precision reduction, Additive White Gaussian Noise (AWGN), and Speckle noise. Results suggest that our method is robust to carrier down-sampling, MP3 compression, and noise addition. In contrast, the model is sensitive to bit precision change, but this is to be expected, as the message decoder relies on minuscule carrier modifications in order to reconstruct the hidden message.

Table 3: Noise robustness results. We denote by $\sigma$ the norm of the added noise.

| Noise | Msg. loss | Msg. SNR |
|---|---|---|
| Down-sampling to 8k | 0.046 | 7.72 |
| MP3 compression 128k | 0.045 | 6.88 |
| MP3 compression 64k | 0.062 | 5.34 |
| MP3 compression 32k | 0.089 | 2.15 |
| AWGN, σ = 0.01 | 0.077 | -12.52 |
| AWGN, σ = 0.001 | 0.044 | 8.50 |
| Speckle, σ = 0.1 | 0.035 | 8.26 |
| Speckle, σ = 0.01 | 0.035 | 8.76 |
| Prec. reduction 8-bit | 0.160 | 0.25 |

¹ We use speakers of the same gender to make the task of speaker differentiation more challenging.

6. Related work

A large variety of steganography methods have been proposed over the years, most of which are applied to images [3, 4]. Traditionally, steganographic functions exploited actual or perceptual redundancies in the carrier signal. The most common approach is to encode the secret message in the least significant bits of individual signal samples [13]. Other methods include concealing the secret message in the phase of the frequency components of the carrier [14] or in the form of the parameters of a minuscule echo that is introduced into the carrier signal [15].

Recently, neural networks have been widely used for steganography [1, 2, 16, 17, 18, 19, 20, 21, 22, 23, 24, 25]. The authors in [1] first suggested training neural networks to hide an entire image within another image (similarly to Figure 1A).
[2] extended the work of [1] by adding an adversarial loss term to the objective. [16] suggested using generative adversarial learning to generate steganographic images. However, none of the above approaches explored speech data, and all focused on hiding a single message only.

A closely related task is watermarking. Both approaches aim to encode a secret message into a data file. However, in steganography the goal is to perform secret communication, while in watermarking the goal is verification and ownership protection. Several watermarking techniques use LSB encoding [26, 27]. Recently, [28, 29] suggested embedding watermarks into neural network parameters.

7. Discussion and future work

In this work we show that the recently proposed deep learning models for image steganography are less suitable for audio data. We show that in order to utilize such models, time-domain transformations must be addressed during training. Moreover, we extend the general deep-learning steganography approach to hide multiple messages. We evaluated our model under several noisy conditions and showed empirically that such modifications to carriers are indistinguishable by humans and that the messages recovered by our model are highly intelligible. Finally, we demonstrated that voice speaker verification is a viable means of authentication for hidden speech messages.

For future work we would like to explore the ability of such steganographic methods to evade detection by steganalysis algorithms, and to incorporate such evasion capabilities as part of the training pipeline.

8. References

[1] Shumeet Baluja, "Hiding images in plain sight: Deep steganography," in Advances in Neural Information Processing Systems, 2017, pp. 2069–2079.

[2] Jiren Zhu, Russell Kaplan, Justin Johnson, and Li Fei-Fei, "Hidden: Hiding data with deep networks," in European Conference on Computer Vision. Springer, 2018, pp. 682–697.
[3] Tayana Morkel, Jan HP Eloff, and Martin S Olivier, "An overview of image steganography," in ISSA, 2005, pp. 1–11.

[4] GC Kessler, "An overview of steganography for the computer forensics examiner. Retrieved February 26, 2006," 2004.

[5] Jae Soo Lim and Alan V Oppenheim, "Enhancement and bandwidth compression of noisy speech," Proceedings of the IEEE, vol. 67, no. 12, pp. 1586–1604, 1979.

[6] Kishore Jaganathan, Yonina C Eldar, and Babak Hassibi, "STFT phase retrieval: Uniqueness guarantees and recovery algorithms," IEEE Journal of Selected Topics in Signal Processing, vol. 10, no. 4, pp. 770–781, 2016.

[7] E Hofstetter, "Construction of time-limited functions with specified autocorrelation functions," IEEE Transactions on Information Theory, vol. 10, no. 2, pp. 119–126, 1964.

[8] Daniel Griffin and Jae Lim, "Signal estimation from modified short-time Fourier transform," IEEE Transactions on Acoustics, Speech, and Signal Processing, vol. 32, no. 2, pp. 236–243, 1984.

[9] John S Garofolo, Lori F Lamel, William M Fisher, Jonathan G Fiscus, and David S Pallett, "DARPA TIMIT acoustic-phonetic continuous speech corpus CD-ROM. NIST speech disc 1-1.1," NASA STI/Recon Technical Report N, vol. 93, 1993.

[10] Joseph P Campbell, "Testing with the YOHO CD-ROM voice verification corpus," in ICASSP, 1995.

[11] Yann N Dauphin, Angela Fan, Michael Auli, and David Grangier, "Language modeling with gated convolutional networks," in Proceedings of the 34th International Conference on Machine Learning, Volume 70. JMLR.org, 2017, pp. 933–941.

[12] Georg Heigold, Ignacio Moreno, Samy Bengio, and Noam Shazeer, "End-to-end text-dependent speaker verification," in 2016 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2016, pp. 5115–5119.
[13] P Jayaram, HR Ranganatha, and HS Anupama, "Information hiding using audio steganography–a survey," The International Journal of Multimedia & Its Applications (IJMA), vol. 3, pp. 86–96, 2011.

[14] Xiaoxiao Dong, Mark F Bocko, and Zeljko Ignjatovic, "Data hiding via phase manipulation of audio signals," in Acoustics, Speech, and Signal Processing, 2004. Proceedings (ICASSP'04). IEEE International Conference on. IEEE, 2004, vol. 5, pp. V–377.

[15] Walter Bender, Daniel Gruhl, and Norishige Morimoto, "Method and apparatus for echo data hiding in audio signals," Apr. 6 1999, US Patent 5,893,067.

[16] Jamie Hayes and George Danezis, "Generating steganographic images via adversarial training," in Advances in Neural Information Processing Systems, 2017, pp. 1954–1963.

[17] Yinlong Qian, Jing Dong, Wei Wang, and Tieniu Tan, "Deep learning for steganalysis via convolutional neural networks," in Media Watermarking, Security, and Forensics 2015. International Society for Optics and Photonics, 2015, vol. 9409, p. 94090J.

[18] Lionel Pibre, Jérôme Pasquet, Dino Ienco, and Marc Chaumont, "Deep learning is a good steganalysis tool when embedding key is reused for different images, even if there is a cover source mismatch," Electronic Imaging, vol. 2016, no. 8, pp. 1–11, 2016.

[19] Pin Wu, Yang Yang, and Xiaoqiang Li, "Stegnet: Mega image steganography capacity with deep convolutional network," Future Internet, vol. 10, no. 6, pp. 54, 2018.

[20] Weixuan Tang, Shunquan Tan, Bin Li, and Jiwu Huang, "Automatic steganographic distortion learning using a generative adversarial network," IEEE Signal Processing Letters, vol. 24, no. 10, pp. 1547–1551, 2017.

[21] Nameer N El-emam, "Embedding a large amount of information using high secure neural based steganography algorithm," International Journal of Information and Communication Engineering, vol. 4, no. 2, pp. 2, 2008.
[22] Haichao Shi, Jing Dong, Wei Wang, Yinlong Qian, and Xiaoyu Zhang, "SSGAN: Secure steganography based on generative adversarial networks," in Pacific Rim Conference on Multimedia. Springer, 2017, pp. 534–544.

[23] Wenxue Cui, Shaohui Liu, Feng Jiang, Yongliang Liu, and Debin Zhao, "Multi-stage residual hiding for image-into-audio steganography," in ICASSP 2020 - 2020 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2020, pp. 2832–2836.

[24] Shivam Agarwal and Siddarth Venkatraman, "Deep residual neural networks for image in speech steganography," arXiv preprint arXiv:2003.13217, 2020.

[25] Ru Zhang, Shiqi Dong, and Jianyi Liu, "Invisible steganography via generative adversarial networks," Multimedia Tools and Applications, vol. 78, no. 7, pp. 8559–8575, 2019.

[26] Ron G Van Schyndel, Andrew Z Tirkel, and Charles F Osborne, "A digital watermark," in Image Processing, 1994. Proceedings. ICIP-94., IEEE International Conference. IEEE, 1994, vol. 2, pp. 86–90.

[27] Raymond B Wolfgang and Edward J Delp, "A watermark for digital images," in ICIP (3), 1996, pp. 219–222.

[28] Yusuke Uchida, Yuki Nagai, Shigeyuki Sakazawa, and Shin'ichi Satoh, "Embedding watermarks into deep neural networks," in Proceedings of the 2017 ACM on International Conference on Multimedia Retrieval. ACM, 2017, pp. 269–277.

[29] Yossi Adi, Carsten Baum, Moustapha Cisse, Benny Pinkas, and Joseph Keshet, "Turning your weakness into a strength: Watermarking deep neural networks by backdooring," USENIX, 2018.
