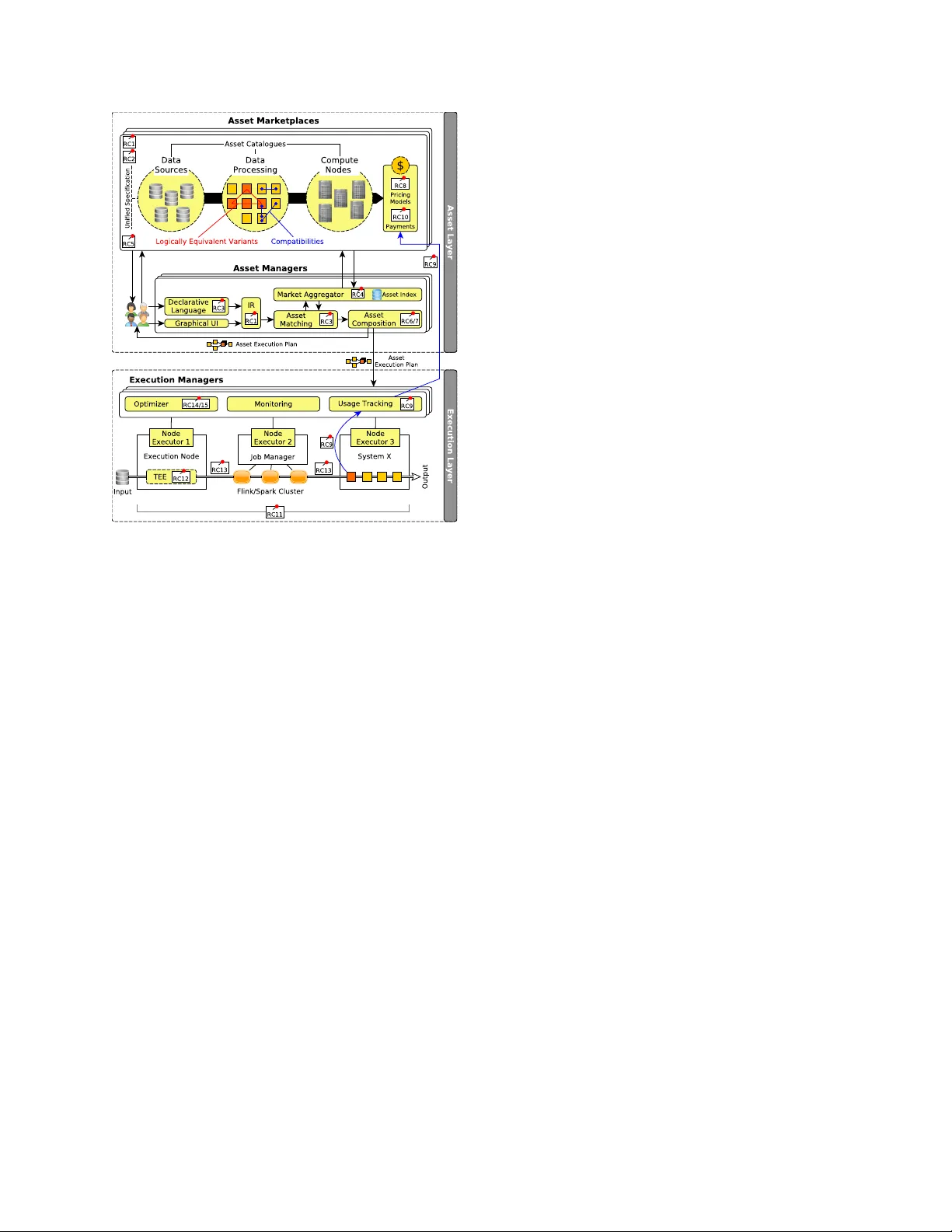

Agora: A Unified Asset Ecosystem Going Beyond Marketplaces and Cloud Services

Data, algorithms, and compute/storage infrastructure are key assets that drive data science and artificial intelligence applications. As providing all these assets requires a huge investment, data science and artificial intelligence technologies are …

Authors: Jonas Traub, Jorge-Arnulfo Quiane-Ruiz, Zoi Kaoudi