On Scaling Data-Driven Loop Invariant Inference

Automated synthesis of inductive invariants is an important problem in software verification. Once all the invariants have been specified, software verification reduces to checking of verification conditions. Although static analyses to infer invaria…

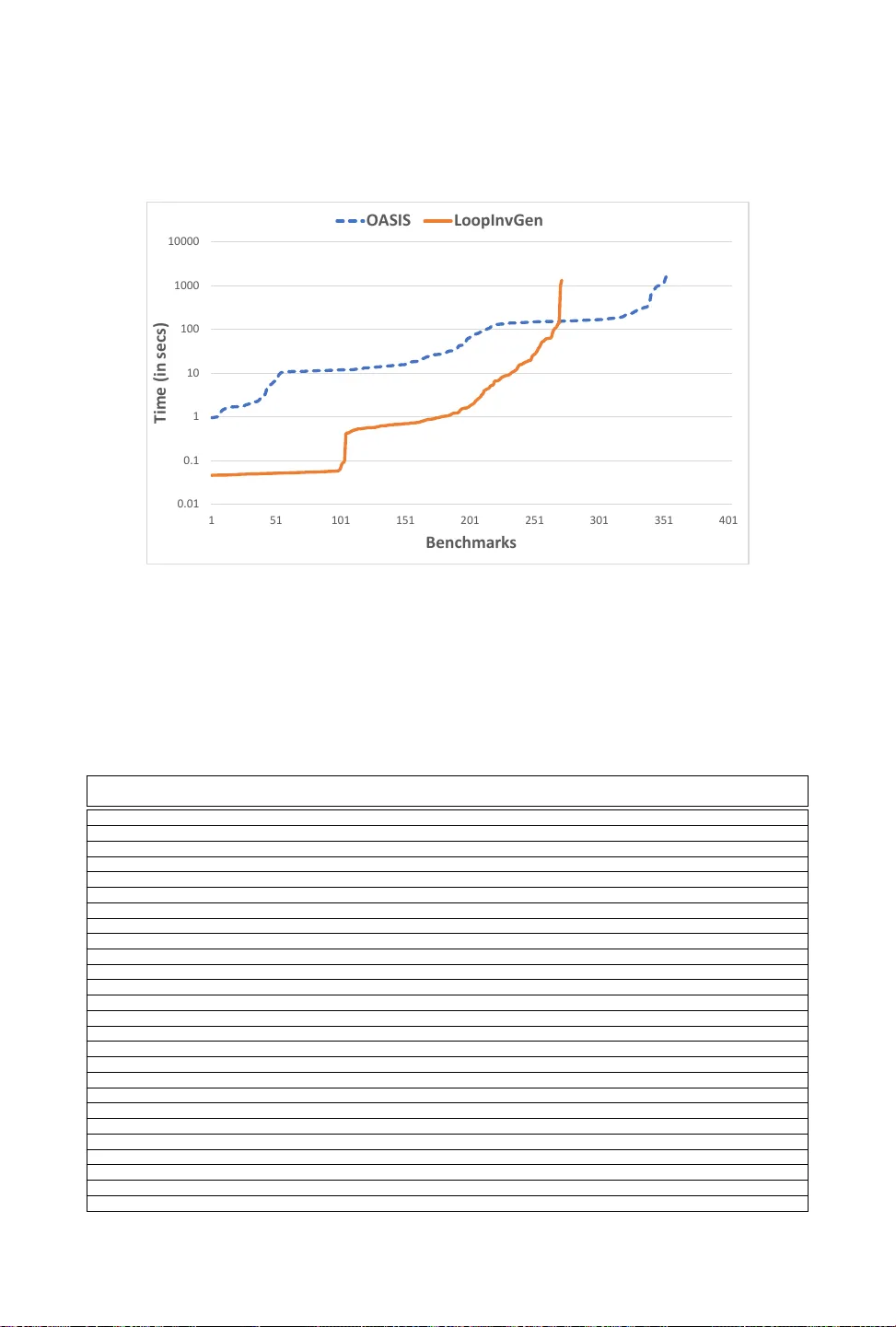

Authors: Sahil Bhatia, Saswat Padhi, Nagarajan Natarajan