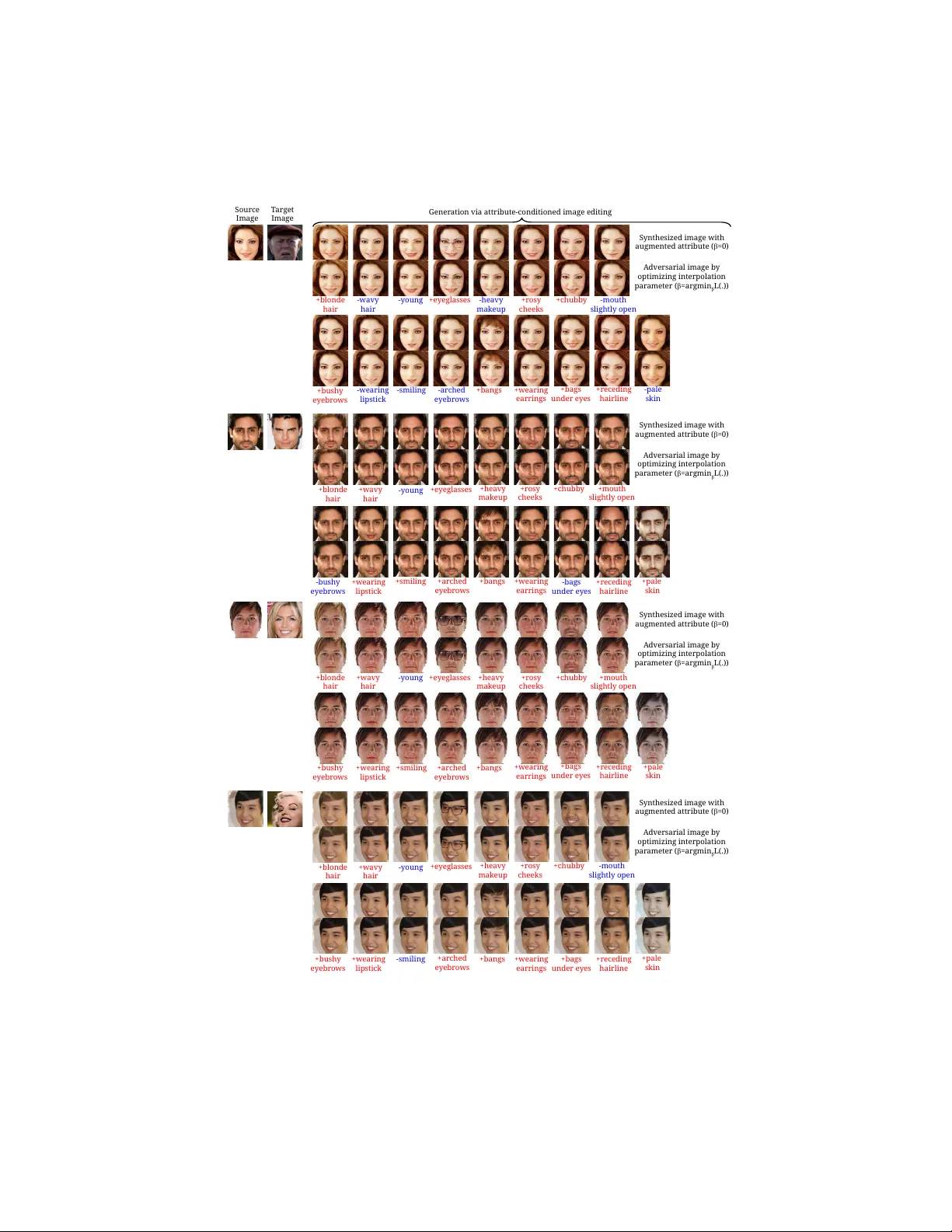

SemanticAdv: Generating Adversarial Examples via Attribute-conditional Image Editing

Deep neural networks (DNNs) have achieved great success in various applications due to their strong expressive power. However, recent studies have shown that DNNs are vulnerable to adversarial examples which are manipulated instances targeting to mis…

Authors: Haonan Qiu, Chaowei Xiao, Lei Yang