Bridging data science and dynamical systems theory

This short review describes mathematical techniques for statistical analysis and prediction in dynamical systems. Two problems are discussed, namely (i) the supervised learning problem of forecasting the time evolution of an observable under potentia…

Authors: Tyrus Berry, Dimitrios Giannakis, John Harlim

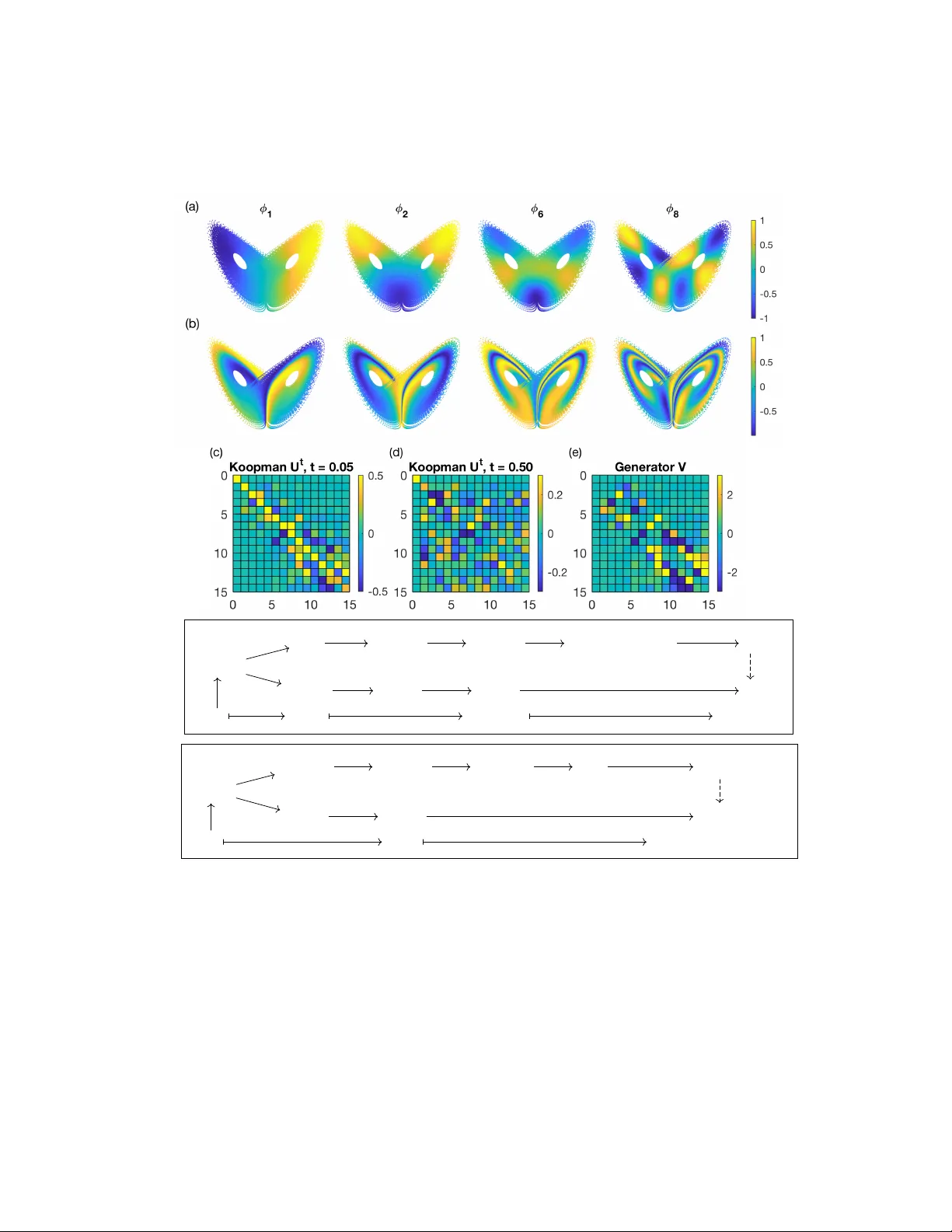

Bridging data science and dynamical systems theory T yrus Berry ∗ Dimitrios Giannakis † John Harlim ‡ July 1, 2020 Mo dern science is undergoing what migh t arguably b e called a “data rev olution”, manifested by a rapid gro wth of observed and sim ulated data from complex systems, as w ell as vigorous research on in mathemat- ical and computational framew orks for data analysis. In man y scien tific branc hes, these efforts hav e led to the creation of statistical models of complex systems that matc h or exceed the skill of first-principles mo d- els. Y et, despite these successes, statistical mo dels are oftentimes treated as black b oxes, providing lim- ited guarantees ab out stability and conv ergence as the amoun t of training data increases. Blac k-b ox mo dels also offer limited insights about the operating mec hanisms (physics), the understanding of which is cen tral to the adv ancemen t of science. In this short review, w e describ e mathematical tec hniques for statistical analysis and prediction of time-ev olving phenomena, ranging from simple ex- amples suc h as an oscillator, to highly complex sys- tems suc h as the turbulen t motion of the Earth’s atmosphere, the folding of proteins, and the evolu- tion of species p opulations in an ecosystem. Our main thesis is that com bining ideas from the theory of dynamical systems with learning theory provides an effective route to data-driv en mo dels of complex systems, with refinable predictions as the amount of training data increases, and ph ysical in terpretability through discov ery of coherent patterns around which the dynamics is organized. Our article thus serves as an invitation to explore ideas at the interface of the ∗ Tyrus Berry is an assistant professor at George Mason Universit y . His email address is tb erry@gmu.edu. † Dimitrios Giannakis is an associate professor at New Y ork Universit y . His email address is dimitris@cims.nyu.edu. ‡ John Harlim is a professor at the Pennsylv ania State Uni- versit y . His email address is jharlim@psu.edu. t wo fields. This is a v ast sub ject, and inv ariably a num- b er of important developmen ts in areas such as deep learning [EHJ17], reservoir computing [PHG + , VPH + 20], con trol [KM18, KNP + 20], and non- autonomous/sto c hastic systems [F ro13, KNK + 18] are not discussed here. Statistical forecasting and coher- en t pattern extraction Consider a dynamical system of the form Φ t : Ω → Ω, where Ω is the state space and Φ t , t ∈ R , the flow map. F or example, Ω could b e Euclidean space R d , or a more general manifold, and Φ t the solution map for a system of ODEs defined on Ω. Alternativ ely , in a PDE setting, Ω could be an infinite-dimensional function space and Φ t an ev olution group acting on it. W e consider that Ω has the structure of a metric space equipped with its Borel σ -algebra, playing the role of an ev ent space, with measurable functions on Ω acting as random v ariables, called observables . In a statistical mo deling scenario, we consider that a v ailable to us are time series of v arious such observ- ables, sampled along a dynamical tra jectory which w e will treat as b eing unkno wn. Sp ecifically , w e assume that we hav e access to t w o observ ables, X : Ω → X and Y : Ω → Y , resp ectively referred to as co v ari- ate and resp onse functions, together with corresp ond- ing time series x 0 , x 1 , . . . , x N − 1 and y 0 , y 1 , . . . , y N − 1 , where x n = X ( ω n ), y n = Y ( ω n ), and ω n = Φ n ∆ t ( ω 0 ). Here, X and Y are metric spaces, ∆ t is a p ositiv e sampling interv al, and ω 0 an arbitrary p oint in Ω initializing the tra jectory . W e shall refer to the 1 collection { ( x 0 , y 0 ) , . . . , ( x N − 1 , y N − 1 ) } as the train- ing data. W e require that Y is a Banach space (so that one can talk ab out exp ectations and other func- tionals applied to Y ), but allo w the co v ariate space X to b e nonlinear. Man y problems in statistical mo deling of dynami- cal systems can be expressed in this framework. F or instance, in a lo w-dimensional ODE setting, X and Y could both be the iden tity map on Ω = R d , and the task could be to build a model for the ev olution of the full system state. W eather forecasting is a classical high-dimensional application, where Ω is the abstract state space of the climate system, and X a (highly non-in vertible) map represen ting measurements from satellites, meteorological stations, and other sensors a v ailable to a forecaster. The resp onse Y could be temp erature at a sp ecific location, Y = R , illustrat- ing that the resp onse space ma y b e of considerably lo wer dimension than the cov ariate space. In other cases, e.g., forecasting the temp erature field ov er a geographical region, Y may be a function space. The t wo primary questions that will concern us here are: Problem 1 (Statistical forecasting) . Giv en the training data, construct (“learn”) a function Z t : X → Y that predicts Y at a lead time t ≥ 0. That is, Z t should hav e the property that Z t ◦ X is closest to Y ◦ Φ t among all functions in a suitable class. Problem 2 (Coherent pattern extraction) . Giv en the training data, iden tify a collection of observ ables z j : Ω → Y which hav e the prop ert y of evolving co- heren tly under the dynamics. By that, we mean that z j ◦ Φ t should b e relatable to z j in a natural w ay . These problems ha ve an extensive history of study from an in terdisciplinary p ersp ective spanning math- ematics, statistics, physics, and man y other fields. Here, our fo cus will b e on nonp ar ametric metho ds , whic h do not employ explicit parametric mo dels for the dynamics. Instead, they use universal structural prop erties of dynamical systems to inform the design of data analysis tec hniques. F rom a learning stand- p oin t, Problems 1 and 2 can b e thought of as su- p ervise d and unsup ervise d learning, resp ectively . A mathematical requirement we will impose to metho ds addressing either problem is that they ha ve a w ell- defined notion of con vergence, i.e., they are refinable, as the num ber N of training samples increases. Analog and POD approac hes Among the earliest examples of nonparametric fore- casting tec hniques is Lorenz’s analog method [Lor69]. This simple, elegant approac h mak es predictions b y trac king the evolution of the resp onse along a dy- namical tra jectory in the training data (the analogs). Go o d analogs are selected according to a measure of geometrical similarity b etw een the cov ariate v ariable observ ed at forecast initialization and the cov ariate training data. This metho d p osits that past b ehav- ior of the system is represen tativ e of its future b ehav - ior, so lo oking up states in a historical record that are closest to current observ ations is lik ely to yield a skill- ful forecast. Subsequent methodologies ha ve also em- phasized asp ects of state space geometry , e.g., using the training data to appro ximate the ev olution map through patched local linear mo dels, often leveraging dela y coordinates for state space reconstruction. Early approaches to coherent pattern extraction in- clude the prop er orthogonal decomp osition (POD) [Kos43], which is closely related to principal com- p onen t analysis (PCA; in tro duced in the early 20th cen tury b y P earson), the Karhunen-Lo ` ev e expansion, and empirical orthogonal function (EOF) analysis. Assuming that Y is a Hilbert space, POD yields an expansion Y ≈ Y L = P L j =1 z j , z j = u j σ j ψ j . Ar- ranging the data into a matrix Y = ( y 0 , . . . , y N − 1 ), the σ j are the singular v alues of Y (in decreasing or- der), the u j are the corresponding left singular v ec- tors, called EOFs, and the ψ j are giv en b y pro jec- tions of Y onto the EOFs, ψ j ( ω ) = h u j , Y ( ω ) i Y . That is, the principal comp onent ψ j : Ω → R is a linear feature characterizing the unsup ervised data { y 0 , . . . , y N − 1 } . If the data is dra wn from a proba- bilit y measure µ , as N → ∞ the POD expansion is optimal in an L 2 ( µ ) sense; that is, Y L has minimal L 2 ( µ ) error k Y − Y L k L 2 ( µ ) among all rank- L appro x- imations of Y . Effectively , from the p ersp ective of POD, the imp ortant comp onents of Y are those cap- turing maximal v ariance. Despite many successes in c hallenging applications 2 (e.g., turbulence), it has b een recognized that POD ma y not reveal dynamically significan t observ ables, offering limited predictability and physical insight. In recent years, there has been significant in terest in tec hniques that address this shortcoming by mo dify- ing the linear map Y to hav e an explicit dep endence on the dynamics [BK86], or replacing it b y an evolu- tion operator [DJ99, MB99]. Either directly or indi- rectly , these metho ds mak e use of op erator-theoretic ergo dic theory , which we no w discuss. Op erator-theoretic form ulation The operator-theoretic formulation of dynamical sys- tems theory shifts atten tion from the state-space p ersp ectiv e, and instead characterizes the dynamics through its action on linear spaces of observ ables. Denoting the v ector space of Y -v alued functions on Ω by F , for every time t the dynamics has a nat- ural induced action U t : F → F giv en b y comp o- sition with the flow map, U t f = f ◦ Φ t . It then follo ws by definition that U t is a linear operator, i.e., U t ( αf + g ) = αU t f + U t g for all observ ables f , g ∈ F and every scalar α ∈ C . The op erator U t is kno wn as a c omp osition op er ator , or Ko opman op er- ator after classical work of Bernard Koopman in the 1930s [Koo31], which established that a general (p o- ten tially nonlinear) dynamical system can b e charac- terized through intrinsically linear operators acting on spaces of observ ables. A related notion is that of the tr ansfer op er ator , P t : M → M , which describes the action of the dynamics on a space of measures M via the pushforward map, P t m := Φ t ∗ m = m ◦ Φ − t . In a n um ber of cases, F and M are dual spaces to one another (e.g., contin uous functions and Radon mea- sures), in which case U t and P t are dual op erators. If the space of observ ables under consideration is equipp ed with a Banach or Hilb ert space struc- ture, and the dynamics preserves that structure, the op erator-theoretic formulation allo ws a broad range of tools from sp ectral theory and approximation the- ory for linear op erators to b e employ ed in the study of dynamical systems. F or our purp oses, a par- ticularly adv an tageous asp ect of this approac h is that it is amenable to rigorous statistical approxi- mation, whic h is one of our principal ob jectiv es. It should be kept in mind that the spaces of observ ables encoun tered in applications are generally infinite- dimensional, leading to b ehaviors with no counter- parts in finite-dimensional linear algebra, such as un- b ounded op erators and contin uous sp ectrum. In fact, as we will see below, the presence of con tin uous sp ec- trum is a hallmark of mixing (c haotic) dynamics. In this review, we restrict attention to the op erator-theoretic description of me asur e-pr eserving, er go dic dynamics . By that, we mean that there is a probability measure µ on Ω such that (i) µ is in- v arian t under the flow, i.e., Φ t ∗ µ = µ ; and (ii) every measurable, Φ t -in v arian t set has either zero or full µ - measure. W e also assume that µ is a Borel measure with compact supp ort A ⊆ Ω; this set is necessarily Φ t -in v arian t. An example known to rigorously sat- isfy these prop erties is the Lorenz 63 (L63) system on Ω = R 3 , whic h has a compactly supported, ergo dic in v arian t measure supp orted on the famous “butter- fly” fractal attractor; see Figure 1. L63 exemplifies the fact that a smo oth dynamical system may ex- hibit in v arian t measures with non-smo oth supp orts. This b ehavior is ubiquitous in mo dels of physical phe- nomena, which are formulated in terms of smo oth differen tial equations, but whose long-term dynam- ics concentrate on lo w er-dimensional subsets of state space due to the presence of dissipation. Our meth- o ds should therefore not rely on the existence of a smo oth structure for A . In the setting of ergo dic, measure-preserving dy- namics on a metric space, tw o relev an t structures that the dynamics ma y be required to preserve are con tinuit y and µ -measurabilit y of observ ables. If the flo w Φ t is contin uous, then the Koopman op erators act on the Banach space F = C ( A, Y ) of contin u- ous, Y -v alued functions on A , equipp ed with the uni- form norm, by isometries, i.e., k U t f k F = k f k F . If Φ t is µ -measurable, then U t lifts to an operator on equiv alence classes of Y -v alued functions in L p ( µ, Y ), 1 ≤ p ≤ ∞ , and acts again by isometries. If Y is a Hilb ert space (with inner product h· , ·i Y ), the case p = 2 is sp ecial, since L 2 ( µ, Y ) is a Hilb ert space with inner pro duct h f , g i L 2 ( µ, Y ) = R Ω h f ( ω ) , g ( ω ) i Y dµ ( ω ), on which U t acts as a unitary map, U t ∗ = U − t . Clearly , the prop erties of approximation tec hniques 3 for observ ables and evolution op erators dep end on the underlying space. F or instance, C ( A, Y ) has a w ell-defined notion of p oint wise ev aluation at ev ery ω ∈ Ω by a contin uous linear map δ ω : C ( A, Y ) → Y , δ ω f = f ( ω ), which is useful for in terp olation and forecasting, but lac ks an inner-pro duct structure and asso ciated orthogonal pro jections. On the other hand, L 2 ( µ ) has inner-pro duct structure, whic h is v ery useful theoretically as w ell as for n umerical algo- rithms, but lacks the notion of p oint wise ev aluation. Letting F stand for an y of the C ( A, Y ) or L p ( µ, Y ) spaces, the set U = { U t : F → F } t ∈ R forms a strongly con tin uous group under comp osition of op- erators. That is, U t ◦ U s = U t + s , U t, − 1 = U − t , and U 0 = Id, so that U is a group, and for every f ∈ F , U t f conv erges to f in the norm of F as t → 0. A cen tral notion in suc h evolution groups is that of the gener ator , defined by the F -norm limit V f = lim t → 0 ( U t f − f ) /t for all f ∈ F for which the limit exists. It can b e sho wn that the domain D ( V ) of all such f is a dense subspace of F , and V : D ( V ) → F is a closed, un bounded op erator. In- tuitiv ely , V can b e though t as a directional deriv ativ e of observ ables along the dynamics. F or example, if Y = C , A is a C 1 manifold, and the flow Φ t : A → A is generated b y a con tinuous v ector field ~ V : A → T A , the generator of the Ko opman group on C ( A ) has as its domain the space C 1 ( A ) ⊂ C ( A ) of contin- uously differen tiable, complex-v alued functions, and V f = ~ V · ∇ f for f ∈ C 1 ( A ). A strongly contin- uous ev olution group is completely c haracterized by its generator, as any t w o such groups with the same generator are identical. The generator acqui res additional properties in the setting of unitary evolution groups on H = L 2 ( µ, Y ), where it is skew-adjoin t, V ∗ = − V . Note that the sk ew-adjoin tness of V holds for more general measure-preserving dynamics than Hamiltonian sys- tems, whose generator is sk ew-adjoin t with resp ect to Leb esgue measure. By the spectral theorem for sk ew- adjoin t op erators, there exists a unique pro jection- v alued measure E : B ( R ) → B ( H ), giving the gener- ator and Ko opman op erator as the spectral integrals V = Z R iα dE ( α ) , U t = e tV = Z R e iαt dE ( α ) . Here, B ( R ) is the Borel σ -algebra on the real line, and B ( H ) the space of b ounded op erators on H . In- tuitiv ely , E can b e thought of as an op erator ana- log of a complex-v alued sp ectral measure in F ourier analysis, with R playing the role of frequency space. That is, given f ∈ H , the C -v alued Borel measure E f ( S ) = h f , E ( S ) f i H is precisely the F ourier sp ec- tral measure asso ciated with the time-auto correlation function C f ( t ) = h f , U t f i H . The latter, admits the F ourier representation C f ( t ) = R R e iαt dE f ( α ). The Hilb ert space H admits a U t -in v arian t split- ting H = H a ⊕ H c in to orthogonal subspaces H a and H c asso ciated with the p oint and contin uous comp o- nen ts of E , respectively . In particular, E has a unique decomp osition E = E a + E c with H a = ran E a ( R ) and H c = ran E c ( R ), where E a is a purely atomic sp ectral measure, and E c is a sp ectral measure with no atoms. The atoms of E a (i.e., the singletons { α j } with E a ( { α j } ) 6 = 0) corresp ond to eigenfr e quencies of the generator, for whic h the eigen v alue equation V z j = iαz j has a nonzero solution z j ∈ H a . Un- der ergodic dynamics, every eigenspace of V is one- dimensional, so that if z j is normalized to unit L 2 ( µ ) norm, E ( { α j } ) f = h z j , f i L 2 ( µ ) z j . Ev ery suc h z j is an eigenfunction of the Koopman op erator U t at eigen- v alue e iα j t , and { z j } is an orthonormal basis of H a . Th us, every f ∈ H a has the quasip erio dic evolution U t f = P j e iα j t h z j , f i L 2 ( µ ) z j , and the auto correla- tion C f ( t ) is also quasip erio dic. While H a alw ays con tains constan t eigenfunctions with zero frequency , it might not hav e any non-constan t elements. In that case, the dynamics is said to b e we ak-mixing . In contrast to the quasip erio dic ev olution of observ- ables in H a , observ ables in the contin uous sp ectrum subspace exhibit a loss of correlation characteristic of mixing (c haotic) dynamics. Sp ecifically , for every f ∈ H c the time-av eraged autocorrelation function ¯ C f ( t ) = R t 0 | C f ( s ) | ds/t tends to 0 as | t | → ∞ , as do cross-correlation functions h g , U t f i L 2 ( µ ) b et ween ob- serv ables in H c and arbitrary observ ables in L 2 ( µ ). Data-driv en forecasting Based on the concepts in troduced ab ov e, one can for- m ulate statistical forecasting in Problem 1 as the 4 task of constructing a function Z t : X → Y on co v ariate space X , such that Z t ◦ X optimally ap- pro ximates U t Y among all functions in a suitable class. W e set Y = C , so the resp onse v ariable is scalar-v alued, and consider the Ko opman op erator on L 2 ( µ ), so we ha v e access to orthogonal pro jections. W e also assume for no w that the co v ariate function X is injective, so ˆ Y t := Z t ◦ X should b e able to ap- pro ximate U t Y to arbitrarily high precision in L 2 ( µ ) norm. Indeed, let { u 0 , u 1 , . . . } b e an orthonormal ba- sis of L 2 ( ν ), where ν = X ∗ µ is the pushforw ard of the inv ariant measure on to X . Then, { φ 0 , φ 1 , . . . } with φ j = u j ◦ X is an orthonormal basis of L 2 ( µ ). Giv en this basis, and because U t is b ounded, we ha ve U t Y = lim L →∞ U t L Y , where the partial sum U t L Y := P L − 1 j =0 h U t Y , φ j i L 2 ( µ ) φ j con verges in L 2 ( µ ) norm. Here, U t L is a finite-rank map on L 2 ( µ ) with range span { φ 0 , . . . , φ L − 1 } , represen ted b y an L × L matrix U ( t ) with elemen ts U ij ( t ) = h φ i , U t φ j i L 2 ( µ ) . Defining ~ y = ( ˆ y 0 , . . . , ˆ y L − 1 ) > , ˆ y j = h φ j , U t Y i L 2 ( µ ) , and ( ˆ z 0 ( t ) , . . . , ˆ z L − 1 ( t )) > = U ( t ) ~ y , w e ha v e U t L Y = P L − 1 j =0 ˆ z j ( t ) φ j . Since φ j = u j ◦ X , this leads to the estimator ˆ Z t,L ∈ L 2 ( ν ), with ˆ Z t,L = P L − 1 j =0 ˆ z j ( t ) u j . The approac h outlined ab ov e ten tativ ely pro vides a consisten t forecasting framework. Y et, while in principle app ealing, it has three ma jor shortcomings: (i) Apart from special cases, the in v arian t measure and an orthonormal basis of L 2 ( µ ) are not known. In particular, orthogonal functions with resp ect to an ambien t measure on Ω (e.g., Leb esgue-orthogonal p olynomials) will not suffice, since there are no guar- an tees that suc h functions form a Schauder basis of L 2 ( µ ), let alone b e orthonormal. Even with a basis, w e cannot ev aluate U t on its elements without kno w- ing Φ t . (ii) P oin t wise ev alu ation on L 2 ( µ ) is not de- fined, making ˆ Z t,L inadequate in practice, even if the co efficien ts ˆ z j ( t ) are known. (iii) The cov ariate map X is oftentimes non-inv ertible, and th us the φ j span a strict subspace of L 2 ( µ ). W e no w describe metho ds to ov ercome these obstacles using learning theory . Sampling measures and ergo dicit y The dynamical tra jectory { ω 0 , . . . , ω N − 1 } in state space underlying the training data is the support of a discrete sampling me asur e µ N := P N − 1 n =0 δ ω n / N . A k ey consequence of ergodicity is that for Lebesgue- a.e. sampling interv al ∆ t and µ -a.e. starting p oint ω 0 ∈ Ω, as N → ∞ , the sampling measures µ N w eak- con verge to the inv ariant measure µ ; that is, lim N →∞ Z Ω f dµ N = Z Ω f dµ, ∀ f ∈ C (Ω) . (1) Since in tegrals against µ N are time a v erages on dy- namical tra jectories, R Ω f dµ N = P N − 1 n =0 f ( ω n ) / N , er- go dicit y pro vides an empirical means of accessing the statistics of the inv ariant measure. In fact, many systems encountered in applications p ossess so-called physic al me asur es , where (1) holds for ω 0 in a “larger” set of positive measure with respect to an ambien t measure (e.g., Leb esgue measure) from which exp er- imen tal initial conditions are drawn. Hereafter, we will let M b e a compact subset of Ω, whic h is forward- in v arian t under the dynamics (i.e., Φ t ( M ) ⊆ M for all t ≥ 0), and thus necessarily contains A . F or ex- ample, in dissipative dynamical systems such as L63, M can b e chosen as a compact absorbing ball. Shift op erators Ergo dicit y suggests that appropriate data-driv en analogs of are the L 2 ( µ N ) spaces induced by the the sampling measures µ N . F or a given N , L 2 ( µ N ) con- sists of equiv alence classes of measurable functions f : Ω → C having common v alues at the sampled states ω n , and the inner pro duct of tw o elements f , g ∈ L 2 ( µ N ) is giv en b y an empirical time-correlation, h f , g i µ N = R Ω f ∗ g dµ N = P N − 1 n =0 f ∗ ( ω n ) g ( ω n ) / N . Moreo ver, if the ω n are distinct (as w e will assume for simplicit y of exp osition), L 2 ( µ N ) has dimension N , and is isomorphic as a Hilbert space to C N equipp ed with a normalized dot pro duct. Given that, w e can represen t ev ery f ∈ L 2 ( µ N ) b y a column v ector ~ f = ( f ( ω 0 ) , . . . , f ( ω N − 1 )) > ∈ C N , and every linear map A : L 2 ( µ N ) → L 2 ( µ N ) by an N × N matrix A , so that ~ g = A ~ f is the column v ector represen t- ing g = Af . The elements of ~ f can also b e under- sto o d as expansion co efficients in the standard ba- sis { e 0 ,N , . . . , e N − 1 ,N } of L 2 ( µ N ), where e j,N ( ω n ) = N 1 / 2 δ j n ; that is, f ( ω n ) = h e n,N , f i L 2 ( µ N ) . Similarly , 5 the elemen ts of A corresp ond to the op erator matrix elemen ts A ij = h e i,N , Ae j,N i L 2 ( µ N ) . Next, w e w ould lik e to define a Ko opman op erator on L 2 ( µ N ), but this space do es not admit a suc h an op erator as a comp osition map induced b y the dy- namical flow Φ t on Ω. This is b ecause Φ t do es not preserv e null sets with resp ect to µ N , and thus does not lead to a w ell-defined comp osition map on equiv a- lence classes of functions in L 2 ( µ N ). Nev ertheless, on L 2 ( µ N ) there is an analogous construct to the Ko op- man op erator on L 2 ( µ ), namely the shift op er ator , U q N : L 2 ( µ N ) → L 2 ( µ N ), q ∈ Z , defined as U q N f ( ω n ) = ( f ( ω n + q ) , 0 ≤ n + q ≤ N − 1 , 0 , otherwise . Ev en though U q N is not a comp osition map, in- tuitiv ely it should ha v e a connection with the Ko opman operator U q ∆ t . One could consider, for instance, the matrix represen tation ˜ U N ( q ) = [ h e i,N , U q N e j,N i L 2 ( µ N ) ] in the standard basis, and at- tempt to connect it with a matrix represen tation of U q ∆ t in an orthonormal basis of L 2 ( µ ). Ho w ev er, the issue with this approac h is that the e j,N do not hav e N → ∞ limits in L 2 ( µ ), meaning that there is no suitable notion of N → ∞ conv ergence of the matrix elemen ts of U q N in the standard basis. In resp onse, we will construct a represen tation of the shift op erator in a differen t orthonormal basis with a well-defined N → ∞ limit. The main to ols that w e will use are kernel inte gr al op er ators , whic h we now describ e. Kernel in tegral op erators In the presen t context, a kernel function will b e a real-v alued, contin uous function k : Ω × Ω → R with the prop ert y that there exists a strictly p ositive, con- tin uous function d : Ω → R such that d ( ω ) k ( ω , ω 0 ) = d ( ω 0 ) k ( ω 0 , ω ) , ∀ ω , ω 0 ∈ Ω . (2) Notice the similarity b etw een (2) and the detailed balance relation in reversible Marko v chains. Let no w ρ b e any Borel probability measure with compact supp ort S ⊆ M included in the forward-in v ariant set M . It follows by contin uit y of k and compactness of S that the integral op erator K ρ : L 2 ( ρ ) → C ( M ), K ρ f = Z Ω k ( · , ω ) f ( ω ) dρ ( ω ) , (3) is w ell-defined as a b ounded op erator mapping ele- men ts of L 2 ( ρ ) in to contin uous functions on M . Us- ing ι ρ : C ( M ) → L 2 ( ρ ) to denote the canonical inclu- sion map, we consider tw o additional in tegral opera- tors, G ρ : L 2 ( ρ ) → L 2 ( ρ ) and ˜ G ρ : C ( M ) → C ( M ), with G ρ = ι ρ K ρ and ˜ G ρ = K ρ ι ρ , resp ectively . The operators G ρ and ˜ G ρ are compact op erators acting with the same integral form ula as K ρ in (3), but their codomains and domains, resp ectively , are differen t. Nevertheless, their nonzero eigenv alues co- incide, and φ ∈ L 2 ( ρ ) is an eigenfunction of G ρ cor- resp onding to a nonzero eigen v alue λ if and only if ϕ ∈ C ( M ) with ϕ = K ρ φ/λ is an eigenfunction of ˜ G ρ at the same eigenv alue. In effect, φ 7→ ϕ “in- terp olates” the L 2 ( ρ ) elemen t φ (defined only up to ρ -n ull sets) to the con tin uous, ev erywhere-defined function ϕ . It can be verified that if (2) holds, G ρ is a trace-class op erator with real eigenv alues, | λ 0 | ≥ | λ 1 | ≥ · · · & 0 + . Moreov er, there exists a Riesz basis { φ 0 , φ 1 , . . . , } of L 2 ( ρ ) and a corresp ond- ing dual basis { φ 0 0 , φ 0 1 , . . . } with h φ 0 i , φ j i L 2 ( ρ ) = δ ij , suc h that G ρ φ j = λ j φ j and G ∗ ρ φ 0 j = λ j φ 0 j . W e say that the kernel k is L 2 ( ρ ) -universal if G ρ has no zero eigen v alues; this is equiv alent to ran G ρ b eing dense in L 2 ( ρ ). Moreov er, k is said to be L 2 ( ρ ) -Markov if G ρ is a Marko v op erator, i.e., G ρ ≥ 0, G ρ f ≥ 0 if f ≥ 0, and G 1 = 1. Observ e now that the op erators G µ N asso ciated with the sampling measures µ N , henceforth abbrevi- ated b y G N , are represen ted by N × N kernel ma- trices G N = [ h e i,N , G N e j,N i L 2 ( µ N ) ] = [ k ( ω i , ω j )] in the standard basis of L 2 ( µ N ). F urther, if k is a pullbac k kernel from co v ariate space, i.e., k ( ω , ω 0 ) = κ ( X ( ω ) , X ( ω 0 )) for κ : X × X → R , then G N = [ κ ( x i , x j )] is empirically accessible from the train- ing data. P opular kernels in applications include the cov ariance kernel κ ( x, x 0 ) = h x, x 0 i X on an inner-pro duct space and the radial Gaussian kernel κ ( x, x 0 ) = e −k x − x 0 k 2 X / [Gen01]. It is also common to emplo y Marko v k ernels constructed b y normalization of symmetric kernels [CL06, BH16]. W e will use k N to 6 denote kernels with data-dep endent normalizations. A widely used strategy for learning with inte- gral op erators [vLBB08] is to construct families of k ernels k N con verging in C ( M × M ) norm to k . This implies that for ev ery nonzero eigen v alue λ j of G ≡ G µ , the sequence of eigenv alues λ j,N of G N satisfies lim N →∞ λ j,N = λ j . Moreo v er, there exists a sequence of eigenfunctions φ j,N ∈ L 2 ( µ N ) corresp onding to λ j,N , whose contin uous representa- tiv es, ϕ j,N = K N φ j,N /λ j,N , conv erge in C ( M ) to ϕ j = K φ j /λ j , where φ j ∈ L 2 ( µ ) is an y eigenfunction of G at eigen v alue λ j . In effect, we use C ( M ) as a “bridge” to establish sp ectral con v ergence of the op- erators G N , which act on differen t spaces. Note that ( λ j,N , ϕ j,N ) do es not con v erge uniformly with resp ect to j , and for a fixed N , eigenv alues/eigenfunctions at larger j exhibit larger deviations from their N → ∞ limits. Under measure-preserving, ergo dic dynamics, con vergence o ccurs for µ -a.e. starting state ω 0 ∈ M , and ω 0 in a set of p ositive ambien t measure if µ is ph ysical. In particular, the training states ω n need not lie on A . See Figure 1 for eigenfunctions of G N computed from data sampled near the L63 attrac- tor. When the inv ariant measure µ has a smo oth densit y with resp ect to lo cal co ordinates on Ω, re- sults on sp ectral conv ergence of graph Laplacians to manifold Laplacians [TS18, TGHS19] could b e em- plo yed to pro vide a more precise characterization of the spectral properties of G N for suitable choices of k ernel. Diffusion forecasting W e now hav e the ingredients to build a concrete sta- tistical forecasting sc heme based on data-driven ap- pro ximations of the Ko opman op erator. In partic- ular, note that if φ 0 i,N , φ j,N are biorthogonal eigen- functions of G ∗ N and G N , resp ectively , at nonzero eigen v alues, we can ev aluate the matrix element U N ,ij ( q ) := h φ 0 i,N , U q N φ j,N i L 2 ( µ N ) of the shift op er- ator using the con tinuous represen tatives ϕ 0 i,N , ϕ j,N , U N ,ij ( q ) = 1 N N − 1 − q X n =0 φ 0 i,N ( ω n ) φ j,N ( ω n + q ) = N − q N Z Ω ϕ 0 i,N U q ∆ t ϕ j,N dµ N − q , where U q ∆ t is the Ko opman op erator on C ( M ). Therefore, if the corresponding eigenv alues λ i , λ j of G are nonzero, b y the w eak con v ergence of the sam- pling measures in (1) and uniform conv ergence of the eigenfunctions, as N → ∞ , U ij,N ( q ) conv erges to the matrix element U ij ( q ∆ t ) = h φ i , U q ∆ t φ j i L 2 ( µ ) of the Ko opman op erator on L 2 ( µ ). This conv er- gence is not uniform with resp ect to i, j , but if we fix a parameter L ∈ N (which can be though t of as sp ectral resolution) such that λ L − 1 6 = 0, w e can ob- tain a statistically consisten t approximation of L × L Ko opman operator matrices, U ( q ∆ t ) = [ U ij ( q ∆ t )], b y shift op erator matrices, U N ( q ) = [ U N ,ij ( q )], with i, j ∈ { 0 , . . . , L − 1 } . Chec k erb oard plots of U N ( q ) for the L63 system are display ed in Figure 1. This metho d for approximating matrix elements of Ko opman op erators was prop osed in a technique called diffusion for e c asting (named after the diffu- sion k ernels emplo y ed) [BGH15]. Assuming that the resp onse Y is contin uous and b y spectral con ver- gence of G N , for every j ∈ N 0 suc h that λ j > 0, the inner pro ducts ˆ Y j,N = h φ 0 j,N , Y i µ N con verge, as N → ∞ , to ˆ Y j = h φ 0 j , Y i L 2 ( µ ) . This implies that for an y L ∈ N suc h that λ L − 1 > 0, P L − 1 j =0 ˆ Y j,N ϕ j,N con verges in C ( M ) to the con tin uous representativ e of Π L Y , where Π L is the orthogonal pro jection on L 2 ( µ ) mapping into span { φ 0 , . . . , φ L − 1 } . Supp ose no w that % N is a sequence of contin uous functions con verging uniformly to % ∈ C ( M ), such that % N are probabilit y densities with respect to µ N (i.e., % N ≥ 0 and k % N k L 1 ( µ N ) = 1). By similar argu- men ts as for Y , as N → ∞ , the contin uous func- tion P L − 1 j =0 ˆ % j,N ϕ j,N with ˆ % j,N = h ϕ 0 j,N , % N i L 2 ( µ N ) con verges to Π L % in L 2 ( µ ). Putting these facts to- gether, and setting ~ % N = ( ˆ % 0 ,N , . . . , ˆ % L − 1 ,N ) > and ~ Y N = ( ˆ Y 0 ,N , . . . , ˆ Y L − 1 ,N ) > , we conclude that ~ % > N U N ( q ) ~ Y N N →∞ − − − − → h Π L %, Π L U q ∆ t Y i L 2 ( µ ) . (4) Here, the left-hand side is given by matrix–vector pro ducts obtained from the data, and the right- hand side is equal to the expectation of Π L U q ∆ t Y with resp ect to the probability measure ρ with 7 H N L 2 ( µ N ) L 2 ( µ N ) span( { φ i,N } L i =1 ) Y M ⊆ Ω H ( M ) L 2 ( µ ) L 2 ( µ ) Y ω Ψ( ω ) U t ∗ Ψ( ω ) E Ψ( ω ) U t Y ι N U q ∗ N Π L E ( · ) Y error Ψ N Ψ ι U t ∗ E ( · ) Y ∈ (f ) L 2 ( µ N ) L 2 ( µ N ) L 2 X ( µ N ) H N L 2 X ( µ ) C ( M ) L 2 ( µ ) L 2 ( µ ) L 2 X ( µ ) Y U t Y Z t ◦ X = E ( U t Y | X ) U q N Π X N N ι error ι N ι U t Π X ∈ (g) Figure 1: Panel (a) sho ws eigenfunctions φ j,N of G N for a dataset sampled near the L63 attractor. Panel (b) sho ws the action of the shift operator U q N on the φ j,N from (a) for q = 50 steps, appro ximating the Koopman op erator U q ∆ t . Panels (c, d) sho w the matrix elemen ts h φ i,N , U q N φ j,N i µ N of the shift operator for q = 5 and 50. The mixing dynamics is evident in the larger far-from-diagonal components in q = 50 vs. q = 5. P anel (e) shows the matrix represen tation of a finite-difference approximation of the generator V , whic h is sk ew-symmetric. Panels (f, g) summarize the diffusion forecast (DF) and kernel analog forecast (KAF) for lead time t = q ∆ t . In eac h diagram, the data-driv en finite dimensional approximation (top row) con v erges to the true forecast (middle ro w). DF maps an initial state ω ∈ M ⊆ Ω to the future exp ectation of an observ able E Ψ( ω ) U t Y = E U t ∗ Ψ( ω ) Y and KAF maps a response function Y ∈ C ( M ) to the conditional exp ectation E ( U t Y | X ). 8 densit y dρ/dµ = % ; i.e., h Π L %, Π L U q ∆ t Y i L 2 ( µ ) = E ρ (Π L U q ∆ t Y ), where E ρ ( · ) := R Ω ( · ) dρ . What ab out the dependence of the forecast on L ? As L increases, Π L con verges strongly to the orthog- onal pro jection Π G : L 2 ( µ ) → L 2 ( µ ) onto the clo- sure of the range of G . Th us, if the kernel k is L 2 ( µ )-univ ersal (i.e., ran G = L 2 ( µ )), Π G = Id, and under the iterated limit of L → ∞ after N → ∞ the left-hand side of (4) conv erges to E ρ U q ∆ t Y . In summary , implemen ted with an L 2 ( µ )-univ ersal ker- nel, diffusion forecasting consistently approximates the exp ected v alue of the time-evolution of any con- tin uous observ able with resp ect to any probability measure with con tin uous densit y relativ e to µ . An example of an L 2 ( µ )-univ ersal kernel is the pullback of a radial Gaussian k ernel on X = R m . In contrast, the cov ariance kernel is not L 2 ( µ )-univ ersal, as in this case the rank of G is b ounded by m . This illustrates that forecasting in the POD basis ma y be sub ject to in trinsic limitations, even with full observ ations. Kernel analog forecasting While pro viding a flexible framework for appro ximat- ing exp ectation v alues of observ ables under measure- preserving, ergodic dynamics, diffusion forecasting do es not directly address the problem of construct- ing a concrete forecast function, i.e., a function Z t : X → C approximating U t Y as stated in Problem 1. One w ay of defining suc h a function is to let κ N b e a L 2 ( ν N )-Mark ov kernel on X for ν N = X ∗ µ N , and to consider the “feature map” Ψ N : X → C ( M ) mapping each p oint x ∈ X in cov ariate space to the k ernel section Ψ N ( x ) = κ N ( x, X ( · )). Then, Ψ N ( x ) is a con tinuous probability density with resp ect to µ N , and we can use diffusion forecasting to define Z q ∆ t ( x ) = − − − − → Ψ N ( x ) > U N ( q ) ~ Y N with notation as in (4). While this approach has a w ell-defined N → ∞ limit, it do es not provide optimality guarantees, par- ticularly in situations where X is non-injective. In- deed, the L 2 ( µ )-optimal approximation to U t Y of the form Z t ◦ X is giv en by the c onditional exp e ctation E ( U t Y | X ). In the present, L 2 , setting we hav e E ( U t Y | X ) = Π X U t Y , where Π X is the orthogonal pro jection in to L 2 X ( µ ) := { f ∈ L 2 ( µ ) : f = g ◦ X } . That is, the conditional exp ectation minimizes the error k f − U t Y k 2 L 2 ( µ ) among all pullbac ks f ∈ L 2 X ( µ ) from cov ariate space. Even though E ( U t Y | X = x ) can b e expressed as an expectation with respect to a conditional probabilit y measure µ ( · | x ) on Ω, that measure will generally not hav e an L 2 ( µ ) density , and there is no map Ψ : X → C ( M ) suc h that h Ψ( x ) , U t Y i L 2 ( µ ) equals E ( U t Y | X = x ). T o construct a consisten t estimator of the condi- tional exp ectation, w e require that k is a pullback of a k ernel κ : X × X → R on co v ariate space which is (i) symmetric, κ ( x, x 0 ) = κ ( x 0 , x ) for all x, x 0 ∈ X (so (2) holds); (ii) strictly p ositiv e; and (iii) strictly p ositive-definite . The latter means that for an y se- quence x 0 , . . . , x n − 1 of distinct points in X the ma- trix [ κ ( x i , x j )] is strictly p ositive. These properties imply that there exists a Hilbert space H of complex- v alued functions on Ω, suc h that (i) for every ω ∈ Ω, the kernel sections k ω = k ( ω , · ) lie in H ; (ii) the ev al- uation functional δ ω : H → C is b ounded and satis- fies δ ω f = h k ω , f i H ; (iii) ev ery f ∈ H has the form f = g ◦ X for a contin uous function g : X → C ; and (iv) ι µ H lies dense in L 2 X ( µ ). A Hilb ert space of functions satisfying (i) and (ii) ab o ve is kno wn as a r epr o ducing kernel Hilb ert sp ac e (RKHS) , and the asso ciated kernel k is kno wn as a r epr o ducing kernel . RKHSs hav e man y useful prop- erties for statistical learning [CS01], not least be- cause they combine the Hilb ert space structure of L 2 spaces with p oint wise ev aluation in spaces of con- tin uous functions. The density of H in L 2 X ( µ ) is a consequence of the strict p ositive-definiteness of κ . In particular, because the conditional exp ectation E ( U t Y | X ) lies in L 2 X ( µ ), it can b e approximated b y elemen ts of H to arbitrarily high precision in L 2 ( µ ) norm, and every suc h appro ximation will be a pull- bac k ˆ Y t = Z t ◦ X of a con tin uous function Z t that can b e ev aluated at arbitrary cov ariate v alues. W e now describ e a data-driven technique for con- structing suc h a prediction function, which we refer to as kernel analo g for e c asting (KAF) [AG20]. Math- ematically , KAF closely related to kernel principal comp onen t regression. T o build the KAF estima- tor, we w ork again with integral operators as in (3), with the difference that now K ρ : L 2 ( ρ ) → H ( M ) tak es v alues in the restriction of H to the forward- 9 in v arian t set M , denoted H ( M ). One can sho w that the adjoin t K ∗ ρ : H ( M ) → L 2 ( ρ ) coincides with the inclusion map ι ρ on contin uous functions, so that K ∗ ρ maps f ∈ H ( M ) ⊂ C ( M ) to its corresp onding L 2 ( ρ ) equiv alence class. As a result, the in tegral operator G ρ : L 2 ( ρ ) → L 2 ( ρ ) takes the form G ρ = K ∗ ρ K ρ , b e- coming a self-adjoint, positive-definite, compact op- erator with eigen v alues λ 0 ≥ λ 1 ≥ · · · & 0 + , and a corresp onding orthonormal eigenbasis { φ 0 , φ 1 , . . . } of L 2 ( ρ ). Moreov er, { ψ 0 , ψ 1 , . . . } with ψ j = K ρ φ j /λ 1 / 2 j is an orthonormal set in H ( M ). In fact, Mercer’s the- orem pro vides an explicit represen tation k ( ω , ω 0 ) = P ∞ j =0 ψ j ( ω ) ψ j ( ω 0 ), where direct ev aluation of the k ernel in the left-hand side (known as “kernel tric k”) a voids the complexit y of inner-pro duct computations b et ween feature v ectors ψ j . Here, our p ersp ectiv e is to rely on the orthogonalit y of the eigenbasis to appro ximate observ ables of interest at fixed L , and establish con v ergence of the estimator as L → ∞ . A similar approac h w as adopted for densit y estimation on non-compact domains, with Mercer-t ype k ernels based on orthogonal p olynomials [ZHL19]. No w a key op eration that the RKHS enables is the Nystr¨ om extension , which interpolates L 2 ( ρ ) el- emen ts of appropriate regularity to RKHS func- tions. The Nystr¨ om operator N ρ : D ( N ρ ) → H ( M ) is defined on the domain D ( N ρ ) = { P j c j φ j : P j | c j | 2 /λ j < ∞} by linear extension of N ρ φ j = ψ j /λ 1 / 2 j . Note that N ρ φ j = K ρ φ j /λ j = ϕ j , so N ρ maps φ j to its contin uous represen tative, and K ∗ ρ N ρ f = f , meaning that N ρ f = f , ρ -a.e. While D ( N ρ ) may b e a strict L 2 ( ρ ) subspace, for any L with λ L − 1 > 0 we define a sp ectrally truncated op erator N L,ρ : L 2 ( ρ ) → H ( M ), N L,ρ P j c j φ j = P L − 1 j =0 c j ψ j /λ 1 / 2 j . Then, as L increases, K ∗ ρ N L,ρ f con verges to Π G ρ f in L 2 ( ρ ). T o make empirical fore- casts, w e set ρ = µ N , compute the expansion co effi- cien ts c j,N ( t ) of U t Y in the { φ j,N } basis of L 2 ( µ N ), and construct Y t,L,N = N L,N U t Y ∈ H ( M ). Be- cause ψ j,N are pullbac ks of kno wn functions u j,N ∈ C ( X ), w e ha v e Y t,L,N = Z t,L,N ◦ X , where Z t,L,N = P L − 1 j =0 c j ( t ) u j,N /λ 1 / 2 j,N can b e ev aluated at any x ∈ X . The function Y t,L,N is our estimator of the con- ditional expectation E ( U t Y | X ). By spectral con- Figure 2: KAF applied to the L63 state v ector com- p onen t Y ( ω ) = ω 1 with full (blue) and partial (red) observ ations. In the fully observed case, the cov ariate X is the iden tity map on Ω = R 3 . In the partially observ ed case, X ( ω ) = ω 1 is the pro jection to the first coordinate. T op: F orecasts Z t,L,N ( x ) initialized from fixed x = X ( ω ), compared with the true ev o- lution U t Y ( ω ) (black). Shaded regions show error b ounds based on KAF estimates of the conditional standard deviation, σ t ( x ). Bottom: RMS forecast errors (solid lines) and σ t (dashed lines). The agree- men t b etw een actual and estimated errors indicates that σ t pro vides useful uncertaint y quantification. v ergence of kernel integral op erators, as N → ∞ , Y t,L,N con verges to Y t,L := N L U t Y in C ( M ) norm, where N L ≡ N L,µ . Then, as L → ∞ , K ∗ Y t,L con- v erges in L 2 ( µ ) norm to Π G U t Y . Because κ is strictly p ositiv e-definite, G has dense range in L 2 X ( µ ), and th us Π G U t Y = Π X U t Y = E ( U t Y | X ). W e there- fore conclude that Y t,L,N con verges to the conditional exp ectation as L → ∞ after N → ∞ . F orecast re- sults from the L63 system are sho wn in Figure 2. 10 Coheren t pattern extraction W e now turn to the task of coheren t pattern ex- traction in Problem 2. This is a fundamentally unsup ervised learning problem, as we seek to dis- co ver observ ables of a dynamical system that ex- hibit a natural time ev olution (b y some suitable cri- terion), rather than approximate a given observ able as in the context of forecasting. W e ha ve men- tioned POD as a tec hnique for iden tifying coheren t observ ables through eigenfunctions of cov ariance op- erators. Kernel PCA [SSM98] is a generalization of this approach utilizing integral op erators with p o- ten tially nonlinear kernels. F or data lying on Rie- mannian manifolds, it is p opular to employ kernels appro ximating geometrical op erators, suc h as heat op erators and their asso ciated Laplacians. Exam- ples include Laplacian eigenmaps [BN03], diffusion maps [CL06], and v ariable-bandwidth k ernels [BH16]. Mean while, coheren t pattern extraction tec hniques based on ev olution op erators ha v e also gained pop- ularit y in recent years. These metho ds include sp ectral analysis of transfer operators for detection of inv ariant sets [DJ99, DF00], harmonic av eraging [Mez05] and dynamic mo de decomp osition (DMD) [RMB + 09, Sch10, WKR15, KBBP17, KNK + 18] tec h- niques for appro ximating Ko opman eigenfunctions, and Darb oux k ernels for approximating sp ectral pro- jectors [KPM20]. While natural from a theoret- ical standp oint, evolution op erators tend to hav e more complicated sp ectral properties than kernel in- tegral op erators, including non-isolated eigenv alues and contin uous sp ectrum. The follo wing examples il- lustrate distinct b ehaviors asso ciated with the point ( H a ) and contin uous ( H c ) sp ectrum subspaces of L 2 ( µ ). Example 1 (T orus rotation) . A quasip erio dic ro- tation on the 2-torus, Ω = T 2 , is gov erned b y the system of ODEs ˙ ω = ~ V ( ω ), where ω = ( ω 1 , ω 2 ) ∈ [0 , 2 π ) 2 , ~ V = ( ν 1 , ν 2 ), and ν 1 , ν 2 ∈ R are rationally indep enden t frequency parameters. The resulting flo w, Φ t ( ω ) = ( ω 1 + ν 1 t, ω 2 + ν 2 t ) mo d 2 π , has a unique Borel ergo dic inv ariant probabilit y measure µ giv en b y a normalized Leb esgue measure. Moreov er, there exists an orthonormal basis of L 2 ( µ ) consist- ing of Ko opman eigenfunctions z j k ( ω ) = e i ( j ω 1 + kω 2 ) , j, k ∈ Z , with eigenfrequencies α j k = j ν 1 + k ν 2 . Thus, H a = L 2 ( µ ), and H c is the zero subspace. Such a sys- tem is said to hav e a pur e p oint sp e ctrum . Example 2 (Lorenz 63 system) . The L63 system on Ω = R 3 is gov erned by a system of smo oth ODEs with t wo quadratic nonlinearities. This system is known to exhibit a physical ergo dic inv ariant probability mea- sure µ supp orted on a compact set (the L63 attrac- tor), with mixing dynamics. This means that H a is the one-dimensional subspace of L 2 ( µ ) consisting of constant functions, and H c consists of all L 2 ( µ ) functions orthogonal to the constants (i.e., with zero exp ectation v alue with resp ect to µ ). Dela y-co ordinate approaches F or the p oint sp ectrum subspace H a , a natural class of coheren t observ ables is pro vided b y the Ko op- man eigenfunctions. Every Ko opman eigenfunction z j ∈ H a ev olves as a harmonic oscillator at the cor- resp onding eigenfrequency , U t z j = e iα j t z j , and the asso ciated auto correlation function, C z j ( t ) = e iα j t , also has a harmonic evolution. Short of temp oral in v ariance (whic h only o ccurs for constant eigenfunc- tions under measure-preserving ergodic dynamics), it is natural to think of a harmonic evolution as b eing “maximally” coherent. In particular, if z j is contin- uous, then for any ω ∈ A , the real and imaginary parts of the time series t 7→ U t z j ( ω ) are pure si- n usoids, even if the flow Φ t is ap erio dic. F urther attractiv e prop erties of Koopman eigenfunctions in- clude the facts that they are in trinsic to the dynam- ical system generating the data, and they are closed under point wise multiplication, z j z k = z j + k , allo w- ing one to generate ev ery eigenfunction from a p o- ten tially finite generating set. Y et, consistently approximating Ko opman eigen- functions from data is a non-trivial task, ev en for sim- ple systems. F or instance, the torus rotation in Ex- ample 1 has a dense set of eigenfrequencies by ratio- nal indep endence of the basic frequencies ν 1 and ν 2 . Th us, any op en in terv al in R contains infinitely man y eigenfrequencies α j k , necessitating some form of reg- ularization. Arguably , the term “pure p oint sp ec- 11 trum” is somewhat of a misnomer for suc h systems since a non-empty contin uous sp ectrum is present. Indeed, since the sp ectrum of an op erator on a Ba- nac h space includes the closure of the set of eigenv al- ues, i R \ { iα j k } lies in the contin uous sp ectrum. As a wa y of addressing these c hallenges, observ e that if G is a self-adjoin t, compact op erator commut- ing with the Ko opman group (i.e., U t G = GU t ), then an y eigenspace W λ of G corresponding to a nonzero eigen v alue λ is inv ariant under U t , and thus under the generator V . Moreov er, by compactness of G , W λ has finite dimension. Thus, for any orthonormal basis { φ 0 , . . . , φ l − 1 } of W λ , the generator V on W λ is represented b y a sk ew-symmetric, and thus unitar- ily diagonalizable, l × l matrix V = [ h φ i , V φ j i L 2 ( µ ) ]. The eigen v ectors ~ u = ( u 0 , . . . , u l − 1 ) > ∈ C l of V then con tain expansion co efficients of Ko opman eigenfunc- tions z = P l − 1 j =0 u j φ j in W λ , and the eigen v alues cor- resp onding to ~ u are eigenv alues of V . On the basis of the abov e, since any integral op er- ator G on L 2 ( µ ) asso ciated with a symmetric kernel k ∈ L 2 ( µ × µ ) is Hilb ert-Schmidt (and thus com- pact), and w e hav e a wide v ariet y of data-driv en to ols for approximating integral op erators, w e can re- duce the problem of consisten tly approximating the p oin t sp ectrum of the Ko opman group on L 2 ( µ ) to the problem of constructing a comm uting integral op erator. As we no w argue, the success of a n um- b er of tec hniques, including singular sp ectrum anal- ysis (SSA) [BK86, VG89], diffusion-mapp ed delay co- ordinates (DMDC) [BCGFS13], nonlinear Laplacian sp ectral analysis (NLSA) [GM12], and Hank el DMD [BBP + 17], in iden tifying coherent patterns can at least b e partly attributed to the fact that they em- plo y in tegral op erators that approximately commute with the Ko opman op erator. A common characteristic of these metho ds is that they employ , in some form, delay-c o or dinate maps [SYC91]. With our notation for the cov ariate func- tion X : Ω → X and sampling interv al ∆ t , the Q -step dela y-co ordinate map is defined as X Q : Ω → X Q with X Q ( ω ) = ( X ( ω 0 ) , X ( ω − 1 ) , . . . , X ( ω − Q +1 )) and ω q = Φ q ∆ t ( ω ). That is, X Q can b e though t of as a lift of X , whic h produces “snapshots”, to a map taking v alues in the space X Q con taining “videos”. In tu- itiv ely , by virtue of its higher-dimensional codomain and dep endence on the dynamical flo w, a delay- co ordinate map such as X Q should provide additional information ab out the underlying dynamics on Ω o ver the ra w co v ariate map X . This in tuition has been made precise in a num ber of “em b edology” theorems [SYC91], whic h state that under mild assumptions, for an y compact subset S ⊆ Ω (including, for our purp oses, the inv ariant set A ), the dela y-co ordinate map X Q is injectiv e on S for sufficiently large Q . As a result, delay-coordinate maps provide a p o wer- ful to ol for state space reconstruction, as w ell as for constructing informative predictor functions in the con text of forecasting. Aside from considerations associated with top olog- ical reconstruction, how ev er, observe that a metric d : X × X → R on cov ariate space pulls bac k to a distance-lik e function ˜ d Q : Ω × Ω → R such that ˜ d 2 Q ( ω , ω 0 ) = 1 Q Q − 1 X q =0 d 2 ( X ( ω − q ) , X ( ω 0 − q )) . (5) In particular, ˜ d 2 Q has the structure of an ergo dic av- erage of a con tin uous function under the pro duct dy- namical flow Φ t × Φ t on Ω × Ω. By the von Neu- mann ergodic theorem, as Q → ∞ , ˜ d Q con verges in L 2 ( µ × µ ) norm to a b ounded function ˜ d ∞ , whic h is in v arian t under the Ko opman op erator U t ⊗ U t of the pro duct dynamical system. Note that ˜ d ∞ need not b e µ × µ -a.e. constant, as Φ t × Φ t need not be ergo dic, and aside from special cases it will not be con tinuous on A × A . Nevertheless, based on the L 2 ( µ × µ ) conv ergence of ˜ d Q to ˜ d ∞ , it can be shown [DG19] that for an y contin uous function h : R → R , the in tegral op erator G ∞ on L 2 ( µ ) associated with the kernel k ∞ = h ◦ d ∞ comm utes with U t for any t ∈ R . Moreov er, as Q → ∞ , the op erators G Q as- so ciated with k Q = h ◦ d Q con verge to G ∞ in L 2 ( µ ) op erator norm, and th us in sp ectrum. Man y of the operators employ ed in SSA, DMDC, NLSA, and Hank el DMD can be mo deled after G Q describ ed ab ov e. In particular, b ecause G Q is in- duced by a contin uous k ernel, its sp ectrum can b e consisten tly appro ximated by data-driven op erators G Q,N on L 2 ( µ N ), as describ ed in the context of 12 forecasting. The eigenfunctions of these op erators at nonzero eigen v alues approximate eigenfunctions of G Q , which approximate in turn eigenfunctions of G ∞ lying in finite unions of Koopman eigenspaces. Th us, for sufficien tly large N and Q , the eigenfunc- tions of G Q,N at nonzero eigen v alues capture distinct timescales asso ciated with the point sp ectrum of the dynamical system, providing physically interpretable features. These k ernel eigenfunctions can also b e em- plo yed in Galerkin schemes to approximate individual Ko opman eigenfunctions. Besides the sp ectral considerations describ ed ab o ve, in [BCGFS13] a geometrical c haracterization of the eigenspaces of G Q w as giv en based on Ly a- puno v metrics of dynamical systems. In particular, it follo ws b y Oseledets’ multiplicativ e ergo dic theorem that for µ -a.e. ω ∈ M there exists a decomp osition T ω M = F 1 ,ω ⊕ . . . ⊕ F r,ω , where T ω M is the tangent space at ω ∈ M , and F j,ω are subspaces satisfying the equiv ariance condition D Φ t F j,ω = F j, Φ t ( ω ) . More- o ver, there exist Λ 1 > · · · > Λ r , suc h that for every v ∈ F j,ω , Λ j = lim t →∞ R t 0 log k D Φ s v k ds/t , where k·k is the norm on T ω M induced by a Riemannian met- ric. The n um b ers Λ j are called Lyapunov exp onents , and are metric-indep enden t. Note that the dynami- cal v ector fie ld ~ V ( ω ) lies in a subspace F j 0 ,ω with a corresp onding zero Lyapuno v exp onent. If F j 0 ,ω is one-dimensional, and the norms k D Φ t v k ob ey appropriate uniform gro wth/deca y bounds with resp ect to ω ∈ M , the dynamical flow is said to b e uniformly hyp erb olic . If, in addition, the s upport A of µ is a differen tiable manifold, then there exists a class of Riemannian metrics, called Lyapunov met- rics , for whic h the F j,ω are m utually orthogonal at ev ery ω ∈ A . In [BCGFS13], it was shown that us- ing a modification of the delay-distance in (5) with exp onen tially deca ying w eigh ts, as Q → ∞ , th e top eigenfunctions φ ( Q ) j of G Q v ary predominantly along the subspace F r,ω asso ciated with the most stable Ly apunov exp onent. That is, for ev ery ω ∈ Ω and tangen t vector v ∈ T ω M orthogonal to F r,ω with re- sp ect to a Ly apunov metric, lim Q →∞ v · ∇ φ ( Q ) j = 0. RKHS approac hes While delay-coordinate maps are effectiv e for appro x- imating the p oint sp ectrum and asso ciated Koop- man eigenfunctions, they do not address the problem of identifying coherent observ ables in the contin uous sp ectrum subspace H c . Indeed, one can verify that in mixed-spectrum systems the infinite-dela y op era- tor G ∞ , whic h provides access to the eigenspaces of the Ko opman op erator, has a non-trivial nullspace that includes H c as a subspace More broadly , there is no ob vious wa y of identifying coherent observ ables in H c as eigenfunctions of an in trinsic ev olution oper- ator. As a remedy of this problem, w e relax the prob- lem of seeking Ko opman eigenfunctions, and consider instead appr oximate eigenfunctions . An observ able z ∈ L 2 ( µ ) is said to b e an -approximate eigenfunc- tion of U t if there exists λ t ∈ C suc h that k U t z − λ t z k L 2 ( µ ) < k z k L 2 ( µ ) . (6) The num b er λ t is then said to lie in the -approximate sp ectrum of U t . A Ko opman eigenfunction is an -appro ximate eigenfunction for every > 0, so we think of (6) as a relaxation of the eigenv alue equa- tion, U t z − λ t z = 0. This suggests that a natural notion of coherence of observ ables in L 2 ( µ ), appro- priate to b oth the point and contin uous sp ectrum, is that (6) holds for 1 and all t in a “large” in terv al. W e now outline an RKHS-based approach [DGS18], which identifies observ ables satisfying this condition through eigenfunctions of a regularized op- erator ˜ V τ on L 2 ( µ ) approximating V with the prop- erties of (i) b eing skew-adjoin t and compact; and (ii) ha ving eigenfunctions in the domain of the Nystr¨ om op erator, which maps them to differentiable functions in an RKHS. Here, τ is a p ositive regularization pa- rameter such that, as τ → 0 + , ˜ V τ con verges to V in a suitable spectral sense. W e will assume that the forw ard-inv ariant, compact manifold M has C 1 reg- ularit y , but will not require that the supp ort A of the in v arian t measure be differentiable. With these assumptions, let k : Ω × Ω → R b e a symmetric, positive-definite kernel, whose restric- tion on M × M is contin uously differentiable. Then, the corresp onding RKHS H ( M ) embeds contin uously in the Banach space C 1 ( M ) of contin uously differen- 13 tiable functions on M , equipp ed with the standard norm. Moreo v er, b ecause V is an extension of the directional deriv ativ e ~ V · ∇ asso ciated with the dy- namical vector field, every function in H ( M ) lies, up on inclusion, in D ( V ). The key point here is that regularit y of the k ernel induces RKHSs of observ- ables whic h are guaranteed to lie in th e domain of the generator. In particular, the range of the in te- gral op erator G = K ∗ K on L 2 ( µ ) asso ciated with k lies in D ( V ), so that A = V G is w ell-defined. This op erator is, in fact, Hilb ert-Sc hmidt, with Hilb ert- Sc hmidt norm b ounded by the C 1 ( M × M ) norm of the kernel k . What is p erhaps less obvious is that G 1 / 2 V G 1 / 2 (whic h “distributes” the smo oth- ing by G to the left and right of V ), defined on the dense subspace { f ∈ L 2 ( µ ) : G 1 / 2 f ∈ D ( V ) } is also b ounded, and thus has a unique closed ex- tension ˜ V : L 2 ( µ ) → L 2 ( µ ), which turns out to b e Hilb ert-Sc hmidt. Unlik e A , ˜ V is skew-adjoin t, and th us preserves an imp ortant structural prop erty of the generator. By sk ew-adjointness and compactness of ˜ V , there exists an orthonormal basis { ˜ z j : j ∈ Z } of L 2 ( µ ) consisting of its eigenfunctions ˜ z j , with purely imaginary eigenv alues i ˜ α j . Moreov er, (i) all ˜ z j cor- resp onding to nonzero ˜ α j lie in the domain of the Nystr¨ om op erator, and therefore ha v e C 1 represen- tativ es in H ( M ); and (ii) if k is L 2 ( µ )-univ ersal, Mark ov, and ergo dic, ˜ V has a simple eigenv alue at zero, in agreemen t with the analogous prop erty of V . Based on the ab ov e, we seek to construct a one- parameter family of such kernels k τ , with asso ciated RKHSs H τ ( M ), such that as τ → 0 + , the regular- ized generators ˜ V τ con verge to V in a sense suitable for sp ectral conv ergence. Here, the relev an t notion of con vergence is str ong r esolvent c onver genc e ; that is, for every element λ of the resolven t set of V and ev ery f ∈ L 2 ( µ ), ( ˜ V τ − λ ) − 1 f m ust con v erge to ( V − λ ) − 1 f . In that case, for every element iα of the sp ectrum of V (b oth point and con tinuous), there exists a se- quence of eigenv alues i ˜ α j τ ,τ of ˜ V τ con verging to iα as τ → 0 + 0. Moreov er, for any > 0 and T > 0, there exists τ ∗ > 0 such that for all 0 < τ < τ ∗ and | t | < T , e iα j τ ,τ t lies in the -approximate sp ectrum of U t and ˜ z j τ ,τ is an -approximate eigenfunction. In [DGS18], a constructiv e pro cedure w as prop osed for obtaining the k ernel family k τ through a Marko v semigroup on L 2 ( µ ). This method has a data-driven implemen tation, with analogous spectral con v ergence results for the asso ciated integral op erators G τ ,N on L 2 ( µ N ) to those described in the setting of forecast- ing. Giv en these op erators, we appro ximate ˜ V τ b y ˜ V τ ,N = G 1 / 2 τ ,N V N G 1 / 2 τ ,N , where V N is a sk ew-adjoint, finite-differ enc e appr oximation of the generator. F or example, V N = ( U 1 N − U 1 ∗ N ) / (2 ∆ t ) is a second-order finite-difference approximation based on the 1-step shift op erator U 1 N . See Figure 1 for a graphical rep- resen tation of a generator matrix for L63. As with our data-driv en approximations of U t , w e work with a rank- L op erator ˆ V τ := Π τ ,N ,L V τ ,N Π τ ,N ,L , where Π τ ,N ,L is the orthogonal pro jection to the subspace spanned by the first L eigenfunctions of G τ ,N . This family of operators conv ergences spectrally to V τ in a limit of N → 0, follo w ed b y ∆ t → 0 and L → ∞ , where we note that C 1 regularit y of k τ is imp ortant for the finite-difference appro ximations to conv erge. A t an y giv en τ , an a p osteriori criterion for iden- tifying candidate eigenfunctions ˆ z j,τ satisfying (6) for small is to compute a Dirichlet ener gy func- tional , D ( ˆ z j,τ ) = kN τ ,N ˆ z j,τ k 2 H τ ( M ) / k ˆ z j,τ k 2 L 2 ( µ N ) . In- tuitiv ely , D assigns a measure of roughness to ev ery nonzero element in the domain of the Nystr¨ om op er- ator (analogously to the Dirichlet energy in Sobolev spaces on differen tiable manifolds), and the smaller D ( ˆ z j,τ ) is, the more coheren t ˆ z j,τ is expected to be. Indeed, as shown in Figure 3, the ˆ z j,τ corresp onding to low Dirichlet energy identify observ ables of the L63 system with a coherent dynamical evolution, even though this system is mixing and has no nonconstan t Ko opman eigenfunctions. Sampled along dynami- cal tra jectories, the approximate Ko opman eigen- functions resem ble amplitude-mo dulated wa v epac k- ets, exhibiting a low-frequency mo dulating en v elop e while maintaining phase coherence and a precise car- rier frequency . This b ehavior can b e thought of as a “relaxation” of Ko opman eigenfunctions, which gen- erate pure sinusoids with no amplitude mo dulation. Conclusions and outlo ok W e ha ve presen ted mathematical tec hniques at the in terface of dynamical systems theory and data sci- 14 Figure 3: A representativ e eigenfunction ˆ z j,τ of the compactified generator ˆ V τ for the L63 system, with lo w corresp onding Diric hlet energy . T op: Scatterplot of Re ˆ z j,τ on the L63 attractor. Bottom: Time series of Re ˆ z j,τ sampled along a dynamical tra jectory . ence for statistical analysis and mo deling of dynam- ical systems. One of our primary goals has b een to highligh t a fruitful in terplay of ideas from ergo dic theory , functional analysis, and differential geome- try , whic h, coupled with learning theory , provide an effectiv e route for data-driv en prediction and pattern extraction, well-adapted to handle nonlinear dynam- ics and complex geometries. There are sev eral op en questions and future re- searc h directions stemming from these topics. First, it should b e p ossible to combine p oint wise estima- tors deriv ed from metho ds suc h as diffusion forecast- ing and KAF with the Mori-Zw anzig formalism so as to incorp orate memory effects. Another p oten- tial direction for future developmen t is to incorporate w av elet frames, particularly when the measuremen ts or probabilit y densities are highly lo calized. More- o ver, when the attractor A is not a manifold, appro- priate notions of regularit y need to b e identified so as to fully c haracterize the behavior of k ernel algorithms suc h as diffusion maps. While w e susp ect that kernel- based constructions will still b e the fundamental to ol, the choice of k ernel ma y need to be adapted to the regularit y of the attractor to obtain optimal perfor- mance. Finally , a num ber of applications (e.g., anal- ysis of p erturbations) concern the action of dynamics on more general v ector bundles b esides functions, po- ten tially with a non-commutativ e algebraic structure, calling for the developmen t of suitable data-driven tec hniques for such spaces. Ac kno wledgmen ts Research of the authors de- scrib ed in this review was supported by D ARP A gran t HR0011-16-C-0116, NSF grants 1842538, DMS-1317919, DMS-1521775, DMS-1619661, DMS- 172317, DMS-1854383, and ONR grants N00014- 12-1-0912, N00014-14-1-0150, N00014-13-1-0797, N00014-16-1-2649, N00014-16-1-2888. References [AG20] R. Alexander and D. Giannakis, Op er ator- the or etic fr amework for for e c asting nonline ar time series with kernel analo g te chniques , Phys. D 409 (2020), 132520. [BBP + 17] S. L. Brunton, B. W. Brunton, J. L. Pro ctor, E. Kaiser, and J. N. Kutz, Chaos as an inter- mittently for c e d line ar system , Nat. Commun. 8 (2017), no. 19. [BCGFS13] T. Berry, R. Cressman, Z. Greguri ´ c-F erenˇ cek, and T. Sauer, Time-sc ale sep ar ation fr om diffusion- mapp e d delay co or dinates , SIAM J. Appl. Dyn. Sys. 12 (2013), 618–649. [BGH15] T. Berry, D. Giannakis, and J. Harlim, Nonp ar a- metric for ec asting of low-dimensional dynamic al systems , Phys. Rev. E. 91 (2015), 032915. [BH16] T. Berry and J. Harlim, V ariable b andwidth dif- fusion kernels , Appl. Comput. Harmon. Anal. 40 (2016), no. 1, 68–96. [BK86] D. S. Broomhead and G. P . King, Extr acting qual- itative dynamics fr om experimental data , Phys. D 20 (1986), no. 2–3, 217–236. [BN03] M. Belkin and P . Niy ogi, L aplacian eigenmaps for dimensionality r e duction and data repr esentation , Neural Comput. 15 (2003), 1373–1396. [CL06] R. R. Coifman and S. Lafon, Diffusion maps , Appl. Comput. Harmon. Anal. 21 (2006), 5–30. 15 [CS01] F. Cuc ker and S. Smale, On the mathematic al foundations of le arning , Bull. Amer. Math. So c. 39 (2001), no. 1, 1–49. [DF00] M. Dellnitz and G. F royland, On the isolate d sp e c- trum of the Perr onF r ob enius op er ator , Nonlinear- ity (2000), 1171–1188. [DG19] S. Das and D. Giannakis, Delay-c oor dinate maps and the sp e ctr a of Koopman op erators , J. Stat. Phys. 175 (2019), no. 6, 1107–1145. [DGS18] S. Das, D. Giannakis, and J. Slawinsk a, R epr o duc- ing kernel Hilb ert sp ac e c ompactific ation of uni- tary evolution gr oups , 2018. [DJ99] M. Dellnitz and O. Junge, On the appr oximation of c omplic ated dynamic al b ehavior , SIAM J. Nu- mer. Anal. 36 (1999), 491. [EHJ17] W. E, J. Han, and A. Jen tzen, De ep learning- b ase d numeric al methods for high-dimensional p ar ab olic p artial differ ential e quations and b ack- war d stochastic differ ential e quations , Commun. Math. Stat. 5 (2017), 349–380. [F ro13] G. F royland, An analytic fr amework for identi- fying finite-time c oher ent sets in time-dep endent dynamic al systems , Ph ys. D 250 (2013), 1–19. [Gen01] M. C. Genton, Classes of kernels for machine le arning: A statistics p erspe ctive , J. Mach. Learn. Res. 2 (2001), 299–312. [GM12] D. Giannakis and A. J. Ma jda, Nonline ar L apla- cian sp e ctr al analysis for time series with in- termittency and low-fr e quency variability , Pro c. Natl. Acad. Sci. 109 (2012), no. 7, 2222–2227. [KBBP17] J. N. Kutz, S. L. Brunton, B. W. Bunton, and J. L. Proctor, Dynamic mo de de c omp osition: Data- driven mo deling of c omplex systems , Society for Industrial and Applied Mathematics, Philadel- phia, 2017. [KM18] M. Korda and I. Mezi´ c, Line ar pr edictors for nonline ar dynamical systems: Ko opman oper ator me ets mo del pr e dictive contr ol , Automatica 93 (2018), 149–160. [KNK + 18] S. Klus, F. N¨ uske, P . Koltai, H. W u, I. Kevrekidis, C. Sch¨ utte, and F. No´ e, Data-driven mo del r e- duction and tr ansfer op er ator approximation , J. Nonlinear Sci. 28 (2018), 985–1010. [KNP + 20] S. Klus, F. N ¨ uske, S. P eitz, J.-H. Niemann, C. Clementi, and C. Sch¨ utte, Data-driven appr oxi- mation of the Koopman gener ator: Mo del r e duc- tion, system identification, and c ontr ol , Phys. D 406 (2020), 132416. [Koo31] B. O. Koopman, Hamiltonian systems and tr ans- formation in Hilb ert spac e , Pro c. Natl. Acad. Sci. 17 (1931), no. 5, 315–318. [Kos43] D. D. Kosam bi, Satistics in function sp ac e , J. Ind. Math. So c. 7 (1943), 76–88. [KPM20] M. Korda, M. Putinar, and I. Mezi´ c, Data-driven sp e ctr al analysis of the Koopman op er ator , Appl. Comput. Harmon. Anal. 48 (2020), no. 2, 599– 629. [Lor69] E.N. Lorenz, Atmospheric pre dictability as r e- ve ale d by naturally oc curring analo gues , J. Atmos. Sci. 26 (1969), 636–646. [MB99] I. Mezi ´ c and A. Banaszuk, Comp arison of systems with c omplex b ehavior: Sp ectr al metho ds , Proceed- ings of the 39th IEEE conference on decision and control, 1999, pp. 1224–1231. [Mez05] I. Mezi´ c, Sp e ctr al pr operties of dynamic al systems, mo del r eduction and de c ompositions , Nonlinear Dyn. 41 (2005), 309–325. [PHG + ] J. Pathak, B. Hunt, M. Girv an, Z. Lu, and E. Ott, Mo del-fr e e pr e diction of lar ge sp atiotemp o- r al ly chaotic systems fr om data: A r eservoir c om- puting appr o ach , Phys. Rev. Lett. 120 , 024102l. [RMB + 09] C. W. Rowley , I. Mezi ´ c, S. Bagheri, P . Sc hlatter, and D. S. Henningson, Sp e ctr al analysis of non- line ar flows , J. Fluid Mech. 641 (2009), 115–127. [Sch10] P . J. Schmid, Dynamic mo de de comp osition of numeric al and exp erimental data , J. Fluid Mech. 656 (2010), 5–28. [SSM98] B. Sc h¨ olkopf, A. Smola, and K. M¨ uller, Nonline ar c omp onent analysis as a kernel eigenvalue prob- lem , Neural Comput. 10 (1998), 1299–1319. [SYC91] T. Sauer, J. A. Y orke, and M. Casdagli, Emb e dol- o gy , J. Stat. Phys. 65 (1991), no. 3–4, 579–616. [TGHS19] N. G. T rillos, M. Gerlach, M. Hein, and D. Slepˇ cev, Error estimates for sp e ctral conver genc e of the gr aph L aplacian on r andom ge ometric gr aphs towar ds the Laplac e–Beltrami oper ator , F ound. Comput. Math. (2019). In press. [TS18] N. G. T rillos and D. Slep ˇ cev, A variational ap- pr o ach to the c onsistency of sp e ctr al clustering , Appl. Comput. Harmon. Anal. 45 (2018), no. 2, 239–281. [VG89] R. V autard and M. Ghil, Singular sp e ctrum anal- ysis in nonline ar dynamics, with applications to p ale o climatic time series , Ph ys. D 35 (1989), 395– 424. [vLBB08] U. v on Luxburg, M. Belkin, and O. Bousquet, Consitency of sp e ctral clustering , Ann. Stat. 26 (2008), no. 2, 555–586. [VPH + 20] P . R. Vlachas, J. Pathak, B. R. Hunt, T. P . Sapsis, M. Girv an, and E. Ott, Backprop agation algorithms and R eservoir Computing in R e cur- r ent Neur al Networks for the for e c asting of c om- plex sp atiotemp oral dynamics , Neural Netw. 126 (2020), 191–217. 16 [WKR15] M. O. Williams, I. G. Kevrekidis, and C. W. Ro w- ley , A data-driven appr oximation of the Koop- man op er ator: Extending dynamic mo de de c om- p osition , J. Nonlinear Sci. 25 (2015), no. 6, 1307– 1346. [ZHL19] H. Zhang, J. Harlim, and X. Li, Computing lin- e ar r esp onse statistics using ortho gonal polyno- mial b ase d estimators: An RKHS formulation , 2019. 17

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment