Global Convergence of Policy Gradient Methods to (Almost) Locally Optimal Policies

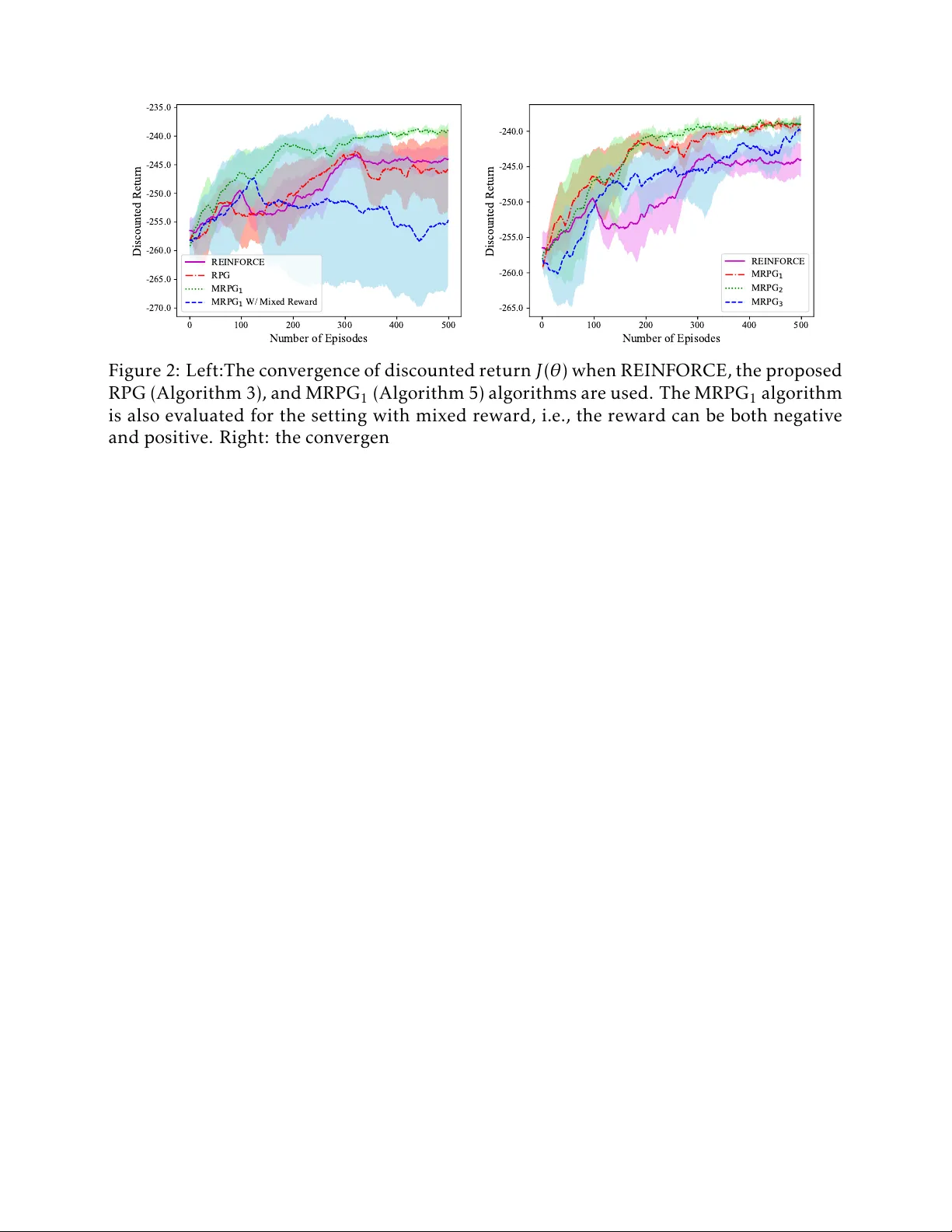

Policy gradient (PG) methods are a widely used reinforcement learning methodology in applications such as video games, autonomous driving, and robotics. Despite their empirical success, a rigorous understanding of the global convergence of PG …

Authors: Kaiqing Zhang, Alec Koppel, Hao Zhu