Optimizing Neural Networks with Kronecker-factored Approximate Curvature

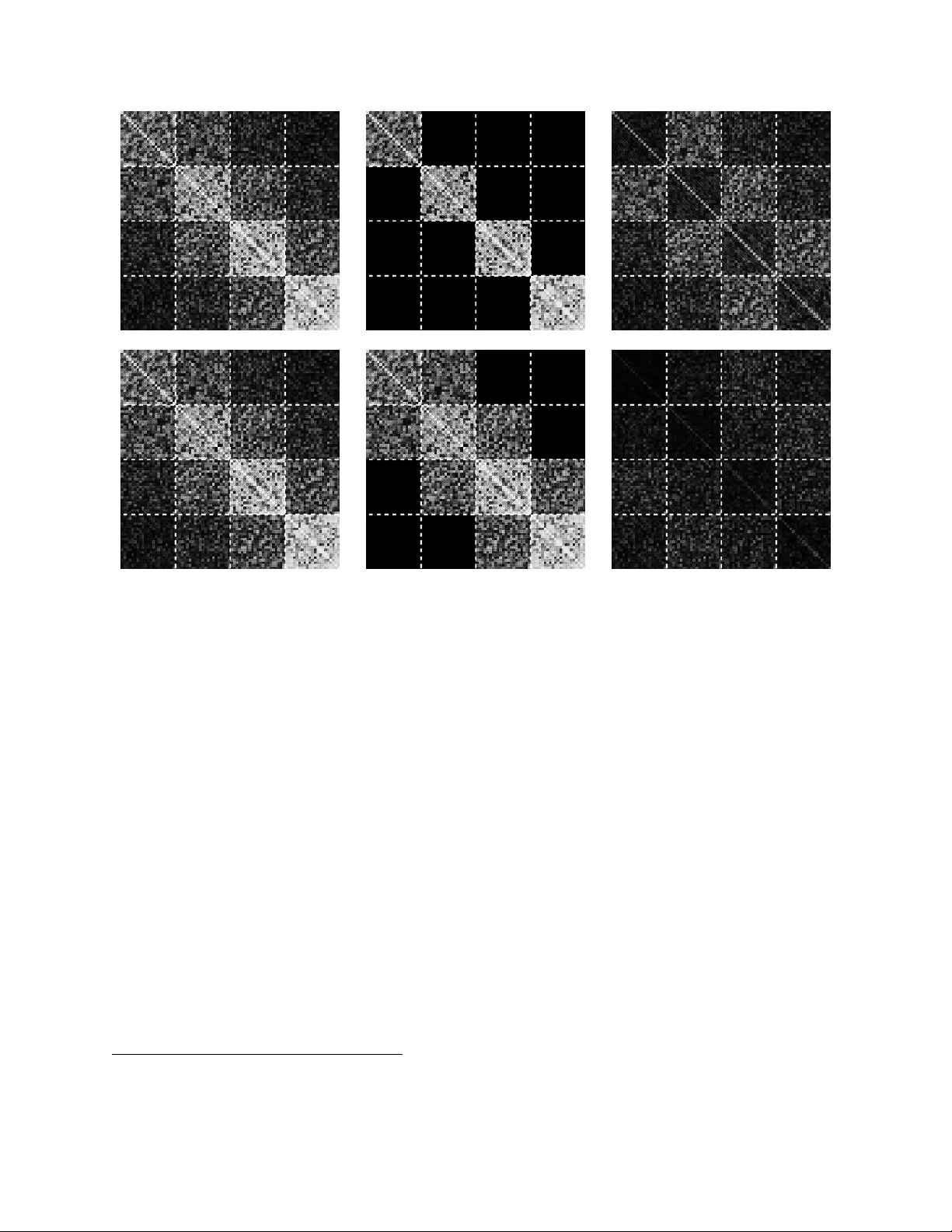

We propose an efficient method for approximating natural gradient descent in neural networks, which we call Kronecker-Factored Approximate Curvature (K-FAC). K-FAC is based on an efficiently invertible approximation of a neural network's Fisher inform…

Authors: James Martens, Roger Grosse
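
To illustrate why a Kronecker-factored curvature approximation can be "efficiently invertible", the sketch below checks a standard linear-algebra identity: for symmetric positive-definite `A` and `G`, `(A ⊗ G)^{-1} vec(V) = vec(G^{-1} V A^{-1})`, where `vec` stacks columns. It assumes, as the method's name suggests but the truncated abstract above does not spell out, that a layer's curvature block is approximated by a single Kronecker product. The matrices are random stand-ins, and every name here (`random_spd`, `kron_factored_solve`, `A`, `G`, `V`) is hypothetical and chosen for illustration only; this is not the authors' implementation.

```python
import numpy as np


def random_spd(n, rng):
    """Random symmetric positive-definite matrix (illustrative stand-in for a factor)."""
    m = rng.standard_normal((n, n))
    return m @ m.T + n * np.eye(n)


def kron_factored_solve(A, G, V):
    """Return U such that vec(U) = (A kron G)^{-1} vec(V), never forming A kron G.

    Uses U = G^{-1} V A^{-1}, which is valid because A is symmetric.
    """
    left = np.linalg.solve(G, V)          # G^{-1} V
    return np.linalg.solve(A, left.T).T   # (G^{-1} V) A^{-1}


rng = np.random.default_rng(0)
n_in, n_out = 4, 3                        # hypothetical layer sizes
A = random_spd(n_in, rng)                 # assumed input-side factor
G = random_spd(n_out, rng)                # assumed output-side factor
V = rng.standard_normal((n_out, n_in))    # stand-in for a layer's gradient

# Fast path: two small solves, of sizes n_out and n_in.
U_fast = kron_factored_solve(A, G, V)

# Reference path: build the full (n_in * n_out)-dimensional matrix and solve.
F = np.kron(A, G)
u_dense = np.linalg.solve(F, V.flatten(order="F"))   # column-stacking vec
U_dense = u_dense.reshape((n_out, n_in), order="F")

max_abs_diff = float(np.max(np.abs(U_fast - U_dense)))
print("max abs difference:", max_abs_diff)
```

The fast path only ever solves against the two small factors, whereas the reference path solves against a matrix whose side length is the product of the two factor sizes; the gap between the two costs grows quickly with layer width.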