The Art of CPU-Pinning: Evaluating and Improving the Performance of Virtualization and Containerization Platforms

Cloud providers offer a variety of execution platforms in the form of bare-metal, VMs, and containers. However, because each execution platform has its own pros and cons, choosing the appropriate platform for a specific cloud-based application has become a challenge for solution architects. The possibility of combining these platforms (e.g., deploying containers within VMs) offers new capabilities that make the challenge even more complicated. Yet there has been little study in the literature of the pros and cons of deploying different application types on various execution platforms. In particular, the evaluation of diverse hardware configurations and different CPU provisioning methods, such as CPU pinning, has not been sufficiently studied. In this work, the performance overhead of container, VM, and bare-metal execution platforms is measured and analyzed for four categories of real-world applications, namely video processing, parallel processing (MPI), web processing, and NoSQL, representing CPU-intensive, parallel-processing, and two I/O-intensive workloads, respectively. Our analyses reveal a set of interesting and sometimes counterintuitive findings that solution architects can use as best practices to deploy cloud-based applications efficiently. Notable findings include: (A) under specific circumstances, containers can impose a higher overhead than VMs; (B) containers on top of VMs can mitigate the overhead of VMs for certain applications; (C) containers with a large number of cores impose a lower overhead than those with a few cores.

💡 Research Summary

The paper presents a systematic performance evaluation of four common cloud execution environments—bare‑metal (BM), KVM‑based virtual machines (VM), Docker containers (CN), and Docker containers running inside VMs (VMCN)—with a focus on the impact of CPU provisioning strategies, especially CPU‑pinning (static core binding). The authors motivate the study by noting that while cloud providers offer a plethora of deployment options, architects often lack quantitative guidance on which platform best suits a given workload, and existing literature scarcely addresses how hardware configurations and CPU‑pinning affect overhead.

Experimental Setup

All experiments run on a single physical host: a Dell PowerEdge R830 equipped with four Intel Xeon E5‑4628Lv4 CPUs (112 logical cores, 384 GB RAM, RAID‑1 storage). Six instance sizes are defined, ranging from 2 to 64 cores, with memory scaled proportionally (Table II). Each platform is evaluated in two CPU allocation modes: “vanilla” (default CFS scheduling, i.e., CPU‑quota) and “pinning” (CPU‑set, where specific cores are dedicated to the VM or container). The baseline is bare‑metal, which incurs the smallest possible overhead.
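For containers, the two allocation modes correspond to Docker's resource flags: `--cpus` sets a CFS quota (a share of CPU time that may be consumed on any host core), while `--cpuset-cpus` restricts the container to specific cores. The sketch below builds the matching `docker run` argument lists for one instance size. The image name, the contiguous core range, the doubling sequence of sizes, and the memory-per-core ratio are hypothetical placeholders, not values taken from Table II.

```python
from typing import List

# Hypothetical ratio for illustration only; Table II gives the actual memory sizes.
MEM_GB_PER_CORE = 2


def docker_run_args(image: str, cores: int, mode: str, first_core: int = 0) -> List[str]:
    """Build `docker run` arguments for a container with `cores` CPUs.

    mode="vanilla": CPU quota via --cpus; the host CFS scheduler may place
                    the container's threads on any host core.
    mode="pinning": CPU set via --cpuset-cpus; threads may run only on the
                    contiguous cores first_core .. first_core + cores - 1.
    """
    args = ["docker", "run", "--rm", f"--memory={cores * MEM_GB_PER_CORE}g"]
    if mode == "vanilla":
        args.append(f"--cpus={cores}")
    elif mode == "pinning":
        last_core = first_core + cores - 1
        args.append(f"--cpuset-cpus={first_core}-{last_core}")
    else:
        raise ValueError(f"unknown mode: {mode!r}")
    args.append(image)
    return args


# Six instance sizes from 2 to 64 cores (a doubling sequence is assumed here).
INSTANCE_SIZES = [2, 4, 8, 16, 32, 64]

if __name__ == "__main__":
    for size in INSTANCE_SIZES:
        print(" ".join(docker_run_args("example/ffmpeg-bench", size, "pinning")))
```

The only difference between the two modes is the single CPU flag, which keeps the comparison focused on CPU provisioning rather than on other container settings.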

Four representative real‑world applications are selected to cover distinct processing characteristics:

- FFmpeg 3.4.6 – a multi‑threaded video transcoding tool, representing a CPU‑bound workload that can exploit up to 16 cores.

- OpenMPI 2.1.1 – an MPI implementation running a high‑performance parallel computing workload, emphasizing inter‑process communication overhead.

- WordPress 5.3.2 – a typical web application serving many short, I/O‑intensive HTTP requests.

- Apache Cassandra 2.2 – a NoSQL database with heavy disk and memory I/O inside a single large process.

Performance is measured as total execution time; the overhead ratio is defined as (execution time on a given platform) / (average execution time on bare‑metal). Linux utilities (top, iostat, perf) and BCC tracing are used to capture CPU migrations, cache misses, and context‑switch counts.
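As a worked example of the overhead metric, the sketch below divides each run on a platform by the mean bare‑metal time and averages the resulting ratios. Converting a ratio to a percentage as `(ratio - 1) × 100` is an assumption about how the percentages quoted in the findings relate to the ratio; the timings in the demo are hypothetical, not measurements from the paper.

```python
from statistics import mean
from typing import Sequence


def overhead_ratio(platform_times: Sequence[float], bare_metal_times: Sequence[float]) -> float:
    """Mean of (platform run time / average bare-metal run time) over all platform runs."""
    baseline = mean(bare_metal_times)
    return mean(t / baseline for t in platform_times)


def overhead_percent(ratio: float) -> float:
    """Assumed convention: a ratio of 1.15 means 15 % slower than bare-metal."""
    return (ratio - 1.0) * 100.0


if __name__ == "__main__":
    # Hypothetical timings in seconds, not measurements from the paper.
    bm = [100.0, 102.0, 98.0]
    vm = [112.0, 110.0, 114.0]
    r = overhead_ratio(vm, bm)
    print(f"ratio={r:.2f}, overhead={overhead_percent(r):.1f}%")
```

A ratio of 1.0 means parity with bare‑metal; values above 1.0 measure how much slower the platform is.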

Key Findings

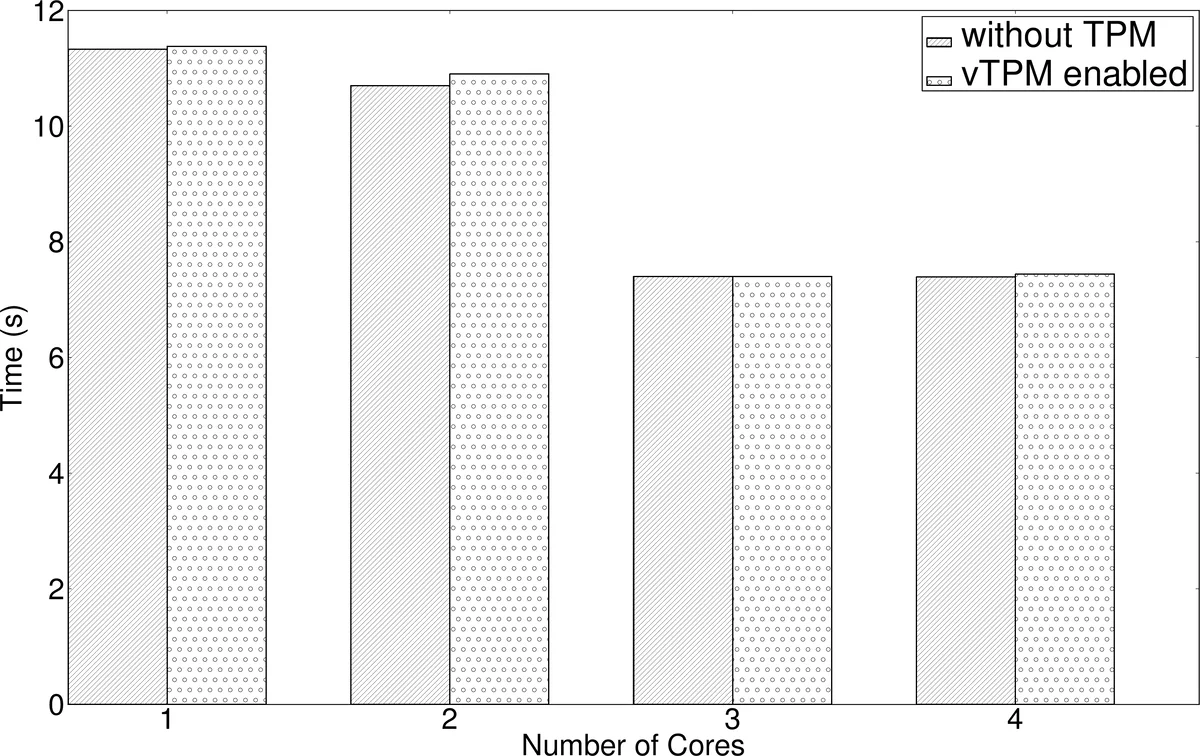

CPU‑Pinning Reduces Overhead – Pinning limits the host scheduler’s freedom to move threads between cores, thereby reducing process migrations, cache‑miss penalties, and context‑switch overhead. For CPU‑intensive workloads (FFmpeg, MPI), the average overhead drops by 8‑12 % when pinning is enabled. For I/O‑bound workloads (WordPress, Cassandra), the benefit is modest (4‑7 %) because the dominant cost lies in disk and network operations rather than CPU scheduling. Pinning, however, dedicates the assigned cores exclusively: any of them the workload cannot keep busy sit idle and are unavailable to other tenants, which can lower overall CPU utilization.
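The same idea can be tried at the process level with Linux CPU affinity. The sketch below uses Python's `os.sched_setaffinity` (Linux‑only) to restrict the current process to a chosen set of cores, which is what the `taskset` utility does from the shell. It is a process‑level analogue offered for illustration, not the cgroup cpuset or vCPU pinning used for the containers and VMs in the study.

```python
import os
from typing import Iterable, Set


def pin_current_process(cores: Iterable[int]) -> Set[int]:
    """Pin the calling process (pid 0) to `cores` and return the resulting affinity mask.

    Linux-only: os.sched_setaffinity / os.sched_getaffinity do not exist on macOS or Windows.
    """
    requested = set(cores)
    allowed = os.sched_getaffinity(0)
    missing = requested - allowed
    if not requested or missing:
        raise ValueError(f"cannot pin to {sorted(requested)}; unavailable cores: {sorted(missing)}")
    os.sched_setaffinity(0, requested)
    return os.sched_getaffinity(0)


if __name__ == "__main__":
    available = sorted(os.sched_getaffinity(0))
    # Pin to the first half of the currently allowed cores (at least one core).
    subset = available[: max(1, len(available) // 2)]
    print("pinned to", sorted(pin_current_process(subset)))
```

After pinning, the kernel will no longer migrate this process onto cores outside the mask, which is the mechanism behind the reduced migration and cache‑miss counts.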

Containers Are Not Always Faster Than VMs – Contrary to the common belief that containers are always lighter, the study shows that on small instance sizes (2–4 cores) containers can incur higher overhead than VMs. This is attributed to containers relying on the host’s CFS scheduler through cgroup CPU quotas, which leads to contention when few cores are shared among many containers. As the core count grows, the gap narrows; with 16 cores or more, containers match or slightly outperform VMs.
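To make the quota mechanism concrete: with Docker's default CFS period of 100 ms (100 000 µs), a `--cpus=N` limit translates into a quota of N × 100 000 µs of CPU time per period, which the container may consume on any host core. The helper below shows only that arithmetic; it does not model the contention effect described above.

```python
DEFAULT_CFS_PERIOD_US = 100_000  # Docker's default --cpu-period (100 ms)


def cfs_quota_us(cpus: float, period_us: int = DEFAULT_CFS_PERIOD_US) -> int:
    """CPU time (microseconds) a cgroup may use per CFS period for a --cpus=cpus limit."""
    if cpus <= 0:
        raise ValueError("cpus must be positive")
    return round(cpus * period_us)


if __name__ == "__main__":
    for cores in (2, 4, 16, 64):
        quota = cfs_quota_us(cores)
        # The quota caps total CPU time, but threads may still be scheduled on any host core.
        print(f"--cpus={cores}: quota={quota} us per {DEFAULT_CFS_PERIOD_US} us period")
```

Because the quota caps time rather than placement, a small container's threads can still be spread across, and migrated between, many host cores.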

Containers‑in‑VM (VMCN) Can Mitigate VM Overhead – By pinning a VM to a set of cores and then running a Docker container inside it, the system benefits from the VM’s isolation while avoiding an extra scheduling layer for the container. In the experiments, VMCN consistently outperformed plain VMs for MPI and Cassandra, reducing overhead by 5‑9 % and indicating a synergistic effect.
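On a libvirt/KVM host, the VM‑pinning half of a VMCN setup can be expressed with `virsh vcpupin <domain> <vcpu> <cpulist>`. The sketch below generates one such command per vCPU using a one‑to‑one vCPU‑to‑core mapping. The domain name, image name, and mapping are hypothetical choices for illustration, not the paper's configuration.

```python
from typing import List, Sequence


def vcpupin_commands(domain: str, host_cores: Sequence[int]) -> List[List[str]]:
    """Map vCPU i of a libvirt domain to host_cores[i] (one-to-one pinning).

    Each entry is an argv list for `virsh vcpupin <domain> <vcpu> <cpulist>`.
    """
    if len(set(host_cores)) != len(host_cores):
        raise ValueError("each vCPU needs its own dedicated host core")
    return [["virsh", "vcpupin", domain, str(vcpu), str(core)]
            for vcpu, core in enumerate(host_cores)]


if __name__ == "__main__":
    # Hypothetical 4-vCPU guest named "vmcn-guest" pinned to host cores 8-11.
    for argv in vcpupin_commands("vmcn-guest", range(8, 12)):
        print(" ".join(argv))
    # Inside the guest, a container is then started as usual (hypothetical image), e.g.:
    #   docker run --rm example/cassandra-bench
```

Rejecting duplicate cores keeps each vCPU on its own dedicated host core, matching the "dedicated cores" sense of pinning used throughout.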

Core Count Matters – Overhead ratios decrease markedly as the number of allocated cores increases. With only 2 cores, average overhead exceeds 15 %; with 64 cores it falls below 3 %. Larger core pools give the scheduler more flexibility to allocate dedicated cores, reducing migrations and cache thrashing. For highly parallel workloads (MPI) and heavy I/O (Cassandra), 32 cores or more bring performance within a few percent of bare‑metal.

Bare‑Metal Remains the Gold Standard – Unsurprisingly, bare‑metal delivers the lowest absolute execution times across all workloads. However, its management overhead, lack of isolation, and poor multi‑tenant utilization make it unsuitable for most cloud scenarios except for ultra‑high‑performance or latency‑critical services.

Best‑Practice Recommendations

- CPU‑Bound Workloads (FFmpeg, MPI) – Deploy on instances with ≥ 16 cores, enable CPU‑pinning, and prefer VMCN if isolation is required.

- I/O‑Bound Workloads (WordPress, Cassandra) – Use ≥ 8 cores; pinning is optional (benefit modest). Containers alone are sufficient and simplify operations.

- Small Instances (2–4 cores) – Favor VMs over containers, as containers may suffer higher scheduling contention.

- Large Instances (≥ 32 cores) – Overhead differences among BM, VM, CN, and VMCN become negligible; choose based on security, licensing, or operational policies.
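The recommendations above can be condensed into a small rule table. The sketch below is a hypothetical helper, not a tool from the paper: the `workload` labels and the way overlapping rules are combined (every applicable rule is returned, and plain containers are suggested for I/O‑bound work only once the 8‑core threshold is met) are assumptions made for illustration.

```python
from typing import List


def recommendations(workload: str, cores: int, needs_isolation: bool = False) -> List[str]:
    """Collect the best-practice rules that apply to one deployment.

    workload: "cpu" for CPU-bound (e.g. FFmpeg, MPI), "io" for I/O-bound
              (e.g. WordPress, Cassandra). These labels are hypothetical.
    """
    advice: List[str] = []
    if workload == "cpu":
        if cores < 16:
            advice.append("use an instance with >= 16 cores")
        advice.append("enable CPU pinning")
        if needs_isolation:
            advice.append("prefer VMCN (container inside a pinned VM)")
    elif workload == "io":
        if cores < 8:
            advice.append("use an instance with >= 8 cores")
        else:
            advice.append("plain containers suffice; pinning is optional")
    else:
        raise ValueError(f"unknown workload kind: {workload!r}")

    if cores <= 4:
        advice.append("favor a VM over a container at this size")
    if cores >= 32:
        advice.append("platform overhead differences are negligible; "
                      "decide on security, licensing, or operational policy")
    return advice


if __name__ == "__main__":
    print(recommendations("cpu", 8, needs_isolation=True))
    print(recommendations("io", 64))
```

Returning every applicable rule, rather than a single platform choice, leaves trade‑offs such as isolation versus simplicity to the architect, in line with the list's own framing.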

Conclusion

The study empirically validates that CPU‑pinning can meaningfully reduce virtualization overhead, especially for CPU‑intensive applications, but it must be balanced against potential under‑utilization of idle cores. It also challenges the blanket assumption that containers are always more efficient than VMs, showing that workload characteristics and instance size critically influence the outcome. By providing quantitative data and concrete guidelines, the paper equips cloud architects with actionable insights for selecting the optimal execution platform and CPU provisioning strategy, ultimately enabling more cost‑effective and performant cloud deployments.
