Real-Time Stochastic Optimal Control for Multi-agent Quadrotor Systems

This paper presents a novel method for controlling teams of unmanned aerial vehicles using Stochastic Optimal Control (SOC) theory. The approach consists of a centralized high-level planner that computes optimal state trajectories as velocity sequenc…

Authors: Vicenc{c} Gomez, Sep Thijssen, Andrew Symington

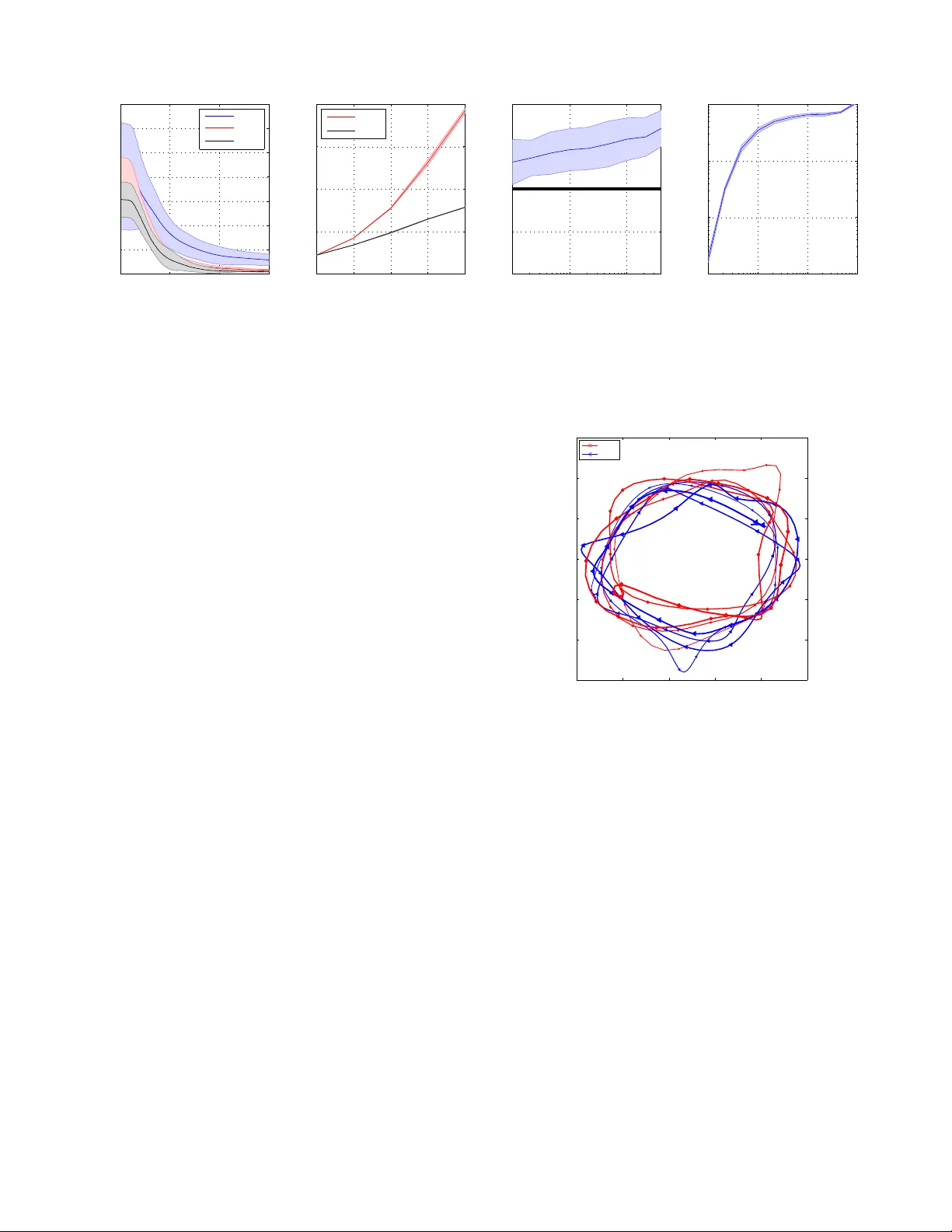

Real-T ime Stochastic Optimal Contr ol f or Multi-agent Quadr otor Systems V icenc ¸ G ´ omez 1 , Sep Thijssen 2 , Andrew Symington 3 , Stephen Hailes 4 , Hilbert J. Kappen 2 1 Univ ersitat Pompeu F abra. Barcelona, Spain vicen.gomez@upf.edu 2 Radboud Univ ersity Nijme gen, the Netherlands { s.thijssen,b.kappen } @donders.ru.nl 3 Univ ersity of California Los Angeles, USA andrew.c.symington@gmail.com 4 Univ ersity Colle ge London, United Kingdom s.hailes@cs.ucl.ac.uk Abstract This paper presents a nov el method for controlling teams of unmanned aerial vehicles using Stochastic Optimal Con- trol (SOC) theory . The approach consists of a centralized high-lev el planner that computes optimal state trajectories as velocity sequences, and a platform-specific low-le vel con- troller which ensures that these velocity sequences are met. The planning task is expressed as a centralized path-inte gral control problem, for which optimal control computation cor- responds to a probabilistic inference problem that can be solved by ef ficient sampling methods. Through simulation we sho w that our SOC approach (a) has significant benefits compared to deterministic control and other SOC methods in multimodal problems with noise-dependent optimal solu- tions, (b) is capable of controlling a large number of platforms in real-time, and (c) yields collective emergent behaviour in the form of flight formations. Finally , we show that our ap- proach w orks for real platforms, by controlling a team of three quadrotors in outdoor conditions. 1 Introduction The recent surge in autonomous Unmanned Aerial V ehicle (U A V) research has been driven by the ease with which plat- forms can no w be acquired, ev olving legislation that reg- ulates their use, and the broad range of applications en- abled by both individual platforms and cooperati ve swarms. Example applications include automated delivery systems, monitoring and surveillance, target tracking, disaster man- agement and navigation in areas inaccessible to humans. Quadrotors are a natural choice for an experimental plat- form, as they provide a safe, highly-agile and inexpensi ve means by which to ev aluate U A V controllers. Figure 1 shows a 3D model of one such quadrotor , the Ascending T echnolo- gies P elican . Quadrotors hav e non-linear dynamics and are naturally unstable, making control a non-trivial problem. Stochastic optimal control (SOC) provides a promising theoretical frame work for achieving autonomous control of quadrotor systems. In contrast to deterministic control, SOC directly captures the uncertainty typically present in noisy en vironments and leads to solutions that qualitativ ely de- pend on the level of uncertainty (Kappen 2005). Howe ver , with the exception of the simple Linear Quadratic Gaussian Copyright c 2016, Association for the Advancement of Artificial Intelligence (www .aaai.org). All rights reserv ed. Figure 1: Control hierarch y : The path-integral controller (1) calculates target velocities/heights for each quadrotor . These are conv erted to roll, pitch, throttle and yaw rates by a platform-specific V elocity Height PID controller (2). This control is in turn passed to the platform’ s flight control sys- tem (3), and con verted to relativ e motor speed changes. case, for which a closed form solution exists, solving the SOC problem requires solving the Hamilton Jacobi Bellman (HJB) equations. These equations are generally intractable, and so the SOC problem remains an open challenge. In such a complex setting, a hierarchical approach is usu- ally taken and the control problem is reduced to follow a state-trajectory (or a set of way points) designed by hand or computed of fline using trajectory planning algorithms (Kendoul 2012). While the planning step typically inv olves a low-dimensional state representation, the control methods use a detailed complex state representation of the U A V . Ex- amples of control methods for trajectory tracking are the Proportional Inte gral Deriv ati ve or the Linear-Quadratic reg- ulator . A generic class of SOC problems was introduced in Kap- pen; T odorov (2005; 2006) for which the controls and the cost function are restricted in a way that makes the HJB equation linear and therefore more ef ficiently solv able. This class of problems is known as path integral (PI) control, linearly-solvable controlled dif fusions or K ullback-Leibler control, and it has lead to successful robotic applications, e.g. (Kinjo, Uchibe, and Doya 2013; Rombokas et al. 2012; Theodorou, Buchli, and Schaal 2010). A particularly inter- esting feature of this class of problems is that the compu- tation of optimal control is an inference problem with a so- lution giv en in terms of the passive dynamics. In a multi- agent system, where the agents follow independent passiv e dynamics, such a feature can be exploited using approxi- mate inference methods such as v ariational approximations or belief propagation (Kappen, G ´ omez, and Opper 2012; V an Den Broek, W iegerinck, and Kappen 2008). In this paper , we sho w how PI control can be used for solving motion planning tasks on a team of quadrotors in real time. W e combine periodic re-planning with receding horizon, similarly to model predictive control, with effi- cient importance sampling. At a high lev el, each quadro- tor is modelled as a point mass that follows simple dou- ble integrator dynamics. Lo w-le vel control is achieved us- ing a standard Proportional Integral Deriv ati ve (PID) veloc- ity controller that interacts with a real or simulated flight control system. W ith this strategy we can scale PI control to ten units in simulation. Although in principle there are no further limits to experiments with actual platforms, our first results with real quadrotors only include three units. T o the best of our knowledge this has been the first real-time im- plementation of PI control on an actual multi-agent system. In the next section we describe related work. W e introduce our approach in Section 3 Results are shown on three dif- ferent scenarios in Section 4 Finally , Section 5 concludes this paper . 2 Related W ork on U A V Planning and Control There is a large and growing body of literature related to this topic. In this section, we highlight some of the most related papers to the presented approach. An recent sur- ve y of control methods for general UA Vs can be found in Kendoul (2012). Stochastic optimal control is mostly used for U A V con- trol in its simplest form, assuming a linear model perturbed by additi ve Gaussian noise and subject to quadratic costs (LQG), e.g. (Ho w et al. 2008). While LQG can successfully perform simple actions like hovering, executing more com- plex actions requires considering additional corrections for aerodynamic effects such as induced power or blade flap- ping (Hoffmann et al. 2011). These approaches are mainly designed for accurate trajectory control and assume a gi ven desired state trajectory that the controller transforms into motor commands. Model Predictive Control (MPC) has been used optimize trajectories in multi-agent U A V systems (Shim, Kim, and Sastry 2003). MPC emplo ys a model of the U A V and solves an optimal control problem at time t and state x ( t ) over a future horizon of a fixed number of time-steps. The first op- timal move u ∗ ( t ) is then applied and the rest of the optimal sequence is discarded. The process is repeated again at time t + 1 . A quadratic cost function is typically used, but other more complex functions e xist. MPC has mostly been used in indoor scenarios, where high-precision motion capture systems are av ailable. For in- stance, in Kushleye v et al. (2013) authors generate smooth trajectories through known 3-D en vironments satisfying specifications on intermediate waypoints and sho w remark- able success controlling a team of 20 quadrotors. T rajectory optimization is translated to a relaxation of a mix ed inte- ger quadratic program problem with additional constraints for collision a voidance, that can be solved ef ficiently in real- time. Examples that follow a similar methodology can be found in T urpin, Michael, and Kumar; Augugliaro, Schoel- lig, and D’Andrea (2012; 2012). Similarly to our approach, these methods use a simplified model of dynamics, either us- ing the 3-D position and yaw angle Kushle yev et al.; T urpin, Michael, and Kumar (2013; 2012) or the position and ve- locities as in Augugliaro, Schoellig, and D’Andrea (2012). Howe ver , these approaches are inherently deterministic and express the optimal control problem as a quadratic problem. In our case, we solve an inference problem by sampling and we do not require intermediate trajectory waypoints. In outdoor conditions, motion capture is difficult and Global Positioning System (GPS) is used instead. Existing control approaches are typically either based on Reynolds flocking (B ¨ urkle, Segor , and K ollmann 2011; Hauert et al. 2011; V ´ as ´ arhelyi et al. 2014; Reynolds 1987) or flight for- mation (Guerrero and Lozano 2012; Y u et al. 2013). In Reynolds flocking, each agent is considered a point mass that obeys simple and distributed rules: separate from neigh- bors, align with the av erage heading of neighbors and steer tow ards neighborhood centroid to keep cohesion. Flight for - mation control is typically modeled using graphs, where ev- ery node is an agent that can exchange information with all or several agents. V elocity and/or position coordination is usually achiev ed using consensus algorithms. The work in Quintero, Collins, and Hespanha (2013) shares man y similarities with our approach. Authors deriv e a stochastic optimal control formulation of the flocking prob- lem for fixed-wings U A Vs. They take a leader-follo wer strat- egy , where the leader follows an arbitrary (predefined) pol- icy that is learned of fline and define the immediate cost as a function of the distance and heading with respect to the leader . Their method is demonstrated outdoors with 3 fixed- wing U A Vs in a distributed sensing task. As in this paper , they formulate a SOC problem and perform MPC. Howe ver , in our case we do not restrict to a leader -follower setup and consider a more general class of SOC problems which can include coordination and cooperation problems. Planning approaches W ithin the planning community , Bernardini, Fox, and Long (2014) consider search and track- ing tasks, similar to one of our scenarios. Their approach is different to ours, they formulate a planning problem that uses used sear ch patterns that must be selected and se- quenced to maximise the probability of rediscovering the target. Albore et al. (2015) and Chanel, T eichteil-Knigsbuch, and Lesire (2013) consider a different problem: dynamic data acquisition and en vironmental kno wledge optimisation. Both techniques use some form of replanning. While Al- bore et al. (2015) uses a Marko v Random Field frame work to represent knowledge about the uncertain map and its qual- ity , Chanel, T eichteil-Knigsbuch, and Lesire (2013) rely on partially-observable MDPs. All these works consider a sin- gle U A V scenario and low-le vel control is either neglected or deferred to a PID or waypoint controller . Recent Progress in Path-Integral Contr ol There has been significant progress in PI control, both theoretically and in applications. Most of existing methods use parametrized policies to ov ercome the main limitations (see Section 3.1). Examples can be found in Theodorou, Buchli, and Schaal; Stulp and Sigaud; G ´ omez et al. (2010; 2012; 2014). In these methods, the optimal control solution is restricted by the class of parametrized policies and, more importantly , it is computed offline. In Rawlik, T oussaint, and V ijayaku- mar (2013), authors propose to approximate the transformed cost-to-go function using linear operators in a reproducing kernel Hilbert space. Such an approach requires an analyt- ical form of the PI embedding, which is dif ficult to obtain in general. In Horowitz, Damle, and Burdick (2014), a low- rank tensor representation is used to represent the model dy- namics, allowing to scale PI control up to a 12-dimensional system. More recently , the issue of state-dependence of the optimal control has been addressed (Thijssen and Kappen 2015), where a parametrized state-dependent feedback con- troller is deriv ed for the PI control class. Finally , model predicti ve PI control has been recently pro- posed for controlling a nano-quadrotor in indoor settings in an obstacle av oidance task (W illiams, Rombokas, and Daniel 2014). In contrast to our approach, their method is not hierac hical and uses nai ve sampling, which makes it less sample efficient. Additionally , the control cost term is ne- glected, which can ha ve important implications in complex tasks inv olving noise. The approach presented here scales well to sev eral U A Vs in outdoor conditions and is illustrated in tasks beyond obstacle a voidance navigation. 3 Path-Integral Contr ol for Multi-U A V planning W e first briefly re vie w PI control theory . This is follo wed by a description of the proposed method used to achie ve motion planning of multi-agent U A V systems using PI control. 3.1 Path-Integral Control W e consider continuous time stochastic control problems, where the dynamics and cost are respectively linear and quadratic in the control input, but arbitrary in the state. More precisely , consider the following stochastic differential equation of the state vector x ∈ R n under controls u ∈ R m d x = f ( x ) dt + G ( x )( u dt + d ξ ) , (1) where ξ is m − dimensional W iener noise with co variance Σ u ∈ R m × m and f ( x ) ∈ R n and G ( x ) ∈ R n × m are ar- bitrary functions, f is the drift in the uncontrolled dynamics (including gravity , Coriolis and centripetal forces), and G describes the effect of the control u into the state vector x . A realization τ = x 0: dt : T of the abov e equation is called a (random) path. In order to describe a control problem we define the cost that is attributed to a path (cost-to-go) by S ( τ | x 0 , u ) = r T ( x T ) + X t =0: dt : T − dt r t ( x t ) + 1 2 u > t Ru t dt, (2) where r T ( x T ) and r t ( x t ) are arbitrary state cost terms at end and intermediate times, respectiv ely . R is the control cost matrix. The general stochastic optimal control problem is to minimize the expected cost-to-go w .r .t. the control u ∗ = arg min u E [ S ( τ | x 0 , u )] . In general, such a minimization leads to the Hamilton- Jacobi-Bellman (HJB) equations, which are non-linear, sec- ond order partial differential equations. Ho we ver , under the following relation between the control cost and noise co- variance Σ u = λ R − 1 , the resulting equation is linear in the exponentially transformed cost-to-go function. The solution is giv en by the Feynman-Kac Formula, which e xpresses op- timal control in terms of a Path-Integral, which can be in- terpreted as taking the expectation under the optimal path distribution (Kappen 2005) p ∗ ( τ | x 0 ) ∝ p ( τ | x 0 , u ) exp( − S ( τ | x 0 , u ) /λ ) , (3) h u ∗ t ( x 0 ) i = h u t + ( ξ t + dt − ξ t ) /dt i , (4) where p ( τ | x 0 , u ) denotes the probability of a (sub-optimal) path under equation (1) and h·i denotes expectation over paths distributed by p ∗ . The constraint Σ u = λ R − 1 forces control and noise to act in the same dimensions, but in an in verse relation. Thus, for fixed λ , the larger the noise, the cheaper the control and vice- versa. Parameter λ act as a temperature: higher v alues of λ result in optimal solutions that are closer to the uncontrolled process. Equation (4) permits optimal control to be calculated by probabilistic inference methods, e.g., Monte Carlo. An in- teresting fact is that equations (3, 4) hold for all controls u . In particular , u can be chosen to reduce the v ariance in the Monte Carlo computation of h u ∗ t ( x 0 ) i which amounts to importance sampling. This technique can drastically im- prov e the sampling efficiency , which is crucial in high di- mensional systems. Despite this improvement, direct appli- cation of PI control into real systems is limited because it is not clear how to choose a proper importance sampling dis- tribution. Furthermore, note that equation (4) yields the op- timal control for all times t av eraged ov er states. The result is therefore an open-loop controller that neglects the state- dependence of the control beyond the initial state. 3.2 Multi-U A V planning The proposed architecture is composed of two main lev els. At the most abstract lev el, the U A V is modeled as a 2D point-mass system that follows double integrator dynamics. Algorithm 1 PI control for UA V motion planning 1: function P I C O N T RO L L E R ( N , H , dt, r t ( · ) , Σ u , u t : dt : t + H ) 2: for k = 1 , . . . , N do 3: Sample paths τ k = { x t : dt : t + H } k with Eq. (5) 4: end f or 5: Compute S k = S ( τ k | x 0 , u ) with Eq. (2) 6: Store the noise realizations { ξ t : dt : t + H } k 7: Compute the weights: w k = e − S k /λ / P l e − S l /λ 8: for s = t : dt : t + H do 9: u ∗ s = u s + 1 dt P k w k ( { ξ s + dt } k − { ξ s } k ) 10: end f or 11: Return next desired velocity: v t + dt = v t + u ∗ t dt and u ∗ t : dt : t + H for importance sampling at t + dt 12: end function At the lo w-level, we use a detailed second order model that we learn from real flight data (De Nardi and Holland 2008). W e use model predictive control combined with importance sampling. There are tw o main benefits of using the proposed approach: first, since the state is continuously updated, the controller does not suffer from the problems caused by us- ing an open-loop controller . Second, the control policy is not restricted by any parametrization. The two-lev el approach permits to transmit control sig- nals from the high-lev el PI controller to the lo w-level control system at a relati vely lo w frequencies (we use 15 Hz in this work). Consequently , the PI controller has more time av ail- able for sampling a large number of trajectories, which is critical to obtain good estimates of the control. The choice of 2D in the presented method is not a fundamental limitation, as long as double-integrator dynamics is used. The control hierarchy introduces additional model mismatch. Howe ver , as we show in the results later , this mismatch is not critical for obtaining good performance in real conditions. Ignoring height, the state vector x is thus composed of the East-North (EN) positions and EN velocities of each agent i = 1 , . . . , M as x i = [ p i , v i ] > where p i , v i ∈ R 2 . Similarly , the control u consists of EN accelerations u i ∈ R 2 . Equa- tion (1) decouples between the agents and takes the linear form dx i = Ax i dt + B ( u i dt + dξ i ) , A = 0 1 0 0 , B = 0 1 . (5) Notice that although the dynamics is decoupled and linear, the state cost r t ( x t ) in equation (2) can be any arbitrary function of all U A Vs states. As a result, the optimal con- trol will in general be a non-linear function that couples all the states and thus hard to compute. Giv en the current joint optimal action u ∗ t and velocity v t , the expected velocity at the next time t 0 is calculated as v t 0 = v t + ( t 0 − t ) u ∗ t and passed to the low-le vel controller . The final algorithm optionally keeps an importance-control sequence u t : dt : t + H that is incrementally updated. W e sum- marize the high-lev el controller in Algorithm 1. The importance-control sequence u t : dt : t + H is initialized using prior kno wledge or with zeros otherwise. Noise is Figure 2: The flight control system (FCS) is comprised of two control loops: one for stabilization and the other for pose control. A lo w-level controller interacts with the FCS ov er a serial interface to stream measurements and issue control. dimension-independent, i.e. Σ u = σ 2 u Id. T o measure sam- pling con ver gence, we define the Effective Sample Size (ESS) as ESS := 1 / P N k =1 w 2 k , which is a quantity between 1 and N . V alues of ESS close to one indicate an estimate dominated by just one sample and a poor estimate of the optimal control, whereas an ESS close to N indicates near perfect sampling, which occurs when the importance- equals the optimal-control function. 3.3 Low Level Contr ol The target velocity v = [ v E v N ] > is passed along with a height ˆ p U to a V elocity-Height controller . This con- troller uses the current state estimate of the real quadrotor y = [ p E p N p U φ θ ψ u v w p q r ] > , where ( p E , p N , p U ) and ( φ, θ, ψ ) denote na vigation-frame position and orienta- tion and ( u, v , w ) , ( p, q, r ) denote body-frame and angular velocities, respecti vely . It is composed of four independent PID controllers for roll ˆ φ , pitch ˆ θ , throttle ˆ γ and yaw rate ˆ r . that send the commands to the flight control system (FCS) to achiev e v . Figure 2 shows the details of the FCS. The control loop runs at 1kHz fusing triaxial gyroscope, accelerometer and magnetometer measurements. The accelerometer and mag- netometer measurements are used to determine a reference global orientation, which is in turn used to track the gyro- scope bias. The difference between the desired and actual angular rates are con verted to motor speeds using the model in Mahony , Kumar , and Corke (2012). An outer pose control loop calculates the desired angular rates based on the desired state. Orientation is obtained from the inner control loop, while position and velocity are ob- tained by fusing GPS na vigation fix es with barometric pres- sure (BAR) based altitude measurements. The radio trans- mitter (marked TX in the diagram) allo ws the operator to switch quickly between autonomous and manual control of a platform. There is also an acoustic alarm on the platform itself, which warns the operator when the GPS signal is lost or the battery is getting low . If the battery reaches a critical lev el or communication with the transmitter is lost, the plat- form can be configured to land immediately or alternati vely , to fly back and land at its take-of f point. Figure 3: Drunken Quadrotor: a red target has to be reached while avoiding obstacles. (Left) the shortest route is the op- timal solution in the absence of noise. (Right) with control noise, the optimal solution is to fly around the building. 3.4 Simulator Platform W e hav e developed an open-source frame work called CRATES 1 . The framework is a implementation of QRSim (De Nardi 2013; Symington et al. 2014) in Gazebo, which uses Robot Operating System (ROS) for high-le vel control. It permits high-le vel controllers to be platform-agnostic. It is similar to the Hector Quadrotor project (Meyer et al. 2012) with a formalized notion of a hardware abstraction layers. The CRATES simulator propagates the quadrotor state forward in time based on a second order model (De Nardi and Holland 2008). The equations were learned from real flight data and verified by expert domain knowledge. In ad- dition to platform dynamics, CRATES also simulates var - ious noise-perturbed sensors, wind shear and turbulence. Orientation and barometric altitude errors follo w zero-mean Ornstein-Uhlenbeck processes, while GPS error is modeled at the pseudo range le vel using trace data av ailable from the International GPS Service. In accordance with the Mil- itary Specification MIL-F-8785C, wind shear is modeled as a function of altitude, while turbulence is modeled as a dis- crete implementation of the Dryden model. CRATES also provides support for generating terrain from satellite images and light detection and ranging (LID AR) technology , and reporting collisions between platforms and terrain. 4 Results W e now analyze the performance of the proposed approach in three dif ferent tasks. W e first sho w that, in the presence of control noise, PI control is preferable ov er other approaches. For clarity , this scenario is presented for one agent only . W e then consider two tasks in volving several units: a flight for- mation task and a pursuit-ev asion task. W e compare the PI control method described in Section 3.2 with iterative linear -quadratic Gaussian (iLQG) control (T odorov and Li 2005). iLQG is a state-of-the-art method based on differential dynamic programming, that iterati vely computes local linear-quadratic approximations to the finite 1 CRATES stands for ’Cogniti ve Robotics Architecture for T ightly-Coupled Experiments and Simulation’. A vailable at https://bitbucket.org/vicengomez/crates horizon problem. A key difference between iLQG and PI control is that the linear-quadratic approximation is certainty equiv alent. Consequently , iLQG yields a noise independent solution. 4.1 Scenario I: Drunken Quadr otor This scenario is inspired in Kappen (2005) and highlights the benefits of SOC in a quadrotor task. The Drunken Quadro- tor is a finite horizon task where a quadrotor has to reach a target, while a voiding a b uilding and a wall (figure 3). There are two possible routes: a shorter one that passes through a small gap between the wall and the building, and a longer one that goes around the building. Unlike SOC, the deter- ministic optimal solution does not depend on the noise le vel and will always tak e the shorter route. Ho wever , with added noise, the risk of collision increases and thus the optimal noisy control is to take the longer route. This task can be alternatively addressed using other plan- ning methods, such as the one proposed by Ono, Williams, and Blackmore (2013), which allow for specification of user’ s acceptable levels of risk using chance constraints. Here we focus on comparing deterministic and stochastic optimal control for motion planning. The amount of noise thus determines whether the optimal solution is go through the risky path or the longer safer path. The state cost in this problem consists of hard constraints that assign infinite cost when either the wall or the b uild- ing is hit. PI control deals with collisions by killing par- ticles that hit the obstacles during Monte Carlo sampling. For iLQG, the local approximations require a twice differ - entiable cost function. W e resolv ed this issue by adding a smooth obstacle-proximity penalty in the cost function. Al- though iLQG computes linear feedback, we tried to impro ve it with a MPC scheme, similar as for PI control. Unfortu- nately , this leads to numerical instabilities in this task, since the system disturbances tend to move the reference trajec- tory through a building when moving from one time step to the next. For MPC with PI control we use a receding horizon of three seconds and perform re-planning at a frequenc y of 15 Hz with N = 2000 sample paths. Both methods are ini- tialized with u t = 0 , ∀ t . iLQG requires approximately 10 3 iterations to con verge with a learning rate of 0 . 5% . Figure 3 (left) shows an example of real trajectory com- puted for low control noise lev el, σ 2 u = 10 − 3 . T o be able to obtain such a trajectory we deactiv ate sensor uncertain- ties in accelerometer , gyroscope, orientation and altimeter . External noise is thus limited to aerodynamic turbulences only . In this case, both iLQG and PI solutions correspond to the shortest path, i.e. go through the gap between the wall and the b uilding. Figure 3 (right) illustrates the solutions ob- tained for larger noise le vel σ 2 u = 1 . While the optimal ref- erence trajectory obtained by iLQG does not change, which results in collision once the real noisy controller is ex ecuted (left path), the PI control solution av oids the b uilding and takes the longer route (right path). Note that iLQG can find both solutions depending on initialization. Howe ver , How- ev er, it will always choose the shortest route, regardless of nearby obstacles. Also, note that the PI controlled unit takes a longer route to reach the target. The reason is that the con- 0 0.05 0.1 0.15 0.2 50 60 70 80 90 Wind velocity (m/s) Cost solution 0 0.05 0.1 0.15 0.2 0 0.2 0.4 0.6 0.8 1 Wind velocity (m/s) Percent of crashes iLQG PI Figure 4: Results: Drunken Quadrotor with wind : For dif ferent wind velocities and fixed control noise σ 2 u = 0 . 5 . (Left) cost of the obtained solutions and (Right) percentage of crashes using iLQG and PI. trol cost R is set quite high in order to reach a good ESS. Alternativ ely , if R is decreased, the optimal solution could reach the target sooner , but at the cost of a decreased ESS. This trade-of f, which is inherent in PI control, can be re- solved by incorporating feedback control in the importance sampling, as presented in Thijssen and Kappen (2015). W e also consider more realistic conditions with noise not limited to act in the control. Figure 4 (a,b) sho ws results in the presence of wind and sensor uncertainty . Panel (a) sho ws how the wind af fects the quality of the solution, resulting in an increase of the variance and the cost for stronger wind. In all our tests, iLQG is not able to bring the quadrotor to the other side. Panel (b) sho ws the percentage of crashes using both methods. Crashes occur often using iLQG con- trol and only occasionally using PI control. W ith stronger wind, the iLQG controlled unit does occasionally not ev en reach the corridor (the unit did not reach the other side but did not crash either). This explains the difference in percent- ages of Panel (b). W e conclude that for multi-modal tasks (tasks where multiple solution trajectories exist), the pro- posed method is preferable to iLQG. 4.2 Scenario II: Holding Patter n The second scenario addresses the problem of coordinating agents to hold their position near a point of interest while keeping a safe range of v elocities and a voiding crashing into each other . Such a problem arises for instance when multiple aircraft need to land at the same location, and simultaneous landing is not possible. The resulting flight formation has been used frequently in the literature (V ´ as ´ arhelyi et al. 2014; How et al. 2008; Y u et al. 2013; Franchi et al. 2012), but always with prior specification of the trajectories. W e show how this formation is obtained as the optimal solution of a SOC problem. Consider the following state cost (omitting time inde xes) r HP ( x ) = M X i =1 exp ( v i − v max ) + exp ( v min − v i ) + exp ( k p i − d k 2 ) + M X j >i C hit / k p i − p j k 2 (6) Figure 5: Holding pattern in the CRATES simulator . T en units coordinate their flight in a circular formation. In this example, N = 10 4 samples, control noise is σ 2 u = 0 . 1 and horizon H = 1 sec. Cost parameters are v min = 1 , v max = 3 , C hit = 20 and d = 7 . En vironmental noise and sensing uncertainties are modeled using realistic parameter values. where v max and v min denote the maximum and minimum v e- locities, respectiv ely , d denotes penalty for deviation from the origin and C hit is the penalty for collision risk of two agents. k · k 2 denotes ` - 2 norm. The optimal solution for this problem is a circular flying pattern where units fly equidistantly from each other . The value of parameter d determines the radius and the av erage velocities of the agents are determined from v min and v max . Since the solution is symmetric with respect to the direc- tion of rotation (clockwise or anti-clockwise), only when the control is ex ecuted, a choice is made and the symmetry is broken. Figure 5 shows a snapshot of a simulation after the flight formation has been reached for a particular choice of parameter values 2 . Since we use an uninformed initial con- trol trajectory , there is a transient period during which the agents or ganize to reach the optimal configuration. The co- ordinated circular pattern is obtained regardless of the initial positions. This behavior is robust and obtained for a large range of parameter values. 2 Supplementary video material is av ailable at http://www. mbfys.ru.nl/staff/v.gomez/uav.html 0 50 100 150 100 150 200 250 300 350 400 450 time state cost (a) N=10 N=10 2 N=10 3 2 4 6 8 10 50 100 150 200 250 number of quadrotors state cost (b) N=10 2 N=10 4 10 −3 10 −2 10 −1 0.9 0.95 1 1.05 1.1 Noise level σ u 2 Cost iLQG/PI (c) 10 −3 10 −2 10 −1 10 0 10 0 10 1 10 2 10 3 Noise level σ u 2 ESS (d) Figure 6: Holding pattern : (a) ev olution of the state cost for different number of samples N = 10 , 10 2 , 10 3 . (b) scaling of the method with the number of agents. For different control noise lev els, (c) comparison between iLQG and PI control (ratios > 1 indicate better performance of PI over iLQG) and (d) Effecti ve Sample Sizes. Errors bars correspond to ten dif ferent random realizations. Figure 6(a) shows immediate costs at dif ferent times. Cost always decreases from the starting configuration until the formation is reached. This value depends on sev eral param- eters. W e report its dependence on the number N of sample paths. For large N , the variances are small and the cost at- tains small v alues at con vergence. Con versely , for small N , there is larger variance and the obtained dynamical config- uration is less optimal (typically the distances between the agents are not the same). During the formation of the pat- tern the controls are more expensi ve. F or this particular task, full con vergence of the path inte grals is not required, and the formation can be achiev ed with a very small N . Figure 6(b) illustrates how the method scales as the num- ber of agents increases. W e report av erages over the mean costs ov er 20 time-steps after one minute of flight. W e var - ied M while fixing the rest of the parameters (the distance d which was set equal to the number of agents in meters). The small variance of the cost indicates that a stable forma- tion is reached in all the cases. As e xpected, larger v alues of N lead to smaller state cost configurations. For more than ten UA Vs, the simulator starts to hav e problems in this task and occasional crashes may occur before the formation is reached due to limited sample sizes. This limitation can be addressed, for example, by using more processing po wer and parallelization and it is left for future work. W e also compared our approach with iLQG in this sce- nario. Figure 6(c) shows the ratio of cost differences after con vergence of both solutions. Both use MPC, with a hori- zon of 2s and update frequency of 15Hz. V alues above 1 in- dicate that PI control consistently outperforms iLQG in this problem. Before conv ergence, we also found, as in the pre- vious task, that iLQG resulted in occasional crashes while PI control did not. The Effecti ve Sample Size (ESS) is shown in Figure 6(d). W e observe that higher control noise lev els result in better exploration and thus better controls. W e can thus conclude that the proposed methodology is feasible for coordinating a large team of quadrotors. For this task, we performed e xperiments with the real plat- forms. Figure 7 shows real trajectories obtained in outdoor −5 −3 −1 1 3 5 −6 −4 −2 0 2 4 6 Meters East Meters North Position for both UAVs UAV0 UAV1 Figure 7: Resulting trajectories of a Holding Pattern experi- ment using two platforms in outdoors conditions. conditions (see also the video that accompanies this paper for an experiment with three platforms). Despite the pres- ence of significant noise, the circular behavior was also ob- tained. In the real experiments, we used a Core i7 laptop with 8GB RAM as base station, which run its o wn ROS messag- ing core and forwarded messages to and from the platforms ov er a IEEE 802.11 2.4GHz network. F or safety reasons, the quadrotors were flown at dif ferent altitudes. 4.3 Scenario III: Cat and Mouse The final scenario that we consider is the cat and mouse sce- nario. In this task, a team of M quadrotors (the cats) has to catch (get close to) another quadrotor (the mouse). The mouse has autonomous dynamics: it tries to escape the cats by moving at v elocity inv ersely proportional to the distance to the cats. More precisely , let p mouse denote the 2 D posi- tion of the mouse, the velocity command for the mouse is Mouse Cats Figure 8: Cat and mouse scenario: (T op-left) four cats and one mouse. (T op-right) for horizon time H = 2 seconds, the four cats surround the mouse forev er and keep rotation around it. (Bottom-left) for horizon time H = 1 seconds, the four cats chase the mouse but (bottom-right) the mouse manages to escape. W ith these settings, the multi-agent system alternates between these two dynamical states. Number of sample paths is N = 10 4 , noise le vel σ 2 u = 0 . 5 . Other parameter v alues are d = 30 , v min = 1 , v max = 4 , v min = 4 and v max mouse = 3 . computed (omitting time index es) as v mouse = v max mouse v k v k 2 , where v = M X i =1 p i − p mouse k p i − p mouse k 2 . The parameter v max mouse determines the maximum velocity of the mouse. As state cost function we use equation (6) with an additional penalty term that depends o n the sum of the distances to the mouse r CM ( x ) = r HP ( x ) + M X i =1 k p i − p mouse k 2 . This scenario leads to se veral interesting dynamical states. For example, for a sufficiently large value of M , the mouse always gets caught (if its initial position is not close to the boundary , determined by d). The optimal control for the cats consists in surrounding the mouse to prev ent collision. Once the mouse is surrounded, the cats k eep rotating around it, as in the previous scenario, but with the origin replaced by the mouse position. The additional video shows examples of other complex beha viors obtained for different parameter settings. Figure 8 (top-right) illustrates this behavior . The types of solution we observe are different for other parameter v alues. For example, for M = 2 or a small time horizon, e.g. H = 1 , the dynamical state in which the cats rotate around the mouse is not stable, and the mouse escapes. This is displayed in Figure 8 (bottom panels) and better il- lustrated in the video provided as supplementary material. W e emphasize that these different behaviors are observed for large uncertainty in the form of sensor noise and wind. 5 Conclusions This paper presents a centralized, real-time stochastic opti- mal control algorithm for coordinating the actions of multi- ple autonomous vehicles in order to minimize a global cost function. The high-level control task is expressed as a Path- Integral control problem that can be solved using efficient sampling methods and real-time control is possible via the use of re-planning and model predictive control. T o the best of our kno wledge, this is the first real-time implementation of Path-Inte gral control on an actual multi-agent system. W e have shown in a simple scenario (Drunken Quadro- tor) that the proposed methodology is more conv enient than other approaches such as deterministic control or iLQG for planning trajectories. In more complex scenarios such as the Holding Pattern and the Cat and Mouse, the pro- posed methodology is also preferable and allows for real- time control. W e observe multiple and complex group be- havior emerging from the specified cost function. Our ex- perimental frame work CRATES has been a ke y de velopment that permitted a smooth transition from the theory to the real quadrotor platforms, with literally no modification of the un- derlying control code. This gi ves e vidence that the model mismatch caused by the use of a control hierarchy is not critical in normal outdoor conditions. Our current research is addressing the following aspects: Large scale parallel sampling − the presented method can be easily parallelized, for instance, using graphics pro- cessing units, as in Williams, Rombokas, and Daniel (2014). Although the tasks considered in this work did not required more than 10 4 samples, we expect that this improv ement will significantly increase the number of application do- mains and system size. Distributed control − we are exploring different dis- tributed formulations that take better profit of the factorized representation of the state cost. Note that the costs functions considered in this work only require pairwise couplings of the agents (to prev ent collisions). Ho we ver , full observabil- ity of the joint space is still required, which is not av ailable in a fully distributed approach. References [Albore et al.] Albore, A.; Peyrard, N.; Sabbadin, R.; and T e- ichteil K ¨ onigsbuch, F . 2015. An online replanning approach for crop fields mapping with autonomous U A Vs. In International Con- fer ence on Automated Planning and Scheduling (ICAPS) . [Augugliaro, Schoellig, and D’Andrea] Augugliaro, F .; Schoellig, A.; and D’Andrea, R. 2012. Generation of collision-free trajec- tories for a quadrocopter fleet: A sequential con vex programming approach. In Intelligent Robots and Systems (IROS) , 1917–1922. [Bernardini, Fox, and Long] Bernardini, S.; Fox, M.; and Long, D. 2014. Planning the behaviour of low-cost quadcopters for surveil- lance missions. In International Confer ence on Automated Plan- ning and Scheduling (ICAPS) . [B ¨ urkle, Segor , and Kollmann] B ¨ urkle, A.; Segor, F .; and K oll- mann, M. 2011. T owards autonomous micro U A V swarms. J. Intell. Robot. Syst. 61(1-4):339–353. [Chanel, T eichteil-Knigsbuch, and Lesire] Chanel, C. C.; T eichteil- Knigsbuch, F .; and Lesire, C. 2013. Multi-target detection and recognition by U A Vs using online POMDPs. In International Con- fer ence on Automated Planning and Scheduling (ICAPS) . [De Nardi and Holland] De Nardi, R., and Holland, O. 2008. Co- ev olutionary modelling of a miniature rotorcraft. In 10th Interna- tional Confer ence on Intelligent A utonomous Systems (IAS10) , 364 – 373. [De Nardi] De Nardi, R. 2013. The QRSim Quadrotors Simulator. T echnical Report RN/13/08, University Colle ge London. [Franchi et al.] Franchi, A.; Masone, C.; Grabe, V .; Ryll, M.; B ¨ ulthoff, H. H.; and Giordano, P . R. 2012. Modeling and con- trol of UA V bearing-formations with bilateral high-level steering. Int. J. Robot. Res. 0278364912462493. [G ´ omez et al.] G ´ omez, V .; Kappen, H. J.; Peters, J.; and Neumann, G. 2014. Policy search for path integral control. In Eur opean Conf. on Mac hine Learning & Knowledge Discovery in Databases , 482– 497. [Guerrero and Lozano] Guerrero, J., and Lozano, R. 2012. Flight F ormation Control . John W iley & Sons. [Hauert et al.] Hauert, S.; Leven, S.; V arga, M.; Ruini, F .; Can- gelosi, A.; Zuf ferey , J.-C.; and Floreano, D. 2011. Reynolds flock- ing in reality with fixed-wing robots: Communication range vs. maximum turning rate. In Intelligent Robots and Systems (IR OS) , 5015–5020. [Hoffmann et al.] Hoffmann, G. M.; Huang, H.; W aslander, S. L.; and T omlin, C. J. 2011. Precision flight control for a multi-v ehicle quadrotor helicopter testbed. Contr ol. Eng. Pract. 19(9):1023 – 1036. [Horowitz, Damle, and Burdick] Horowitz, M. B.; Damle, A.; and Burdick, J. W . 2014. Linear Hamilton Jacobi Bellman equations in high dimensions. arXiv preprint . [How et al.] How , J.; Bethke, B.; Frank, A.; Dale, D.; and V ian, J. 2008. Real-time indoor autonomous vehicle test en vironment. IEEE Contr . Syst. Mag. 28(2):51–64. [Kappen, G ´ omez, and Opper] Kappen, H. J.; G ´ omez, V .; and Op- per , M. 2012. Optimal control as a graphical model inference problem. Mach. Learn. 87:159–182. [Kappen] Kappen, H. J. 2005. Path integrals and symmetry break- ing for optimal control theory . Journal of statistical mechanics: theory and experiment 2005(11):P11011. [Kendoul] Kendoul, F . 2012. Surve y of adv ances in guidance, navi- gation, and control of unmanned rotorcraft systems. J. Field Robot. 29(2):315–378. [Kinjo, Uchibe, and Doya] Kinjo, K.; Uchibe, E.; and Doya, K. 2013. Ev aluation of linearly solv able Markov decision process with dynamic model learning in a mobile robot na vigation task. F r ont. Neur orobot. 7:1–13. [Kushle yev et al.] Kushleye v , A.; Mellinger , D.; Powers, C.; and Kumar , V . 2013. T ow ards a swarm of agile micro quadrotors. Auton. Robot. 35(4):287–300. [Mahony , Kumar , and Corke] Mahony , R.; Kumar , V .; and Corke, P . 2012. Multirotor aerial vehicles: Modeling, estimation, and control of quadrotor. IEEE Robotics & Automation Magazine 20–32. [Meyer et al.] Meyer , J.; Sendobry , A.; Kohlbrecher , S.; and Klin- gauf, U. 2012. Comprehensive Simulation of Quadrotor UA Vs Using R OS and Gazebo. Lectur e Notes in Computer Science 7628:400–411. [Ono, W illiams, and Blackmore] Ono, M.; Williams, B. C.; and Blackmore, L. 2013. Probabilistic planning for continuous dy- namic systems under bounded risk. J . Artif. Int. Res. 46(1):511– 577. [Quintero, Collins, and Hespanha] Quintero, S.; Collins, G.; and Hespanha, J. 2013. Flocking with fix ed-wing U A Vs for distributed sensing: A stochastic optimal control approach. In American Con- tr ol Conference (A CC) , 2025–2031. [Rawlik, T oussaint, and V ijayakumar] Rawlik, K.; T oussaint, M.; and V ijayakumar , S. 2013. Path integral control by reproducing kernel Hilbert space embedding. In T wenty-Thir d International Joint Confer ence on Artificial Intelligence , 1628–1634. AAAI Press. [Reynolds] Reynolds, C. W . 1987. Flocks, herds and schools: A dis- tributed behavioral model. SIGGRAPH Comput. Graph. 21(4):25– 34. [Rombokas et al.] Rombokas, E.; Theodorou, E.; Malhotra, M.; T odorov , E.; and Matsuoka, Y . 2012. T endon-driv en control of biomechanical and robotic systems: A path integral reinforcement learning approach. In International Confer ence on Robotics and Automation , 208–214. [Shim, Kim, and Sastry] Shim, D. H.; Kim, H. J.; and Sastry , S. 2003. Decentralized nonlinear model predictive control of mul- tiple flying robots. In IEEE conference on Decision and control (CDC) , volume 4, 3621–3626. [Stulp and Sigaud] Stulp, F ., and Sigaud, O. 2012. Path integral policy impro vement with cov ariance matrix adaptation. In Inter- national Confer ence Machine Learning (ICML) . [Symington et al.] Symington, A.; De Nardi, R.; Julier , S.; and Hailes, S. 2014. Simulating quadrotor U A Vs in outdoor scenar- ios. In Intelligent Robots and Systems (IROS) , 3382–3388. [Theodorou, Buchli, and Schaal] Theodorou, E.; Buchli, J.; and Schaal, S. 2010. A generalized path inte gral control approach to reinforcement learning. J. Mach. Learn. Res. 11:3137–3181. [Thijssen and Kappen] Thijssen, S., and Kappen, H. J. 2015. Path integral control and state-dependent feedback. Phys. Rev . E 91:032104. [T odorov and Li] T odorov , E., and Li, W . 2005. A generalized it- erativ e LQG method for locally-optimal feedback control of con- strained nonlinear stochastic systems. In American Control Con- fer ence (ACC) , 300–306 vol. 1. IEEE. [T odorov] T odorov , E. 2006. Linearly-solvable Marko v decision problems. In Advances in neural information processing systems (NIPS) , 1369–1376. [T urpin, Michael, and Kumar] T urpin, M.; Michael, N.; and Kumar , V . 2012. Decentralized formation control with v ariable shapes for aerial robots. In International Conference on Robotics and Au- tomation (ICRA) , 23–30. [V an Den Broek, Wie gerinck, and Kappen] V an Den Broek, B.; W iegerinck, W .; and Kappen, H. J. 2008. Graphical model infer - ence in optimal control of stochastic multi-agent systems. J. Artif. Intell. Res. 32:95–122. [V ´ as ´ arhelyi et al.] V ´ as ´ arhelyi, G.; V ir ´ agh, C.; Somorjai, G.; T arcai, N.; Szorenyi, T .; Nepusz, T .; and V icsek, T . 2014. Outdoor flocking and formation flight with autonomous aerial robots. In Intelligent Robots and Systems (IR OS) , 3866–3873. [W illiams, Rombokas, and Daniel] Williams, G.; Rombokas, E.; and Daniel, T . 2014. GPU based path integral control with learned dynamics. In Autonomously Learning Robots - NIPS W orkshop . [Y u et al.] Y u, B.; Dong, X.; Shi, Z.; and Zhong, Y . 2013. Formation control for quadrotor swarm systems: Algorithms and experiments. In Chinese Contr ol Conference (CCC) , 7099–7104.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment