Audio to Body Dynamics


Authors: Eli Shlizerman, Lucio M. Dery, Hayden Schoen

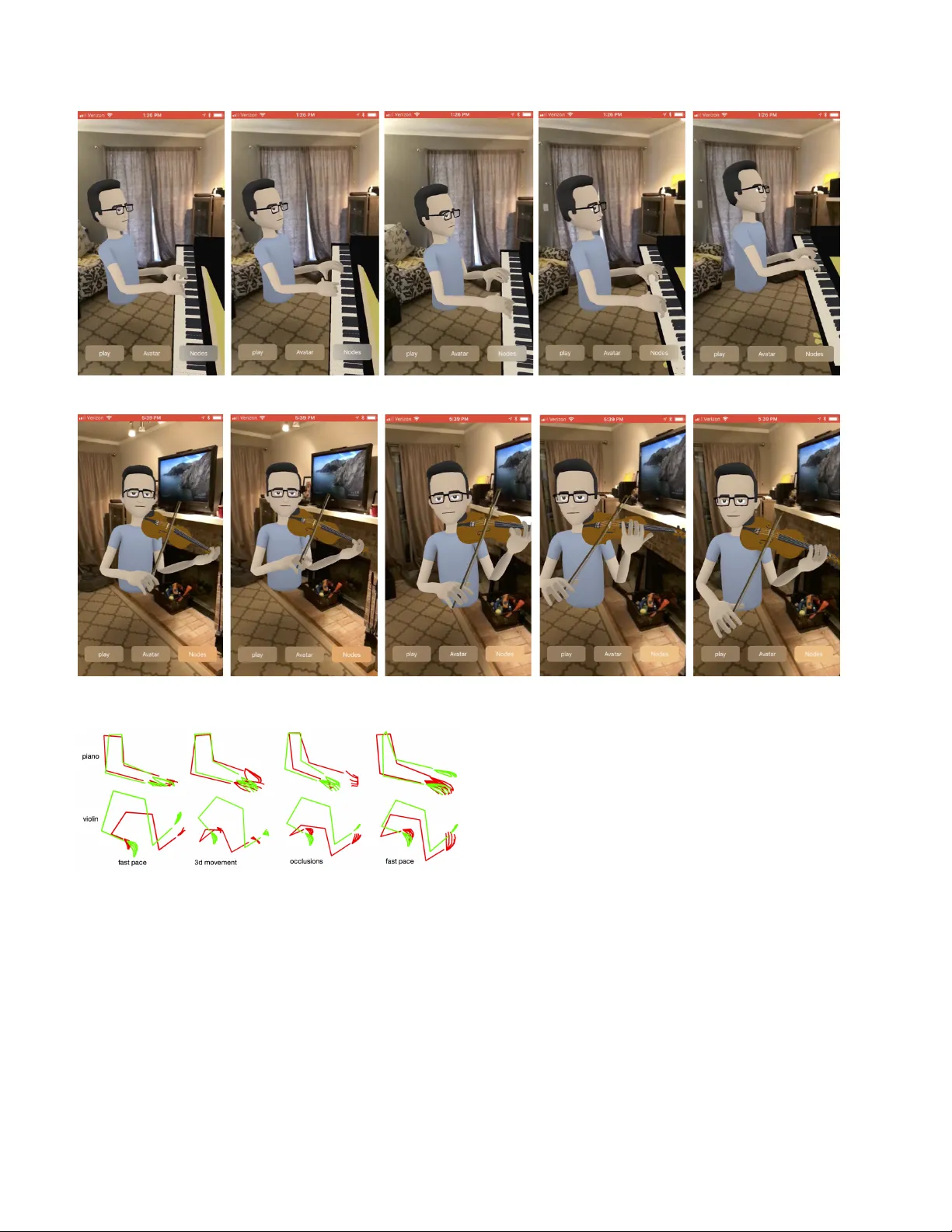

Eli Shlizerman (1, 3), Lucio Dery (1, 2), Hayden Schoen (1), and Ira Kemelmacher-Shlizerman (1, 3)
(1) Facebook Inc., (2) Stanford University, (3) University of Washington

Figure 1. Given violin music (an audio signal) as input, our method (1) predicts a body skeleton and (2) uses the skeleton to animate an avatar. See the videos in the supplementary material (with audio on).

Abstract

We present a method that takes as input an audio recording of violin or piano playing and outputs a video of skeleton predictions, which are further used to animate an avatar. The key idea is to create an animation of an avatar that moves their hands similarly to how a pianist or violinist would, just from audio. Achieving fully detailed, correct arm and finger motion is a goal; however, it is not clear whether body movement can be predicted from music at all. In this paper, we present the first result showing that natural body dynamics can be predicted at all. We built an LSTM network that is trained on violin and piano recital videos uploaded to the Internet. The predicted points are applied onto a rigged avatar to create the animation.

1. Introduction

"All the same it is being said everywhere that I played too softly, or rather, too delicately for people used to the piano-pounding of the artists here." (Frederic Chopin)

When pianists play a musical piece on a piano, their body reacts to the music. Their fingers strike piano keys to create music. They move their arms to play in different octaves. Violin players draw the bow across the strings with one hand and touch lightly or pluck the strings with the other hand's fingers. Faster bowing produces a faster musical pace. An interesting question is: can body movement be predicted computationally from a music signal? This is a highly challenging computational problem.
We need a good training set of videos, we need to be able to accurately estimate body poses in those videos, and we need an algorithm that can find the correlation between music and body in order to predict movement.

Figure 2. Method overview: (a) our method takes an audio signal as input, e.g., piano music, (b) which is fed into our LSTM network to predict body movement points, (c) which in turn are used to animate an avatar and show it playing the input music on a piano (the avatar and piano are synthetic models, while the rest is a real apartment background).

Human body dynamics are complex, particularly given the quality needed to learn their correlation with audio. Traditionally, state-of-the-art prediction of natural body movement from video sequences (not audio) relied on motion capture sequences recorded in a laboratory. In our scenario, for example, we would need to bring a pianist to a laboratory and have them play for several hours with sensors attached to their fingers and body joints. Such an approach is hard to execute in practice and does not generalize easily. If we could instead leverage publicly available online videos of highly skilled people playing, we could potentially obtain a higher degree of diversity in the data. Until recently, though, estimating accurate body pose from videos was not possible. This year, several methods appeared that may allow us to learn from data "in the wild". In parallel, a number of methods [39, 41, 26] showed remarkable results for lip sync from speech: given audio of a person saying a sentence, they showed that it is possible to predict how that person's mouth landmarks would move while saying the words. These two advances inspired us to tackle the challenging problem of predicting body and finger movement just from music. The goal of this paper is to explore whether this is possible at all, and whether we can create natural and logical body dynamics from audio.
Note that we do not use information such as MIDI files, from which we could potentially learn the correlation between exact piano keys and music. We focus on creating an avatar that moves their hands and fingers like a pianist would. An interesting complementary direction would be to also learn the correlation between music and piano keys by training on MIDI files, and then to combine it with the method described in this paper.

We consider two sets of data, piano and violin recitals (Fig. 3). We collected videos for each of the two categories and processed them by detecting the upper body and fingers in each frame of each video (provided the points are visible). There is a total of 50 points per frame: 21 points for the fingers of each hand and 8 points for the upper body.

Figure 3. Training data: Example frames from the violin and piano sets and their corresponding example keypoints.

In addition to predicting the points, one of our goals is to visualize them via the animation of an avatar that moves naturally according to the given audio input. We solve this problem in two steps. First, we build a Long Short-Term Memory (LSTM) network that learns the correlation between audio features and body skeleton landmarks. Second, we automatically animate an avatar using the predicted landmarks. The final output is an avatar that moves according to the audio input. We trained a separate neural network for each set, i.e., one network for violin and another for piano.

The output skeletons are promising and produce interesting body dynamics. We encourage the reader to watch the supplementary videos with audio turned on to experience the results.

2. Related Work

The correlation between speech and facial movements has been researched extensively, beginning with the classical works [6] and [7], and most recently showing remarkable results in generating high-quality videos of a face talking just from audio [39] and in animating avatars using speech [41, 26]. These three latter papers include extensive state-of-the-art summaries on facial animation from speech.

As far as we are aware, animating body pose just from music has not been explored. There is, however, a large body of work in three related areas: behavioral studies of how people's bodies react to music and sounds; computer vision, learning, and graphics research on creating and predicting natural body pose changes, for example learning walking and dancing styles from videos (without audio); and multimodal studies that combine audio and video input to improve the recognition of facial expressions and body poses. We describe these related works below.

Multimodal studies [24] have shown that combining audio and visual inputs produces more accurate results than either modality alone [47]. For example, facial expression recognition may benefit from also getting voice input, since the emotion, pitch, and loudness of the message may help recognition [10, 36]. Wang et al. showed that body pose estimation [33] works better with audio input [45]. Multi-speaker tracking [1] and combining modalities to identify the intent to interact [35] are additional examples.

In dyadic communication, the relationship between speech and body rhythms has been investigated, e.g., [13, 43], with interesting results showing that certain speech characteristics are correlated with body movement frequencies [5]. Different emotional scenarios had different types of movements and interactions, e.g., regular social interaction [14] vs. interview [5].
[18] and [42] investigated whether there is a correspondence between music and the way pianists perform it, and found that there is consistency in the way we perceive music-movement correspondences. They also demonstrate that correspondences emerge flexibly, i.e., that the same musical excerpt may correspond to different variants of movement. We find these studies an inspiration for our idea that body movement can possibly be predicted from music and speech.

There is a large body of work on the use of LSTMs to predict and edit future poses given a short video of movement or even a single photo, e.g., [15, 31, 17] and [21, 27, 44, 9]. State-of-the-art body pose estimation and recognition techniques typically use CNNs, e.g., [23, 2, 22, 46] and [19]. Earlier works showed that it is possible to learn and predict motion style [29] from videos and further animate the characters in real time [16]. These works did not use audio as their input.

A complementary area of research is predicting audio from video, the inverse of our goal of predicting video from audio. Examples include estimating speech from face videos with the goal of lip reading [11], and predicting which sounds an object makes from video [12, 34] or from a photo [38]. Finally, [28] explored creating audio-driven video montages.

While the related works do not directly address animating body pose just from speech or music, they provide sufficient evidence that some correlation exists. This encourages us to explore estimating such a correlation. We do not expect to reproduce exact body motion, and in fact assume that the transformation from speech or music to body motion is not unique, but we do aim to create natural-looking body poses that make sense for the music or speech.

3. Body Dynamics Representation

There are a variety of classes of videos in which the correlation between audio and video is interesting.
E.g., videos of dance, people giving lectures, playing musical instruments, or stand-up comedy, to name a few. We chose to experiment with several of those. Below we describe which datasets we use, how we process the audio and video signals, and how the training data is prepared and represented. The training and testing algorithm is described in Section 4.

3.1. Data sets

We have experimented with data from violin recitals and piano recitals. The full list of URLs is available in the supplementary material, and example URLs are given in the footnote below.¹ Figure 3 shows example frames from each of the sets and their corresponding keypoints.

All the videos used were downloaded from the Internet and are videos "in the wild". We made sure to select videos that are favorable to the processing algorithms. Specifically, the intuition behind our choice of videos was to have clear, high-quality music sound, no background noise, no accompanying instruments, and a solo performance. On the video quality side, we searched for videos with high resolution, a stable fixed camera, and bright lighting. We preferred longer videos for continuity. Our selected videos range from 3 min to 50 min. We found that recitals or single-person shows are optimal sets that satisfy the above goals. A total of 3.6 hours of violin recitals and 4.4 hours of piano recitals was collected.

3.2. Audio Processing

MFCC features have been shown in previous work to be successful at identifying and classifying different musical instruments. E.g., [30] showed that a network trained on PCA of MFCC coefficients of flute, piano, and violin can recognize which instruments are used. It was also shown that MFCCs perform well at capturing the variation in speech [48]. We follow the feature computation process described in [39], with several modifications to adjust to the frame rate of our datasets. Specifically, we compute the features on stereo audio with a 44.1 kHz sample rate, perform RMS normalization to 0 dB using FFMPEG [3], and set the window length to 1000/fps ms with fps = 24, i.e., about 41.67 ms. We synchronized all videos to have the same audio and video rates. The final audio feature size is 28-D, which includes the 13-D MFCC vector, its temporal derivative, and the log mean energy for volume.

¹ Violin recital: https://www.youtube.com/watch?v=BP65MiYNh50, and Piano recital: https://www.youtube.com/watch?v=SD1nhx9qYH0

Figure 4. Example failure cases of keypoint detectors (row 1 for the violin set, and row 2 for the piano set), which were eliminated automatically in our preprocessing step.

3.3. Keypoints Estimation

We are interested in two types of keypoints: body and hand fingers. Estimating keypoints on videos in the wild is typically challenging due to the large variation in camera, lighting, and fast movement, which can differ from the benchmark videos on which the algorithms are evaluated. Recently, however, a number of methods appeared that handle in-the-wild videos much better. We came up with a process that allows us to get sufficiently accurate keypoints, as described below.

We begin by running a video through three libraries: OpenPose, which provides face, body, and hand keypoints [37, 46, 8]; Mask R-CNN [19]; and the DeepFace face recognition algorithm [40]. These three libraries perform well on benchmarks, but on our videos in the wild each fails on some frames. We noticed, however, that failures and successes are somewhat complementary across the algorithms. Thus, per video we select a single frame and a detection box that includes the person of interest as our reference frame. The person's face in the box gets a signature from the face recognition algorithm.
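Checking each later frame against this reference might look like the sketch below. It is illustrative only, under assumptions that are ours rather than the paper's. The face signature is treated as a fixed-length embedding vector and compared by cosine similarity. Both thresholds (`max_shift_px`, `min_similarity`) are hypothetical parameters, because the text defers its thresholds to the Experiments section.

```python
import numpy as np


def cosine_similarity(a, b):
    """Cosine of the angle between two embedding vectors."""
    a, b = np.asarray(a, dtype=float), np.asarray(b, dtype=float)
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))


def matches_reference(box_center, face_signature, ref_center, ref_signature,
                      max_shift_px, min_similarity):
    """Keep a frame only if its detection box stays close to the reference box
    and its face signature matches the reference person.
    Both thresholds are hypothetical, not values from the paper."""
    shift = np.linalg.norm(np.asarray(box_center, dtype=float)
                           - np.asarray(ref_center, dtype=float))
    same_place = bool(shift <= max_shift_px)
    same_person = cosine_similarity(face_signature, ref_signature) >= min_similarity
    return same_place and same_person
```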
Each subsequent frame's box is automatically eliminated if 1) it is too far from the location of the box in the reference frame, 2) the face signature does not match, or 3) the $L_2$ distance between points in consecutive frames is too large. See the thresholds in the Experiments section. Given the box, we use the hand points from OpenPose that appear in that box. We further choose whether to use the body points from Mask R-CNN or from OpenPose, based on the confidence and existence of the points (in case some of the points are not detected due to occlusions or mis-detection). If some points are still missing, we exclude that frame from training. See Figure 4 for example frames that were eliminated from training.

3.4. Keypoints Motion Factorization

Consider a video with keypoint estimates per frame, resulting in a time series of points. The motion of the keypoints across frames is a product of several key components:

$$V_{\text{frame}} = M_{\text{camera}}\Big(M_{s,T,R}\big(V_{\text{person}} + V_{\text{audio}}\big)\Big) \qquad (1)$$

where $M_{\text{camera}}$ is the motion due to the camera, e.g., zooming in and out and viewpoint change; $M_{s,T,R}$ is the rigid transformation of the person (scale $s$, translation $T$, rotation $R$); $V_{\text{audio}}$ is the transformation of the person's body due to audio, e.g., drawing the bow and striking piano keys; and $V_{\text{person}}$ is the non-rigid transformation of the person's body that is not correlated with audio, e.g., the person pacing on stage while playing violin. We assume a fixed, stable camera, and we solve for scale, translation, and rotation in 2D by choosing a reference points configuration. For simplicity, we assume that $V_{\text{person}}$ is zero. Our goal is then to predict $V_{\text{audio}}$. To reduce the dimensionality and noise of the data, we compute PCA coefficients of the keypoints as follows.

PCA on keypoints: Given the aligned points per frame in each video, we collect all points into a single matrix of size $2p \times f$, where $p$ is the number of points per frame, each point has 2 dimensions, and $f$ is the total number of frames in a dataset. Each set of points is reshaped into a 1-D vector of length $2p$.
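A minimal NumPy sketch of the alignment and PCA steps is given below. Where the text is silent, the choices are ours:

- The 2D scale, rotation and translation are solved with Umeyama's least-squares similarity method against any fixed (p, 2) reference configuration.
- Each column is laid out as x0, y0, x1, y1, and so on.
- The PCA keeps the fewest components that reach the 90% variance threshold, and the 4× linear upsampling is applied afterwards. Both of these values come from the paper.

```python
import numpy as np


def similarity_align(points, reference):
    """Map one frame's (p, 2) keypoints onto a (p, 2) reference configuration
    with the least-squares 2D similarity transform (Umeyama's method)."""
    mu_x, mu_y = points.mean(axis=0), reference.mean(axis=0)
    xc, yc = points - mu_x, reference - mu_y
    cov = yc.T @ xc / len(points)                      # 2x2 cross-covariance
    u, sig, vt = np.linalg.svd(cov)
    d = np.diag([1.0, np.sign(np.linalg.det(u) * np.linalg.det(vt))])  # no reflections
    rot = u @ d @ vt
    scale = np.trace(np.diag(sig) @ d) / (xc ** 2).sum(axis=1).mean()
    trans = mu_y - scale * rot @ mu_x
    return scale * points @ rot.T + trans


def stack_frames(frames):
    """List of f aligned (p, 2) arrays -> (2p, f) matrix, one column per frame."""
    return np.stack([frame.reshape(-1) for frame in frames], axis=1)


def fit_pca(matrix, var_fraction=0.90):
    """PCA over frames (columns). Keeps the fewest components explaining at least
    var_fraction of the variance. Returns mean (2p, 1), basis (2p, k), coeffs (k, f)."""
    mean = matrix.mean(axis=1, keepdims=True)
    centered = matrix - mean
    u, sig, _ = np.linalg.svd(centered, full_matrices=False)
    explained = np.cumsum(sig ** 2) / np.sum(sig ** 2)
    k = min(int(np.searchsorted(explained, var_fraction)) + 1, len(sig))
    basis = u[:, :k]
    return mean, basis, basis.T @ centered


def upsample_linear(coeffs, factor=4):
    """Linearly interpolate (k, f) coefficients to `factor` samples per frame;
    the first and last original frames are kept as end points."""
    t_old = np.arange(coeffs.shape[1])
    t_new = np.arange((coeffs.shape[1] - 1) * factor + 1) / factor
    return np.stack([np.interp(t_new, t_old, row) for row in coeffs])
```

For 24 fps video, `upsample_linear(coeffs, 4)` yields 96 coefficient samples per second.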
We compute PCA on the final matrix across all frames and reduce the dimensionality to capture 90% of the variance. Each frame is then represented by its PCA coefficients. This reduces noise as well as dimensionality. As a final step, we upsample the PCA coefficients linearly to 4 × video fps. Exact numbers per set and implementation details are presented in Section 6. The resulting PCA coefficients (representing body motion) and MFCC coefficients (representing audio) are used in the next steps.

4. Audio to Body Keypoints Prediction

Our goal is to learn a correlation between audio features and body movements. For this, we built an LSTM (Long Short-Term Memory) network [20]. It was recently shown that LSTMs can be used successfully to predict lip sync, e.g., [39] (that paper also gives a clear explanation of LSTM vs. RNN vs. HMM models, and of the use of an LSTM with time delay). Our architecture is visualized in Fig. 5. We chose a unidirectional single-layer LSTM with time delay. $x_i$ is the audio MFCC feature vector at time instance $i$, $y_i$ are the PCA coefficients of the body keypoints, and $m$ is the memory. We also add a fully connected layer 'fc', which we found to increase performance. We experimented with other variations of the architecture that did not improve the results. These include using the H norm for the loss, a global time delay, a multi-layer LSTM, and training on the keypoints directly rather than on the PCA components.

Figure 5. Architecture of our points prediction LSTM. $x_i$ represent the audio features, and $y_j$ represent the corresponding keypoints.

We used a hidden state of size 200 (the length of $m$), truncated backpropagation through time with 400 time steps, a time delay of 5, dropout of 0.4, and a learning rate of 5e-3. The number of PCA components we typically use is 10. We ran the training for 300 epochs. The network is implemented in Caffe2 [25] and uses the ADAM optimizer.
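The NumPy sketch below illustrates the prediction model in two parts.

- Input assembly: each per-frame input vector $x_i$ is built from 13 MFCCs and the log mean energy, plus their temporal derivatives. Counting 13 + 13 + 1 gives only 27 dimensions, so adding a derivative of the energy term is our assumption to reach the stated 28.
- Prediction: a unidirectional single-layer LSTM with memory $m$, a fully connected output layer and a time delay maps these inputs to PCA coefficients.

This is not the Caffe2 model. The weights are random, training (truncated backpropagation, dropout, ADAM) is omitted, and the gate layout and initialization are assumptions. Defaults follow the values stated above: hidden size 200, 10 PCA outputs and a delay of 5 steps.

```python
import numpy as np


def assemble_features(mfcc13, log_energy):
    """(T, 13) MFCCs + (T,) log mean energy -> (T, 14), concatenated with their
    temporal derivatives -> (T, 28). The energy derivative is an assumption."""
    base = np.column_stack([mfcc13, log_energy])
    return np.hstack([base, np.gradient(base, axis=0)])


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


class TimeDelayLSTM:
    """Unidirectional single-layer LSTM + fully connected layer, forward pass only."""

    def __init__(self, n_in=28, n_hidden=200, n_out=10, delay=5, seed=0):
        rng = np.random.default_rng(seed)
        s = 1.0 / np.sqrt(n_hidden)
        # Rows stacked as [input gate, forget gate, candidate, output gate].
        self.W = rng.uniform(-s, s, size=(4 * n_hidden, n_in + n_hidden))
        self.b = np.zeros(4 * n_hidden)
        self.W_fc = rng.uniform(-s, s, size=(n_out, n_hidden))
        self.b_fc = np.zeros(n_out)
        self.n_hidden, self.delay = n_hidden, delay

    def forward(self, x):
        """x: (T, n_in). Returns (T - delay, n_out); row t estimates the pose at
        time t and is emitted only after audio up to time t + delay was read."""
        h = np.zeros(self.n_hidden)   # hidden state
        m = np.zeros(self.n_hidden)   # memory (cell state)
        outputs = []
        for x_t in x:
            i, f, g, o = np.split(self.W @ np.concatenate([x_t, h]) + self.b, 4)
            m = sigmoid(f) * m + sigmoid(i) * np.tanh(g)
            h = sigmoid(o) * np.tanh(m)
            outputs.append(self.W_fc @ h + self.b_fc)   # 'fc' layer
        return np.array(outputs)[self.delay:]           # drop the first `delay` steps
```

During training, `forward(x)` would be compared with the targets `y[:len(x) - delay]`. As a result, every pose is predicted only after the network has heard `delay` further audio frames.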
Both input and output are normalized by subtracting the mean and dividing by the variance. In Fig. 6 we show the improvement in PCA coefficient prediction as a function of epochs for the piano set.

5. Body Keypoints to Avatar

Once the keypoints are estimated, we animate an avatar using the points. We have built an augmented reality application using ARKit that runs on the phone in real time. Given a sequence of 2D predicted points and a body avatar, the movements are applied onto the avatar. Below we describe the specific details.

The avatars we have used are 3D body models with a body bone rig. We first initialize the rig by aligning the predicted points to 3D world coordinates. We do this by estimating the average left and right shoulder points across all frames and calculating a rigid transformation to the avatar. Then we consider each of the body, arms, head, and fingers separately. If some of the body, arms, head, or fingers are not provided, only the provided parts are animated. Finally, we apply a root rotation offset to match the pose angle of the piano. The z depth for the piano is calculated from the wrists' x position: the further left the wrist is, the closer it gets to the camera. Details on how we rig the violin are given below.

Body: An IK chain was created with the root node defined as the average of the left and right hip, connected to the average of the left and right shoulder. This defines the spine. We then estimate the average spine length across the frames and scale the avatar's spine accordingly.

Arms: We defined an IK chain in which the wrist point serves as the reference point. Next, we calculate an offset that defines how much the arms point forward (out of the fixed plane). The length of the forearm determines the offset.
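One way to turn the forearm's projected length into this forward offset is orthographic foreshortening, measured against the longest forearm seen across all frames. At full length the arm lies in the image plane, and at zero length it points straight out of it. The mapping in between is our assumption, not something the text specifies:

- the out-of-plane angle is arccos of the length ratio;
- the depth offset is $\sqrt{L_{\max}^2 - L^2}$.

A single 2D view cannot distinguish toward-camera from away-from-camera, so the offset is treated as pointing forward.

```python
import numpy as np


def forearm_out_of_plane(elbows, wrists):
    """elbows, wrists: (F, 2) 2D positions of one arm over F frames.

    Returns (angles, offsets): the angle in radians between forearm and image
    plane, and the wrist's forward depth offset relative to the elbow, in the
    units of the input points. Assumes the true forearm length equals the
    longest projected length and an orthographic camera."""
    lengths = np.linalg.norm(wrists - elbows, axis=1)
    max_len = lengths.max()
    if max_len == 0:
        raise ValueError("forearm never visible with non-zero length")
    ratio = np.clip(lengths / max_len, 0.0, 1.0)
    angles = np.arccos(ratio)                        # 0 = in plane, pi/2 = perpendicular
    offsets = max_len * np.sqrt(1.0 - ratio ** 2)    # forward depth of the wrist
    return angles, offsets
```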
First, the maximum forearm length is calculated across all frames. If the forearm appears at its maximum length, the reference lies on the original 2D plane and the arm is straight; if the length is zero, the arm is perpendicular to the source plane.

Hands/Fingers: Hand rotation was determined from the root joint of the pinkie finger and the root joint of the pointer finger. E.g., if on the right hand the pointer joint is to stage left of the pinkie joint, then the hand must be rotated to the avatar's right with the palm facing up. The palm would face down if the opposite were true. Additionally, for each finger, the angle between the reference point and the root was calculated and applied.

Rigging the violin: The violin was rigged using four points as constraint references. The violin's position was constrained to a point attached to the head, and its rotation was determined by a look-at constraint attached to the left hand. The bow's position is constrained to a point on the right hand, and its rotation uses a look-at constraint attached to the bridge of the violin.

6. Experiments

In this section, we discuss our experiments, implementation details, comparisons, and evaluations.

Running times and hardware: Our preprocessing, training, and test runs are done on a GPU server with 8 NVIDIA M40 GPUs, two 12-core CPUs, and 256 GB of RAM. Training for 200 epochs on the 3.6-hour violin set took 1.5 hours (about 30 s per epoch). The piano dataset is 4.4 hours long. Running the test set and animating the avatar happen in real time. Keypoints on the training set are computed at 10 fps with OpenPose and at 2 fps with Mask R-CNN.

Data: We have experimented with violin recitals and piano recitals. All videos were synchronized to 24 fps. We randomly selected 20% of each dataset for validation and 80% for training.
Each set was trained and tested completely independently, i.e., we have a violin net and a piano net. We removed approximately 10% of the frames in the videos because their keypoints were not predicted accurately (based on the procedure described in Sec. 3). After removal, the violin set contains about 324,000 frames and the piano set about 414,000. A frame is removed if its points are farther from those in the previous frame than 10% of the frame width (typically 20 pixels). Test audio was not part of the training or validation sets.

Figure 6. Evolution of testing of the first PCA mode (piano) as a function of epochs.

| Method | Train (px) | Valid (px) | Test (px) |
|---|---|---|---|
| Frames not dropped | 12.44 | 12.09 | 18.31 |
| 50% training data | 7.22 | 11.18 | 9.38 |
| PCA coeff = 5 | 6.86 | 7.04 | 9.35 |
| Dropout = 0.6 | 7.25 | 7.51 | 9.31 |
| Seq. length = 500 | 7.40 | 6.95 | 9.24 |
| No time delay | 7.32 | 7.76 | 9.19 |
| Upsample 6x | 7.07 | 6.95 | 9.18 |
| PCA coeff = 30 | 7.39 | 7.12 | 9.13 |
| Seq. length = 200 | 6.88 | 6.75 | 9.13 |
| Dropout = 0 | 7.32 | 7.71 | 9.03 |
| Upsample 2x | 7.33 | 9.09 | 8.66 |
| Final ours | 7.35 | 6.58 | 8.31 |

Table 1. Violin net: Comparison of errors in training, validation, and testing with different parameter choices. Errors are given in pixels (lower is better). Ours is: PCA coeff = 15, hidden variables = 200, seq. length = 60, batch size = 100, learning rate = 2e-3, time delay = 24 ms, dropout = 0.15, upsample = 4x.

Evaluations: We have experimented with different parameter choices in our network and provide comparisons in Tables 1 and 2. To find the optimal parameters specified in Sec. 4, we ran a hyperparameter search. The errors in the tables are given in pixels, where lower is better. To achieve good results, it is important to filter out all bad frames in the training data (wrong skeleton, wrong person detection, wrong person recognition); errors drop significantly just from this filtering. If we use half of the training data, the training error improves but the test error is larger.
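The frame-removal rule and the pixel errors reported in the tables can be sketched as follows. The text spells out neither definition, so both are assumptions. The jump is taken as the largest displacement of any single keypoint between consecutive frames. The error is taken as the mean Euclidean distance per keypoint.

```python
import numpy as np


def jump_mask(keypoints, frame_width, max_jump_fraction=0.10):
    """keypoints: (F, p, 2) per-frame 2D points in pixels.
    Returns a boolean mask: False where some point moved more than
    max_jump_fraction * frame_width since the previous frame.
    Note: the frame after an outlier also fails, since its points jump back."""
    jumps = np.zeros(len(keypoints))
    jumps[1:] = np.linalg.norm(np.diff(keypoints, axis=0), axis=2).max(axis=1)
    return jumps <= max_jump_fraction * frame_width


def mean_pixel_error(pred, truth):
    """Mean Euclidean distance in pixels between predicted and reference
    keypoints, both shaped (F, p, 2)."""
    return float(np.linalg.norm(pred - truth, axis=-1).mean())
```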
By using fewer PCA coefficients we can fit the training data better, but the test error is larger than when using more coefficients. Using dropout does not improve results in our case. Time delay helps improve results. We also experimented (Fig. 7) with giving a random sequence as test audio to both nets; as expected, the test showed no correlation and no movement was predicted.

Figure 7. Predicting a random sequence with the violin net. The prediction is stationary, meaning there is no correlation between the signal and the violin net.

| Method | Train (px) | Valid (px) | Test (px) |
|---|---|---|---|
| Frames not dropped | 17.15 | 14.64 | 9.49 |
| 50% training data | 6.94 | 6.65 | 8.87 |
| Interpolated missing data | 7.05 | 6.41 | 8.14 |
| Upsample 2x | 7.18 | 6.95 | 7.98 |
| No time delay | 6.83 | 6.04 | 7.48 |
| PCA coeff = 30 | 6.66 | 6.79 | 7.42 |
| Dropout = 0.6 | 6.76 | 5.97 | 7.38 |
| Dropout = 0 | 6.77 | 6.07 | 7.28 |
| Seq. length = 200 | 6.76 | 6.92 | 7.06 |
| PCA coeff = 5 | 6.27 | 7.24 | 6.94 |
| Final ours | 6.52 | 6.06 | 6.84 |

Table 2. Piano net: Comparison of errors in training, validation, and testing with different parameter choices. Errors are given in pixels (lower is better). Ours is: PCA coeff = 15, hidden variables = 200, seq. length = 60, batch size = 100, learning rate = 1e-3, time delay = 24 ms, dropout = 0.1, upsample = 4x.

Results: In Figures 8 and 9 we present representative results. We show the predicted keypoints for different body poses, as well as the original frame for context. The keypoints are overlaid on the ground-truth points for visual comparison. Note that we do not expect the points to be exactly the same; rather, we expect the fingers and hands to produce a similar, satisfactory movement, which was the goal of this paper. The ground truth in our case is the output of a 2D body pose detector, which can be mistaken. Finally, we show failure cases in Fig. 12, where row 1 shows piano and row 2 violin. These show the limitations of our system: it is currently trained on 2D poses, while the actual poses in the training videos are 3D.
Consequently, occlusions and invisible points are not predicted well. In high-pace, high-frequency parts of the videos, the body pose detectors may make mistakes, and the same happens with motion blur. The network then learns these mistakes as the behavior for high-frequency audio.

Figures 10 and 11 show screenshots of our avatar animation based on predicted points. The piano, violin, and avatar are synthetic objects placed in a real scene (augmented with ARKit).

Videos: We recommend watching the supplementary video with audio on. In addition to showing the results, we show what an animation looks like when music and keypoints do not match. As the video shows, the result looks very unnatural, which underlines the importance of the network.

7. Discussion and Limitations

We have proposed a new hypothesis that body gestures can be predicted from an audio signal, and we showed promising initial results. We believe that the correlation between audio and the human body is very promising for a variety of applications in VR/AR and recognition. It was shown previously that mouth animation can be done just from audio, and in this paper we show initial results on body animation. We hope it will open up further research. There are a number of limitations and many extensions that would be interesting to explore.

One direction is to enable 3D movement. Currently we use OpenPose and Mask R-CNN to estimate keypoints, and both are 2D point estimators. Given a 3D body keypoint estimator, e.g., VNect [32] or SMPL [4], our approach can potentially be extended to handle 3D motion as well and to allow more diverse human modeling.

Another direction is to predict occluded keypoints. Currently, we can only predict the visible points, and if a training frame does not have all the points, we ignore that frame.
It would be interesting to see whether the audio-to-keypoints network can be extended to predict occlusions; e.g., in piano the left hand usually occludes the right hand, but the audio includes music played by both hands.

We have used only YouTube videos as training data. In the future, to increase realism and accuracy, we could consider complementing the training data with sensor information or MIDI files.

Finally, getting good training data per class of activity is not straightforward; note the constraints we required in Sec. 3. It would be interesting to explore a general network that can handle a variety of poses without the need to classify the type of action a priori. Alternatively, it would be interesting to incorporate video activity recognition into our learning framework.

References

[1] Y. Ban, L. Girin, X. Alameda-Pineda, and R. Horaud. Exploiting the complementarity of audio and visual data in multi-speaker tracking. In ICCV Workshop on Computer Vision for Audio-Visual Media, 2017.

[2] V. Belagiannis and A. Zisserman. Recurrent human pose estimation. In Automatic Face & Gesture Recognition (FG 2017), 2017 12th IEEE International Conference on, pages 468–475. IEEE, 2017.

[3] F. Bellard, M. Niedermayer, et al. FFmpeg. Available from: http://ffmpeg.org, 2012.

[4] F. Bogo, A. Kanazawa, C. Lassner, P. Gehler, J. Romero, and M. J. Black. Keep it SMPL: Automatic estimation of 3D human pose and shape from a single image. In European Conference on Computer Vision, pages 561–578. Springer, 2016.

[5] D. S. Boomer and A. T. Dittmann. Speech rate, filled pause, and body movement in interviews. The Journal of Nervous and Mental Disease, 139(4):324–327, 1964.

[6] M. Brand. Voice puppetry. In Proceedings of the 26th Annual Conference on Computer Graphics and Interactive Techniques, pages 21–28. ACM Press/Addison-Wesley Publishing Co., 1999.

[7] C. Bregler, M. Covell, and M. Slaney.
Video rewrite: Driving visual speech with audio. In Proceedings of the 24th Annual Conference on Computer Graphics and Interactive Techniques, pages 353–360. ACM Press/Addison-Wesley Publishing Co., 1997.

[8] Z. Cao, T. Simon, S.-E. Wei, and Y. Sheikh. Realtime multi-person 2D pose estimation using part affinity fields. In CVPR, 2017.

[9] Y.-W. Chao, J. Yang, B. Price, S. Cohen, and J. Deng. Forecasting human dynamics from static images. arXiv preprint arXiv:1704.03432, 2017.

[10] L. S.-H. Chen and T. S. Huang. Joint processing of audio-visual information for the recognition of emotional expressions in human-computer interaction. University of Illinois at Urbana-Champaign, 2000.

[11] J. S. Chung, A. Senior, O. Vinyals, and A. Zisserman. Lip reading sentences in the wild. arXiv preprint arXiv:1611.05358, 2016.

[12] A. Davis, M. Rubinstein, N. Wadhwa, G. J. Mysore, F. Durand, and W. T. Freeman. The visual microphone: Passive recovery of sound from video. 2014.

[13] A. T. Dittmann. The body movement-speech rhythm relationship as a cue to speech encoding. Studies in Dyadic Communication, pages 135–152, 1972.

[14] A. T. Dittmann and L. G. Llewellyn. Body movement and speech rhythm in social conversation. Journal of Personality and Social Psychology, 11(2):98, 1969.

[15] K. Fragkiadaki, S. Levine, P. Felsen, and J. Malik. Recurrent network models for human dynamics. In Proceedings of the IEEE International Conference on Computer Vision, pages 4346–4354, 2015.

[16] K. Grochow, S. L. Martin, A. Hertzmann, and Z. Popović. Style-based inverse kinematics. In ACM Transactions on Graphics (TOG), volume 23, pages 522–531. ACM, 2004.

[17] I. Habibie, D. Holden, J. Schwarz, J. Yearsley, and T. Komura. A recurrent variational autoencoder for human motion synthesis. 2017.

[18] E. Haga. Correspondences between music and body movement. 2008.

[19] K. He, G. Gkioxari, P.
Dollár, and R. Girshick. Mask R-CNN. arXiv preprint arXiv:1703.06870, 2017.

[20] S. Hochreiter and J. Schmidhuber. Long short-term memory. Neural Computation, 9(8):1735–1780, 1997.

[21] D. Holden, J. Saito, and T. Komura. A deep learning framework for character motion synthesis and editing. ACM Transactions on Graphics (TOG), 35(4):138, 2016.

Figure 8. Piano test results. We encourage the reader to also watch the supplementary videos (with audio on). Row 1 shows the points predicted from audio (green) overlaid on the ground-truth points (red; 2D pose detector). Row 2 shows the corresponding frame for context. Note that we do not expect them to match exactly; we only aim for similar hand and finger configurations.

Figure 9. Violin test results. We encourage the reader to also watch the supplementary videos (with audio on). Row 1 shows the points predicted from audio (green) overlaid on the ground-truth points (red; 2D pose detector). Row 2 shows the corresponding frame for context.

[22] E. Insafutdinov, L. Pishchulin, B. Andres, M. Andriluka, and B. Schiele. DeeperCut: A deeper, stronger, and faster multi-person pose estimation model. In European Conference on Computer Vision, pages 34–50. Springer, 2016.

[23] E. Insafutdinov, B. Schiele, and B. Andres. Dense-CNN: Fully Convolutional Neural Networks for Human Body Pose Estimation. PhD thesis, Universität des Saarlandes Saarbrücken, 2016.

[24] A. Jaimes and N. Sebe. Multimodal human-computer interaction: A survey. Computer Vision and Image Understanding, 108(1):116–134, 2007.

[25] Y. Jia, E. Shelhamer, J. Donahue, S. Karayev, J. Long, R. Girshick, S. Guadarrama, and T. Darrell. Caffe: Convolutional architecture for fast feature embedding. In Proceedings of the 22nd ACM International Conference on Multimedia, pages 675–678. ACM, 2014.

[26] T. Karras, T. Aila, S. Laine, A. Herva, and J. Lehtinen.
Audio-driv en facial animation by joint end-to-end learning of pose and emotion. ACM T ransactions on Gr aphics (TOG) , 36(4):94, 2017. 2 [27] Z. Li, Y . Zhou, S. Xiao, C. He, and H. Li. Auto-conditioned lstm network for extended complex human motion synthesis. arXiv pr eprint arXiv:1707.05363 , 2017. 3 [28] Z. Liao, Y . Y u, B. Gong, and L. Cheng. Audeosynth: music-driv en video montage. A CM T ransactions on Graph- ics (TOG) , 34(4):68, 2015. 3 [29] C. K. Liu, A. Hertzmann, and Z. Popovic. Learning physics-based motion style with nonlinear in verse optimiza- tion. A CM T r ansactions on Graphics (TOG) , 24(3):1071– 1081, 2005. 3 [30] R. Loughran, J. W alk er , M. O’Neill, and M. O’Farrell. The use of mel-frequency cepstral coef ficients in musical instru- ment identification. In ICMC , 2008. 3 [31] J. Martinez, M. J. Black, and J. Romero. On human motion prediction using recurrent neural networks. arXiv pr eprint arXiv:1705.02445 , 2017. 3 Figure 10. A vatar playing piano: Screen shots of a vatar animation result with the predicted sk eleton. Figure 11. A vatar playing violin: Screen shots of a vatar animation result with the predicted sk eleton. Figure 12. T ypical limitations of the method. The method fails with extreme occlusions, or une xpected tones or movements that are not captured well in the training data (fast raising of arms, mov ement from one side of keyboard to e xtreme other , or very fast pace). It may also fail since we train based on 2D pose estimators while people in Y ouT ube videos move in 3D. The predicted points (green) are overlaid on top of ground truth (red) for comparison, in those cases also the ground truth points often (estimated by pose detection) are not exact. [32] D. Mehta, S. Sridhar , O. Sotnychenko, H. Rhodin, M. Shafiei, H.-P . Seidel, W . Xu, D. Casas, and C. Theobalt. Vnect: Real-time 3d human pose estimation with a single rgb camera. arXiv pr eprint arXiv:1705.01583 , 2017. 7 [33] W . Ouyang, X. Chu, and X. W ang. 
Multi-source deep learn- ing for human pose estimation. In Pr oceedings of the IEEE Confer ence on Computer V ision and P attern Recognition , pages 2329–2336, 2014. 3 [34] A. Owens, P . Isola, J. McDermott, A. T orralba, E. H. Adel- son, and W . T . Freeman. V isually indicated sounds. In Pr o- ceedings of the IEEE Conference on Computer V ision and P attern Recognition , pages 2405–2413, 2016. 3 [35] J. Schwarz, C. C. Marais, T . Leyvand, S. E. Hudson, and J. Mank off. Combining body pose, gaze, and gesture to de- termine intention to interact in vision-based interfaces. In Pr oceedings of the 32nd annual ACM conference on Hu- man factors in computing systems , pages 3443–3452. A CM, 2014. 3 [36] N. Sebe, I. Cohen, T . S. Huang, et al. Multimodal emotion recognition. Handbook of P attern Recognition and Com- puter V ision , 4:387–419, 2005. 3 [37] T . Simon, H. Joo, I. Matthews, and Y . Sheikh. Hand keypoint detection in single images using multivie w bootstrapping. In CVPR , 2017. 4 [38] M. Soler , J.-C. Bazin, O. W ang, A. Krause, and A. Sorkine- Hornung. Suggesting sounds for images from video collec- tions. In Eur opean Confer ence on Computer V ision , pages 900–917. Springer , 2016. 3 [39] S. Suwajanakorn, S. M. Seitz, and I. Kemelmacher- Shlizerman. Synthesizing obama: learning lip sync from au- dio. A CM T ransactions on Gr aphics (TOG) , 36(4):95, 2017. 2 , 3 , 4 [40] Y . T aigman, M. Y ang, M. Ranzato, and L. W olf. Deepface: Closing the gap to human-level performance in f ace v erifi- cation. In Pr oceedings of the IEEE confer ence on computer vision and pattern r ecognition , pages 1701–1708, 2014. 4 [41] S. T aylor , T . Kim, Y . Y ue, M. Mahler , J. Krahe, A. G. Ro- driguez, J. Hodgins, and I. Matthe ws. A deep learning ap- proach for generalized speech animation. A CM T ransactions on Graphics (T OG) , 36(4):93, 2017. 2 [42] M. R. Thompson and G. Luck. 
Exploring relationships be- tween pianists body movements, their expressi ve intentions, and structural elements of the music. Musicae Scientiae , 16(1):19–40, 2012. 3 [43] P . W agner , Z. Malisz, and S. Kopp. Gesture and speech in interaction: An o vervie w . Speech Communication , 57:209– 232, 2014. 3 [44] J. W alker , K. Marino, A. Gupta, and M. Hebert. The pose knows: V ideo forecasting by generating pose futures. arXiv pr eprint arXiv:1705.00053 , 2017. 3 [45] S. B. W ang and D. Demirdjian. Inferring body pose using speech content. In Proceedings of the 7th international con- fer ence on Multimodal interfaces , pages 53–60. A CM, 2005. 3 [46] S.-E. W ei, V . Ramakrishna, T . Kanade, and Y . Sheikh. Con- volutional pose machines. In CVPR , 2016. 3 , 4 [47] M. W ¨ ollmer , M. Kaiser , F . Eyben, B. Schuller , and G. Rigoll. Lstm-modeling of continuous emotions in an audiovisual af- fect recognition framework. Image and V ision Computing , 31(2):153–163, 2013. 3 [48] F . Zheng, G. Zhang, and Z. Song. Comparison of different implementations of mfcc. Journal of Computer Science and T echnolo gy , 16(6):582–589, 2001. 3
