A pedestrian path-planning model in accordance with obstacles danger with reinforcement learning

Most microscopic pedestrian navigation models use the concept of "forces" applied to the pedestrian agents to replicate the navigation environment. While the approach could provide believable results in regular situations, it does not always resemble…

Authors: Thanh-Trung Trinh, Dinh-Minh Vu, Masaomi Kimura

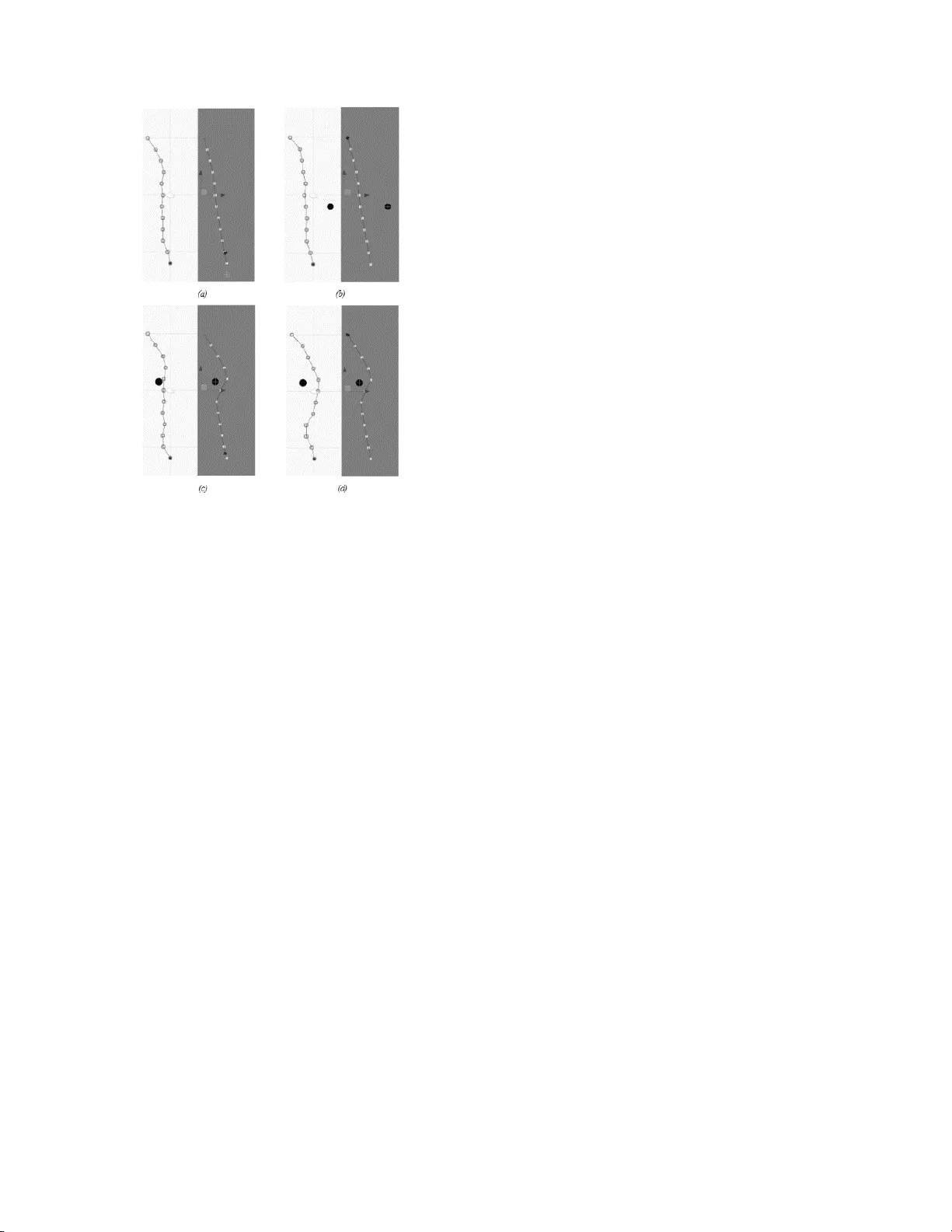

A pedestrian path-planning model in accordance with obstacle’s danger with reinforcement learning Tha nh- Tr ung Tr in h Shi bau ra I ns titu te of Techn ol ogy 3-7-5 Toyosu, Koto -ku, Toky o 135 -8548 Japan (+81)50-5339-32 33 nb18 50 3@s hib aur a-i t. ac. jp Di nh- Min h V u Shi bau ra I ns titu te of Techn ol ogy 3-7-5 Toyosu, Koto -ku, Toky o 135 -8548 Japan (+8 1)90 -63 29- 5811 n b175 0 2@sh ib aur a-i t. ac. jp Mas aom i K imu ra Shi bau ra I ns titu te of Techn ol ogy 3-7-5 Toyosu, Koto -ku, Tok yo 135 -8548 Ja pan (+8 1)3 -585 9-850 7 masa om i@s hib aur a- it. ac. jp ABSTRACT Most microscopic pedestrian navigation models use the concept of “forces” applied to the pedestrian ag ents to replicate the navigation environment. While the approach could provide believable results in regular situations, it does not always resemble natural p edestrian navigation behaviour in many typical settings. In our research, we p roposed a novel approach using reinforcement learning for simulation of pedestrian agent path planning and collision a voidance problem. The primary focus of this approach is using human perception of the environment and danger awareness of interferences. The implementation of ou r model has sh own that the path planned by the agent shares many similarities with a human pedestrian in several aspects such as following common walking conventions and human behaviours . CCS Concepts • Computing m ethodologies → Neural networks • Computing methodologies → Agent / discrete mod els • Applied computing → Law, social and behavioral science s. Keywords Pedestrian; navigation ; path p lanning; rein forcement learning ; PPO . 1. INTRODUCTION Recent studies in p edestrian simulation are often fixated within one o f th e three categories assorted by the level of interaction: macroscopic, mesoscop ic and microscopic [1] . Th e macroscopic simulation models use the concept of flu id and particles originated from physics to con struct p edestrian n avigations while igno ring the interactions b etween pedestrians as well as individual characteristics of each ped estrian. For an excessively high -density crowd, a macroscopic model could be sufficien t; h owever, for a smaller size of p edestrians where social interactions are essential, a mesoscopic or microscopic model would be more suitable. A mesoscopic model sits between macroscopic and microscopic, which is still able to simulate a relative large-sized environment but with the cost o f agent’s movements and interactions . Compared to mesoscopic, a microscopic mod el is more realistic as each pedestrian is considered as an independent object or a computer agent whose behaviours and thinking processes could be modelled upon. Most microscopic pedestrian simulation models use the concept of “forces” appli ed to the pedestrian agent to replicate the navigation behaviour [2]. The b asic idea o f these models is that pedestrian agents are attracted to a specific point - of -interest (e .g. pede strian’s destination) an d repulsed from possible coll isions (e.g. walls, obstacles an d oth er agents). The representation of the force-based models is similar to the interactions betwee n magnetic o bjects with some certain improvements. There is undoubtedly a sufficient resemblance in basic movement and collision avoidance with the implementation of th e model in a simulation. However, when comparing with ped estrian navigation in real life, many human decisions which require strategical thinking or social interacting are n ot reflected i n the fo rce-based simulation. For instance, wh en an a gent plans a path to go from its c urrent position to a destination, a fo rce-based agent often chooses the shortest path without colliding into o ther o bstacles most of the time. In real life, a human pedestrian has ma ny other aspects affecting his decision such as social co mfort, law obeyance o r his personal feeling. This could be a problem if the s imulation n eeds the p reciseness of pedestrian behaviour, for instance, a traffic simulation system for automated vehicles. The main idea of our research is adopting rein forcement learning in the p edestrian agent’s decision -making process. Reinforcement learning is a machine learning paradigm based on the concept of reinforcement in behavioural psychology, in which the learner needs to find an action in the current state fo r an optimum reward. The concept is virtually close to th e way hu mans learn to b ehave in many rea l-life situ ations, including p ath navigation. Wh en a person p lans a pat h to th e destination and feels uncomfor table with his decision, for instance, because of taking a lo nger p ath or colliding with obstacles, he will then receive a negative rew ard and will try to improve his behaviour. As a result, on ce an environment is observed, that individual will b e able to come up with a path using his current optimum policy without the needs o f various calculation such as “forces” realis ed in many microsc op ic pedestrian models. The remainder of this paper is structured a s follows: The next section provides an overview of studies related to our research. Section 3 presents the backgrounds o f reinforcement learning and the PPO algorithm. After that, we describe th e methodology in our path planning model in Section 4. The modelling of our model and the formulation o f our rewarding behaviour will be presented in Section 5 and Section 6, respectively. In Section 7 , we present the implementation o f o ur mod el and evalu ation with a conventional rule-based model. 2. RELATED WORK One of the most influential algorithms in microscopic pedestrian simulation is the Social Force Model (SFM) by Helbing et al. [3]. The concept o f th is model is that each pedestrian agent will be under influences of different social forces, including driving force, agent interact force and wall interact force. The driving force attracts agen t toward the desti nation, the agent intera ct force repulses agent from other agents, and the wall int eract force repulses agent from walls or boundaries. Since SFM was introduced, there h ave been a v ariety of models formulated based on SFM However, such models do not take account o f the cognitive thinking process within th e human brain, which leads to man y d eviations from actual huma n behaviour. Regarding research in human behaviour, many studies can be found i n the field of robotics research. Many re searchers have tried to solve the problems in human comfort and constructing naturalness [4]. Fo r an agent to navigate natu rally, not conflicting with other ped estrians or obstacles is not enough; but the ag ent also needs to replicate different behaviours from humans. Another concept propo sed in human behaviour research is human b ias o r cognitive bias , which c auses the anomaly in the human decision process. For e xample, in [5 ], Golledge et al. have shown that pedestrians do not always choose the most optimised decision while selecting a path. Another study by Cohen et al. [ 6] also discussed how th e human brain making decisions between exploitation and exploration. These aspects were supportive for forming the agent behaviour in our research. In using rein forcement learning fo r pedestrian navigation, the amount o f research is moderately limited. In a study by Mar tinez et al. [7], a n experiment in using reinforcement learning for a multi-agent navigation system has been implemented; however, the algorithm used was q -learning which is too simple and does not suit well to a dynamic environment. Another approach is le arning from observing examples from hu man behaviour. In th eir paper by Kretzschmar et al. [8], a navigation model was proposed using in verse reinforcement learnin g. One difficulty in such approach is the ex ample or the dataset from human b ehaviour is not easy to be extracted or readily available. 3. BACKGROUND 3.1 Reinforcement Learning Reinforcement learning was first introduced by Surton et al. (1998) [9]. A reinforcement learning ag ent learns to o ptimise th e policy , the mapping from a (possibly partial) observed states of the environment to action to be taken, in ord er to maximise the expected cumulative reward . Differe nt to super vised learning, instead of using existing inputs and outputs, the reward will be given by using the reward signal . T his c ould be inferred as a positive or n egative experie nce from humans (such as satisfied o r discomfort) in a biological system. However, a positive o r negative reward is intermediate, which means that an action is considered b ad at that moment but could also yield a better result in the long run. As a result, a reinforcement learning system also needs a value function to defin e the ex pected long -term reward positivity. 3.2 PPO Algorithm Many modern reinforcement learning alg orithms employ different deep learning techniques to optimise th e total cumu lative reward. These approaches u se t he neur al network training process to optimise the agen t’s policy. T hey often calculate an advantage value by comparing the expec ted reward o ver the average reward fo r that state. The advantage fun ction will be then used in the loss functi on of the n eural network , which is con sequently trained for a number of steps and outputs the most optimised policy. For algorithm like Policy Gradient, the policy π θ (a t | s t ) will b e constantly updated after every training step. With a n oisy environment, th e old policy π θ old (a t | s t ) , which might actually be better th an the new one, will be overwritten ; causing th e training process to be less efficient. The algorithm Pro ximal Policy Optimization (PPO) was introduced by S chulman et al. [10] try to avoid that problem. T he objective of the algorithm is to avo id staying away too far from a good policy by keeping the old good policy and compared with upd ated one u sing a mor e efficient loss function. 4. PATH PLANNING MODEL To d esign our model of pedestrian path planning a nd collision avoidance u sing reinforcement learning , we had t o address the following problems: the defi nition of the environment and the formulation of the rewarding behaviour. Regarding the d efinition of the environment, one difficulty is that the model of the designed environment cannot be too complicated. If the environment is too complex (e.g . too large or too many obstacles); th e agent might not b e able to learn the appropriate behaviour, or it could take an excessive am ount of time. In addition to that, the environment also needs to p rovide a stable training p rocess, or the variation between each training states should be balanced . For the fo rmer prob lem, we limited our environment to a relatively small area with an appearance chance of one ob stacle, assumed that a more co mplex walking environment could be d ivided into smaller pat hs. For the latter problem, we introduced a mech an ism for resetting the environment to balance the proportion between th e cases when there is an obstacle p resent an d ones when there is none. For instance, if the agent fails to navigate without collision with an obstacle when there is one; bu t in the next train ing step, there is no obstacle so the agent could produce a path without collision. This could make th e agent incorrectly thinks that the current policy is a g ood on e, while it is probab ly not . As a result, instead of resetting every training step , we suggest ed resetting th e environment only when the agent has already planned a path without conflicting with the obstacle. If the agent fails, we retained the states of the environment for a nu mber of iteration before resetting so that the agent could gain enough experience without being stuck in a bad policy. We also introduced a definition to th e obstacle in our environment so that the agents could outpu t a natu ral path around it. Different to a physical obstacle in real-world (e.g. a rock, a wall or a construction site), the term obstacle in our research represents the feeling o f interference wh ile planning a path. For example, a group of p eople engaging in a conv ersation in the middle of the walking area could also be considered as an o bstacle. Although there could b e a considerable amount of possible walking space within the group’s territory, planning a path through this area is considered rude o r unusual for a no rmal person. In a study by Chung et al. [ 11 ], such areas are called th e “spatial effect” in an environment. As th e process of constructing a spatial effect area is carried o ut in the human cognitive system, the interpretation of an obstacle could be slightly d ifferent for each person or in different situations (e.g. the crossroad when the traffic light is green or red) . Apart from positi on, we p roposed two properties to ou r ob stacle : size and d anger level , which should have a g reat impact on the path planned by the agent as suggested in several studies [ 12 ] . Th e size of t he obstacle sh ould cover the concept of spatial effect mentioned above, not just the size of the physical obstacle. For example, a damaged or unstable power pole would have a much larger “size” compared to a steady or stable one du e to the fear of the pole falling. For simplicity, we assume our obstacle has a round shape; thus, the size of an obstacle will be expressed by a radius value. In terms of the danger level, similar to obstacle’s size, is also a concept formed within the human mind. The feeling of danger level could have a great impact on the process of planning a p ath by a ped estrian. For example, in the two settings illustrated in Figure 1 below, on the left is a n obstacle such as a water pud dle which h as a much less d anger lev el compar ed to a deep hole on the right. As a result, t he planned path would normally sta y much further away from the hole than from th e puddle. A more detailed description o f the obstacle properties w as shown in the technical report [ 13 ]. The concrete mod elling of th e environment will be presented in Section 5. Figure 1. Path planned by an agent in different settings. In addition to the modelling of the env ironment, we need to specify the appropriate reward function to th e a gent. For each taken action, the agent needs to know if the action is possibly good or bad b ased on the giv en reward. Different from rule-b ased methods, in reinforcement learning, rewards are often given based on the results of the agent’s actions to help the agent in shaping the behaviour. An improper rewa rding, for example, giving a large penalty for an undesirable action might cause th e agent to avoid such action completely, although th ere might be a chance that the action could lead to a higher cumulative result in th e long run. Another p roblem with rewarding is that the ag ent does no t receive each specific reward for each behaviour but only the total reward for every action. While this is correspo nding to the concept of reinforcement in human cognition, sh aping a specific behaviour is much harder compared to in rule-based methods. As a result, we chose to formalise our rewarding behav iour based on v arious factors affecting h uman comfort wh ich were summarised in an article by T. Kruse et al. [4]. These are a number of factors app lied to robot movement wh ich may cause humans to observe its movement as more natural or human-like. Consequently, a human being should feel the same level of comfort when exhibiting similar behaviour. We will thoroughly present our rewarding formulation in Section 6 of this paper. 5. ENVIRONMENT MODELLING The modelling of ou r environment is presented as illustrated in Figure 2 . In the scope of our research, the size of our environment is limited to an area of 22 meters by 10 meters; th e current position o f th e pedestrian agent will be placed between ( -5, -12) and (5, -12); the desired destination of the agent will b e placed between (-5, 10) and (5, 10). The o bstacle has a rand om chance of appearing in the environment. Each obstacle has a size ranged from 0. 5 to 2 meters and a danger level ranged from 0 to 1. The objective o f our research is to let the pedestrian agent plan a path from its po sition to a p re-defined destination. In order to do this, the agent must observe the env ironment then provide a p ath using its current policy. In our model, the ag ent path is constructed from 10 outputs of the neu ral network, corresponding to 10 component p ath nodes’ relative x po sitions. Appropriately, the component p ath nodes’ relative y positions are { -10 , -8, -6... 6, 8}. Figure 2. Path-planning model. Specifically, for each training step, the pedestrian agent observes the following values: - Relative x positions of agent’s current position and it s desired destination - The presence of the obstacle. If the obstacle is p resent, the agent will observe the relative po sition, size and the danger level of the obstacle. The taken action of the agent, which is the planned p ath in this case, will then b e rewarded based on the rewarding behaviour discussed in Section 6. After that, the training step is terminated and the new training environment will be initialised. 6. REWARDING FORMULATION As suggested in Section 4, we formulise our rewarding behaviour based on the idea of human comfort. There are many factors could affect human comfort level, but within the scope of research, we employed the following factors: - Choosing the shortest path to the destination. - Encouraging actions following the b asic rules or common sense. - Discouraging the changing of direction. - Avoid getting through restricted regions. Choosing th e shorte st path to th e destination, as d iscussed in [5], is not always optimised for path planning but still has a very high priority in the process. For calculating the reward, we u sed the negative of the sum of all squared lengths of all walking paths . The bias b used in th e reward is for providing a positiv e reward to the agent wh en a satisfactory pa th is take n. The rewarding for taking the shortest path is formulated as follows where p i is a vector representing the p ath from the p revious node to the next node in the agent’s planned path and b is the bias. For discouraging changing direction, we added a small penalty when a change in direction is greater than 30 o . The reason for this is th at in human navigation, a mi n or change in direction is still considered acceptable. The rewarding for changing d irection Acknowledgements as follows where ang le(p i , p j ) is th e value in deg ree of angle fo rmed b y two vectors p i and p j ; θ (x) is the Heaviside step fun ction which is defined as . In terms of following the basic rules or common sense, this could be varied d epends on regional laws and cultures. In th e scope of our research, we implemented the following principles: (1) Favour going parallel to the sides. This will help the agent maintain the flow of the movement in the road. (2) Following the left side of th e road (o r the right side, in case of right-side walking countries). Although pedestrians are not explicitly required b y the laws to follow this convention in many countries, many people still follow the convention as a rule of thumb in the decision-making process in many situations. (3) Avoid getting too clo se with th e boundaries. As discussed in several studies, especially rule-based models, this is for avoiding accidental injuries when colliding with walls or surrounding objects. [3] To implement these, we simulated the navigation along the path by sampling the planned path into N samples s i , then calculate the appropriate rewards where x_position function is for g etting the x coordinate of th e position s i . Obstacle avoid ance is probably the most essential criteria in path planning as it d irectly affects the pedestrian’s safety. In real life, humans often try to keep a certain distance from the obstacle’s centre, but once the distan ce is assured, the priority in the p ath planning process will shift to other interests. In the idea of reinforcement learnin g, when the path does not conflict with th e obstacle area, a furth er distance from o bstacle will no t provide a higher reward. This idea was formulated in our rewardi ng for avoiding obstacle as follows: with , where d(s i ,obs) is the distance from the sampled position s i to obstacle’s p osition; r obs an d danger obs are the radius and the danger level of the obstacle area, respectively. The cumulative reward is calculated by the sum of all component rewards mention ed abov e, each was multipli ed by an appropriate coefficient: where к i is the coefficient for rewarding of each reward. These coefficients represent the propo rtion of imp ortance of each reward, which can be different between agents. Variation of these coefficients could alternate the o utput results, and b y th at can be a representation o f different huma n personalities. For example, a law-obedient pedestrian could u se a hig h v alue for the coefficient of walking in the left side, whil e a cauti ou s agent could use a hig h value for the coefficient of obstacle avoidance. 7. IMPLEMENTATION AND DISCUSSION The realisation of o ur model was made available u sing Unity -ML [14], a framework which functions as a communicator between Python u sing TensorFlow and the 3D graphics engine Unity. For each training step, we initialise our environment then let our ag ent observes the current state. After th at, these signals are sent to Python via the communicator for th e training process. The output of th e neural network using PPO alg orithm will be then sent back to Unity and used f or positioning the coordinates of each path node. The cumulative reward is calculated based on the output path and sent to Python for the training process. We built the model for the environment entirely wit hin Unity environment. The e nvironment modelled is excessively noisy; therefore, a large size of batch is required. F or faster training, we concurrently trained the model using 10 copies o f the same environment. We h ave b een able to successfully train the model with a batch size of 20480, buffer size of 2 04800 and the learning rate of 1.5e-3 in three million steps. As can be seen in Figure 3 as follows, the reward has seemed to be converged at around -0.3. Figure 3. Cumulative reward statistics. Using the trained model f or pedestrian agent’s path planning action, the behaviour can be observed from the results presented in Figure 4 . From observation, generally, it can be said that the agent’s path resembles a similarity with a human p erson’s decision of fo rming a walking path. In (a ), the figure shows that the agent by our model planned a relatively s hort path that still co nforms th e walking convention such as walking on th e left side o f the ro ad and changing d irection naturally. On the contrary, the path formed by SFM leads the agent to go straight to th e destination. In (b), there is an obstacle but outside of the agent’s common p lanned path. The ob stacle, in th is case, has little to no effect on the result of it s planned path; therefore, there are little changes to the path compared to th e situation in (a). S imilarly, no change was observed in th e p ath formed by SFM as well. In (c), the obstacle now is in the agent’s common planned path. In th is ca se, the danger level of the o bstacle perceived b y the agent is very small, so our agent only tried to mo dify the path j ust enough to no t conflict with the obstacle. As for th e path by SFM, the agent still chooses to go straight to the destination and only try to avoid the obstacle when being close to it. When the danger level perceived was increased as in (d), our agent tried to stay away fr om the Figure 4. Agent’s planned path in different situations. The path using RL (our model) is on the left, the path using SFM is on the right. (a) No obstacle; (b) Obstacle area outside agent’s path; (c) Obstacle area within agent’s path, danger level = 0.1; (d) Same obstacle area with danger level = 1. obstacle much further. As can be seen from the fig ure, there are parts of the p lanned path po sitioned slightly on the right side o f the road. Also, the total length of th e path is also not the shortest. This path has replicated the common b ehaviour fro m hu mans to ensure their highest safety while walking on the road. The danger level is not present in SFM, therefore th ere is no change to the path compared to the situation (c). Compared to a force-based model, SFM in p articular, our model has the advantage of replicating the natural behaviour of human navigation. Although a rule-based model, e.g. a finite state machine model, mig ht be able to mimic the e xact behaviour precisely , it is prone to have the limitation o f the finite number o f rulesets. Reinforcement learning, on the hand s, has th e advantage of creating d iversity o n human behaviour t hanks to the shared concepts between neural network and reinforcement in machine learning and in real life. However, our model is still in early-stage and re quire muc h further research in order to replicate a perfect p edestrian behaviour in m ultiple situations. T he obstacle, f or one, c annot represent a moving agent such as auto mobile or an other pedestrian. The re ason for th at is whe n encountering wit h a moving obstacle, the agent needs differen t ways to plan a path . For instance, th e agent might need to plan ahead b y making predictions, as discussed in [14]; o r ad apt to the changes in the environment and make decisions synchronously. Another limitation o f our research is the lack of chan ging in the agent’s velocity. The variation in speed of pedestrians is als o a major factor in repli cating a natural walking b ehaviour. In the future, we will co nduct further research to address these p roblems using the result presented in this paper as a groundwork. 8. CONCLUSION We have pr oposed a n ovel reinforcement lea rning model f or pedestrian ag ent path planning and collision avoidance . The implementation of our model has shown that the ag ent is able to plan a n atural path to the destination while avoiding colliding with the obstacle in different situations. The p lanned p ath shares many similarities with a human pedestrian in several aspects such as following common walking conventions and human behaviours. 9. REFERENCES [1] Ijaz, K., Sohail, S. and Hashish, S., 2015, March. A survey of latest approaches for crowd simulation and modeling using hybrid techniques. In 17th UKSIMAMSS International Conference on Modelling and Simulation (pp. 111-116). [2] Tekno mo, K., Takeyama, Y. and Inam ura, H., 2000. Review on microscopic pedestrian simulation model. In Proceedings Japan Society of Civil Engineering Conference . [3] Helbing, D. and Molnar, P., 1995. Social force model for pedestrian dynamics. Physical review E , 51 (5), p.4282. [4] Kruse, T., Pandey, A.K., Alami, R. and Kirsch, A., 2013. Human-aware robot navigation: A survey. Robotics and Autonomous Systems , 61 (12), pp.1726-1743. [5] G olledge, R.G., 1995, September. Path selection and route preference in human navigation: A progress report. In International Conference on Spatial Information Theory (pp. 207-222). Springer, Berlin, Heidelberg. [6] Cohen, J.D., McClure, S.M. and Yu, A.J., 2007. Should I stay or should I go? How the human brain manages the trade-off between exploitation and exploration. Philosophical Transactions of the Royal Society B: Biological Sciences , 362 (1481), pp.933-942. [7] Martinez-Gil, F., Lozano, M. a nd Fernández, F., 2011, May. Multi-agent reinforcement learning for simulating pedestrian navigation. In International Workshop on Adaptive and Learning Agents (pp. 54-69). Springer, Berlin, Heidelberg. [8] Kretzsc hmar, H., Spies, M., Sprunk, C. and Burgard, W., 2016. Socially compliant mobile robot navigation via inverse reinforcement learning. The International Journal of Robotics Research , 35 (11), pp.1289-1307. [9] Sutton, R.S. and Barto, A.G., 1998. Introduction to reinforcement learning (Vol. 2, No. 4). Cambridge: MIT press. [10] Schulman, J., Wolski, F. , Dhariwal, P., Radford, A. and Klimov, O., 2017. Proximal policy optimization algorithms. . [11] Chung, W., Kim, S., Choi, M., Choi, J., Kim, H., Moon, C.B. and Song, J.B., 2009. Safe navigation of a mo bile robot considering visibility of environment. IEEE Transactions on Industrial Electronics , 56 (10), pp.3941-3950. [12] Melchior, P., Orsoni, B., Lavialle, O., Poty, A. and Oustaloup, A., 2003. Consideration of obstacle danger level in path planning using A and fast-marching optimisation: comparative study. Signal processing , 83 (11), pp.2387-2396 [13] Trinh Thanh Trung and Masaomi Kimura, 2019. Reinforcement learning for pedestrian agent route planning and collision avoidance, IEICE Tech. Rep., vol. 119, no. 210, SSS2019-21, pp. 17- 22 . [14] Juliani, A., Berges, V.P., Vcka y, E., Gao, Y., Henry, H., Mattar, M. and Lange, D., 2018. Unity: A general platform for intelligent agents. . [15] Ziebart, B.D., Ratliff, N., G allagher, G., Mertz, C., Peterson, K., Bagnell, J.A., Hebert, M., Dey, A.K. and Srinivasa, S., 2009, October. Planning-based prediction for pedestrians. In 2009 IEEE/RSJ International Conference on Intelligent Robots and Systems (pp. 3931-3936). IEEE.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment