Balanced One-shot Neural Architecture Optimization

The ability to rank candidate architectures is the key to the performance of neural architecture search (NAS). One-shot NAS is proposed to reduce the expense but shows inferior performance against conventional NAS and is not adequately stable. We inv…

Authors: Renqian Luo, Tao Qin, Enhong Chen

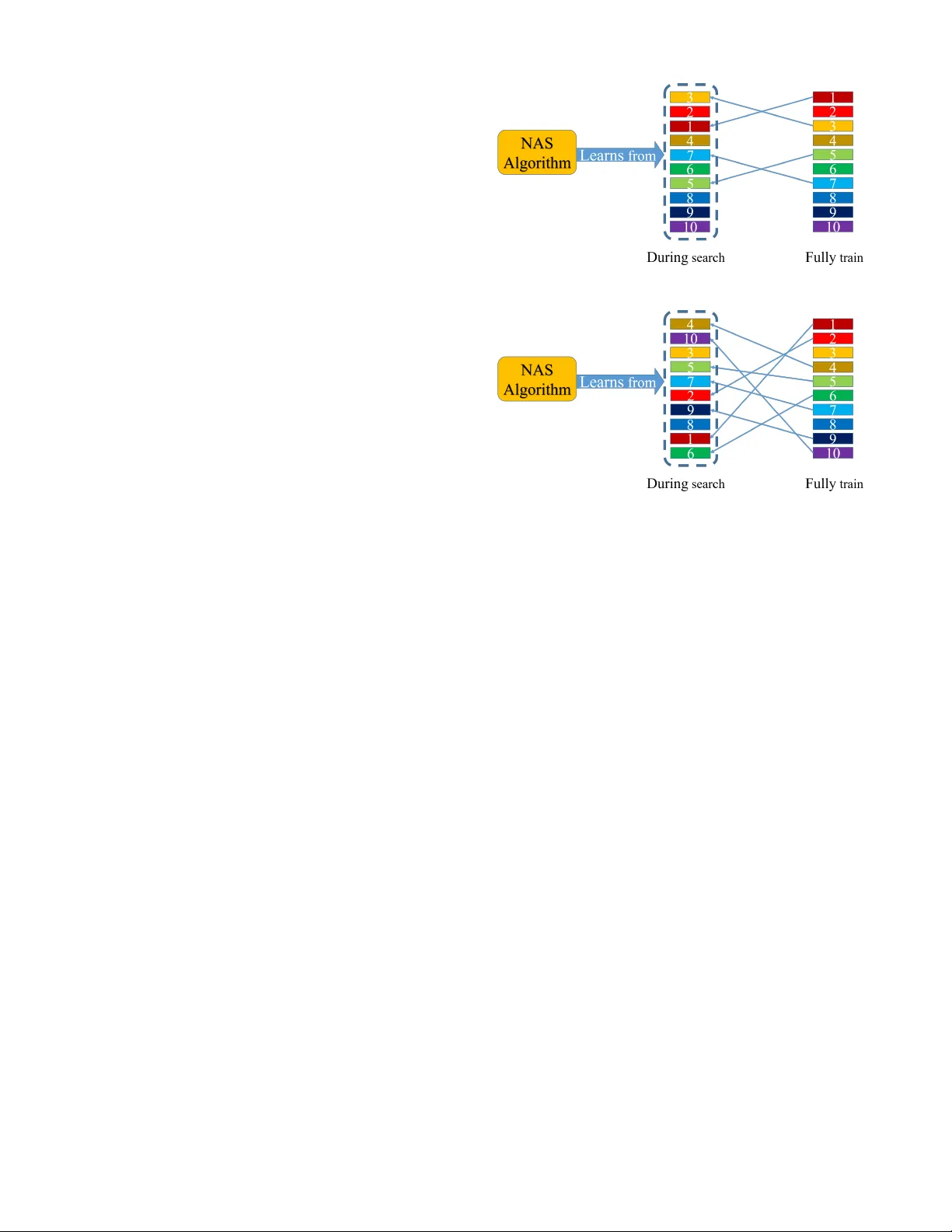

Renqian Luo¹, Tao Qin², Enhong Chen¹

¹University of Science and Technology of China
²Microsoft Research Asia

lrq@mail.ustc.edu.cn, taoqin@microsoft.com, cheneh@ustc.edu.cn

Abstract

The ability to rank candidate architectures is the key to the performance of neural architecture search (NAS). One-shot NAS was proposed to reduce the expense, but it shows inferior performance against conventional NAS and is not adequately stable. We investigate this and find that the ranking correlation between architectures under one-shot training and the ones under stand-alone full training is poor, which hinders the algorithm from discovering better architectures. Further, we show that the training of architectures of different sizes under the current one-shot method is imbalanced, which causes the evaluated performances of the architectures to be less predictive of their ground-truth performances and affects the ranking correlation heavily. Consequently, we propose Balanced NAO, where we introduce balanced training of the supernet during the search procedure to encourage more updates for large architectures than for small architectures by sampling architectures in proportion to their model sizes. Comprehensive experiments verify that our proposed method is effective and robust, which leads to a more stable search. The final discovered architecture shows significant improvements against baselines, with a test error rate of 2.60% on CIFAR-10 and top-1 accuracy of 74.4% on ImageNet under the mobile setting. Code and model checkpoints will be publicly available; code is available at github.com/renqianluo/NAO_pytorch.

1 Introduction

Neural architecture search (NAS) aims to automatically design neural network architectures. Recent NAS works show impressive results and have been applied in many tasks, including image classification Zoph and Le (2016); Zoph et al. (2018), object detection Ghiasi et al. (2019), super resolution Chu et al. (2019), language modeling Pham et al. (2018), neural machine translation So et al. (2019) and model compression Yu and Huang (2019).

Without loss of generality, the search process can be viewed as iterations of two steps: (1) In each iteration, the NAS algorithm generates some candidate architectures to estimate, and the architectures are trained and evaluated on the task. (2) Then the algorithm learns from the evaluation results of the candidate architectures. In principle, it needs to estimate each generated candidate architecture by training it until convergence, so the estimation procedure is resource consuming.

Figure 1: Balanced One-shot Neural Architecture Optimization. Each architecture is sampled in proportion to its model size during training in the search procedure, to balance architectures of different sizes.

One-shot NAS was proposed Bender et al. (2018); Pham et al. (2018) to reduce the resources, by utilizing a supernet to include all candidate architectures in the search space and perform weight inheritance between different architectures. However, the architectures discovered by such one-shot NAS works show inferior performance to conventional NAS, which trains and evaluates each architecture individually. Meanwhile, the stability is weak: the architectures generated by the algorithms do not always achieve the performance reported originally Li and Talwalkar (2019). We conduct experiments on ENAS Pham et al. (2018), DARTS Liu et al. (2018b) and NAO-WS Luo et al. (2018), and find that the stability is weak and the performance is inferior to conventional NAS Zoph et al. (2018); Real et al. (2018); Luo et al. (2018).

During the search, the algorithm aims to differentiate the candidate architectures and pick out the relatively good architectures among them.
The key to the algorithm is then the ability to rank these candidate architectures. We should therefore expect the ranking of the architectures during final full training to be preserved when they are trained under such a one-shot method during the search, so that the algorithm can learn well. We investigate one-shot training with the weight-sharing mechanism and find that the ranking correlation of the architectures is not well preserved under such one-shot training, which prevents the algorithm from effectively and robustly finding an optimal architecture, as shown in Fig. 2. Architectures discovered could perform relatively well when trained with the one-shot method, without any guarantee of performing well when fully trained stand-alone.

Under one-shot training, thousands of candidate architectures are included in a supernet and one architecture (or one dominant architecture) is sampled at each step during the training to update its parameters. The average training time of each architecture is very small and the optimization is insufficient, so the validation accuracy of the architecture is less representative of its ground-truth performance in final training. We show that the ranking correlation of the architectures is weak under one-shot training, and that the ranking correlation increases as the training time increases.

Since small architectures are easier to train than big architectures, given only a handful of training steps and insufficient optimization, small architectures tend to perform better than big architectures, which is not consistent with the final training. Therefore the training of the candidate architectures is imbalanced and the ranking correlation is weak, which is demonstrated by our experiments. This hinders the search algorithm from effectively finding good architectures, and the algorithms are observed to produce some small architectures that generalize worse during the final training.
Based on this insight, we propose Balanced NAO, where we introduce balanced training to encourage more updates for large architectures than for small architectures during the one-shot training of the supernet, as depicted in Fig. 1. We expect the training time of an architecture to be proportional to its model size. Specifically, we sample a candidate architecture in proportion to its model size, instead of uniformly at random. With this proportional sampling approach, the expected training time of an architecture is tied to its size, and experiments show that the ranking correlation increases.

Extensive experiments on CIFAR-10 demonstrate that: (1) Architectures discovered by our proposed method show significant improvements compared to baseline one-shot NAS methods, with a test error rate of 2.60% on CIFAR-10 and top-1 accuracy of 74.4% on ImageNet. (2) Our proposed method generates architectures with more stable performance and lower variance, which brings better stability.

Figure 2: Illustration of ranking correlation between architectures in neural architecture search, with panels (a) Conventional NAS and (b) One-shot NAS. Rectangles with different colors and numbers represent different architectures ranked by performance. (a) In conventional NAS, the ranking correlation between architectures during search and final fully trained ones is well preserved with slight bias. (b) In one-shot NAS, the ranking correlation is hardly preserved.

2 Related Work

Recent NAS algorithms mainly fall into three lines: reinforcement learning (RL), evolutionary algorithms (EA) and gradient-based methods. Zoph and Le (2016) first proposed to search neural network architectures with RL and achieved better performance than human-designed architectures on CIFAR-10 and PTB. Real et al. (2018) proposed AmoebaNet using a regularized evolutionary algorithm. Luo et al. (2018) map the discrete search space to a continuous space and search by gradient descent. These works achieve promising results on several tasks; however, they are very costly.

To reduce the large amount of resources that conventional NAS methods require, one-shot NAS was proposed. Bender et al. (2018) proposed to include all candidate operations in the search space within a supernet and share parameters among different candidate architectures. ENAS Pham et al. (2018) leverages the idea of weight sharing and searches by RL. DARTS Liu et al. (2018b) searches based on the supernet through a bi-level optimization by gradient descent. NAO Luo et al. (2018) also incorporates the idea of weight sharing. ProxylessNAS Cai et al. (2018) binarizes architecture-associated parameters to reduce the memory consumption in DARTS and can directly search on large-scale target tasks. SNAS Xie et al. (2018) trains the parameters of the supernet and the architecture distribution parameters in the same round of back-propagation. However, such a supernet requires delicate design and needs to be carefully tuned.

3 Analyzing One-shot NAS

3.1 Stability of Baselines

| Architectures | ENAS | DARTS | NAO-WS |
|---|---|---|---|
| Original | 2.89 | 2.83 | 2.93 |
| network 1 | 3.09 | 3.00 | 2.90 |
| network 2 | 3.00 | 2.82 | 2.96 |
| network 3 | 2.97 | 3.02 | 2.98 |
| network 4 | 2.79 | 2.96 | 3.02 |
| network 5 | 3.07 | 3.00 | 3.05 |

Table 1: Evaluation of the stability of three recent one-shot NAS algorithms. The first block (row "Original") shows the test performance reported by the original authors. The second block shows the test performance of architectures we obtained using the algorithms. We ran the search process 5 times and evaluated the 5 network architectures generated. All the models are trained with cutout DeVries and Taylor (2017) and the results are test error rates (%) on CIFAR-10. We follow the default settings and training procedure in the original works.

Firstly, we study the stability of three popular one-shot NAS algorithms, ENAS Pham et al. (2018), DARTS Liu et al. (2018b) and NAO Luo et al. (2018), as many one-shot NAS works are based on these three. For NAO, we use NAO-WS (NAO with weight sharing), which leverages the one-shot method. We use the official implementations of ENAS¹, DARTS² and NAO-WS³. We search and evaluate on the CIFAR-10 dataset, and adopt the default settings and hyperparameters of the authors unless stated otherwise. We ran the search process 5 times and evaluated the discovered architectures. The results are shown in Table 1.

¹ https://github.com/melodyguan/enas.git
² https://github.com/quark0/darts.git
³ https://github.com/renqianluo/NAO_pytorch.git

We can see that for all three algorithms, among the 5 network architectures we evaluated, at least one achieves the performance reported in the original paper. However, the results are unstable. ENAS achieved 2.79% in the fourth run, which is on par with the result reported in the paper (2.89%), while the other four runs got inferior results with a drop of about 0.2. DARTS achieved 2.82% in the second run, on par with the originally reported result (2.83%), but got around 3.0% in the other four runs, a drop of about 0.2. NAO-WS achieved 2.90% and 2.96% on the first and second architectures, on par with the result reported by the authors (2.93%), but got around 3.0% on the other three architectures, a drop of about 0.1. Moreover, all the results are inferior to the performance achieved by conventional NAS algorithms that do not leverage the one-shot method (e.g., AmoebaNet-B achieved 2.55%, NAONet achieved 2.48%).

In the search process, the ability of the performance of an architecture under one-shot training (denoted as one-shot performance) to predict its performance under final full training (denoted as ground-truth performance) is vital.
Further, since the algorithm is in practice supposed to learn to pick out relatively better architectures among the candidates according to their one-shot performance, the ranking correlation between candidate architectures during one-shot training and full training is the key to the algorithm. That is, if one architecture is better than another in terms of ground-truth performance, we would expect their one-shot performance to preserve the order, whatever the concrete values are. Since the search algorithms of most one-shot NAS works still rely on RL, EA or gradient-based optimization that is almost the same as in conventional NAS (e.g., NAO has both a regular version and a one-shot version, and the latter shows inferior performance), we conjecture that the challenge lies in the one-shot training mechanism.

3.2 Insufficient Optimization

First, we consider that the issue is due to insufficient optimization of the architectures, as also shown by Li and Talwalkar (2019). In one-shot training, the average training time of an individual architecture is surprisingly inadequate. Generally, an architecture should be trained for hundreds of epochs to converge in the full training pipeline to evaluate its performance. In conventional NAS, early stopping is performed to cut the high cost, but each architecture is still trained for several tens of epochs, so the performance ranking of the models is relatively stable and is predictive of their final ground-truth performance ranking. In one-shot NAS, however, the supernet is usually trained in total for about the time that training a single architecture needs. Since all the candidates are included, on average each architecture is trained for only a handful of steps.
Although, thanks to weight sharing, the parameters of an architecture can also be updated by other architectures that share the same part, the operations may play different roles in different architectures and may be affected by other ones. Therefore, architectures are still far from convergence and the optimization is insufficient. Their evaluated one-shot performance is largely biased from their ground-truth performance.

| Training Epochs | Pairwise accuracy (%) |
|---|---|
| 5 | 62 |
| 10 | 65 |
| 20 | 71 |
| 30 | 75 |
| 50 | 76 |

Table 2: Pairwise accuracy of one-shot performance and ground-truth performance under different training epochs.

To verify this, we conduct experiments to see how training time affects the ranking correlation. We randomly generate 50 different architectures and train each architecture stand-alone and completely, exactly following the default settings in Luo et al. (2018), and collect their performance (validation accuracy) on CIFAR-10. Then we train the architectures using one-shot training with weight sharing. Concretely, at each step, one architecture from the 50 architectures is sampled uniformly at random and trained. We train the architectures under one-shot training for different lengths of time (i.e., 5, 10, 20, 30 and 50 epochs respectively), and collect their performance on CIFAR-10.

In order to evaluate the ranking correlation between the architectures under one-shot training and the stand-alone trained ones, we calculate the pairwise accuracy between the one-shot performance and the stand-alone performance of the given architectures. The pairwise accuracy metric is the same as in Luo et al. (2018); it ranges from 0 to 1, where 0 means the ranking is completely reversed and 1 means it is perfectly preserved. The results are listed in Table 2.
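The pairwise accuracy described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the implementation of Luo et al. (2018): the function name `pairwise_accuracy` and the decision to skip pairs that tie in ground-truth performance are choices made here for the example, and the scores in the usage lines are made up.

```python
from itertools import combinations


def pairwise_accuracy(one_shot, ground_truth):
    """Fraction of architecture pairs ordered the same way by both score lists.

    one_shot[i] and ground_truth[i] are the two scores of architecture i.
    Returns 1.0 if every pair keeps its order and 0.0 if every pair is reversed.
    """
    if len(one_shot) != len(ground_truth):
        raise ValueError("score lists must have the same length")

    agree = 0
    total = 0
    for i, j in combinations(range(len(one_shot)), 2):
        gt_diff = ground_truth[i] - ground_truth[j]
        if gt_diff == 0:
            # Assumption: a pair with no ground-truth order is not counted.
            continue
        os_diff = one_shot[i] - one_shot[j]
        total += 1
        if os_diff * gt_diff > 0:
            agree += 1
    if total == 0:
        raise ValueError("no comparable pairs")
    return agree / total


# Hypothetical validation accuracies of three architectures.
one_shot_scores = [0.61, 0.70, 0.66]
ground_truth_scores = [0.95, 0.96, 0.97]
score = pairwise_accuracy(one_shot_scores, ground_truth_scores)
```

A value near 0.5 corresponds to a ranking that is no better than a random guess, which is the reference point used when reading Table 2.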
We can see that, when the training time is short (as is the case in practical one-shot NAS), the pairwise accuracy is 62%, which is only slightly better than random guessing, meaning that the ranking is not well preserved. The ranking of the architectures under one-shot training is weakly correlated with that of their ground-truth counterparts. As the training time increases, the average number of updates of an individual architecture increases and the pairwise accuracy increases evidently. One may think that when training for 50 epochs the pairwise accuracy seems satisfactory, and that the supernet is commonly trained for hundreds of epochs. However, we should notice that this is only a demonstrative experiment where we use only 50 architectures to train the supernet. In practice, the search algorithms always need to sample thousands of architectures (i.e., more than 10 times as many) to train Pham et al. (2018); Luo et al. (2018); Real et al. (2018), so the average training time of each individual architecture is as limited as in the 5-epoch setting here. This indicates that the short average training time and inadequate updates of individual architectures lead to insufficient optimization of individual architectures in one-shot training and bring weak ranking correlation.

3.3 Imbalanced Training of Architectures

| Model Sizes | Pairwise accuracy (%) |
|---|---|
| diverse | 62 |
| similar | 73 |

Table 3: Pairwise accuracy of one-shot performance and ground-truth performance, for architectures with similar sizes and diverse sizes.

During multiple runs of one-shot NAS (e.g., DARTS and NAO-WS), we find that they sometimes tend to produce small networks, which contain many parameter-free operations (e.g., skip connection, pooling). These architectures are roughly smaller than 2 MB. In principle, this would mean that these small architectures should perform well on the target task during final training.
However, they do not consistently perform well when fully trained; on the contrary, they generally perform worse. This is an interesting finding: during one-shot training, these inferior small architectures tend to perform better than big architectures, so they are picked out by the algorithms as good architectures.

In general, small architectures are easier to train and need fewer updates than big architectures to converge. Given the insights above on inadequate training and insufficient optimization, we conjecture that small networks are easier to optimize and tend to perform better than big networks. Big networks are then relatively less trained compared to small networks and perform worse. Consequently, the algorithm learns from the incorrect information that small networks would perform well. In conventional NAS, where each architecture is trained for tens of epochs even with early stopping, both small and big architectures are updated relatively more adequately, and their performance ranking is more stable and closer to the final ground-truth ranking.

Therefore, different architectures are imbalanced during one-shot training. To verify this and to demonstrate whether it affects the ranking correlation, we conduct experiments on architectures with diverse sizes (as in the practical case) and with similar sizes. For architectures with diverse sizes, we simply use the architectures and the results of the experiment in Table 2. Then we randomly generate another 50 architectures with similar sizes and train them using the supernet.

From the results in Table 3, we can see that when the architectures are of diverse sizes, the pairwise accuracy is low. When the architectures have similar model sizes, the pairwise accuracy increases. This indicates that in the practical case the training of architectures with different sizes is imbalanced and the ranking correlation is not well preserved.
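To make the imbalance concrete, the following sketch counts how many updates each architecture receives when one architecture is sampled per supernet step. Under uniform sampling the counts are roughly equal regardless of size, whereas weighting the draw by model size, the remedy outlined in the introduction, shifts updates toward larger architectures. The model sizes, the step budget and all function names are hypothetical and chosen only for illustration; this is not the authors' implementation.

```python
import random
from collections import Counter


def expected_steps(sizes, total_steps):
    """Expected updates per architecture when sampling in proportion to size."""
    total_size = sum(sizes)
    return [total_steps * s / total_size for s in sizes]


def simulate_updates(sizes, total_steps, proportional, seed=0):
    """Draw one architecture index per step and count updates per architecture."""
    rng = random.Random(seed)
    # Uniform sampling gives every architecture the same weight;
    # proportional sampling uses the model size as the weight.
    weights = list(sizes) if proportional else [1.0] * len(sizes)
    picks = rng.choices(range(len(sizes)), weights=weights, k=total_steps)
    counts = Counter(picks)
    return [counts.get(i, 0) for i in range(len(sizes))]


# Hypothetical model sizes (in millions of parameters) and step budget.
sizes_m = [1.0, 2.0, 4.0]
steps = 7000

uniform_counts = simulate_updates(sizes_m, steps, proportional=False)
balanced_counts = simulate_updates(sizes_m, steps, proportional=True)
target = expected_steps(sizes_m, steps)

for name, counts in (("uniform", uniform_counts), ("proportional", balanced_counts)):
    per_size = [c / s for c, s in zip(counts, sizes_m)]
    print(name, counts, "updates per unit of size:", [round(v) for v in per_size])
```

With uniform sampling the largest architecture gets the fewest updates per unit of size, while proportional sampling keeps that ratio roughly constant across architectures.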
4 Method

We have shown that the training of the architectures in common one-shot NAS is imbalanced; we therefore propose an approach to balance architectures with different sizes. We mainly build our approach on NAO Luo et al. (2018) for the following two reasons. Firstly, both ENAS and NAO perform sampling from candidate architectures at each step during the search. NAO directly samples from the architecture pool while ENAS samples from the learned controller, so the sampling procedure is easier to control in NAO. Secondly, DARTS and DARTS-like works (e.g., ProxylessNAS Cai et al. (2018), SNAS Xie et al. (2018)) combine the parameter optimization and architecture optimization together in a forward pass, which is not based on sampling architectures.

To balance the architectures, we want to encourage more updates for large architectures than for small architectures. Specifically, we expect an architecture $x_i$ to be trained for $T_i$ steps, where $T_i$ is proportional to its model size:

$$T_i \propto \mathrm{model\_size\_of}(x_i) \tag{1}$$

One naive and simple way is to calculate the size of the smallest model in the search space, denoted as $x_s$, and set a base step $T_{base}$ for it. Then for any architecture $x_i$, its number of training steps is calculated as:

$$T_i = T_{base} \times \frac{\mathrm{model\_size\_of}(x_i)}{\mathrm{model\_size\_of}(x_s)} \tag{2}$$

However, such a naive implementation may lead to an expensive cost that may exceed the search budget, and the relationship between training time and model size is not as simple as a strictly linear relationship. Therefore, we use proportional sampling to address the problem. Specifically, in NAO Luo et al. (2018), during the training of the supernet, an architecture is randomly sampled from the candidate architecture population at each step. Instead of sampling uniformly at random, we sample the architectures with a probability in proportion to their model sizes. Mathematically speaking, we sample an architecture $x_i$ from the candidate architecture pool $\mathcal{X}$ with the probability:

$$P(x_i) = \frac{\mathrm{model\_size\_of}(x_i)}{\sum_{x_j \in \mathcal{X}} \mathrm{model\_size\_of}(x_j)} \tag{3}$$

If the supernet is trained for $T_t$ steps in total, then the expectation of the training steps of architecture $x_i$ is:

$$\mathbb{E}[T_i] = T_t \times \frac{\mathrm{model\_size\_of}(x_i)}{\sum_{x_j \in \mathcal{X}} \mathrm{model\_size\_of}(x_j)} \tag{4}$$

It is proportional to the model size of $x_i$. Following this, the architectures of different sizes can be balanced during training within the supernet.

We incorporate this proportional sampling into NAO Luo et al. (2018), specifically the one-shot NAO version (i.e., NAO-WS in the original paper) which utilizes weight sharing, and propose Balanced NAO. The final detailed algorithm is shown in Alg. 1.

Algorithm 1: Balanced Neural Architecture Optimization

Input: Initial candidate architectures set $X$. Initial architectures set to be evaluated, denoted as $X_{eval} = X$. Performances of architectures $S = \emptyset$. Number of seed architectures $K$. Number of training steps of the supernet $N$. Step size $\eta$. Number of optimization iterations $L$.

1. For $l = 1, \dots, L$ do:
   1. For $i = 1, \dots, N$ do: sample an architecture $x \in X_{eval}$ using Eqn. (3), and train the supernet with the sampled architecture $x$.
   2. Evaluate the candidate architectures to obtain the dev set performances $S_{eval} = \{s_x\}, \forall x \in X_{eval}$. Enlarge $S$: $S = S \cup S_{eval}$.
   3. Train the NAO model (encoder, performance predictor and decoder) using $X$ and $S$.
   4. Pick the $K$ architectures with top-$K$ performances among $X$, forming the set of seed architectures $X_{seed}$.
   5. For $x \in X_{seed}$, obtain a better representation $e_{x'}$ from $e_x$ by applying gradient descent with step size $\eta$, based on the trained encoder and performance predictor. Denote the set of enhanced representations as $E' = \{e_{x'}\}$.
   6. Decode each $x'$ from $e_{x'}$ using the decoder, and set $X_{eval}$ as the set of new architectures decoded out: $X_{eval} = \{D(e_{x'}), \forall e_{x'} \in E'\}$. Enlarge $X$ as $X = X \cup X_{eval}$.

Output: The architecture within $X$ with the best performance.

5 Experiments

5.1 Ranking Correlation Improvement

| Training Epochs | Sampling Method | Pairwise Accuracy (%) |
|---|---|---|
| 5 | Random sampling | 62 |
| 5 | Proportional sampling | 69 |
| 10 | Random sampling | 65 |
| 10 | Proportional sampling | 72 |
| 20 | Random sampling | 71 |
| 20 | Proportional sampling | 76 |
| 30 | Random sampling | 75 |
| 30 | Proportional sampling | 77 |
| 50 | Random sampling | 76 |
| 50 | Proportional sampling | 78 |

Table 6: Pairwise accuracy of one-shot performance and ground-truth performance.

Firstly, to see whether our proposed proportional sampling improves the ranking correlation, we conduct experiments following the same setting as in Sec. 3.3. We train the 50 architectures with and without proportional sampling, and compute the pairwise accuracy, as listed in Table 6. When equipped with proportional sampling, the pairwise accuracy increases at every training budget: by 7 points at 5 and 10 epochs (more than 10% in relative terms), by 5 points at 20 epochs, and by 2 points at 30 and 50 epochs.

5.2 Stability Study

| Methods | ENAS | DARTS | NAO-WS | Balanced NAO |
|---|---|---|---|---|
| network 1 | 3.09 | 3.00 | 2.90 | 2.60 |
| network 2 | 3.00 | 2.82 | 2.96 | 2.63 |
| network 3 | 2.97 | 3.02 | 2.98 | 2.65 |
| network 4 | 2.79 | 2.96 | 3.02 | 2.71 |
| network 5 | 3.07 | 3.00 | 3.05 | 2.77 |
| mean | 2.98 | 2.96 | 2.98 | 2.67 |

Table 7: Evaluation of stability. Scores are test error rates (%) on CIFAR-10 of 5 architectures discovered. All models are trained with cutout. The first block (ENAS, DARTS, NAO-WS) lists the baseline one-shot NAS methods we compare to. The second block is our proposed Balanced NAO.

Then, as we claim stability to be a problem, we evaluate the stability of our proposed Balanced NAO and compare it to baseline methods (i.e., ENAS, DARTS, NAO-WS). As in the previous experiments, we evaluate 5 architectures discovered by the algorithms and measure the mean performance. We search and test on CIFAR-10 and show the results in Table 7.

Compared to the baseline methods, all 5 architectures perform better, with an average test error rate of 2.67% and a best test error rate of 2.60%. Notably, their error rates span only 2.60% to 2.77%, so they perform well with lower variance, leading to better stability.

5.3 Compared to Various NAS Methods

| Model | Test error (%) | Params (M) | Methods |
|---|---|---|---|
| Hier-EA Liu et al. (2018a) | 3.75 | 15.7 | EA |
| NASNet-A Zoph et al. (2018) | 2.65 | 3.3 | RL |
| AmoebaNet-B Real et al. (2018) | 2.13 | 34.9 | EA |
| NAONet Luo et al. (2018) | 2.48 | 10.6 | gradients |
| NAONet Luo et al. (2018) | 1.93 | 128 | gradients |
| ENAS Pham et al. (2018) | 2.89 | 4.6 | one-shot + RL |
| DARTS Liu et al. (2018b) | 2.83 | 3.4 | one-shot + gradients |
| NAO-WS Luo et al. (2018) | 2.90 | 2.5 | one-shot + gradients |
| SNAS Xie et al. (2018) | 2.85 | 2.9 | one-shot + gradients |
| P-DARTS Chen et al. (2019) | 2.50 | 3.4 | one-shot + gradients |
| PC-DARTS Xu et al. (2019) | 2.57 | 3.6 | one-shot + gradients |
| BayesNAS Zhou et al. (2019) | 2.81 | 3.4 | one-shot + gradients |
| ProxylessNAS Cai et al. (2018) | 2.08 | 5.7 | one-shot + gradients |
| Random Liu et al. (2018b) | 3.29 | 3.2 | random |
| Balanced NAO | 2.60 | 3.8 | one-shot + gradients |

Table 4: Test performance on the CIFAR-10 dataset of our discovered architecture and architectures found by other NAS methods, including methods with or without the one-shot method. The first block is conventional NAS without the one-shot method. The second block is one-shot NAS methods.

We then compare our proposed Balanced NAO with other NAS methods, including NAS with or without the one-shot approach, to demonstrate the improvement of our approach. Table 4 reports the test error rate and model size of several methods and of our method.

The first block lists several classical conventional NAS methods without the one-shot approach, which train each candidate architecture from scratch for several epochs. They achieve promising test error rates but require hundreds or thousands of GPU days to search, which is intractable for most researchers.

The second block lists several mainstream one-shot NAS methods, which largely reduce the cost to less than ten GPU days, but the test performance of most of these works is relatively inferior compared to conventional methods, as we claimed before.

Note that our method in the last block achieves better performance than previous baseline one-shot NAS methods (e.g., ENAS, DARTS, NAO-WS, SNAS), and is on par with conventional NAS (e.g., NASNet and NAONet) under similar model size, while the latter is costly.

5.4 Transfer to Large Scale ImageNet

| Model/Method | Top-1 (%) |
|---|---|
| MobileNetV1 Howard et al. (2017) | 70.6 |
| MobileNetV2 Sandler et al. (2018) | 72.0 |
| NASNet-A Zoph et al. (2018) | 74.0 |
| AmoebaNet-A Real et al. (2018) | 74.5 |
| MnasNet Tan et al. (2019) | 74.0 |
| NAONet Luo et al. (2018) | 74.3 |
| DARTS Liu et al. (2018b) | 73.1 |
| SNAS Xie et al. (2018) | 72.7 |
| P-DARTS (C10) Chen et al. (2019) | 75.6 |
| P-DARTS (C100) Chen et al. (2019) | 75.3 |
| Single-Path NAS Stamoulis et al. (2019) | 74.96 |
| Single Path One-shot Guo et al. (2019) | 74.7 |
| ProxylessNAS Cai et al. (2018) | 75.1 |
| PC-DARTS Xu et al. (2019) | 75.8 |
| Balanced NAO | 74.4 |

Table 5: Test performance on the ImageNet dataset under the mobile setting. The first block shows human-designed networks. The second block lists NAS works that search on CIFAR-10 or CIFAR-100 and directly transfer to ImageNet. The third block lists NAS works that directly search on ImageNet.

To further verify the transferability of our discovered architecture, we transfer it to the large-scale ImageNet dataset. All the training settings and details exactly follow DARTS Liu et al. (2018b). The results of our model and other models are listed in Table 5 for comparison.

Our model achieves a top-1 accuracy of 74.4%, which surpasses the human-designed models listed in the first block.
We mainly compare our model to the models in the second block for fair comparison, since they have roughly similar search spaces and apply the same transfer strategy from CIFAR to ImageNet. Our model surpasses many NAS methods in the second block and is on par with the best-performing work with a minor gap. It is worth noting that NASNet-A, AmoebaNet-A, MnasNet and NAONet are all discovered with conventional NAS approaches that are not one-shot methods, and their architectures are trained from scratch. Our model achieves better or almost the same performance compared to them. However, it is slightly worse than Stamoulis et al. (2019), Guo et al. (2019), Cai et al. (2018) and Xu et al. (2019) in the third block, which directly search on ImageNet with more careful design and more complex search spaces.

6 Conclusion

In this paper, we find that due to the insufficient optimization problem in one-shot NAS, architectures of different sizes are imbalanced, which introduces incorrect rewards and leads to weak ranking correlation. This further misleads the search algorithm and leads to inferior performance against conventional NAS and to weak stability. We therefore propose Balanced NAO, which balances the architectures via proportional sampling. Extensive experiments show that our proposed method brings significant improvements in both performance and stability. For future work, we would like to further investigate one-shot NAS to improve its performance.

References

Gabriel Bender, Pieter-Jan Kindermans, Barret Zoph, Vijay Vasudevan, and Quoc Le. Understanding and simplifying one-shot architecture search. In International Conference on Machine Learning, pages 549–558, 2018.

Han Cai, Ligeng Zhu, and Song Han. Proxylessnas: Direct neural architecture search on target task and hardware. arXiv preprint arXiv:1812.00332, 2018.

Xin Chen, Lingxi Xie, Jun Wu, and Qi Tian. Progressive differentiable architecture search: Bridging the depth gap between search and evaluation. arXiv preprint arXiv:1904.12760, 2019.

Xiangxiang Chu, Bo Zhang, Hailong Ma, Ruijun Xu, Jixiang Li, and Qingyuan Li. Fast, accurate and lightweight super-resolution with neural architecture search. arXiv preprint arXiv:1901.07261, 2019.

Terrance DeVries and Graham W Taylor. Improved regularization of convolutional neural networks with cutout. arXiv preprint arXiv:1708.04552, 2017.

Golnaz Ghiasi, Tsung-Yi Lin, and Quoc V Le. Nas-fpn: Learning scalable feature pyramid architecture for object detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pages 7036–7045, 2019.

Zichao Guo, Xiangyu Zhang, Haoyuan Mu, Wen Heng, Zechun Liu, Yichen Wei, and Jian Sun. Single path one-shot neural architecture search with uniform sampling. arXiv preprint arXiv:1904.00420, 2019.

Andrew G Howard, Menglong Zhu, Bo Chen, Dmitry Kalenichenko, Weijun Wang, Tobias Weyand, Marco Andreetto, and Hartwig Adam. Mobilenets: Efficient convolutional neural networks for mobile vision applications. arXiv preprint arXiv:1704.04861, 2017.

Liam Li and Ameet Talwalkar. Random search and reproducibility for neural architecture search. arXiv preprint arXiv:1902.07638, 2019.

Hanxiao Liu, Karen Simonyan, Oriol Vinyals, Chrisantha Fernando, and Koray Kavukcuoglu. Hierarchical representations for efficient architecture search. In International Conference on Learning Representations, 2018a.

Hanxiao Liu, Karen Simonyan, and Yiming Yang. Darts: Differentiable architecture search. arXiv preprint arXiv:1806.09055, 2018b.

Renqian Luo, Fei Tian, Tao Qin, and Tie-Yan Liu. Neural architecture optimization. arXiv preprint, 2018.

Hieu Pham, Melody Guan, Barret Zoph, Quoc Le, and Jeff Dean. Efficient neural architecture search via parameter sharing. In International Conference on Machine Learning, pages 4092–4101, 2018.

Esteban Real, Alok Aggarwal, Yanping Huang, and Quoc V Le. Regularized evolution for image classifier architecture search. arXiv preprint arXiv:1802.01548, 2018.

Mark Sandler, Andrew Howard, Menglong Zhu, Andrey Zhmoginov, and Liang-Chieh Chen. Mobilenetv2: Inverted residuals and linear bottlenecks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pages 4510–4520, 2018.

David So, Quoc Le, and Chen Liang. The evolved transformer. In International Conference on Machine Learning, pages 5877–5886, 2019.

Dimitrios Stamoulis, Ruizhou Ding, Di Wang, Dimitrios Lymberopoulos, Bodhi Priyantha, Jie Liu, and Diana Marculescu. Single-path nas: Designing hardware-efficient convnets in less than 4 hours. arXiv preprint arXiv:1904.02877, 2019.

Mingxing Tan, Bo Chen, Ruoming Pang, Vijay Vasudevan, Mark Sandler, Andrew Howard, and Quoc V Le. Mnasnet: Platform-aware neural architecture search for mobile. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pages 2820–2828, 2019.

Sirui Xie, Hehui Zheng, Chunxiao Liu, and Liang Lin. Snas: Stochastic neural architecture search, 2018.

Yuhui Xu, Lingxi Xie, Xiaopeng Zhang, Xin Chen, Guo-Jun Qi, Qi Tian, and Hongkai Xiong. Pc-darts: Partial channel connections for memory-efficient differentiable architecture search, 2019.

Jiahui Yu and Thomas Huang. Network slimming by slimmable networks: Towards one-shot architecture search for channel numbers. arXiv preprint, 2019.

Hongpeng Zhou, Minghao Yang, Jun Wang, and Wei Pan. Bayesnas: A bayesian approach for neural architecture search. arXiv preprint arXiv:1905.04919, 2019.

Barret Zoph and Quoc V Le. Neural architecture search with reinforcement learning. arXiv preprint, 2016.

Barret Zoph, Vijay Vasudevan, Jonathon Shlens, and Quoc V Le. Learning transferable architectures for scalable image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pages 8697–8710, 2018.
