On Adaptive Linear-Quadratic Regulators

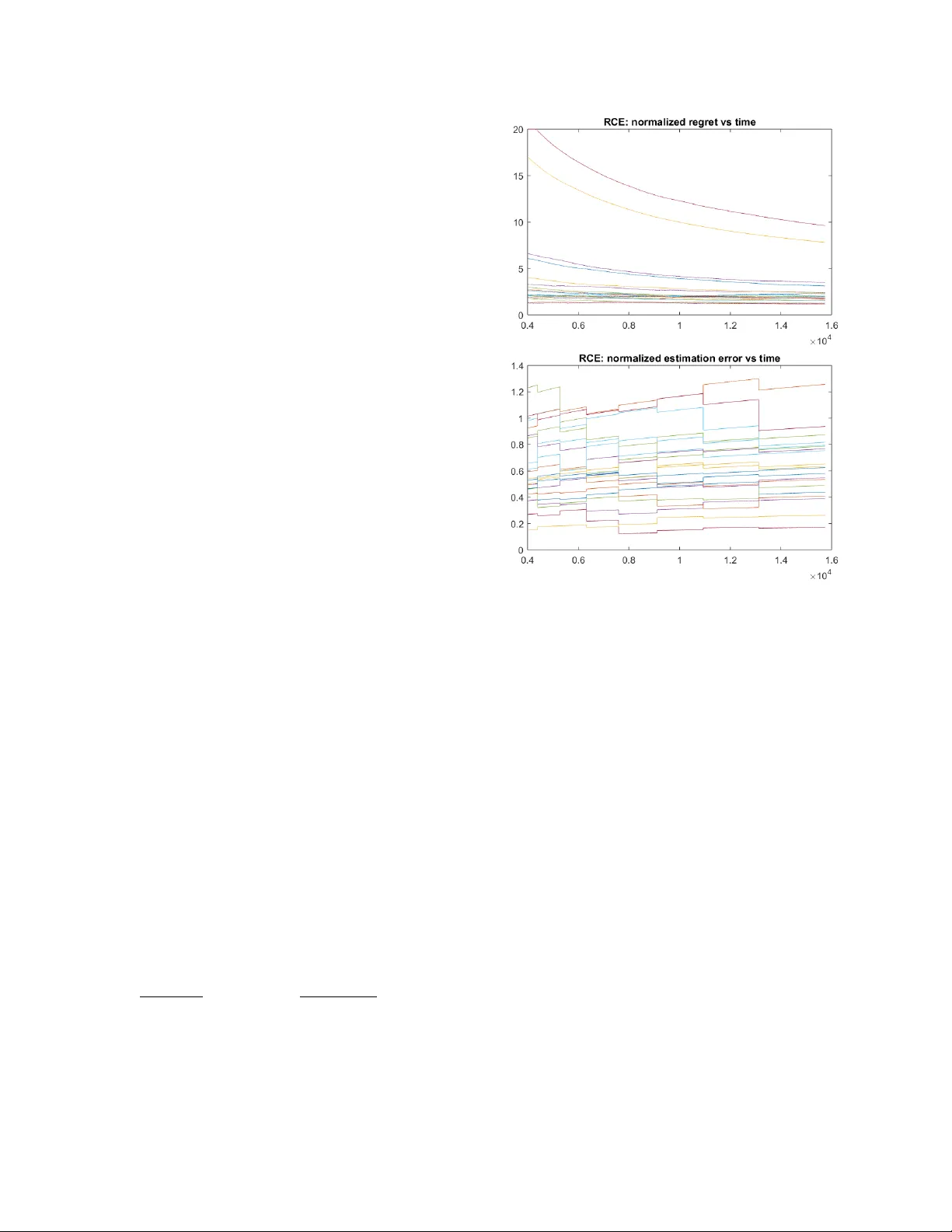

Performance of adaptive control policies is assessed through the regret with respect to the optimal regulator, which reflects the increase in the operating cost due to uncertainty about the dynamics parameters. However, available results in the liter…

Authors: Mohamad Kazem Shirani Faradonbeh, Ambuj Tewari, George Michailidis