Causal Simulation Experiments: Lessons from Bias Amplification

💡 Research Summary


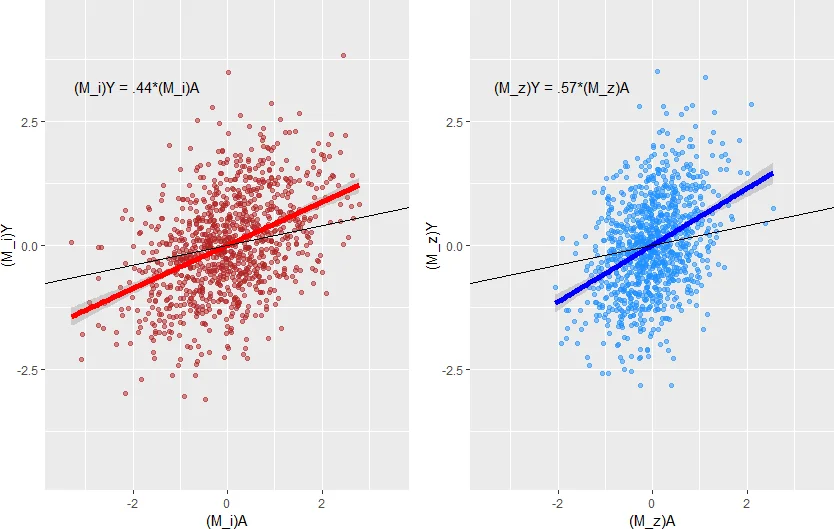

The paper tackles a subtle but important problem in causal inference: the phenomenon of bias amplification, where conditioning on certain observed variables—called bias‑amplifying variables (BAVs)—can increase, rather than decrease, bias caused by unmeasured confounding. The authors begin by formalizing a data‑generating process that includes a treatment A, an outcome Y, an unmeasured confounder U, and ten measured variables BAV₁,…,BAV₁₀. Each BAV influences both A and U but has no direct effect on Y. Consequently, there is an unblocked path A←U→Y and ten potentially blockable paths A←BAVᵢ→U→Y. Intuitively, researchers would include the BAVs to block the latter paths, yet theory and prior simulations suggest that doing so may amplify bias from the former path.
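The data‑generating process described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' simulation code: the function name `simulate_bias`, the linear Gaussian form, and every coefficient value (`tau`, `beta_u`, `gamma_u`, `gamma_bav`, `delta`) are assumptions chosen only to make the effect visible.

```python
import numpy as np

def simulate_bias(n=200_000, k=10, tau=1.0, beta_u=1.0, gamma_u=0.5,
                  gamma_bav=0.5, delta=0.1, seed=0):
    """Simulate a linear DGP with k BAVs and return (naive bias, adjusted bias).

    All coefficient values are illustrative assumptions, not the paper's.
    """
    rng = np.random.default_rng(seed)
    bav = rng.normal(size=(n, k))                       # measured BAV_1..BAV_k
    u = delta * bav.sum(axis=1) + rng.normal(size=n)    # unmeasured confounder: BAV -> U
    a = gamma_bav * bav.sum(axis=1) + gamma_u * u + rng.normal(size=n)  # BAV -> A, U -> A
    y = tau * a + beta_u * u + rng.normal(size=n)       # A -> Y, U -> Y, no direct BAV -> Y

    ones = np.ones(n)
    x_naive = np.column_stack([a, ones])                # regress Y on A alone
    x_adj = np.column_stack([a, ones, bav])             # regress Y on A and all BAVs
    tau_naive = np.linalg.lstsq(x_naive, y, rcond=None)[0][0]
    tau_adj = np.linalg.lstsq(x_adj, y, rcond=None)[0][0]
    return tau_naive - tau, tau_adj - tau

naive_bias, adjusted_bias = simulate_bias()
print(f"naive bias:    {naive_bias:.3f}")
print(f"adjusted bias: {adjusted_bias:.3f}")
```

Under these assumed coefficients the population biases work out to roughly 0.25 for the naïve regression and 0.40 for the BAV‑adjusted one, so adjusting for the BAVs makes the estimate worse even though it blocks the ten paths through BAVᵢ.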

To understand why, the authors recast ordinary least‑squares (OLS) estimators in matrix form and apply the Frisch‑Waugh‑Lovell (FWL) theorem. They derive the expectation of the naïve estimator (regressing Y on A alone) and of the estimator that also includes the BAVs. The naïve estimator’s bias is proportional to β_U γ_U σ²_U / σ²_A, while the BAV‑adjusted estimator’s bias contains an additional term −γ²_BAV σ²_BAV / (σ²_A − γ²_BAV σ²_BAV). The denominator σ²_A − γ²_BAV σ²_BAV is the residual variance of A after removing the linear contribution of the BAVs. When the BAVs explain a large share of A’s variance, this denominator shrinks toward zero and the bias term grows without bound. In other words, the more the BAVs “explain” the treatment, the larger the amplification of bias from the unmeasured confounder.
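The FWL step can be checked numerically. The sketch below, under an assumed linear Gaussian setup with made‑up coefficients (none taken from the paper), shows two things: the coefficient on A from the full regression equals the coefficient from regressing residualized Y on residualized A, and the residual variance of A is much smaller than its raw variance when the BAVs explain most of it. The helper `residualize` is a hypothetical name introduced here for illustration.

```python
import numpy as np

def residualize(v, z):
    """Return the residual of v after OLS projection onto the columns of z."""
    coef, *_ = np.linalg.lstsq(z, v, rcond=None)
    return v - z @ coef

# Assumed illustrative DGP: 10 BAVs, one unmeasured confounder u.
rng = np.random.default_rng(1)
n, k = 50_000, 10
bav = rng.normal(size=(n, k))
u = 0.1 * bav.sum(axis=1) + rng.normal(size=n)
a = 0.5 * bav.sum(axis=1) + 0.5 * u + rng.normal(size=n)
y = 1.0 * a + 1.0 * u + rng.normal(size=n)

# Full regression of Y on A, an intercept, and the BAVs.
z = np.column_stack([np.ones(n), bav])
x_full = np.column_stack([a, z])
coef_full = np.linalg.lstsq(x_full, y, rcond=None)[0][0]

# FWL: partial the intercept and BAVs out of both A and Y, then regress residuals.
a_res = residualize(a, z)
y_res = residualize(y, z)
coef_fwl = (a_res @ y_res) / (a_res @ a_res)

var_a = a.var()          # raw variance of the treatment
var_a_res = a_res.var()  # variance left after removing the BAVs' linear contribution

print(f"coefficient on A (full regression): {coef_full:.6f}")
print(f"coefficient on A (FWL residuals):   {coef_fwl:.6f}")
print(f"Var(A) = {var_a:.3f}, residual Var(A) = {var_a_res:.3f}")
```

Because the adjusted estimator divides by the residual variance of A rather than its raw variance, any confounding by U that survives the adjustment is scaled by a smaller denominator, which is the mechanism the bias expression describes.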

The authors point out that Pearl’s original derivation of bias amplification assumes a linear conditional expectation E
