An Efficient Mechanism for Computation Offloading in Mobile-Edge Computing

Mobile edge computing (MEC) is a promising technology that provides cloud and IT services within the proximity of the mobile user. With the increasing number of mobile applications, mobile devices (MD) encounter limitations of their resources, such a…

Authors: Mahla Rahati-Quchani, Saeid Abrishami, Mehdi Feizi

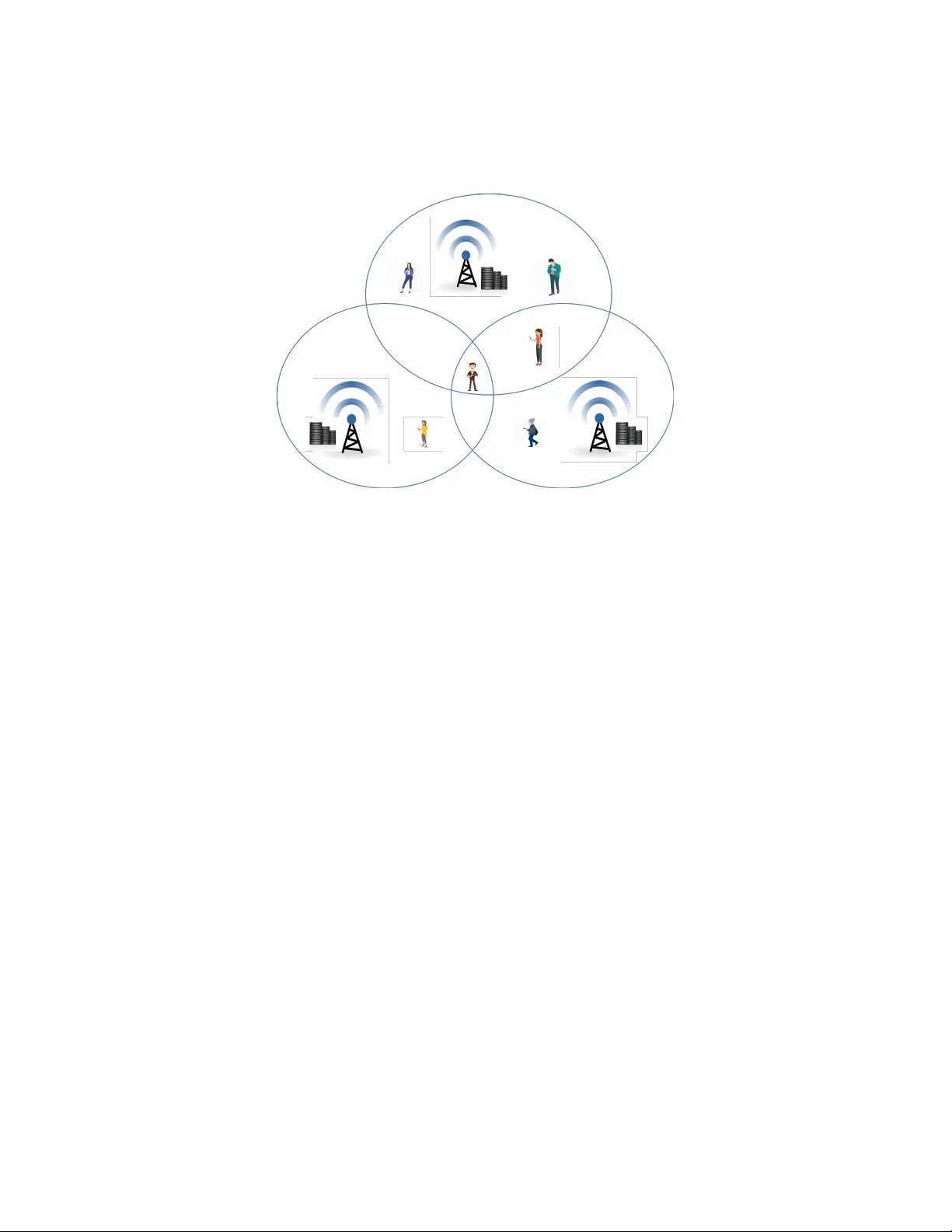

Mahla Rahati-Quchani (a,∗), Saeid Abrishami (a,∗), Mehdi Feizi (b,∗)

(a) Department of Computer Engineering, Engineering Faculty, Ferdowsi University of Mashhad, Azadi Square, Mashhad, Iran
(b) Department of Economics, Ferdowsi University of Mashhad, Azadi Square, Mashhad, Iran

Abstract

Mobile edge computing (MEC) is a promising technology that provides cloud and IT services within the proximity of the mobile user. With the increasing number of mobile applications, mobile devices (MD) encounter limitations of their resources, such as battery life and computation capacity. Computation offloading in MEC can help mobile users to reduce battery usage and speed up task execution. Although there are many solutions for offloading in MEC, most employ only one MEC server for improving mobile device energy consumption and execution time. Instead of conventional centralized optimization methods, the current paper considers a decentralized optimization mechanism between MEC servers and users. In particular, an assignment mechanism called school choice is employed to assist heterogeneous users in selecting different MEC operators in a distributed environment. With this mechanism, each user can benefit from minimizing the price and energy consumption of executing tasks while also meeting the specified deadline. The present research has designed an efficient mechanism for a computation offloading scheme that achieves minimal price and energy consumption under latency constraints. Numerical results demonstrate that the proposed algorithm can attain efficient and successful computation offloading.
∗ Corresponding author
Email addresses: mahla.rahati@mail.um.ac.ir (Mahla Rahati-Quchani), s-abrishami@um.ac.ir (Saeid Abrishami), feizi@um.ac.ir (Mehdi Feizi)

Preprint submitted to Journal of LaTeX Templates, March 10, 2020

Keywords: Mobile edge computing, Resource allocation, Efficient computation offloading, Assignment mechanism, School choice

1. Introduction

Nowadays, mobile devices face some restrictions due to the rapid development of mobile applications, such as those for face recognition, natural language processing, interactive gaming, and augmented reality. These applications are usually latency sensitive, computationally intensive, and high in energy consumption, such that MDs with limited battery and computational resources can hardly support such programs. The European Telecommunications Standards Institute (ETSI) has introduced mobile edge computing as a new standardization group. The purpose is to provide information technology and cloud computing capabilities in the proximity of mobile users. In this way, users are offered a service environment characterized by proximity, low latency, high rate access, and sufficient resources that combine edge computing, mobile devices, and a wireless network. As a result, users can take advantage of more intelligent applications [1].

The field of computation offloading research addresses the sending of tasks to MEC servers, which can be a small data center in the user's area created by the telecom operator. More exactly, with less than one-millisecond standard latency, MEC and 5G facilitate the usage of cloud resources in the proximity of MDs and so can effectively support delay-sensitive applications. In comparison to MDs, the mobile edge offers many significant advantages, including servers providing more computational resources, which enable applications to run faster and more efficiently.
Connected with one hop, MEC servers consume less energy and time than a cloud in the sending and receiving of application data. In a distributed geographic area, disparate operators have several servers with different and limited computational resources. These may serve many MDs with endless sequences of computational tasks, various application characteristics, and varied communication requirements. Therefore, multiple heterogeneous MDs compete for numerous heterogeneous MEC resources [2].

Computation offloading in MEC is an essential technique to allow users to access computational capabilities at the network edge. Each user can decide to offload a computational task instead of running it locally. Since the primary purpose of offloading is to reduce the energy consumption and execution time, most previous works have considered these two parameters [3, 4, 5, 6, 7, 8, 9, 10, 11, 12, 13, 14, 15, 16, 17, 18]. However, for MEC operators, the usage of resources is costly. As a result, more recent works have focused on the monetary revenue of operators [19, 20, 21, 22, 23]. Few researchers have considered all of these parameters at the same time [24, 25, 26].

Numerous studies have solved the computation offloading problem from the standpoint of one single service operator, thus indicating that the authors employed only one MEC with a centralized manager [4, 3, 8, 27]. However, the most recent works have investigated the possibility of multiple MECs within the user area. With its significant overhead and complexity, a centralized controller for multi-MEC environments is less applicable. As a result, most recent works have concentrated on decentralized methods [28, 29, 30, 31]. Game theory has been one of the most powerful tools employed to tackle this problem [32, 33, 34, 29, 23, 35, 2, 36, 37, 38, 28]. This is a useful framework to analyze interactions among independent MDs acting in their interests.
Users can make offloading decisions for their benefit and play the game until reaching a stable state (i.e., Nash equilibrium). Without a central authority, such decisions can be reached based on local information about the system and environment conditions, thus avoiding the need for a central controller to collect information from a massive number of mobile devices.

In mechanism design, a market maker generally assumes the preferences of supposedly rational agents seeking to promote their own benefit and matches each of them with another agent or object. However, depending on which characteristics of the final match the market maker is interested in, he or she might choose different matching mechanisms. Most previous works on MEC mechanisms have concentrated on the stability of the final matching as its desired characteristic, at the expense of efficiency [25, 39, 26]. Even so, efficiency plays a critical role in MEC because of the necessity for quick responses. In our specific case, we are looking for a matching mechanism which guarantees efficiency.

Furthermore, matching theory considers both sides, i.e., MECs and MDs, as agents with preferences. In this paper, MDs may prefer cheaper and lower-latency MECs, whereas the MECs prioritize users based on distance. The present study holds that MECs have no preferences over MDs, even though MECs give a higher priority to MDs nearer to them. Consequently, MECs can be considered as objects, and the current work may utilize the concept of an assignment mechanism instead of matching. In the assignment mechanism, there are two sets, agents and objects, which should be assigned to each other. The difference between these two sets is that agents have preferences over objects, while objects only have priorities over agents [40]. MECs do not have preferences over MDs, but they nevertheless rank them.
To differentiate these rankings over MDs from the concept of preferences, we will call such rankings priorities or priority lists. In real life, those priorities are often the outcome of technical constraints. For instance, MDs that are located close to a MEC have a higher priority for connecting to that MEC than the MDs that are farther away. One may think that a MEC "prefers" MDs that are closer, although it is more accurate to say that closer MDs have a higher priority than those farther away.

To the best of our knowledge, this work is the first attempt to employ one of the well-known assignment mechanisms, the school choice, to tackle the problem of computation offloading in a multi-user and multi-MEC environment. An efficient but unstable algorithm is used to meet the low latency requirements. The proposed algorithm aims to minimize the price and energy consumption of the user task while meeting its deadline. In comparison with energy-based offloading, price-based offloading, and the heuristic offloading decision algorithm (HODA) proposed in [41], simulation results show that the proposed algorithm outperforms these in terms of minimizing the price and energy consumption of the user task and increasing the percentage of users that offload successfully.

The rest of the paper is organized as follows. Section II presents related works on computation offloading technologies in MEC. Section III introduces the system model of this scheme. Section IV describes the school choice for the computation offloading algorithm. Section V discusses the simulation results and, finally, Section VI concludes the work.

2. Related Works

The objective of the current research is to study the MEC offloading problem. A set of studies have already focused on how to optimize MEC task offloading from different perspectives. In fact, there are plenty of works in the area of computation offloading in MEC environments.
In terms of architecture, most early studies consider single-user and single-MEC environments [7, 6, 4, 5, 3]. However, most recent works study multi-user and multi-MEC environments [16, 28, 29]. In terms of method, these studies are divided into two categories, namely centralized offloading and decentralized offloading approaches.

2.1. Centralized Offloading Approaches

In the centralized method, all information must be sent to a controller which makes the decisions. From the start, most works have focused on single-user and simple scenarios [42, 14, 43], but these approaches are not practical in a real world with multiple users.

Zhang et al. explored a new multi-user scenario with one MEC. To minimize energy consumption, they formulated an optimization problem in which the energy costs of both task computing and file transmission are taken into consideration [3]. Zhao et al. designed another multi-user, single-MEC scheme that jointly optimizes the offloading selection, radio resource allocation, and computational resource allocation [4].

Because MEC servers are limited, many users cannot offload in each time slot. Tang et al. therefore addressed a scenario that maximizes the number of offloaded tasks in MEC. They analyzed and solved the partial offloaded task number maximization problem by using the block coordinate descent (BCD) method. They also applied their method to an unmanned aerial vehicle (UAV)-enabled MEC system. Because the optimization problem for the UAV-enabled MEC system is solved in the same way as in the first method, their partial offloading strategy can be seamlessly adapted to such a system [44].

You et al. in [8] proposed a scenario in which mobile users have different computation workloads and local computation capacities. In addition, they formulated a convex optimization problem with partial offloading to minimize the sum of mobile energy consumption.
Their key finding was that the optimal policy for controlling offloading data size and time allocation has a simple threshold-based structure. Besides, this study assumed that the edge has perfect knowledge of the local computing energy consumption, channel gains, and fairness factors of all mobile users. This information is utilized for the design of a centralized resource allocation to achieve the minimum weighted sum of mobile energy consumption. This result was also extended to OFDMA-based MEC systems, which offer orthogonal frequency-division multiple access for devising a near-optimal computation offloading policy.

To minimize the latency of all devices under limited communication and computation resource constraints, Ren et al. designed a multi-user video compression offloading scheme. They studied and compared three models: local compression, edge cloud compression, and partial compression offloading. For the local compression model, a convex optimization problem was formulated to minimize the weighted-sum delay of all devices under the communication resource constraint. They considered that massive online monitoring data should be transmitted and analyzed by a central unit. For the edge cloud compression model, this work analyzed the task completion process by modeling a joint resource allocation problem with the constraints of both communication and computation resources. For the partial compression offloading model, they first devised a piecewise optimization problem and then derived an optimal data segmentation strategy in a piecewise structure. Finally, numerical results demonstrated that partial compression offloading can reduce end-to-end latency more efficiently than the two other models [27].

A centralized computational offloading model may be challenging to run when massive offloading information is received in real time.
As errors during the data gathering step may produce inefficient results, the local mode is more reliable and accurate than centralized solutions. Therefore, in many cases, the results of distributed approaches are more robust than those of centralized solutions. Due to the computational complexity of the scenario and the large amount of data from independent MDs, computation offloading for multi-user and multi-MEC systems poses a great challenge in a centralized environment [45].

2.2. Decentralized Offloading Approach

With the development of MEC, data traffic has rapidly grown in recent years. In response to this, offloading for MDs has been considered as an optimal solution. Traditional centralized offloading approaches cannot meet the requirements of emerging interactive programs for long-term communications. Consequently, the distributed computation model is preferred. Especially in a multi-server scenario, information gathering and decision-making do not require a central controller and each user can choose to offload as desired. Furthermore, distributed computation offloading models are flexible and scalable.

2.2.1. Heuristic methods

Some practical studies have investigated the task offloading problem in order to minimize execution time and energy consumption. For the distributed computation offloading model, Ugwuanyi et al. [16] proposed a resource provisioning algorithm based on the Banker's algorithm. This was a way to provide higher reliability of network interactions that utilize software-defined networking for the reduction of communication overhead. Because edge nodes have a finite amount of resources, this study attempted to avoid a deadlock situation. Furthermore, since a deadlock may occur due to the considerable number of devices contending for a limited amount of resources, they also considered overdemand and delays while provisioning resources.

Tran et al.
proposed an algorithm for a multi-MEC and multi-user scenario which minimizes the latency and energy consumption by optimizing the task offloading decision, uplink transmission power, and computational resource allocation. However, their algorithm does not guarantee the latency requirements for all users [13].

The heuristic offloading decision algorithm (HODA) proposed in [41] is semi-distributed and runs in two stages. In the first stage, each mobile user independently optimizes the transmission power and determines whether to send an offloading request. In the second stage, the macro cell locally forms an optimal offloading set by prioritizing users according to the maximum utility. Finally, the selected mobile users offload their computation tasks. In this paper, the resource providers can either increase the capability of the MEC computing center or deploy multiple computing centers. In the case of multiple computing centers, the mobile users can apply offloading policies that make offloading decisions among multiple providers independently and send offloading requests to the selected computing center.

2.2.2. Game theory

Recent advances in technology and the ever-increasing demand for computing and communications have generated an urgent need for a new analytical framework to address the present and future technical challenges of wireless and communication networks. As a result, game theory has been recognized in recent years as a central tool for the design of wireless networks and future communications. Game theory arose out of the necessity to combine the rules and techniques of decision-making for future generations of wireless and communication nodes. Thus, depending on their requirements for various services, users can effectively communicate, for example, by video streaming over mobile networks. In brief, game theory offers excellent benefits for future wireless networks, such as decision making based on local information and distributed implementation, robust results, appropriate approaches for solving problems of a combinatorial nature, and rich mathematical and analytical tools for optimization [46]. Lately, game theory has been widely employed as a powerful tool for the distributed computation offloading approach among multiple MDs with varied interests.

In this context, Yi et al. studied joint computation offloading and wireless transmission scheduling for delay-sensitive applications in a single-MEC scenario. In this model, there is a base station with multiple channels and multiple users who send a request to each channel. If there is more than one request for one channel, a queuing model is required for dynamic management of the computation offloading and transmission scheduling for mobile edge computing. This study formulated a novel mechanism, named MOTM, to jointly decide computation offloading, transmission scheduling, and a pricing rule [36]. Nevertheless, single-MEC is a simple scenario, and there are currently several servers within a user area from which to choose.

With a multi-user and multi-MEC scenario in mind, Yang et al. designed a potential game between multiple users and multiple MECs for a distributed computation offloading approach. They addressed the problem of minimizing the total overhead in terms of latency and energy consumption, with weighting parameters for the factors that affect total system overhead: latency, interference, and energy consumption [28].

For multi-server and multi-user networks, Dinh et al. in [29] proposed a reinforcement learning offloading mechanism (Q-learning) to achieve long-term utilities.
They modeled a theoretical game framework in which MDs select their targeted edge nodes and the transmission power to maximize their processed CPU cycles in each time slot, while also saving on energy consumption. Unlike existing works requiring predefined stochastic dynamics of channels for learning strategies, this study adopted a model-free reinforcement learning mechanism to design an offloading policy for players.

2.2.3. Mechanism design

Mechanism design has some distinctive features. For example, a game designer chooses the game structure rather than inheriting one. Therefore, mechanism design is often called "reverse game theory." Also, the designer is interested in the game's outcome [46]. In computational offloading problems, mechanism design has two important fields: auctions and matching theory.

Some works only focus on the user's side to reduce the running time and energy consumption of the user device for offloading. However, other studies concentrate on the financial aspects of the operator's side, since the use of resources incurs a cost for operators in terms of power consumption or other expenditures. Some authors, such as [30, 31, 24], have solved the issue by an auction.

Bahreini et al. in [30] proposed an envy-free auction mechanism for resource allocation in the MEC and cloud, in which users place bids for using a certain amount of resources. In this work, welfare was maximized when servers with different capacities in the edge or cloud and heterogeneous users competed for resources. The authors demonstrated that the proposed mechanism was individually rational and returned envy-free allocations. Sun et al. also formulated their problem as an auction-based mechanism to address the computing resource allocation issue.
They explored two double auction schemes with dynamic pricing in the MEC: a breakeven-based double auction (BDA) and a more efficient dynamic pricing-based double auction (DPDA). Under locality constraints, the schemes determined the matched pairs between MDs and edge servers, as well as the pricing mechanisms for high system efficiency [31].

Considering heterogeneous requests, Zhang et al. in [24] formulated the matching problem between MEC service providers (SPs) and MDs as a combinatorial auction-based service provider selection with limited wireless and computational resources. They modeled the matching relationship between MECs and MDs as a commodity trading process, for which they offered a multi-round sealed sequential combinatorial auction (MSSCA) mechanism to match the SPs to MDs. In a one-round auction, the bidder cannot decide from which seller to buy, and there must also be a central controller or coordination among sellers. This was not practical for that study's network of multiple SPs and MDs without a centralized controller. As a result, the multi-round auction was applied to their auction design.

Some other works have utilized a matching mechanism to solve computational offloading problems [25, 39, 26]. In their paper [25], Gu et al. proposed a matching model called the Student Project Allocation (SPA), by which various students are assigned different projects belonging to different lecturers. In fog computing, they modeled the resource allocation problem as the SPA game, in which lecturers propose projects and students request these projects. Similarly, SPs offered available radio and CPU resource bundles, while users requested acceptable resource bundles from the SPs. SPs based their decisions on the revenue that could be generated from user requests for resource bundles.
In order to minimize the total computation overhead in terms of execution time and energy consumption, Pham et al. in [39] considered an optimization problem for jointly determining the computation offloading decision and allocating the offloading power at MEC servers. The computation offloading decision problem was divided into two subproblems: choosing between MEC servers and deciding on subchannels. To solve them, they employed the stable marriage problem, formulated as two matching games, each with two sets of matching players. The first, a many-to-one matching game, matched users with servers, and the second, a one-to-one matching game, matched users with subchannel pairs.

Recently, Gu et al. in [26] have also studied offloading in the MEC with multiple users and multiple MECs and proposed a decentralized task offloading strategy between MDs and MEC servers. The task offloading problem was formulated as a one-to-many matching game to reduce overall energy consumption and terminated in a stable matching between tasks and nodes. These authors focused on the three parameters of energy consumption, execution time, and price. Also, they considered mobile devices with excess computational capability and MEC servers as edge nodes. Moreover, the total edge energy consumption for task execution was the sum of the transmission energy consumption and the computation energy in the MEC.

Figure 1: System architecture

In summary, for the multi-user and multi-MEC scenario, most existing works do not provide an efficient approach that reduces energy consumption and price under latency constraints. In contrast, the current work considers the distance of users and the benefits of the users when users and MEC operators want to select each other.
In the scenario developed by the present study, once MEC servers have performed user tasks before the deadline and so have saved energy, they can earn money in exchange for providing resources to closer users. This scenario is efficient for multiple users and multiple MECs: users can select among operators with different prices in a decentralized environment to achieve minimal energy consumption and price under latency constraints.

3. System Model

This section describes the system model adopted by the present study. As shown in Fig. 1, a network is assumed with multiple MDs, denoted as $U = \{u_1, \dots, u_n, \dots, u_N\}$, each with a finite amount of money. Each MD $i$ has a different computational capability $f_i^{local}$ and a computationally intensive, delay-sensitive task with its own workload and deadline, which the user wants to finish before the deadline. The tasks include augmented reality, a health monitoring application, and an infotainment application, all of which differ in some properties, such as data upload or download. For the heterogeneous computing task $t_i$, some properties are defined as $t_i = (d_i, b_i, T_i^{max})$, in which $d_i$ (in MI) is the amount of computing resource required for task $t_i$ and $b_i$ (in bits) is the data size of computation task $t_i$, that is, the amount of data content (for example, the processing code and parameters). Also, the task is not divisible and must be completed before deadline $T_i^{max}$. It should be noted that these properties are inherent parameters of the application and will not change according to where the application is processed.

In different areas, it is assumed that the edge computing system consists of multiple MEC servers, denoted as $S = \{s_1, \dots, s_m, \dots, s_M\}$.
In the current work, each MEC server $j$ has one host with a different, limited computational capability, measured in million instructions per second (MIPS), that is shared among users. For each MEC server $j$, $f_j^{max}$ indicates the maximum computational capability shared among users and $q_j$ denotes the maximum number of users which the MEC server can enroll. Many articles, such as [39], have assumed that MEC servers have limited resources divided equally among users. However, equal allocation of resources is not appropriate because the user requirement may be more or less than the amount allocated. In this scenario, many MEC servers are placed in the user location, and users must decide which server is the best for offloading so as to complete their tasks successfully under their differing deadlines.

$\lambda_j$ is the unit price per CPU cycle to be paid by MD $i$ for the computation capability provided by MEC $j$. The present work assumes that everyone should pay the selected operator based on their consumption. Therefore, the user price is determined by $\lambda_j d_i$. This price does not affect the selection by MEC servers because these servers initially serve closer users. Operators decide whether or not to select MD $i$ for their servers according to the server resource capacity $f_j^{max}$ and the distance to MD $i$. Besides, the offloading decision for each computational task $t_i$ is denoted by $x_{ij}$, which takes the value zero or one. If MD $i$ is chosen to offload a task to MEC server $j$, then $x_{ij}$ is equal to one; otherwise $x_{ij}$ is zero, indicating that task $t_i$ is executed locally. A quasi-static scenario is considered, in which the set of users remains unchanged during a computation offloading period (e.g., several hundred milliseconds), even though this may change across different periods [32].
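The entities introduced in this section can be sketched as plain data types. This is only an illustrative sketch: the class and field names (`Task`, `MecServer`, `workload_mi`, and so on) and the numbers are assumptions made here, not part of the paper; only the symbols in the comments come from the text.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Task:
    """Task t_i = (d_i, b_i, T_i^max) of mobile device i."""
    workload_mi: float   # d_i, required computation in MI
    data_bits: float     # b_i, input data size in bits
    deadline_s: float    # T_i^max, deadline in seconds


@dataclass(frozen=True)
class MecServer:
    """MEC server j with capacity f_j^max, quota q_j and unit price lambda_j."""
    f_max_mips: float    # f_j^max, capability shared among users
    quota: int           # q_j, maximum number of enrolled users
    unit_price: float    # lambda_j, price per unit of workload


def price(task: Task, server: MecServer) -> float:
    """Price paid by the MD to the selected operator: lambda_j * d_i."""
    return server.unit_price * task.workload_mi


def decision_matrix(assignment: List[Optional[int]], num_servers: int) -> List[List[int]]:
    """Expand an assignment (server index, or None for local execution) into x_ij."""
    return [[1 if chosen == j else 0 for j in range(num_servers)]
            for chosen in assignment]


# Illustrative values only (hypothetical, not taken from the paper).
task = Task(workload_mi=1000.0, data_bits=4e6, deadline_s=4.0)
server = MecServer(f_max_mips=2000.0, quota=2, unit_price=0.5)
print(price(task, server))               # 500.0
print(decision_matrix([1, None, 0], 2))  # [[0, 1], [0, 0], [1, 0]]
```

Storing the decision as one optional server index per MD means that no MD can be assigned to more than one server, so every row of $x_{ij}$ has at most a single one by construction.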
Since both communication and computation play a key role in mobile edge computing, the next section discusses communication and computation models in detail.

3.1. Communication Model

Let $H_{ij}$ be the channel gain between MD $i$ and MEC server $j$, and let $P_i$ denote the transmission power of MD $i$. According to the Shannon-Hartley formula, the achievable rate of MD $i$ can then be obtained as follows:

$$r_i = B_{ij} \log_2\left(1 + \frac{P_i H_{ij}}{\sigma^2}\right) \tag{1}$$

where $\sigma^2$ denotes the noise power and $B_{ij}$ is the bandwidth allocated to MD $i$ on the channel of MEC $j$. The total bandwidth $B_j$ should be equally divided among the MDs, such that each MD $i$ can obtain a non-overlapping frequency to simultaneously offload its data to the edge [21], [38], [27]. In this paper, the downlink transmission delay is not considered because the size of the results is often smaller than the input data and the downlink rate from the MEC server $j$ to the MD $i$ is higher than the uplink rate from the MD $i$ to the MEC server $j$ [6], [42].

3.2. Computation Model

The following discusses the computation overhead in terms of both energy consumption and processing time for both local computing and edge computing approaches.

3.2.1. Local Computing

For execution of local tasks, data need not be transmitted, but computation energy and processing time should be considered. Following [28], $\varepsilon_i^{local}$ denotes the energy consumption coefficient per CPU cycle of MD $i$. Thus, the computational energy in local computing is:

$$E_i^{local} = \varepsilon_i^{local} \, d_i \tag{2}$$

The local processing time $T_i^{local}$ is computed as:

$$T_i^{local} = \frac{d_i}{f_i^{local}} \tag{3}$$

3.2.2. Edge Computing

In the offloading mode, when the MEC remotely carries out the task, the MD is idle. However, an extra cost is necessary to send the input data for calculation.
The transmission time $T_i^{\mathrm{trans}}$ and the transmission energy $E_i^{\mathrm{trans}}$ of MD $i$ in edge computing are

$$T_i^{\mathrm{trans}} = \frac{b_i}{r_i} \tag{4}$$

$$E_i^{\mathrm{trans}} = \frac{b_i P_i}{r_i} \tag{5}$$

The processing time of a task on a MEC server is

$$T_i^{\mathrm{exe}} = \frac{d_i}{f_{ij}} \tag{6}$$

Thus, $T_i = T_i^{\mathrm{exe}} + T_i^{\mathrm{trans}}$ in the edge computing mode and $T_i = T_i^{\mathrm{local}}$ in the local computing mode. Each MD $i$, located at distance $D_{ij}$ from MEC server $j$, determines the amount of resources it needs, denoted by $f_{ij}$, from its deadline, workload, and transmission time. Each task $t_i$ has its own deadline and workload. Consequently, $f_{ij}$ is calculated from the transmission time $T_i^{\mathrm{trans}}$, the deadline of the offloaded task $T_i^{\max}$, and the workload of the task $d_i$:

$$f_{ij} = \frac{d_i}{T_i^{\max} - T_i^{\mathrm{trans}}} \tag{7}$$

Based on this information, MD $i$ determines the required resource $f_{ij}$ on MEC server $j$. For each MD $i$, two parameters are involved in the selection of servers: the energy consumption and the price charged by the MEC. To prioritize the servers, each user $i$ therefore has two parameters, $\alpha_i$ and $\beta_i$, which indicate the importance of the price and of the energy consumption to that user, respectively.

3.3. Problem Formulation

The main goal is to minimize the energy consumption and the price, given the task features and the resources of the servers available to the user. Based on the user's decision, the total energy consumed by each task $t_i$ is

$$E_i = x_{ij} E_i^{\mathrm{trans}} + (1 - x_{ij}) E_i^{\mathrm{local}} \tag{8}$$

To minimize the energy consumption of the task execution and the price, the utility function is

$$U_i = \alpha_i \lambda_j d_i + \beta_i E_i \tag{9}$$

The optimization problem can then be formulated as follows:

$$
\begin{aligned}
\min \ & \sum_{i=1}^{N} U_i \\
\text{s.t.} \quad
& C1: \ T_i \le T_i^{\max}, && \forall i \in N \\
& C2: \ \sum_{i=1}^{N} x_{ij} \le q_j, && \forall j \in M \\
& C3: \ f_{ij} > 0, && \forall i \in N, \ \forall j \in M \\
& C4: \ x_{ij} \in \{0, 1\}, && \forall i \in N, \ \forall j \in M \\
& C5: \ \sum_{i=1}^{N} f_{ij} \le f_j^{\max}, && \forall j \in M \\
& C6: \ \sum_{j=1}^{M} x_{ij} \le 1, && \forall i \in N
\end{aligned}
\tag{10}
$$

Here, the total MD energy consumption in local or edge execution must be minimized. Constraint C1 ensures that tasks are completed before their deadlines. Constraint C2 specifies that the number of users offloading their tasks to a MEC server does not exceed the capacity of that server. Constraints C3 and C4 ensure that the computational capability allocated to each task $t_i$ is greater than zero and that the MD's decision has two modes: local execution and edge execution. Constraint C5 states that the total capacity allocated to the MDs does not exceed the capacity of the MEC server, since each server is limited to $f_j^{\max}$ resources. Constraint C6 specifies that an MD can offload to at most one MEC server. Under these constraints, the objective function based on variable $x_{ij}$ aims to reduce the energy of task offloading. If $n$ tasks exist, then the edge and local processes have $2^{n+1}$ choices. The binary variable $x_{ij}$ turns the optimization problem into a mixed integer programming problem, which is non-convex and NP-hard [41, 12, 15]. In consideration of the problem conditions and of factors such as the deadline, energy consumption, distance of MDs, and the users' lack of awareness of others' decisions, the problem is solved via the school choice.

Figure 2: School choice

4. Design of School Choice

In the following, a new multi-user and multi-MEC scenario is presented in which MDs have different requirements. MDs offload their tasks to MEC servers while taking the energy consumption and the price into account.
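To make the per-server cost that an MD weighs more concrete, the following minimal Python sketch evaluates Eqs. (4), (5), (7), (8), and (9) for a single MD and a single server. It is an illustration under stated assumptions, not the authors' implementation: the function names are hypothetical, $\lambda_j$ is interpreted here as the price per unit of workload charged by server $j$, and returning `None` when the deadline leaves no time for execution is a convention chosen for this sketch to reflect constraint C3.

```python
def transmission_time(input_bits, rate_bps):
    """Eq. (4): T_trans = b_i / r_i."""
    return input_bits / rate_bps


def transmission_energy(input_bits, tx_power_w, rate_bps):
    """Eq. (5): E_trans = b_i * P_i / r_i."""
    return input_bits * tx_power_w / rate_bps


def required_cpu(workload_cycles, deadline_s, t_trans_s):
    """Eq. (7): f_ij = d_i / (T_max - T_trans).

    Returns None when the transmission alone uses up the deadline, so that
    no positive f_ij exists (assumed convention mirroring constraint C3).
    """
    slack = deadline_s - t_trans_s
    if slack <= 0:
        return None
    return workload_cycles / slack


def task_energy(x_ij, e_trans, e_local):
    """Eq. (8): energy of the task for decision x_ij in {0, 1}."""
    return x_ij * e_trans + (1 - x_ij) * e_local


def utility(alpha, beta, unit_price, workload_cycles, energy):
    """Eq. (9): U_i = alpha_i * lambda_j * d_i + beta_i * E_i."""
    return alpha * unit_price * workload_cycles + beta * energy
```

An MD could call these functions once per reachable server and discard every server for which `required_cpu` returns `None` before ranking the rest.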
In a distributed manner, MDs determine task deadlines and workloads, whereas the available MEC servers broadcast their resources to the heterogeneous MDs in their area. MDs then find the available servers and send a request to the best MEC server based on price and energy consumption, while each MEC server $j$ has a priority order over users. This process continues until all users are assigned or all servers have consumed their resources. The contribution of the present paper is to minimize the energy consumption and price of task execution while considering the task deadline and the limited capacity of MEC servers. The problem is formulated as a mixed integer programming problem, which is non-convex and NP-hard. Therefore, the optimization problem is modeled as a school choice.

4.1. What Is the School Choice

An important application of mechanism design is the school choice [47]. Students are assigned to a number of schools, each of which has a certain capacity and a strict priority order over all students. In this model, schools do not have preferences. In other words, schools do not care which students they register. However, there are specific priorities, such as the distance to the school or a sibling enrolled in the same school. Each student has strict preferences over all schools and an outside option (e.g., attending a private school or being homeschooled). The school choice is an assignment problem with agents and objects that assigns each student to a school or to his/her outside option while respecting the schools' capacities and priorities.

4.2. Modeling the Problem as a School Choice

The current study uses the school choice to model the optimization problem, in which MEC operators with resources (modeled as schools) aim to select MDs with better benefits (modeled as students). Accordingly, a decentralized approach is investigated to determine the offloading decision of MDs.
With the proposed computation offloading scheme, (i) MDs decide to offload their computation tasks only if offloading to the MEC server satisfies them; (ii) MEC operators choose MDs that they can support; (iii) MDs and servers make their offloading decisions in a distributed manner; and (iv) the number of successfully offloaded tasks increases.

As shown in Fig. 2, school choice is a many-to-one assignment in which a set of students is mapped to a set of schools according to the schools' capacities and the students' preferences. Assigning MDs to MEC servers resembles the problem of school selection by students: many students are assigned to schools with limited capacity, and schools are unable to enroll more students than their capacity. Similarly, many MDs use MEC resources, and MEC servers have a finite amount of resources. Within the MDs' area, servers are located at different distances from MDs and may belong to different operators. Depending on the limitation of their computational resources, the operators can only cover a specified number of MDs.

The school choice consists of players that are agents and objects. In the current paper, MEC servers more closely resemble objects than agents, since they offer a service to be used. Therefore, in the present context, MEC servers are assumed to be objects and have a priority order over MDs. MDs are selfish and determine task deadlines and workloads. Moreover, MDs know nothing about other MDs. As a result, as agents, MDs must make the best decisions for offloading and selecting operators. On the other hand, each MEC server considers the interactions among multiple MDs and may either accept or reject MDs based on priorities.
In this situation, the school choice is used to deal with task offloading in multi-user and multi-MEC server scenarios, because the short deadline and the speed and efficiency of offloading are more important than stability. Furthermore, MEC servers have no preference over MDs. In the proposed method, the school choice includes the properties specified below [40]:

• A set of MDs $U = \{u_1, u_2, \ldots, u_N\}$.
• A set of MEC servers $S = \{s_1, s_2, \ldots, s_M\}$.
• A capacity vector $q = (q_1, \ldots, q_M)$, which specifies, for each MEC server $j$, the maximum number of MDs that the MEC server can enroll.
• A profile of strict MD preferences $P = (P_1, \ldots, P_N)$.
• A strict priority structure of the MEC servers over MDs, $L = (L_1, \ldots, L_M)$.
• $U$ and $S$ are kept constant during the decision-making period.
• Each MD $i$ can offload its task to one of the MEC servers or perform it locally.
• The MDs and MEC servers are completely independent.

Formally, an assignment is defined as a mapping $\mu$ from the set of MEC servers and MDs, $S \cup U$, to the set of all possible sets of MDs and MEC servers:

$$\mu : S \cup U \rightarrow 2^{U \cup S} \tag{11}$$

In the current work, a school choice consists of a population of MDs and a list of MEC servers. MDs are defined by their preferences over MECs, which are determined by the minimum energy consumption for offloading tasks and the price paid to operators. MEC servers, on the other hand, are defined by their capacities and their priorities in MD selection. Since they have no preferences over MDs and obtain a fixed price based on workloads, MEC servers are not concerned about which MDs send a request. As a result, MEC servers select the MDs that are closer to them, because of the edge's limited capacity and the ability to provide better service to nearby MDs.

4.3. Decentralized Solution

Several approaches can solve the school choice problem, including the Deferred Acceptance Algorithm (DA) and the Immediate Acceptance Algorithm (IA).
The IA is very similar to the DA, with the difference that, once an applicant occupies a place, this acceptance is final and does not change. As a result, the allocation is performed more rapidly. The immediate acceptance mechanism assigns MDs to MEC servers according to the MDs' preferences over MEC servers and the MECs' priorities over MDs. More precisely, at every step $r$, each MEC server accepts (up to its remaining capacity) the highest-priority MDs among those that ranked it $r$-th.

Because MECs choose users in their area, the strict priority $L_{ij}$ of MEC server $j$ for choosing MD $i$ depends only on the MD's distance:

$$L_{ij} = D_{ij} \tag{12}$$

The preference $P_{ij}$ of MD $i$ for MEC server $j$ is described as follows:

$$P_{ij} = \alpha_i \lambda_j d_i + \beta_i E_i \tag{13}$$

Therefore, the preference $P_{ij}$ of MD $i$ for MEC server $j$ combines the minimum energy consumption for offloading tasks and the payment to operators.

The immediate acceptance mechanism is a member of the family of so-called rank-priority mechanisms. Each rank-priority mechanism is associated with an order of all pairs that consist of a (student's) preference rank and a (school's) priority. Given the students' preferences over schools and the schools' priorities over students, a rank-priority mechanism assigns students to schools following the order of rank-priority pairs. In the IA, the output is efficient. The definition of efficiency is provided as follows [40]:

The assignment is efficient if there is no other assignment in which the number of MDs for at least one MEC server is higher while the other MECs accept fewer MDs.

In the current work, the mobile device identifies the energy consumption of all servers and first selects the server with the least energy at a reasonable price.
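The two orderings defined by Eqs. (12) and (13) can be sketched in a few lines of Python. This is a minimal illustration, assuming that a lower $P_{ij}$ means a more preferred server and that a smaller distance means a higher priority; the function names and the tie-breaking by identifier (used only to keep both orderings strict) are hypothetical additions, not part of the paper.

```python
def preference_list(costs):
    """Rank servers for one MD.

    costs: dict mapping server id -> P_ij from Eq. (13); lower is better.
    Returns server ids from most to least preferred.
    """
    return sorted(costs, key=lambda server: (costs[server], server))


def priority_list(distances):
    """Rank MDs for one server.

    distances: dict mapping MD id -> D_ij from Eq. (12); closer MDs first.
    Returns MD ids from highest to lowest priority.
    """
    return sorted(distances, key=lambda md: (distances[md], md))


if __name__ == "__main__":
    # Illustrative (assumed) values only.
    print(preference_list({"s1": 3.0, "s2": 1.5, "s3": 2.0}))
    print(priority_list({"u1": 40.0, "u2": 10.0}))
```

Servers that cannot meet the task deadline would simply be left out of `costs` before ranking.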
As a result, there is no better server to offload to at each stage: each user applies to its best option and moves on to the next server only if the preferred server no longer has the capacity to run the task. Therefore, with the IA, an efficient and quick assignment is achieved within the minimum number of rounds and with the lowest response time, which is very practical for MECs and MDs. In the proposed method, the IA in the school choice works as follows:

• MDs form their preferences over the MEC servers in their area based on the minimum energy consumption and the price of the MEC servers for offloading tasks.
• MEC servers set the priority of MDs based on the distance of the MDs to the MEC servers.
• MDs apply to their first choice among the MEC servers.
• MEC servers reject the lowest-ranking MDs in excess of their capacity. All other offers are immediately accepted and become permanent assignments. After that, the MEC servers' capacities are updated.
• MDs apply to the next MEC server on their preference list if they were rejected in the previous step.
• Eventually, if their lists are empty, MDs fall back to local computing.
• The algorithm stops when the MEC servers have used their entire capacity or no MD is left without a resource.

Based on the fixed MD priorities and the submitted preferences, the MD assignment is determined in several rounds. In round one, only the first choice of each MD is considered. Then, one at a time, MDs are assigned to their first choice following their priority order until there is no capacity left at their first choice. In round two, those who could not be assigned to their first choice (because of low priority) are considered for their second choice (in case any capacity remains from round one). The process continues in this manner until each MD is assigned [48]. Note that applications in the IA are immediately accepted or rejected at each step, whereas in the DA acceptance is deferred until the end.
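The rounds described above can be sketched as a small Python function. This is a minimal sketch of the immediate acceptance procedure under simplifying assumptions, not the authors' simulator code: capacity is counted only as a number of MDs ($q_j$), the resource limit $f_j^{\max}$ is ignored, ties in distance are broken by MD identifier, and the names `immediate_acceptance`, `preferences`, `distances`, and `capacities` are hypothetical.

```python
def immediate_acceptance(preferences, distances, capacities):
    """Assign MDs to MEC servers with the immediate acceptance (IA) rule.

    preferences: dict MD -> list of servers, most preferred first
    distances:   dict (MD, server) -> distance; smaller means higher priority
    capacities:  dict server -> maximum number of MDs it can enroll
    Returns a dict MD -> server id, or "local" if no server accepted the MD.
    """
    remaining = dict(capacities)
    assignment = {}
    unassigned = set(preferences)
    max_rounds = max((len(p) for p in preferences.values()), default=0)

    for r in range(max_rounds):
        # In round r, every unassigned MD applies to its r-th choice.
        applicants = {}
        for md in unassigned:
            prefs = preferences[md]
            if r < len(prefs):
                applicants.setdefault(prefs[r], []).append(md)

        # Each server accepts its closest applicants up to its remaining
        # capacity; acceptances are final, the others are rejected.
        for server, mds in applicants.items():
            mds.sort(key=lambda m: (distances[(m, server)], m))
            accepted = mds[:remaining[server]]
            for md in accepted:
                assignment[md] = server
            remaining[server] -= len(accepted)

        unassigned -= set(assignment)
        if not unassigned:
            break

    # MDs that exhausted their list run their task locally.
    for md in unassigned:
        assignment[md] = "local"
    return assignment


if __name__ == "__main__":
    prefs = {"u1": ["s1", "s2"], "u2": ["s1", "s2"], "u3": ["s1"]}
    dist = {("u1", "s1"): 30, ("u2", "s1"): 10, ("u3", "s1"): 20,
            ("u1", "s2"): 5, ("u2", "s2"): 5}
    print(immediate_acceptance(prefs, dist, {"s1": 1, "s2": 1}))
```

In the small example, all three MDs first apply to `s1`, which keeps only its closest applicant; the rejected MDs move on to their next choice or, once their list is empty, to local execution.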
The school choice procedure finishes when the edge servers have used up all of their capacity, leaving the remaining MDs unassigned, or when every MD has received a resource. In the proposed method, when the MEC servers have depleted their capacity and there are still MDs without resources, these MDs decide to run their tasks locally. Thus, the algorithm is completed when no MD is left without a decision. With the proposed model, the offloading decision can be made by minimizing price and energy consumption while meeting the task deadlines. Compared with the DA and game-theoretic approaches, the main advantage of this scheme is that it reaches an efficient assignment in the minimum number of rounds.

Table 1: Application Properties

| App name | Data upload (KB) | Task length (MI) |
|---|---|---|
| Augmented reality | 1500 | 12000 |
| Health application | 200 | 6000 |
| Infotainment application | 250 | 15000 |

Moreover, the proposed system has several further benefits. First, users can make offloading decisions that allow the task to be executed before the deadline and thereby save mobile device energy. Second, users make these decisions themselves by employing the intelligence on the device. Third, in decentralized offloading, it is unnecessary to send a lot of information to the edge for each offloading decision. Furthermore, the edge load is reduced and the edge becomes easier to manage.

5. Evaluation

In this section, the EdgeCloudSim simulator is used to evaluate the performance of the proposed multi-user and multi-MEC computation offloading. EdgeCloudSim builds upon CloudSim to address the specific demands of edge computing research and supports the necessary functionality in terms of computation and networking abilities [49].

5.1. Parameter Settings

This section evaluates the proposed distributed computation offloading algorithm through numerical studies. The following simulation settings are used for all simulations.
First, a scenario is considered in which five MEC servers, each with one host and various amounts of computational resources, are deployed in the users' area to serve different numbers of users. The mobile devices have computation-intensive and data-intensive tasks. It is also assumed that the mobile devices are randomly scattered over the coverage region, so that they can offload tasks to the MEC servers. The transmission bandwidth $B_{ij}$ and the transmission power $P_i$ of the mobile device are set to 20 MHz and 0.5 W, respectively. The channel gain $H_{ij}$ between MD $i$ and MEC server $j$ is modeled as $127 + 30 \log_2(D_{ij})$, where $D_{ij}$ is the distance between user $i$ and MEC server $j$, which differs based on the user location. Table 1 presents three task types, each of which has a different value for input size, file output size, and task length. These properties are generated by an exponential number generator for each task [49].

5.2. Use Cases

This subsection focuses on some of the most common use cases that need offloading in mobile edge computing. As shown in Table 1, the examples considered are augmented reality, a health application, and an infotainment application.

1. Augmented reality is a type of interactive, reality-based display environment that uses computer-generated effects to enhance the user's real-world experience. Augmented reality combines real and computer-based scenes and images to provide a view of the world.
2. One of the major applications of biomedical research is to use inexpensive mobile biomedical sensors and cloud computing for pervasive health monitoring. However, real-world user experiences with mobile cloud-based health monitoring have been poor, due to factors such as excessive networking latency and longer response time [50].
This application is therefore one of the best candidates to take advantage of offloading at the edge.
3. Infotainment applications, such as vehicular infotainment systems, include two parts: (1) the information part and (2) the entertainment part, which are integrated to provide a unified platform to drivers and passengers [51].

Figure 3: The comparison of algorithms under different numbers of users

Figure 4: The comparison of energy consumption under different numbers of users

Figure 5: The comparison of average utility function under different numbers of users

5.3. Performance Evaluation of Algorithm

The distributed computation offloading algorithm is compared with the following solutions:

1. Energy-based offloading: All users offload their computation tasks to the MEC servers that have the lowest energy consumption.
2. Price-based offloading: All users offload their computation tasks to the MEC servers that have the lowest price for the users.
3. HODA: The heuristic offloading decision algorithm (HODA) proposed in [41].

The first experiment varies the number of tasks from 10 to 100 and examines the percentage of offloaded tasks. In Fig. 3, the percentage of completed tasks is relatively high while the number of tasks is low, and it gradually decreases as the number of tasks increases. This is reasonable, since each user has a high probability of being matched with its preferred MEC server when the number of tasks is low, and can therefore offload its computation task under good conditions, such as sufficient bandwidth. As the number of tasks continues to grow, each user must compete with the others for computation resources, which lowers the probability of a task being assigned to its preferred MEC server.
Due to task preferences and the limited number of MEC servers and computational resources, the proposed algorithm must reject some of the user requests, and the corresponding tasks are executed locally.

Figure 6: The comparison of completed tasks in different applications

Figure 7: The comparison of average energy consumption in different applications

Figure 8: The comparison of average utility function in different applications

It can be seen in Fig. 3 that the percentage of users who are able to perform their tasks is higher with energy-based offloading than with price-based offloading. Because of features such as distance and workload, the chance of performing tasks before the deadline increases when users choose servers based on minimum energy consumption. If a user cannot be served under this policy, local execution would be required. However, local execution is not an option under the conditions of this algorithm. As a result, the user is not able to complete the task before the deadline.

Fig. 4 examines the effect of raising the number of tasks on the average energy consumption. This experiment uses the health application with five MEC servers. As the number of users increases, users save more energy in the school choice scenario than with HODA, because HODA does not perform the task allocation well, so the resources are not distributed properly among users. In addition, the remaining capacity of the edge servers decreases as the number of users increases, and more users are forced to perform their tasks locally. Therefore, the total energy consumption increases due to increased local execution. Similarly, in Fig. 5, the utility function grows as the number of users increases, because the energy consumption rises as more users switch to local execution.
On the other hand, in the price-based offloading method, users have fewer successful executions than in the school choice method, because they focus only on the price parameter, which results in a lower utility function than that of the school choice method.

In this paper, multiple heterogeneous MDs compete for numerous heterogeneous MEC resources as the workload increases. Hence, the offloading scenario becomes more difficult, and some users have to select the local execution mode, in which the tasks may not be performed properly because of their high workload. As shown in Fig. 6, as the workloads increase, the percentage of users who complete their tasks is reduced, because the edge server has limited resources and cannot handle all users. The mobile device, in turn, does not have the capacity to perform these tasks before the deadline, which results in unsuccessful execution of tasks.

In the price-based offloading method, price is the only important factor for the user, and the workload has a direct impact on the user's payment. The price paid by the user increases with the workload. In many cases, this amount exceeds the maximum price that the user can pay, and the user is forced to execute the task locally. In this condition, users could bid a second price through methods such as an auction. However, this article assumes that the maximum user price is set only once at the beginning. Hence, tasks are forced to run locally, but in some cases they cannot be completed before the deadline because of the increased workload. As shown in Fig. 8, although the utility function rises dramatically with increasing workload, the utility of the school choice method remains lower than that of the HODA method, owing to better allocation based on both the energy factor and the user price.

In Fig.
9, as the number of servers increases to 5, the school choice approach reaches its best performance, and all users can offload their tasks to a server with low energy consumption and cost based on user-selected parameters. In this case, however, HODA still has to push some users to run locally, because it cannot determine an appropriate allocation for performing tasks based on the users' parameters. As a result, with around 100 concurrent users, the average energy consumption increases, as shown in Fig. 10.

Fig. 10 also shows that, as MEC server resources increase and resource constraints are relaxed, users' chances of offloading tasks increase. As a result, the average energy consumption is reduced due to reduced local execution. However, as in the previous cases, when the energy and price parameters are weighted equally, the energy consumption has a more significant impact on the success of the users, and if the user chooses a server with lower energy consumption, the chances of successful execution before the deadline increase.

Figure 9: The comparison of completed tasks in different numbers of MEC servers

Figure 10: The comparison of average energy consumption in different numbers of MEC servers

Figure 11: The comparison of average utility function in different numbers of MEC servers

In Fig. 11, as the number of servers increases, the range of suggested base prices increases. Consequently, as the chance of successful execution before the deadline increases, the utility function decreases. This is due to the growing amount of edge server resources and the increasing probability that users find a server with a lower price and energy consumption.

6. Conclusion

With the aim of reducing mobile device energy consumption and price, the present research studied multi-user and multi-MEC computation offloading.
By formulating the different task offloading decisions as a school choice problem, the current study minimized user energy consumption and price under latency constraints. Instead of conventional centralized optimization methods, this approach considered a decentralized mechanism between users and MEC servers. Simulation results indicated that the proposed scheme is more efficient than the other computation offloading schemes considered. Future work will consider task offloading in more complicated deployments with user mobility.

References

[1] Y. C. Hu, M. Patel, D. Sabella, N. Sprecher, V. Young, Mobile edge computing - A key technology towards 5G, ETSI White Pap. 11 (11) (2015) 1–16. URL https://yucianga.info/wp-content/uploads/2015/11/Ref02-2015-09-etsi_wp11_mec_a_key_technology_towards_5g.pdf
[2] K. Li, A Game Theoretic Approach to Computation Offloading Strategy Optimization for Non-cooperative Users in Mobile Edge Computing, IEEE Trans. Sustain. Comput. (2018) 1–1. doi:10.1109/TSUSC.2018.2868655. URL https://ieeexplore.ieee.org/document/8454762/
[3] K. Zhang, Y. Mao, S. Leng, Q. Zhao, L. Li, X. Peng, L. Pan, S. Maharjan, Y. Zhang, Energy-Efficient Offloading for Mobile Edge Computing in 5G Heterogeneous Networks, IEEE Access 4 (c) (2016) 5896–5907. doi:10.1109/ACCESS.2016.2597169. URL http://www.mdpi.com/2076-3417/7/6/557
[4] P. Zhao, H. Tian, C. Qin, G. Nie, Energy-Saving Offloading by Jointly Allocating Radio and Computational Resources for Mobile Edge Computing, IEEE Access 5 (2017) 11255–11268. doi:10.1109/ACCESS.2017.2710056.
[5] J. Zhang, X. Hu, Z. Ning, E. C. Ngai, L. Zhou, J. Wei, J. Cheng, B. Hu, Energy-latency Trade-off for Energy-aware Offloading in Mobile Edge Computing Networks, IEEE Internet Things J. 4662 (c) (2017) 1–13. doi:10.1109/JIOT.2017.2786343.
[6] Y. Hao, M. Chen, L. Hu, M. S. Hossain, A.
Ghoneim, Energy Efficient Task Caching and Offloading for Mobile Edge Computing, IEEE Access 6 (March) (2018) 11365–11373. doi:10.1109/ACCESS.2018.2805798.
[7] G. Zhang, W. Zhang, Y. Cao, D. Li, L. Wang, Energy-Delay Tradeoff for Dynamic Offloading in Mobile-Edge Computing System with Energy Harvesting Devices, IEEE Trans. Ind. Informatics 3203 (c) (2018) 1–1. doi:10.1109/TII.2018.2843365. URL https://ieeexplore.ieee.org/document/8371267/
[8] C. You, K. Huang, H. Chae, B. H. Kim, Energy-Efficient Resource Allocation for Mobile-Edge Computation Offloading, IEEE Trans. Wirel. Commun. 16 (3) (2017) 1397–1411. doi:10.1109/TWC.2016.2633522.
[9] S. Li, Z. Zhang, P. Zhang, X. Qin, Y. Tao, L. Liu, Energy-aware Mobile Edge Computation Offloading for IoT over Heterogenous Networks, IEEE Access 7 (2019) 1–1. doi:10.1109/access.2019.2893118.
[10] W. Fan, Y. Liu, B. Tang, F. Wu, Z. Wang, Computation Offloading Based on Cooperations of Mobile Edge Computing-Enabled Base Stations, IEEE Access 6 (X) (2017) 22622–22633. doi:10.1109/ACCESS.2017.2787737.
[11] F. Guo, H. Zhang, H. Ji, X. Li, V. C. Leung, An Efficient Computation Offloading Management Scheme in the Densely Deployed Small Cell Networks With Mobile Edge Computing, IEEE/ACM Trans. Netw. (2018) 1–14. doi:10.1109/TNET.2018.2873002.
[12] Y. Dai, D. Xu, S. Maharjan, Y. Zhang, Joint Computation Offloading and User Association in Multi-task Mobile Edge Computing, IEEE Trans. Veh. Technol. 9545 (c) (2018) 1–13. doi:10.1109/TVT.2018.2876804.
[13] T. X. Tran, D. Pompili, Joint Task Offloading and Resource Allocation for Multi-Server Mobile-Edge Computing Networks, IEEE Access 5 (2017) 3302–3312.
[14] T. Q. Dinh, J. Tang, Q. D. La, T. Q. Quek, Offloading in Mobile Edge Computing: Task Allocation and Computational Frequency Scaling, IEEE Trans. Commun. 65 (8) (2017) 3571–3584. doi:10.1109/TCOMM.2017.2699660.
[15] M. Chen, Y.
Hao, Task Offloading for Mobile Edge Computing in Software Defined Ultra-Dense Network, IEEE J. Sel. Areas Commun. 36 (3) (2018) 587–597. doi:10.1109/JSAC.2018.2815360.
[16] E. E. Ugwuanyi, S. Ghosh, M. Iqbal, T. Dagiuklas, Reliable resource provisioning using bankers' deadlock avoidance algorithm in MEC for industrial IoT, IEEE Access 6 (2018) 43327–43335. doi:10.1109/ACCESS.2018.2857726.
[17] L. Huang, X. Feng, L. Zhang, L. Qian, Y. Wu, Multi-Server Multi-User Multi-Task Computation Offloading for Mobile Edge Computing Networks, Sensors 19 (6) (2019) 1446. doi:10.3390/s19061446. URL https://www.mdpi.com/1424-8220/19/6/1446
[18] K. Li, Computation Offloading Strategy Optimization with Multiple Heterogeneous Servers in Mobile Edge Computing, IEEE Trans. Sustain. Comput. XX (2019) 1–1. doi:10.1109/tsusc.2019.2904680.
[19] D. T. Nguyen, L. B. Le, V. Bhargava, Price-based Resource Allocation for Edge Computing: A Market Equilibrium Approach, IEEE Trans. Cloud Comput. (2018) 1–19. doi:10.1109/TCC.2018.2844379.
[20] G. Gao, M. Xiao, J. Wu, H. Huang, S. Wang, G. Chen, Auction-based VM Allocation for Deadline-Sensitive Tasks in Distributed Edge Cloud, IEEE Trans. Serv. Comput. PP (201806340014) (2019) 1–1. doi:10.1109/TSC.2019.2902549. URL https://ieeexplore.ieee.org/document/8657771/
[21] M. Liu, Y. Liu, Price-Based Distributed Offloading for Mobile-Edge Computing with Computation Capacity Constraints, IEEE Wirel. Commun. Lett. 7 (3) (2018) 420–423. doi:10.1109/LWC.2017.2780128. URL https://ieeexplore.ieee.org/abstract/document/8166725/
[22] S. Ranadheera, S. Maghsudi, E. Hossain, Computation Offloading and Activation of Mobile Edge Computing Servers: A Minority Game, IEEE Wirel. Commun. Lett. (2018) 1–4. doi:10.1109/LWC.2018.2810292.
[23] M. Li, Q. Wu, J. Zhu, R. Zheng, M. Zhang, A Computing Offloading Game for Mobile Devices and Edge Cloud Servers, Wirel. Commun. Mob. Comput. 2018 (2018) 1–10.
doi:10.1155/2018/2179316. URL https://www.hindawi.com/journals/wcmc/2018/2179316/
[24] H. Zhang, F. Guo, H. Ji, C. Zhu, Combinational auction-based service provider selection in mobile edge computing networks, IEEE Access 5 (2017) 13455–13464. doi:10.1109/ACCESS.2017.2721957.
[25] Y. Gu, Z. Chang, M. Pan, L. Song, Z. Han, Joint Radio and Computational Resource Allocation in IoT Fog Computing, IEEE Trans. Veh. Technol. 67 (8) (2018) 7475–7484. doi:10.1109/TVT.2018.2820838.
[26] B. Gu, Y. Chen, H. Liao, Z. Zhou, D. Zhang, A distributed and context-aware task assignment mechanism for collaborative mobile edge computing, Sensors (Switzerland) 18 (8). doi:10.3390/s18082423.
[27] J. Ren, G. Yu, Y. Cai, Y. He, Latency optimization for resource allocation in mobile-edge computation offloading, IEEE Trans. Wirel. Commun. 17 (8) (2018) 5506–5519. doi:10.1109/TWC.2018.2845360.
[28] L. Yang, H. Zhang, X. Li, H. Ji, V. C. Leung, A Distributed Computation Offloading Strategy in Small-Cell Networks Integrated With Mobile Edge Computing, IEEE/ACM Trans. Netw. (2018) 1–12. doi:10.1109/TNET.2018.2876941.
[29] T. Q. Dinh, Q. D. La, T. Q. S. Quek, H. Shin, Distributed Learning for Computation Offloading in Mobile Edge Computing, IEEE Trans. Commun. 66 (12) (2018) 6353–6367. doi:10.1109/TCOMM.2018.2866572. URL https://ieeexplore.ieee.org/document/8444467/
[30] T. Bahreini, D. Grosu, An Envy-Free Auction Mechanism for Resource Allocation in Edge Computing Systems, 2018 IEEE/ACM Symp. Edge Comput. (2018) 313–322. doi:10.1109/SEC.2018.00030.
[31] W. Sun, J. Liu, Y. Yue, H. Zhang, Double Auction-based Resource Allocation for Mobile Edge Computing in Industrial Internet of Things, IEEE Trans. Ind. Informatics PP (c) (2018) 1. doi:10.1109/TII.2018.2855746. URL https://ieeexplore.ieee.org/document/8410767/
[32] X. Chen, L. Jiao, W. Li, X. Fu, Efficient Multi-User Computation Offloading for Mobile-Edge Cloud Computing, IEEE/ACM Trans.
Netw. 24 (5) (2016) 2795–2808. doi:10.1109/TNET.2015.2487344. URL https://ieeexplore.ieee.org/iel7/90/4359146/07307234.pdf
[33] H. Guo, J. Liu, J. Zhang, W. Sun, N. Kato, Mobile-Edge Computation Offloading for Ultra-Dense IoT Networks, IEEE Internet Things J. (2018) 1. doi:10.1109/JIOT.2018.2838584. URL https://ieeexplore.ieee.org/abstract/document/8361406/ https://ieeexplore.ieee.org/document/8361406/
[34] B. Yang, Z. Li, W. Liu, Non-cooperative game approach for task offloading in edge clouds, arXiv (2018) 1–12.
[35] N. Li, J. F. Martinez-Ortega, V. H. Diaz, Distributed power control for interference-aware multi-user mobile edge computing: A game theory approach, IEEE Access 6 (i) (2018) 36105–36114. doi:10.1109/ACCESS.2018.2849207. URL org/document/8390908/
[36] C. Yi, J. Cai, Z. Su, A Multi-User Mobile Computation Offloading and Transmission Scheduling Mechanism for Delay-Sensitive Applications, IEEE Trans. Mob. Comput. (2019) 1–1. doi:10.1109/TMC.2019.2891736. URL https://ieeexplore.ieee.org/document/8606230/
[37] T. Zhang, Data Offloading in Mobile Edge Computing: A Coalition and Pricing Based Approach, IEEE Access 6 (2018) 2760–2767. arXiv:1709.04148, doi:10.1109/ACCESS.2017.2785265. URL http://ieeexplore.ieee.org/document/8226751/ http://arxiv.org/abs/1709.04148
[38] Z. Zhu, J. Peng, X. Gu, H. Li, K. Liu, Z. Zhou, W. Liu, Fair resource allocation for system throughput maximization in mobile edge computing, IEEE Access 6 (2018) 5332–5340. doi:10.1109/ACCESS.2018.2790963.
[39] Q.-V. V. Pham, T. Leanh, N. H. Tran, B. J. Park, C. S. Hong, Decentralized Computation Offloading and Resource Allocation for Mobile-Edge Computing: A Matching Game Approach, IEEE Access 6 (2018) 75868–75885. doi:10.1109/ACCESS.2018.2882800. URL https://ieeexplore.ieee.org/document/8543561/
[40] G. Haeringer, Market design: auctions and matching, The MIT Press, 2018.
[41] X. Lyu, H. Tian, C. Sengul, P.
Zhang, Multiuser joint task offloading and re- source optimization in proximate clouds, IEEE T rans. V eh. T echnol. 66 (4) (2017) 3435–3447. doi:10.1109/TVT.2016.2593486 . [42] Y. Mao, J. Zhang, K. B. Letaief, Dynamic Computation Offloading for Mobile-Edge Computing with Energy Harvesting Devices, IEEE J. Sel. Areas Comm un. 34 (12) (2016) 3590–3605. , doi:10. 1109/JSAC.2016.2611964 . 34 [43] Y. W ang, M. Sheng, X. W ang, L. W ang, J. Li, Mobile-Edge Computing: P artial Computation Offloading Using Dynamic V oltage Scaling, IEEE T rans. Commun. 64 (10) (2016) 4268–4282. doi:10.1109/TCOMM.2016. 2599530 . URL http://ieeexplore.ieee.org/document/7542156/ [44] Q. T ang, L. Chang, K. Y ang, K. W ang, J. W ang, P . K. Sharma, T ask n umber maximization offloading strategy seamlessly adapted to UA V sce- nario, Comput. Commun. 151 (December 2019) (2020) 19–30. doi: 10.1016/j.comcom.2019.12.018 . URL https://doi.org/10.1016/j.comcom.2019.12.018 [45] Z. Han, Y. Gu, W. Saad, Matc hing Theory for Wireless Netw orks, Springer In ternational Publishing, 2017. doi:10.1007/978- 3- 319- 56252- 0 . URL http://link.springer.com/10.1007/978- 3- 319- 56252- 0 [46] Z. Han, Han Z., et al. Game Theory in Wireless and Communication Net- w orks (CUP , 2011)(ISBN 9780521196963)(O)(553s) GA .pdf (2012). [47] A. Ab dulk adirolu, T. S¨ onmez, Sc ho ol Choice: A Mechanism Design Approac h, Am. Econ. Rev. 93 (3) (2003) 729–747. doi:10.1257/ 000282803322157061 . URL http://pubs.aeaweb.org/doi/10.1257/000282803322157061 [48] H. Ergin, T. S¨ onmez, Games of school choice under the Boston mec hanism, J. Public Econ. 90 (1-2) (2006) 215–237. doi: 10.1016/j.jpubeco.2005.02.002 . URL https://linkinghub.elsevier.com/retrieve/pii/ S004727270500040X [49] C. Sonmez, A. Ozgovde, C. Erso y , EdgeCloudSim: An environmen t for p erformance ev aluation of Edge Computing systems, in: 2017 Second In t. Conf. F og Mob. Edge Comput., IEEE, 2017, pp. 39–44. doi:10.1109/ FMEC.2017.7946405 . 
URL http://ieeexplore.ieee.org/document/7946405/ 35 [50] Y. Cao, P . Hou, D. Brown, J. W ang, S. Chen, Distributed analytics and edge in telligence: P erv asive health monitoring at the era of fog com- puting, in: Proc. In t. Symp. Mob. Ad Ho c Net w. Comput., V ol. 2015- June, ACM Press, New Y ork, New Y ork, USA, 2015, pp. 43–48. doi: 10.1145/2757384.2757398 . URL http://dl.acm.org/citation.cfm?doid=2757384.2757398 [51] J. Guo, B. Song, Y. He, F. R. Y u, M. So okhak, A Survey on Compressed Sensing in V ehicular Infotainment Systems (2017). doi:10.1109/COMST. 2017.2705027 . URL http://ieeexplore.ieee.org/document/7934315/ 36
