Adaptive Online Distributed Optimal Control of Very-Large-Scale Robotic Systems

💡 Research Summary

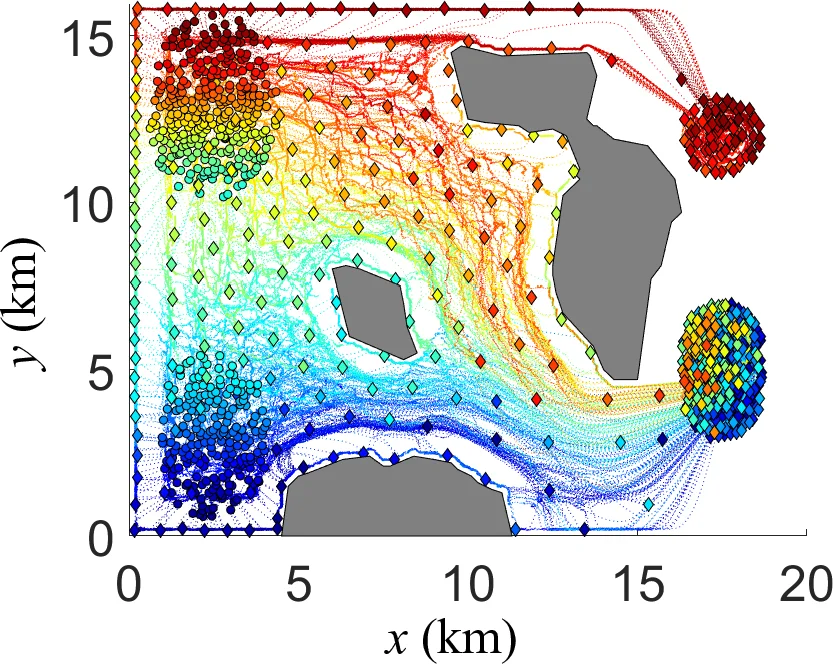

The paper introduces an Adaptive Distributed Optimal Control (ADOC) framework designed for very‑large‑scale robotic (VLSR) systems consisting of hundreds of autonomous agents operating in highly uncertain and dynamically changing environments. Traditional approaches either rely on microscopic state coupling, as in mean‑field/Nash Certainty Equivalence methods, or, as in Distributed Optimal Control (DOC), solve a continuous‑space nonlinear programming (NLP) problem for the evolution of the robots' probability density functions (PDFs). Both incur prohibitive computational cost and cannot re‑plan in real time when obstacle information is unknown in advance or evolves during execution.

ADOC overcomes these limitations by (1) representing the macroscopic state of the swarm as a PDF approximated by a Gaussian Mixture Model (GMM), (2) formulating the optimal transport of PDFs in the Wasserstein‑GMM space using the optimal mass transport (OMT) theorem, and (3) embedding time‑varying environmental information directly into the cost functional via an obstacle map function m(x,t). The obstacle map is generated online from robot sensor data using a Hilbert occupancy map, which yields a continuous probability field h(x,t) that is thresholded to a binary occupancy map.
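The sketch below illustrates the Wasserstein‑GMM idea in minimal form. It makes strong simplifying assumptions that are not taken from the paper:

- Each mixture is one‑dimensional.
- Both mixtures have the same number N of equally weighted components.
- The names `w2_sq_gaussian_1d` and `wgmm_sq_uniform` are hypothetical.

The ground cost between two components is the closed‑form squared 2‑Wasserstein distance between 1‑D Gaussians, (m1 - m2)^2 + (s1 - s2)^2. The mixture‑level distance is the cheapest transport plan between components. With uniform weights, an optimal plan can be chosen as a permutation, so exhaustive search is exact but only feasible for small N. General weights or multivariate covariances would instead need a linear‑program solver and matrix square roots.

```python
import itertools
import math
from typing import List, Tuple

# A 1-D Gaussian component given as (mean, standard deviation).
Gaussian = Tuple[float, float]


def w2_sq_gaussian_1d(g1: Gaussian, g2: Gaussian) -> float:
    """Closed-form squared 2-Wasserstein distance between two 1-D Gaussians."""
    (m1, s1), (m2, s2) = g1, g2
    return (m1 - m2) ** 2 + (s1 - s2) ** 2


def wgmm_sq_uniform(gmm_a: List[Gaussian],
                    gmm_b: List[Gaussian]) -> Tuple[float, Tuple[int, ...]]:
    """Squared Wasserstein-GMM distance between two equally weighted mixtures.

    Each component carries weight 1/N. The discrete transport problem between
    components then has an optimal solution that is a permutation
    (Birkhoff-von Neumann), so brute force over permutations is exact.
    Cost grows as N!, so this is only for small illustrative N.
    """
    n = len(gmm_a)
    if n == 0 or n != len(gmm_b):
        raise ValueError("mixtures must have the same nonzero number of components")
    # Ground cost: squared W2 between every pair of components.
    cost = [[w2_sq_gaussian_1d(a, b) for b in gmm_b] for a in gmm_a]
    best_val, best_perm = math.inf, tuple(range(n))
    for perm in itertools.permutations(range(n)):
        # Each matched pair moves mass 1/N.
        val = sum(cost[i][perm[i]] for i in range(n)) / n
        if val < best_val:
            best_val, best_perm = val, perm
    return best_val, best_perm


if __name__ == "__main__":
    swarm_now = [(0.0, 1.0), (5.0, 0.5)]    # current swarm PDF components
    swarm_goal = [(6.0, 0.5), (1.0, 1.0)]   # hypothetical target components
    d2, plan = wgmm_sq_uniform(swarm_now, swarm_goal)
    print(d2, plan)  # 1.0 (1, 0)
```

The returned permutation says which current component is transported to which target component. In the example, each Gaussian moves to the nearby target rather than crossing the domain, which is what makes the plan cheap.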

The control problem is cast as a reinforcement‑learning/approximate‑dynamic‑programming (RL‑ADP) task. A novel value functional V_k(·) and a Q‑functional Q_k(·) are defined, where the Q‑functional incorporates the Lagrangian term L.
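A much smaller problem can illustrate the backward recursion that links a value functional to a Q‑functional. The sketch below is a single‑agent, discretized analogue, not the paper's functional over swarm PDFs. Its assumptions are:

- The state is one grid cell, and the controls are hypothetical moves {-1, 0, +1}.
- The occupancy probabilities `h` stand in for Hilbert‑map output.
- The 0.5 threshold and the Lagrangian `u**2 + obstacle_weight * m` are assumptions.
- All function names are hypothetical.

Each step evaluates Q_k(x, u) = L_k(x, u) + V_{k+1}(f(x, u)) and sets V_k(x) = min_u Q_k(x, u).

```python
from typing import List, Tuple

Grid = List[List[float]]
CONTROLS = (-1, 0, 1)  # hypothetical action set: move left, stay, move right


def obstacle_map(h: Grid, threshold: float = 0.5) -> List[List[int]]:
    """Threshold occupancy probabilities h[k][x] into a binary map m[k][x]."""
    return [[1 if p >= threshold else 0 for p in row] for row in h]


def step(x: int, u: int, n_cells: int) -> int:
    """Hypothetical dynamics f(x, u): move u cells, clipped to the grid."""
    return min(max(x + u, 0), n_cells - 1)


def backward_recursion(m: List[List[int]], goal: int,
                       obstacle_weight: float = 100.0
                       ) -> Tuple[Grid, List[List[int]]]:
    """Compute V_k(x) = min_u Q_k(x, u) backward in time from k = T."""
    horizon = len(m) - 1
    n = len(m[0])
    V = [[0.0] * n for _ in range(horizon + 1)]
    policy = [[0] * n for _ in range(horizon)]
    # Terminal cost: squared distance to the goal cell.
    V[horizon] = [float((x - goal) ** 2) for x in range(n)]
    for k in range(horizon - 1, -1, -1):
        for x in range(n):
            best_q, best_u = float("inf"), 0
            for u in CONTROLS:
                x_next = step(x, u, n)
                # Hypothetical Lagrangian: control effort plus a penalty for
                # landing on a cell the map marks occupied at time k + 1.
                lagrangian = u * u + obstacle_weight * m[k + 1][x_next]
                q = lagrangian + V[k + 1][x_next]  # Q_k(x, u)
                if q < best_q:  # strict '<': ties keep the earlier control
                    best_q, best_u = q, u
            V[k][x] = best_q
            policy[k][x] = best_u
    return V, policy


def rollout(policy: List[List[int]], x0: int, n_cells: int) -> List[int]:
    """Apply the computed policy from x0 and return the visited cells."""
    path = [x0]
    for k in range(len(policy)):
        path.append(step(path[-1], policy[k][path[-1]], n_cells))
    return path


if __name__ == "__main__":
    # Made-up occupancy probabilities: rows are times, columns are cells.
    # Cell 2 is likely occupied at times 1 and 2 only.
    h = [[0.1] * 5,
         [0.1, 0.1, 0.9, 0.1, 0.1],
         [0.1, 0.1, 0.9, 0.1, 0.1],
         [0.1] * 5,
         [0.1] * 5]
    m = obstacle_map(h)
    V, policy = backward_recursion(m, goal=4)
    print(V[0][0], rollout(policy, 0, 5))  # 4.0 [0, 0, 1, 2, 3]
```

Reaching the goal cell on time would force the agent through cell 2 at time 2, so the optimal cost of 4 is achieved by stopping one cell short (terminal cost 1). With the tie‑breaking used here, the agent waits one step first and crosses cell 2 at time 3, after it is cleared. In this toy, reacting to a changing environment would amount to recomputing `backward_recursion` whenever `h` is updated.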
