Loss Functions, Axioms, and Peer Review

It is common to see a handful of reviewers reject a highly novel paper, because they view, say, extensive experiments as far more important than novelty, whereas the community as a whole would have embraced the paper. More generally, the disparate mapping of criteria scores to final recommendations by different reviewers is a major source of inconsistency in peer review. In this paper we present a framework inspired by empirical risk minimization (ERM) for learning the community’s aggregate mapping. The key challenge that arises is the specification of a loss function for ERM. We consider the class of $L(p,q)$ loss functions, which is a matrix-extension of the standard class of $L_p$ losses on vectors; here the choice of the loss function amounts to choosing the hyperparameters $p, q \in [1,\infty]$. To deal with the absence of ground truth in our problem, we instead draw on computational social choice to identify desirable values of the hyperparameters $p$ and $q$. Specifically, we characterize $p=q=1$ as the only choice of these hyperparameters that satisfies three natural axiomatic properties. Finally, we implement and apply our approach to reviews from IJCAI 2017.

💡 Research Summary

The paper tackles the long‑standing problem of inconsistency in peer review, where different reviewers assign disparate weights to criteria such as novelty, significance, or experimental rigor. The authors propose a data‑driven approach that learns a single monotonic mapping from multi‑dimensional criterion scores to an overall recommendation, thereby capturing the collective opinion of the reviewing community.

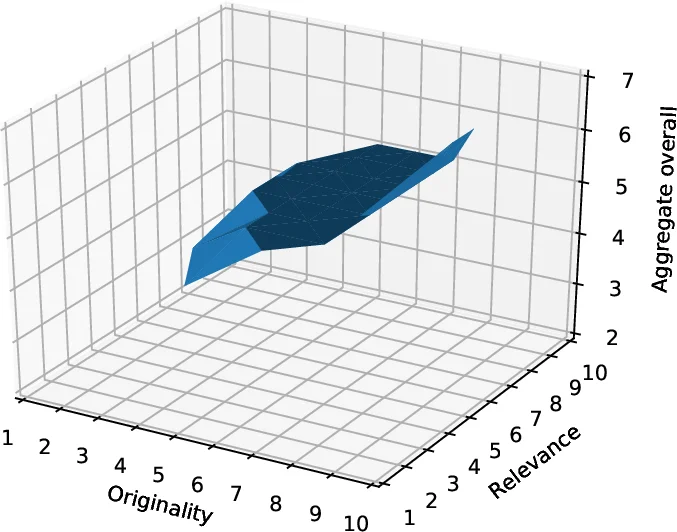

The methodological core is an empirical risk minimization (ERM) framework. For each reviewer i, the observed reviews are treated as samples of an unknown monotonic function g_i that maps a paper's criterion vector x to its overall score y. The goal is to find a single monotonic function f̂ that best fits all observed (x, y) pairs. To measure fit, the authors introduce a family of matrix‑norm loss functions L(p, q). Concretely, for a candidate function f, the error for reviewer i is the L_p‑norm of the vector of absolute deviations |y − f(x)| across that reviewer's papers; the overall loss is then the L_q‑norm of the vector of reviewer‑wise errors. When p = q = 1 this reduces to the total absolute error summed over all reviews, which has the same minimizer as the average absolute error.
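A minimal sketch of the L(p, q) loss as described above, paired with a brute-force ERM over nondecreasing step functions of a single criterion. The helper names (`lpq_loss`, `brute_force_erm`), the dictionary layout of the reviews, the one-dimensional criterion, and the toy data are all hypothetical assumptions for illustration; this is not the authors' implementation, and exhaustive enumeration is only feasible for tiny grids.

```python
import itertools
import math


def _norm(values, r):
    """L_r norm of non-negative values; r may be math.inf."""
    if math.isinf(r):
        return max(values)
    return sum(v ** r for v in values) ** (1.0 / r)


def lpq_loss(f, reviews, p, q):
    """L(p, q) loss of a candidate mapping f (hypothetical helper).

    reviews: dict reviewer -> non-empty list of (x, y) pairs, where x is the
    criterion value and y is that reviewer's overall score.
    Inner L_p norm over each reviewer's papers, outer L_q norm over reviewers.
    """
    per_reviewer = [_norm([abs(y - f(x)) for x, y in pairs], p)
                    for pairs in reviews.values()]
    return _norm(per_reviewer, q)


def brute_force_erm(reviews, grid, levels, p, q):
    """Exhaustive ERM over nondecreasing maps from a 1-D criterion grid to score levels.

    grid and levels must be sorted ascending; combinations_with_replacement then
    yields exactly the nondecreasing assignments of levels to grid points.
    """
    best_table, best_loss = None, math.inf
    for values in itertools.combinations_with_replacement(levels, len(grid)):
        table = dict(zip(grid, values))
        loss = lpq_loss(lambda x: table[x], reviews, p, q)
        if loss < best_loss:
            best_table, best_loss = table, loss
    return best_table, best_loss


# Toy data (hypothetical): one criterion scored 1..3, overall scores 1..5.
reviews = {
    "r1": [(1, 1), (2, 2), (3, 5)],
    "r2": [(1, 2), (2, 2), (3, 4)],
    "r3": [(1, 1), (2, 4), (3, 4)],
}
table, loss = brute_force_erm(reviews, [1, 2, 3], [1, 2, 3, 4, 5], p=1, q=1)
print(table, loss)  # {1: 1, 2: 2, 3: 4} 4.0
```

With p = q = 1 the loss is just the sum of absolute errors, so the fitted value at each criterion level here is the median of the overall scores observed there (1, 2 and 4), which happens to be nondecreasing already.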

Because peer review lacks a ground‑truth “correct” mapping, the choice of (p, q) cannot be guided by standard cross‑validation alone. Instead, the authors draw on social‑choice theory and impose three axioms that any reasonable aggregation method should satisfy:

- Consensus – if every reviewer gives the same overall score to a paper, the aggregate function must output that same score.

- Efficiency – if the multiset of overall scores for paper a pointwise‑dominates that for paper b (i.e., when both sets of scores are sorted, each score for a is at least as high as the corresponding score for b), then the aggregate score for a must be at least as high as that for b.

- Strategy‑proofness – no reviewer can move the aggregate recommendations closer to his own true overall scores by misreporting those scores. The authors formalize this using an L_2‑based utility, but the result holds for any L_ℓ norm.
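Consensus and efficiency are simple predicates on a score matrix and an aggregate, so they can be checked mechanically. The sketch below assumes the idealized setting in which every reviewer scores every paper, stores reviewer i's overall score for paper j as `Y[i][j]`, and represents the aggregate as a plain list of per-paper scores; the function names and the example matrix are hypothetical. Strategy‑proofness is not checked here, because it depends on how the aggregate is recomputed from misreported scores.

```python
def sorted_dominates(scores_a, scores_b):
    """True if, after sorting both lists, each score for a is >= the matching score for b."""
    if len(scores_a) != len(scores_b):
        raise ValueError("both papers need the same number of reviews")
    return all(sa >= sb for sa, sb in zip(sorted(scores_a), sorted(scores_b)))


def column(Y, j):
    """All reviewers' overall scores for paper j."""
    return [row[j] for row in Y]


def satisfies_consensus(Y, agg):
    """Unanimously scored papers must receive exactly that score."""
    for j in range(len(agg)):
        scores = column(Y, j)
        if len(set(scores)) == 1 and agg[j] != scores[0]:
            return False
    return True


def satisfies_efficiency(Y, agg):
    """A paper whose sorted scores dominate another's must not be ranked below it."""
    n = len(agg)
    for a in range(n):
        for b in range(n):
            if a != b and sorted_dominates(column(Y, a), column(Y, b)) and agg[a] < agg[b]:
                return False
    return True


# Hypothetical example: 3 reviewers (rows) x 3 papers (columns).
Y = [[5, 3, 4],
     [5, 2, 4],
     [5, 4, 1]]
print(satisfies_consensus(Y, [5, 3, 4]), satisfies_efficiency(Y, [5, 3, 4]))  # True True
print(satisfies_consensus(Y, [2, 3, 4]), satisfies_efficiency(Y, [2, 3, 4]))  # False False
```

In the example, paper 0 receives a unanimous 5 and its sorted scores dominate both other papers, so any aggregate that scores it below 5, or below paper 1 or 2, fails the corresponding axiom.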

The central theoretical contribution is a characterization theorem: L(p, q) aggregation satisfies all three axioms if and only if p = q = 1. The “if” direction is proved by showing that the L_1‑averaged loss yields a consensus‑respecting, Pareto‑efficient, and manipulation‑immune solution. The “only‑if” direction constructs counter‑examples for any p > 1 or q > 1, demonstrating that either the loss over‑emphasizes a single reviewer’s errors (breaking strategy‑proofness) or the aggregation treats reviewers asymmetrically (again allowing manipulation). Thus the axioms uniquely single out the L(1, 1) loss.
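The strategy‑proofness side of the characterization can be seen in the smallest possible case: a single paper (one criterion vector) that every reviewer scores once. Each reviewer's error is then a single deviation |y_i − c|, so p drops out, and minimizing the L(p, q) loss over the output c gives the median for q = 1 and the mean for q = 2. The sketch below is a toy reduction for illustration, not the authors' counter‑example; it covers only the q side, and the helper names and the 1 to 10 score range are assumptions.

```python
import statistics


def median_aggregate(scores):
    """Minimizer of sum |y_i - c| (the q = 1 case); unique for an odd number of scores."""
    return statistics.median(scores)


def mean_aggregate(scores):
    """Minimizer of sum (y_i - c)^2 (the q = 2 case)."""
    return statistics.mean(scores)


def manipulation_gain(aggregate, scores, i, reports):
    """Largest reduction in |aggregate - true score of reviewer i| over possible misreports."""
    true_i = scores[i]
    honest_dist = abs(aggregate(scores) - true_i)
    best = honest_dist
    for r in reports:
        fake = list(scores)
        fake[i] = r
        best = min(best, abs(aggregate(fake) - true_i))
    return honest_dist - best


scores = [2, 3, 6]  # hypothetical honest scores; reviewer 2's true score is 6
print(manipulation_gain(median_aggregate, scores, 2, range(1, 11)))  # 0
print(manipulation_gain(mean_aggregate, scores, 2, range(1, 11)))    # about 1.33
```

Reporting 10 instead of 6 pulls the mean from about 3.67 up to 5, closer to the reviewer's true score, whereas no report can push the median above 3; this is the intuition behind the median‑like L(1, 1) rule being manipulation‑immune.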

Empirically, the authors apply the L(1, 1) aggregation to a dataset of 9,197 reviews from IJCAI 2017, which includes eight criterion scores per paper and an overall recommendation. They compare three aggregation strategies: (i) the proposed L(1, 1) method, (ii) a naïve average of overall scores, and (iii) alternative L(p, q) settings (e.g., L(2, 2)). The L(1, 1) approach yields an overall recommendation list that overlaps with the actual accepted papers by 79.2 %, substantially higher than the naïve average (≈65 %). Moreover, when p or q deviates from 1, the resulting aggregations violate the efficiency and strategy‑proofness axioms in observable ways (e.g., a dominated paper receiving a higher aggregate score, or a reviewer being able to shift the aggregate by altering a single score).
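The summary does not define how the overlap figure is computed. One natural reading, stated here only as an assumption, is the fraction of accepted papers that also rank in the top k papers by aggregate score, with k equal to the number of accepted papers. The function name and the scores below are made up and do not reproduce the reported numbers.

```python
def top_k_overlap(aggregate_scores, accepted):
    """Fraction of accepted papers that also rank in the top k by aggregate score.

    aggregate_scores: dict paper_id -> aggregate score (higher is better).
    accepted: set of accepted paper ids; k = len(accepted).
    Ties keep dict insertion order, because sorted() is stable even with reverse=True.
    """
    k = len(accepted)
    if k == 0:
        raise ValueError("need at least one accepted paper")
    ranked = sorted(aggregate_scores, key=aggregate_scores.get, reverse=True)
    return len(set(ranked[:k]) & set(accepted)) / k


# Hypothetical scores for five papers, two of which were accepted.
scores = {"A": 4.5, "B": 3.0, "C": 4.0, "D": 2.0, "E": 3.5}
print(top_k_overlap(scores, {"A", "B"}))  # 0.5 (top 2 is {A, C})
```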

The paper discusses several practical considerations. The theoretical analysis assumes (a) every reviewer evaluates every paper and (b) criterion scores are objective and identical across reviewers, so disagreement stems solely from the mapping to overall scores. While these assumptions simplify the axiomatic characterization, real conferences often have partial coverage and noisy criteria. The monotonicity assumption on reviewers’ internal functions is also idealized; reviewers may apply non‑monotonic heuristics. Nonetheless, the authors argue that the framework remains robust and can be extended to settings with missing data or more complex utility models.

In conclusion, the work bridges machine‑learning loss design with social‑choice axioms, providing a principled answer to the “which loss should we use?” question in the context of peer‑review aggregation. By proving that only the L(1, 1) loss satisfies consensus, efficiency, and strategy‑proofness, the authors offer both a theoretically justified and empirically effective method for aligning individual reviewer judgments into a community‑wide recommendation function. The approach has broader implications for any ML problem where hyper‑parameter or loss‑function selection lacks ground truth, suggesting that axiomatic criteria can serve as a powerful guide for principled design.
