Privacy, Secrecy, and Storage with Multiple Noisy Measurements of Identifiers

The key-leakage-storage region is derived for a generalization of a classic two-terminal key agreement model. The additions to the model are that the encoder observes a hidden, or noisy, version of the identifier, and that the encoder and decoder can…

Authors: Onur Günlü, Gerhard Kramer

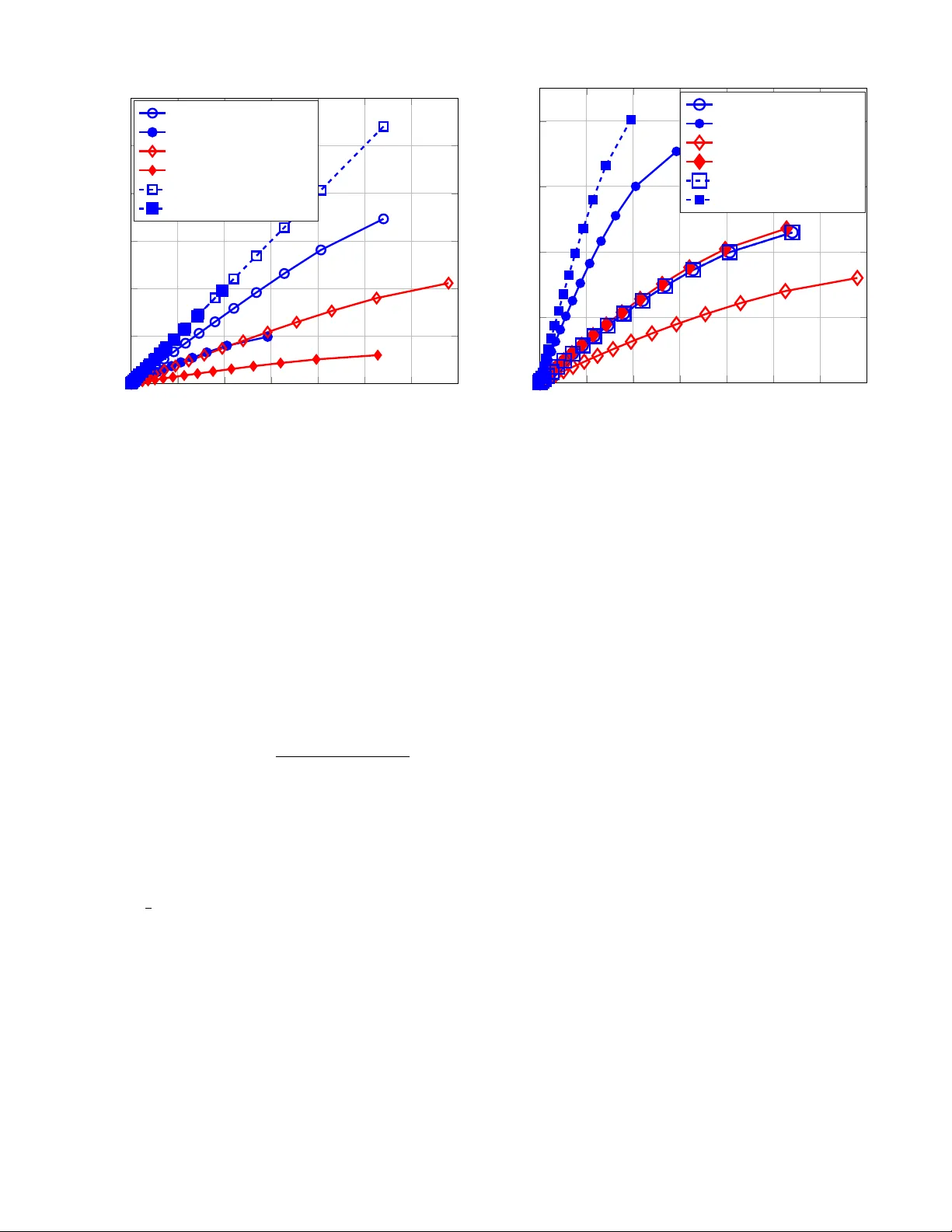

IEEE TRANSACTIONS ON INFORMATION FORENSICS AND SECURITY

Onur Günlü, Student Member, IEEE, and Gerhard Kramer, Fellow, IEEE

Abstract: The key-leakage-storage region is derived for a generalization of a classic two-terminal key agreement model. The additions to the model are that the encoder observes a hidden, or noisy, version of the identifier, and that the encoder and decoder can perform multiple measurements. To illustrate the behavior of the region, the theory is applied to binary identifiers and noise modeled via binary symmetric channels. In particular, the key-leakage-storage region is simplified by applying Mrs. Gerber's lemma twice in different directions to a Markov chain. The growth in the region as the number of measurements increases is quantified. The amount by which the privacy-leakage rate reduces for a hidden identifier as compared to a noise-free (visible) identifier at the encoder is also given. If the encoder incorrectly models the source as visible, it is shown that substantial secrecy leakage may occur and the reliability of the reconstructed key might decrease.

Index Terms: Information theoretic privacy, physical unclonable functions, hidden source model, Mrs. Gerber's lemma.

I. INTRODUCTION

Biometric identifiers can be used to authenticate or identify a user, and to generate secret keys [2]. Similarly, physical identifiers such as fine variations of ring oscillator (RO) outputs produce device "fingerprints" that can authenticate a device and generate keys [3]–[5]. For instance, physical unclonable functions (PUFs) are physical identifiers that are cheaper and safer alternatives to key storage in non-volatile memories [6], [7]. Replacing biometric or physical identifiers, e.g., if the fingerprint is stolen, is often not possible [8] or might require reconfigurable identifier designs [9].
Replaced physical identifiers may have correlated outputs with previous identifiers due to surrounding logic [10]. One should, therefore, limit the information leaked about the identifier outputs, as well as the information leaked about the secret key.

Manuscript received August 30, 2017; revised January 26, 2018 and April 28, 2018; accepted April 28, 2018. O. Günlü was supported by the German Research Foundation (DFG) through the project HoliPUF under the grant KR3517/6-1. G. Kramer was supported by an Alexander von Humboldt Professorship endowed by the German Federal Ministry of Education and Research. Parts of this paper were presented at the 2015 IEEE Conference on Communications and Network Security in [1]. The associate editor coordinating the review of this manuscript and approving it for publication was Dr. Tanya Ignatenko (Corresponding Author: Onur Günlü). The authors are with the Chair of Communications Engineering, Technical University of Munich, 80290 Munich, Germany (e-mail: {onur.gunlu, gerhard.kramer}@tum.de).

Consider the key agreement model introduced in [11] and [12] where two terminals observe dependent random variables and have access to a public communication link; an eavesdropper observes the messages, called helper data, transmitted over this link. We consider a generated-secret (GS) model and a chosen-secret (CS) model. For the GS model, an encoder extracts a key from the source, while for the CS model a key that is independent of the source is given to the first terminal. The information leaked through the helper data about the secret key, called the secrecy leakage, should be negligible. The information leaked about the identifier, called the privacy leakage, should be minimized so that an eavesdropper cannot obtain information about a secret key stored by another encoder that uses the same or a correlated identifier. The secret-key vs.
privacy-leakage, or key-leakage, regions for the two models are given in [8] and [13].

In addition to the secret-key and privacy-leakage rates, it is important to consider the amount of storage in the public link that is required for the decoder to reliably reconstruct the secret key [14]. The storage rate is generally equal to the privacy-leakage rate when we consider the GS model. Similarly, for the CS model, the storage rate is generally equal to the sum of the secret-key and privacy-leakage rates. The storage rate is different from the privacy-leakage rate for general (non-negligible) secrecy-leakage levels [15], unlike for the negligible secrecy-leakage rate constraint considered in [8] and [13]. We show that the storage and privacy-leakage rates are different also when the identifier is a remote or hidden source [16, p. 118], [17, p. 78].

Secret-key based user or device authentication with a privacy-leakage constraint is considered in [18]. There is an assumption in [18] that the eavesdropper has side information correlated with the identifier outputs, which is reasonable for biometric identifiers because they are continuously available for attacks. However, physical identifiers like PUFs are used for on-demand key reconstruction. Invasive attacks on PUFs also permanently change the identifier output [7], so we assume that the eavesdropper cannot obtain information correlated with the PUF output. Key agreement with correlated side information at the eavesdropper has been studied in [19]–[21].

A. Motivation

Multiple measurements of biometric or physical identifiers at the decoder can substantially decrease the privacy-leakage and storage rates because less side information is required to reconstruct the secret key as compared to a single measurement. One obtains a diversity gain, corresponding to a gain in reliability, to combat erroneous measurements by averaging over different channels.
One can also exploit the additional degrees of freedom by increasing the extracted secret-key size. The latter gain can be viewed as a multiplexing gain, in analogy to multiple antenna systems for wireless communications. Such gains in the achievable key-leakage rates are illustrated in [1] when there are multiple noisy measurements of the source at the decoder.

Fig. 1. The GS model where a secret key is generated from a noisy identifier measurement. (Block diagram: the source $Q_X$ emits $X^N$; the encoder observes $\widetilde{X}^N$ through $P_{\widetilde{X}|X}$ and computes $(S, M) = \mathrm{Enc}_1(\widetilde{X}^N)$; the decoder observes $Y^N$ through $P_{Y|X}$ and computes $\hat{S} = \mathrm{Dec}_1(Y^N, M)$.)

The above models assume that the encoder measures the "true" source. We propose that the true source, i.e., the ground truth, is instead hidden from the encoder and the encoder measures a noisy version of the source (see also discussions in [3] on key-binding with a hidden identifier, [22] where a hidden source is considered for authentication, and [23, Sec. II] for indirect rate-distortion problems with action-dependent side information). For example, many secrecy systems require multiple measurements at the encoder to obtain the "noise-free" output. As a second example, different systems may generate different sequences from the same identifier. Consider multiple encoders with independent channels from the hidden source to the corresponding encoder measurements. This is a valid scenario for biometric and physical identifiers due to differences in the environmental conditions when extracting secret keys by different encoders. An eavesdropper who wants to seize a secret key can use the information available from other encoders about the hidden source, which leads to privacy leakage with respect to the hidden source rather than the noisy encoder measurements.

B. Models for Identifier Outputs

We study the physical and biometric identifier outputs that are independent and identically distributed (i.i.d.)
according to a probability distribution with a discrete alphabet. These models are reasonable if one uses transform-coding algorithms, as in [24], to extract almost i.i.d. bits from PUFs under varying environmental conditions. Similar transform-coding based algorithms have been applied to biometric identifiers to obtain independent output symbols [25].

Fig. 2. The CS model where a secret key is given to the encoder together with a noisy identifier measurement. (Block diagram: as in Fig. 1, but the encoder computes $M = \mathrm{Enc}_2(\widetilde{X}^N, S)$ and the decoder computes $\hat{S} = \mathrm{Dec}_2(Y^N, M)$.)

C. Summary of Contributions and Organization

We extend the model of [8] and [13] to include multiple noisy identifier measurements at the encoder and decoder. A summary of the main contributions is as follows.
• We derive the key-leakage-storage regions for the GS and CS models with a hidden source; see Figs. 1 and 2 for the corresponding models. Our rate regions recover several results in the literature, including various results for a visible source without eavesdropper side information in [8], [11]–[14]. We further recover our previous results from [1] that studied the visible source model, as discussed in Section III.
• We evaluate the rate region for a binary hidden source with multiple measurements at the decoder and a single noisy measurement at the encoder by applying Mrs. Gerber's lemma (MGL) [26]. The analysis differs from [8] and [13] because we need to apply MGL twice in different directions to a Markov chain rather than once. For measurement channels with a certain symmetry, we find the optimal auxiliary random variable for coding.
• We show that a significant amount of secrecy might be leaked, and the reliability of the reconstructed key might decrease, if the visible source model is mistakenly used for multiple decoder measurements of a hidden source. Such a mistake leads to violations of the security and reliability constraints.
• Gains from having multiple measurements at the encoder are also illustrated. We show that gains in the secret-key rate can come at a large cost of storage.

This paper is organized as follows. In Section II, we describe our problem and develop the key-leakage-storage regions for the GS and CS models. The key-leakage-storage region of a binary hidden source with multiple measurements at the decoder is derived in Section III. In Section IV, we illustrate gains when using the hidden source model as compared to the visible one and depict the maximum secret-key rates achieved by having multiple encoder and decoder measurements. Achievability proofs and converses for the derived rate regions are given in Sections V and VI, respectively.

D. Notation

Upper case letters represent random variables and lower case letters their realizations. Superscripts denote a string of variables, e.g., $X^N = X_1 \ldots X_i \ldots X_N$, and subscripts denote the position of a variable in a string. A random variable $X$ has probability distribution $Q_X$ or $P_X$. Calligraphic letters such as $\mathcal{X}$ denote sets; set sizes are written as $|\mathcal{X}|$ and set complements are denoted as $\mathcal{X}^c$. $\mathcal{T}_\epsilon^N(Q_X)$ denotes the set of length-$N$ letter-typical sequences with respect to the probability distribution $Q_X$ and the positive number $\epsilon$ [27, Ch. 3], [28]. $H_b(x) = -x \log x - (1-x)\log(1-x)$ is the binary entropy function and $H_b^{-1}(\cdot)$ denotes its inverse with range $[0, 0.5]$. The $*$-operator is defined as $p * x = p(1-x) + (1-p)x$. $\mathrm{Unif}[1:N]$ denotes the uniform distribution over the integers $1, 2, \ldots, N$. The $\oplus$-operator denotes modulo-2 summation.

II. SYSTEM MODELS AND RATE REGIONS

A. System Models

Consider a discrete memoryless source that generates i.i.d. symbols $X^N$ from a finite set $\mathcal{X}$ according to a probability distribution $Q_X$.
Identifier outputs are noisy due to, for instance, cuts on a finger. The noise at the encoder and decoder is modeled as memoryless channels $P_{\widetilde{X}|X}$ and $P_{Y|X}$, respectively. The outputs of $P_{\widetilde{X}|X}$ and $P_{Y|X}$ are, respectively, the strings $\widetilde{X}^N$ with realizations from a finite set $\widetilde{\mathcal{X}}^N$, and $Y^N$ with realizations from a finite set $\mathcal{Y}^N$. We thus have

$$P_{\widetilde{X}^N X^N Y^N}(\tilde{x}^N, x^N, y^N) = \prod_{i=1}^{N} P_{\widetilde{X}|X}(\tilde{x}_i | x_i)\, Q_X(x_i)\, P_{Y|X}(y_i | x_i). \tag{1}$$

The distributions $P_{\widetilde{X}|X}$ and $P_{Y|X}$ are assumed to be known for now, although we later study what happens if the encoder treats $\widetilde{X}^N$ as the true source.

In the GS model depicted in Fig. 1, an encoder sees $\widetilde{X}^N$ and generates a secret key $S$ and helper data $M$ as $(S, M) = \mathrm{Enc}_1(\widetilde{X}^N)$, where $\mathrm{Enc}_1(\cdot)$ is an encoder mapping. The decoder estimates the key as $\hat{S} = \mathrm{Dec}_1(Y^N, M)$, where $\mathrm{Dec}_1(\cdot)$ is a decoder mapping. In the CS model shown in Fig. 2, $S$ is independent of $(X^N, \widetilde{X}^N, Y^N)$ and an encoder mapping $\mathrm{Enc}_2(\cdot)$ generates the helper data as $M = \mathrm{Enc}_2(\widetilde{X}^N, S)$. The decoder estimates the key as $\hat{S} = \mathrm{Dec}_2(Y^N, M)$, where $\mathrm{Dec}_2(\cdot)$ is a decoder mapping.

A (secret-key, privacy-leakage, storage) rate triple $(R_s, R_l, R_m)$ is achievable if, given any $\delta > 0$, there is some $N \ge 1$, an encoder, and a decoder for which $R_s = \frac{\log|\mathcal{S}|}{N}$ and

$$\Pr[S \neq \hat{S}] \le \delta \qquad \text{(reliability)} \tag{2}$$
$$\frac{1}{N} I(S; M) \le \delta \qquad \text{(weak secrecy)} \tag{3}$$
$$\frac{1}{N} I(X^N; M) \le R_l + \delta \qquad \text{(privacy)} \tag{4}$$
$$\frac{1}{N} H(S) \ge R_s - \delta \qquad \text{(uniformity)} \tag{5}$$
$$\frac{1}{N} H(M) \le R_m + \delta \qquad \text{(storage)}. \tag{6}$$

The key-leakage-storage region is the closure of the set of achievable rate tuples. We refer to models where $\widetilde{X}^N = X^N$ as visible source models (VSMs) and other cases as hidden source models (HSMs).

B. Key-leakage-storage Regions

We present the key-leakage-storage regions for the GS and CS models in Theorems 1 and 2, respectively. The proofs of the theorems are given in Sections V and VI.
We derive cardinality bounds for the auxiliary random variable in Appendix A. Using standard arguments, one can establish the convexity of the rate regions, i.e., there is no need for convexification via a time-sharing random variable.

Theorem 1. The key-leakage-storage region for the GS model is

$$\mathcal{R}_1 = \bigcup_{P_{U|\widetilde{X}}} \Big\{ (R_s, R_l, R_m) : \; 0 \le R_s \le I(U;Y), \;\; R_l \ge I(U;X) - I(U;Y), \;\; R_m \ge I(U;\widetilde{X}) - I(U;Y) \Big\} \tag{7a}$$

where

$$P_{U\widetilde{X}XY} = P_{U|\widetilde{X}} \cdot P_{\widetilde{X}|X} \cdot Q_X \cdot P_{Y|X}. \tag{7b}$$

Theorem 2. The key-leakage-storage region for the CS model is

$$\mathcal{R}_2 = \bigcup_{P_{U|\widetilde{X}}} \Big\{ (R_s, R_l, R_m) : \; 0 \le R_s \le I(U;Y), \;\; R_l \ge I(U;X) - I(U;Y), \;\; R_m \ge I(U;\widetilde{X}) \Big\} \tag{8a}$$

where

$$P_{U\widetilde{X}XY} = P_{U|\widetilde{X}} \cdot P_{\widetilde{X}|X} \cdot Q_X \cdot P_{Y|X}. \tag{8b}$$

Remark. The Markov conditions in (7b) and (8b) state that $U - \widetilde{X} - X - Y$ forms a Markov chain. One may restrict the cardinality of the auxiliary random variable $U$ to $|\mathcal{U}| \le |\widetilde{\mathcal{X}}| + 2$ for both theorems.

Remark. The converses for Theorems 1 and 2 permit randomization at the encoder (see (54)(b) and (57)(b)) and decoder (see (51)(a)). Since achievability requires no randomization, we may use deterministic encoders and decoders.

The achievability of $\mathcal{R}_2$ follows directly from the achievability of $\mathcal{R}_1$ by using the key $S$ of the GS model as a key of a one-time pad to secure a chosen key and storing the output at rate $I(U;Y)$. We recover the previous results in [8] and [13] if $\widetilde{X} = X$ in both theorems. The maximum achievable secret-key rate $I(\widetilde{X};Y)$ in these regions is at most $I(X;Y)$, which is the maximum achievable secret-key rate if the identifier $X^N$ is observed noise-free at the encoder. The minimum achievable privacy-leakage rate in these regions decreases as compared to [8] and [13] because $I(U;X) \le I(U;\widetilde{X})$, which follows from the Markov chain $U - \widetilde{X} - X$.

III. BINARY IDENTIFIER MEASUREMENTS

We evaluate the key-leakage-storage regions for a binary hidden source.
The binary random sequence $\widetilde{X}^N$ corresponds to a single noisy measurement of the binary source $X^N$ at the encoder, and the random sequence $Y^N_{1:M_D}$ is the output of $M_D$ measurements of $X^N$ for $M_D \ge 1$ at the decoder. We assume that the inverse channel $P_{X|\widetilde{X}}$ is a BSC, an assumption that is fulfilled if $P_X$ is uniform and $P_{\widetilde{X}|X}$ is a BSC. Moreover, we assume that the channel $P_{Y_{1:M_D}|X}$ can be decomposed into a mixture of BSCs (i.e., binary-input symmetric memoryless channels [29], [30]), as described in [1] and illustrated below in Fig. 3 for dependent BSCs. The former constraint lets us apply MGL to the Markov chain $U - \widetilde{X} - X$; the latter lets us apply an extension of MGL to the Markov chain $U - X - Y_{1:M_D}$. Recall that MGL is based on the result that, for any $0 \le p \le 1$, the function

$$f(\nu) = H_b\big(p * H_b^{-1}(\nu)\big) \tag{9}$$

is convex in $\nu$ for $0 \le \nu \le 1$ [26].

Evaluating the key-leakage-storage regions corresponds to maximizing $I(U;Y_{1:M_D})$ and minimizing $I(U;\widetilde{X})$ for a fixed $I(U;X)$. It thus requires minimizing $H(Y_{1:M_D}|U)$ and maximizing $H(\widetilde{X}|U)$ for a fixed $H(X|U)$. Let $\tilde{p}_i \in [0, 0.5]$ be the smaller transition probability from $U = u_i$ to $X = 0$ or $X = 1$ for $i \in \{1, 2, \ldots, |\mathcal{U}|\}$. We have

$$H(X|U) = \sum_{i=1}^{|\mathcal{U}|} P_U(u_i)\, H_b(\tilde{p}_i) \tag{10}$$
$$H(Y_{1:M_D}|U) = \sum_{i=1}^{|\mathcal{U}|} P_U(u_i)\, g(\tilde{p}_i) \tag{11}$$

where

$$g(\tilde{p}_i) = H(Y_{1:M_D} | U = u_i). \tag{12}$$

In the following, we first study dependent BSCs $P_{Y_{1:M_D}|X}$, which can be decomposed into a mixture of BSCs. We next discuss the convexity of the function $g(H_b^{-1}(\nu))$ in $\nu$ for binary-input channels $P_{Y_{1:M_D}|X}$ that can be decomposed into a mixture of BSCs to establish a tight lower bound on $H(Y_{1:M_D}|U)$ if we fix $H(X|U)$. Then, we simplify the key-leakage-storage regions of binary identifiers measured through such channels $P_{Y_{1:M_D}|X}$.

A. Measurements Through Dependent BSCs

We show that channels with multiple measurements of $X$ through dependent BSCs can be decomposed into a mixture of BSCs. For simplicity, consider $M_D = 3$ with

$$\begin{bmatrix} Y_1 \\ Y_2 \\ Y_3 \end{bmatrix} = X \begin{bmatrix} 1 \\ 1 \\ 1 \end{bmatrix} \oplus \begin{bmatrix} B_1 \\ B_2 \\ B_3 \end{bmatrix} \tag{13}$$

where $B_1$, $B_2$, and $B_3$ are mutually dependent binary random variables that are jointly independent of $X$. We can decompose the channel (13) into four BSCs, one for each pair of complementary output triples, since we have

$$P_{Y_1Y_2Y_3|X}(y_1, y_2, y_3 | x) = P_{Y_1Y_2Y_3|X}(\bar{y}_1, \bar{y}_2, \bar{y}_3 | \bar{x}) \tag{14}$$

where $\bar{x} = 1 - x$ is the one's complement of $x$. Define

$$q_{y_1y_2y_3} = P_{Y_1Y_2Y_3|X}(y_1, y_2, y_3 | 0). \tag{15}$$

The decomposed channel is depicted in Fig. 3, where the subchannel probabilities are

$$P_A(0) = q_{000} + q_{111} \tag{16}$$
$$P_A(1) = q_{001} + q_{110} \tag{17}$$
$$P_A(2) = q_{010} + q_{101} \tag{18}$$
$$P_A(3) = q_{011} + q_{100} \tag{19}$$

and the crossover probabilities are $p_0 = q_{111}/P_A(0)$, $p_1 = q_{110}/P_A(1)$, $p_2 = q_{101}/P_A(2)$, and $p_3 = q_{100}/P_A(3)$.

Fig. 3. $M_D = 3$ dependent BSCs represented as a mixture of BSCs. (Diagram: the input $X \in \{0, 1\}$ is routed with probability $P_A(a)$ to subchannel $a$, a BSC with crossover probability $p_a$ whose two outputs are the complementary triples $000/111$, $001/110$, $010/101$, and $011/100$ for $a = 0, 1, 2, 3$.)

More generally, we can decompose a channel with $M_D$ dependent BSC measurements into $2^{M_D-1}$ subchannels, each with two output symbols such that one symbol is the one's complement of the other symbol. We define

$$q_{b^{M_D}} = P_{Y_{1:M_D}|X}(b^{M_D} | 0) \tag{20}$$

for the length-$M_D$ binary string $b^{M_D} = b_0 b_1 \ldots b_{M_D-1}$ and

$$P_A(a) = q_{\mathrm{Bin}(a)} + q_{\overline{\mathrm{Bin}(a)}} \tag{21}$$

where $\mathrm{Bin}(a)$ is the length-$M_D$ binary representation of $a$, as in (16)-(19), and $\overline{\mathrm{Bin}(a)}$ is the one's complement of $\mathrm{Bin}(a)$ for $a = 0, 1, \ldots, 2^{M_D-1} - 1$. The crossover probability of the $a$-th subchannel is $p_a = q_{\overline{\mathrm{Bin}(a)}}/P_A(a)$.

B. Mixtures of BSCs

Consider a channel $P_{Y_{1:M_D}|X}$ with a binary input and $M_D$ binary measurements as output, i.e., the channel has $2^{M_D}$ possible output symbols. We decompose the channel into $L = 2^{M_D-1}$ BSCs as described above.
We index these BSCs from $0$ to $L-1$. Let $A = a$ represent the BSC index chosen by the channel and let $p_a$ be the crossover probability of the $a$-th subchannel. The conditional decoder-output entropy is

$$\begin{aligned} H(Y_{1:M_D}|U) &\overset{(a)}{=} H(Y_{1:M_D} A | U) \\ &\overset{(b)}{=} H(A) + \sum_{i=1}^{|\mathcal{U}|} P_U(u_i) \sum_{a=0}^{L-1} P_A(a)\, H(Y_{1:M_D} | A = a, U = u_i) \\ &\overset{(c)}{=} H(A) + \sum_{i=1}^{|\mathcal{U}|} P_U(u_i) \sum_{a=0}^{L-1} P_A(a)\, H_b\big(p_a * H_b^{-1}(H(X|U = u_i))\big) \\ &= \sum_{i=1}^{|\mathcal{U}|} P_U(u_i) \sum_{a=0}^{L-1} P_A(a) \big[ H_b(p_a * \tilde{p}_i) - \log P_A(a) \big] \end{aligned} \tag{22}$$

where (a) follows because the output symbols determine $A$, (b) follows since $A$ is independent of $X$ so that $U$ and $A$ are independent, and (c) follows because $H_b(p_a * p)$ is symmetric with respect to $p = \frac{1}{2}$. Using (12) and (22), we have

$$g(\tilde{p}) = \sum_{a=0}^{L-1} P_A(a) \big[ H_b(p_a * \tilde{p}) - \log P_A(a) \big]. \tag{23}$$

Examples of channels that are mixtures of BSCs are the dependent BSCs in Section III-A, the binary erasure channel (BEC) [31, p. 107], and additive white Gaussian noise (AWGN) channels with binary phase shift keying (BPSK) signals and symmetric (e.g., uniform) quantizers [31, p. 108].

The convexity property (9) carries over to channels $P_{Y_{1:M_D}|X}$ that can be decomposed into a mixture of BSCs [29], i.e., the function $g(\cdot)$ in (23) has the property that $g(H_b^{-1}(\nu))$ is convex in $\nu$ for $0 \le \nu \le 1$. To see this, note that $P_A(\cdot)$ is fixed and the following term in (22)(c)

$$\sum_{a=0}^{L-1} P_A(a)\, H_b\big(p_a * H_b^{-1}(\nu_i)\big)$$

where $\nu_i = H(X|U = u_i)$, is a weighted sum of convex functions of $\nu_i$ by MGL. We extend this result below in Theorem 3 to show that the boundary points of $\mathcal{R}_1$ and $\mathcal{R}_2$ are achieved by channels $P_{\widetilde{X}|U}$ that are BSCs.

C. Two Lemmas

Consider a binary-input channel $P_{Y_{1:M_D}|X}$. For Theorem 3 below, we use the following two technical lemmas.

Lemma 1. We have

$$H(Y_{1:M_D}|U) \ge g\big(H_b^{-1}(H(X|U))\big). \tag{24}$$

Proof: Since $g(H_b^{-1}(\nu))$ is convex in $\nu$, by Jensen's inequality we have

$$H(Y_{1:M_D}|U) = \sum_{i=1}^{|\mathcal{U}|} P_U(u_i)\, g\big(H_b^{-1}(H_b(\tilde{p}_i))\big) \ge g\left(H_b^{-1}\left(\sum_{i=1}^{|\mathcal{U}|} P_U(u_i)\, H_b(\tilde{p}_i)\right)\right).$$

Lemma 2. There is a unique $\tilde{p}^*$ in the interval $[0, 0.5]$ for which $H(X|U) = H_b(\tilde{p}^*) = \nu$.

Proof: The function $H_b(\cdot)$ is strictly increasing from $0$ to $1$ on the interval $[0, 0.5]$. We further have $0 \le H(X|U) \le H(X) \le 1$.

D. Simplified Rate Region Characterizations

We now simplify the key-leakage-storage regions for the measurement channels $P_{\widetilde{X}|X}$ and $P_{Y_{1:M_D}|X}$ considered above so that a single parameter characterizes the regions.

Theorem 3. Suppose $P_{X|\widetilde{X}}$ is a BSC with crossover probability $p$, where $0 \le p \le 0.5$, and $P_{Y_{1:M_D}|X}$ is a mixture of BSCs. The boundary points of $\mathcal{R}_1$ and $\mathcal{R}_2$ are achieved by channels $P_{\widetilde{X}|U}$ that are BSCs.

Proof: Consider the boundary points of $\mathcal{R}_1$

$$(R_s, R_l, R_m) = \big( I(U;Y_{1:M_D}),\; I(U;X) - I(U;Y_{1:M_D}),\; I(U;\widetilde{X}) - I(U;Y_{1:M_D}) \big).$$

For a fixed $H(X|U)$, we obtain

$$I(U;Y_{1:M_D}) \le H(Y_{1:M_D}) - g\big(H_b^{-1}(H(X|U))\big) \tag{25}$$

and

$$I(U;X) - I(U;Y_{1:M_D}) \ge H(X) - H(X|U) - H(Y_{1:M_D}) + g\big(H_b^{-1}(H(X|U))\big) \tag{26}$$

and

$$I(U;\widetilde{X}) - I(U;Y_{1:M_D}) \ge H(\widetilde{X}) - H_b\left(\frac{H_b^{-1}(H(X|U)) - p}{1 - 2p}\right) - H(Y_{1:M_D}) + g\big(H_b^{-1}(H(X|U))\big) \tag{27}$$

where we used Lemma 1 to bound $H(Y_{1:M_D}|U)$, and the MGL result in (9) with $\nu = H(\widetilde{X}|U)$ to bound $H(\widetilde{X}|U)$. By choosing $P_{U|\widetilde{X}}$ such that $P_{\widetilde{X}|U}$ is a BSC with crossover probability

$$\tilde{x} = \frac{H_b^{-1}(H(X|U)) - p}{1 - 2p} \tag{28}$$

where $\tilde{x} \in [0, 0.5]$, we achieve the right-hand sides of (25)-(27) since assigning $H(\widetilde{X}|U) = H_b(\tilde{x})$ achieves equality in (9) and (24) for the given channels. By Lemma 2, this $\tilde{x}$ is the unique solution. The proof for $\mathcal{R}_2$ is similar.
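As an illustration of Theorem 3, the following sketch evaluates a boundary triple of $\mathcal{R}_1$ as a function of the single parameter $\tilde{x}$ in (28). It is a sketch under stated assumptions rather than the authors' code: the source is taken to be uniform and binary so that $H(X) = H(\widetilde{X}) = 1$ bit, the decoder channel is taken to be $M_D$ independent BSCs, $P_{X|\widetilde{X}}$ is a BSC with crossover probability `p_e`, and all function names are hypothetical. With $P_{\widetilde{X}|U}$ a BSC with crossover probability $\tilde{x}$, one has $H(\widetilde{X}|U) = H_b(\tilde{x})$, $H(X|U) = H_b(\tilde{x} * p)$, and $H(Y_{1:M_D}|U) = g(\tilde{x} * p)$ with $g$ as in (23).

```python
import math
from itertools import product


def hb(x):
    """Binary entropy function in bits."""
    if x <= 0.0 or x >= 1.0:
        return 0.0
    return -x * math.log2(x) - (1 - x) * math.log2(1 - x)


def star(p, x):
    """The *-operator: p * x = p(1 - x) + (1 - p)x."""
    return p * (1 - x) + (1 - p) * x


def mixture_for_independent_bscs(m_d, p_d):
    """(P_A(a), p_a) pairs for M_D independent BSCs with crossover p_d."""
    pairs = []
    for tail in product((0, 1), repeat=m_d - 1):
        w = sum(tail)  # Hamming weight of Bin(a), whose first bit is 0
        q_b = p_d ** w * (1 - p_d) ** (m_d - w)
        q_bar = p_d ** (m_d - w) * (1 - p_d) ** w
        if q_b + q_bar > 0:  # skip subchannels that are never used
            pairs.append((q_b + q_bar, q_bar / (q_b + q_bar)))
    return pairs


def g(p_tilde, pairs):
    """Equation (23): H(Y_{1:M_D} | U = u) when P(X = 1 | U = u) = p_tilde."""
    return sum(pa * (hb(star(pc, p_tilde)) - math.log2(pa)) for pa, pc in pairs)


def gs_boundary_triple(x_tilde, p_e, pairs):
    """(R_s, R_l, R_m) when P_{X_tilde|U} is a BSC with crossover x_tilde."""
    h_y = g(0.5, pairs)  # H(Y_{1:M_D}) for a uniform X
    r_s = h_y - g(star(p_e, x_tilde), pairs)
    r_l = (1.0 - hb(star(p_e, x_tilde))) - r_s
    r_m = (1.0 - hb(x_tilde)) - r_s
    return r_s, r_l, r_m


# x_tilde = 0 corresponds to U = X_tilde, i.e., the maximum secret-key rate.
for m_d in (1, 3):
    triple = gs_boundary_triple(0.0, 0.03, mixture_for_independent_bscs(m_d, 0.10))
    print(m_d, tuple(round(r, 3) for r in triple))
```

Sweeping `x_tilde` over $[0, 0.5]$ traces the boundary from the maximum secret-key rate point down to the all-zero triple. The choice $\tilde{x} = 0$ with $p_E = 0.03$ and $p_D = 0.10$ should agree, up to rounding, with the $(M_E, M_D) = (1, 1)$ and $(1, 3)$ entries reported later in Table I.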
The convexity property for a BSC, used in MGL, is extended to any binary channel $P_{Y_1|X}$ by Witsenhausen in [32], by Wyner as a remark in [32, Sec. III], and also by Ahlswede and Körner in [33]. Therefore, the channels $P_{Y_{1:M_D}|X}$ that can be decomposed into a mixture of binary channels also satisfy the convexity property. This result follows because the function $g(\cdot)$ for such channels, obtained from (12), also consists of a constant part and a weighted sum of functions that are convex in $\nu_i$.

Remark. In [1, Theorem 1], we claimed that for a mixture $P_{Y_{1:M_D}|X}$ of binary channels, we achieve the boundary points of $\mathcal{R}_1$ and $\mathcal{R}_2$ when $\widetilde{X}^N = X^N$ by using channels $P_{X|U}$ that are BSCs. It turns out that this claim is valid for mixtures $P_{Y_{1:M_D}|X}$ of BSCs, but not necessarily otherwise. The reason is that one cannot necessarily achieve equality in [1, (25) and (26)]. This is illustrated in Appendix B for a binary asymmetric channel.

Fig. 4. Storage-leakage projection of the boundary triples for the GS model with $p_D = 0.10$. (Axes: storage rate vs. privacy-leakage rate, both in bits/source-bit. Curves: HSM with $p_E \in \{0.03, 0.10\}$ and $M_D \in \{1, 3\}$; VSM with $p_E = 0.03$ and $M_D \in \{1, 3\}$.)

IV. MODEL COMPARISONS

A. Hidden Source Model

We study the GS model with a hidden binary symmetric source (BSS) such that $Q_X(0) = Q_X(1) = 0.5$. Suppose $P_{\widetilde{X}|X}$ is a BSC with crossover probability $p_E$ and $P_{Y_{1:M_D}|X}$ consists of $M_D$ independent BSCs each with crossover probability $p_D$. The inverse channel $P_{X|\widetilde{X}}$ is also a BSC with crossover probability $p_E$ due to source symmetry. Due to the independence assumption for $M_D$ BSCs, the probabilities of sequences $y_{1:M_D}$ with the same Hamming weight are equal.
Therefore, the decoder-output entropy is

$$H(Y_{1:M_D}) = \sum_{k=0}^{M_D} \Pr\left[\sum_{m=1}^{M_D} Y_m = k\right] \times \log_2\left( \binom{M_D}{k} \Big/ \Pr\left[\sum_{m=1}^{M_D} Y_m = k\right] \right) \tag{29}$$

where

$$\Pr\left[\sum_{m=1}^{M_D} Y_m = k\right] = \binom{M_D}{k} \left( \frac{\bar{p}_D^{\,M_D-k}\, p_D^{\,k} + \bar{p}_D^{\,k}\, p_D^{\,M_D-k}}{2} \right) \tag{30}$$

with $\bar{p}_D = 1 - p_D$.

By Theorem 3, the crossover probability $\tilde{x}$, as in (28), of the BSC $P_{\widetilde{X}|U}$ is the only parameter required to characterize $\mathcal{R}_1$ for the considered source and channels. Thus, using Theorem 3, the conditional entropy $H(Y_{1:M_D}|U)$ can be calculated similarly as in (29) by using the weighted sum in (30) with the weights $\tilde{p} = \tilde{p}_1 = \tilde{p}_2$ and $1 - \tilde{p}$, defined in Section III, instead of $\frac{1}{2}$.

B. Mismatched Code Design

The encoder, e.g., a hardware manufacturer (for PUFs) or a trusted entity (for biometrics), models the source as visible or hidden, and a code is then constructed for the assumed model. Therefore, the assumed model determines the performance of the actual system. In the literature, the physical and biometric identifiers are modeled by the VSM; see, e.g., [8], [13], [34], [35]. We first illustrate that treating the HSM as if it were a VSM might give pessimistic privacy-leakage rate results for $M_D \ge 1$ and over-optimistic secret-key and storage rate results for $M_D > 1$, which results in unnoticed secrecy leakage and reduced reliability.

Fig. 5. Storage-key projection of the boundary triples for the GS model with $p_D = 0.10$. (Axes: storage rate vs. secret-key rate, both in bits/source-bit. Curves: HSM with $p_E \in \{0.03, 0.10\}$ and $M_D \in \{1, 3\}$; VSM with $p_E = 0.03$ and $M_D \in \{1, 3\}$.)

Consider the crossover probabilities $p_E \in \{0.03, 0.10\}$, which are realistic values for biometric [8] and physical identifiers [3]. We fix the crossover probability of $P_{Y_i|X}$ for $i = 1, 2, \ldots, M_D$ to $p_D = 0.10$.
For the supposed VSM, $\widetilde{X}^N$ is mistakenly considered to be a noise-free source, i.e., $p_E^{\mathrm{VSM}} = 0$, and the corresponding decoder-output channel $P^{\mathrm{VSM}}_{Y_{1:M_D}|\widetilde{X}}$ consists of $M_D$ independent BSCs each with crossover probability $p_E * p_D$ because $P_{Y|\widetilde{X}}$ is estimated from identifier measurements. However, the HSM considers an encoder measurement through a BSC with crossover probability $p_E$ and $M_D$ independent decoder measurements through BSCs, each with crossover probability $p_D$. Therefore, the HSM results in a conditional probability distribution $P_{Y_{1:M_D}|\widetilde{X}}$ that is different from the supposed VSM distribution $P^{\mathrm{VSM}}_{Y_{1:M_D}|\widetilde{X}}$ for $M_D > 1$ and in a key-leakage-storage region $\mathcal{R}_1$ that is different from the supposed VSM region $\mathcal{R}_1^{\mathrm{VSM}}$ for $M_D \ge 1$. We next illustrate the rate regions for different numbers of encoder and decoder measurements with the $p_E$ and $p_D$ values given above.

C. Single Encoder and Multiple Decoder Measurements

The projections of the boundary triples $(R_s, R_l, R_m)$ for the HSM and VSM onto the $(R_m, R_l)$-plane and onto the $(R_m, R_s)$-plane are depicted in Fig. 4 and Fig. 5, respectively, for different crossover probabilities at the encoder and different numbers of measurements at the decoder. Every marker on each curve corresponds to the evaluation of the rate-region boundaries for a fixed crossover probability $\tilde{x}$ given in (28), so Figs. 4 and 5 should be considered jointly for analysis. Recall from (7a) that any smaller $R_s$ and greater $R_l$ and $R_m$ than the boundary triples are achievable.

At the highest storage-leakage points $(R_m^*, R_l^*)$ in Fig. 4, one achieves the maximum secret-key rates $R_s^*$, which correspond to the highest points in Fig. 5. Moreover, Fig. 4 shows that if $M_D = 1$, for the supposed VSM the privacy-leakage and storage rates are equal, and are also equal to the storage rate for the HSM, and the supposed VSM gives pessimistic privacy-leakage rate results. Fig. 5 shows that increasing the number of decoder measurements increases the maximum secret-key rate $R_s^*$ for the HSM and supposed VSM. The $R_s^*$ of the HSM and supposed VSM are equal if $M_D = 1$, but the supposed VSM gives over-optimistic secret-key and storage rate results for $M_D > 1$. These comparisons show that designing a code for the supposed VSM can lead to substantial secrecy leakage, which violates (3), and reliability reduction, which violates (2).

Consider, for instance, the parameters $p_E = 0.03$, $p_D = 0.10$, $M_D = 35$. For the GS and CS models with the HSM, $R_l^*$ is approximately $3 \times 10^{-9}$ bits/source-bit. The privacy-leakage rate can thus be made small for both models with multiple decoder measurements. $R_m^*$ is approximately $0.194$ bits/source-bit for the GS model, which is smaller than the $0.541$ bits/source-bit obtained for $M_D = 1$. We remark that less storage decreases the hardware cost. It is not possible for the CS model to give such a small storage rate with multiple decoder measurements since the key is independent of the hidden source. Independence of the key results in an additional storage rate, equal to $R_s^*$ that is non-decreasing with respect to $M_D$, to reliably reconstruct the secret key at the decoder.

One may build intuition about the gains achieved by having multiple decoder measurements as follows. Since the decoder sees $M_D$ noisy versions $Y_{1:M_D}$ of the same hidden source symbol $X$, it can "combine" the measurements to form a less noisy equivalent channel.
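The numbers quoted above for $p_E = 0.03$, $p_D = 0.10$, and $M_D = 35$ can be checked approximately with a short sketch. It is not the authors' code: it assumes a uniform binary source, independent decoder BSCs, and the choice $U = \widetilde{X}$ for the maximum secret-key rate point of the GS model, and the function names are hypothetical. Outputs are grouped by Hamming weight, as in (29) and (30) with the weights $\tilde{p}$ and $1 - \tilde{p}$ in place of $\frac{1}{2}$, so that only $M_D + 1$ terms are summed instead of $2^{M_D}$.

```python
import math


def hb(x):
    """Binary entropy function in bits."""
    if x <= 0.0 or x >= 1.0:
        return 0.0
    return -x * math.log2(x) - (1 - x) * math.log2(1 - x)


def cond_output_entropy(m_d, p_d, p_tilde):
    """H(Y_{1:M_D} | U = u) in bits when P(X = 1 | U = u) = p_tilde.

    All output sequences with the same Hamming weight k are equally likely,
    so the sum runs over the M_D + 1 possible weights.
    """
    total = 0.0
    for k in range(m_d + 1):
        count = math.comb(m_d, k)
        pr = count * (
            (1 - p_tilde) * p_d ** k * (1 - p_d) ** (m_d - k)
            + p_tilde * p_d ** (m_d - k) * (1 - p_d) ** k
        )
        if pr > 0:
            total += pr * math.log2(count / pr)
    return total


def max_key_rate_point(m_d, p_e, p_d):
    """(R_s*, R_l*, R_m*) for the GS model with the HSM, taking U = X_tilde."""
    h_y = cond_output_entropy(m_d, p_d, 0.5)  # uniform X gives (29)
    r_s = h_y - cond_output_entropy(m_d, p_d, p_e)  # I(X_tilde; Y_{1:M_D})
    r_l = (1.0 - hb(p_e)) - r_s  # I(X_tilde; X) - I(X_tilde; Y_{1:M_D})
    r_m = 1.0 - r_s  # H(X_tilde | Y_{1:M_D})
    return r_s, r_l, r_m


for m_d in (1, 35):
    r_s, r_l, r_m = max_key_rate_point(m_d, 0.03, 0.10)
    print(f"M_D={m_d}: R_s*={r_s:.3f}, R_l*={r_l:.2e}, R_m*={r_m:.3f}")
```

The storage rate for $M_D = 35$ is close to $H_b(p_E)$, which is the smallest value $H(\widetilde{X}|Y_{1:M_D})$ can take because $H(\widetilde{X}|Y_{1:M_D}) \ge H(\widetilde{X}|X)$.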
Combining the decoder measurements in this way is entirely similar to using maximal ratio combining to obtain a sufficient statistic about a symbol that is transmitted several times over an AWGN channel. The resulting gain may thus be interpreted as a diversity gain.

Figs. 4 and 5 further show that increasing $p_E$ decreases $R_s^*$ and $R_l^*$, and increases $R_m^*$ for the HSM. For instance, consider the HSM, and fix $M_D = 1$ and the secret-key rate to its maximum $R_s^* = 0.320$ bits/source-bit achieved for $p_E = 0.10$. The storage rate for $p_E = 0.03$ is approximately 56.2% less than the storage rate for $p_E = 0.10$ and their privacy-leakage rates are equal. Therefore, more reliable encoder-output channels $P_{\widetilde{X}|X}$, i.e., channels with smaller $p_E$ values, achieve better storage rates. Similarly, we can show that more reliable decoder-output channels $P_{Y_{1:M_D}|X}$, i.e., channels with smaller $p_D$ values, improve the rate triples (see also [1, Fig. 5]) due to the independence assumption for encoder and decoder measurements.

Remark. One can alternatively consider encoder and decoder measurements through a broadcast channel. An unreliable channel at the encoder might be desirable for this case if the decoder-output channel is unreliable, since such correlations might allow less storage and privacy-leakage, and greater secret-key rates.

D. Multiple Encoder and Decoder Measurements

Consider the general case with $M_E \geq 1$ measurements $\widetilde{X}^N_{1:M_E} = [\widetilde{X}_1\, \widetilde{X}_2\, \ldots\, \widetilde{X}_{M_E}]^N$ of the hidden source $X^N$ at the encoder for the GS model with the HSM. Suppose each encoder-output channel $P_{\widetilde{X}_i|X}$ for $i = 1, 2, \ldots, M_E$ is an independent BSC with crossover probability $p_E$. The maximum secret-key rate $R_s^*$ is achieved by choosing $U = \widetilde{X}_{1:M_E}$ for $\mathcal{R}_1$. We list the $(R_s^*, R_l^*, R_m^*)$ points in Table I for different numbers of encoder and decoder measurements with $p_E = 0.03$ and $p_D = 0.10$. Table I shows that the storage rates for multiple encoder measurements can be greater than 1 bit/source-bit, which cannot be the case for a single encoder measurement. Increasing the number $M_E$ of encoder measurements to increase the secret-key rate, as listed in Table I, can therefore come at a large cost of storage and can increase the privacy-leakage rate.

TABLE I
KEY-LEAKAGE-STORAGE $(R_s^*, R_l^*, R_m^*)$ RATE POINTS FOR THE GS MODEL WITH THE HSM FOR $p_E = 0.03$ AND $p_D = 0.10$.

| $(M_E, M_D)$ | $(R_s^*, R_l^*, R_m^*)$ bits/source-bit |
|---|---|
| $(1, 1)$ | $(0.459, 0.346, 0.541)$ |
| $(1, 3)$ | $(0.707, 0.098, 0.293)$ |
| $(3, 1)$ | $(0.525, 0.458, 1.041)$ |
| $(3, 3)$ | $(0.849, 0.134, 0.717)$ |

V. ACHIEVABILITY PROOFS

A. Achievability Proof for Theorem 1

1) Overview: We choose the conditional probabilities $P_{U|\widetilde{X}}(u|\tilde{x})$ for all $u \in \mathcal{U}$ and $\tilde{x} \in \widetilde{\mathcal{X}}$. We randomly and independently generate about $2^{N I(U;\widetilde{X})}$ sequences $u^N(m,s)$ for $m = 1, \ldots, 2^{NR_m}$ and $s = 1, \ldots, 2^{NR_s}$. Consider approximately $2^{N(I(U;\widetilde{X}) - I(U;Y))}$ storage labels $m$ and $2^{N I(U;Y)}$ key labels $s$, which can be considered as bins. The encoder finds a $u^N(m,s)$ sequence that is jointly typical with the observed measurement $\tilde{x}^N$ of the source $x^N$. It then publicly sends the storage label $m$ to the decoder. The decoder sees another measurement $y^N$ of the source and it determines the unique $u^N(m,\hat{s})$ that is jointly typical with $y^N$. Using standard arguments, one can show that the error probability $\Pr[S \neq \hat{S}] \to 0$ as $N \to \infty$. The secrecy-leakage rate is negligible if there is no error. The privacy-leakage rate is approximately $I(U;X) - I(U;Y)$, which requires a different analysis than in [8].

2) Proof: Fix $P_{U|\widetilde{X}}$. Randomly and independently generate codewords $u^N(m,s)$, $m = 1, \ldots, 2^{NR_m}$, $s = 1, \ldots, 2^{NR_s}$ according to $\prod_{i=1}^{N} P_U(u_i)$, where

$$P_U(u_i) = \sum_{(\tilde{x},x) \in \widetilde{\mathcal{X}} \times \mathcal{X}} P_{U|\widetilde{X}}(u_i|\tilde{x})\, P_{\widetilde{X}|X}(\tilde{x}|x)\, Q_X(x). \tag{31}$$

These codewords define the codebook $\mathcal{C} = \{u^N(m,s),\ m = 1, \ldots, 2^{NR_m},\ s = 1, \ldots, 2^{NR_s}\}$ and we denote the random codebook by

$$\widetilde{\mathcal{C}} = \{U^N(m,s)\}_{(m,s)=(1,1)}^{(2^{NR_m},\,2^{NR_s})}. \tag{32}$$

Let $0 < \epsilon' < \epsilon$.

Encoding: Given $\tilde{x}^N$, the encoder looks for a codeword that is jointly typical with $\tilde{x}^N$, i.e., $(u^N(m,s), \tilde{x}^N) \in \mathcal{T}^N_{\epsilon'}(P_{U\widetilde{X}})$. If there is one or more such codeword, then the encoder chooses one of them and puts out $(m,s)$. If there is no such codeword, set $m = s = 1$. The encoder publicly stores the label $m$.

Decoding: The decoder puts out $\hat{s}$ if there is a unique key label $\hat{s}$ that satisfies the typicality check $(u^N(m,\hat{s}), y^N) \in \mathcal{T}^N_{\epsilon}(P_{UY})$; otherwise, it sets $\hat{s} = 1$.

Error Probability: Define the error events

$$\begin{aligned}
E_1 &= \{(U^N(m,s), \widetilde{X}^N) \notin \mathcal{T}^N_{\epsilon'}(P_{U\widetilde{X}}) \text{ for all } (m,s) \in [1:2^{NR_m}] \times [1:2^{NR_s}]\} \\
E_2 &= \{(U^N(M,s), \widetilde{X}^N, Y^N) \notin \mathcal{T}^N_{\epsilon}(P_{U\widetilde{X}Y}) \text{ for all } s \in [1:2^{NR_s}]\} \\
E_3 &= \{(U^N(M,s'), Y^N) \in \mathcal{T}^N_{\epsilon}(P_{UY}) \text{ for some } s' \neq S\}
\end{aligned}$$

and the overall error event $E = \cup_{i=1}^{3} E_i$. Using the union bound, we have

$$\Pr[E] \leq \Pr[E_1] + \Pr[E_1^c \cap E_2] + \Pr[E_3]. \tag{33}$$

$\Pr[E_1]$ is small with large $N$ and small $\epsilon'$ if

$$R_m + R_s > I(U;\widetilde{X}) + \delta(\epsilon') \tag{34}$$

where $\delta(\epsilon')$ is small with small $\epsilon'$ (see the covering lemma [36, Lemma 3.3]). Note that the event $\{\widetilde{X}^N = \tilde{x}^N, U^N = u^N\}$ implies $Y^N \sim \prod_{i=1}^{N} P_{Y|\widetilde{X}}(y_i|\tilde{x}_i)$. By the conditional typicality lemma [36, Section 2.5], we obtain that $\Pr[E_1^c \cap E_2]$ is small with large $N$. Due to symmetry in the code generation, we can set $M = 1$ and have

$$\Pr[E_3] = \Pr[(U^N(1,s'), Y^N) \in \mathcal{T}^N_{\epsilon}(P_{UY}) \text{ for some } s' \neq S].$$
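Using the packing lemma [36, Lemma 3.1], we find that $\Pr[E_3]$ is small with large $N$ and small $\epsilon$ if

$$R_s < I(U;Y) - \delta(\epsilon) \tag{35}$$

where $\delta(\epsilon)$ is small with small $\epsilon$. We therefore define some $\delta_1$ and $\delta_2$, where $\delta_2 > \delta(\epsilon)$ and $\delta_1 > \delta(\epsilon') + \delta_2$, that are small with small $\epsilon$ and some $\delta'$ that is small with large $N$ and small $\epsilon$ such that

$$\Pr[E] \leq \delta' \tag{36}$$
$$R_m = I(U;\widetilde{X}) - I(U;Y) + \delta_1 \tag{37}$$
$$R_s = I(U;Y) - \delta_2. \tag{38}$$

We first establish bounds on the secrecy-leakage, secret-key, privacy-leakage, and storage rates averaged over the random codebook $\widetilde{\mathcal{C}}$ and then we show that there exists a codebook satisfying (2)-(6). In the following, $U^N$ represents $U^N(M,S)$.

Secrecy-leakage Rate: Observe that

$$\begin{aligned}
H(MS|\widetilde{\mathcal{C}}) &\overset{(a)}{=} H(U^N M S|\widetilde{\mathcal{C}}) \geq H(U^N|\widetilde{\mathcal{C}}) \\
&= H(U^N \widetilde{X}^N|\widetilde{\mathcal{C}}) - H(\widetilde{X}^N|U^N \widetilde{\mathcal{C}}) \\
&\overset{(b)}{\geq} N H(\widetilde{X}) - H(\widetilde{X}^N|U^N \widetilde{\mathcal{C}}) \\
&\overset{(c)}{\geq} N H(\widetilde{X}) - N\big(H(\widetilde{X}|U) + \delta_\epsilon\big) \\
&= N\big(I(U;\widetilde{X}) - \delta_\epsilon\big) \\
&\overset{(d)}{=} N\big(R_m + R_s - \delta_1 + \delta_2 - \delta_\epsilon\big)
\end{aligned} \tag{39}$$

where (a) follows because, given the codebook, $MS$ determines $U^N$, (b) follows because $\widetilde{X}^N$ is independent of the codebook, (c) follows by using [37, Lemma 4] for $\delta_\epsilon$ that is small with small $\epsilon$, and (d) follows by (37) and (38). Using (39), we obtain

$$\begin{aligned}
\frac{1}{N} I(S;M|\widetilde{\mathcal{C}}) &= \frac{1}{N}\big(H(S|\widetilde{\mathcal{C}}) + H(M|\widetilde{\mathcal{C}}) - H(MS|\widetilde{\mathcal{C}})\big) \\
&\leq \frac{1}{N}\big(N R_s + N R_m - H(MS|\widetilde{\mathcal{C}})\big) \\
&\leq \delta_1 - \delta_2 + \delta_\epsilon
\end{aligned} \tag{40}$$

which is small with small $\epsilon$.

Key Uniformity: We have

$$\frac{1}{N} H(S|\widetilde{\mathcal{C}}) \geq \frac{1}{N}\big(H(MS|\widetilde{\mathcal{C}}) - H(M|\widetilde{\mathcal{C}})\big) \overset{(a)}{\geq} R_s - \delta_1 + \delta_2 - \delta_\epsilon \tag{41}$$

where (a) follows by (39).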
Privacy-leakage Rate: First, consider

$$\begin{aligned}
H(M|X^N \widetilde{\mathcal{C}}) &= H(M \widetilde{X}^N|X^N \widetilde{\mathcal{C}}) - H(\widetilde{X}^N|M X^N \widetilde{\mathcal{C}}) \\
&\overset{(a)}{\geq} H(\widetilde{X}^N|X^N) - H(\widetilde{X}^N|M X^N \widetilde{\mathcal{C}}) \\
&\overset{(b)}{=} H(\widetilde{X}^N|X^N) - H(\widetilde{X}^N S|M X^N \widetilde{\mathcal{C}}) \\
&\overset{(c)}{\geq} N H(\widetilde{X}|X) - H(S|M X^N \widetilde{\mathcal{C}}) - H(\widetilde{X}^N|X^N U^N \widetilde{\mathcal{C}}) \\
&\overset{(d)}{=} N H(\widetilde{X}|X) - H(S|M X^N Y^N \hat{S} \widetilde{\mathcal{C}}) - H(\widetilde{X}^N|X^N U^N \widetilde{\mathcal{C}}) \\
&\overset{(e)}{\geq} N H(\widetilde{X}|X) - \Pr[E]\log|\mathcal{S}| - H_b(\Pr[E]) - H(\widetilde{X}^N|X^N U^N \widetilde{\mathcal{C}}) \\
&\overset{(f)}{=} N H(\widetilde{X}|X) - N\delta'' - H(\widetilde{X}^N|X^N U^N \widetilde{\mathcal{C}}) \\
&\overset{(g)}{\geq} N H(\widetilde{X}|X) - N\delta'' - N\big(H(\widetilde{X}|XU) + \delta'_\epsilon\big) \\
&= N\big(I(U;\widetilde{X}|X) - (\delta'' + \delta'_\epsilon)\big)
\end{aligned} \tag{42}$$

where (a) follows because $\widetilde{\mathcal{C}}$ is independent of $\widetilde{X}^N X^N$, (b) follows because, given the codebook, $\widetilde{X}^N$ determines $S$, (c) follows since, given the codebook, $MS$ determines $U^N$, (d) follows by the Markov chain $SMU^N - \widetilde{X}^N - X^N - Y^N$, (e) follows from Fano's inequality, (f) follows by using $|\mathcal{S}| \leq |\widetilde{\mathcal{X}}|^N$ and defining a parameter $\delta''$ that is small with large $N$ and small $\epsilon$ due to (36), and (g) follows by using [37, Lemma 4] for $\delta'_\epsilon$ that is small with small $\epsilon$. Using (42), we have

$$\begin{aligned}
\frac{1}{N} I(X^N;M|\widetilde{\mathcal{C}}) &= \frac{1}{N}\big(H(M|\widetilde{\mathcal{C}}) - H(M|X^N \widetilde{\mathcal{C}})\big) \\
&\leq R_m - \big(I(U;\widetilde{X}|X) - (\delta'' + \delta'_\epsilon)\big) \\
&\overset{(a)}{=} R_m - \big(H(U|X) - H(U|\widetilde{X}) - (\delta'' + \delta'_\epsilon)\big) \\
&\overset{(b)}{=} I(U;X) - I(U;Y) + \delta'' + \delta'_\epsilon + \delta_1
\end{aligned} \tag{43}$$

where (a) follows by the Markov chain $U - \widetilde{X} - X$ and (b) follows by (37).

Storage Rate: Using (37), we have

$$\frac{1}{N} H(M|\widetilde{\mathcal{C}}) \leq R_m = I(U;\widetilde{X}) - I(U;Y) + \delta_1. \tag{44}$$

Applying the selection lemma [38, Lemma 2.2] to these results, there exists a codebook for the GS model that approaches the key-leakage-storage triple

$$(R_s, R_l, R_m) = \big(I(U;Y),\ I(U;X) - I(U;Y),\ I(U;\widetilde{X}) - I(U;Y)\big).$$

B. Achievability Proof for Theorem 2

1) Overview: We use the achievability proof of the GS model in combination with a one-time pad to conceal the embedded secret key $S$ by the key $S'$ generated by the GS model. The embedded key $S$ is uniformly distributed and independent of other random variables. The secret-key and privacy-leakage rates do not change, but the storage rate $I(U;\widetilde{X})$ is approximately the sum of the storage and secret-key rates of the GS model.

2) Proof: Suppose $S$ has the same cardinality as $S'$, i.e., $|\mathcal{S}| = |\mathcal{S}'|$. We use the codebook, encoder, and decoder of the GS model and add the masking layer (one-time pad) approach of [11] and [8] for the CS model as follows:

$$M = \mathrm{Enc}_2(\widetilde{X}^N, S) = [S' + S,\ M'] \tag{45}$$
$$\hat{S} = \mathrm{Dec}_2(Y^N, M) = S' + S - \hat{S}' \tag{46}$$

where $M'$ is the helper data for the GS model, and the addition and subtraction operations are modulo-$|\mathcal{S}|$.

Error Probability: We have

$$\Pr[S \neq \hat{S}] = \Pr[S' \neq \hat{S}'] \tag{47}$$

which is small by (36).

Secrecy-leakage Rate: The helper data $M$ of the CS model consists of $S' + S$ and the helper data $M'$ of the GS model. Using (40), (41), and since $S$ is independent of $S' M' \widetilde{\mathcal{C}}$ and uniformly distributed, we obtain

$$\frac{1}{N} I(S; M', S' + S|\widetilde{\mathcal{C}}) \leq 2(\delta_1 - \delta_2 + \delta_\epsilon). \tag{48}$$

We thus have a secrecy-leakage rate that is small with small $\epsilon$.

Privacy-leakage Rate: Using (43), we have

$$\frac{1}{N} I(X^N; M', S' + S|\widetilde{\mathcal{C}}) \leq I(U;X) - I(U;Y) + \delta'' + \delta'_\epsilon + \delta_1 \tag{49}$$

since $S' + S$ is independent of $M' X^N \widetilde{\mathcal{C}}$.

Storage Rate: We obtain

$$\begin{aligned}
\frac{1}{N} H(M', S' + S|\widetilde{\mathcal{C}}) &\overset{(a)}{\leq} R_m + \frac{1}{N} H(S' + S) \\
&\overset{(b)}{=} I(U;\widetilde{X}) - I(U;Y) + \delta_1 + I(U;Y) - \delta_2 \\
&= I(U;\widetilde{X}) + \delta_1 - \delta_2
\end{aligned} \tag{50}$$

where (a) follows because $S' + S$ is independent of $M' \widetilde{\mathcal{C}}$ and (b) follows by (37) and (38).
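The masking layer in (45) and (46) can be illustrated with a small sketch. It is not the authors' implementation: keys are represented as integers in $\{0, \ldots, |\mathcal{S}| - 1\}$, the GS-model key $S'$ and its decoder estimate $\hat{S}'$ are simply passed in as arguments rather than produced by a real GS encoder and decoder, and all function and variable names are hypothetical.

```python
import secrets


def mask_key(embedded_key, gs_key, cardinality):
    """Encoder side of (45): the stored value (S' + S) mod |S|."""
    return (gs_key + embedded_key) % cardinality


def unmask_key(masked_key, gs_key_estimate, cardinality):
    """Decoder side of (46): subtract the reconstructed GS key modulo |S|."""
    return (masked_key - gs_key_estimate) % cardinality


cardinality = 2**16                             # illustrative alphabet size |S| = |S'|
gs_key = secrets.randbelow(cardinality)         # stands in for S' from the GS model
embedded_key = secrets.randbelow(cardinality)   # uniform embedded key S

masked = mask_key(embedded_key, gs_key, cardinality)
# Here the decoder is assumed to have reconstructed S' without error.
recovered = unmask_key(masked, gs_key, cardinality)
```

When the decoder's estimate equals $S'$, the embedded key is recovered exactly, which is the content of (47); a wrong estimate of $S'$ yields a wrong embedded key.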
Using the selection lemma [38, Lemma 2.2], there exists a codebook for the CS model that approaches the key-leakage-storage triple

$$(R_s, R_l, R_m) = \big(I(U;Y),\ I(U;X) - I(U;Y),\ I(U;\widetilde{X})\big).$$

VI. CONVERSES

The converses for Theorems 1 and 2 follow similar steps. Therefore, we give the proofs of both theorems with different bounds for the storage rates.

Suppose that for some $\delta > 0$ and $N$ there is an encoder and a decoder such that (2)-(6) are satisfied for the GS or CS model by the key-leakage-storage triple $(R_s, R_l, R_m)$. Fano's inequality for $S$ and $\hat{S}$ gives

$$N \epsilon_N \geq H(S|\hat{S}) \overset{(a)}{\geq} H(S|M Y^N) \tag{51}$$

where $\epsilon_N = \delta R_s + H_b(\delta)/N$ and (a) permits randomized decoding. Note that $\epsilon_N \to 0$ if $\delta \to 0$. We use (51) to bound the key, leakage, and storage rates.

Secret-key rate: Using (3), (5), (51), and because $Y^{i-1} - M S X^{i-1} - Y_i$ forms a Markov chain, we obtain

$$\begin{aligned}
N(R_s - \delta) &\leq H(S) = I(S;M) + I(S;Y^N|M) + H(S|M Y^N) \\
&\leq N H(Y) - \sum_{i=1}^{N} H(Y_i|M S X^{i-1}) + N(\delta + \epsilon_N).
\end{aligned} \tag{52}$$

Identify $U_i = M S X^{i-1}$ in (52) so that $U_i - \widetilde{X}_i - X_i - Y_i$ forms a Markov chain, which follows since $P_{Y|X}$ and $P_{\widetilde{X}|X}$ are memoryless channels. Introduce a time-sharing random variable $Q \sim \mathrm{Unif}[1:N]$ independent of other random variables. Define $X = X_Q$, $\widetilde{X} = \widetilde{X}_Q$, $Y = Y_Q$, and $U = (U_Q, Q)$ so that $U - \widetilde{X} - X - Y$ forms a Markov chain. Using (52), we obtain

$$\begin{aligned}
R_s &\leq H(Y) - \frac{1}{N} \sum_{i=1}^{N} H(Y_i|U_i) + 2\delta + \epsilon_N \\
&= H(Y) - H(Y_Q|U_Q Q) + 2\delta + \epsilon_N \\
&= I(U;Y) + 2\delta + \epsilon_N.
\end{aligned} \tag{53}$$

Storage rate: For the GS model, we have

$$\begin{aligned}
N(R_m + \delta) &\overset{(a)}{\geq} H(M) \geq H(M|Y^N) \\
&\overset{(b)}{\geq} H(M S Y^N) - H(Y^N) - H(S|M Y^N) - H(M S|\widetilde{X}^N) \\
&\overset{(c)}{\geq} \Big[\sum_{i=1}^{N} I(M S \widetilde{X}^{i-1}; \widetilde{X}_i) - I(M S Y^{i-1}; Y_i)\Big] - N \epsilon_N \\
&\overset{(d)}{\geq} \Big[\sum_{i=1}^{N} I(M S X^{i-1}; \widetilde{X}_i) - I(M S X^{i-1}; Y_i)\Big] - N \epsilon_N \\
&= \Big[\sum_{i=1}^{N} I(U_i; \widetilde{X}_i) - I(U_i; Y_i)\Big] - N \epsilon_N
\end{aligned} \tag{54}$$

where (a) follows by (6), (b) follows from the encoding step, (c) follows by (51) and because $\widetilde{X}^N$ and $Y^N$ are i.i.d., and (d) follows by the Markov chains

$$Y^{i-1} - M S X^{i-1} - Y_i \tag{55a}$$
$$X^{i-1} - M S \widetilde{X}^{i-1} - \widetilde{X}_i. \tag{55b}$$

Using the definition of $U$ above, we obtain for the GS model

$$R_m \geq I(U;\widetilde{X}) - I(U;Y) - (\delta + \epsilon_N). \tag{56}$$

For the CS model, we have

$$\begin{aligned}
N(R_m + \delta) &\overset{(a)}{\geq} H(M) = I(M S; \widetilde{X}^N) - H(S|M) + H(M S|\widetilde{X}^N) \\
&\overset{(b)}{\geq} I(M S; \widetilde{X}^N) + I(S;M) \geq \sum_{i=1}^{N} I(M S \widetilde{X}^{i-1}; \widetilde{X}_i) \\
&\overset{(c)}{\geq} \sum_{i=1}^{N} I(M S X^{i-1}; \widetilde{X}_i) = \sum_{i=1}^{N} I(U_i; \widetilde{X}_i)
\end{aligned} \tag{57}$$

where (a) follows by (6), (b) follows because $S$ is independent of $\widetilde{X}^N$ and from the encoding step, and (c) follows by applying (55b). Using the definition of $U$ above, we have for the CS model

$$R_m \geq I(U;\widetilde{X}) - \delta. \tag{58}$$

Privacy-leakage rate: Observe that

$$\begin{aligned}
N(R_l + \delta) &\overset{(a)}{\geq} I(X^N;M) \geq H(M|Y^N) - H(M|X^N) \\
&= H(M S Y^N) - H(S|M Y^N) - H(Y^N) - H(M|X^N) \\
&\geq I(M S; X^N) - I(M S; Y^N) - H(S|M Y^N) \\
&\overset{(b)}{\geq} \Big[\sum_{i=1}^{N} I(M S X^{i-1}; X_i) - I(M S Y^{i-1}; Y_i)\Big] - N \epsilon_N \\
&\overset{(c)}{\geq} \Big[\sum_{i=1}^{N} I(M S X^{i-1}; X_i) - I(M S X^{i-1}; Y_i)\Big] - N \epsilon_N \\
&= \Big[\sum_{i=1}^{N} I(U_i; X_i) - I(U_i; Y_i)\Big] - N \epsilon_N
\end{aligned} \tag{59}$$

where (a) follows by (4), (b) follows by (51), and (c) follows from the Markov chain in (55a). Using the definition of $U$ above, we have

$$R_l \geq I(U;X) - I(U;Y) - (\delta + \epsilon_N). \tag{60}$$

The converse for Theorem 1 follows by (53), (56), and (60), and by letting $\delta \to 0$. The converse for Theorem 2 follows by (53), (58), and (60), and by letting $\delta \to 0$.

VII. CONCLUSION

We derived the key-leakage-storage regions for a HSM for noisy biometric and physical identifiers. For a BSS, we used MGL to evaluate the key-leakage-storage regions for decoder-output channels that can be decomposed into a mixture of BSCs and quantified the improvements in all rates with multiple measurements at the decoder as compared to a single measurement. By taking a large number of measurements of the hidden source at the decoder, the privacy-leakage rate is made small for the GS and CS models, and the storage rate is decreased for the GS model, which is not possible for the CS model. We showed that if one mistakenly uses the VSM when the source is hidden, the privacy-leakage rate might be pessimistic, whereas the secret-key and storage rates might be over-optimistic, which leads to unnoticed secrecy leakage and reliability reductions. The points that achieve the maximum secret-key rates in the key-leakage-storage regions for multiple encoder measurements show that the gain in the secret-key rate from multiple encoder measurements results in greater privacy-leakage and significantly greater storage rates.

The examples illustrated that higher reliability in the encoder measurements improves the storage rate, which also applies to decoder measurements because the encoder and decoder measurements are obtained through separate channels. In future work, we plan to consider key-leakage-storage regions for encoder and decoder measurements through a broadcast channel, and to show that reduced reliability in the measurements might enlarge the key-leakage-storage region for this case.

ACKNOWLEDGMENT

O. Günlü thanks Roy Timo and Kittipong Kittichokechai for useful discussions and insightful comments.
APPENDIX A
CARDINALITY BOUND

Consider $\widetilde{\mathcal{X}} = \{\tilde{x}_1, \tilde{x}_2, \ldots, \tilde{x}_{|\widetilde{\mathcal{X}}|}\}$ and the following $|\widetilde{\mathcal{X}}| + 2$ real-valued continuous functions on the connected compact subset $\mathcal{P}$ of all probability distributions on $\widetilde{\mathcal{X}}$:

$$f_j(P_{\widetilde{X}}) = \begin{cases}
P_{\widetilde{X}}(\tilde{x}_j) & \text{for } j = 1, 2, \ldots, |\widetilde{\mathcal{X}}| - 1 \\
H(X) & \text{for } j = |\widetilde{\mathcal{X}}| \\
H(\widetilde{X}) & \text{for } j = |\widetilde{\mathcal{X}}| + 1 \\
H(Y) & \text{for } j = |\widetilde{\mathcal{X}}| + 2.
\end{cases} \tag{61}$$

By using the support lemma [16, Lemma 15.4], we find that there is a random variable $U'$ taking at most $|\widetilde{\mathcal{X}}| + 2$ values such that $P_{\widetilde{X}}$, $H(\widetilde{X})$, $H(X|U)$, $H(\widetilde{X}|U)$, and $H(Y|U)$ are preserved if we replace $U$ with $U'$. We preserve the joint distribution $P_{\widetilde{X}XY}(\tilde{x}, x, y) = P_{\widetilde{X}}(\tilde{x}) P_{X|\widetilde{X}}(x|\tilde{x}) P_{Y|X}(y|x)$ by preserving $P_{\widetilde{X}}(\tilde{x})$, so the entropies $H(X)$ and $H(Y)$ are also preserved. Hence, the expressions in Theorems 1 and 2

$$\begin{aligned}
I(U;Y) &= H(Y) - H(Y|U) \\
I(U;X) - I(U;Y) &= H(X) - H(X|U) - H(Y) + H(Y|U) \\
I(U;\widetilde{X}) - I(U;Y) &= H(\widetilde{X}) - H(\widetilde{X}|U) - H(Y) + H(Y|U) \\
I(U;\widetilde{X}) &= H(\widetilde{X}) - H(\widetilde{X}|U)
\end{aligned}$$

are preserved by some $U'$ that satisfies the Markov condition $U - \widetilde{X} - X - Y$ with $|\mathcal{U}'| \leq |\widetilde{\mathcal{X}}| + 2$.

APPENDIX B
ON A LOWER BOUND FOR BINARY ASYMMETRIC CHANNELS

Consider a Markov chain $U - X - Y_1$, a binary random variable $X$ with the probability distribution $Q_X$, and a binary channel $P_{Y_1|X}$ with probability transition matrix

$$T = \begin{bmatrix} a & 1 - a \\ b & 1 - b \end{bmatrix} \tag{62}$$

which is asymmetric if $a + b \neq 1$. One can restrict attention to the cases $a + b \leq 1$ and $a \geq b$ by swapping the outputs and inputs, respectively, if necessary [32]. The conditional entropies $H(X|U)$ and $H(Y_1|U)$ are as defined in (10) and (11), respectively.
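The reduction to $a + b \leq 1$ and $a \geq b$ can be sketched in a few lines. This is an illustrative sketch only. It assumes that the rows of $T$ in (62) index the channel input and that the first column gives the probability of the first output symbol; the function names are hypothetical. Swapping the outputs maps $(a, b)$ to $(1 - a, 1 - b)$ and swapping the inputs maps $(a, b)$ to $(b, a)$. Both are relabelings, so the output entropy for a given input distribution is unchanged once the input distribution is relabeled in the same way.

```python
from math import log2


def binary_entropy(p):
    """Binary entropy function H_b(p) in bits."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * log2(p) - (1 - p) * log2(1 - p)


def normalize_channel(a, b):
    """Relabel outputs and inputs so that a + b <= 1 and a >= b.

    Returns (a, b, inputs_swapped). If inputs_swapped is True, the caller
    must replace q = P(X = first symbol) by 1 - q.
    """
    if a + b > 1.0:            # swap the two output symbols
        a, b = 1.0 - a, 1.0 - b
    inputs_swapped = a < b
    if inputs_swapped:         # swap the two input symbols
        a, b = b, a
    return a, b, inputs_swapped


def output_entropy(a, b, q):
    """H(Y_1 | U = u) when P(X = first symbol | U = u) = q."""
    return binary_entropy(a * q + b * (1.0 - q))


a_n, b_n, swapped = normalize_channel(0.8, 0.6)
q = 0.3
q_n = 1.0 - q if swapped else q
print(output_entropy(0.8, 0.6, q), output_entropy(a_n, b_n, q_n))
```

The two printed entropies should coincide, which is why nothing is lost by restricting attention to the normalized parameters.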
Since the convexity property is satisfied for all binary channels $P_{Y_1|X}$ (see Section III), we have the following lower bound due to Lemma 1:

$$H(Y_1|U) \geq H_b\Big(a\, H_b^{-1}\big(H(X|U)\big) + b\,\big(1 - H_b^{-1}(H(X|U))\big)\Big). \tag{63}$$

Note that if $a = b$, then (63) does not depend on $H(X|U)$, since the channel would then have zero capacity.

Consider the achievability of the bound in (63) for the model considered in [1, Theorem 1], where a ternary $U$ suffices to evaluate the key-leakage-storage region. For a channel $P_{X|U}$ with probability transition matrix

$$T_u = \begin{bmatrix} a_u & 1 - a_u \\ b_u & 1 - b_u \\ c_u & 1 - c_u \end{bmatrix} \tag{64}$$

and $i \in \{1, 2, \ldots, |\mathcal{U}|\}$, we obtain

$$H(X|U) = P_U(u_0) H_b(a_u) + P_U(u_1) H_b(b_u) + P_U(u_2) H_b(c_u) \tag{65}$$

$$\begin{aligned}
H(Y_1|U) = {} & P_U(u_0) H_b\big(a a_u + b(1 - a_u)\big) + P_U(u_1) H_b\big(a b_u + b(1 - b_u)\big) \\
& + P_U(u_2) H_b\big(a c_u + b(1 - c_u)\big)
\end{aligned} \tag{66}$$

where $P_U(u_2) = 1 - P_U(u_0) - P_U(u_1)$ and

$$P_U(u_1) = \frac{P_X(0) - c_u - P_U(u_0)(a_u - c_u)}{b_u - c_u}. \tag{67}$$

Fig. 6 shows the possible $(H(X|U), H(Y_1|U))$ pairs by assigning an appropriate set of values to the first column of $T_u$ and to $P_U(u_0)$ for a uniform $X$ and an asymmetric binary channel $P_{Y_1|X}$ with parameters $a = 0.4$ and $b = 0.2$. The lower bound in Fig. 6 is thus not tight for such an asymmetric binary channel. Our simulations suggest that this is the case for all asymmetric channels, except for special cases like $a = b$.

Fig. 6. Comparison of the lower bound and possible choices of $U$ for a uniform input and a binary channel $P_{Y_1|X}$ with parameters $a = 0.4$ and $b = 0.2$ in (62). (Horizontal axis: $H(X|U)$; vertical axis: $H(Y|U)$; legend: Ternary Us, Lower Bound.)

REFERENCES

[1] O. Günlü, G. Kramer, and M. Skórski, "Privacy and secrecy with multiple measurements of physical and biometric identifiers," in IEEE Conf. Commun. Network Sec., Florence, Italy, Sep. 2015, pp. 89–94.
[2] R. Plamondon, "The handwritten signature as a biometric identifier: Psychophysical model and system design," in Europ. Conv. Security Detect., Brighton, U.K., May 1995, pp. 23–27.
[3] O. Günlü and O. İşcan, "DCT based ring oscillator physical unclonable functions," in IEEE Int. Conf. Acoust., Speech Sign. Proc., Florence, Italy, May 2014, pp. 8198–8201.
[4] J. Guajardo, S. S. Kumar, G.-J. Schrijen, and P. Tuyls, FPGA Intrinsic PUFs and Their Use for IP Protection. Berlin, Germany: Springer-Verlag, 2007.
[5] C. Böhm and M. Hofer, Physical Unclonable Functions in Theory and Practice. New York: Springer-Verlag, 2012.
[6] B. Gassend, "Physical random functions," Master's thesis, M.I.T., Cambridge, MA, Jan. 2003.
[7] R. Pappu, "Physical one-way functions," Ph.D. dissertation, M.I.T., Cambridge, MA, Oct. 2001.
[8] T. Ignatenko and F. M. J. Willems, "Biometric systems: Privacy and secrecy aspects," IEEE Trans. Inf. Forensics Security, vol. 4, no. 4, pp. 956–973, Dec. 2009.
[9] K. Kursawe, A.-R. Sadeghi, D. Schellekens, B. Škorić, and P. Tuyls, "Reconfigurable physical unclonable functions - Enabling technology for tamper-resistant storage," in IEEE Int. Workshop Hardware-Oriented Sec. Trust, San Francisco, CA, July 2009, pp. 22–29.
[10] H. Onodera, "Variability: Modeling and its impact on design," IEICE Trans. Electron., vol. 89, no. 3, pp. 342–348, Dec. 2006.
[11] R. Ahlswede and I. Csiszár, "Common randomness in information theory and cryptography - Part I: Secret sharing," IEEE Trans. Inf. Theory, vol. 39, no. 4, pp. 1121–1132, July 1993.
[12] U. M. Maurer, "Secret key agreement by public discussion from common information," IEEE Trans. Inf. Theory, vol. 39, no. 3, pp. 733–742, May 1993.
[13] L. Lai, S.-W. Ho, and H. V. Poor, "Privacy-security trade-offs in biometric security systems - Part I: Single use case," IEEE Trans. Inf. Forensics Security, vol. 6, no. 1, pp. 122–139, Mar. 2011.
[14] I. Csiszár and P. Narayan, "Common randomness and secret key generation with a helper," IEEE Trans. Inf. Theory, vol. 46, no. 2, pp. 344–366, Mar. 2000.
[15] M. Koide and H. Yamamoto, "Coding theorems for biometric systems," in IEEE Int. Symp. Inf. Theory, Austin, TX, June 2010, pp. 2647–2651.
[16] I. Csiszár and J. Körner, Information Theory: Coding Theorems for Discrete Memoryless Systems, 2nd ed. Cambridge, U.K.: Cambridge Uni. Press, 2011.
[17] T. Berger, Rate Distortion Theory: A Mathematical Basis for Data Compression. Englewood Cliffs, NJ: Prentice-Hall, 1971.
[18] K. Kittichokechai and G. Caire, "Secret key-based identification and authentication with a privacy constraint," IEEE Trans. Inf. Theory, vol. 62, no. 11, pp. 6189–6203, Nov. 2016.
[19] V. M. Prabhakaran, K. Eswaran, and K. Ramchandran, "Secrecy via sources and channels," IEEE Trans. Inf. Theory, vol. 58, no. 11, pp. 6747–6765, Nov. 2012.
[20] R. A. Chou and M. R. Bloch, "Separation of reliability and secrecy in rate-limited secret-key generation," IEEE Trans. Inf. Theory, vol. 60, no. 8, pp. 4941–4957, Aug. 2014.
[21] A. Khisti, S. N. Diggavi, and G. W. Wornell, "Secret-key generation using correlated sources and channels," IEEE Trans. Inf. Theory, vol. 58, no. 2, pp. 652–670, Feb. 2012.
[22] Y. Wang, S. Rane, S. C. Draper, and P. Ishwar, "A theoretical analysis of authentication, privacy, and reusability across secure biometric systems," IEEE Trans. Inf. Forensics Security, vol. 7, no. 6, pp. 1825–1840, July 2012.
[23] H. Permuter and T. Weissman, "Source coding with a side information "Vending Machine"," IEEE Trans. Inf. Theory, vol. 57, no. 7, pp. 4530–4544, July 2011.
[24] O. Günlü, O. İşcan, and G. Kramer, "Reliable secret key generation from physical unclonable functions under varying environmental conditions," in IEEE Int. Workshop Inf. Forensics Security, Rome, Italy, Nov. 2015, pp. 1–6.
[25] J. Wayman, A. Jain, D. Maltoni, and D. M. (Eds), Biometric Systems: Technology, Design and Performance Evaluation. London, U.K.: Springer-Verlag, 2005.
[26] A. D. Wyner and J. Ziv, "A theorem on the entropy of certain binary sequences and applications: Part I," IEEE Trans. Inf. Theory, vol. 19, no. 6, pp. 769–772, Nov. 1973.
[27] J. L. Massey, Applied Digital Information Theory. Zurich, Switzerland: ETH Zurich, 1980-1998.
[28] A. Orlitsky and J. R. Roche, "Coding for computing," IEEE Trans. Inf. Theory, vol. 47, no. 3, pp. 903–917, Mar. 2001.
[29] N. Chayat and S. Shamai, "Extension of an entropy property for binary input memoryless symmetric channels," IEEE Trans. Inf. Theory, vol. 35, no. 5, pp. 1077–1079, Sep. 1989.
[30] I. Land, S. Huettinger, P. A. Hoeher, and J. B. Huber, "Bounds on information combining," IEEE Trans. Inf. Theory, vol. 51, no. 2, pp. 612–619, Feb. 2005.
[31] G. Kramer, Lecture Notes in Information Theory. Munich, Germany: TU Munich, Oct. 2017.
[32] H. S. Witsenhausen, "Entropy inequalities for discrete channels," IEEE Trans. Inf. Theory, vol. 20, no. 5, pp. 610–616, Sep. 1974.
[33] R. Ahlswede and J. Körner, "On the connection between the entropies of input and output distributions of discrete memoryless channels," in Conf. Prob. Theory, Braşov, Romania, Sep. 1974, pp. 13–22.
[34] A. Juels and M. Wattenberg, "A fuzzy commitment scheme," in ACM Conf. Comp. Commun. Security, New York, NY, Nov. 1999, pp. 28–36.
[35] Y. Dodis, R. Ostrovsky, L. Reyzin, and A. Smith, "Fuzzy extractors: How to generate strong keys from biometrics and other noisy data," SIAM J. Comput., vol. 38, no. 1, pp. 97–139, Jan. 2008.
[36] A. E. Gamal and Y.-H. Kim, Network Information Theory. Cambridge, U.K.: Cambridge Uni. Press, 2011.
[37] K. Kittichokechai, T. J. Oechtering, M. Skoglund, and Y. K. Chia, "Secure source coding with action-dependent side information," IEEE Trans. Inf. Theory, vol. 61, no. 12, pp. 6444–6464, Dec. 2015.
[38] M. Bloch and J. Barros, Physical-layer Security. Cambridge, U.K.: Cambridge Uni. Press, 2011.

Onur Günlü (S'10) received the B.Sc. degree in electrical and electronics engineering from Bilkent University, Ankara, in 2011, and the M.Sc. degree in communications engineering from the Technical University of Munich (TUM), Munich, in 2013, where he is currently pursuing the Dr.-Ing. degree. He is a Research and Teaching Assistant with TUM Chair of Communications Engineering. In 2018, he visited the Information and Communication Theory Lab, TU Eindhoven, The Netherlands. His research interests include information theoretic privacy and security, code design for key agreement, statistical signal processing for biometric secrecy systems and physical unclonable functions (PUFs).

Gerhard Kramer (S'91–M'94–SM'08–F'10) received the Dr. sc. techn. degree from ETH Zurich in 1998. From 1998 to 2000, he was with Endora Tech AG in Basel, Switzerland, and from 2000 to 2008 he was with the Math Center at Bell Labs in Murray Hill, NJ, USA. He joined the University of Southern California, Los Angeles, CA, USA, as a Professor of Electrical Engineering in 2009. He joined the Technical University of Munich (TUM) in 2010, where he is currently Alexander von Humboldt Professor and Chair of Communications Engineering. His research interests include information theory and communications theory, with applications to wireless, copper, and optical fiber networks. Dr. Kramer served as the 2013 President of the IEEE Information Theory Society.
