Jittering Samples using a kd-Tree Stratification

Monte Carlo sampling techniques are used to estimate high-dimensional integrals that model the physics of light transport in virtual scenes for computer graphics applications. These methods rely on the law of large numbers to estimate expectations vi…

Authors: Alex, ros D. Keros, Divakaran Divakaran

Jittering Samples using a kd-T ree Stratification Alexandros D. K eros Uni versity of Edinbur gh Di vakaran Di vakaran Uni versity of Edinbur gh Kartic Subr Uni versity of Edinbur gh Abstract Monte Carlo sampling techniques are used to estimate high-dimensional integrals that model the physics of light transport in virtual scenes for computer graphics applications. These methods rely on the law of lar ge numbers to estimate expectations via simulation, typically re- sulting in slow con vergence. Their errors usually manifest as undesirable grain in the pictures generated by image synthesis algorithms. It is well known that these errors diminish when the samples are chosen appropriately . A well known technique for reducing error operates by subdividing the integration domain, estimating integrals in each stratum and aggregating these values into a stratified sampling estimate. Na ¨ ıve methods for stratification, based on a lattice (grid) are known to improv e the con vergence rate of Monte Carlo, but require samples that grow e xponentially with the dimensionality of the domain. W e propose a simple stratification scheme for d dimensional hypercubes using the kd-tree data structure. Our scheme enables the generation of an arbitrary number of equal volume par- titions of the rectangular domain, and n samples can be generated in O ( n ) time. Since we do not alw ays need to e xplicitly build a kd-tree, we provide a simple procedure that allo ws the sample set to be drawn fully in parallel without any precomputation or storage, speeding up sampling to O (log n ) time per sample when executed on n cores. If the tree is implicitly precomputed ( O ( n ) storage) the parallelised run time reduces to O (1) on n cores. In addition to these benefits, we pro vide an upper bound on the worst case star-discrepanc y for n samples matching that of lattice-based sampling strategies, which occur as a special case of our pro- posed method. W e use a number of quantitati ve and qualitati ve tests to compare our method against state of the art samplers for image synthesis. 1. Introduction Photo-realistic visuals of virtual environments are generated by simulating the physics of light and its interaction within the virtual environments. The simulation of light transport requires estimation of integrals ov er high-dimensional spaces, for which 1 1 INTR ODUCTION Monte Carlo integration is the method of choice. The defining characteristic of a Monte Carlo estimator is its choice of locations where the function to be integrated is e valuated. Giv en a fixed computational b udget, the quality of the pictures rendered by this technique depends hea vily on the choice of these sample locations. Despite its many benefits, one of the problems of Monte Carlo integration is its relativ ely slow con ver gence of O (1 / √ n ) , gi ven n samples. The primary expectation of a sampling algorithm is that it results in low error . Many theories such as equi-distrib ution [ Zaremba 1968 ] and spectral signatures [ Subr et al. 2016 ] underpin sampling choices. A simple way to improve equi-distribution is to partition the domain into homogeneous strata, and to aggregate the estimates within each of the strata. One popular variant of this type of stratified sampling is jitter ed sampling – where the hypercube of random numbers is partitioned as a grid and a single sample is drawn from each cell. While stratification results in improved con ver gence, a more dramatic improv ement is obtained when the samples optimise a measure of equi-distribution called discr epancy [ Kuipers and Niederreiter 1975 ; Shirley 1991 ; Owen 2013 ]. A second consideration is the time taken to generate samples. Random num- bers and deterministic sequences [ Niederreiter 1987 ] are popular choices since they can be generated fast and in parallel. Deterministic samples provide the added ad- v antage of repeatability , which is desirable during dev elopment and deb ugging. Fi- nally , the impact of the dimensionality of the integration domain is an important fac- tor . Many sampling algorithms either suffer from disproportionately greater error or poor performance for high-dimensional domains. Some algorithms require sample sizes that are exponentially dependent on the dimensionality . e.g. jittered sampling requires that n = k d , k ∈ Z . T o av oid such problems, modern renderers sacrifice stratification in the high-dimensional space by interpreting it as a chain of outer prod- ucts of low-dimensional spaces (e.g. one or two), which can be sampled indepen- dently , a technique kno wn as “padding”. It has recently been sho wn that for samplers such as Halton [ Halton 1964 ] or Sobol [ Sobol’ 1967 ], which are able to multiply- stratify high-dimensional spaces, that they project undesirably to lo wer dimensional subspaces [ Jarosz et al. 2019 ]. In this paper , we stratify the d -dimensional hypercube using a kd-tree. Our algo- rithm is simple, efficient and scales well (with n , d and parallelisation). For any n , we design a kd-tree so that all leav es of the tree result to domain partitions that oc- cupy the same v olume. Then, we draw a single random v alue from each of the lea ves. This can be vie wed as a generalisation of jittered sampling. When n = 2 kd for some integer k , the cells of the kd-tree align perfectly with those of the regular grid. W e deri ve the worst-case star -discrepancy of samples generated via kd-tree stratification. Finally , we perform empirical quantitati ve and qualitati ve experiments comparing the performance of kd-tree stratification with state of the art sampling methods. 2 2 RELA TED WORK Contributions In this paper, we propose a kd-tree stratification scheme with the fol- lo wing properties: 1. it generalises jittered sampling for arbirary sample counts; 2. the i th of n samples can be calculated independently; 3. an upper bound on the star-discrepancy of our samples as 2 d − 1 dn − 1 d ; 4. error that is empirically at least as good as jittered sampling but similar to Hal- ton and Sobol in many situations; and 5. it can easily be parallelised. 2. Related W ork Although Monte Carlo inte gration is the de facto statistical technique for estimating high dimensional integrals pertaining to light transport, it can be applied in a fe w dif ferent ways. e.g. path tracing, bidirectional path tracing, Marko v Chains [ V each 1998 ], etc. The computer graphics literature is rich with sampling algorithms to re- duce the v ariance of the estimates [ Christensen et al. 2016 ] and a discussion of these techniques is beyond the scope of this paper . Here, we address a fe w closely related classes of works that are rele vant to our proposed scheme for stratification. Assessing sample sets Empirical comparisons of samplers on specific scenes is the most common method for assessment. Of the se veral image comparison metrics fe w are suited to high dynamic range images [ Mantiuk et al. 2007 ]. Of those, many fo- cus on assessing the quality of tone-mapping operators rather than their suitability for assessing noisy rendering. The de facto choice of metric for assessing samplers in rendering is an adaptation of numerical mean squared error (MSE). Theoretical considerations such as equidistribution [ Zaremba 1968 ] and spectral properties [ Du- rand 2011 ; Subr et al. 2016 ] provide a more general assessment of sample quality . A well kno wn measure for equidistribution of a point set in a domain is the maximum discr epancy [ Kuipers and Niederreiter 1975 ; Shirle y 1991 ; Dick and Pillichshammer 2010 ] between the proportion of points falling into an arbitrary subset of the do- main and the measure of that subset. Since this is not tractable across all subsets, a restricted v ersion considers all sub-hyperrectangular box es with one verte x at the ori- gin. This so-called star-discrepancy can be used to bound error introduced by the point set when used in numerical integration of functions with certain properties [ K oksma 1942 ; Aistleitner and Dick 2014 ]. Although it is desirable to know the discrepancy of a sampling strategy , so that this bound may be known, it is generally non-trivial to deri ve. W e deriv e an upper bound on the discrepancy of points resulting from our pro- posed sampling technique. For the remainder of the paper , unless otherwise clarified, we will use discrepancy to refer to L ∞ star discrepancy . 3 2 RELA TED WORK Stratified sampling A po werful way to reduce the v ariance of sampling-based esti- mators is to partition the domain into strata with mutually disparate values, estimate integrals within each of the strata and then carefully aggregate them into a collective estimator [ Cochran 1977 ]. Unfortunately , stratified sampling is challenging when there is insufficient information a priori about the integrand. T ypically , the domain is partitioned anyway , into strata of known measures, and proportional allocation is used to dra w samples within them. In the simplest case, the d -dimensional hypercube is partitioned using a regular lattice (grid) and one random sample is drawn from each cell [ Haber 1967 ]. This is kno wn as jittered sampling [ Cook et al. 1984 ] in the graphics literature and has been shown to improve con ver gence. Although jittered sample is simple and parallelisable, it suffers from the curse of dimensionality [ Pharr and Humphreys 2010 , Chapter 7.3, § 3] , i.e. it is only effecti ve when the number of samples required is a perfect d th po wer . This does not allow fine-grained control ov er the computational budget for lar ge d . V arious sampling strate gies are based on such equal-measure stratification with proportional allocation, since it can potentially improv e con ver gence and is nev er worse than not stratifying. Another example is n-rooks sampling [ Shirley 1991 ] or latin hypercube sampling [ McKay et al. 1979 ], where stratification is performed along multiple ax es. Multi-jittered sampling [ Chiu et al. 1994 ; T ang 1993 ] combines jittered grids with latin hypercube sampling. Se v- eral tiling-based approaches [ K opf et al. 2006 ; Ostromoukhov et al. 2004 ] have been proposed for generating sample distributions with desirable blue-noise characteris- tics. Although they produced blue-noise patterns that are useful in halftoning, stip- pling and image anti-aliasing, it is unclear – based on recent theoretical connections between blue-noise and error and con vergence rates [ Pilleboue et al. 2015 ] – whether those methods are useful in building useful estimators. For the benefits of stratifica- tion to be realised in a practical setting, for example when the hypercube is mapped to arbitrary manifolds, the mapping needs to be constrained. e.g. it needs to satisfy area-preserv ation [ Arvo 2001 ]. Low-discrepancy sequences Infinite sequences in the unit hypercube whose star discrepancy is O ( l og ( n ) d /n ) are kno wn as low-discrepancy sequences [ Niederreiter 1987 ]. One such sequence, the V an de Corput sequence [ van der Corput 1936 ] forms the core of state-of-the-art methods such as Halton [ Halton 1964 ] and Sobol [ Sobol’ 1967 ] samplers. These quasi-random sequences [ Niederreiter 1992b ] can be used to produce deterministic samples that result in rapidly con ver ging quasi Monte Carlo (QMC) estimators [ Niederreiter 1992a ]. QMC has been shown to significantly speed up high-quality , offline rendering [ K eller 1995 ; K eller et al. 2012 ]. QMC samplers, and their randomized v ariants [ Owen 1995 ] based on the more general concept of ele- mentary intervals , can be vie wed as the ultimate form of equal-measure stratification, since they strive to stratify across all origin-anchored hyperrectangles in the domain 4 3 JITTERED KD-TREE STRA TIFICA TION simultaneously . In addition to lo w-discrepancy , infinite sequences ha ve the additional desirable property that any prefix set of samples is well distributed. This is an acti ve area of research and recent w ork includes methods for progressi ve multijitter [ Chris- tensen et al. 2018 ] and low-discrepanc y samples with blue noise properties [ Ahmed et al. 2016 ]. There are two hurdles to using QMC samplers: the first, a technical issue, is that their ef fecti veness in high-dimensional problems is limited; the second, a le gal issue, is that their use for rendering is patented [ Keller U.S. Patent US7453461B2, Nov . 2008 ]. W e propose a simple, parallelisable alternative that, in the worst case matches, and often surpasses the performance of alternati ves in terms of MSE. kd-trees Space partitioning data structures such as quadtrees, octrees and kd-trees [ Fried- man et al. 1977 ] are well kno wn in computational geometry and computer graphics. These data structures are typically used to optimise location queries such as nearest- neighbour queries. In computer graphics, kd-trees hav e been used to speed up ray in- tersections [ W ald and Ha vran 2006 ], optimise multiresolution frame works [ Gosw ami et al. 2013 ] and its variants have been used to perform high-dimensional filtering [ Adams et al. 2009 ]. They hav e been used for sampling in a variety of ways including im- portance sampling via domain warping [ McCool and Harwood 1997 ; Clarber g et al. 2005 ], progressi ve refinement for antialiasing [ Painter and Sloan 1989 ], ef ficient mul- tiscale sampling from products of gaussian mixtures [ Ihler et al. 2004 ] and optimisa- tion of the sampling of mean free paths [ Y ue et al. 2010 ]. In this paper , we propose a ne w kd-tree stratification scheme that results in com- parable error to state of the art method, with the added advantages of being simple to construct and easily parallelisable. W e deri ve an upper bound for the star-discrepanc y of sample sets produced using this stratification scheme. 3. Jittered kd-tree Stratification The central idea of our construction is to use a kd-tree to partition the d -dimensional hypercube into n equal-volume strata. One sample is then drawn from each of these cells. 3.1. Sample generation W e illustrate our method using a simple (linear time) recursive procedure to generate n deterministic strata in d dimensions. Then, we explain how this construction can be used to obtain stochastic samples and deriv e a formula for determining the axis- aligned boundaries of the i th cell (leaf of the kd-tree) out of n samples. Samples are obtained by drawing a random sample within each of these cells. Constructing the kd-tree T o construct n strata with equal volumes in a d -dimensional hypercube V , we first partition V into two strata V 0 and V 1 by a splitting plane whose 5 3.1 Sample generation 3 JITTERED KD-TREE STRA TIFICA TION input : number of strata n dimension d output: Lo wer bounds array L with elements l i m Upper bounds array U with elements u i m 1 N rem ← n ; // remaining partitions 2 l ← (0 , . . . , 0) ; // lower partition bound 3 u ← (1 , . . . , 1) ; // upper partition bound 4 if N r em > 1 then 5 m ← 0 ; // dimension to partition 6 c ← l m + d N rem 2 e N rem ( u m − l m ) ; 7 l right ← l ; // right subtree lower bounds 8 l right m ← c ; 9 RightSubT ree ( m + 1)% d, l right , u , N rem − d N rem 2 e ; 10 u left ← u ; // left subtree upper bounds 11 u left m ← c ; 12 LeftSubT ree ( m + 1)% d, l , u left , d N rem 2 e ; 13 else 14 L . push ( l ) ; 15 U . push ( u ) ; 16 end Algorithm 1: CalculateBoundsRecursive normal is parallel to an arbitrary axis m, 0 ≤ m ≤ d − 1 . If n is ev en, the plane is located mid-way along the l th axis in V . If n is odd, the plane is positioned so that the volumes of V 0 and V 1 are proportional to d n/ 2 e and n − d n/ 2 e respectiv ely . e.g. if n = 5 , d = 3 and m = 0 , the first split is performed by placing a plane parallel to the Y Z plane at X = 3 / 5 . The splitting procedure is recursi vely applied to V 0 and V 1 using ( m + 1) m o d d as the splitting axis and numbers n 0 = d n/ 2 e and n 1 = n − d n/ 2 e respecti vely . A binary digit is prefixed to the subscript at each split – a zero for the ”lo wer” stratum (left branch) and a one to indicate the ”upper” stratum (right branch). At the first recursion le vel (second split), the resulting partitions are V 00 , V 01 V 10 and V 11 respecti vely . The recursion bottoms out when the number of stratifications required within a sub-domain is one. Algorithm 1 , along with the accompan ying functions of Algorithms 2 and 3 , implement the af forementioned recursiv e procedure in O ( n ) time, for the complete partitioning. Figure 2 visualises the cells of the tree and samples drawn within them for n = 16 , n = 59 and n = 152 . F or mula for jittered sampling The abov e procedure induces a tree whose n leav es are axis-aligned hypercubes with equal v olume. Although we could use the recursiv e procedure to generate a random sample in each stratum (e very time the recursion bot- 6 3.1 Sample generation 3 JITTERED KD-TREE STRA TIFICA TION input : dimension m lo wer bound l upper bound u remaining partitions N rem 1 if N r em > 1 then 2 c ← l m + b N rem 2 c N rem ( u m − l m ) ; 3 l right ← l ; // right subtree lower bounds 4 l right m ← c ; 5 RightSubT ree ( m + 1)% d, l right , u , d N rem 2 e ; // right subtree of right subtree 6 u left ← u ; // left subtree upper bounds 7 u left m ← c ; 8 RightSubT ree ( m + 1)% d, l , u left , b N rem 2 c ; // left subtree of right subtree 9 else 10 L . push ( l ) ; 11 U . push ( u ) ; 12 end Algorithm 2: RightSubTree toms out), this would induce unwanted computational overhead when a single sample is required. Instead, we deri ve a direct procedure (see Alg. 4 ) to calculate the lo wer and upper bounds for each cell with a run time complexity of O ( d log 2 n e ) per sam- ple. If all n samples are to be generated at once, the recursiv e procedure is more ef ficient since it is O ( n ) instead of O ( n d log 2 n e ) . Ho wev er , the former is not easily parallelisable and requires pre generated samples to be stored. The latter is fully par- allelisable and pregenerated samples are notional and may be independently drawn when required. Example T o find the bounds for the 8 th sample ( i = 7 ) out of n = 12 samples in d = 2 D, we first express i as the bit-string 0111 . Starting from the right end of this string, we process one digit at a time while progressi vely halving the number of points n . At each step, we update the bounds appropriately . Alternate digits correspond to bounds for X and Y respectiv ely and we adopt the con vention that the first digit (rightmost) corresponds to a split in X. Since the first digit is a 1 , it corresponds to a lower bound on X . i.e. x > l i 0 . Since N 0 = 12 is divisible by 2 , N 1 / N 0 = 1 / 2 leading to x > 1 / 2 . The second least-significant digit is 1 , which again leads to a lo wer bound but this time on Y . Since N 1 = 6 is ev en, the bound is y > 1 / 2 . The third digit is 1 as well, and imposes a lo wer bound on X. Howe ver , since N 2 = 3 is odd, N 3 = 2 . The new bound for X is x > 1 / 2 + (1 − 1 / 2) ∗ 2 / 3 . i.e. x > 5 / 6 . 7 3.1 Sample generation 3 JITTERED KD-TREE STRA TIFICA TION input : dimension m lo wer bound l upper bound u remaining partitions N rem 1 if N r em > 1 then 2 c ← l m + d N rem 2 e N rem ( u m − l m ) ; 3 l right ← l ; // right subtree lower bounds 4 l right m ← c ; 5 LeftSubT ree ( m + 1)% d, l right , u , b N rem 2 c ; // right subtree of left subtree 6 u left ← u ; // left subtree upper bounds 7 u left m ← c ; 8 LeftSubT ree ( m + 1)% d, l , u left , d N rem 2 e ; // left subtree of left subtree 9 else 10 L . push ( l ) ; 11 U . push ( u ) ; 12 end Algorithm 3: LeftSubTree X X Y Y 0011 i=7 0000 0010 1010 0100 1011 0001 1001 0000 1000 0100 1010 0110 1001 0111 1011 0101 0010 0001 0011 000 100 010 110 001 011 111 101 00 10 11 01 0 1 < > > > < < < > > < < < 0101 0100 0110 > < x>1/2 > > > > y>1/2 x>5/6 0 1 1 1 no sibling: max. one bound for y Figure 1 . W e stratify the d-dimensional hypercube into axis-aligned hyperrectangles of equal volume using a kd-tree. The figure illustrates an example with 12 samples in 2 D. Each leaf node in the tree represents a cell with area 1 / 12 . In practice, we do not need to build the tree explicitly . When a sample is needed from a particular cell, we calculate the bounds the cell us- ing Algorithm 4 and then dra w a random sample within it. The coloured portions of the figure illustrate the example described in the te xt to obtain the bounds of the 8 th sample. i.e. i = 7 . Finally , the most significant bit is 0 , so it corresponds to an upper bound. Howe ver , because the current node 0111 does not hav e a sibling ( 1111 corresponds to 15 which is greater than 12 which is the number of samples), the upper bound is not updated. Combining all this information, we obtain x ∈ [5 / 6 , 1] and y ∈ [1 / 2 , 1] . See Fig. 1 for an illustration of this procedure. 8 3.1 Sample generation 3 JITTERED KD-TREE STRA TIFICA TION input : number of strata n dimension d sample number i ∈ { 0 , 1 , · · · , n − 1 } output: d -dimensional bounds l i and u i 1 m ← 0 ; // dimension to partition 2 N rem ← n ; // remaining points in partition 3 s ← d log 2 n e ; // num. bits 4 B = [ b i s − 1 b i s − 2 . . . b i 0 ] ; // big-endian binary representation of point index i 5 l i ← (0 , . . . , 0) ; // lower partition bound 6 u i ← (1 , . . . , 1) ; // upper partition bound 7 while B not empty and N r em > 1 do 8 b ← pop last element of B ; 9 r ← d N rem 2 e ; 10 if b is zer o then // update upper bound 11 N curr ← d N rem 2 e ; 12 u i m ← ( u i m − l i m ) r N rem + l i m ; 13 else // update lower bound 14 N curr ← b N rem 2 c ; 15 l i m ← ( u i m − l i m ) r N rem + l i m ; 16 end 17 N rem ← N curr ; 18 m ← ( m + 1)% d ; 19 end Algorithm 4: CalculateBounds 0 3 6 9 12 15 0 12 24 36 48 58 0 22 44 66 88 110 132 151 16 samples 59 samples 152 samples Figure 2 . A visualisation of our kd-tree stratification and samples. The cells are coloured by the sample number . 9 3.2 Discrepancy of kd-tree samples 3 JITTERED KD-TREE STRA TIFICA TION 3.2. Discrepancy of kd-tree samples Samples on a regular grid hav e a discrepancy of O (1 / d √ n ) . Jittered sampling [ Pausinger and Steinerber ger 2016 ] and Latin hypercube sampling [ Doerr et al. 2018 ] e xploit the correlation structure imposed by the grid, and, by randomly drawing samples within each stratum, improve the expected discrepancy to O log n 1 2 n 1 2 + 1 2 d (under sufficiently dense sampling assumption) and O q d n , respecti vely . Kd-tree stratification, as a generalization of jittered sampling to arbitrary sam- ple counts, results to the exact same domain partition as jittered sampling for n = 2 kd , k ∈ Z . Thus, for these special cases, our method satisfies the same expected discrepancy bounds as jittered sampling [ Pausinger and Steinerber ger 2016 ], namely O log n 1 2 n 1 2 + 1 2 d . Theorem 3.1 deri ves a w orst-case upper bound for the star -discrepancy of the general case of our jittered kd-tree stratification method. Theorem 3.1. Given a set P of n samples, D ∗ ( P ) ≤ 2 d − 1 dn − 1 d . Pr oof. If P is an n point set and X is a set, then let D ( P , X ) denote the discrepancy of P in X . Observation 1.3 in [ Matousek 2009 ] states that if A and B are disjoint sets, then | D ( P , A ∪ B ) | = | D ( P, A ) + D ( P , B ) | ≤ | D ( P , A ) | + | D ( P , B ) | . W e use this observation inductiv ely to compute discrepancy . Any axis-parallel rectangle with a vertex anchored at the origin, as used for the star-discrepanc y computation, contains partial and complete cells from our kd-tree. By construction, complete cells in our kd-tree hav e zero discrepanc y . The discrepanc y of incomplete cells is less than or equal to 1 /n . This value, multiplied by a bound on the number of partial cells in such a rectangle can provide an upper bound for discrepancy . The number of cells a splitting plane (perpendicular to an axis) intersects increases as N increases. The maximum number of intersections occurs when the cells are identical to cells in a regular grid. i.e. when n = (2 k ) d , k ∈ Z . Thus, each splitting plane intersects at most 2 d d log 2 d ( n ) e d − 1 d ≤ 2 d log 2 d ( n ) 2 d d − 1 d = 2 d − 1 n d − 1 d (1) cells. Thus, an axis-parallel rectangle intersects at most 2 d − 1 dn d − 1 d cells, and the star-discrepanc y of samples obtained using kd-tree stratification has an upper bound of 2 d − 1 dn − 1 d . Empirical computation of star-discrepanc y in Figure 3 suggests the looseness of the bound deriv ed in Theorem 3.1 , and the fact that the e xpected star -discrepancy of our jittered kd-tree stratification method should satisfy the bounds of jittered sam- pling [ Pausinger and Steinerber ger 2016 ] for general sample counts n ∈ Z as well. 10 4 EMPIRICAL EV ALU A TION 4. Empirical ev aluation W e performed quantitati ve and qualitativ e e xperiments to assess the proposed stratifi- cation scheme. First, we plotted (Fig. 3 ) the expected L 2 -star discrepancy computed by W arnock’ s formula [ W arnock 1973 ] versus number of samples (up to 4500 , 0 ) for v arious samplers in 2D and 4D. Our method exhibits discrepancy similar to jit- tered sampling, which, as expected, is inferior to the discrepancy of QMC sample sequences. Then, we tested the errors resulting from jittered kd-tree sampling when integrating analytical functions (sec. 4.1 ) of variable comple xity in low-dimensions. Finally , we tested a padded version of jittered kd-tree stratification using rendering scenarios consisting of high-dimensional light paths (sec. 4.2 ). For all empirical re- sults, we compare against popular baseline samplers such as random and jittered sam- pling, and state-of-the art Quasi-Monte Carlo methods Halton and Sobol. 2 Dimensions 4 Dimensions 7 Dimensions 10 2 10 3 10 4 10 -5 10 -4 10 -3 10 -2 10 -1 10 2 10 3 10 4 10 -5 10 -4 10 -3 10 -2 10 -1 10 2 10 3 10 4 10 -4 10 -3 10 -2 10 -1 Figure 3 . The empirical expected L 2 -star discrepancy (av eraged ov er 100 sample realiza- tions) for our method matches that of jittered sampling. It outper forms all e xamined samplers except the lo w-discrepancy QMC methods. 4.1. Ev aluation on analytic integr ands W e compare the performance of our jittered kd-tree stratification method (KDT) against popular baseline methods (Jittered and Random) and state-of-the art samplers (Halton and Sobol). W e also compare our sampler against a ne w stratification method based on Bush’ s Orthogonal Arrays [ Jarosz et al. 2019 ] (b ushO A CMJ), using fully f actorial strengths where possible. W e plotted (Figs. 4 , 5 ) mean-squared error vs number of samples, a veraging o ver 100 sample realizations per method and per sample count, for v arious samplers used in estimating integrals of kno wn (analytical) functions in 2 and 4 dimensions (rows respecti vely). W e estimated integrals with up to 10 6 samples of smooth and dis- continuous integrands of increasing variability . As the smooth function, we used a normalised Gaussian mixture model with k randomly centered, randomly weighted modes ( GM M k ). The standard deviation of each component is set to one third of the minimum distance between centres. W e performed four experiments on this in- 11 4.1 Ev aluation on analytic integrands 4 EMPIRICAL EV ALU A TION GM M 3 GM M 20 2 Dimensions 10 2 10 3 10 4 10 5 10 6 10 -14 10 -12 10 -10 10 -8 10 -6 10 -4 10 -2 10 2 10 3 10 4 10 5 10 6 10 -12 10 -10 10 -8 10 -6 10 -4 10 -2 4 Dimensions 10 2 10 3 10 4 10 5 10 6 10 -14 10 -12 10 -10 10 -8 10 -6 10 -4 10 -2 10 0 10 2 10 3 10 4 10 5 10 6 10 -10 10 -9 10 -8 10 -7 10 -6 10 -5 10 -4 10 -3 10 -2 10 -1 Figure 4 . Error con ver gence of 7 samplers on 2D and 4D continuous analytic integrands of varied complexity . Our method (KDT) exhibits similar performance to jittered sampling, which is comparable to state-of-the-art QMC samplers in 2D, without limiting the allowed number of samples. In 4D the conv ergence of KDT again matches that of jittered sampling, both of which appear inferior to popular QMC methods. tegrand: k = 3 and k = 20 each in 2 and 4 dimensions. As the discontinuous test integrand, we used a piece wise constant function by triangulating the domain using k randomly selected points ( P W C onst k ). The faces created were weighted randomly and normalised so that the function inte grates to unity . As with the Gaussian mixture, we performed 4 sets of experiments with k = 3 and k = 20 each in 2D and 4D. In tw o dimensions the performance of our sampler consistently matches the per- formance of state-of-the-art QMC methods Halton and Sobol. F or discontinuous inte- grands, P W C onst 3 and P W C onst 20 , our sampler exhibits lower “oscillations” than QMC methods. Jittered sampling performs similar to our kd-tree stratification method in all cases except P W C onst 3 . Our sampler exhibits lo wer error than jittered for this case. In four dimensions QMC methods clearly outperform all other samplers. Our method again exhibits conv ergence similar to jittered sampling. Nev ertheless, when the complexity of the integrand increases, in terms of non-linearities or modes, the distinction between dif ferent sampling strategies becomes increasingly dif ficult. 12 4.2 Ev aluation on rendered images 4 EMPIRICAL EV ALU A TION P W C onst 3 P W C onst 20 2 Dimensions 10 2 10 3 10 4 10 5 10 6 10 -11 10 -10 10 -9 10 -8 10 -7 10 -6 10 -5 10 -4 10 -3 10 -2 10 2 10 3 10 4 10 5 10 6 10 -10 10 -9 10 -8 10 -7 10 -6 10 -5 10 -4 10 -3 10 -2 10 -1 4 Dimensions 10 2 10 3 10 4 10 5 10 6 10 -10 10 -9 10 -8 10 -7 10 -6 10 -5 10 -4 10 -3 10 -2 10 2 10 3 10 4 10 5 10 6 10 -10 10 -9 10 -8 10 -7 10 -6 10 -5 10 -4 10 -3 10 -2 Figure 5 . Error con ver gence of 7 samplers on 2D and 4D discontinuous analytic integrands of varied complexity . Our method (KDT) exhibits similar performance to jittered sampling, which is comparable to state-of-the-art QMC samplers in 2D, without limiting the allowed number of samples. In 4D the conv ergence of KDT again matches that of jittered sampling, both of which appear inferior to popular QMC methods. 4.2. Ev aluation on rendered images The experiments on the photorealistically rendered images are performed using PBR T version 3 [ Pharr and Humphreys 2010 ]. W e use the Empirical Err or Analysis tool- box [ Subr et al. 2016 ] to calculate errors due to v arious samplers. W e implemented jittered kd-tree stratification within PBR T as a pixel sampler . i.e. a jittered kd-tree stratified sample set is generated for each pixel independently . Since our sampler ap- proaches that of random sampling for n 2 d , we also implemented a padded v ersion for 2D subspaces, which we hereby refer to as KDT2Dpad. W e compare KDT2Dpad against other pixel samplers found within PBR T , such as stratified (Jitter2Dpad) and Random, as well as QMC methods Halton and Sobol. The QMC methods are implemented as global samplers , which generate samples for the whole image plane and associate pix el coordinates to the indices of samples from the appropriate sequences. This scheme ensures that each pixel is assigned the correct 13 4.2 Ev aluation on rendered images 4 EMPIRICAL EV ALU A TION number of samples. W e also compare our method against Bush’ s Orthogonal Arrays stratification [ Jarosz et al. 2019 ] with strength 2 (O AbushMJ2) implemented as a pixel sampler . W e ev aluated our method using two metrics, RGB-MSE (RGBMSE) and Log- Luminance-MSE (LLMSE), both computed on the high-dynamic range images output by the renderer . The images sho wn in the paper are tonemapped for visualisation, b ut we include the original images along with an html bro wser as supplementary material. The former is computed as the squared norm of the dif ferences in RGB space: | R ref ( p ) − R test ( p ) | 2 + | G ref ( p ) − G test ( p ) | 2 + | B ref ( p ) − B test ( p ) | 2 , for a pixel p , where R ref , G ref , B ref are the linear RGB channels of the reference image and R test , G test , B test are those of the test image respectiv ely . The Log- Luminance-MSE metric measures the error in the percei ved luminance, | log( L ref ( p )) − log( L test ( p )) | 2 , where L ref and L test are the luminances of the reference and test image respecti vely . W e tested with other perceptually-based metrics such as SSIM but did not observe significant dif ferences with the simple Log-Luminance-MSE metric. W e rendered ORB and ORB-GLOSSY scenes, sho wn in Figures 6 and 7 , which contain a light source and an orb placed inside a glossy sphere with an occluder abo ve the orb model. These two versions of a similar scene feature 19 and 41 dimensional light paths respectively due to dif ferent material and rendering parameters. W e used 529 spp for most samplers and 512 spp for QMC methods. Finally , the P A VILION scene, in Figure 8 , contains high frequency textures, various materials and complex geometry , resulting in 43 dimensional light paths. W e use 3,969 spp for samplers that support it, and 4,096 spp for QMC methods. Reference scenes are rendered with 10,000 Random spp. For the ORB scene, our method KDT2Dpad performs comparably good in both metrics, matching, and, in the case of RGBMSE, surpassing the performance of state- of-the-art QMC methods. In ORB-GLOSS, KDT2Dpad exhibits the least error in both metrics for the shadow region considered. Sobol and Jitter2Dpad methods ex- hibit some structured artif acts. In the P A VILION scene, Sobol performs best in terms of RGBMSE, and KDT2Dpad is better than Jitter2Dpad and Halton. All samplers perform similarly with respect to LLMSE with Random sampling being consistently the worst, follo wed by Jitter2Dpad. W e tested the intricate combinations of high-dimensional light paths, gloss, tex- tures and defocus by rendering the ORB-GLOSS-DOF scene (Fig. 9 and Fig. 10 ) which is identical to ORB-GLOSS but with a wide aperture (shallow depth of field). W e observed that Halton performs well, as expected, and Sobol e xhibits tell-tale struc- tured artifacts. Our KDT sampler performs well in areas with complex light paths 14 4.2 Ev aluation on rendered images 4 EMPIRICAL EV ALU A TION (high-dimensional paths, gloss, te xture and depth of field). Surprisingly , jittered sam- pling performs best with respect to multi-bounce paths (like on the side of the cuboidal occluder). Error plots The box plots accompanying rendered results show the mean (dashed horizontal line), median (solid line), quantiles around the median (shaded box) and the upper and lower fences (end points of whiskers). The mean value is printed abov e each sampler . KDT2Dpad Random Jitter2Dpad OAbushMJ2 Halton Sobol 0 0.0002 0.0004 0.0006 0.0008 0.001 0.0012 0.0014 0.0016 0.0018 0.002 0.0022 0.0024 0.0026 0.0028 0.003 RGB MSE KDT2Dpad Random Jitter2Dpad OAbushMJ2 Halton Sobol 0 0.05 0.1 0.15 0.2 0.25 0.3 0.35 Log Luminance MSE Figure 6 . Our sampler (KDT2DPad) performs comparably to state of the art techniques in this 19 dimensional ORB scene. The region of interest is highlighted in the reference image (top left).Plots comparing L 2 -norm mean squared error of the RGB v alues (RGB-MSE) (bot- tom left) and the log Luminance mean squared error (Log-Luminance-MSE) (bottom right) of the pixels within the region of interest are shown, along with enlarged versions of the high- lighted region for each sampler to aid visual inspection (bottom row). Note: we hav e chosen a representativ e crop within the image. 15 4.3 Discussion 4 EMPIRICAL EV ALU A TION KDT2Dpad Random Jitter2Dpad Halton Sobol 0 0.1e−4 0.2e−4 0.3e−4 0.4e−4 0.5e−4 0.6e−4 0.7e−4 0.8e−4 0.9e−4 1e−4 1.1e−4 1.2e−4 RGB MSE KDT2Dpad Random Jitter2Dpad Halton Sobol 0 0.0005 0.001 0.0015 0.002 0.0025 0.003 0.0035 0.004 0.0045 0.005 Log Luminance MSE Figure 7 . KDT2Dpad clearly outperforms all other considered samplers for the indicated shadow region of this 41 dimensional ORB-GLOSS scene. The region of interest is high- lighted in the reference image (left).Plots comparing L 2 -norm mean squared error of the RGB values (RGB-MSE) (bottom left) and the log Luminance mean squared error (Log- Luminance-MSE) (bottom right) of the pixels within the re gion of interest are shown, along with enlar ged versions of the highlighted region for each sampler to aid visual inspection (top right). 4.3. Discussion No parameters to tune One advantage of our method over samplers such as Halton and Sobol is that it is parameter-free. The output of the sampler only depends on n and d . W e experimented with sev eral variants such as replacing the binary tree structure with a ternary structure, combining the use of Halton sampling in image space with KDT in path space, etc. None of these v ariants provided a notable gain in performance. Generalization of jittered sampling to arbitrar y n Our method essentially provides a generalization of the lattice-based jitter ed sampling strategy to arbitrary n , thus 16 5 CONCLUSIONS & FUTURE WORK breaking the lattice symmetry and partly amending the curse of dimensionality from which the latter suffers. Nev ertheless, as the domain dimensionality increases the sample count should proportionally increase as to avoid performance degradation of the method to that of random sampling for the latter d − log 2 n subspace projections, depending on the dimension partitioning order . Limitation - requires large n Although our stratification does not impose constraints on the number of samples, the benefit due to the stratification vanishes as n d . e.g. if n = 1 , our method is equi valent to random sampling regardless of d . For large d , unless a large number of samples are drawn, the strata induced by the kd- tree tend to be lar ge thereby weakening the ef fect of stratification. The full power of our method is exploited when estimating discontinuous integrands in high dimensions with an enormous computational b udget. Ho wev er , the “padded” version of our sam- pler o vercomes this limitation by sacrificing the ability to sample in high dimensional spaces. Sample sets, not sample sequences It should be noted that jittered kd-tree stratifica- tion results in sample sets , not sample sequences, ostensibly deeming it inappropriate for adapti ve sampling. Ne vertheless, the recursiv e nature of our method enables ex- tensions able to generate sample sequences, and allows for adapti ve sampling capabil- ities with minor modifications to the original method, which are left for future work. Furthermore, adaptiv e dimension partitioning strategies based on our kd-tree method seem attracti ve for cases where the integrand is k -dimensional additiv e, k < d . Theoretical upper bound Theorem 3.1 provides a worst-case upper bound for the star-discrepanc y of our method. W e conjecture that the expected star-discrepancy sat- isfies the same asymptotic bounds as jittered sampling [ Pausinger and Steinerberger 2016 ], as observed by the epirical discrepanc y computation of Fig. 3 . 5. Conclusions & future w ork W e ha ve presented a novel stratification method for sampling the d -dimensional hy- percube, with a theoretical upper bound on its L ∞ star-discrepanc y . Our sampling algorithm is simple, parallelisable and we have presented comprehensive qualitative and qualitative comparisons to demonstrate that it performs comparably with state-of- the-art sampling methods on analytical tests as well as complex scenes. W e believ e that this work will inspire future work on how the kd-tree strata might be interleaved or how this scheme can be combined with existing algorithms (as in the case of mul- tijitter). 17 REFERENCES REFERENCES References A D A M S , A . , G E L FA N D , N . , D O L S O N , J . , A N D L E VO Y , M . 2009. Gaussian kd-trees for fast high-dimensional filtering. In A CM T ransactions on Graphics (T oG) , vol. 28, A CM, 21. 5 A H M E D , A . G . , P E R R I E R , H . , C O E U R J O L LY , D . , O S T R O M O U K H OV , V . , G U O , J . , Y A N , D . - M . , H UA N G , H . , A N D D E U S S E N , O . 2016. Low-discrepanc y blue noise sampling. A CM T ransactions on Gr aphics (Pr oceedings of SIGGRAPH Asia) 35 , 6. 5 A I S T L E I T N E R , C . , A N D D I C K , J . 2014. Functions of bounded variation, signed measures, and a general Koksma-Hlawka inequality . arXiv pr eprint arXiv:1406.0230 . 3 A RVO , J . 2001. Stratified sampling of 2-manifolds. In A CM SIGGRAPH Courses . 4 C H I U , K . , S H I R L E Y , P . , A N D W A N G , C . 1994. Graphics gems iv . Academic Press Profes- sional, Inc., San Diego, CA, USA, ch. Multi-jittered Sampling, 370–374. 4 C H R I S T E N S E N , P . H . , J A R O S Z , W . , E T A L . 2016. The path to path-traced movies. F ounda- tions and T rends R in Computer Graphics and V ision 10 , 2, 103–175. 3 C H R I S T E N S E N , P . , K E N S L E R , A . , A N D K I L PA T R I C K , C . 2018. Progressi ve multi-jittered sample sequences. In Computer Graphics F orum , vol. 37, W iley Online Library , 21–33. 5 C L A R B E R G , P . , J A RO S Z , W . , A K E N I N E - M ¨ O L L E R , T. , A N D J E N S E N , H . W . 2005. W av elet importance sampling: efficiently ev aluating products of comple x functions. In A CM T rans- actions on Graphics (T OG) , vol. 24, A CM, 1166–1175. 5 C O C H R A N , W. G . 1977. Sampling techniques. 4 C O O K , R . L . , P O RT E R , T . , A N D C A R P E N T E R , L . 1984. Distributed ray tracing. SIGGRAPH Comput. Graph. 18 , 3 (Jan.), 137–145. 4 D I C K , J . , A N D P I L L I C H S H A M M E R , F . 2010. Digital Nets and Sequences: Discrepancy Theory and Quasi-Monte Carlo Integr ation . Cambridge Univ ersity Press, Ne w Y ork, NY , USA. 3 D O E R R , B . , D O E R R , C . , A N D G N E W U C H , M . 2018. Probabilistic lower bounds for the discrepancy of latin hypercube samples. In Contemporary Computational Mathematics-A Celebration of the 80th Birthday of Ian Sloan . Springer , 339–350. 10 D U R A N D , F. 2011. A frequency analysis of monte-carlo and other numerical integration schemes. T ech. Rep. MIT -CSAIL TR-2011-052, CSAIL, MIT ,, MA, February . 3 F R I E D M A N , J . H . , B E N T L E Y , J . L . , A N D F I N K E L , R . A . 1977. An algorithm for finding best matches in logarithmic e xpected time. ACM T rans. Math. Softw . 3 , 3 (Sept.), 209–226. 5 G O S W A M I , P . , E RO L , F. , M U K H I , R . , P A JA R O L A , R . , A N D G O B B E T T I , E . 2013. An efficient multi-resolution frame work for high quality interactiv e rendering of massi ve point clouds using multi-way kd-trees. The V isual Computer 29 , 1 (Jan), 6983. 5 H A B E R , S . 1967. A modified monte-carlo quadrature. ii. Mathematics of Computation 21 , 99, 388–397. 4 H A LT O N , J . H . 1964. Algorithm 247: Radical-in verse quasi-random point sequence. Com- munications of the A CM 7 , 12, 701–702. 2 , 4 18 REFERENCES REFERENCES I H L E R , A . T. , S U D D E RT H , E . B . , F R E E M A N , W . T. , A N D W I L L S K Y , A . S . 2004. Ef- ficient multiscale sampling from products of gaussian mixtures. In Advances in Neural Information Pr ocessing Systems , 1–8. 5 J A RO S Z , W. , E NAY E T , A . , K E N S L E R , A . , K I L PA T R I C K , C . , A N D C H R I S T E N S E N , P . 2019. Orthogonal array sampling for Monte Carlo rendering. Computer Graphics F orum (Pr o- ceedings of EGSR) 38 , 4 (July), 135–147. 2 , 11 , 14 K E L L E R , A . , P R E M O Z E , S . , A N D R A A B , M . 2012. Advanced (quasi) Monte Carlo methods for image synthesis. In ACM SIGGRAPH 2012 Courses , A CM, Ne w Y ork, NY , USA, SIGGRAPH ’12, 21:1–21:46. 4 K E L L E R , A . 1995. A quasi-Monte Carlo algorithm for the global illumination problem in the radiosity setting. In Monte Carlo and Quasi-Monte Carlo Methods in Scientific Comput ing . Springer , 239–251. 4 K E L L E R , A . , U.S. Patent US7453461B2, No v . 2008. Image generation using low-discrepancy sequences. 5 K O K S M A , J . 1942. Een algemeene stelling uit de theorie der gelijkmatige verdeeling modulo 1. Mathematica B (Zutphen) 11 , 7-11, 43. 3 K O P F , J . , C O H E N - O R , D . , D E U S S E N , O . , A N D L I S C H I N S K I , D . 2006. Recursive W ang tiles for r eal-time blue noise , vol. 25. ACM. 4 K U I P E R S , L . , A N D N I E D E R R E I T E R , H . 1975. Uniform distribution of sequences. Bull. Amer . Math. Soc 81 , 672–675. 2 , 3 M A N T I U K , R . , K R AW C Z Y K , G . , M A N T I U K , R . , A N D S E I D E L , H . - P . 2007. High-dynamic range imaging pipeline: perception-motiv ated representation of visual content. In Human V ision and Electr onic Imaging XII , v ol. 6492, International Society for Optics and Photon- ics, 649212. 3 M A T O U S E K , J . 2009. Geometric discr epancy: An illustrated guide , vol. 18. Springer Science & Business Media. 10 M C C O O L , M . D . , A N D H A RW O O D , P . K . 1997. Probability trees. In IN GRAPHICS INTERF ACE 97 , 37–46. 5 M C K A Y , M . D . , B E C K M A N , R . J . , A N D C O N O V E R , W. J . 1979. Comparison of three methods for selecting values of input variables in the analysis of output from a computer code. T ec hnometrics 21 , 2, 239–245. 4 N I E D E R R E I T E R , H . 1987. Point sets and sequences with small discrepancy . Monatshefte f ¨ ur Mathematik 104 , 4, 273–337. 2 , 4 N I E D E R R E I T E R , H . 1992. Quasi-Monte Carlo Methods . John W iley & Sons, Ltd. 4 N I E D E R R E I T E R , H . 1992. Random number generation and quasi-Monte Carlo methods , vol. 63. Siam. 4 O S T RO M O U K H OV , V . , D O N O H U E , C . , A N D J O D O I N , P . - M . 2004. Fast hierarchical im- portance sampling with blue noise properties. In A CM T ransactions on Gr aphics (T OG) , vol. 23, A CM, 488–495. 4 19 REFERENCES REFERENCES O W E N , A . B . 1995. Randomly permuted (t,m,s)-nets and (t, s)-sequences. 4 O W E N , A . B . 2013. Monte Carlo theory , methods and examples . 2 P A I N T E R , J . , A N D S L O A N , K . 1989. Antialiased ray tracing by adaptive progressi ve refine- ment. In Pr oceedings of the 16th Annual Confer ence on Computer Graphics and Interac- tive T ec hniques , A CM, New Y ork, NY , USA, SIGGRAPH ’89, 281–288. 5 P AU S I N G E R , F. , A N D S T E I N E R B E R G E R , S . 2016. On the discrepancy of jittered sampling. Journal of Comple xity 33 , 199–216. 10 , 17 P H A R R , M . , A N D H U M P H R E Y S , G . 2010. Physically Based Rendering, Second Edition: F rom Theory T o Implementation , 2nd ed. Morgan Kaufmann Publishers Inc., San Fran- cisco, CA, USA. 4 , 13 P I L L E B O U E , A . , S I N G H , G . , C O E U R J O L LY , D . , K A Z H D A N , M . , A N D O S T RO M O U K H OV , V . 2015. V ariance analysis for Monte Carlo inte gration. ACM T ransactions on Graphics (TOG) 34 , 4, 124. 4 S H I R L E Y , P . 1991. Discrepancy as a quality measure for sample distributions. In In Eur o- graphics ’91 , Else vier Science Publishers, 183–194. 2 , 3 , 4 S O B O L ’ , I . 1967. On the distribution of points in a cube and the approximate ev alu- ation of integrals. USSR Computational Mathematics and Mathematical Physics 7 , 4, 86 – 112. URL: http://www.sciencedirect.com/science/article/pii/ 0041555367901449 , doi:https://doi.org/10.1016/0041-5553(67)90144-9. 2 , 4 S U B R , K . , S I N G H , G . , A N D J A RO S Z , W . 2016. Fourier analysis of numerical integration in Monte Carlo rendering: Theory and practice. In A CM SIGGRAPH Courses , A CM, New Y ork, NY , USA. 2 , 3 , 13 T A N G , B . 1993. Orthogonal array-based latin hypercubes. Journal of the American statistical association 88 , 424, 1392–1397. 4 V A N D E R C O R P U T , J . 1936. V erteilungsfunktionen: Mitteilg 5 . N. V . Noord- Hollandsche Uitgev ers Maatschappij. URL: https://books.google.be/books? id=DpODswEACAAJ . 4 V E A C H , E . 1998. Robust Monte Carlo Methods for Light T ransport Simulation . PhD thesis, Stanford, CA, USA. AAI9837162. 3 W A L D , I . , A N D H A V R A N , V . 2006. On building fast kd-trees for ray tracing, and on doing that in O (N log N). In 2006 IEEE Symposium on Inter active Ray T racing , IEEE, 61–69. 5 W A R N O C K , T. T. 1973. Computational In vestigations of Low-Discr epancy P oint-Sets. PhD thesis. AAI7310747. 11 Y U E , Y . , I W A S A K I , K . , C H E N , B . - Y . , D O B A S H I , Y . , A N D N I S H I TA , T . 2010. Unbiased, adaptiv e stochastic sampling for rendering inhomogeneous participating media. In A CM T ransactions on Graphics (T OG) , vol. 29, A CM, 177. 5 Z A R E M B A , S . 1968. Some applications of multidimensional inte gration by parts. In Annales P olonici Mathematici , vol. 1, 85–96. 2 , 3 20 REFERENCES REFERENCES A uthor Contact Information Alexandros D. K eros Univ ersity of Edinbur gh Informatics Forum, 10 Crichton St. Edibur gh, EH8 9AB, UK a.d.keros@sms.ed.ac.uk Div akaran Di vakaran Univ ersity of Edinbur gh Informatics Forum, 10 Crichton St. Edibur gh, EH8 9AB, UK ddiv akar@staf fmail.ed.ac.uk Kartic Subr Univ ersity of Edinbur gh Informatics Forum, 10 Crichton St. Edibur gh, EH8 9AB, UK K.Subr@ed.ac.uk 21 REFERENCES REFERENCES KDT2Dpad Random Jitter2Dpad Halton Sobol 0 0.5e−5 1e−5 1.5e−5 2e−5 2.5e−5 3e−5 3.5e−5 4e−5 4.5e−5 5e−5 5.5e−5 6e−5 6.5e−5 7e−5 RGB MSE KDT2Dpad Random Jitter2Dpad Halton Sobol 0 0.0002 0.0004 0.0006 0.0008 0.001 0.0012 0.0014 0.0016 Log Luminance MSE Figure 8 . QMC methods clearly outperform all others in terms of RGB-MSE for the shaded textured region of the P A VILION scene. Regarding Log-Luminance-MSE all metrics exhibit similar performance, with the worst being Random sampling follo wed by Jitter2Dpad. The region of interest is highlighted in the reference image (top).Plots comparing L 2 -norm mean squared error of the RGB values (RGB-MSE) (bottom left) and the log Luminance mean squared error (Log-Luminance-MSE) (bottom right) of the pix els within the re gion of interest are shown, along with enlarged versions of the highlighted region for each sampler to aid visual inspection (middle). 22 REFERENCES REFERENCES KDT2Dpad Random Jitter2Dpad Halton Sobol 0 0.0005 0.001 0.0015 0.002 0.0025 0.003 0.0035 0.004 0.0045 0.005 0.0055 0.006 RGB MSE KDT2Dpad Random Jitter2Dpad Halton Sobol 0 0.002 0.004 0.006 0.008 0.01 0.012 0.014 0.016 0.018 Log Luminance MSE Figure 9 . The ORB-GLOSS-DOF scene is identical to ORB-GLOSS but rendered with a shallow depth of field (wide aperture). This scene is interesting because it sho ws the interplay between high-frequency , high-dimensional light transport due to multiple bounces within the glossy bounding sphere and blur due to defocus. Errors in one region (256spp) are shown here and additional zoomed insets are shown in Figure 10 . 23 REFERENCES REFERENCES 128 spp 256 spp 128 spp 256 spp 128 spp 256 spp 128 spp 256 spp Figure 10 . The figure shows several insets from the ORB-GLOSS-DOF scene shown in Figure 9 . 24

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

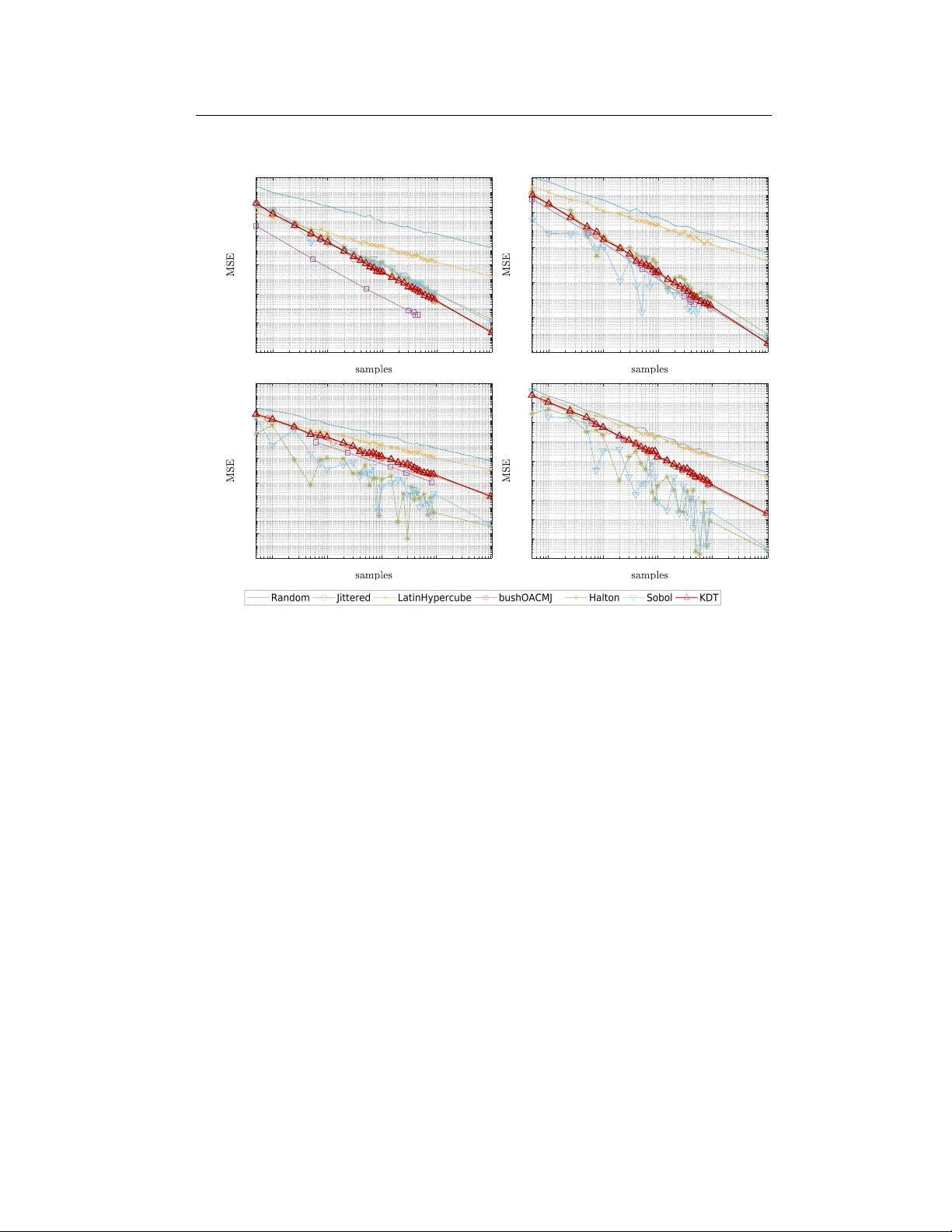

Leave a Comment