Technology-enhanced pre-instructional peer assessment: Exploring students' perceptions in a Statistical Methods course

There has been strong interest among higher education institutions in implementing technology-enhanced peer assessment as a tool for enhancing students' learning. However, little is known about how to use the peer assessment system in pre-instructional a…

Authors: Yosep Dwi Kristanto

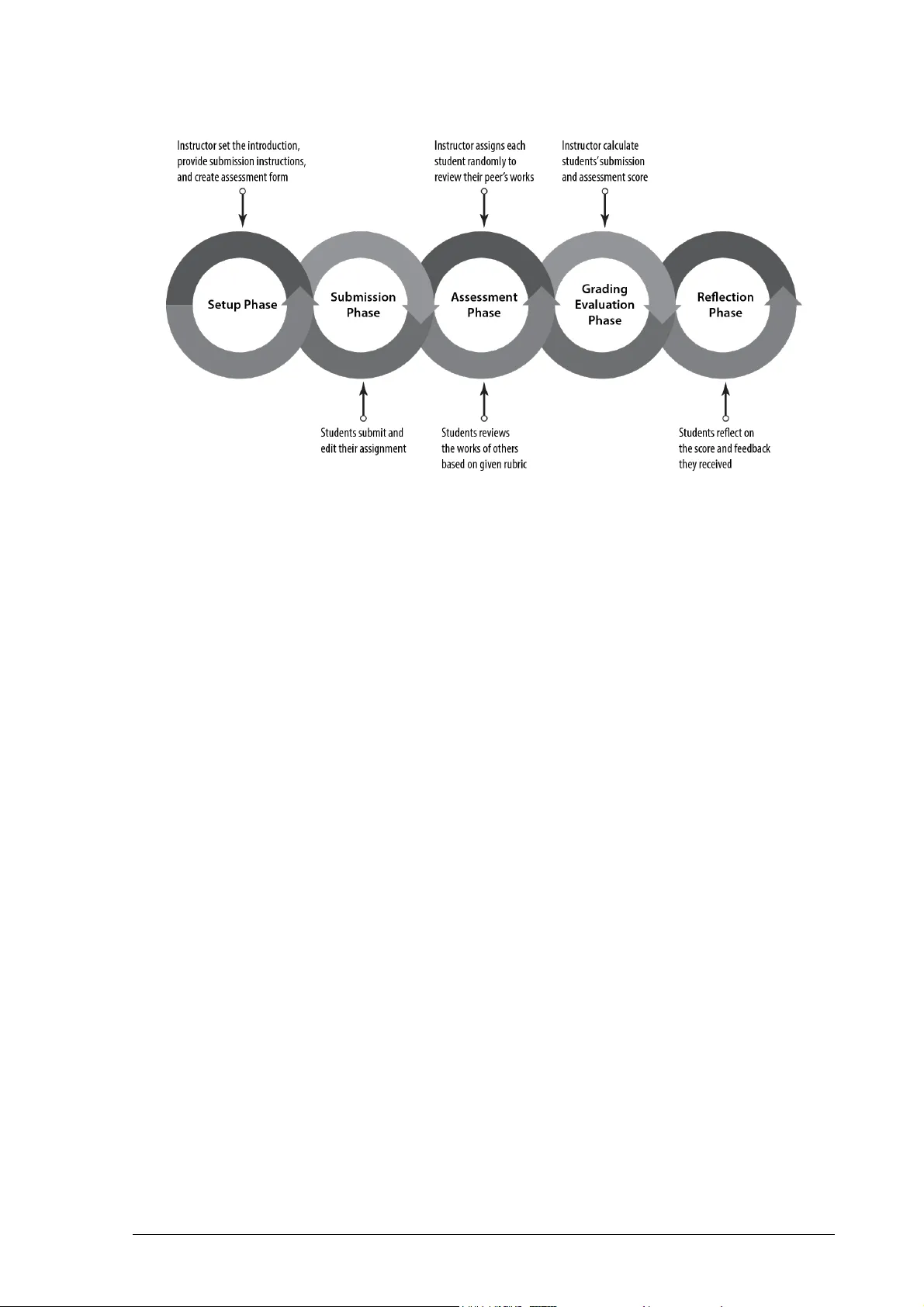

Copyright © 2018, REiD (Research and Evaluation in Education)
ISSN 2460-6995
REiD (Research and Evaluation in Education), 4(2), 2018, 105-116
Available online at: http://journal.uny.ac.id/index.php/reid

Yosep Dwi Kristanto
Department of Mathematics Education, Universitas Sanata Dharma
Paingan, Maguwoharjo, Depok, Sleman, Yogyakarta 55282, Indonesia
E-mail: yosepdwikristanto@usd.ac.id

Submitted: 25 August 2018 | Revised: 20 November 2018 | Accepted: 22 November 2018

Abstract

There has been strong interest among higher education institutions in implementing technology-enhanced peer assessment as a tool for enhancing students’ learning. However, little is known about how to use the peer assessment system in pre-instructional activities. This study aims to explore how technology-enhanced peer assessment can be embedded into pre-instructional activities to enhance students’ learning. Therefore, the present study was an explorative descriptive study that used the qualitative approach to attain the research aim. This study used a questionnaire, students’ reflections, and an interview in collecting students’ perceptions toward the interventions. The results suggest that the technology-enhanced pre-instructional peer assessment helps students to prepare for the new content acquisition and becomes a source of students’ motivation in improving their learning performance for the following main body of the lesson. A set of practical suggestions is also proposed for designing and implementing technology-enhanced pre-instructional peer assessment.
Keywords: peer assessment, pre-instructional activities, perceptions, Statistical Methods, higher education

Introduction

There has been strong interest among higher education institutions in implementing peer assessment as a tool for enhancing students’ learning. Indeed, the growth of computer technology has a significant role in improving peer assessment applications in various educational settings (Yang & Tsai, 2010). It is also the case in mathematics learning. Mathematics education researchers have shown substantial evidence of technology-enhanced peer assessment’s benefits on the students’ learning (Chen & Tsai, 2009; Peter, 2012; Willey & Gardner, 2010). Specifically, Tanner and Jones (1994) posit that peer assessment helps the students to perform reflection through reviewing the works of others and recalling their own works.

The reflection process through which the students recall their existing mental context is a fundamental component in learning (Lee & Hutchison, 1998; van Woerkom, 2010; Wain, 2017). Therefore, this process of reflection meets the purpose of pre-instructional activities. In the pre-instructional activities, it is expected that students can link their prior knowledge with the new content to be learned (Dick, Carey, & Carey, 2015). For this rationale, it is acceptable to stimulate the reflection process by conducting peer assessment in pre-instructional activities.

However, little has been shown in the literature that peer assessment is used in pre-instructional activities, though Scott (2017) has utilized the simulated peer assessment in improving numerical problem-solving skills as a prerequisite for learning Biology. The questions and the solutions used in Scott’s study were not genuine students’ works but were constructed by the researcher.
Therefore, the present study tries to shed light on how to embed technology-enhanced peer assessment into pre-instructional activities to enhance students’ learning. This paper investigates students’ perceptions in an attempt to portray students’ learning.

Technology-Enhanced Peer Assessment

In understanding peer assessment, this study refers to the definition proposed by Topping (1998). He defined peer assessment as a process in which a student measures the learning achievement of his/her peers. In the process, students have two different roles, namely assessors and assessees. As assessors, they evaluate and, in many cases, provide feedback to the works of their fellow students. In the assessee role, they receive marking and feedback for their works and may act upon it.

Recent studies found that peer assessment has positive impacts on the students’ learning. Several studies demonstrate that peer assessment can benefit the students in the assessment task, i.e. the quality of assessment they provided (Ashton & Davies, 2015; Gielen & De Wever, 2015; Jones & Alcock, 2014; Patchan, Schunn, & Clark, 2018). Furthermore, peer assessment also has effects on the students’ acquisition of knowledge and skills in the core domain. In their study, Hwang, Hung, and Chen (2014) show that peer assessment effectively promotes the students’ learning achievement and problem-solving skills. In particular, a gain in learning achievement was also shown in a Statistics class (Sun, Harris, Walther, & Baiocchi, 2015). One possible rationale of such benefits of peer assessment in the students’ learning is the exposure to the works of their peers.
When the students view their peers’ works, they compare and contrast the works with their alternative solutions. This process of comparing and contrasting has the potential to facilitate students’ learning (Alfieri, Nokes-Malach, & Schunn, 2013; Reinholz, 2016).

Even though peer assessment has a number of advantages in facilitating learning, it also has several issues. The major concern in peer assessment is its validity as well as reliability (Cho, Schunn, & Wilson, 2006). Topping (1998) found disagreement on the degree of validity and reliability of peer assessment in his review: some studies report high validity and reliability (Haaga, 1993; Stefani, 1994; Strang, 2013), and the others report otherwise (Cheng & Warren, 1999; Mowl & Pain, 1995). However, the issues regarding validity and reliability can be reduced by providing the students with assessment rubrics (Hafner & Hafner, 2003; Jonsson & Svingby, 2007) since they make expectations and criteria explicit.

Another issue regarding the peer assessment system is about administrative workload (Hanrahan & Isaacs, 2001). When implementing peer assessment in their class, instructors at least should manage the students’ submission, assessment, and grading evaluation. Fortunately, these functions can be administered by using technology (Kwok & Ma, 1999). Technology can be used to record and assemble the results of scoring and commentary efficiently. In addition, technology also enables the teacher to provide immediate feedback based on the automated score calculation.

In the spirit of making the most of peer assessment’s benefits and addressing its problems, peer feedback can be employed to accompany the peer assessment process. In peer feedback, the students discuss with each other regarding performance and standards (Liu & Carless, 2006).
They comment on or annotate the draft or final assignments of their peers to give advice for the improvement of the assignments. When feedback comes with grading, it can be used to explain and justify the grade. It is also used to pose thought-provoking questions. The presence of the thought-provoking questions can foster the assessees’ reflection on their assignments.

Pre-instructional Activity: Theory and Practice

From the instructional design perspective, Gagné, Briggs, and Wager (1992) posit that an instruction should be designed systematically to affect the students’ development. Thus, instructional activities should be designed to facilitate the students’ learning. One major component of the activities is pre-instructional activities. The activities are done prior to beginning formal instruction and are significantly important to motivate the students, inform them of the learning objectives, and stimulate recall of prerequisite skills. This study will not theoretically discuss all of the pre-instructional activities in depth. Instead, it will briefly present the examples of pre-instructional activities that appear in literature.

Pre-instructional activities can be done in different strategies. It also applies to mathematics learning. Loch, Jordan, Lowe, and Mestel (2014), in the Calculus of Variations and Advanced Calculus class, use screencasts to facilitate students in revising the prerequisite knowledge regarding the calculus techniques. Further, some scholars (Jungić, Kaur, Mulholland, & Xin, 2015; Love, Hodge, Corritore, & Ernst, 2015) use peer instruction as a pre-instructional strategy.
The lesson introduction also can be done by simply telling the students of the prerequisites or testing them on entry skills (Conner, 2015).

Method

This study was an explorative descriptive research employing a qualitative approach in exploring how technology-enhanced peer assessment can be embedded into pre-instructional activities to enhance students’ learning. The following sections give details of the research’s setting, data collection, and also data analysis.

Research Setting

The research was conducted at a private university in Yogyakarta, Indonesia to investigate students’ perceptions of the peer assessment system in a Statistical Methods class. The class was conducted in a multimedia laboratory in which students have a computer to assist them in learning statistics. The author was the instructor of the class. The class utilized Exelsa, a Moodle-based learning management system developed by the university, for the course administration purpose. In Exelsa, the students can access learning materials, post to a forum, and discuss with their peers about a certain topic, submit their assignments, assess and give feedback to their peers’ works. The class was conducted biweekly with 24 meetings of instruction, one meeting of the midterm exam, and one meeting of the final exam. Each meeting consisted of 100-minute learning activities.

In three out of twenty-four meetings, the class was begun with peer-assessment activity. Therefore, students must submit their assignments before the class started. The assignments used in peer assessment were on the topics of one-way and two-way ANOVA. The assignments were done individually and required Microsoft Excel and SPSS Statistics in processing and analyzing real data given in the problems. The more details of the assignments will be described in the Findings section.
The peer assessment system used in this study was a workshop module (Dooley, 2009) provided by the LMS. The peer assessment takes place during the pre-instructional activities. The peer assessment system has five phases, i.e. setup, submission, assessment, grading evaluation, and reflection phases. In the setup phase, the instructor should set the introduction, provide submission instructions, and create an assessment form. After all of the components are set up, the instructor can activate the submission phase. In this phase, students can submit and edit their assignment. Optionally, they also can give a note on their assignments. However, students can only submit and edit their assignments before the class started.

Right after the class started, the instructor activated the assessment phase. In this phase, each student was assigned randomly to review the assignments of two peers. Thus, each student has two assessors. In reviewing their peers’ assignments, students used a rubric to obtain a more objective assessment. The grading strategy used in the peer assessment system is the number of errors, through which students grade each criterion by answering yes or no questions and optionally provide comments on the criterion. After all of the assignments were reviewed, the instructor can switch on the grading evaluation phase in which the submission and assessment grades of each student were calculated automatically. In the end, students can directly see their score and feedback provided by their peers and reflect on it. The last mentioned is the reflection phase. The peer assessment process can be seen in Figure 1.

Figure 1. Peer assessment phases

Data Collection

The data collection process in this study was conducted between May and June 2018 and has been carried out in three phases. In the first phase, the researcher asked the students to write a reflection about their learning experiences in the course. The researcher prompted the students to use the Gibbs reflective model (Gibbs, 1988). One learning experience that should be reflected on by students was their experience in the peer assessment activity. This phase of the data collection process was administered by the LMS.

In the second phase, a questionnaire adapted from Brindley and Scoffield (1998) was used to examine students’ perceptions on the peer assessment. The questionnaire consists of three sections. The first section asked the students’ personal data while the second section asked students’ perceptions of peer assessment. The last section invited students to assess how useful the peer assessment process was. The second phase was done in the week right before the final exam and administered by Google form.

The third phase was conducted by interviewing three students on their general opinion about the learning process. The three students were purposively chosen to represent students’ achievement. These students were interviewed simultaneously so that they would feel comfortable, since the interviewer was their lecturer. The interview was recorded with the approval of these students to prevent data loss.

In addition, logs of three peer assessment activities in the LMS were also generated and downloaded. This logs file records the students’ activities in the peer assessment system. Once downloaded, the logs data were then sorted in Microsoft Excel to know the duration of assessment task fulfillment done by each student. Moreover, the data also were used to find the total time-frame of the assessment phase in each meeting.
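The log processing described above was done in Microsoft Excel. As a rough illustration only, the same two quantities (each student's task duration and the total time-frame of the assessment phase) could be computed as in the following Python sketch. The row layout, event names, and timestamps are hypothetical and do not reflect the actual export format of the LMS.

```python
from datetime import datetime

# Hypothetical log rows: (student id, event name, timestamp).
# The column layout and event names are assumptions, not the LMS's real export.
LOG_ROWS = [
    ("S01", "assessment_opened", "2018-05-14 07:05:00"),
    ("S01", "assessment_saved", "2018-05-14 07:25:30"),
    ("S02", "assessment_opened", "2018-05-14 07:06:00"),
    ("S02", "assessment_saved", "2018-05-14 07:18:00"),
]
TIME_FORMAT = "%Y-%m-%d %H:%M:%S"


def minutes_between(start, end):
    """Elapsed time between two datetimes, in minutes."""
    return (end - start).total_seconds() / 60


def task_durations(rows):
    """Minutes from each student's first to last logged event."""
    first, last = {}, {}
    for student, _event, stamp in rows:
        moment = datetime.strptime(stamp, TIME_FORMAT)
        first[student] = min(first.get(student, moment), moment)
        last[student] = max(last.get(student, moment), moment)
    return {s: minutes_between(first[s], last[s]) for s in first}


def phase_time_frame(rows):
    """Minutes from the earliest to the latest event of the whole class."""
    moments = [datetime.strptime(stamp, TIME_FORMAT) for _s, _e, stamp in rows]
    return minutes_between(min(moments), max(moments))


durations = task_durations(LOG_ROWS)
mean_duration = sum(durations.values()) / len(durations)
time_frame = phase_time_frame(LOG_ROWS)
```

With the made-up rows above, `durations` holds one value per student, `mean_duration` is their average, and `time_frame` spans the first to the last event in the class.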
Data Analysis

Data from the questionnaire and data logs were analyzed using descriptive statistics. Students’ response from each item of the questionnaire was described as a proportion or mean value whereas data logs were described as a mean value for each meeting. Data from students’ reflection and interview were examined and categorized by the researcher. The categories are derived from Wen and Tsai’s (2006, pp. 33–34) study, i.e. positive attitude, online attitude, understanding-and-action, and negative attitude. The data were labeled with the corresponding codes and analyzed via the Atlas.ti package program (for more information about conducting qualitative data analysis with Atlas.ti, see Friese, 2014).

Research Participants

In total, 34 students were enrolled in the author-taught course under study. Student gender demographics consisted of eight male and 26 female students. Most students were in their junior year with only five students from senior year. All of the students were prospective mathematics teachers.

Findings and Discussion

Findings

Pre-instructional Activities Profiles

The three meetings utilized technology-enhanced peer assessment in the pre-instructional activities. At the beginning of each session, the instructor informed the students about the learning objectives that should be achieved and linked the objectives with the previous assignments. The students were then asked to assess their peers’ works through the LMS. During the peer assessment process, the instructor moved about the classroom, observed students’ progress on the assessment task, provided guidance if necessary, and answered questions if they arose.
After the peer assessment process was complete, the instructor gave the students the opportunity to reflect on the score and feedback they received. The latter activity was the end of the pre-instructional activities.

The description of the assignments to be submitted before each meeting started is as follows. The first meeting required students to submit an assignment on the topic of one-way ANOVA. The assignment asked students to investigate if there is a difference in the mean of football players’ height in each position, i.e. forward, midfielder, defender, and goalkeeper. In the assignment, the instructor provided real data obtained from various sources. In this meeting, two students did not submit their assignment and there were also two students who submitted their assignment but did not attend the class.

In the second meeting, the students should have submitted a one-way ANOVA problem from the accompanying textbook (Bluman, 2012, p. 632). The problem asked them to determine the effective method in lowering blood pressure by examining the mean of individuals’ blood pressure from three samples categorized by the methods they follow. The peer assessment process used in this meeting was slightly different from the previous meeting. In the assessment phase, the students had to assess an example submission provided by the instructor as an assessing practice before they assessed their peers’ works. Three students did not submit their assignment in this meeting.

In the third meeting, students should have submitted their assignment for the 'Car Crash Test Measurements' problem from the accompanying textbook (Triola, 2012, p. 643). In this problem, the students were instructed to test for an interaction effect, an effect from car type and car size.
One student did not submit their assignment in this meeting and there were also three students who submitted their assignment but did not attend the class.

The mean of assessment tasks carried out by all students in each meeting was calculated and reported in Table 1. On average, the period from the opening to the closing of the assessment phase was 43.50 minutes. The table reveals a sharp decrease, in each meeting, from the mean time the students spent on the first assessment task to the mean time they spent on the second. In particular, the decreasing trend also applied in the second meeting when the students first reviewed an assessment example. In this meeting, the students reviewed the example assessment in nearly half an hour (26.83 mins), the first peer’s works in almost a quarter of an hour (12.69 mins), and the second peer’s works in just over six minutes (6.72 mins).

Table 1. Mean of assessment phase time-frame in minutes

| | Example assessment | Assessment 1 | Assessment 2 | Total |
|---|---|---|---|---|
| Meeting 1 | – | 20.03 | 5.83 | 39.97 |
| Meeting 2 | 26.83 | 12.69 | 6.72 | 59.80 |
| Meeting 3 | – | 10.77 | 4.83 | 30.72 |
| Mean | M = 26.83 | M = 14.50 | M = 5.79 | M = 43.50 |

Students’ Perception

To investigate the students’ perceptions of peer assessment, this study employed both quantitative and qualitative data. The quantitative data were obtained from the questionnaire, while the qualitative data were obtained from the students’ reflections, the questionnaire, and interview.

From the questionnaire result, it is reported that most of the students (86.21%) in this study had previous experience on peer assessment. It is also found that approximately three out of four students perceived the necessity of assessing their peers.
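As a quick arithmetic check on Table 1, the column means in its last row can be recomputed from the per-meeting cells. The Python sketch below does this under the assumption that each M value is the plain average of the meetings that have a value in that column; the dictionary keys are illustrative names, not the author's.

```python
# Cell values transcribed from Table 1 (minutes). None marks meetings
# that had no example assessment. Note that "total" is the time-frame of
# the whole assessment phase, not the sum of the other columns.
TABLE_1 = {
    "Meeting 1": {"example": None, "assessment_1": 20.03, "assessment_2": 5.83, "total": 39.97},
    "Meeting 2": {"example": 26.83, "assessment_1": 12.69, "assessment_2": 6.72, "total": 59.80},
    "Meeting 3": {"example": None, "assessment_1": 10.77, "assessment_2": 4.83, "total": 30.72},
}


def column_mean(table, column):
    """Average of the non-missing cells in one column, to two decimals."""
    values = [row[column] for row in table.values() if row[column] is not None]
    return round(sum(values) / len(values), 2)


means = {
    column: column_mean(TABLE_1, column)
    for column in ("example", "assessment_1", "assessment_2", "total")
}

# Drop in minutes from the first to the second peer review in each meeting.
drops = {
    meeting: round(row["assessment_1"] - row["assessment_2"], 2)
    for meeting, row in TABLE_1.items()
}
```

The recomputed means agree with the reported row (26.83, 14.50, 5.79, and 43.50), and `drops` is positive in all three meetings, which matches the decrease described in the text.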
Further, only 27.59% of the students fully understood the expectation imposed on them when reviewing their peers’ works, whereas the rest only have a moderate understanding. In other words, all students understood what others expect of them in assessment tasks.

Four items of the questionnaire were rating-scale questions and used to explore the students’ perceived easiness, fairness, pressure, and benefit of peer assessment. A mean report of the students’ responses to the items is shown in Table 2. The students gave a high rating on fairness and responsibility of their marking (M = 4.07) and benefits of peer assessment they receive (M = 4.21). With regard to the grading task, they tend to posit that they have difficulties in assessing their peers’ works (M = 3.24). However, they were under moderate pressure when they are doing the assessment task (M = 3.03). The sources of the pressure are various: more than half comes from their role (62.07%), almost a third comes from their experiences (31.03%), and the rest comes from their peers (6.90%).

Table 2. Students’ perceptions scale on peer assessment

| Question | Mean |
|---|---|
| How difficult was assessing your peers’ work? | 3.24 |
| How fair and responsible were you in assessing your peers’ work? | 4.07 |
| How much pressure did the experience put you under? | 3.03 |
| How beneficial was the peer-assessment to you? | 4.21 |

The students’ written reflection and interview are used to examine the students’ perceptions as well. The perceptions were grouped into four defined categories and presented in Table 3.

Table 3. Categories of students’ perceptions

| Category | Code (frequency) |
|---|---|
| Positive attitude | Helping learning (42); Providing feedback (5); Enabling interaction (3); Sustainability (3); Helping instructor (2); Engaging (1); Motivating (1) |
| Online attitude | Anonymity (1); Efficiency (1); Transparency (1) |
| Understanding-and-action | Grading strategy (8); Action for improvement (7); Assessment criteria (2) |
| Negative attitude | Credibility (15); No feedback (6); Underestimating self-ability (3) |

The main theme of the students’ statements was the helpfulness of peer assessment in enhancing their learning. Regarding this theme, the students first stated that peer assessment enables a reflective process, viz., reflecting on their mistakes shown by peers as well as reflecting on and reviewing their own works to be compared and contrasted to peers’ works. Second, the students perceived the peer assessment process as a tool for knowledge building since they should review their knowledge when assessing others. They added that assessing their peers encouraged them to discuss with their friends if they are indecisive about their assessment. This discussion led them to construct new knowledge to provide marking and feedback on the assessment task. Third, the students thought that the peer assessment process develops their evaluative judgment making skills regarding their own works or others’ when they provide feedback to peers. Finally, the process of reviewing peers’ works gives critical understanding and develops higher-level learning skills, such as analyzing and evaluating. The quotations from five students that reflect the benefits of peer assessment with regard to its usefulness in enhancing their learning are given below:

In my opinion, the peer assessment is useful. (It is) because it encourages me to review my own works if there is a mismatch between my own works and peers. So, (I) learned twice at once regarding the works. (S6)

… because I don’t know (it is right or wrong) … I ask for help to my friend and found that my insight was improved.
(S15)

This (peer) assessment was good to provide feedbacks to peers’ works as well as to be responsible with my marking. (S31)

(Peer assessment) help us to think critically in assessing friends’ works. (S12)

… we also must evaluate the answer of our friends which indirectly makes us reviewing the topics so that we can know/analyze where the friends’ mistakes are. (S29)

Assessment credibility is another major theme of students’ perceptions on peer assessment. On one hand, the students agreed that peer assessment gives the instructor other perspectives to provide more accurate grading and timely feedback. On the other hand, the students also questioned their peers’ ability in assessing their works. It is possible that their peer assessors made an inaccurate assessment if the assessors’ own works were inaccurate since the assessors often referred to them when undertaking an assessment task. Underrating self-ability also becomes a source of credibility issues. When the students feel incompetent on the subject-specific tasks, they are afraid of not being able to provide appropriate judgments. Reliability is students’ next concern on peer assessment. They found that their assessors give different grades on the same item. Hence, they questioned peers’ understanding of rubric criteria given by the instructor. The following are the students’ statements related to the credibility of peer assessment.

Peer assessment is very useful as if the instructor makes an error on assessment, it can be remedied by peers’ grading. (S8)

… However, the peer assessment doesn’t work optimally when the assessor lacks understanding on what being assessed. (Moreover) the accuracy of each student’s assessment is different from one another.
(S34)

… Maybe the assessors’ opinions are different from each other, since there are two friends that get different scores although their answers are more or less the same. (S19)

The students thought that feedback is an important component in peer assessment. Corrective feedback provided by peers was helpful for the students to know the errors on their works whereas suggestive feedback was useful to make improvements later on. The importance of feedback was also reflected in students’ responses when they did not receive feedback. They believe that the assessors’ task was not only to give marking but also to provide constructive comments. Some of the students’ comments regarding the importance of feedback are as follows.

The one who said ‘no’ also comment. It is a constructive thing for us (to know) our mistakes that (the location of) the mistakes are in here, in here, and in here … There is a friend (that not only) said ‘correct’ but also give a comment, (you) should write like this and like this. So, that’s the positive. It’s like constructing (the understanding of) us. (S15)

Sometimes there is a friend who said that our answer was not correct, but does not give a single comment. That’s it. So, we do not know where it goes wrong. (S24)

Other peer assessment aspects did not escape the students’ attention. With regard to the number of errors grading strategy, they perceived that it provided not many options in marking peers’ works. Instead of answering yes or no in each criterion, they prefer to use a scale-rating strategy. However, they thought that the peer assessment process can facilitate students’ discussion as well as student-instructor interaction.
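To put the themes discussed above in proportion, the code frequencies in Table 3 can be summed per category. The Python sketch below does only that arithmetic. The category totals and percentage shares it produces are derived here from the table cells and are not figures reported by the author.

```python
# Code frequencies transcribed from Table 3.
TABLE_3 = {
    "Positive attitude": {
        "Helping learning": 42, "Providing feedback": 5,
        "Enabling interaction": 3, "Sustainability": 3,
        "Helping instructor": 2, "Engaging": 1, "Motivating": 1,
    },
    "Online attitude": {"Anonymity": 1, "Efficiency": 1, "Transparency": 1},
    "Understanding-and-action": {
        "Grading strategy": 8, "Action for improvement": 7,
        "Assessment criteria": 2,
    },
    "Negative attitude": {
        "Credibility": 15, "No feedback": 6,
        "Underestimating self-ability": 3,
    },
}

# Number of coded statements in each category and overall.
category_totals = {name: sum(codes.values()) for name, codes in TABLE_3.items()}
grand_total = sum(category_totals.values())

# Share of all coded statements per category, in percent (one decimal).
category_shares = {
    name: round(100 * total / grand_total, 1)
    for name, total in category_totals.items()
}
```

Under this tally, statements coded as positive attitude form the largest group and negative attitude the second largest, which is consistent with helpfulness and credibility being the two major themes in the text.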
Other benefits of technology-enhanced peer assessment were also unfolded. Students stated that such an assessment model was transparent and efficient as well as engaging and motivating.

Discussion

The aim of this study was to explore how technology-enhanced peer assessment can be embedded into pre-instructional activities to enhance the students’ learning. This paper interprets the students’ perceptions in an effort to investigate students’ learning experiences. In general, the research results show that technology-enhanced peer assessment holds significant promise to be an effective pre-instructional strategy. The learning benefits provided by peer assessment meet the purpose of the pre-instructional strategy.

The findings of the present study show that the process of assessing and commenting on the works of others facilitates the students’ learning. This finding is in line with the result of prior studies in peer assessment investigation (Hanrahan & Isaacs, 2001; Sun et al., 2015). One possible explanation of this finding can be derived from the comparative thinking perspective (Alfieri et al., 2013; Silver, 2010). When the students review peers’ works, they compare and contrast them with their own works. If they doubt their own works, they ask for help from others or the instructor. This process of comparing and contrasting helps them to rehearse their own understanding that is useful for preparing them to gain new knowledge related to it.

The findings also suggest that peer assessment stimulates reflective thinking that drives action for improvement. Similar to the results of other studies (Davies & Berrow, 1998; Liu, Lin, Chiu, & Yuan, 2001), the peer assessment process leads the students to think critically and reflect on the quality of their own works compared to the others’.
This evaluative process helps the students to devise a plan in improving their learning products later on. As feedback receivers, the students also take advantage of the feedback to enhance their learning. In other words, peer assessment can become a source of students’ motivation in improving their learning performance in the commencing main body of a lesson (Jenkins, 2005).

The study also shows the importance of feedback in students’ learning. As a salient element of peer assessment, peer feedback facilitates students in taking an active role in their learning (Liu & Carless, 2006). When the students provide corrective feedback on the peers’ works, they develop an objective attitude in conducting their assessment task (Nicol & Macfarlane-Dick, 2006). Through providing suggestive feedback, the students think critically on the drawbacks of their peers’ works even when the works are correct (Chi, 1996). As feedback receivers, the students use peers’ comments to improve their works. Moreover, peers’ comments have the potential to spark cognitive conflict when the comments contradict the student’s prior knowledge. From the socio-cognitive perspective, cognitive conflict is fundamental in facilitating students’ learning when it is successfully resolved (Nastasi & Clements, 1992).

However, the results of this study also reveal resistance to peer assessment. Many students in this study have negative attitudes toward the fairness of peer grading. A similar result also can be found in the literature (Cheng & Warren, 1999; Davies, 2000; Liu & Carless, 2006). The negative perceptions come from the students’ skepticism about the expertise of their fellow students. Even when a rubric was provided, the students thought that some of their peers were not really fair in giving marking. Another issue arose from the grading strategy used in the assessment task.
The correct and not-correct dichotomy into which students should categorize their peers’ work is considered to be inflexible (Sheatsley, 1983). The students want a more flexible grading strategy in order to be more confident in assessing their peers.

Last but not least, the study has several limitations to be considered. The first limitation of the current study relates to its exploratory design in investigating the students’ learning experience. Future studies with a larger sample and a longer period are needed to verify the evidence found in this study. Second, this study only focuses on implementing peer assessment. Comparative studies are needed to compare the effectiveness of peer assessment and other strategies, such as advance organizers and overviews, to be used in pre-instructional activities. Finally, design-based studies could contribute to future literature by providing a peer assessment design that optimizes the learning transition from the lesson introduction to the main body of the lesson.

Conclusion and Suggestions

The contribution of this study is to show the potential of technology-enhanced peer assessment to be used as a pre-instructional activity. The results of the current study, in general, suggest that technology-enhanced pre-instructional peer assessment helps the students to prepare for the acquisition of new content in the following lesson. It is also found that peer feedback has a significant role in the peer assessment process in facilitating students’ learning.

Based on the findings of the present study, the author proposes a set of suggestions for designing and implementing technology-enhanced pre-instructional peer assessment.
First, training should be provided to students so that they can provide and manage feedback as well as take action upon it effectively. Second, discussions between students and the instructor about assessment criteria are needed in order to improve students’ understanding of what is to be assessed in their fellow students’ works. If necessary, the instructor can also invite students to develop the assessment criteria. Third, the instructor should monitor students’ attitudes toward the grading strategy. This monitoring process aims to determine the suitability of the grading strategy for the students, tasks, and learning context. Finally, the instructor should use the assignment features (e.g., its content and context) used in peer assessment as a link to the subsequent main body of the lesson.

Acknowledgment

The researcher would like to thank the students who participated in this study and LPPM of Universitas Sanata Dharma that supported this study. In addition, the researcher expresses gratitude to Russasmita Sri Padmi who kindly agreed to edit this manuscript.

References

Alfieri, L., Nokes-Malach, T. J., & Schunn, C. D. (2013). Learning through case comparisons: A meta-analytic review. Educational Psychologist, 48(2), 87–113. https://doi.org/10.1080/00461520.2013.775712

Ashton, S., & Davies, R. S. (2015). Using scaffolded rubrics to improve peer assessment in a MOOC writing course. Distance Education, 36(3), 312–334. https://doi.org/10.1080/01587919.2015.1081733

Bluman, A. G. (2012). Elementary statistics: A step by step approach (8th ed.). New York, NY: McGraw-Hill.

Brindley, C., & Scoffield, S. (1998). Peer assessment in undergraduate programmes. Teaching in Higher Education, 3(1), 79–90. https://doi.org/10.1080/1356215980030106

Chen, Y., & Tsai, C. (2009). An educational research course facilitated by online peer assessment. Innovations in Education and Teaching International, 46(1), 105–117. https://doi.org/10.1080/14703290802646297

Cheng, W., & Warren, M. (1999). Peer and teacher assessment of the oral and written tasks of a group project. Assessment & Evaluation in Higher Education, 24(3), 301–314. https://doi.org/10.1080/0260293990240304

Chi, M. T. H. (1996). Constructing self-explanations and scaffolded explanations in tutoring. Applied Cognitive Psychology, 10(7), 33–49. https://doi.org/10.1002/(SICI)1099-0720(199611)10:7<33::AID-ACP436>3.0.CO;2-E

Cho, K., Schunn, C. D., & Wilson, R. W. (2006). Validity and reliability of scaffolded peer assessment of writing from instructor and student perspectives. Journal of Educational Psychology, 98(4), 891–901. https://doi.org/10.1037/0022-0663.98.4.891.supp

Conner, K. (2015). Investigating diagnostic preassessments. Mathematics Teacher, 108(7), 536–542.

Davies, P. (2000). Computerized peer assessment. Innovations in Education and Training International, 37(4), 346–355. https://doi.org/10.1080/135580000750052955

Davies, R., & Berrow, T. (1998). An evaluation of the use of computer supported peer review for developing higher-level skills. Computers & Education, 30(1), 111–115.

Dick, W., Carey, L., & Carey, J. O. (2015). Systematic design of instruction (8th ed.). Boston, MA: Pearson.

Dooley, J. F. (2009). Peer assessments using the moodle workshop tool. In Proceedings of the 14th Annual ACM SIGCSE Conference on Innovation and Technology in Computer Science Education (Vol. 41, pp. 344–344). New York, NY: ACM. https://doi.org/10.1145/1562877.1562985

Friese, S. (2014). Qualitative data analysis with ATLAS.ti (2nd ed.). London: SAGE.

Gagné, R. M., Briggs, L. J., & Wager, W. W. (1992). Principles of instructional design (4th ed.). Fort Worth, TX: Harcourt Brace College.

Gibbs, G. (1988). Learning by doing: A guide to teaching and learning methods. London: FEU.

Gielen, M., & De Wever, B. (2015). Structuring the peer assessment process: A multilevel approach for the impact on product improvement and peer feedback quality. Journal of Computer Assisted Learning, 31(5), 435–449. https://doi.org/10.1111/jcal.12096

Haaga, D. A. F. (1993). Peer review of term papers in graduate psychology courses. Teaching of Psychology, 20(1), 28–32. https://doi.org/10.1207/s15328023top2001_5

Hafner, J., & Hafner, P. (2003). Quantitative analysis of the rubric as an assessment tool: An empirical study of student peer-group rating. International Journal of Science Education, 25(12), 1509–1528. https://doi.org/10.1080/0950069022000038268

Hanrahan, S. J., & Isaacs, G. (2001). Assessing self- and peer-assessment: The students’ views. Higher Education Research & Development, 20(1), 53–70. https://doi.org/10.1080/07294360123776

Hwang, G.-J., Hung, C.-M., & Chen, N.-S. (2014). Improving learning achievements, motivations and problem-solving skills through a peer assessment-based game development approach. Educational Technology Research and Development, 62(2), 129–145.

Jenkins, M. (2005). Unfulfilled promise: Formative assessment using computer-aided assessment. Learning and Teaching in Higher Education, (1), 67–80.

Jones, I., & Alcock, L. (2014). Peer assessment without assessment criteria. Studies in Higher Education, 39(10), 1774–1787. https://doi.org/10.1080/03075079.2013.821974

Jonsson, A., & Svingby, G. (2007). The use of scoring rubrics: Reliability, validity and educational consequences. Educational Research Review, 2(2), 130–144. https://doi.org/10.1016/J.EDUREV.2007.05.002

Jungić, V., Kaur, H., Mulholland, J., & Xin, C. (2015). On flipping the classroom in large first year calculus courses. International Journal of Mathematical Education in Science and Technology, 46(4), 508–520. https://doi.org/10.1080/0020739X.2014.990529

Kwok, R. C. W., & Ma, J. (1999). Use of a group support system for collaborative assessment. Computers & Education, 32(2), 109–125.

Lee, A. Y., & Hutchison, L. (1998). Improving learning from examples through reflection. Journal of Experimental Psychology: Applied, 4(3), 187–210. https://doi.org/10.1037/1076-898X.4.3.187

Liu, E. Z.-F., Lin, S. S. J., Chiu, C.-H., & Yuan, S.-M. (2001). Web-based peer review: The learner as both adapter and reviewer. IEEE Transactions on Education, 44(3), 246–251. https://doi.org/10.1109/13.940995

Liu, N.-F., & Carless, D. (2006). Peer feedback: The learning element of peer assessment. Teaching in Higher Education, 11(3), 279–290. https://doi.org/10.1080/13562510600680582

Loch, B., Jordan, C. R., Lowe, T. W., & Mestel, B. D. (2014). Do screencasts help to revise prerequisite mathematics? An investigation of student performance and perception. International Journal of Mathematical Education in Science and Technology, 45(2), 256–268. https://doi.org/10.1080/0020739X.2013.822581

Love, B., Hodge, A., Corritore, C., & Ernst, D. C. (2015). Inquiry-based learning and the flipped classroom model. PRIMUS: Problems, Resources, and Issues in Mathematics Undergraduate Studies, 25(8), 745–762. https://doi.org/10.1080/10511970.2015.1046005

Mowl, G., & Pain, R. (1995). Using self and peer assessment to improve students’ essay writing: A case study from geography. Innovations in Education and Training International, 32(4), 324–335. https://doi.org/10.1080/1355800950320404

Nastasi, B. K., & Clements, D. H. (1992). Social-cognitive behaviors and higher-order thinking in educational computer environments. Learning and Instruction, 2(3), 215–238. https://doi.org/10.1016/0959-4752(92)90010-J

Nicol, D. J., & Macfarlane-Dick, D. (2006). Formative assessment and self-regulated learning: A model and seven principles of good feedback practice. Studies in Higher Education, 31(2), 199–218.

Patchan, M. M., Schunn, C. D., & Clark, R. J. (2018). Accountability in peer assessment: Examining the effects of reviewing grades on peer ratings and peer feedback. Studies in Higher Education, 43(12), 2263–2278. https://doi.org/10.1080/03075079.2017.1320374

Peter, E. E. (2012). Critical thinking: Essence for teaching mathematics and mathematics problem solving skills. African Journal of Mathematics and Computer Science Research, 5(3), 39–43. https://doi.org/10.5897/AJMCSR11.161

Reinholz, D. (2016). The assessment cycle: A model for learning through peer assessment. Assessment & Evaluation in Higher Education, 41(2), 301–315. https://doi.org/10.1080/02602938.2015.1008982

Scott, F. J. (2017). A simulated peer-assessment approach to improve students’ performance in numerical problem-solving questions in high school biology. Journal of Biological Education, 51(2), 107–122. https://doi.org/10.1080/00219266.2016.1177571

Sheatsley, P. B. (1983). Questionnaire construction and item writing. In P. H. Rossi, J. D. Wright, & A. B. Anderson (Eds.), Handbook of survey research (pp. 195–230). New York, NY: Academic Press.

Silver, H. F. (2010). Compare & contrast: Teaching comparative thinking to strengthen student learning (A strategic teacher PLC guide). Alexandria, VA: Association for Supervision & Curriculum Development.

Stefani, L. A. J. (1994). Peer, self and tutor assessment: Relative reliabilities. Studies in Higher Education, 19(1), 69–75. https://doi.org/10.1080/03075079412331382153

Strang, K. D. (2013). Determining the consistency of student grading in a Hybrid Business course using a LMS and statistical software. International Journal of Web-Based Learning and Teaching Technologies (IJWLTT), 8(2), 58–76. https://doi.org/10.4018/jwltt.2013040103

Sun, D. L., Harris, N., Walther, G., & Baiocchi, M. (2015). Peer assessment enhances student learning: The results of a matched randomized crossover experiment in a college statistics class. PLoS ONE, 10(12), e0143177. https://doi.org/10.1371/journal.pone.0143177

Tanner, H., & Jones, S. (1994). Using peer and self-assessment to develop modelling skills with students aged 11 to 16: A socio-constructive view. Educational Studies in Mathematics, 27(4), 413–431. https://doi.org/10.1007/BF01273381

Topping, K. (1998). Peer assessment between students in colleges and universities. Review of Educational Research, 68(3), 249–276. https://doi.org/10.3102/00346543068003249

Triola, M. F. (2012). Elementary statistics technology update (11th ed.). Boston, MA: Addison-Wesley.

van Woerkom, M. (2010). Critical reflection as a rationalistic ideal. Adult Education Quarterly, 60(4), 339–356. https://doi.org/10.1177/0741713609358446

Wain, A. (2017). Learning through reflection. British Journal of Midwifery, 25(10), 662–666. https://doi.org/10.12968/bjom.2017.25.10.662

Wen, M. L., & Tsai, C.-C. (2006). University students’ perceptions of and attitudes toward (online) peer assessment. Higher Education, 51(1), 27–44. https://doi.org/10.1007/s10734-004-6375-8

Willey, K., & Gardner, A. (2010). Investigating the capacity of self and peer assessment activities to engage students and promote learning. European Journal of Engineering Education, 35(4), 429–443. https://doi.org/10.1080/03043797.2010.490577

Yang, Y.-F., & Tsai, C.-C. (2010). Conceptions of and approaches to learning through online peer assessment. Learning and Instruction, 20(1), 72–83.
