A Time-Frequency Perspective on Audio Watermarking

Existing audio watermarking methods usually treat the host audio signals as a function of time or frequency individually, while considering them in the joint time-frequency (TF) domain has received less attention. This paper proposes an audio waterma…

Authors: Haijian Zhang

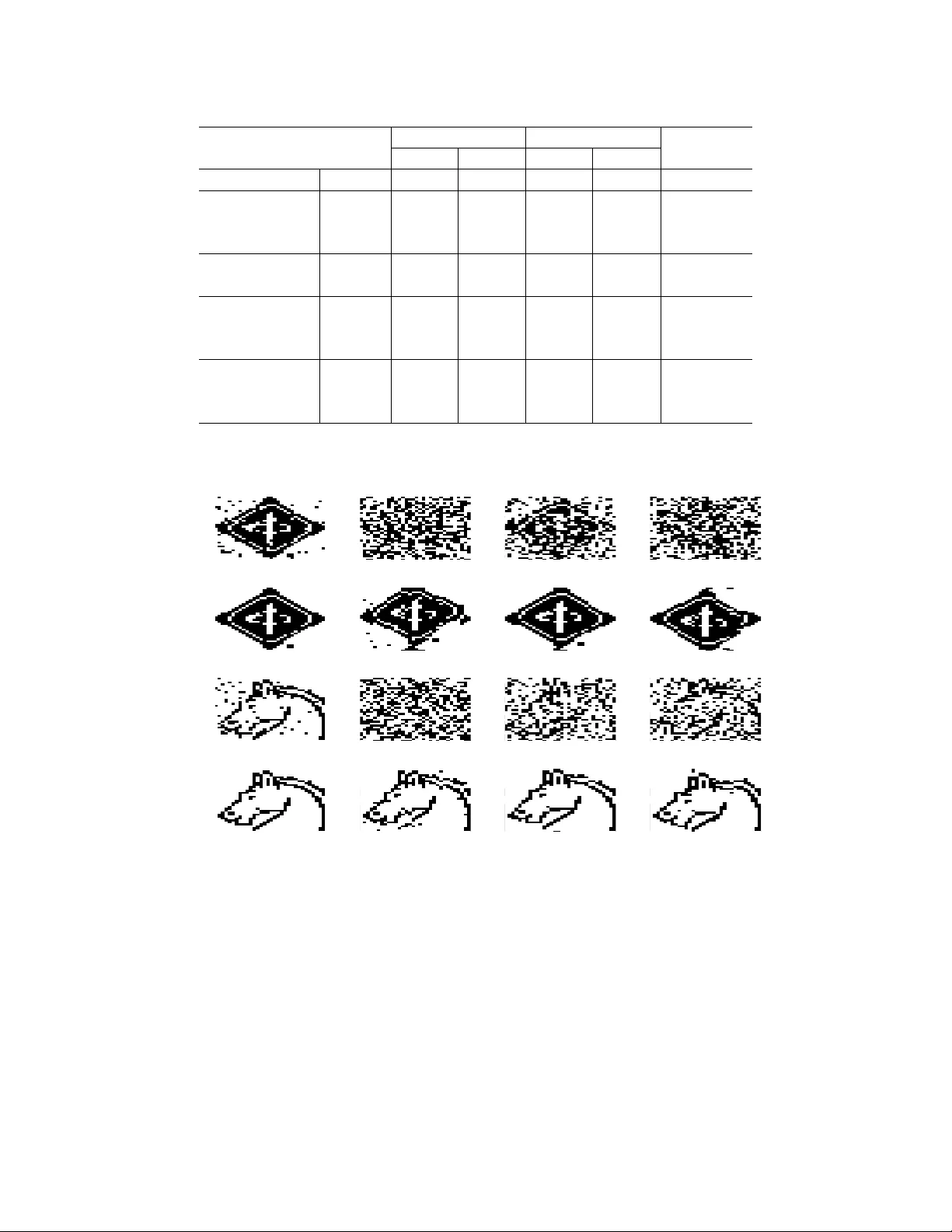

TIME-FREQUENCY ANALYSIS AND ITS APPLICATIONS

Abstract: Existing audio watermarking methods usually treat the host audio signals as a function of time or frequency individually, while considering them in the joint time-frequency (TF) domain has received less attention. This paper proposes an audio watermarking framework from the perspective of TF analysis. The proposed framework treats the host audio signal in the 2-dimensional (2D) TF plane, and selects a series of patches within the 2D TF image. These patches correspond to the TF clusters with minimum averaged energy, and are used to form the feature vectors for watermark embedding. Classical spread spectrum embedding schemes are incorporated in the framework. The feature patches that carry the watermarks only occupy a few TF regions of the host audio signal, thus leading to improved imperceptibility. In addition, since each feature patch contains a neighborhood area of the TF representation of audio samples, the correlations among the samples within a single patch could be exploited for improved robustness against a series of processing attacks. Extensive experiments are carried out to illustrate the effectiveness of the proposed system, as compared to its counterpart systems. The aim of this work is to shed some light on the notion of audio watermarking in the TF feature domain, which may potentially lead us to more robust watermarking solutions against malicious attacks.

Index Terms: Audio watermarking, Feature domain watermarking, Time-frequency domain watermarking, Short-time Fourier transform, Imperceptibility, Robustness.

I. INTRODUCTION

With the rapid development of modern communication and multimedia technologies, the dissemination and processing of digital multimedia products are becoming more and more popular, which inevitably gives rise to a variety of piracy and infringement issues.
Watermarking techniques have received significant research attention as a means to efficiently protect the copyright of digital multimedia products [1]. While watermarks can be embedded into media formats including but not limited to document, image, audio, and video, in this paper, we focus on watermarking for audio signals, which are functions of time.

Recently, the authors in [2] reviewed the research, development, and commercialization achievements of digital audio watermarking technology over the past twenty years. Generally, the existing audio watermarking techniques can be classified according to the domains in which the watermarks are embedded. More specifically, time domain methods either modify the raw audio samples frame by frame [3]–[5], or change the histogram of host audio signals [6]. On the other hand, transform domain methods, which have received much more research attention, can be classified into spread spectrum (SS) [7]–[10], patchwork [11], quantization index modulation (QIM) [12], and a special case based on over-complete transform dictionaries [13], [14]. It can be seen from the literature that although audio watermarking solutions have been extensively studied in the time domain or in a transform domain individually, less effort has been devoted to the case in which the host audio signal is analyzed and represented jointly in the time-frequency (TF) domain, based on the well-established TF analysis techniques (transforms) [15]–[18].

H. Zhang is with Signal Processing Laboratory, School of Electronic Information, Wuhan University, China.

TF analysis is a generalization of Fourier analysis for the case when the signal frequency characteristics are time-varying.
Since many practical signals of interest, such as speech and music, have varying frequency characteristics, TF analysis has a broad scope of applications. One of the most basic forms of TF analysis is the short-time Fourier transform (STFT) [19], [20], while more sophisticated techniques have also been developed [21]–[23]. In [24], [25], the authors proposed two efficient approaches to speech watermarking based on the STFT and the S-method [26].

In this paper, we discuss audio watermarking from the perspective of time-frequency analysis, and propose an audio watermarking framework based on the fact that audio signals are a function of time. Specifically, we propose to embed the watermark signal into a set of low-energy points in the TF representation, which correspond to noise-only or silent segments in audio signals. The selected points form non-overlapping 2-dimensional (2D) feature frames, each of which is composed of TF domain samples across multiple time and frequency bins. To achieve this purpose, a method to automatically determine these low-energy TF positions is introduced, and the energy invariance before and after watermark embedding is exploited. The proposed scheme only modifies a few frames within the feature space, while other frames are kept intact. The imperceptibility of the watermarking system can hence be improved, in that the parts of the host audio signal containing strong audio content are less modified. Therefore, while the robustness against host signal interference is ensured via the use of the improved spread spectrum (ISS) method [9], the inability of the ISS method to control imperceptibility is remedied by the proposed localized watermark embedding. Furthermore, the proposed system enjoys improved robustness against a series of signal processing attacks including adding noise, amplitude scaling, and lossy compression, thanks to the appropriately designed 2D embedding frames.
In general, the proposed framework could be considered as a design for feature domain audio watermarking, in which the features correspond to the appropriately selected embedding locations. We concretize the above framework via the realizations of the basic STFT and a similarly formed short-time cosine transform (STCT), with both SS and ISS embedding and extraction mechanisms. Conventional SS and ISS schemes with a uniform embedding rule across different frequency bands are also implemented for comparison. Extensive experiments are carried out to evaluate the proposed framework and demonstrate its performance advantages.

Fig. 1. Commonly used and the proposed watermark embedding schemes: (a) Transform after framing. (b) Transform before framing. (c) Proposed scheme.

II. AUDIO WATERMARKING IN TF FEATURE DOMAIN

A. General Frameworks

We use the following notations in this paper. The host audio signal is denoted by vector $\mathbf{x} \in \mathbb{R}^{N \times 1}$, and its $i$th frame after time domain non-overlapping framing is $\mathbf{x}_i \in \mathbb{R}^{M_0 \times 1}$, where $N$ is the number of samples of the host signal, $M_0$ is the number of samples per frame, and $i \in \{0, 1, \ldots, \lceil N/M_0 \rceil - 1\}$, where $\lceil \cdot \rceil$ is the ceiling function. Similarly, the host audio signal in transform domain is denoted by $\mathbf{y}$ with the same length as $\mathbf{x}$, and the $i$th frame of $\mathbf{y}$ after transform domain non-overlapping framing is $\mathbf{y}_i$. We use subscript $\{\cdot\}_w$ to denote the watermarked version of a signal, thus the representations of the watermarked signal in time and transform domain, and in terms of frame and ensemble, are denoted by $\mathbf{x}_{w,i}$, $\mathbf{x}_w$, $\mathbf{y}_{w,i}$, and $\mathbf{y}_w$, respectively.
Since this paper mainly utilizes SS based watermark embedding and extraction mechanisms, the corresponding spreading sequence is a pseudo-random noise (PN) sequence $\mathbf{p} \in \{+1, -1\}^{L \times 1}$. In conventional full spectrum SS settings, we have $L = M_0$ so that the spreading sequence could be additively embedded into host signal frames.

Audio watermark embedding in transform domain could be carried out under two basic schemes, i.e., transform after and before framing, which are shown in Fig. 1 (a) and (b). Transform after framing is the most widely adopted processing flow in the existing literature, which is summarized in [2]. On the other hand, the host audio signal has also been considered as a single frame to calculate its corresponding transform domain representation, directly obtaining $\mathbf{y}$ from $\mathbf{x}$. Good examples of such works can be seen in [10], [11], [27]. In this paper, we propose an alternative watermark embedding framework as depicted in Fig. 1 (c), which is similar to the transform before framing case. Specifically, instead of calculating the transform $\mathbf{y}$, we first obtain the TF representation of the host signal $\mathbf{x}$, denoted by matrix $\mathbf{Y} \in \mathbb{C}^{M_0 \times \lceil N/M_0 \rceil}$, composed of both time and frequency bins. In this way, the proposed framework differs from most existing ones by considering watermark embedding based on a 2D TF image. Furthermore, the TF domain feature, denoted by $\mathbf{f}_i \in \mathbb{C}^{W^2 \times 1}$ (where $W$ is a window dimension), is selected as the patches with low energy values, which correspond to noise-only or silent locations. One of the advantages of using the proposed framework over the similar framework in Fig. 1 (b) is that the modification of the host signal in one area will not affect the host signal in other areas, while for a system in Fig. 1 (b), any modification in transform domain samples will cause changes of all samples in time domain. In addition, since each of the selected feature vectors contains multiple time and frequency bins, the correlation of the host signal across both different time intervals and different frequency ranges is considered for watermark embedding, which could lead to improved robustness against a series of processing operations and attacks. Details of the proposed framework are provided in the next subsection.

Fig. 2. Heuristic tuning mechanism to control imperceptibility based on ODG. The dashed line is validated only if the decision is "Yes" (stopping criterion).

B. Watermark Embedding and Extraction Schemes

In this subsection, STFT is used as an example for TF analysis. To ensure well controlled imperceptibility, heuristic tuning is incorporated in the embedding scheme: the objective difference grade (ODG) [28] is utilized to quantify the current imperceptibility condition, and the watermark embedding strength parameter $\alpha$ is adjusted according to the feedback of the ODG value. The ODG value is a real non-positive number in the interval $[-4, 0]$, with $0$ corresponding to imperceptible and $-4$ corresponding to very annoying. The heuristic tuning mechanism is depicted in Fig. 2. The proposed watermark embedding scheme is detailed as follows.

1) Partition $\mathbf{x}$ into non-overlapping frames $\mathbf{x}_i$ with $M_0$ samples¹. Perform the Hilbert transform on $\mathbf{x}_i$ to remove the symmetry of the frequency spectrum within the $2\pi$ range [29]. For simplicity of notation, we still use $\mathbf{x}_i$ to denote the Hilbert transform output,

$$\mathbf{x}_i \in \mathbb{C}^{M_0 \times 1} \leftarrow \mathrm{Hilbert}(\mathbf{x}_i), \quad i = 0, 1, \cdots, \lceil N/M_0 \rceil - 1, \tag{1}$$

but note that here $\mathbf{x}_i$ becomes a complex quantity.

2) Compute the non-symmetric STFT of the host audio signal $\mathbf{x}$, and obtain the TF representation $\mathbf{Y}$. Specifically, perform the fast Fourier transform (FFT) for each frame,

$$\mathbf{y}_i = \mathbf{H}\mathbf{x}_i, \tag{2}$$

where $\mathbf{H}$ is the orthonormal FFT matrix. Then we have

$$\mathbf{Y} = [\mathbf{y}_0, \mathbf{y}_1, \ldots, \mathbf{y}_{\lceil N/M_0 \rceil - 1}]. \tag{3}$$

Further, select low to middle frequency bins bounded by $f_1$ and $f_2$, e.g., $f_1 = 60$ Hz and $f_2 = 2800$ Hz, as the feasible watermark embedding region. The exact dimension, $M$, resulting from this process depends on $f_1$, $f_2$, the sampling frequency, and the length of the FFT. We simply denote the refined 2D TF image as $\tilde{\mathbf{Y}} \in \mathbb{C}^{M \times \lceil N/M_0 \rceil}$. Therefore, vertically, the $M$ samples correspond to a frequency region within $[f_1, f_2]$.

3) Partition $\tilde{\mathbf{Y}}$ into square patches using a $W \times W$ window, and index the patches in raster scanning order. Usually, we have

$$W < M < M_0 \ll N. \tag{4}$$

For convenience, we further assume $W$ is chosen such that both $M$ and $\lceil N/M_0 \rceil$ are divisible by $W$, hence there is no residual after partition, and $M \lceil N/M_0 \rceil / W^2$ patches are obtained in total.

4) Calculate the average energy of each patch. Denote each patch as $\mathbf{P}_j$, $j \in \{0, 1, \ldots, M \lceil N/M_0 \rceil / W^2 - 1\}$; then the average energy is given by

$$E_j = \frac{1}{W^2} \sum_{m_1=0}^{W-1} \sum_{m_2=0}^{W-1} |\mathbf{P}_j(m_1, m_2)|^2. \tag{5}$$

5) Sort $E_j$ in ascending order. According to the binary payload vector $\mathbf{w} \in \{+1, -1\}^{P \times 1}$, where usually

$$P < M \lceil N/M_0 \rceil / W^2, \tag{6}$$

select the first $P$ patches with minimum average energies as features for watermark embedding. Vectorize the selected patches into feature vectors $\mathbf{f}_i \in \mathbb{C}^{W^2 \times 1}$, $i \in \{0, 1, \ldots, P-1\}$. Next, the embedding order is from top to bottom and column-wise, i.e., the feature patches in the first column are first embedded from high frequency bands to low frequency bands, followed by patches in the second column, and so on.

6) For each feature vector ordered as above, generate the PN sequence $\mathbf{p} \in \{+1, -1\}^{W^2 \times 1}$ as the spreading code, and perform SS or ISS watermark embedding additively, i.e.,

$$\mathbf{f}_{w,i} = \mathbf{f}_i + (\alpha w(i) - I\Phi)\,\mathbf{p}, \tag{7}$$

where

$$\Phi \triangleq \frac{\mathbf{f}_i^T \mathbf{p}}{\|\mathbf{p}\|_2^2}, \tag{8}$$

$\{\cdot\}^T$ is the transpose operator, and $0 < \alpha < 1$ controls the watermark embedding strength. For simplicity, parameter $I$ is a binary indicator, i.e., if $I = 0$, then the scheme is based on SS, while if $I = 1$, then the scheme is based on ISS.

7) After embedding the payload $\mathbf{w}$, $\mathbf{Y}_w$ is obtained from $\mathbf{Y}$ by simply replacing its subset $\tilde{\mathbf{Y}}$ with $\tilde{\mathbf{Y}}_w$. Then, perform the inverse STFT according to the same framing rule as used in Step 1, reorder the output to vector form, and discard the imaginary part to obtain $\mathbf{x}_w$.

8) Calculate the ODG value according to $\mathbf{x}$ and $\mathbf{x}_w$. Adjust parameter $\alpha$ according to a desired ODG level, i.e., if the ODG value is greater than the desired value (more imperceptible), then $\alpha$ could be slightly increased as long as the resultant ODG is within a tolerable distance from the desired value; if the ODG value is smaller than the desired value (less imperceptible), then $\alpha$ should be reduced accordingly. This process is shown in Fig. 2.

¹ Here, the frames cannot overlap because otherwise the watermarked patches would affect multiple overlapped frames and the inverse transform would become unstable. This is slightly different from the TF analysis literature. However, such a treatment will not cause performance degradation since we are not interested in the resolution or accuracy of TF analysis in the context of watermarking.

Fig. 3. Demonstration of the proposed STFT-based watermark embedding scheme. (a) STFT of host audio signal. (b) Partition of the 2D TF image. (c) Energy image of the patches of the 2D TF image. (d) Selected feature patches (red, payload size $P = 32$). Axes: time (s) versus frequency (Hz).
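As a rough illustration of Steps 3) to 6), the following pure-Python sketch ranks the W × W patches of a toy TF matrix by average energy as in (5), keeps the P lowest-energy ones, and embeds one bit per patch using (7) and (8). It is a minimal sketch under stated assumptions rather than the implementation used in the experiments: the Hilbert transform, FFT, band selection, and ODG tuning are omitted, the TF matrix is random toy data, and the function names (`patch_energies`, `select_patches`, `vectorize`, `project`, `embed_feature`), the column-major vectorization, and the column-then-row patch ordering are illustrative choices that the text does not specify.

```python
import random

def patch_energies(Y, W):
    """Average energy of each W x W patch of Y, as in (5), in raster order."""
    out = []
    for r0 in range(0, len(Y), W):
        for c0 in range(0, len(Y[0]), W):
            total = sum(abs(Y[r][c]) ** 2
                        for r in range(r0, r0 + W)
                        for c in range(c0, c0 + W))
            out.append(((r0, c0), total / W ** 2))
    return out

def select_patches(Y, W, P):
    """Top-left corners of the P lowest-energy patches, ordered column by column."""
    ranked = sorted(patch_energies(Y, W), key=lambda item: item[1])
    corners = [corner for corner, _ in ranked[:P]]
    return sorted(corners, key=lambda rc: (rc[1], rc[0]))  # assumed ordering

def vectorize(Y, corner, W):
    """Stack one patch into a length-W^2 feature vector (column-major, assumed)."""
    r0, c0 = corner
    return [Y[r][c] for c in range(c0, c0 + W) for r in range(r0, r0 + W)]

def project(f, p):
    """Phi = f^T p / ||p||_2^2, as in (8)."""
    return sum(fk * pk for fk, pk in zip(f, p)) / sum(pk * pk for pk in p)

def embed_feature(f, p, bit, alpha, iss):
    """f_w = f + (alpha * w(i) - I * Phi) p, as in (7); iss=True means I = 1."""
    gain = alpha * bit - (project(f, p) if iss else 0)
    return [fk + gain * pk for fk, pk in zip(f, p)]

rng = random.Random(0)
W, P, alpha = 4, 2, 0.5

# Toy 8 x 12 complex "TF matrix" (hypothetical data, not an actual STFT).
Y = [[complex(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(12)]
     for _ in range(8)]
# Attenuate two patches so that they play the role of silent regions.
for r0, c0 in [(4, 0), (0, 8)]:
    for r in range(r0, r0 + W):
        for c in range(c0, c0 + W):
            Y[r][c] *= 0.01

corners = select_patches(Y, W, P)
p = [rng.choice([1, -1]) for _ in range(W * W)]  # PN spreading sequence
bits = [1, -1]                                   # toy payload w
watermarked = [embed_feature(vectorize(Y, c, W), p, b, alpha, iss=True)
               for c, b in zip(corners, bits)]
projections = [project(fw, p).real for fw in watermarked]
print(corners, projections)
```

With `iss=True` the projection of each watermarked feature onto the spreading sequence reduces to α w(i), because the host term Φ is subtracted before embedding; with `iss=False` the projection retains Φ.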
The watermark embedding process is visualized in Fig. 3. At the receiving end, assuming an error-free channel, the extraction of the payload in terms of the detection of each embedded information bit is carried out as follows.

1) Partition $\mathbf{x}_w$ into non-overlapping frames $\mathbf{x}_{w,i}$ with $M_0$ samples, and perform the Hilbert transform similar to (1).

2) Compute the non-symmetric STFT of $\mathbf{x}_w$, and obtain the TF representation $\mathbf{Y}_w$. Specifically, perform the FFT for each frame,

$$\mathbf{y}_{w,i} = \mathbf{H}\mathbf{x}_{w,i}, \tag{9}$$

then we have

$$\mathbf{Y}_w = [\mathbf{y}_{w,0}, \mathbf{y}_{w,1}, \ldots, \mathbf{y}_{w,\lceil N/M_0 \rceil - 1}]. \tag{10}$$

Further, according to $f_1$ and $f_2$, construct the sub-matrix $\tilde{\mathbf{Y}}_w \in \mathbb{C}^{M \times \lceil N/M_0 \rceil}$.

3) Partition $\tilde{\mathbf{Y}}_w$ into square patches using a $W \times W$ window, and index the patches in raster scanning order.

4) Calculate the average energy of each patch. Denote each patch as $\mathbf{P}_{w,j}$, $j \in \{0, 1, \ldots, M \lceil N/M_0 \rceil / W^2 - 1\}$; then the average energy is given by

$$E_{w,j} = \frac{1}{W^2} \sum_{m_1=0}^{W-1} \sum_{m_2=0}^{W-1} |\mathbf{P}_{w,j}(m_1, m_2)|^2. \tag{11}$$

5) Sort $E_{w,j}$ in ascending order, and find the $P$ patches with the least energy values. Vectorize these patches to form $\mathbf{f}_{w,i}$, which are ordered column-wise and from top to bottom. The embedded information bit is estimated by the following function

$$\hat{w}(i) = \mathrm{sgn}\!\left(\frac{\langle \Re(\mathbf{f}_{w,i}), \mathbf{p} \rangle}{\|\mathbf{p}\|_2^2}\right) = \mathrm{sgn}\!\left(\frac{\Re(\mathbf{f}_i)^T \mathbf{p} + (\alpha w(i) - I\,\Re(\Phi))\,\|\mathbf{p}\|_2^2}{\|\mathbf{p}\|_2^2}\right) = \mathrm{sgn}\big((1 - I)\,\Re(\Phi) + \alpha w(i)\big), \tag{12}$$

where $\Re\{\cdot\}$ denotes the real part.

It can be seen from (12) that the SS scheme ($I = 0$) suffers from the host signal interference $\Phi$, while the ISS scheme ($I = 1$) is able to remove the interference term in a closed-loop environment. Note that the above embedding and extraction schemes could be modified to other schemes by simply replacing the STFT with the STCT or other transforms.

C. Feature Invariance

In this subsection, we address an important issue to validate the proposed system, i.e., feature invariance before and after watermark embedding. It can be seen from the embedding function (7) that the energy of a watermarked feature vector will be altered. For blind extraction to work, the $P$ selected patches must therefore still be the $P$ patches with the least energy levels in $\mathbf{Y}_w$ after the whole embedding process. To study the feature recovery property of the proposed system under different TF transforms, the recovery results using a sample audio clip in a closed-loop environment are depicted in Fig. 4, where the audio clip has a duration of 10 seconds, and $P = 32$. It can be seen that the STFT based method is more suitable for the proposed framework. The reason is that the DCT tends to compact the signal's energy into a smaller frequency band and also de-correlates the signal in frequency domain, making the small energy regions more ambiguous in terms of energy difference. Therefore, in the sequel, we will only consider the STFT as the TF analysis tool in the following experiments. Extensive experimental results will be provided later to demonstrate how additive noise and other processing attacks affect the effectiveness of the proposed framework.

Fig. 4. Detection results of watermark positions via (a) STFT and (b) STCT. In each panel: top, the transform (STFT or STCT); middle, feature patches; bottom, recovered patches. Axes: time (s) versus frequency (Hz).

It is worth noting that there actually exists another solution for the requirement of feature invariance, which could be obtained using an indexing array pointing to $P$ patches randomly to identify which patches are watermarked. This array could be considered as a private key shared among the authority and trusted parties. Note that the system proposed in the previous subsection is strictly a blind watermarking scheme whose watermark extraction does not require any auxiliary information, but if the random indexing array is introduced, then this array, serving as a key, should be transmitted to authorized receivers via some secure channels. The study of random index key based TF feature domain watermarking is noted here for future research attention.

III. EVALUATIONS AND EXPERIMENTAL RESULTS

In this section, we carry out extensive experiments to evaluate the proposed framework in terms of imperceptibility and robustness. The imperceptibility is measured quantitatively by the document-to-watermark ratio (DWR) and the ODG. For comparison, the counterpart system based on the DCT and the scheme in Fig. 1 (b) is also implemented. Therefore, the experiments and comparisons will be conducted on four systems, i.e., STFT-SS, STFT-ISS, DCT-SS, and DCT-ISS.

Fig. 5. Watermark detection rates averaged by running 3 audio samples, with curves for DCT-ISS, DCT-SS, STFT-ISS, and STFT-SS: (a) Detection Rate versus DWR. (b) Detection Rate versus ODG.

Some measurement metrics are defined as follows. First, the DWR is given by

$$\mathrm{DWR} = 10 \log_{10} \frac{\|\mathbf{x}\|_2^2}{\|\mathbf{x}_w - \mathbf{x}\|_2^2}. \tag{13}$$

The signal-to-noise ratio (SNR) is defined by

$$\mathrm{SNR} = 10 \log_{10} \frac{\|\mathbf{x}_w\|_2^2}{\sigma^2}, \tag{14}$$

where $\sigma^2$ is the variance of the additive white Gaussian noise (AWGN).
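The two ratios in (13) and (14) translate directly into code. The sketch below evaluates them on plain Python lists; the helper names `energy`, `dwr_db`, and `snr_db` and the toy numbers are illustrative assumptions, and (14) is taken literally, with the total energy of the watermarked signal in the numerator and the noise variance in the denominator.

```python
import math

def energy(v):
    """Squared l2 norm of a real-valued signal."""
    return sum(s * s for s in v)

def dwr_db(x, x_w):
    """DWR in dB as in (13): host energy over embedding-distortion energy."""
    distortion = [a - b for a, b in zip(x_w, x)]
    return 10.0 * math.log10(energy(x) / energy(distortion))

def snr_db(x_w, noise_var):
    """SNR in dB as written in (14): ||x_w||^2 over the AWGN variance."""
    return 10.0 * math.log10(energy(x_w) / noise_var)

# Toy host and watermarked signals (hypothetical values).
x = [3.0, 4.0]      # ||x||^2 = 25
x_w = [3.0, 4.5]    # distortion energy = 0.25, so the ratio is 100
print(dwr_db(x, x_w))       # about 20 dB
print(snr_db(x_w, 0.2925))  # ||x_w||^2 = 29.25, so about 20 dB
```

A larger DWR means that the embedding distortion is weaker relative to the host signal.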
To characterize watermark extraction performance, the detection rate (DR) is defined by

$$\mathrm{DR} = \left(1 - \frac{1}{2P} \sum_{i=0}^{P-1} |w(i) - \hat{w}(i)|\right) \times 100\%, \tag{15}$$

so that $\mathrm{DR} = 100\%$ when every bit is recovered correctly (each wrong bit contributes $|w(i) - \hat{w}(i)| = 2$ to the sum).

Parameters are set as follows: $M_0 = 1024$, $f_1 = 60$ Hz, $f_2 = 2800$ Hz, $W = 16$, $P = 32$ or $32^2$, $L = W^2$, and $\alpha$ is heuristically controlled so that the ODG values are no less than $-1$. During comparison, ODG values are tuned to be similar for fair comparison. In our simulation, four music samples are selected, including a male song (240 s), a female song (10 s), a violin and piano duet (10 s), and electronic music (10 s). All the sample audio files have 16-bit quantization and a sampling frequency of 44.1 kHz.

A. Imperceptibility

The imperceptibility of the implemented systems is demonstrated in Fig. 5, where three of the four audio samples with 10 s duration are used to generate the performance curves, and AWGN with SNR = 30 dB is considered. It can be observed from both sub-figures that the proposed schemes consistently yield better imperceptibility when the DR values are the same. In terms of ODG, the proposed systems could obtain ODG values between $-0.5$ and $0$ with DRs above 90%. Further, the DWR and ODG values of the four audio watermarking systems applied on four audio samples are summarized in Tables I and II.

TABLE I. IMPERCEPTIBILITY OF DCT-SS AND DCT-ISS METHODS

| Data | DCT-SS DWR (dB) | DCT-SS ODG | DCT-ISS DWR (dB) | DCT-ISS ODG |
|---|---|---|---|---|
| Sample 1 | 33.9 | -0.76 | 33.7 | -0.70 |
| Sample 2 | 34.7 | -0.13 | 32.4 | -0.02 |
| Sample 3 | 40.4 | -0.40 | 32.8 | -0.90 |
| Sample 4 | 33.5 | -0.95 | 32.3 | -0.44 |

TABLE II. IMPERCEPTIBILITY OF STFT-SS AND STFT-ISS METHODS

| Data | STFT-SS DWR (dB) | STFT-SS ODG | STFT-ISS DWR (dB) | STFT-ISS ODG |
|---|---|---|---|---|
| Sample 1 | 39.9 | -0.61 | 39.6 | -0.44 |
| Sample 2 | 40.7 | -0.03 | 42.6 | -0.02 |
| Sample 3 | 46.4 | -0.43 | 42.1 | -0.40 |
| Sample 4 | 39.5 | -0.55 | 39.9 | -0.34 |
It can be seen that the performance improvement on imperceptibility is consistent across different samples, and the inability of ISS based methods to control imperceptibility is resolved, thanks to localized embedding in selected features. The robustness testing results will be provided in the next subsection, also based on the four audio samples, and the corresponding imperceptibility information is as shown in the two tables. We will demonstrate that while the proposed systems could achieve improved imperceptibility, the robustness against several common processing attacks can also be improved.

Fig. 6. 32 × 32-bit watermark logos used with DCT-ISS and STFT-ISS. Panels: original watermark (IEEE logo), extracted watermark (no attack), original watermark (Springer logo), extracted watermark (no attack).

B. Robustness to a Series of Attacks

The attacks considered in this paper include re-quantization, adding Gaussian noise, amplitude scaling, AAC lossy compression, and MP3 lossy compression, each with several different attack strength settings. Here, we use two sets of watermarks and two sets of sample audio clips. In the first setting, a random binary sequence of 32 bits is used as the watermark, which is embedded into four audio samples of 10 seconds. The embedding DWR and ODG values in this setting are given in Tables I and II. In the second setting, two graphic logos of 32 × 32 bits, as shown in Fig. 6, are used for watermark embedding, and the 4-minute audio file is used as the host signal. The ODG values for the second setting are given at the bottom of Table VII. The implemented methods for the second setting are DCT-ISS and STFT-ISS only, for simplicity.

TABLE III. DRs (%) OF DCT-SS METHOD UNDER DIFFERENT ATTACKS (FIRST SETTING)

| Attack Type | Setting | Sample 1 | Sample 2 | Sample 3 | Sample 4 | Average |
|---|---|---|---|---|---|---|
| Re-Quantization | 8 Bit | 84.4 | 96.8 | 78.1 | 93.8 | 88.2 |
| Gaussian Noise | 30 dB | 84.4 | 96.8 | 78.1 | 90.6 | 87.5 |
| Gaussian Noise | 50 dB | 84.4 | 96.8 | 78.1 | 93.8 | 88.2 |
| Amplitude Scal. | 1.2 | 84.4 | 96.8 | 78.1 | 93.8 | 88.2 |
| Amplitude Scal. | 1.8 | 84.4 | 96.8 | 78.1 | 93.8 | 88.2 |
| AAC Compression | 96 kbps | 84.4 | 96.8 | 78.1 | 93.8 | 88.2 |
| AAC Compression | 160 kbps | 84.4 | 96.8 | 78.1 | 93.8 | 88.2 |
| MP3 Compression | 64 kbps | 84.4 | 96.8 | 78.1 | 93.8 | 88.2 |
| MP3 Compression | 128 kbps | 84.4 | 96.8 | 78.1 | 93.8 | 88.2 |

TABLE IV. DRs (%) OF DCT-ISS METHOD UNDER DIFFERENT ATTACKS (FIRST SETTING)

| Attack Type | Setting | Sample 1 | Sample 2 | Sample 3 | Sample 4 | Average |
|---|---|---|---|---|---|---|
| Re-Quantization | 8 Bit | 100 | 100 | 100 | 100 | 100 |
| Gaussian Noise | 30 dB | 75.0 | 75.0 | 68.8 | 71.9 | 72.7 |
| Gaussian Noise | 50 dB | 100 | 100 | 100 | 100 | 100 |
| Amplitude Scal. | 1.2 | 100 | 100 | 100 | 100 | 100 |
| Amplitude Scal. | 1.8 | 100 | 100 | 100 | 100 | 100 |
| AAC Compression | 96 kbps | 96.8 | 96.8 | 96.8 | 96.8 | 96.8 |
| AAC Compression | 160 kbps | 100 | 100 | 100 | 100 | 100 |
| MP3 Compression | 64 kbps | 87.5 | 100 | 93.8 | 100 | 95.3 |
| MP3 Compression | 128 kbps | 100 | 100 | 96.8 | 100 | 99.2 |

TABLE V. DRs (%) OF STFT-SS METHOD UNDER DIFFERENT ATTACKS (FIRST SETTING)

| Attack Type | Setting | Sample 1 | Sample 2 | Sample 3 | Sample 4 | Average |
|---|---|---|---|---|---|---|
| Re-Quantization | 8 Bit | 96.8 | 100 | 81.3 | 96.8 | 93.8 |
| Gaussian Noise | 30 dB | 93.7 | 100 | 78.1 | 93.8 | 91.4 |
| Gaussian Noise | 50 dB | 96.8 | 100 | 81.3 | 96.8 | 93.8 |
| Amplitude Scal. | 1.2 | 96.8 | 100 | 81.3 | 96.8 | 93.8 |
| Amplitude Scal. | 1.8 | 96.8 | 100 | 81.3 | 96.8 | 93.8 |
| AAC Compression | 96 kbps | 96.8 | 100 | 78.1 | 96.8 | 92.9 |
| AAC Compression | 160 kbps | 96.8 | 100 | 81.3 | 96.8 | 93.8 |
| MP3 Compression | 64 kbps | 96.8 | 100 | 78.1 | 96.8 | 92.9 |
| MP3 Compression | 128 kbps | 96.8 | 100 | 81.3 | 96.8 | 93.8 |

TABLE VI. DRs (%) OF STFT-ISS METHOD UNDER DIFFERENT ATTACKS (FIRST SETTING)

| Attack Type | Setting | Sample 1 | Sample 2 | Sample 3 | Sample 4 | Average |
|---|---|---|---|---|---|---|
| Re-Quantization | 8 Bit | 100 | 100 | 100 | 100 | 100 |
| Gaussian Noise | 30 dB | 96.8 | 93.7 | 96.8 | 87.5 | 93.7 |
| Gaussian Noise | 50 dB | 100 | 100 | 100 | 100 | 100 |
| Amplitude Scal. | 1.2 | 100 | 100 | 100 | 100 | 100 |
| Amplitude Scal. | 1.8 | 100 | 100 | 100 | 100 | 100 |
| AAC Compression | 96 kbps | 100 | 100 | 100 | 100 | 100 |
| AAC Compression | 160 kbps | 100 | 100 | 100 | 100 | 100 |
| MP3 Compression | 64 kbps | 100 | 100 | 100 | 100 | 100 |
| MP3 Compression | 128 kbps | 100 | 100 | 100 | 100 | 100 |

TABLE VII. DRs (%) OF DCT-ISS AND STFT-ISS METHODS (SECOND SETTING)

| Attack Type | Setting | DCT-ISS IEEE | DCT-ISS Springer | STFT-ISS IEEE | STFT-ISS Springer | Average Improvement |
|---|---|---|---|---|---|---|
| Re-Quantization | 8 Bit | 94.2 | 95.1 | 100 | 100 | 5.35 |
| Gaussian Noise | 30 dB | 55.2 | 58.9 | 85.2 | 86.7 | 29.3 |
| Gaussian Noise | 40 dB | 70.1 | 69.5 | 94.3 | 95.0 | 24.9 |
| Gaussian Noise | 50 dB | 92.6 | 94.5 | 98.6 | 100 | 5.75 |
| Amplitude Scal. | 1.2 | 100 | 100 | 100 | 100 | 0 |
| Amplitude Scal. | 1.8 | 100 | 100 | 100 | 100 | 0 |
| AAC Compression | 96 kbps | 75.5 | 73.9 | 85.4 | 82.5 | 9.25 |
| AAC Compression | 128 kbps | 81.5 | 81.9 | 90.0 | 87.9 | 7.25 |
| AAC Compression | 160 kbps | 93.4 | 91.9 | 100 | 100 | 7.35 |
| MP3 Compression | 64 kbps | 64.6 | 75.1 | 85.1 | 86.3 | 15.9 |
| MP3 Compression | 128 kbps | 86.7 | 99.2 | 100 | 100 | 7.05 |
| MP3 Compression | 192 kbps | 99.5 | 100 | 100 | 100 | 0.25 |
| ODG | | -0.75 | -0.76 | -0.65 | -0.66 | |

Fig. 7. Recovered 32 × 32-bit watermark logos (second setting) under different significant attacks used with DCT-ISS and STFT-ISS. Attacks shown: re-quantization (8 bit), Gaussian noise (30 dB), AAC (96 kbps), and MP3 (64 kbps).

The DRs against several attacks with different attack strength settings for the DCT-SS, DCT-ISS, STFT-SS, and STFT-ISS methods are shown in Tables III to VI respectively. For DCT and STFT based methods respectively, we observe better robustness when ISS is used. This agrees with the classical property of the ISS technique.
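The advantage of ISS over SS noted above can be reproduced qualitatively on synthetic data. The following toy simulation is only a sketch under assumptions and does not reproduce the figures in Tables III to VI: the features are real-valued Gaussian vectors rather than TF patches of audio, the attack is additive Gaussian noise applied directly to the features, and the names `project`, `embed`, `extract`, `detection_rate`, and `run`, as well as all parameter values, are hypothetical. The detection rate is computed as the percentage of correctly recovered bits.

```python
import random

def project(f, p):
    """Normalized correlation f^T p / ||p||^2 (||p||^2 = len(p) for +/-1 codes)."""
    return sum(a * b for a, b in zip(f, p)) / len(p)

def embed(f, p, bit, alpha, iss):
    """Additive SS (iss=False) or ISS (iss=True) embedding of one bit."""
    gain = alpha * bit - (project(f, p) if iss else 0.0)
    return [a + gain * b for a, b in zip(f, p)]

def extract(f_w, p):
    """Bit estimate as the sign of the correlation with the spreading code."""
    return 1 if project(f_w, p) >= 0 else -1

def detection_rate(w, w_hat):
    """Percentage of correctly recovered bits."""
    return 100.0 * sum(a == b for a, b in zip(w, w_hat)) / len(w)

def run(iss, noise_std, n_bits=500, length=256, alpha=0.2, host_std=4.0, seed=1):
    """Embed n_bits bits into synthetic features, add noise, and extract."""
    rng = random.Random(seed)
    p = [rng.choice([1, -1]) for _ in range(length)]
    w, w_hat = [], []
    for _ in range(n_bits):
        bit = rng.choice([1, -1])
        host = [rng.gauss(0.0, host_std) for _ in range(length)]
        f_w = embed(host, p, bit, alpha, iss)
        attacked = [v + rng.gauss(0.0, noise_std) for v in f_w]
        w.append(bit)
        w_hat.append(extract(attacked, p))
    return detection_rate(w, w_hat)

dr_ss = run(iss=False, noise_std=1.0)       # host interference + noise
dr_iss = run(iss=True, noise_std=1.0)       # noise only
dr_iss_clean = run(iss=True, noise_std=0.0) # closed-loop, no attack
print(dr_ss, dr_iss, dr_iss_clean)
```

In this toy setup the SS errors are dominated by the host interference term, which ISS removes at the embedder, so the remaining ISS errors are caused by the added noise alone.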
More importantly, we can see from these tables that the proposed TF feature domain watermarking systems outperform DCT based frequency domain methods for both SS and ISS implementations. The best performance is observed in Table VI, which corresponds to the STFT-ISS method. Recall Table II, in which the DWR and ODG values of the STFT-ISS method are very close to, or even slightly better than, those obtained from its SS counterpart or DCT based methods. Therefore, it could be concluded that the proposed system is able to simultaneously achieve improved imperceptibility and robustness.

Finally, we test the two ISS based systems, i.e., DCT-ISS and STFT-ISS, in the second setting where the IEEE and Springer logos are embedded in a 4-minute audio clip. The results are shown in Table VII. The improvement of the proposed system against its frequency domain counterpart is consistent across different attacks for both logos. The recovered IEEE and Springer logos under different significant attacks using the DCT-ISS and the STFT-ISS are shown in Fig. 7. Two observations can be made: the DCT-ISS fails to reconstruct the original logos; and although the STFT-ISS does not achieve completely error-free performance, it can still recover the shape and content of the logos well.

IV. CONCLUSION

In this paper, we proposed an audio watermarking framework from the perspective of TF analysis. Different from existing schemes, the proposed framework considers the 2D TF representation of the host audio signal as the raw signal for watermark embedding. Based on partitioning the 2D TF image into small patches, and selecting patches with lower energy values as features, watermarks are embedded into the vectorized feature patches using SS and ISS mechanisms. Extensive experiments have been carried out in comparison with the counterpart systems that embed the watermark in frequency domain.
Consistent performance improvements have been shown in the experimental results, using both random sequences and image logos as watermarks. Due to the requirement of feature invariance, the proposed systems are less robust against AWGN attacks. We have noted in Section II.C that using a random indexing key instead of sorting patch energies would be an effective solution to this problem. Future research efforts will be devoted to this issue. In general, it is worth investigating more efficient TF feature domain audio watermarking methods that could potentially lead to substantially improved robustness against desynchronization attacks. This may be approached by exploring desynchronization-invariant features in the TF domain and by utilizing both SS and QIM based embedding mechanisms.

REFERENCES

[1] Cox, I., Miller, M., Bloom, J., Fridrich, J., Kalker, T.: Digital watermarking and steganography. Morgan Kaufmann (2007)
[2] Hua, G., Huang, J., Shi, Y. Q., Thing, V. L. L.: Twenty years of digital audio watermarking - a comprehensive review. Signal Process. 128, 222–242 (2016)
[3] Nishimura, R.: Audio watermarking using spatial masking and ambisonics. IEEE Trans. Audio, Speech, Lang. Process. 20, 2461–2469 (2012)
[4] Hua, G., Goh, J., Thing, V. L. L.: Time-spread echo-based audio watermarking with optimized imperceptibility and robustness. IEEE/ACM Trans. Audio, Speech, Language Process. 23, 227–239 (2015)
[5] Hua, G., Goh, J., Thing, V. L. L.: Cepstral analysis for the application of echo-based audio watermark detection. IEEE Trans. Inf. Forensics Security. 10, 1850–1861 (2015)
[6] Xiang, S., Huang, J.: Histogram-based audio watermarking against time-scale modification and cropping attacks. IEEE Trans. Multimed. 9, 1357–1372 (2007)
[7] Cox, I. J., Kilian, J., Leighton, F. T., Shamoon, T.: Secure spread spectrum watermarking for multimedia. IEEE Trans. Image Process. 6, 1673–1687 (1997)
[8] Kirovski, D., Malvar, H. S.: Spread-spectrum watermarking of audio signals. IEEE Trans. Signal Process. 51, 1020–1033 (2003)
[9] Malvar, H. S., Florencio, D.: Improved spread spectrum: A new modulation technique for robust watermarking. IEEE Trans. Signal Process. 51, 898–905 (2003)
[10] Xiang, Y., Natgunanathan, I., Rong, Y., Guo, S.: Spread spectrum-based high embedding capacity watermarking method for audio signals. IEEE/ACM Trans. Audio, Speech, Language Process. 23, 2228–2237 (2015)
[11] Xiang, Y., Natgunanathan, I., Guo, S., Zhou, W., Nahavandi, S.: Patchwork-based audio watermarking method robust to de-synchronization attacks. IEEE/ACM Trans. Audio, Speech, Language Process. 22, 1413–1423 (2014)
[12] Lei, B., Soon, I. Y., Tan, E. L.: Robust SVD-based audio watermarking scheme with differential evolution optimization. IEEE Trans. Audio, Speech, Language Process. 21, 2268–2377 (2013)
[13] Cancelli, G., Barni, M.: MPSteg-Color: Data Hiding Through Redundant Basis Decomposition. IEEE Trans. Inf. Forensics Security. 4, 346–358 (2009)
[14] Hua, G., Xiang, Y., Bi, G.: When compressive sensing meets data hiding. IEEE Signal Process. Lett. 23, 473–477 (2016)
[15] Sejdić, E., Djurović, I., Jiang, J.: Time-frequency feature representation using energy concentration: An overview of recent advances. Digital Signal Processing. 19, 153–183 (2009)
[16] Stanković, S., Krishnan, S., Mobasseri, B., Zhang, Y.: Time-Frequency Analysis and Its Applications to Multimedia Signals. EURASIP J. Adv. Signal Process. 2010, 739017 (2011)
[17] Boashash, B.: Time-Frequency Signal Analysis and Processing (Second Edition). Academic Press (2016)
[18] Flandrin, P.: Explorations in Time-Frequency Analysis. Cambridge University Press (2018)
[19] Zhang, H., Bi, G., Cai, Y., Gulam Razul, S., See, C.-M.: DOA estimation of closely-spaced and spectrally-overlapped sources using a STFT-based MUSIC algorithm. Digital Signal Processing. 52, 25–34 (2016)
[20] Zhang, H., Hua, G., Yu, L., Cai, Y., Bi, G.: Underdetermined blind separation of overlapped speech mixtures in time-frequency domain with estimated number of sources. Speech Communication. 89, 1–16 (2017)
[21] Zhang, H., Bi, G., Yang, W., Razul, S. G., See, C.-M.: IF Estimation of FM Signals based on Time-Frequency Image. IEEE Trans. Aerosp. Electron. Syst. 51, 326–343 (2015)
[22] Zhang, H., Yu, L., Xia, G.-S.: Iterative Time-Frequency Filtering of Sinusoidal Signals With Updated Frequency Estimation. IEEE Signal Process. Lett. 23, 139–143 (2016)
[23] Hua, G., Zhang, H.: ENF Signal Enhancement in Audio Recordings. IEEE Transactions on Information Forensics and Security. 15, 1868–1878 (2020)
[24] Stanković, S., Orović, I., Žarić, N.: Robust Speech Watermarking Procedure in the Time-Frequency Domain. EURASIP Journal on Advances in Signal Processing. DOI: 10.1155/2008/519206 (2008)
[25] Orović, I., Stanković, S.: Time-Frequency-Based Speech Regions Characterization and Eigenvalue Decomposition Applied to Speech Watermarking. EURASIP Journal on Advances in Signal Processing. DOI: 10.1155/2010/572748 (2010)
[26] Stanković, L.: A method for time-frequency signal analysis. IEEE Trans. Signal Process. 42, 225–229 (1994)
[27] Kang, X., Yang, R., Huang, J.: Geometric invariant audio watermarking based on an LCM feature. IEEE Trans. Multimedia. 13, 181–190 (2011)
[28] Recommendation ITU BS.1387-1: Method for objective measurements of perceived audio quality, 1998-2001.
[29] Grafakos, L.: Classical and Modern Fourier Analysis, Pearson Education, Inc., 253–257 (2004)
