Robust and interpretable blind image denoising via bias-free convolutional neural networks

Deep convolutional networks often append additive constant ("bias") terms to their convolution operations, enabling a richer repertoire of functional mappings. Biases are also used to facilitate training, by subtracting mean response over batches of …

Authors: Sreyas Mohan, Zahra Kadkhodaie, Eero P. Simoncelli

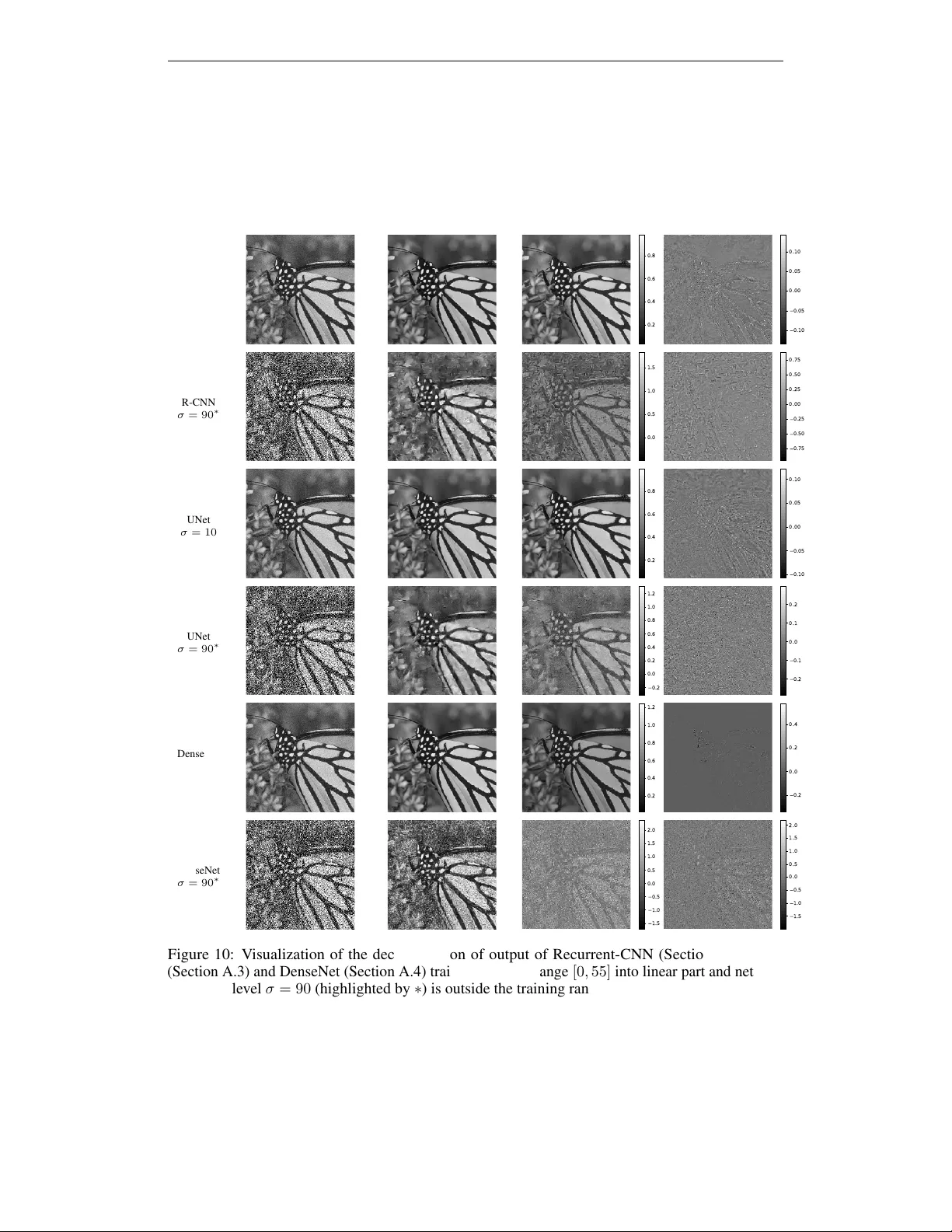

Published as a conference paper at ICLR 2020 R O B U S T A N D I N T E R P R E T A B L E B L I N D I M AG E D E N O I S I N G V I A B I A S - F R E E C O N V O L U T I O N A L N E U R A L N E T W O R K S Sreyas Mohan ∗ Center for Data Science New Y ork Univ ersity sm7582@nyu.edu Zahra Kadkhodaie ∗ Center for Data Science New Y ork Univ ersity zk388@nyu.edu Eero P . Simoncelli Center for Neural Science, and How ard Hughes Medical Institute New Y ork Univ ersity eero.simoncelli@nyu.edu Carlos Fernandez-Granda Center for Data Science, and Courant Inst. of Mathematical Sciences New Y ork Univ ersity cfgranda@cims.nyu.edu A B S T R A C T W e study the generalization properties of deep con volutional neural netw orks for image denoising in the presence of varying noise lev els. W e provide extensiv e empirical evidence that current state-of-the-art architectures systematically o verfit to the noise le vels in the training set, performing v ery poorly at new noise le vels. W e show that strong generalization can be achie ved through a simple architectural modification: removing all additi ve constants. The resulting "bias-free" networks attain state-of-the-art performance ov er a broad range of noise le vels, e ven when trained ov er a narrow range. They are also locally linear , which enables direct anal- ysis with linear-algebraic tools. W e sho w that the denoising map can be visualized locally as a filter that adapts to both image structure and noise le vel. In addi- tion, our analysis re veals that deep networks implicitly perform a projection onto an adapti vely-selected lo w-dimensional subspace, with dimensionality in versely proportional to noise lev el, that captures features of natural images. 1 I N T RO D U C T I O N A N D C O N T R I B U T I O N S The problem of denoising consists of recov ering a signal from measurements corrupted by noise, and is a canonical application of statistical estimation that has been studied since the 1950’ s. Achieving high-quality denoising results requires (at least implicitly) quantifying and exploiting the differences between signals and noise. In the case of photographic images, the denoising problem is both an important application, as well as a useful test-bed for our understanding of natural images. In the past decade, con volutional neural networks (LeCun et al., 2015) hav e achieved state-of-the-art results in image denoising (Zhang et al., 2017; Chen & Pock, 2017). Despite their success, these solutions are mysterious: we lack both intuition and formal understanding of the mechanisms they implement. Network architecture and functional units are often borro wed from the image-recognition literature, and it is unclear which of these aspects contributes to, or limits, the denoising performance. The goal of this work is adv ance our understanding of deep-learning models for denoising. Our contributions are twofold: First, we study the generalization capabilities of deep-learning models across dif ferent noise le vels. Second, we pro vide novel tools for analyzing the mechanisms implemented by neural networks to denoise natural images. An important advantage of deep-learning techniques over traditional methodology is that a single neural network can be trained to perform denoising at a wide range of noise lev els. Currently , this is achiev ed by simulating the whole range of noise lev els during training (Zhang et al., 2017). Here, we sho w that this is not necessary . Neural networks can be made to gener alize automatically acr oss noise ∗ Equal contribution. 1 Published as a conference paper at ICLR 2020 5 15 25 35 45 55 65 75 85 95 noise level (sd) 5 15 25 35 45 55 65 75 85 95 norms b i a s , b y r e s i d u a l , y x n o i s e , z (a) 5 15 25 35 45 55 65 75 85 95 noise level (sd) 5 15 25 35 45 55 65 75 85 95 norms (b) 5 15 25 35 45 55 65 75 85 95 noise level (sd) 5 15 25 35 45 55 65 75 85 95 norms (c) Figure 1: First-order analysis of the residual of a denoising con v olutional neural network as a function of noise lev el. The plots show the norms of the residual and the net bias a veraged ov er 100 20 × 20 natural-image patches for networks trained o ver dif ferent training ranges. The range of noises used for training is highlighted in blue. (a) When the network is trained over the full range of noise le vels ( σ ∈ [0 , 100] ) the net bias is small, gro wing slightly as the noise increases. (b-c) When the netw ork is trained ov er the a smaller range ( σ ∈ [0 , 55] and σ ∈ [0 , 30] ), the net bias gro ws explosi vely for noise le vels beyond the training range. This coincides with a dramatic drop in performance, reflected in the dif ference between the magnitudes of the residual and the true noise. The CNN used for this example is DnCNN (Zhang et al., 2017); using alternati ve architectures yields similar results as shown in Figure 8. levels through a simple modification in the architecture: removing all additi ve constants. W e find this holds for a variety of network architectures proposed in previous literature. W e provide extensi ve empirical evidence that the main state-of-the-art denoising architectures systematically o verfit to the noise lev els in the training set, and that this is due to the presence of a net bias. Suppressing this bias makes it possible to attain state-of-the-art performance while training o ver a v ery limited range of noise lev els. The data-driv en mechanisms implemented by deep neural networks to perform denoising are almost completely unknown . It is unclear what priors are being learned by the models, and how they are affected by the choice of architecture and training strategies. Here, we provide nov el linear-algebraic tools to visualize and interpret these strategies through a local analysis of the Jacobian of the denoising map. The analysis re veals locally adapti ve properties of the learned models, akin to existing nonlinear filtering algorithms. In addition, we show that the deep networks implicitly perform a projection onto an adaptiv ely-selected low-dimensional subspace capturing features of natural images. 2 R E L A T E D W O R K The classical solution to the denoising problem is the W iener filter (Wiener, 1950), which assumes a translation-in v ariant Gaussian signal model. The main limitation of W iener filtering is that it ov er-smoothes, eliminating fine-scale details and te xtures. Modern filtering approaches address this issue by adapting the filters to the local structure of the noisy image (e.g. T omasi & Manduchi (1998); Milanfar (2012)). Here we show that neural networks implement such strate gies implicitly , learning them directly from the data. In the 1990’ s powerful denoising techniques were de veloped based on multi-scale ("wa velet") transforms. These transforms map natural images to a domain where the y have sparser representations. This makes it possible to perform denoising by applying nonlinear thresholding operations in order to discard components that are small relativ e to the noise lev el (Donoho & Johnstone, 1995; Simoncelli & Adelson, 1996; Chang et al., 2000). From a linear-algebraic perspectiv e, these algorithms operate by projecting the noisy input onto a lo wer-dimensional subspace that contains plausible signal content. The projection eliminates the orthogonal complement of the subspace, which mostly contains noise. This general methodology laid the foundations for the state-of-the-art models in the 2000’ s (e.g. (Dabov et al., 2006)), some of which added a data-driven perspective, learning sparsifying transforms (Elad & Aharon, 2006), and nonlinear shrinkage functions (Hel-Or & Shaked, 2008; Raphan & Simoncelli, 2008), directly from natural images. Here, we show that deep-learning models learn similar priors in the form of local linear subspaces capturing image features. 2 Published as a conference paper at ICLR 2020 Noisy training image, σ = 10 (max level) Noisy test image, σ = 90 T est image, denoised by CNN T est image, denoised by BF-CNN Figure 2: Denoising of an example natural image by a CNN and its bias-free counterpart (BF-CNN), both trained over noise lev els in the range σ ∈ [0 , 10] (image intensities are in the range [0 , 255] ). The CNN performs poorly at high noise levels ( σ = 90 , far beyond the training range), whereas BF-CNN performs at state-of-the-art levels. The CNN used for this example is DnCNN (Zhang et al., 2017); using alternativ e architectures yields similar results (see Section 5). In the past decade, purely data-driv en models based on con volutional neural networks (LeCun et al., 2015) hav e come to dominate all previous methods in terms of performance. These models consist of cascades of con volutional filters, and rectifying nonlinearities, which are capable of representing a div erse and powerful set of functions. T raining such architectures to minimize mean square error ov er large databases of noisy natural-image patches achie ves current state-of-the-art results (Zhang et al., 2017; Huang et al., 2017; Ronneberger et al., 2015; Zhang et al., 2018a). 3 N E T W O R K B I A S I M PA I R S G E N E R A L I Z A T I O N W e assume a measurement model in which images are corrupted by additi ve noise: y = x + n , where x ∈ R N is the original image, containing N pixels, n is an image of i.i.d. samples of Gaussian noise with v ariance σ 2 , and y is the noisy observ ation. The denoising problem consists of finding a function f : R N → R N , that provides a good estimate of the original image, x . Commonly , one minimizes the mean squared error : f = arg min g E || x − g ( y ) || 2 , where the expectation is taken over some distribution o ver images, x , as well as over the distrib ution of noise realizations. In deep learning, the denoising function g is parameterized by the weights of the network, so the optimization is ov er these parameters. If the noise standard deviation, σ , is unknown, the e xpectation must also be taken ov er a distribution of σ . This problem is often called blind denoising in the literature. In this work, we study the generalization performance of CNNs acr oss noise levels σ , i.e. when they are tested on noise lev els not included in the training set. Feedforward neural networks with rectified linear units (ReLUs) are piecewise af fine: for a giv en activ ation pattern of the ReLUs, the effect of the network on the input is a cascade of linear trans- formations (con volutional or fully connected layers, W k ), additiv e constants ( b k ), and pointwise multiplications by a binary mask corresponding to the fixed activ ation pattern ( R ). Since each of these is af fine, the entire cascade implements a single affine transformation. For a fixed noisy input image y ∈ R N with N pixels, the function f : R N → R N computed by a denoising neural network may be written f ( y ) = W L R ( W L − 1 ...R ( W 1 y + b 1 ) + ...b L − 1 ) + b L = A y y + b y , (1) where A y ∈ R N × N is the Jacobian of f ( · ) ev aluated at input y , and b y ∈ R N represents the net bias . The subscripts on A y and b y serve as a reminder that both depend on the ReLU acti vation patterns, which in turn depend on the input vector y . Based on equation 1 we can perform a first-order decomposition of the error or r esidual of the neural network for a specific input: y − f ( y ) = ( I − A y ) y − b y . Figure 1 shows the magnitude of the residual and the constant, which is equal to the net bias b y , for a range of noise lev els. Over the training range, the net bias is small, implying that the linear term is responsible for most of the denoising (see Figures 9 and 10 for a visualization of both components). Howe ver , when the network is ev aluated at noise le vels outside of the training range, the norm of the bias increases dramatically , and the residual is significantly smaller than the noise, suggesting a form of overfitting. Indeed, network performance 3 Published as a conference paper at ICLR 2020 28 22 19 16 14 13 11 10 9 8 Input PSNR 28 22 19 16 14 13 11 10 9 8 Output PSNR DnCNN BF-CNN identity 28 22 19 16 14 13 11 10 9 8 28 22 19 16 14 13 11 10 9 8 28 22 19 16 14 13 11 10 9 8 28 22 19 16 14 13 11 10 9 8 28 22 19 16 14 13 11 10 9 8 28 22 19 16 14 13 11 10 9 8 Figure 3: Comparison of the performance of a CNN and a BF-CNN with the same architecture for the experimental design described in Section 5. The performance is quantified by the PSNR of the denoised image as a function of the input PSNR. Both networks are trained over a fix ed ranges of noise le vels indicated by a blue background. In all cases, the performance of BF-CNN generalizes robustly be yond the training range, while that of the CNN degrades significantly . The CNN used for this example is DnCNN (Zhang et al., 2017); using alternati ve architectures yields similar results (see Figures 11 and 12). generalizes very poorly to noise le vels outside the training range. This is illustrated for an example image in Figure 2, and demonstrated through extensi ve e xperiments in Section 5. 4 P RO P O S E D M E T H O D O L O G Y : B I A S - F R E E N E T W O R K S Section 3 shows that CNNs overfit to the noise lev els present in the training set, and that this is associated with wild fluctuations of the net bias b y . This suggests that the ov erfitting might be ameliorated by removing additi ve (bias) terms from ev ery stage of the network, resulting in a bias- fr ee CNN (BF-CNN). Note that bias terms are also removed from the batch-normalization used during training. This simple change in the architecture has an interesting consequence. If the CNN has ReLU activ ations the denoising map is locally homogeneous, and consequently in variant to scaling : rescaling the input by a constant v alue simply rescales the output by the same amount, just as it would for a linear system. Lemma 1. Let f BF : R N → R N be a feedforwar d neural network with ReLU activation functions and no additive constant terms in any layer . F or any input y ∈ R and any nonnegative constant α , f BF ( αy ) = αf BF ( y ) . (2) Pr oof. W e can write the action of a bias-free neural network with L layers in terms of the weight matrix W i , 1 ≤ i ≤ L , of each layer and a rectifying operator R , which sets to zero any negati ve entries in its input. Multiplying by a nonnegati ve constant does not change the sign of the entries of a vector , so for any z with the right dimension and any α > 0 R ( αz ) = α R ( z ) , which implies f BF ( αy ) = W L R ( W L − 1 · · · R ( W 1 αy )) = αW L R ( W L − 1 · · · R ( W 1 y )) = αf BF ( y ) . (3) Note that networks with nonzero net bias are not scaling in variant because scaling the input may change the activ ation pattern of the ReLUs. Scaling in variance is intuitiv ely desireable for a denoising method operating on natural images; a rescaled image is still an image. Note that Lemma 1 holds for networks with skip connections where the feature maps are concatenated or added, because both of these operations are linear . In the following sections we demonstrate that removing all additiv e terms in CNN architectures has two important consequences: (1) the networks gain the ability to generalize to noise le vels not encountered during training (as illustrated by Figure 2 the improvement is striking), and (2) the denoising mechanism can be analyzed locally via linear-algebraic tools that re veal intriguing ties to more traditional denoising methodology such as nonlinear filtering and sparsity-based techniques. 4 Published as a conference paper at ICLR 2020 σ Noisy Denoised Pixel 1 Pixel 2 Pixel 3 10 Pixel 1 Pixel 2 Pixel 3 30 100 Figure 4: V isualization of the linear weighting functions (ro ws of A y in equation 4) of a BF-CNN for three example pix els of an input image, and three levels of noise. The images in the three rightmost columns show the weighting functions used to compute each of the indicated pixels (red squares). All weighting functions sum to one, and thus compute a local av erage (note that some weights are negativ e, indicated in red). Their shapes vary substantially , and are adapted to the underlying image content. As the noise lev el σ increases, the spatial extent of the weight functions increases in order to a verage out the noise, while respecting boundaries between different re gions in the image, which results in dramatically dif ferent functions for each pixel. The CNN used for this example is DnCNN (Zhang et al., 2017); using alternativ e architectures yields similar results (see Figure 13). 5 B I A S - F R E E N E T W O R K S G E N E R A L I Z E A C RO S S N O I S E L E V E L S In order to ev aluate the effect of removing the net bias in denoising CNNs, we compare sev eral state-of- the-art architectures to their bias-free counterparts, which are exactly the same except for the absence of any additive constants within the networks (note that this includes the batch-normalization additi ve parameter). These architectures include popular features of existing neural-network techniques in image processing: recurrence, multiscale filters, and skip connections. More specifically , we examine the following models (see Section A for additional details): • DnCNN (Zhang et al., 2017): A feedforward CNN with 20 con volutional layers, each consisting of 3 × 3 filters, 64 channels, batch normalization (Ioffe & Szegedy, 2015), a ReLU nonlinearity , and a skip connection from the initial layer to the final layer . • Recurrent CNN: A recurrent architecture inspired by Zhang et al. (2018a) where the basic module is a CNN with 5 layers, 3 × 3 filters and 64 channels in the intermediate layers. The order of the recurrence is 4. • UNet (Ronneberger et al., 2015): A multiscale architecture with 9 conv olutional layers and skip connections between the different scales. • Simplified DenseNet: CNN with skip connections inspired by the DenseNet architec- ture (Huang et al., 2017; Zhang et al., 2018b). W e train each network to denoise images corrupted by i.i.d. Gaussian noise over a range of standard deviations (the tr aining range of the network). W e then ev aluate the network for noise lev els that are both within and beyond the training range. Our experiments are carried out on 180 × 180 natural images from the Berkeley Se gmentation Dataset (Martin et al., 2001) to be consistent with previous 5 Published as a conference paper at ICLR 2020 0 200 400 600 800 1000 1200 1400 1600 axis number 0.0 0.5 1.0 1.5 2.0 2.5 3.0 singular values (a) 0.5 0.6 0.7 0.8 0.9 1.0 cos between u and v 0 50 100 150 200 250 300 350 (b) 20 40 60 80 100 noise level 0 100 200 300 400 500 600 effective dimensionality ave (c) Figure 5: Analysis of the SVD of the Jacobian of a BF-CNN for ten natural images, corrupted by noise of standard deviation σ = 50 . (a) Singular value distributions. For all images, a large proportion of the v alues are near zero, indicating (approximately) a projection onto a subspace (the signal subspace ). (b) Histogram of dot products (cosine of angle) between the left and right singular vectors that lie within the signal subspaces. (c) Effecti ve dimensionality of the signal subspaces (computed as sum of squared singular values) as a function of noise le vel. For comparison, the total dimensionality of the space is 1600 ( 40 × 40 pixels). A verage dimensionality (red curve) falls approximately as the in verse of σ (dashed curve). The CNN used for this example is DnCNN (Zhang et al., 2017); using alternativ e architectures yields similar results (see Figure 17). results (Schmidt & Roth, 2014; Chen & Pock, 2017; Zhang et al., 2017). Additional details about the dataset and training procedure are provided in Section B. Figures 3, 11 and 12 show our results. For a wide range of different training ranges, and for all architectures, we observe the same phenomenon: the performance of CNNs is good over the training range, but de grades dramatically at new noise le vels; in stark contrast, the corresponding BF-CNNs provide strong denoising performance over noise levels outside the training range. This holds for both PSNR and the more perceptually-meaningful Structural Similarity Index (W ang et al., 2004) (see Figure 12). Figure 2 shows an example image, demonstrating visually the striking difference in generalization performance between a CNN and its corresponding BF-CNN. Our results provide strong evidence that removing net bias in CNN architectures results in effecti ve generalization to noise lev els out of the training range. 6 R E V E A L I N G T H E D E N O I S I N G M E C H A N I S M S L E A R N E D B Y B F - C N N S In this section we perform a local analysis of BF-CNN networks, which reveals the underlying denoising mechanisms learned from the data. A bias-free network is strictly linear , and its net action can be expressed as f BF ( y ) = W L R ( W L − 1 ...R ( W 1 y )) = A y y , (4) where A y is the Jacobian of f BF ( · ) ev aluated at y . The Jacobian at a fixed input provides a local characterization of the denoising map. In order to study the map we perform a linear-algebraic analysis of the Jacobian. Our approach is similar in spirit to visualization approaches– proposed in the context of image classification– that differentiate neural-network functions with respect to their input (e.g. Simonyan et al. (2013); Monta von et al. (2017)). 6 . 1 N O N L I N E A R A D A P T I V E FI LT E R I N G The linear representation of the denoising map gi ven by equation 4 implies that the i th pixel of the output image is computed as an inner product between the i th row of A y , denoted a y ( i ) , and the input image: f BF ( y )( i ) = N X j =1 A y ( i, j ) y ( j ) = a y ( i ) T y . (5) 6 Published as a conference paper at ICLR 2020 Figure 6: V isualization of left singular vectors of the Jacobian of a BF-CNN, ev aluated on two different images (top and bottom ro ws), corrupted by noise with standard deviation σ = 50 . The left column shows original (clean) images. The next three columns show singular v ectors corresponding to non-negligible singular v alues. The vectors capture features from the clean image. The last three columns on the right show singular v ectors corresponding to singular values that are almost equal to zero. These vectors are noisy and unstructured. The CNN used for this example is DnCNN (Zhang et al., 2017); using alternativ e architectures yields similar results (see Figure 16). The vectors a y ( i ) can be interpreted as adaptive filters that produce an estimate of the denoised pixel via a weighted a verage of noisy pixels. Examination of these filters rev eals their div ersity , and their relationship to the underlying image content: they are adapted to the local features of the noisy image, av eraging over homogeneous re gions of the image without blurring across edges. This is sho wn for two separate e xamples and a range of noise le vels in Figures 4, 13, 14 and 15 for the architectures described in Section 5. W e observe that the equi valent filters of all architectures adapt to image structure. Classical W iener filtering (W iener, 1950) denoises images by computing a local ave rage dependent on the noise lev el. As the noise lev el increases, the av eraging is carried out over a lar ger region. As illustrated by Figures 4, 13, 14 and 15, the equi valent filters of BF-CNNs also display this beha vior . The crucial difference is that the filters are adapti ve. The BF-CNNs learn such filters implicitly from the data, in the spirit of modern nonlinear spatially-varying filtering techniques designed to preserve fine-scale details such as edges (e.g. T omasi & Manduchi (1998), see also Milanfar (2012) for a comprehensiv e revie w , and Choi et al. (2018) for a recent learning-based approach). 6 . 2 P R O J E C T I O N O N TO A DA P T I V E L O W - D I M E N S I O NA L S U B S PAC E S The local li near structure of a BF-CNN f acilitates analysis of its functional capabilities via the singular value decomposition (SVD). For a giv en input y , we compute the SVD of the Jacobian matrix: A y = U S V T , with U and V orthogonal matrices, and S a diagonal matrix. W e can decompose the effect of the network on its input in terms of the left singular vectors { U 1 , U 2 . . . , U N } (columns of U ), the singular v alues { s 1 , s 2 . . . , s N } (diagonal elements of S ), and the right singular vectors { V 1 , V 2 , . . . V N } (columns of V ): f BF ( y ) = A y y = U S V T y = N X i =1 s i ( V T i y ) U i . (6) The output is a linear combination of the left singular vectors, each weighted by the projection of the input onto the corresponding right singular vector , and scaled by the corresponding singular value. Analyzing the SVD of a BF-CNN on a set of ten natural images rev eals that most singular values are very close to zero (Figure 5a). The network is thus discarding all but a very low-dimensional portion of the input image. W e also observe that the left and right singular vectors corresponding to the singular values with non-ne gligible amplitudes are approximately the same (Figure 5b). This means that the Jacobian is (approximately) symmetric, and we can interpret the action of the network as projecting the noisy signal onto a lo w-dimensional subspace, as is done in wa velet thresholding schemes. This is confirmed by visualizing the singular vectors as images (Figure 6). The singular vectors corresponding to non-negligible singular values are seen to capture features of the input image; those corresponding to near-zero singular v alues are unstructured. The BF-CNN therefore implements 7 Published as a conference paper at ICLR 2020 0 20 40 60 80 100 noise level (sd) 0.0 0.2 0.4 0.6 0.8 1.0 relative norm 20 30 40 50 60 70 80 90 100 noise level of the nested subspace 0.0 0.2 0.4 0.6 0.8 1.0 nestedness Figure 7: Signal subspace properties. Left: Signal subspace, computed from Jacobian of a BF-CNN ev aluated at a particular noise lev el, contains the clean image. Specifically , the fraction of squared ` 2 norm preserved by projection onto the subspace is nearly one as σ grows from 10 to 100 (relati ve to the image pixels, which lie in the range [0 , 255] ). Results are av eraged over 50 e xample clean images. Right: Signal subspaces at different noise lev els are nested. The subspace axes for a higher noise lev el lie largely within the subspace obtained for the lowest noise le vel ( σ = 10 ), as measured by the sum of squares of their projected norms. Results are shown for 10 e xample clean images. an approximate projection onto an adaptive signal subspace that preserves image structure, while suppressing the noise. W e can define an "effecti ve dimensionality" of the signal subspace as d := P N i =1 s 2 i , the amount of variance captured by applying the linear map to an N -dimensional Gaussian noise vector with variance σ 2 , normalized by the noise variance. The remaining variance equals E n || A y n || 2 = E n || U y S y V T y n || 2 = E n || S y n || 2 = E n N X i =1 s 2 i n 2 i = N X i =1 s 2 i E n ( n 2 i ) ≈ σ 2 N X i =1 s 2 i , where E n indicates expectation o ver noise n , so that d = E n || A y n || 2 /σ 2 = P N i =1 s 2 i . When we examine the preserved signal subspace, we find that the clean image lies almost completely within it. For inputs of the form y := x + n (where x is the clean image and n the noise), we find that the subspace spanned by the singular vectors up to dimension d contains x almost entirely , in the sense that projecting x onto the subspace preserves most of its energy . This holds for the whole range of noise lev els ov er which the network is trained (Figure 7). W e also find that for an y giv en clean image, the effecti ve dimensionality of the signal subspace ( d ) decreases systematically with noise lev el (Figure 5c). At lower noise lev els the network detects a richer set of image features, and constructs a larger signal subspace to capture and preserve them. Empirically , we found that (on av erage) d is approximately proportional to 1 σ (see dashed line in Figure 5c). These signal subspaces are nested: the subspaces corresponding to lower noise lev els contain more than 95% of the subspace axes corresponding to higher noise le vels (Figure 7). Finally , we note that this behavior of the signal subspace dimensionality , combined with the fact that it contains the clean image, explains the observ ed denoising performance across different noise le vels (Figure 3). Specifically , if we assume d ≈ α/σ , the mean squared error is proportional to σ : MSE = E n || A y ( x + n ) − x || 2 ≈ E n || A y n || 2 ≈ σ 2 d ≈ α σ (7) Note that this result runs contrary to the intuitive expectation that MSE should be proportional to the noise variance, which would be the case if the denoiser operated by projecting onto a fixed subspace. The scaling of MSE with the square root of the noise v ariance implies that the PSNR of the denoised image should be a linear function of the input PSNR, with a slope of 1 / 2 , consistent with the empirical results sho wn in Figure 3. Note that this behavior holds e ven when the networks are trained only on modest lev els of noise (e.g., σ ∈ [0 , 10] ). 8 Published as a conference paper at ICLR 2020 7 D I S C U S S I O N In this work, we show that removing constant terms from CNN architectures ensures strong generaliza- tion across noise lev els, and also provides interpretability of the denoising method via linear-algebra techniques. W e provide insights into the relationship between bias and generalization through a set of observations. Theoretically , we argue that if the denoising network operates by projecting the noisy observation onto a linear space of “clean” images, then that space should include all rescalings of those images, and thus, the origin. This property can be guaranteed by eliminating bias from the network. Empirically , in networks that allow bias, the net bias of the trained network is quite small within the training range. Howe ver , outside the training range the net bias grows dramatically resulting in poor performance, which suggests that the bias may be the cause of the failure to general- ize. In addition, when we remove bias from the architecture, we preserve performance within the training range, but achieve near-perfect generalization, e ven to noise le vels more than 10x those in the training range. These observations do not fully elucidate how our network achie ves its remarkable generalization- only that bias prev ents that generalization, and its remov al allows it. It is of interest to e xamine whether bias remov al can facilitate generalization in noise distributions beyond Gaussian, as well as other image-processing tasks, such as image restoration and image compression. W e ha ve trained bias-free networks on uniform noise and found that they generalize outside the training range. In fact, bias-free networks trained for Gaussian noise generalize well when tested on uniform noise (Figures 18 and 19). In addition, we hav e applied our methodology to image restoration (simultaneous deblurring and denoising). Preliminary results indicate that bias-free networks generalize across noise le vels for a fix ed blur lev el, whereas networks with bias do not (Figure 20). An interesting question for future research is whether it is possible to achieve generalization across blur lev els. Our initial results indicate that removing bias is not sufficient to achiev e this. Finally , our linear-algebraic analysis uncovers interesting aspects of the denoising map, but these interpretations are very local: small changes in the input image change the acti vation patterns of the network, resulting in a change in the corresponding linear mapping. Extending the analysis to rev eal global characteristics of the neural-network functionality is a challenging direction for future research. R E F E R E N C E S S Grace Chang, Bin Y u, and Martin V etterli. Adaptiv e wav elet thresholding for image denoising and compression. IEEE T rans. Imag e Pr ocessing , 9(9):1532–1546, 2000. Y unjin Chen and Thomas Pock. T rainable nonlinear reaction diffusion: A flexible framew ork for fast and ef fectiv e image restoration. IEEE T rans. P att. Analysis and Machine Intelligence , 39(6): 1256–1272, 2017. Sungjoon Choi, John Isidoro, Pascal Getreuer , and Peyman Milanfar . Fast, trainable, multiscale denoising. In 2018 25th IEEE International Conference on Imag e Pr ocessing (ICIP) , pp. 963–967. IEEE, 2018. K ostadin Dabov , Alessandro Foi, Vladimir Katko vnik, and Karen Egiazarian. Image denoising with block-matching and 3d filtering. In Imag e Pr ocessing: Algorithms and Systems, Neural Networks, and Machine Learning , v olume 6064, pp. 606414. International Society for Optics and Photonics, 2006. D Donoho and I Johnstone. Adapting to unkno wn smoothness via wa velet shrinkage. J American Stat Assoc , 90(432), December 1995. Michael Elad and Michal Aharon. Image denoising via sparse and redundant representations o ver learned dictionaries. IEEE T rans. on Imag e pr ocessing , 15(12):3736–3745, 2006. Y Hel-Or and D Shaked. A discriminative approach for wa velet denoising. IEEE T rans. Image Pr ocessing , 2008. 9 Published as a conference paper at ICLR 2020 Gao Huang, Zhuang Liu, Laurens V an Der Maaten, and Kilian Q W einberger . Densely connected con volutional networks. In Pr oc. IEEE Conf. Computer V ision and P attern Recognition , pp. 4700–4708, 2017. Serge y Ioffe and Christian Szegedy . Batch normalization: Accelerating deep network training by reducing internal cov ariate shift. arXiv preprint , 2015. Diederik P Kingma and Jimmy Ba. Adam: A method for stochastic optimization. arXiv pr eprint arXiv:1412.6980 , 2014. Y ann LeCun, Y oshua Bengio, and Geoffre y Hinton. Deep learning. natur e , 521(7553):436, 2015. D. Martin, C. Fo wlkes, D. T al, and J. Malik. A database of human segmented natural images and its application to ev aluating segmentation algorithms and measuring ecological statistics. In Pr oc. 8th Int’l Conf. Computer V ision , volume 2, pp. 416–423, July 2001. Peyman Milanf ar . A tour of modern image filtering: New insights and methods, both practical and theoretical. IEEE signal pr ocessing magazine , 30(1):106–128, 2012. Grégoire Montav on, Sebastian Lapuschkin, Alexander Binder , W ojciech Samek, and Klaus-Robert Müller . Explaining nonlinear classification decisions with deep taylor decomposition. P attern Rec. , 65:211–222, 2017. M Raphan and E P Simoncelli. Optimal denoising in redundant representations. IEEE T rans Imag e Pr ocessing , 17(8):1342–1352, Aug 2008. doi: 10.1109/TIP .2008.925392. Olaf Ronneberger , Philipp Fischer , and Thomas Brox. U-net: Con volutional networks for biomedical image segmentation. In International Conference on Medical image computing and computer - assisted intervention , pp. 234–241. Springer , 2015. Uwe Schmidt and Stefan Roth. Shrinkage fields for ef fectiv e image restoration. In Pr oceedings of the IEEE Confer ence on Computer V ision and P attern Recognition , pp. 2774–2781, 2014. E P Simoncelli and E H Adelson. Noise removal via Bayesian wav elet coring. In Pr oc 3r d IEEE Int’l Conf on Image Pr oc , volume I, pp. 379–382, Lausanne, Sep 16-19 1996. IEEE Sig Proc Society . doi: 10.1109/ICIP .1996.559512. Karen Simonyan, Andrea V edaldi, and Andrew Zisserman. Deep inside con volutional networks: V isualising image classification models and saliency maps. arXiv pr eprint arXiv:1312.6034 , 2013. Carlo T omasi and Roberto Manduchi. Bilateral filtering for gray and color images. In ICCV , volume 98, 1998. Zhou W ang, Alan C Bovik, Hamid R Sheikh, Eero P Simoncelli, et al. Image quality assessment: from error visibility to structural similarity . IEEE T rans. Ima ge Pr ocessing , 13(4):600–612, 2004. Norbert W iener . Extrapolation, interpolation, and smoothing of stationary time series: with engi- neering applications . T echnology Press, 1950. Kai Zhang, W angmeng Zuo, Y unjin Chen, Deyu Meng, and Lei Zhang. Beyond a gaussian denoiser: Residual learning of deep CNN for image denoising. IEEE T rans. Image Pr ocessing , 26(7): 3142–3155, 2017. Xiaoshuai Zhang, Y iping Lu, Jiaying Liu, and Bin Dong. Dynamically unfolding recurrent restorer: A moving endpoint control method for image restoration. arXiv pr eprint arXiv:1805.07709 , 2018a. Y ulun Zhang, Y apeng Tian, Y u Kong, Bineng Zhong, and Y un Fu. Residual dense network for image restoration. CoRR , abs/1812.10477, 2018b. URL . A D E S C R I P T I O N O F D E N O I S I N G A R C H I T E C T U R E S In this section we describe the denoising architectures used for our computational experiments in more detail. 10 Published as a conference paper at ICLR 2020 A . 1 D N C N N W e implement BF-DnCNN based on the architecture of the Denoising CNN (DnCNN) (Zhang et al., 2017). DnCNN consists of 20 con volutional layers, each consisting of 3 × 3 filters and 64 channels, batch normalization (Ioffe & Szegedy, 2015), and a ReLU nonlinearity . It has a skip connection from the initial layer to the final layer, which has no nonlinear units. T o construct a bias-free DnCNN (BF-DnCNN) we remove all sources of additi ve bias, including the mean parameter of the batch-normalization in ev ery layer (note howe ver that the scaling parameter is preserv ed). A . 2 R E C U R R E N T C N N Inspired by Zhang et al. (2018a), we consider a recurrent framew ork that produces a denoised image estimate of the form ˆ x t = f ( ˆ x t − 1 , y noisy ) , at time t where f is a neural network. W e use a 5-layer fully con volutional network with 3 × 3 filters in all layers and 64 channels in each intermediate layer to implement f . W e initialize the denoised estimate as the noisy image, i.e ˆ x 0 := y noisy . For the version of the network with net bias, we add trainable additi ve constants to e very filter in all b ut the last layer . During training, we run the recurrence for a maximum of T times, sampling T uniformly at random from { 1 , 2 , 3 , 4 } for each mini-batch. At test time we fix T = 4 . A . 3 U N E T Our UNet model (Ronneberger et al., 2015) has the follo wing layers: 1. conv1 - T akes in input image and maps to 32 channels with 5 × 5 con volutional k ernels. 2. conv2 - Input: 32 channels. Output: 32 channels. 3 × 3 conv olutional kernels. 3. con v3 - Input: 32 channels. Output: 64 channels. 3 × 3 con volutional k ernels with stride 2. 4. conv4 - Input: 64 channels. Output: 64 channels. 3 × 3 conv olutional kernels. 5. con v5 - Input: 64 channels. Output: 64 channels. 3 × 3 con volutional k ernels with dilation factor of 2. 6. con v6 - Input: 64 channels. Output: 64 channels. 3 × 3 con volutional k ernels with dilation factor of 4. 7. con v7 - T ranspose Conv olution layer . Input: 64 channels. Output: 64 channels. 4 × 4 filters with stride 2 . 8. con v8 - Input: 96 channels. Output: 64 channels. 3 × 3 con volutional k ernels. The input to this layer is the concatenation of the outputs of layer con v7 and conv2 . 9. conv9 - Input: 32 channels. Output: 1 channels. 5 × 5 conv olutional kernels. The structure is the same as in Zhang et al. (2018a), b ut without recurrence. For the version with bias, we add trainable additiv e constants to all the layers other than conv9 . This configuration of UNet assumes ev en width and height, so we remov e one row or column from images in with odd height or width. A . 4 S I M P L I FI E D D E N S E N E T Our simplified version of the DenseNet architecture (Huang et al., 2017) has 4 blocks in total. Each block is a fully con volutional 5 -layer CNN with 3 × 3 filters and 64 channels in the intermediate layers with ReLU nonlinearity . The first three blocks hav e an output layer with 64 channels while the last block has an output layer with only one channel. The output of the i th block is concatenated with the input noisy image and then fed to the ( i + 1) th block, so the last three blocks have 65 input channels. In the version of the network with bias, we add trainable additive parameters to all the layers except for the last layer in the final block. B D A TA S E T S A N D T R A I N I N G P RO C E D U R E Our experiments are carried out on 180 × 180 natural images from the Berkeley Segmentation Dataset (Martin et al., 2001). W e use a training set of 400 images. The training set is augmented via 11 Published as a conference paper at ICLR 2020 do wnsampling, random flips, and random rotations of patches in these images (Zhang et al., 2017). A test set containing 68 images is used for ev aluation. W e train the DnCNN and it’ s bias free model on patches of size 50 × 50 , which yields a total of 541,600 clean training patches. For the remaining architectures, we use patches of size 128 × 128 for a total of 22,400 training patches. W e train DnCNN and its bias-free counterpart using the Adam Optimizer (Kingma & Ba, 2014) over 70 epochs with an initial learning rate of 10 − 3 and a decay factor of 0 . 5 at the 50 th and 60 th epochs, with no early stopping. W e train the other models using the Adam optimizer with an initial learning rate of 10 − 3 and train for 50 epochs with a learning rate schedule which decreases by a f actor of 0 . 25 if the v alidation PSNR decreases from one epoch to the ne xt. W e use early stopping and select the model with the best validation PSNR. C A D D I T I O NA L R E S U LT S In this section we report additional results of our computational experiments: • Figure 8 sho ws the first-order analysis of the residual of the dif ferent architectures described in Section A, except for DnCNN which is sho wn in Figure 1. • Figures 9 and 10 visualize the linear and net bias terms in the first-order decomposition of an example image at dif ferent noise lev els. • Figure 11 shows the PSNR results for the experiments described in Section 5. • Figure 12 shows the SSIM results for the experiments described in Section 5. • Figures 13, 14 and 15 sho w the equi valent filters at sev eral pixels of tw o example images for different architectures (see Section 6.1). • Figure 16 sho ws the singular vectors of the Jacobian of dif ferent BF-CNNs (see Section 6.2). • Figure 17 shows the singular v alues of the Jacobian of different BF-CNNs (see Section 6.2). • Figure 18 and 19 sho ws that networks trained on noise samples drawn from Gaussian distribution with 0 mean generalizes to noise drawn from uniform distribution with 0 mean during test time. Experiments follow the procedure described in Section 5 except that the networks are e valuated on a dif ferent noise distribution during the test time. • Figure 20 shows the application of BF-CNN and CNN to the task of image restoration, where the image is corrupted with both noise and blur at the same time. W e sho w that BF-CNNs can generalize outside the training range for noise lev els for a fixed blur le vel, b ut do not outperform CNN when generalizing to unseen blur lev els. 12 Published as a conference paper at ICLR 2020 R-CNN 5 15 25 35 45 55 65 75 85 95 noise level(sd) 20 40 60 80 100 120 140 norms b i a s , b y r e s i d u a l , y x noise, z 5 15 25 35 45 55 65 75 85 95 noise level(sd) 0 20 40 60 80 100 120 140 norms 5 15 25 35 45 55 65 75 85 95 noise level(sd) 20 40 60 80 100 120 140 norms UNet 5 15 25 35 45 55 65 75 85 95 noise level(sd) 0 20 40 60 80 100 120 140 norms b i a s , b y r e s i d u a l , y x noise, z 5 15 25 35 45 55 65 75 85 95 noise level(sd) 0 20 40 60 80 100 120 140 norms 5 15 25 35 45 55 65 75 85 95 noise level(sd) 0 20 40 60 80 100 120 140 norms DenseNet 5 15 25 35 45 55 65 75 85 95 noise level(sd) 0 20 40 60 80 100 120 140 norms b i a s , b y r e s i d u a l , y x noise, z 5 15 25 35 45 55 65 75 85 95 noise level(sd) 0 25 50 75 100 125 150 norms 5 15 25 35 45 55 65 75 85 95 noise level(sd) 0 20 40 60 80 100 120 140 norms Figure 8: First-order analysis of the residual of Recurrent-CNN (Section A.2), UNet (Section A.3) and DenseNet (Section A.4) as a function of noise level. The plots show the magnitudes of the residual and the net bias averaged ov er 68 images in Set68 test set of Berkeley Segmentation Dataset (Martin et al., 2001) for networks trained over dif ferent training ranges. The range of noises used for training is highlighted in gray . (left) When the network is trained ov er the full range of noise lev els ( σ ∈ [0 , 100] ) the net bias is small, growing slightly as the noise increases. (middle and right) When the network is trained ov er the a smaller range ( σ ∈ [0 , 55] and σ ∈ [0 , 30] ), the net bias grows explosi vely for noise levels outside the training range. This coincides with the dramatic drop in performance due to ov erfitting, reflected in the difference between the residual and the true noise. 13 Published as a conference paper at ICLR 2020 Noisy Input ( y ) Denoised ( f ( y ) ) Linear Part ( A y y ) Net Bias ( b y ) σ = 10 0.2 0.4 0.6 0.8 1.0 0.1 0.0 0.1 0.2 σ = 30 0.2 0.4 0.6 0.8 1.0 0.2 0.0 0.2 0.4 0.6 0.8 σ = 50 0.0 0.2 0.4 0.6 0.8 1.0 0.4 0.2 0.0 0.2 0.4 0.6 σ = 70 ∗ 4 3 2 1 0 1 2 3 4 4 3 2 1 0 1 2 3 4 Figure 9: V isualization of the decomposition of output of DnCNN trained for noise range [0 , 55] into linear part and net bias. The noise lev el σ = 70 (highlighted by ∗ ) is outside the training range. Over the training range, the net bias is small, and the linear part is responsible for most of the denoising effort. Howe ver , when the network is ev aluated out of the training range, the contribution of the bias increases dramatically , which coincides with a significant drop in denoising performance. 14 Published as a conference paper at ICLR 2020 Noisy Input ( y ) Denoised ( f ( y ) ) Linear Part ( A y y ) Net Bias ( b y ) R-CNN σ = 10 0.2 0.4 0.6 0.8 0.10 0.05 0.00 0.05 0.10 R-CNN σ = 90 ∗ 0.0 0.5 1.0 1.5 0.75 0.50 0.25 0.00 0.25 0.50 0.75 UNet σ = 10 0.2 0.4 0.6 0.8 0.10 0.05 0.00 0.05 0.10 UNet σ = 90 ∗ 0.2 0.0 0.2 0.4 0.6 0.8 1.0 1.2 0.2 0.1 0.0 0.1 0.2 DenseNet σ = 10 0.2 0.4 0.6 0.8 1.0 1.2 0.2 0.0 0.2 0.4 DenseNet σ = 90 ∗ 1.5 1.0 0.5 0.0 0.5 1.0 1.5 2.0 1.5 1.0 0.5 0.0 0.5 1.0 1.5 2.0 Figure 10: V isualization of the decomposition of output of Recurrent-CNN (Section A.2, UNet (Section A.3) and DenseNet (Section A.4) trained for noise range [0 , 55] into linear part and net bias. The noise le vel σ = 90 (highlighted by ∗ ) is outside the training range. Over the training range, the net bias is small, and the linear part is responsible for most of the denoising effort. Howe ver , when the network is e valuated out of the training range, the contribution of the bias increases dramatically , which coincides with a significant drop in denoising performance. 15 Published as a conference paper at ICLR 2020 28 22 19 16 14 13 11 10 9 8 28 22 19 16 14 13 11 10 9 8 (a) 28 22 19 16 14 13 11 10 9 8 28 22 19 16 14 13 11 10 9 8 (b) 28 22 19 16 14 13 11 10 9 8 28 22 19 16 14 13 11 10 9 8 (c) 28 22 19 16 14 13 11 10 9 8 28 22 19 16 14 13 11 10 9 8 (d) Figure 11: Comparisons of architectures with (red curves) and without (blue curv es) a net bias for the experimental design described in Section 5. The performance is quantified by the PSNR of the denoised image as a function of the input PSNR of the noisy image. All the architectures with bias perform poorly out of their training range, whereas the bias-free versions all achie ve excellent generalization across noise le vels. (a) Deep Con volutional Neural Netw ork, DnCNN (Zhang et al., 2017). (b) Recurrent architecture inspired by DURR (Zhang et al., 2018a). (c) Multiscale architecture inspired by the UNet (Ronneberger et al., 2015). (d) Architecture with multiple skip connections inspired by the DenseNet (Huang et al., 2017). 0.71 0.48 0.35 0.26 0.2 0.16 0.13 0.11 0.08 0.0 0.2 0.4 0.6 0.8 1.0 (a) 0.71 0.48 0.35 0.26 0.2 0.16 0.13 0.11 0.08 0 . 0 0 . 2 0 . 4 0 . 6 0 . 8 1 . 0 (b) 0.72 0.48 0.35 0.26 0.2 0.16 0.13 0.11 0.08 0 . 0 0 . 2 0 . 4 0 . 6 0 . 8 1 . 0 (c) 0.71 0.48 0.35 0.26 0.2 0.16 0.13 0.11 0.08 0 . 0 0 . 2 0 . 4 0 . 6 0 . 8 1 . 0 (d) Figure 12: Comparisons of architectures with (red curves) and without (blue curves) a net bias for the experimental design described in Section 5. The performance is quantified by the SSIM of the denoised image as a function of the input SSIM of the noisy image. All the architectures with bias perform poorly out of their training range, whereas the bias-free versions all achie ve excellent generalization across noise le vels. (a) Deep Con volutional Neural Netw ork, DnCNN (Zhang et al., 2017). (b) Recurrent architecture inspired by DURR (Zhang et al., 2018a). (c) Multiscale architecture inspired by the UNet (Ronneberger et al., 2015). (d) Architecture with multiple skip connections inspired by the DenseNet (Huang et al., 2017). 16 Published as a conference paper at ICLR 2020 σ Noisy Input( y ) Denoised ( f ( y ) = A y y ) Pixel 1 Pixel 2 Pixel 3 5 1 2 3 1 2 3 55 1 2 3 1 2 3 5 1 2 3 1 2 3 55 1 2 3 1 2 3 5 1 2 3 1 2 3 55 1 2 3 1 2 3 Figure 13: V isualization of the linear weighting functions (rows of A y ) of Bias-Free Recurrent-CNN (top 2 rows) (Section A.2), Bias-Free UNet (next 2 rows) (Section A.3) and Bias-Free DenseNet (bottom 2 rows) (Section A.4) for three example pixels of a noisy input image (left). The next image is the denoised output. The three images on the right show the linear weighting functions corresponding to each of the indicated pixels (red squares). All weighting functions sum to one, and thus compute a local a verage (although some weights are negati ve, indicated in red). Their shapes vary substantially , and are adapted to the underlying image content. Each row corresponds to a noisy input with increasing σ and the filters adapt by averaging o ver a lar ger region. 17 Published as a conference paper at ICLR 2020 σ Noisy Input( y ) Denoised ( f ( y ) = A y y ) Pixel 1 Pixel 2 Pixel 3 5 1 2 3 1 2 3 15 1 2 3 1 2 3 35 1 2 3 1 2 3 55 1 2 3 1 2 3 Figure 14: V isualization of the linear weighting functions (rows of A y ) of a BF-DnCNN for three example pixels of a noisy input image (left). The next image is the denoised output. The three images on the right show the linear weighting functions corresponding to each of the indicated pixels (red squares). All weighting functions sum to one, and thus compute a local average (although some weights are negati ve, indicated in red). Their shapes v ary substantially , and are adapted to the underlying image content. Each ro w corresponds to a noisy input with increasing σ and the filters adapt by av eraging over a lar ger region. 18 Published as a conference paper at ICLR 2020 σ Noisy Input( y ) Denoised ( f ( y ) = A y y ) Pixel 1 Pixel 2 Pixel 3 5 1 2 3 1 2 3 55 1 2 3 1 2 3 5 1 2 3 1 2 3 55 1 2 3 1 2 3 5 1 2 3 1 2 3 55 1 2 3 1 2 3 Figure 15: V isualization of the linear weighting functions (rows of A y ) of Bias-Free Recurrent-CNN (top 2 rows) (Section A.2), Bias-Free UNet (next 2 rows) (Section A.3) and Bias-Free DenseNet (bottom 2 rows) (Section A.4) for three example pixels of a noisy input image (left). The next image is the denoised output. The three images on the right show the linear weighting functions corresponding to each of the indicated pixels (red squares). All weighting functions sum to one, and thus compute a local a verage (although some weights are negati ve, indicated in red). Their shapes vary substantially , and are adapted to the underlying image content. Each row corresponds to a noisy input with increasing σ and the filters adapt by averaging o ver a lar ger region. 19 Published as a conference paper at ICLR 2020 Figure 16: V isualization of left singular vectors of the Jacobian of a BF Recurrent CNN (top 2 rows), BF UNet (ne xt 2 rows) and BF DenseNet (bottom 2 rows) e v aluated on three different images, corrupted by noise with standard de viation σ = 25 . The left column sho ws original (clean) images. The next three columns sho w singular vectors corresponding to non-negligible singular v alues. The vectors capture features from the clean image. The last three columns on the right show singular vectors corresponding to singular values that are almost equal to zero. These vectors are noisy and unstructured. 0 500 1000 1500 2000 2500 axis number 0.0 0.5 1.0 1.5 2.0 2.5 singular value (a) 0 500 1000 1500 2000 2500 axis number 0.0 0.5 1.0 1.5 2.0 singular value (b) 0 500 1000 1500 2000 2500 axis number 0.0 0.5 1.0 1.5 2.0 singular value (c) Figure 17: Analysis of the SVD of the Jacobian of BF-CNN for ten natural images, corrupted by noise of standard deviation σ = 50 . For all images, a large proportion of the singular v alues are near zero, indicating (approximately) a projection onto a subspace (the signal subspace ). (a) Recurrent architecture inspired by DURR (Zhang et al., 2018a). (b) Multiscale architecture inspired by the UNet (Ronneberger et al., 2015). (c) Architecture with multiple skip connections inspired by the DenseNet (Huang et al., 2017). 20 Published as a conference paper at ICLR 2020 28 22 19 16 14 13 11 10 9 8 Input PSNR 28 22 19 16 14 13 11 10 9 8 Output PSNR DnCNN BF-CNN identity 28 22 19 16 14 13 11 10 9 8 28 22 19 16 14 13 11 10 9 8 28 22 19 16 14 13 11 10 9 8 28 22 19 16 14 13 11 10 9 8 28 22 19 16 14 13 11 10 9 8 28 22 19 16 14 13 11 10 9 8 Figure 18: Comparison of the performance of a CNN and a BF-CNN with the same architecture for the experimental design described in Section 5. The networks are trained using i.i.d. Gaussian noise but ev aluated on noise drawn i.i.d. from a uniform distribution with mean 0 . The performance is quantified by the PSNR of the denoised image as a function of the input PSNR of the noisy image. All the architectures with bias perform poorly out of their training range, whereas the bias-free versions all achiev e excellent generalization across noise lev els, i.e. they are able to generalize across the two different noise distrib utions. The CNN used for this example is DnCNN (Zhang et al., 2017); using alternativ e architectures yields similar results (see Figures 19). 28 22 19 16 14 13 11 10 9 8 28 22 19 16 14 13 11 10 9 8 (a) 28 22 19 16 14 13 11 10 9 8 28 22 19 16 14 13 11 10 9 8 (b) 28 22 19 16 14 13 11 10 9 8 28 22 19 16 14 13 11 10 9 8 (c) 28 22 19 16 14 13 11 10 9 8 28 22 19 16 14 13 11 10 9 8 (d) Figure 19: Comparisons of architectures with (red curves) and without (blue curv es) a net bias for the experimental design described in Section 5. The networks are trained using i.i.d. Gaussian noise but ev aluated on noise drawn i.i.d. from a uniform distribution with mean 0 . The performance is quantified by the PSNR of the denoised image as a function of the input PSNR of the noisy image. All the architectures with bias perform poorly out of their training range, whereas the bias-free versions all achiev e excellent generalization across noise lev els, i.e. they are able to generalize across the two different noise distributions. (a) Deep Conv olutional Neural Network, DnCNN (Zhang et al., 2017). (b) Recurrent architecture inspired by DURR (Zhang et al., 2018a). (c) Multiscale architecture inspired by the UNet (Ronneberger et al., 2015). (d) Architecture with multiple skip connections inspired by the DenseNet (Huang et al., 2017). 21 Published as a conference paper at ICLR 2020 10 20 30 40 50 60 70 80 90 100 noise 1 2 3 4 5 6 7 blur 0.0 1.5 3.0 4.5 6.0 28 22 19 16 14 13 11 10 9 8 Input PSNR 28 22 19 16 14 13 11 10 9 8 Output PSNR DnCNN BF-CNN Figure 20: Comparison of the performance of DnCNN and a corresponding BF-CNN for image restoration. T raining is carried out on data corrupted with Gaussian noise σ noise ∈ [0 , 55] and Gaussian blur σ blur ∈ [0 , 4] . Performance is measured on test data for inside and outside the training ranges. Left: The difference in performance measured in ∆ PSNR = PSNR BF-CNN − PSNR DnCNN . The training region is illustrated by the rectangular boundary . Bias-free network generalizes across noise lev els for each fixed blur le vels, whereas DnCNN does not. Howe ver , BF-CNN does not generalize across blur le vels. Right: A horizontal slice of the left plot for a fixed blur lev el of σ blur = 2 . BF-CNN generalizes robustly be yond the training range, while the performance of DnCNN degrades significantly . 22

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment