Minimax Defense against Gradient-based Adversarial Attacks

State-of-the-art adversarial attacks are aimed at neural network classifiers. By default, neural networks use gradient descent to minimize their loss function. The gradient of a classifier's loss function is used by gradient-based adversarial attacks…

Authors: Blerta Lindqvist, Rauf Izmailov

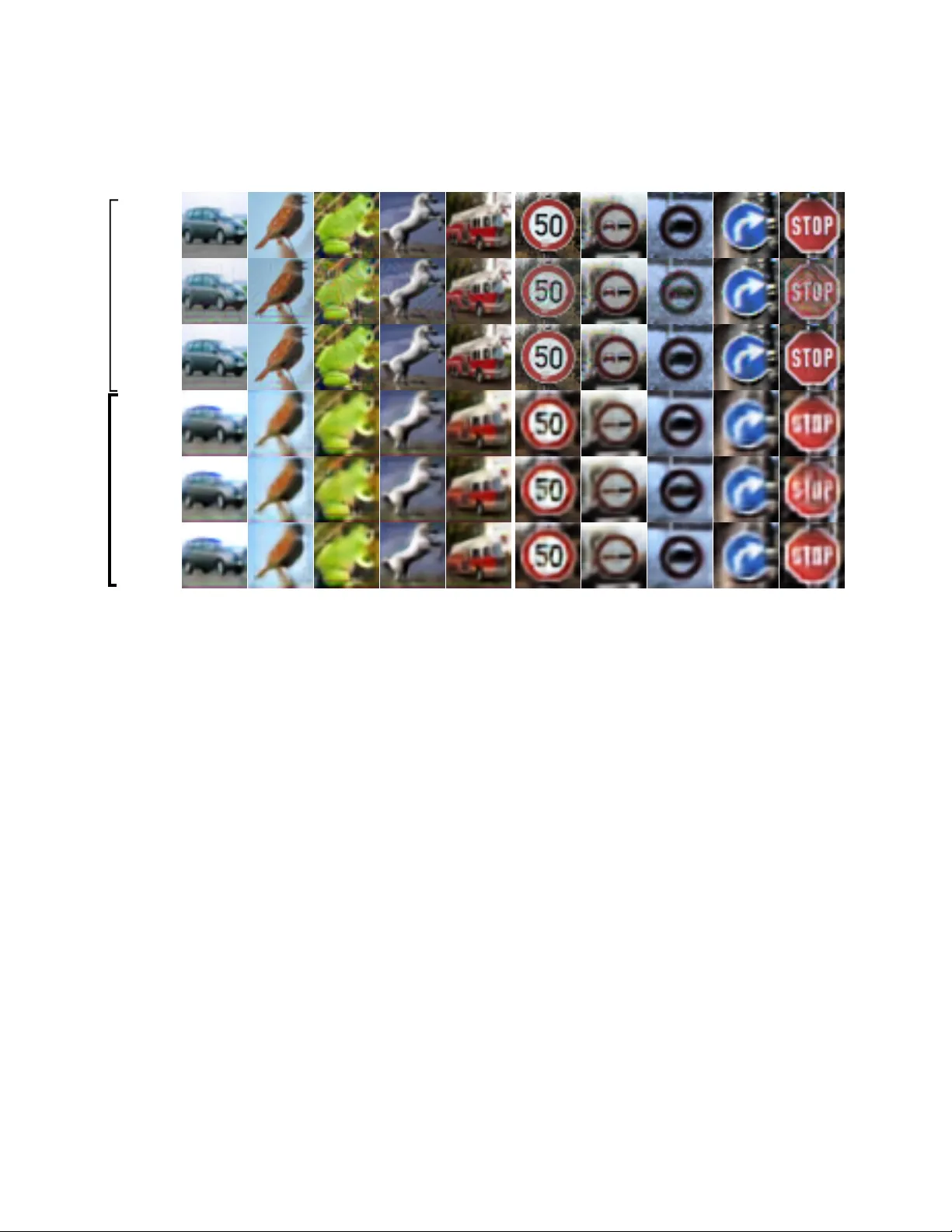

Minimax Defense against Gradient-based Adversarial Attacks Blerta Lindqvist Aalto Uni versity blerta.lindqvist@aalto.fi Rauf Izmailov Perspecta Labs rizmailov@perspectalabs.com Abstract State-of-the-art adversarial attac ks ar e aimed at neural network classifiers. By default, neural networks use gr a- dient descent to minimize their loss function. The gradi- ent of a classifier’ s loss function is used by gradient-based adversarial attac ks to generate adversarially perturbed im- ages. W e pose the question whether another type of op- timization could give neural network classifiers an edge. Her e, we intr oduce a no vel appr oach that uses minimax op- timization to foil gradient-based adversarial attacks. Our minimax classifier is the discriminator of a generative ad- versarial network (GAN) [ 15 ] that plays a minimax game with the GAN gener ator . In addition, our GAN gener ator pr ojects all points onto a manifold that is differ ent fr om the original manifold since the original manifold might be the cause of adversarial attacks. T o measur e the per- formance of our minimax defense, we use adversarial at- tacks - Carlini W agner (CW) [ 7 ], DeepF ool [ 27 ], F ast Gradient Sign Method (FGSM) [ 16 ] - on thr ee datasets: MNIST [ 21 ], CIF AR-10 [ 17 ] and German T raffic Sign (TRAFFIC) [ 40 ]. Against CW attacks, our minimax de- fense achieves 98.07% (MNIST -default 98.93%), 73.90% (CIF AR-10-default 83.14%) and 94.54% (TRAFFIC-default 96.97%). Against DeepF ool attacks, our minimax de- fense achie ves 98.87% (MNIST), 76.61% (CIF AR-10) and 94.57% (TRAFFIC). Against FGSM attacks, we achieve 97.01% (MNIST), 76.79% (CIF AR-10) and 81.41% (TRAF- FIC). Our Minimax adversarial appr oach pr esents a signif- icant shift in defense strate gy for neural network classifiers. 1. Introduction Machine learning classifying algorithms are suscepti- ble to misclassification of adversarially and imperceptably perturbed inputs that are called adversarial samples. The misclassification of adversarial samples has been shown to transfer not only among diverse neural network clas- sifiers [ 41 , 3 ], b ut also to many other types of classi- fiers [ 41 , 16 , 35 , 43 ], such as logistic regression, support vector machines, decision trees, nearest neighbors, and en- semble classifiers. One common defense against adversarial attacks on various types of classifiers is adversarial train- ing [ 18 , 41 , 16 , 42 ], which augments the training data with adversarial samples. The increasing deployment of machine learning classifiers in security and safety-critical domains such as traf fic signs [ 12 ], autonomous driving [ 1 ], health- care [ 13 ], and malware detection [ 10 ] mak es countering ad- versarial attacks important. The field of adversarial attacks and defenses is dom- inated by gradient-based approaches, since gradient de- scent [ 20 , 19 ] is used for optimizing neural networks. State- of-the-art adversarial attacks use the gradient of the loss function in white-box, gradient-based attacks. Such attack methods include CW [ 7 ], DeepFool [ 27 ], FGSM [ 16 ], the Jacobian-based Saliency Map (JSMA) [ 33 ], the Basic Iter- ativ e Method (BIM) [ 18 ], ZOO [ 8 ], the Projected Gradient Descent attack (PGD) [ 23 ]. Defensiv e approaches against these gradient-based attacks try to mask the gradients in different w ays. Defensi ve distillation [ 34 ] does this implic- itly , b ut not always successfully [ 4 ]. Other defense methods use saturated non-linearities [ 28 ] or non-differential classi- fiers [ 22 ] to mask gradients. Ho wever , masked and obfus- cated gradients have been successfully circumvented, either by approximation of the gradient [ 2 ], or by using the gradi- ents of another classifier [ 32 ] based on adversarial transfer - ability [ 35 ] across classifiers. The same gradient approach can also be used to circumvent defenses that use subnet- works to identify adversarial samples implicitly [ 24 ] or ex- plicitly [ 25 ]. Therefore, gradient-based approaches are not an effecti ve defense for against adversarial attacks. Minimax optimization by GANs has been sho wn [ 11 ] to reach limit points not reachable by gradient descent opti- mization. Whereas gradient descent [ 20 ] aims to minimize a classifier’ s loss function, a GAN is a minimax, tw o-player game between two agents, the generator and discrimina- tor [ 15 ]. By ending up in an unreachable optimization point, a classifier that is playing a minimax game in a GAN could be able to fool gradient-based, adversarial attacks. Many adversarial defenses aim to preserve the original manifold and probability distribution by projecting adver - sarial points onto the input manifold of images. In Defense- 1 CIF AR Dataset images CW L2 images Deep- Fool images Dataset images CW L2 images Deep- Fool images TRAFFIC auto. bird frog horse truck Projected Without adversarial training Projection of Samples on Manifold Reshaped by Minimax Defense Original Figure 1. W e sho w that original and adversarial images are projected onto a reshaped manifold, not the original one. Notice how our Minimax projections differ from the original dataset images: they are more blurry in the background and in details that do not matter for classification, for e xample the feathers on the bird or the window detail of the truck. Other details get highlighted. For e xample, the shapes of traffic signs and the signs and letters on them, the white color of the horse seems more prominent, the inside of the sign with 50 on it. For these results, our Minimax GAN has been trained only with the original training dataset, no adv ersarial training. GAN [ 37 ], after random initializations in the latent space of a GAN, the closest match for the adv ersary image is chosen. Both MagNet [ 24 ] and Ape-GAN [ 38 ] use autoencoders to move adversarial samples to wards the original manifold. PixelDefend by Song et al . [ 39 ] generates sev eral similar images, then choses those with highest probability within a distance from an image. Howe ver , the original manifold of data points can be the cause of adversarial attacks. Low probability regions of the distrib ution have been attributed for the susceptibility to adversarial attacks by Szegedy et al . [ 41 ], Song et al . [ 39 ] and T ram ` er et al . [ 43 ]. Further- more, adversarial attack samples have been found to be transferable to not only other deep learning classifiers of different architectures and parameters, but also to very di- verse types of classifiers [ 35 ], such as logistic regression, support vector machines, decision trees, nearest neighbors, and ensemble classifiers. Such div erse classifiers have only one thing in common, the dataset, which determines the data manifold and the probability distribution. When the dataset is indeed the cause of adversarial attacks, then ad- hering to and maintaining the original probability distribu- tion and manifold could be incorrect. Figure 1 and Figure 2 show that Minimax GAN generator does not project the im- ages onto the original manifold. In this paper , we present a no vel defense method against state-of-the-art gradient-based adversarial attacks with two combined approaches. Based on minimax optimization in GANs, the first, novel approach counters the assumption of gradient-based attacks that neural network classifiers per- form gradient descent optimization. The second approach reshapes the original manifold based on the transferability of adversarial attacks across classifiers, which points to the dataset and its manifold as the cause of adversarial attacks. The major contributions of this paper are: • A nov el Minimax defense against adversarial attacks that defends from state-of-the-art gradient-based at- tacks. • W e identify current attacks as being gradient-based, on which Minimax defense is based. • Against state-of-the-art attacks, we achieve accuracy comparable to that of non-adversarial samples. • T o the best of our kno wledge, this is the first GAN minimax approach against adversarial attacks. 2. Related work Minimax versus adversarial training. A study on the con ver gence properties of gradient-based methods in mini- max problems [ 11 ] has focused on their limit points, asking the question whether gradient descent methods con ver ge to local minimax solutions. Their conclusion is that they fail to do so. This has implications for adversarial attacks and defenses, because following in this section, we will show that all current adversarial attacks are gradient-based. Further , we discuss adversarial attacks and defenses and provide summaries. W e show that all attacks are gradient- based and that current defenses are ov ercome by the attacks. 2.1. Adversarial attacks Here, we detail state-of-the-art adversarial attacks. CW is formulated as a constrained optimization prob- lem [ 7 ]: minimize k x adv − x 0 k 2 2 + c · loss f ( x adv , l ) subject to x adv ∈ [0 , 1] n , (1) where f is the classification function, l is an adversary tar- get label. W ith a change of v ariable, CW obtains an uncon- strained minimization problem that allo ws it to do optimiza- tion through backpropagation. CW has three attacks that use the same optimization framework as in Equation 1 , but are based on different norms: L 0 , L 2 and L ∞ attacks. CW is a gradient-based attack because it uses back-propagation on the classifier neural network. The DeepFool attack [ 27 ] looks at the distance of a point from the classifier decision boundary as t he minimum amount of perturbation needed to change its classification. T o estimate this distance, DeepFool approximates the clas- sifier with a linear one, and then estimates the distance of the point from the linear boundary . After determining the minimum distance from all boundaries, DeepFool takes a step in the direction of the closest boundary . This is re- peated until DeepFool finds an adversarial sample. Deep- Fool uses the deriv ati ve of the affine approximation of the classifier , therefore Deepfool is a gradient-based method. The FGSM attack [ 16 ] uses the gradient of a classifier’ s loss function with respect to the input image. FGSM per- turbs each input dimension in the direction of the gradient by a magnitude of . For model θ , with loss J ( θ , x, y ) , x an input image and y its label, adversarial images are obtained: x adv = x + sig n ( ∇ x J ( θ , x, y )) . (2) FGSM is a gradient-based method, because it uses the deriv ative. FGSM is a one-step method, its iterati ve version is the Basic Iterativ e Method (BIM) [ 18 ]. The BIM attack [ 18 ] extends the FGSM attack [ 16 ] by applying iterativ ely with a smaller step α . After each step, pixels are clipped to k eep adversarial image within neigh- borhood of the image. As an extension of the FGSM attack, the BIM attack is also gradient-based. The JSMA attack [ 33 ] is a greedy algorithm using the classifier gradient to compute a salienc y map. which makes JSMA a gradient-based attack. The saliency map embodies the effect of pixels on classification. JSMA goes through the pixels in saliency-decreasing order and changes them. If misclassification is achiev ed the iterations are stopped. Universal Adversarial Perturbations (U AP) seeks to find a uni versal perturbation that can cause misclassification of most points in the dataset [ 26 ]. UAP iterates over the images, calculating for each the minimal perturbation that causes that image to move to the classifier boundary . U AP aggregates all these perturbations. Se veral iterations over the X data points are performed and the uni versal perturba- tion is centered at 0 and its norm constrained. UAP uses the same approach as DeepFool to calculate the minimum per- turbation for an image. Since DeepFool is a gradient-based attack, U AP also is gradient-based. Summary of adversarial attacks. All state-of-the-art adversarial attacks are gradient-based . They exploit the gradients of neural network classifiers to perform optimiza- tion that creates adversarial samples. This opens up the op- portunity for defending from all adversarial attacks by tar- geting the gradients that they relie on. CW and DeepFool are considered the strongest state-of-the-art attacks. 2.2. Adversarial defenses Follo wing are e xamples and details of se v eral techniques used for defending against adversarial attacks. Adversarial training. In adversarial training, the dataset is augmented with adversarial samples, often of the same kind as the attack, and the classifier is retrained [ 18 ]. Adversarial training augments the dataset by filling out lo w- probability gaps with additional data points and then re- training the classifier to find a better boundary . The benefit of adversarial training is that it is easy to use and improv es defense when attack is known. The drawback is that it only works against the attack that was used to generate the ad- versarial samples, not against others. Identification or projection of adversary samples on the original manifold. MagNet by Meng et al . [ 24 ] identi- fies adversarial samples and mov es them tow ards the orig- inal manifold using an autoencoder or a collection of au- toencoders. It contains sev eral detector networks that de- tect adversarial examples from the distance between the original image and the reconstructed image. The reformer network mov es adversarial samples towards the manifold. MagNet is the closest defence to our Minimax defense, but it cannot be used as a baseline for it because it has been shown [ 6 ] that a small custimization of the CW attack o ver - comes MagNet. Defense-GAN by Samangouei et al . [ 37 ] which uses a GAN with a generator to project adv ersarial points onto the manifold of natural images. Given an input point that is potentially adversarial, Defense-GAN does several random initializations in the latent space and chooses from them a latent space seed that generates the closest match to the in- put. The closest match is considered as the projection of the original input point, though due to the randomness and depending on the number of random initializations, this pro- jection might end up far from the real projection of the point on the manifold. Defense-GAN uses a classifier to which GAN input or GAN output or both GAN input and GAN output data points are used. PixelDefend by Song et al . [ 39 ] proposes generativ e models to mo ve adv ersarial images to wards the distrib ution seen in the data. PixelDefend identifies adv ersarial samples with statistical methods ( p -v alue) and finds more probable samples by generating similar images with an optimization that uses gradient descent, looking for highest probability images within a distance. APE-GAN [ 38 ] trains a pre-processing network to project normal as well as adversarial data points onto the original manifold using a GAN. Gradient masking and obfuscation. Defensi ve Distil- lation (DD) [ 34 ] is based on distillation - a kno wledge trans- fer method. DD aims to provide resilience by reducing the amplitude of gradients of the loss function which are used by gradient-based attacks. DD trains a teacher network and calculates the output based on the output of the layer be- fore the softmax layer divided by a temperature parameter T . Then, DD generates soft labels for the training dataset by running the dataset through the teacher network. The soft labels are used for training the distilled netw ork and reduce ov erfitting of the original dataset. Summary . Unlike adversarial training, both other de- fenses hav e been ov ercome by gradient-based attacks. The approach of identification or projection of adversar - ial samples onto the original manifold has been successfully attacked. Identification [ 5 ] and projection on original man- ifold [ 6 ] hav e been sho wn to be vulnerable to CW . The rea- son is that these defenses use neural networks to identify and to project points, and neural networks use gradient de- scent, which is exploited by gradient-based attacks. Gradient masking and obfuscation has also been ov er- come by the CW attack [ 4 ]. CW attacks the DD by changing the inputs to the final layer to a v oid v anishing gradients [ 4 ]. More generally , masked and or obfuscated gradients can be ov ercome due to transferability . An attack can create an- other classifier, train it on hard labels if available, or soft if not, and use the gradients of the new classifier for attack. Due to the transferability of adversarial attacks, adversar - ial attacks from the new classifier will likely transfer to the original defense. 3. Our approach: Minimax adversarial de- fense Our Minimax adversarial defense is a generic defense against adv ersarial attacks that does not identify adversarial points explicitly and does not necessitate adversarial train- ing but can benefit from it. Figure 2 shows image projec- tions using the GAN generator . Minimax defense counters state-of-the-art, gradient- based adversarial attacks by doing minimax optimization with a GAN discriminator . Our Minimax classifier is a GAN discriminator where the Real and F ak e labels hav e been specialized to incorporate the classification labels. The presence of the dataset labels in the discriminator reshapes the original manifold to a different manifold. As a result, the generator projects images onto the reshaped manifold. What causes adversarial attacks? It is commonly ac- cepted that the data points of natural image datasets oc- cupy lo w-dimensional manifolds in high-dimensional input space [ 14 ]. Manifolds are defined as collections of points in the input space that are connected. Many adversarial de- fense methods aim to preserve the original manifold and to project adversarial data points onto it. For example, Defense-GAN [ 37 ] finds close matches of adversary im- ages on the manifold and chooses the closest. MagNet [ 24 ] and Ape-GAN [ 38 ] mov e points onto the original manifold with autoencoder and GAN autoencoder generator respec- tiv ely . Pix elDefend by Song et al . [ 39 ] chooses a substi- tute for an adversarial image by generating sev eral sim- ilar images, then choosing those with highest probability within a distance from the adversary image. W e argue that the adversarial transferability across very different classi- fiers [ 35 ] means that the dataset and its manifold shape are the cause, specifically low probability regions of the distri- bution [ 41 , 39 , 43 ]. Furthermore, adversarial attack sam- ples hav e been found to be transferable to not only other deep learning classifiers of different architectures and pa- rameters, but also to very di verse types of classifiers [ 35 ], such as logistic regression, support vector machines, de- cision trees, nearest neighbors, and ensemble classifiers. Such di verse classifiers have only one thing in common, the dataset, which determines the data manifold and the proba- bility distrib ution. Therefore, to counter adversarial attacks, MNIST Dataset images CW L2 images Dataset images CW L2 images TRAFFIC Projected Dataset images FGSM images Dataset images FGSM images Projected With adversarial training Projection of Samples on Manifold Reshaped by Minimax Defense Original Original Figure 2. W e show that MNIST and TRAFFIC images are projected on a reshaped manifold by our Minimax defense. For comparison, original images and their projections are included as well. CW and FGSM attacks are used. Notice how our Minimax projections differ from the original dataset images whether they are original images or adv ersary . It can be seen in the TRAFFIC images that projected images are more blurry in the background. It appears that the numbers in MNIST images and the sign details get emphasized. In the MNIST images, we can also see that the shape of the projected digits is not exactly the same as the original image. For example, digit 3 loses the little turn at the top left. Therefore, the manifold is not the same as the original one. the manifold needs to be reshaped and not preserved. Figure 3 depicts our view that low probability regions cause adversarial attacks [ 41 , 39 , 43 ]. The boundaries of different types of classifiers overlap each-other , as sup- ported by adversarial transferability . As all classifiers aim to keep same distances from different dataset classes, gaps in the manifold cause the boundaries to be shifted. Shifted boundaries create areas in which images will misclassify due to the shift. GAN choice T o reshape manifolds, autoencoders are fre- quently used, either on their own [ 24 ] as in MagNet [ 24 ], or as part of a GAN with an autoencoder generator [ 44 ]. W e choose to use a GAN in our approach because GANs hav e been shown to be very good at reconstructing mani- folds of natural image datasets in original high-dimensional input spaces [ 15 ]. In distinction to other methods that also use GANs against adversarial attacks, our Minimax genera- tor is an autoencoder because it enables direct projection of images onto the reshaped manifold. The inclusion of class labels into Real and F ake labels of our Minimax discrimi- nator allo ws our defense to reshape the manifold e v en in the absence of adversarial samples in training. Multi-label GAN discriminator in Minimax adver- sarial defense. In Minimax defense, we combine and ex- tend these GAN variations [ 30 , 36 ]. W e combine them by having the GAN discriminator act as a classifier and e xtend them by having K labels for each Real and F ak e category of labels. As a result, a Minimax discriminator has twice the number of labels in the dataset, in all 2 × K labels. How our approach differs fr om similar defenses. The defenses that are most similar to Minimax defense, Mag- Net [ 24 ] and Defense-GAN [ 37 ], are essentially differ - ent from Minimax in that they do not deply the minimax defense. MagNet [ 24 ] and Defense-GAN [ 37 ] reposition their sample points with autoencoders/GANs and then clas- sify the repositioned points on a separate classifier . Our Minimax defense uses the GAN discriminator as classifier , does not perform gradient descent. Instead, the descrimina- tor/classifier is inv olv ed in a minimax game with the GAN generator , which leads it to different optimization solutions than gradient descent. This is the crucial difference from MagNet [ 24 ] and Defense-GAN [ 37 ]. Labels. The original GAN definition [ 15 ] specified two discriminator labels: R eal , F ak e . Our Minimax defense further breaks down these labels into the classes of the clas- sification problem. If the original classification problem has K classes, our Minimax defense discriminator has 2 × K classes. For example, for MNIST , the discriminator labels are: Real − 0 , F ak e − 0 , Real − 1 , F AK E − 1 , . . . , RE AL − 9 and F AK E − 9 ; 20 labels in total. Perfect boundary V arious classifier boundaries Adversarial samples Gapinthemanifold Digitsthree Digitsseven Adversarial perturbation Figure 3. W e illustrate our understanding of how manifold gaps cause adversarial attacks for a simple case of classification of dig- its 3 and 7 . There is a small gap in the lower manifold that lacks data points from the square class. Classifiers aim to maintain same distance from points of dif ferent classes. This causes them to pass roughly in the same region of space. Due to the manifold gap, all classifiers shift towards the gap to maintain same distance from points of different classes. As a result, in the vicinity of the gap, all classifiers are surrounded by space the points of which would belong to one of the classes. They all misclassify the data points shown as adversarial samples, which are between the classifiers and the perfect boundary . Loss function. The Minimax GAN loss function is: L = 9 X i =0 E x ∼ p data ( x ) | y i [ log D ( x )]+ + 19 X i =10 E x ∼ p data ( x ) | y i [1 − log D ( G ( x ))] . (3) The term l og D ( G ( x )) reflects the usage of an autoen- coder as the generator , where the input is dataset images. Minimax defense ar chitectur e. Our no vel Minimax de- fense against adversarial attacks is a GAN. The generator of this GAN is an autoencoder that takes images as input and outputs images. In addition to the Real and F ak e labels of a GAN discriminator , we hav e added the class labels to the discriminator . As a result, the number of labels in the dis- criminator is twice the number of labels in the dataset. The architecture of our Minimax GAN is shown in Figure 4 . Figure 4 also sho ws ho w an image in a digit binary clas- sification problem ( 3 and 7 for simplicity) gets classified by Minimax defense. First, the image goes through the gener- ator and then through the discriminator . The probability of MNIST CIF AR TRAFFIC Con v .R 3x3x32 Con v .E 3x3x32 Con v .R 3x3x32 BatchNorm BatchNorm BatchNorm Con v .R 3x3x64 Con v .E 3x3x32 Con v .R 3x3x32 BatchNorm BatchNorm BatchNorm MaxPool 2x2 MaxPool 2x2 MaxPool 2x2 Dropout 0.25 Dropout 0.2 Dropout 0.2 Dense 128 Con v .E 3x3x64 Con v .R 3x3x64 Dropout 0.5 BatchNorm BatchNorm Dense.Soft 20 Con v .E 3x3x64 Con v .R 3x3x64 BatchNorm BatchNorm MaxPool 2x2 MaxPool 2x2 Dropout 0.3 Dropout 0.2 Con v .E 3x3x128 Con v .R 3x3x128 BatchNorm BatchNorm Con v .E 3x3x64 Con v .R 3x3x128 BatchNorm BatchNorm MaxPool 2x2 MaxPool 2x2 Dropout 0.4 Dropout 0.2 Dense.Soft 20 Dense 512 BatchNorm Dropout 0.5 Dense.Soft 20 T able 1. Discriminator architecture for MNIST , CIF AR and TRAFFIC datasets. the input image being, for e xample, digit 3 is the sum of the probabilities of labels Real − 3 and F ak e − 3 . GAN Discriminators The discriminators for all three datasets have con volutional layers, batch-normalization lay- ers, max-pooling, drop-out layers. They all use regulariza- tion and SGD optimization. The architecture of all three discriminators is shown in T able 1 . GAN Generators. The generators for all three datasets hav e con volutional layers, batch-normalization layers, max- pooling, drop-out layers. The final layer is a conv olutional layer with sigmoid activ ation. They all use regularization and Adadelta optimization. The architecture of all three generators is shown in T able 2 . 4. Experiments and Results T o ev aluate our Minimax defense method, it is impor- tant to sho w that the accuracy of adversarial samples with Minimax is comparable to the accuracy of non-adversarial samples with a def ault classifier . W e present the results first followed by the details for obtaining them. Results of Minimax defense evaluation. Our results in T able 3 show that our Minimax adversarial defense main- tains very high accuracy for the MNIST dataset, with values very close to the accuracy of the default classifier trained and tested on the original MNIST dataset - 98.93%. Evaluating our Minimax adversarial defense on CIF AR- InputImages inOriginal Manifold Imagesin Reshaped Manifold Discriminator Autoencoder /Generator Encoder Decoder T rue3 False3 T rue7 False7 3 7 Probabilities Figure 4. Here, we show an overvie w of the transformations that images go through in Minimax defense. First, it gets projected on the reshaped manifold by going through the generator . Second, the projected image is classified by the discriminator , where the probability for each label is calculated as the sum of the true and false probabilities for that label. MNIST CIF AR TRAFFIC Con v .R 3x3x32 Con v .R 3x3x64 Con v .R 3x3x32 BatchNorm BatchNorm BatchNorm MaxPool 2x2 Con v .R 3x3x64 MaxPool 2x2 Con v .R 3x3x32 BatchNorm Con v 3x3x32 BatchNorm MaxPool 2x2 BatchNorm MaxPool 2x2 Con v .R 3x3x128 MaxPool 2x2 Con v .R 3x3x32 BatchNorm Dropout 0.2 BatchNorm Con v .R 3x3x128 Conv .R 3x3x32 Upsampling 2x2 BatchNorm BatchNorm Con v .R 3x3x32 MaxPool 2x2 Upsampling 2x2 BatchNorm Upsampling 2x2 Con v 3x3x32 Upsampling 2x2 Con v .R 3x3x128 BatchNorm Con v .Sig 3x3x1 BatchNorm Upsampling 2x2 Upsampling 2x2 Dropout 0.2 Con v .R 3x3x64 Con v .Sig 3x3x3 BatchNorm Con v .Sig 3x3x3 T able 2. Generator architecture for MNIST , CIF AR and TRAFFIC datasets. MNIST Default Accuracy 98.93% Attack Parameter No Minimax Defense Defense FGSM = 0 . 1 81.18% 97.01% CW L2 conf = 40 0.79% 97.50% CW L2 conf = 0 0.84% 98.07% DeepFool 1.12% 98.87% T able 3. Comparison of MNIST dataset classification accuracy for no defense and for Minimax defense. MNIST default accuracy is at the top of the table. 10, our results in T able 4 show that the Minimax accuracy for the CIF AR-10 dataset remains v ery close to the accuracy of the default classifier trained and tested on the original CIF AR-10 dataset - 83.14%. W e e valuate our Minimax adv ersarial defense on TRAF- FIC and find, based on in T able 5 , that the accuracy of CIF AR Default Accurac y 83.14% Attack Parameter No Minimax Defense Defense FGSM = 0 . 1 10.28% 76.79% CW L2 conf = 40 8.82% 69.73% CW L2 conf = 0 8.73% 73.90% DeepFool 8.99% 76.61% T able 4. W e compare CIF AR-10 dataset classification accuracy for no defense and for Minimax defense. Adversarial training was used for the results in italics. our Minimax adversarial defense for the TRAFFIC dataset remains very close to the accuracy of the default classi- fier trained and tested on the original TRAFFIC dataset - 96.97%. TRAFFIC-32x32 Default Accuracy 96.97% Attack Parameter No Minimax Defense Defense FGSM = 0 . 1 28.74% 81.41% CW L2 conf = 40 1.56% 94.54% CW L2 conf = 0 1.41% 93.66% DeepFool 1.43% 94.57% T able 5. W e compare classification accuracy for the TRAFFIC dataset for no defense and for Minimax defense. Adversarial training was used for the results in italics. Comparison with MagNet. The MagNet method has been sho wn [ 6 ] to not withstand customized CW attacks. Nev ertheless, we compare the two approaches to provide context for our results since the y both use autoencoders. MagNet [ 24 ] is tested against very small perturbations for FGSM attack on MNIST dataset - up to = 0 . 01 , whereas we show Minimax withstands attacks of very high perturbations, up to = 0 . 3 , shown here in T able 6 . Attacks. W e generate adversarial samples with Clev- erHans 3.0.1 [ 31 ] for the FGSM, CW L2 and DeepFool attacks and the IBM Adversarial Robustness 360 T oolbox (AR T) toolbox [ 29 ] for the JSMA attack. W e ev aluate Comparison to MagNet for MNIST Attack Minimax / No MagNet / No defense / attack Defense / attack FGSM = 0 . 3 86.72% / 98.93% *0.13% / 99.4% FGSM = 0 . 2 98.52% / 98.93% N A FGSM = 0 . 1 98.52% / 98.93% N A FGSM = 0 . 01 98.52% / 98.93% 100.00% / 99.4% CW L2 97.50% / 98.93% 99.50% / 99.4% conf = 40 CW L2 98.07% / 98.93% 99.50% / 99.4% conf = 0 DeepFool 98.87% / 98.93% 99.40% / 99.4% T able 6. Comparison of Minimax defense results to MagNet re- sults. The Magnet results are obtained from the Magnet paper [ 24 ] with the exception of entries marked with *, which were a v eraged from comparison results from the Defense-GAN [ 37 ] paper . against three types of white-box attacks: CW [ 7 ], Deep- Fool [ 27 ] and FGSM [ 16 ]. W e choose CW [ 7 ] and Deep- Fool [ 27 ] because they are state-of-the-art, and FGSM [ 16 ] because it is one of the first identified attacks. Minimax is written in Python 3.5.2, using Keras 2.2.4 [ 9 ]. Baselines. T o calculate a baseline in the absence of ad- versarial attack, we trained classifiers with identical models as our Minimax discriminators, except for the number of output labels in the last softmax layer . The baselines in the absence of adversarial attack are: for MNIST 98.93%, for CIF AR-10 83.14%, for TRAFFIC 96.97%. Datasets W e ev aluate our Minimax adversarial defense on three datasets - MNIST [ 21 ], CIF AR-10 [ 17 ] and TRAF- FIC [ 40 ]. The MNIST dataset [ 21 ] is a dataset of hand- written digits with ten classes of size 28 × 28 × 1 . MNIST has 60K training samples and 10K testing samples. The CIF AR-10 dataset [ 17 ] is a 10-class dataset of objects, with images of size 32 × 32 × 3 . The sizes of the CIF AR-10 training and testing datasets are 50K and 10K. The TRAF- FIC dataset [ 40 ] is a dataset of 43 classes of images of traffic signs in dif ferent sizes, from 15 × 15 × 3 to 250 × 250 × 3 pixels. W e rescale the images to 32 × 32 × 3 the same size as CIF AR-10 images. The TRAFFIC training and testing datasets hav e respecti vely 39209 and 12630 samples. Discriminator and generator architectures. The model architecture of all discriminators is shown in T able 1 , the model architecture of all autoencoders is shown in T a- ble 2 . Optimizers. For classification of the MNIST dataset, we use an Adadelta optimizer for the discriminator, clas- sifier and generator; and SGD for the adversarial model. For CIF AR-10, we use RMSprop for the discriminator and classifier; Adadelta for the generator; and SGD for the ad- versarial model. For the TRAFFIC dataset, we use SGD for the discriminator and classifer; Adadelta for the generator; SGD for the adversarial model. T raining. W e use L 2 kernel regularization in the con vo- lutional layers of the discriminators, classifiers, and gener- ators for the classification of all three datasets. W e do not perform image augmentation in the training of any of the datasets. The batches are chosen randomly . The training is done in random batches of 32 images for MNIST and TRAFFIC, and 128 images for CIF AR-10. The number of epochs is 10 for MNIST , TRAFFIC, and 64 for CIF AR- 10. W e conduct experiments with and without adversarial training. For adversarial training, we enhance the training dataset with adversarial samples of the same type as the the attack. During training, the training adv ersarial samples are refreshed after 60 batches. 5. Discussion and Conclusions In this paper , we have shown that all state-of-the-art attacks were gradient-based and that our Minimax de- fense countered state-of-the-art adversarial attacks against neural-network classifiers. This was done without mask- ing gradients which has been shown to be an ineffec- tiv e defense. Since all state-of-the-art gradient attacks are gradient-based, minimax defends against all of them. Our nov el Minimax defense has combined a minimax ap- proach against gradient-based attacks with an approach that reshapes the manifold for better classification. Our results showed that Minimax defense countered gradient- based attacks for three di verse datasets. F or CW at- tacks, Minimax defense achiev ed 98.07% (MNIST -default 98.93%), 73.90% (CIF AR-10-default 83.14%) and 94.54% (TRAFFIC-default 96.97%). Against DeepFool attacks, our minimax defense achiev es 98.87% (MNIST), 76.61% (CIF AR-10) and 94.57% (TRAFFIC). These results show that Minimax maintains accuracy for adv ersarial samples. W e have also demonstrated using the TRAFFIC dataset in adversarial attacks and our Minimax defense. The TRAFFIC dataset could be a replacement for CIF AR-10 in adversarial methods. Though CIF AR-10 is commonly used for adversarial attacks and defenses, it needs many epochs to con ver ge. In conclusion, we have identified gradient descent as an underlying crucial aspect of current attacks, which has lead to our Minimax defense that does not mask its gradients b ut is still able to counter state-of-the-art attacks. References [1] Dario Amodei, Chris Olah, Jacob Steinhardt, Paul F . Chris- tiano, John Schulman, and Dan Man ´ e. Concrete problems in AI safety . CoRR , abs/1606.06565, 2016. 1 [2] Anish Athalye, Nicholas Carlini, and David W agner . Ob- fuscated gradients gi ve a false sense of security: Circum- venting defenses to adversarial examples. arXiv preprint arXiv:1802.00420 , 2018. 1 [3] Battista Biggio, Igino Corona, Davide Maiorca, Blaine Nel- son, Nedim ˇ Srndi ´ c, Pa vel Lasko v , Giorgio Giacinto, and Fabio Roli. Evasion attacks against machine learning at test time. In Joint Eur opean confer ence on machine learning and knowledge discovery in databases , pages 387–402. Springer , 2013. 1 [4] Nicholas Carlini and David W agner . Defensiv e distilla- tion is not robust to adversarial examples. arXiv preprint arXiv:1607.04311 , 2016. 1 , 4 [5] Nicholas Carlini and David W agner . Adversarial examples are not easily detected: Bypassing ten detection methods. In Pr oceedings of the 10th ACM W orkshop on Artificial Intelli- gence and Security , pages 3–14. A CM, 2017. 4 [6] Nicholas Carlini and David W agner . Magnet and” efficient defenses against adversarial attacks” are not robust to adver - sarial examples. arXiv pr eprint arXiv:1711.08478 , 2017. 4 , 7 [7] Nicholas Carlini and David W agner . T owards ev aluating the robustness of neural networks. In 2017 IEEE Symposium on Security and Privacy (SP) , pages 39–57. IEEE, 2017. 1 , 3 , 7 [8] Pin-Y u Chen, Huan Zhang, Y ash Sharma, Jinfeng Y i, and Cho-Jui Hsieh. Zoo: Zeroth order optimization based black- box attacks to deep neural networks without training sub- stitute models. In Proceedings of the 10th ACM W orkshop on Artificial Intelligence and Security , pages 15–26. A CM, 2017. 1 [9] Franc ¸ ois Chollet et al. Keras. https://keras.io , 2015. 7 [10] Zhihua Cui, Fei Xue, Xingjuan Cai, Y ang Cao, Gai-ge W ang, and Jinjun Chen. Detection of malicious code variants based on deep learning. IEEE T ransactions on Industrial Informat- ics , 14(7):3187–3196, 2018. 1 [11] Constantinos Daskalakis and Ioannis Panageas. The limit points of (optimistic) gradient descent in min-max optimiza- tion. In Advances in Neur al Information Pr ocessing Systems , pages 9236–9246, 2018. 1 , 3 [12] Ke vin Eykholt, Ivan Evtimov , Earlence Fernandes, Bo Li, Amir Rahmati, Chaowei Xiao, Atul Prakash, T adayoshi K ohno, and Dawn Song. Robust physical-world attacks on deep learning visual classification. In Pr oceedings of the IEEE Confer ence on Computer V ision and P attern Recog- nition , pages 1625–1634, 2018. 1 [13] Oliver Faust, Y uki Hagiwara, T an Jen Hong, Oh Shu Lih, and U Rajendra Acharya. Deep learning for healthcare applica- tions based on physiological signals: a revie w . Computer methods and pr ogr ams in biomedicine , 2018. 1 [14] Ian Goodfello w , Y oshua Bengio, and Aaron Courville. Deep Learning . MIT Press, 2016. http://www. deeplearningbook.org . 4 [15] Ian Goodfellow , Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David W arde-Farle y , Sherjil Ozair , Aaron Courville, and Y oshua Bengio. Generative adversarial nets. In Advances in neur al information pr ocessing systems , pages 2672–2680, 2014. 1 , 5 [16] Ian J Goodfellow , Jonathon Shlens, and Christian Szegedy . Explaining and harnessing adversarial examples (2014). arXiv pr eprint arXiv:1412.6572 , 2014. 1 , 3 , 7 [17] Alex Krizhevsky , V inod Nair , and Geoffre y Hinton. Cifar- 10 and cifar-100 datasets. URl: https://www . cs. tor onto. edu/kriz/cifar . html , 6, 2009. 1 , 8 [18] Alexey Kurakin, Ian Goodfellow , and Samy Bengio. Ad- versarial machine learning at scale. arXiv preprint arXiv:1611.01236 , 2016. 1 , 3 [19] Y ann LeCun, Bernhard E Boser , John S Denker , Donnie Henderson, Richard E Howard, W ayne E Hubbard, and Lawrence D Jackel. Handwritten digit recognition with a back-propagation network. In Advances in neural informa- tion pr ocessing systems , pages 396–404, 1990. 1 [20] Y ann LeCun, L ´ eon Bottou, Y oshua Bengio, Patrick Haffner , et al. Gradient-based learning applied to document recog- nition. Pr oceedings of the IEEE , 86(11):2278–2324, 1998. 1 [21] Y ann LeCun, Corinna Cortes, and Christopher JC Burges. The mnist database of handwritten digits, 1998. URL http://yann. lecun. com/exdb/mnist , 10:34, 1998. 1 , 8 [22] Jiajun Lu, Theerasit Issaranon, and David Forsyth. Safe- tynet: Detecting and rejecting adversarial examples robustly . In Pr oceedings of the IEEE International Confer ence on Computer V ision , pages 446–454, 2017. 1 [23] Aleksander Madry , Aleksandar Makelov , Ludwig Schmidt, Dimitris Tsipras, and Adrian Vladu. T o wards deep learn- ing models resistant to adversarial attacks. arXiv pr eprint arXiv:1706.06083 , 2017. 1 [24] Dongyu Meng and Hao Chen. Magnet: a two-pronged de- fense against adversarial examples. In Proceedings of the 2017 ACM SIGSAC Conference on Computer and Commu- nications Security , pages 135–147. ACM, 2017. 1 , 2 , 3 , 4 , 5 , 7 , 8 [25] Jan Hendrik Metzen, Tim Genewein, V olker Fischer, and Bastian Bischoff. On detecting adversarial perturbations. arXiv pr eprint arXiv:1702.04267 , 2017. 1 [26] Seyed-Mohsen Moosavi-Dezfooli, Alhussein Fawzi, Omar Fa wzi, and Pascal Frossard. Univ ersal adversarial perturba- tions. In Pr oceedings of the IEEE confer ence on computer vision and pattern r ecognition , pages 1765–1773, 2017. 3 [27] Seyed-Mohsen Moosavi-Dezfooli, Alhussein Fa wzi, and Pascal Frossard. Deepfool: a simple and accurate method to fool deep neural networks. In Proceedings of the IEEE con- fer ence on computer vision and pattern r ecognition , pages 2574–2582, 2016. 1 , 3 , 7 [28] Aran Nayebi and Surya Ganguli. Biologically inspired pro- tection of deep networks from adversarial attacks. arXiv pr eprint arXiv:1703.09202 , 2017. 1 [29] Maria-Irina Nicolae, Mathieu Sinn, Minh Ngoc Tran, Beat Buesser , Ambrish Raw at, Martin W istuba, V alentina Zant- edeschi, Nathalie Baracaldo, Bryant Chen, Heiko Ludwig, Ian Molloy , and Ben Edwards. Adversarial robustness tool- box v1.0.1. CoRR , 1807.01069, 2018. 7 [30] Augustus Odena, Christopher Olah, and Jonathon Shlens. Conditional image synthesis with auxiliary classifier gans. arXiv pr eprint arXiv:1610.09585 , 2016. 5 [31] Nicolas Papernot, Fartash Faghri, Nicholas Carlini, Ian Goodfellow , Reuben Feinman, Alexe y K urakin, Cihang Xie, Y ash Sharma, T om Bro wn, Aurko Roy , Ale xander Matyasko, V ahid Behzadan, Karen Hambardzumyan, Zhishuai Zhang, Y i-Lin Juang, Zhi Li, Ryan Sheatsley , Abhibhav Garg, Jonathan Uesato, W illi Gierke, Y inpeng Dong, Da vid Berth- elot, Paul Hendricks, Jonas Rauber, and Rujun Long. T ech- nical report on the clev erhans v2.1.0 adversarial examples library . arXiv pr eprint arXiv:1610.00768 , 2018. 7 [32] Nicolas P apernot, Patrick McDaniel, Ian Goodfellow , Somesh Jha, Z Berkay Celik, and Ananthram Swami. Practi- cal black-box attacks against machine learning. In Pr oceed- ings of the 2017 ACM on Asia conference on computer and communications security , pages 506–519. A CM, 2017. 1 [33] Nicolas Papernot, Patrick McDaniel, Somesh Jha, Matt Fredrikson, Z Berkay Celik, and Ananthram Swami. The limitations of deep learning in adversarial settings. In 2016 IEEE European Symposium on Security and Privacy (Eu- r oS&P) , pages 372–387. IEEE, 2016. 1 , 3 [34] Nicolas Papernot, Patrick McDaniel, Xi W u, Somesh Jha, and Ananthram Swami. Distillation as a defense to adver- sarial perturbations against deep neural networks. In 2016 IEEE Symposium on Security and Privacy (SP) , pages 582– 597. IEEE, 2016. 1 , 4 [35] Nicolas Papernot, Patrick D. McDaniel, and Ian J. Good- fellow . Transferability in machine learning: from phenom- ena to black-box attacks using adversarial samples. CoRR , abs/1605.07277, 2016. 1 , 2 , 4 [36] Tim Salimans, Ian Goodfellow , W ojciech Zaremba, V icki Cheung, Alec Radford, and Xi Chen. Improv ed techniques for training gans. In Advances in Neural Information Pr o- cessing Systems , pages 2234–2242, 2016. 5 [37] Pouya Samangouei, Maya Kabkab, and Rama Chellappa. Defense-gan: Protecting classifiers against adversarial at- tacks using generative models. CoRR , abs/1805.06605, 2018. 1 , 4 , 5 , 8 [38] Shiwei Shen, Guoqing Jin, Ke Gao, and Y ongdong Zhang. Ape-gan: Adversarial perturbation elimination with gan. arXiv pr eprint arXiv:1707.05474 , 2017. 2 , 4 [39] Y ang Song, T aesup Kim, Sebastian Nowozin, Stefano Er- mon, and Nate Kushman. Pixeldefend: Lev eraging genera- tiv e models to understand and defend against adv ersarial ex- amples. CoRR , abs/1710.10766, 2017. 2 , 4 , 5 [40] J. Stallkamp, M. Schlipsing, J. Salmen, and C. Igel . Man vs. computer: Benchmarking machine learning algorithms for traffic sign recognition. Neural Networks , 32(0):–, 2012. 1 , 8 [41] Christian Szegedy , W ojciech Zaremba, Ilya Sutskev er , Joan Bruna, Dumitru Erhan, Ian J. Goodfello w , and Rob Fer- gus. Intriguing properties of neural networks. CoRR , abs/1312.6199, 2013. 1 , 2 , 4 , 5 [42] Florian Tram ` er , Alexe y Kurakin, Nicolas Papernot, Ian Goodfellow , Dan Boneh, and Patrick McDaniel. Ensemble adversarial training: Attacks and defenses. arXiv pr eprint arXiv:1705.07204 , 2017. 1 [43] Florian Tram ` er , Nicolas Papernot, Ian Goodfellow , Dan Boneh, and Patrick McDaniel. The space of transferable ad- versarial examples. arXiv pr eprint arXiv:1704.03453 , 2017. 1 , 2 , 4 , 5 [44] Jun-Y an Zhu, Philipp Kr ¨ ahenb ¨ uhl, Eli Shechtman, and Alex ei A Efros. Generativ e visual manipulation on the nat- ural image manifold. In European Confer ence on Computer V ision , pages 597–613. Springer , 2016. 5

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment