Motor Imagery Classification of Single-Arm Tasks Using Convolutional Neural Network based on Feature Refining

Brain-computer interface (BCI) decodes brain signals to understand user intention and status. Because of its simple and safe data acquisition process, electroencephalogram (EEG) is commonly used in non-invasive BCI. One of EEG paradigms, motor imager…

Authors: Byeong-Hoo Lee, Ji-Hoon Jeong, Kyung-Hwan Shim, Dong-Joo Kim

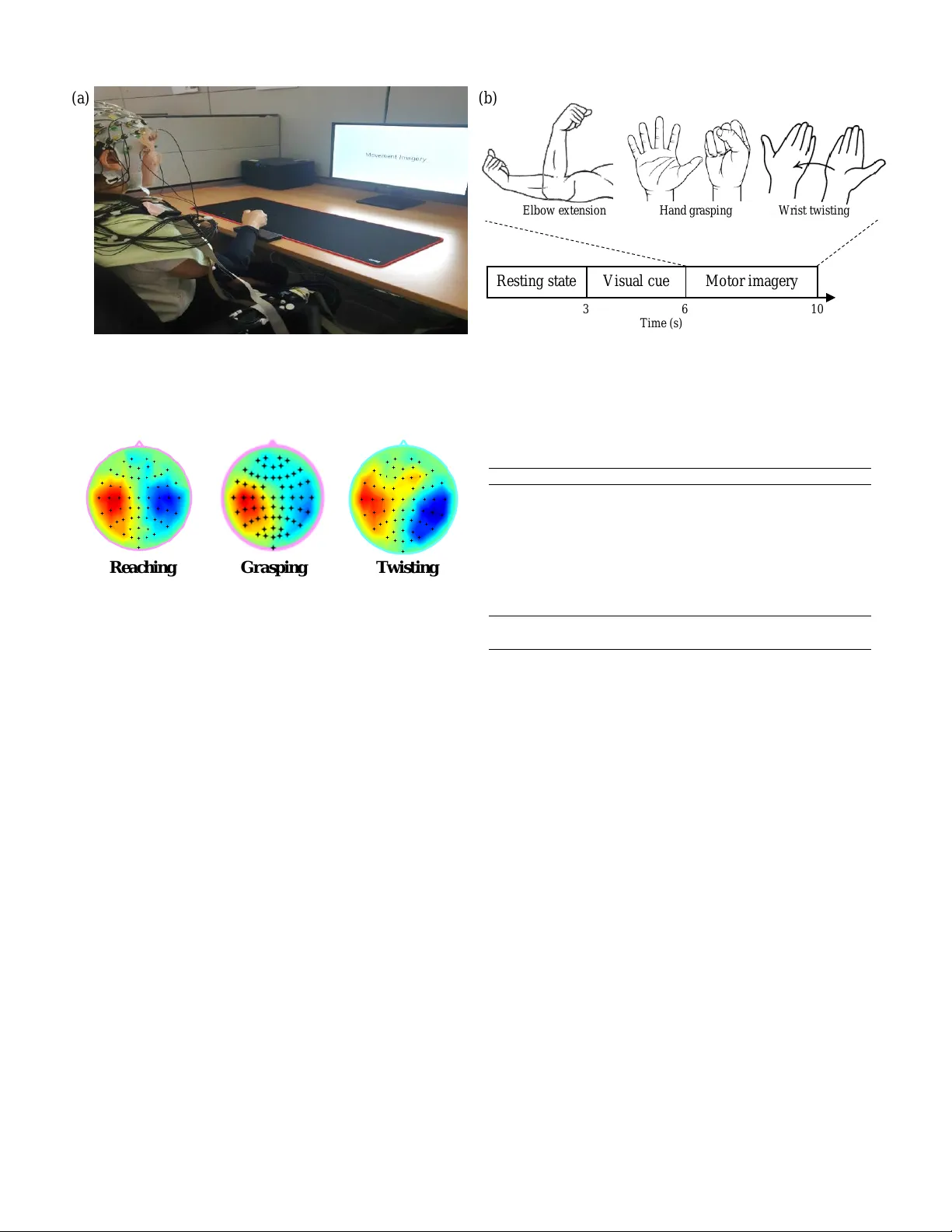

Byeong-Hoo Lee, Ji-Hoon Jeong, Kyung-Hwan Shim, Dong-Joo Kim
Department of Brain and Cognitive Engineering, Korea University, Seoul, Republic of Korea
bh_lee@korea.ac.kr, jh_jeong@korea.ac.kr, kh_shim@korea.ac.kr, dongjookim@korea.ac.kr

Abstract: Brain-computer interface (BCI) decodes brain signals to understand user intention and status. Because of its simple and safe data acquisition process, electroencephalogram (EEG) is commonly used in non-invasive BCI. One of the EEG paradigms, motor imagery (MI), is commonly used for recovery or rehabilitation of motor functions due to its signal origin. However, EEG signals are oscillatory and non-stationary, which makes it difficult to collect and classify MI accurately. In this study, we propose a band-power feature refining convolutional neural network (BFR-CNN), which is composed of two convolution blocks, to achieve high classification accuracy. We collected EEG signals to create an MI dataset containing imagined movements of a single arm. The proposed model outperforms conventional approaches in 4-class MI task classification. Hence, we demonstrate that decoding user intention is possible using only EEG signals, with robust performance, using BFR-CNN.

Keywords: brain-computer interface; electroencephalogram; motor imagery; convolutional neural network

I. INTRODUCTION

Brain-computer interface (BCI) decodes brain signals to understand user intention and status, which can be used for external device control. Since brain signals contain diverse information about user status, many studies have attempted to understand brain signals through BCI [1]–[5]. Invasive BCI places electrodes directly on the brain to acquire high-quality brain signals such as the electrocorticogram (ECoG) [6].
However, there are many safety issues associated with invasive BCI because it involves surgery to implant electrodes. Non-invasive BCI, on the other hand, uses the electroencephalogram (EEG) because it is easy to acquire without brain surgery. EEG-based BCI has several paradigms for signal acquisition, such as motor imagery (MI) [7]–[9], movement-related cortical potential (MRCP) [4], and event-related potential (ERP) [10]–[12]. As applications of EEG-based BCI, spellers [13], wheelchairs [14], and drones [15] have commonly been used for communication between users and devices.

Funding: Research was partly supported by Institute of Information & Communications Technology Planning & Evaluation (IITP) grant funded by the Korea government (No. 2017-0-00432, Development of Non-Invasive Integrated BCI SW Platform to Control Home Appliances and External Devices by User's Thought via AR/VR Interface) and partly funded by Institute of Information & Communications Technology Planning & Evaluation (IITP) grant funded by the Korea government (No. 2017-0-00451, Development of BCI based Brain and Cognitive Computing Technology for Recognizing User's Intentions using Deep Learning).

Among these paradigms, MI is related to specific potentials from the supplementary motor area and pre-motor cortex [16]. When the user imagines specific movements, event-related desynchronization/synchronization (ERD/ERS) patterns are generated in the supplementary motor area and pre-motor cortex [17]. The MI paradigm captures these patterns to detect user intention. Because of this origin, MI is commonly used for recovery or rehabilitation of the user's motor functions using external devices [18]. Additionally, MI-based BCI can provide extra motor functions through a robotic arm [2]. EEG is an oscillatory and non-stationary signal, so decoding EEG signals is challenging work [19], [20]. As with denoising techniques in computer vision [21], [22], EEG signals should be denoised with filters before further processing.
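For reference, the ERD/ERS patterns mentioned above are conventionally quantified as a relative change in band power. The expression below is the commonly used definition from the ERD/ERS literature, not a formula stated in this paper; the symbols $A$ (band power during the imagery interval) and $R$ (band power during a preceding reference interval) are introduced here for illustration only.

```latex
\mathrm{ERD/ERS}\,(\%) = \frac{A - R}{R} \times 100
```

Under this convention, negative values indicate desynchronization (ERD, a power decrease relative to the reference) and positive values indicate synchronization (ERS).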
A number of MI classification methods have been developed to achieve satisfactory classification performance. Filter bank common spatial patterns (FBCSP) is a conventional feature extraction method that decodes EEG signals using spectral power modulations [23]. Linear discriminant analysis (LDA) is commonly used together with FBCSP as a classifier. Cho et al. [24] used FBCSP with regularized linear discriminant analysis (RLDA) to decode MI tasks, focusing on a single category of MI tasks such as hand grasping and arm reaching. Convolutional neural network (CNN) approaches have also been applied in BCI [25]. Schirrmeister et al. [26] proposed three different types of CNN-based models, differing in the number of layers and inspired by FBCSP. Among the three models, ShallowConvNet extracts log band-power features. The MI classification performance of ShallowConvNet is better than that of DeepConvNet, which is designed for general purposes and deals with signal amplitude. Using depthwise and separable convolutions, a CNN can classify well regardless of the type of EEG signal, including MI [27]. However, these studies mainly focused on simple tasks using competition datasets (left hand, right hand, foot, and tongue), and the classes are not related to each other in a way that supports sequential work such as drinking water or opening a door. Since the commands are not intuitive, artificial command matching is required to control external devices.

In this study, we first collected three different types of MI tasks of a single arm (elbow extension, wrist twisting, and hand grasping) to perform sequential upper-limb work. Second, we proposed a band-power feature refining convolutional neural network (BFR-CNN), which has only two convolution blocks, for MI classification by extracting band-power features. It is designed to classify single-arm MI tasks without artificial command matching. Finally, the proposed BFR-CNN achieved robust classification performance in 4-class single-arm MI task classification.

This paper is structured as follows. Section II describes the data acquisition, the dataset used for evaluation, and the proposed BFR-CNN model. Section III presents the classification accuracies, compares performance with other models, and discusses the advantages and limitations. Section IV describes conclusions and future work.

[Figure 1: (a) experimental environment; (b) timeline of the paradigm (resting state, visual cue, motor imagery; time marks at 3, 6, and 10 s) for elbow extension, hand grasping, and wrist twisting.]
Fig. 1. (a) Experimental environment for EEG data acquisition. (b) Experimental paradigm of single-arm tasks. From 0 to 3 seconds, a resting state was given as relaxation. After the resting state, a 3-second visual cue, as depicted in the figure, was given for readiness. Finally, a 4-second imagery period was given.

[Figure 2: topoplots for reaching, grasping, and twisting.]
Fig. 2. Representative topoplots of each MI task. The 8-12 Hz frequency band was selected from one subject. High amplitudes were observed in the left side of the supplementary motor area and the pre-motor cortex because the subject is right-handed.

II. METHODS

A. Data description

Data acquisition was conducted with eight healthy subjects aged 22-30 (6 right-handed males and 2 right-handed females). We used an EEG signal amplifier (BrainAmp, Brain Products GmbH, Germany) to record EEG signals. The sampling rate was 1,000 Hz and a band-pass filter (1-60 Hz) was applied to all channels. We applied a 60 Hz notch filter to remove noise from the wires. Brain Products Vision Recorder (Brain Products GmbH, Germany) recorded and filtered the raw EEG data from the subjects. Sixty-four Ag/AgCl electrodes placed according to the international 10-20 system were used. The FPz and FCz channels were selected as ground and reference, respectively. The impedance of each electrode was measured and kept below 10 kΩ using conductive gel. All 64 EEG channels were used for data acquisition, and we selected 24 channels (F3, F1, Fz, F2, F4, FC3, FC1, FC2, FC4, C3, C1, Cz, C2, C4, CP3, CP1, CPz, CP2, CP4, P3, P1, Pz, P2, and P4) for evaluation [24]. These channels are placed on the somatosensory area and pre-motor cortex.

TABLE I. COMPARISON OF MI TASKS CLASSIFICATION RESULTS

| Subject | BFR-CNN | DeepConvNet | ShallowConvNet | EEGNet | FBCSP+RLDA |
|---------|---------|-------------|----------------|--------|------------|
| sub1 | 0.82 | 0.74 | 0.83 | 0.68 | 0.68 |
| sub2 | 0.83 | 0.61 | 0.74 | 0.63 | 0.70 |
| sub3 | 0.84 | 0.78 | 0.83 | 0.84 | 0.69 |
| sub4 | 0.80 | 0.50 | 0.72 | 0.58 | 0.64 |
| sub5 | 0.90 | 0.71 | 0.85 | 0.71 | 0.75 |
| sub6 | 0.80 | 0.59 | 0.71 | 0.63 | 0.53 |
| sub7 | 0.84 | 0.60 | 0.76 | 0.66 | 0.65 |
| sub8 | 0.88 | 0.55 | 0.68 | 0.60 | 0.72 |
| Avg. | 0.84 | 0.64 | 0.77 | 0.67 | 0.67 |
| Std. | 0.04 | 0.10 | 0.06 | 0.10 | 0.11 |

During the data acquisition experiment, every subject performed 150 trials of MI tasks (i.e., 50 trials each of elbow extension, twisting, and grasping). Relaxation was given before the imagery period and extracted as a resting state (Fig. 1). Subjects were asked to imagine specific muscle movements. The collected MI dataset was resampled at 250 Hz for classification, and it contained 3 classes of single-arm tasks plus the resting state. Data validation was conducted using the FBCSP algorithm and RLDA for each MI task. The protocols and environments were reviewed and approved by the Institutional Review Board at Korea University [1040548-KU-IRB-17-172-A-2].
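As a quick check of the numbers in the data description, the following minimal sketch works out the resampling factor, the sample ranges of the three paradigm segments at 250 Hz, and the total number of MI trials. All names are hypothetical and chosen for illustration; the paper does not publish code, and the half-open index convention is an assumption.

```python
# Timing arithmetic implied by the data description (illustrative only;
# the names below are hypothetical and not taken from the authors' code).

RECORDING_RATE_HZ = 1000  # amplifier sampling rate stated in the text
TARGET_RATE_HZ = 250      # rate after resampling stated in the text

# Paradigm boundaries in seconds (Fig. 1): rest, visual cue, motor imagery.
SEGMENTS_S = {"rest": (0, 3), "cue": (3, 6), "imagery": (6, 10)}


def decimation_factor(src_hz: int, dst_hz: int) -> int:
    """Integer ratio between the recording rate and the target rate."""
    if src_hz % dst_hz != 0:
        raise ValueError("non-integer resampling ratio")
    return src_hz // dst_hz


def segment_samples(rate_hz: int) -> dict:
    """Half-open sample index range [start, stop) of each segment."""
    return {name: (start * rate_hz, stop * rate_hz)
            for name, (start, stop) in SEGMENTS_S.items()}


factor = decimation_factor(RECORDING_RATE_HZ, TARGET_RATE_HZ)
ranges = segment_samples(TARGET_RATE_HZ)
mi_trials_total = 8 * 150  # eight subjects, 150 MI trials each

print(factor)             # 4
print(ranges["imagery"])  # (1500, 2500)
print(mi_trials_total)    # 1200
```

The number of resting-state epochs is not stated in the text, so it is deliberately left out of the trial count.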
[Figure 3: network diagram. Raw EEG data (751 time points × 24 channels) → Convolution Block I: temporal convolution (36 exponential linear units, kernel size 65), spatial filter (24 units, all channels), average pooling (size 3×1, stride 3×1) → Convolution Block II: convolution (36 exponential linear units, kernel size 15), average pooling (size 15×1, stride 15×1) → flatten → classification layer (4 softmax units).]
Fig. 3. Overall flowchart of the proposed BFR-CNN. It consists of two convolution blocks. The first convolution block was designed for creating a receptive field and the second block is for feature refining.

[Figure 4: confusion matrices, panels (a) BFR-CNN, (b) DeepConvNet, (c) ShallowConvNet, (d) EEGNet, (e) FBCSP+RLDA; axes: true label versus predicted label; classes: elbow extension, grasping, twisting, resting; color scale from 0 to 1.0.]
Fig. 4. Representative confusion matrices of each model. Through these confusion matrices, we could analyze the classification tendencies of each model.

B. BFR-CNN

BFR-CNN is a single CNN architecture designed for single-arm MI task classification. The raw EEG signal (a channel-by-time matrix) contains a large amount of information that is not relevant to MI tasks. Therefore, if the classification model can extract features and refine them into more relevant features, classification performance can be improved. Because the classes of our dataset are composed of single-arm tasks, we assumed that spatial features from the restricted cortex region would not be sufficient for a CNN. In addition, since EEG signals have a high temporal resolution, higher performance can be achieved by extracting frequency features rather than spatial features [28]. We were inspired by the concept of ShallowConvNet, which extracts log band-power features. Considering the class complexity of our data, we assumed that using more, and more refined, features would be appropriate. We conducted a frequency-domain analysis and found high amplitude in similar brain regions (left side of the somatosensory cortex and motor cortex) in the topoplot of each MI task (Fig. 2). Thus, we attempted to develop a shallow CNN architecture that extracts frequency features highly relevant to single-arm MI tasks through the convolutional layers. The first convolution block consists of a temporal convolution layer, a spatial filter layer, and an average pooling layer [29].
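The layer sizes listed in Fig. 3 imply a chain of output lengths along the time axis. The sketch below reproduces that arithmetic under two assumptions that the paper does not spell out: the convolutions are unpadded ("valid") with stride 1, and pooling discards any incomplete final window. The function names are hypothetical.

```python
def out_length(length: int, kernel: int, stride: int = 1) -> int:
    """Output length of an unpadded 1-D convolution or pooling step."""
    return (length - kernel) // stride + 1


def bfr_cnn_time_lengths(n_samples: int = 751) -> list:
    """Time-axis length after each step, using the sizes given in Fig. 3."""
    conv1 = out_length(n_samples, kernel=65)        # temporal convolution
    pool1 = out_length(conv1, kernel=3, stride=3)   # average pooling 3x1
    conv2 = out_length(pool1, kernel=15)            # second convolution
    pool2 = out_length(conv2, kernel=15, stride=15) # average pooling 15x1
    return [n_samples, conv1, pool1, conv2, pool2]


lengths = bfr_cnn_time_lengths()
print(lengths)  # [751, 687, 229, 215, 14]

# With 36 feature maps and the channel axis collapsed to 1 by the spatial
# filter (both assumptions read off Fig. 3), the flattened vector would be:
flattened = 36 * lengths[-1]
print(flattened)  # 504
```

The intermediate lengths 687, 229, and 215 agree with the values printed in Fig. 3; the final length of 14 and the flattened size of 504 follow only from the assumptions stated above.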
The spatial filter was applied across the input channels to reduce the channel dimension to a single channel. We set the temporal filter size to a quarter of the sampling rate to remove ocular artifacts, creating a receptive field above 4 Hz. The second block was designed to refine band-power features. It comprises a convolution layer and an average pooling layer, which reduce the number of features that are less relevant for classification. The last layer flattens the features and applies a softmax function, which normalizes the output into a probability distribution for classification. The exponential linear unit (ELU) was applied as the activation function in every convolution block [30]. We used the Adam optimizer [31] and the cross-entropy loss function for training [32]. The overall flowchart of BFR-CNN is described in Fig. 3.

III. EXPERIMENTAL RESULTS AND DISCUSSION

For the evaluation, we set the mini-batch size to 32 and trained for 200 epochs. The evaluation environment was a Windows 10 desktop with an Intel(R) Core i7-7700 CPU at 3.60 GHz, 32 GB RAM, and a GeForce Titan XP GPU. All comparisons were conducted under the same conditions.

Table I shows the comparison of classification results. The average accuracy of BFR-CNN is 0.84, the highest among the compared methods. ShallowConvNet ranks second with 0.77, because it extracts log band-power features, similar to BFR-CNN, which refines band-power features. The remaining methods show similar classification performance. DeepConvNet records the lowest performance because it is designed for general purposes, especially concerning signal amplitude. EEGNet is also designed to decode EEG signals regardless of their dominant features, even in MI classification; thus EEGNet classifies only slightly better than DeepConvNet.
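The averages quoted above can be recomputed from the per-subject accuracies in Table I. The following sketch does so with the Python standard library; the dictionary layout and variable names are illustrative, and because the table entries are rounded to two decimals the recomputed means are only expected to match the reported ones to within rounding.

```python
from statistics import mean

# Per-subject accuracies (sub1..sub8) copied from Table I.
ACCURACY = {
    "BFR-CNN":        [0.82, 0.83, 0.84, 0.80, 0.90, 0.80, 0.84, 0.88],
    "DeepConvNet":    [0.74, 0.61, 0.78, 0.50, 0.71, 0.59, 0.60, 0.55],
    "ShallowConvNet": [0.83, 0.74, 0.83, 0.72, 0.85, 0.71, 0.76, 0.68],
    "EEGNet":         [0.68, 0.63, 0.84, 0.58, 0.71, 0.63, 0.66, 0.60],
    "FBCSP+RLDA":     [0.68, 0.70, 0.69, 0.64, 0.75, 0.53, 0.65, 0.72],
}

# "Avg." row of Table I, for comparison.
REPORTED_AVG = {
    "BFR-CNN": 0.84, "DeepConvNet": 0.64, "ShallowConvNet": 0.77,
    "EEGNet": 0.67, "FBCSP+RLDA": 0.67,
}

averages = {model: mean(values) for model, values in ACCURACY.items()}
ranking = sorted(averages, key=averages.get, reverse=True)

for model in ranking:
    print(f"{model}: recomputed {averages[model]:.3f}, "
          f"reported {REPORTED_AVG[model]:.2f}")
```

Sorting by the recomputed means places BFR-CNN first, ShallowConvNet second, and DeepConvNet last, consistent with the ordering described in the text.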
Interestingly, FBCSP with RLDA performs MI classification as well as EEGNet even though it is not a deep learning method. Through the comparison, we confirm that using band-power features is advantageous for MI task classification, and that refinement can yield higher classification performance.

Fig. 4 shows the confusion matrices of the classification results. DeepConvNet tends to confuse all MI tasks (elbow extension, grasping, and twisting), especially elbow extension, but it classifies the resting state relatively well. ShallowConvNet clearly classifies twisting but confuses elbow extension, grasping, and the resting state. Unlike the other methods, ShallowConvNet is weak in classifying the resting state. On the other hand, ShallowConvNet classifies the MI tasks with high accuracy. EEGNet strongly confuses the elbow extension class with grasping and twisting. However, none of the MI tasks were misclassified as the resting state. FBCSP with RLDA classifies MI tasks as well as EEGNet, but it shows higher accuracy in elbow extension classification. BFR-CNN clearly classifies twisting and the resting state. Like ShallowConvNet, it has a tendency to slightly confuse elbow extension and grasping. Overall, we find that all methods used in this study tend to confuse MI tasks with each other rather than with the resting state.

IV. CONCLUSION AND FUTURE WORKS

In this paper, we proposed BFR-CNN, which refines band-power features to classify single-arm MI tasks. Decoding an MI dataset is time-consuming and costly work because the signals are oscillatory and non-stationary. To improve MI classification performance, we proposed BFR-CNN to extract and refine frequency features that are highly relevant to MI. Through the evaluation, we demonstrated that BFR-CNN achieved the highest classification accuracies compared to existing approaches.
Thus, the proposed model can be applied to control external devices, such as a robotic arm, with high performance.

V. ACKNOWLEDGEMENT

The authors thank J.-H. Cho for help with the dataset construction and discussion of the data analysis.

REFERENCES

[1] J. R. Wolpaw, N. Birbaumer, D. J. McFarland, G. Pfurtscheller, and T. M. Vaughan, "Brain-computer interfaces for communication and control," Clin. Neurophysiol., vol. 113, pp. 767–791, 2002.
[2] C. I. Penaloza and S. Nishio, "BMI control of a third arm for multitasking," Sci. Robot., vol. 3, pp. eaat1228, 2018.
[3] G. Buzsáki, C. A. Anastassiou, and C. Koch, "The origin of extracellular fields and currents - EEG, ECoG, LFP and spikes," Nat. Rev. Neurosci., vol. 13, pp. 407, 2012.
[4] I. K. Niazi, N. Jiang, O. Tiberghien, J. F. Nielsen, K. Dremstrup, and D. Farina, "Detection of movement intention from single-trial movement-related cortical potentials," J. Neural Eng., vol. 8, pp. 066009, 2011.
[5] X. Ding and S.-W. Lee, "Changes of functional and effective connectivity in smoking replenishment on deprived heavy smokers: a resting-state FMRI study," PLoS One, vol. 8, pp. e59331, 2013.
[6] M.-H. Lee, J. Williamson, D.-O. Won, S. Fazli, and S.-W. Lee, "A high performance spelling system based on EEG-EOG signals with visual feedback," IEEE Trans. Neural Syst. Rehabil. Eng., vol. 26, pp. 1443–1459, 2018.
[7] J.-H. Kim, F. Bießmann, and S.-W. Lee, "Decoding three-dimensional trajectory of executed and imagined arm movements from electroencephalogram signals," IEEE Trans. Neural Syst. Rehabil. Eng., vol. 23, pp. 867–876, 2014.
[8] J.-H. Jeong, K.-T. Kim, D.-J. Kim, and S.-W. Lee, "Decoding of multi-directional reaching movements for EEG-based robot arm control," in Conf. Proc. IEEE Int. Conf. Syst. Man. Cybern. (SMC). IEEE, 2019, pp. 511–514.
[9] T.-E. Kam, H.-I. Suk, and S.-W. Lee, "Non-Homogeneous Spatial Filter Optimization for ElectroEncephaloGram (EEG)-based Motor Imagery Classification," Neurocomputing, vol. 108, pp. 58–68, 2013.
[10] S.-K. Yeom, S. Fazli, K.-R. Müller, and S.-W. Lee, "An efficient ERP-based brain-computer interface using random set presentation and face familiarity," PLoS One, vol. 9, pp. e111157, 2014.
[11] Y. Chen, A. D. Atnafu, I. Schlattner, W. T. Weldtsadik, M.-C. Roh, H. J. Kim, S.-W. Lee, B. Blankertz, and S. Fazli, "A high-security EEG-based login system with RSVP stimuli and dry electrodes," IEEE Inf. Fore. Sec., vol. 11, pp. 2635–2647, 2016.
[12] X. Zhu, H.-I. Suk, S.-W. Lee, and D. Shen, "Canonical feature selection for joint regression and multi-class identification in Alzheimer's disease diagnosis," Brain Imaging Behav., vol. 10, pp. 818–828, 2016.
[13] D.-O. Won, H.-J. Hwang, S. Dähne, K. R. Müller, and S.-W. Lee, "Effect of higher frequency on the classification of steady-state visual evoked potentials," J. Neural Eng., vol. 13, pp. 016014, 2015.
[14] K.-T. Kim, H.-I. Suk, and S.-W. Lee, "Commanding a brain-controlled wheelchair using steady-state somatosensory evoked potentials," IEEE Trans. Neural Syst. Rehabil. Eng., vol. 26, pp. 654–665, 2016.
[15] K. LaFleur, K. Cassady, A. Doud, K. Shades, E. Rogin, and B. He, "Quadcopter control in three-dimensional space using a noninvasive motor imagery-based brain-computer interface," J. Neural Eng., vol. 10, pp. 1–15, 2013.
[16] G. Pfurtscheller and C. Neuper, "Motor imagery and direct brain-computer communication," Proc. IEEE, vol. 89, pp. 1123–1134, 2001.
[17] C. Neuper, M. Wörtz, and G. Pfurtscheller, "ERD/ERS patterns reflecting sensorimotor activation and deactivation," Prog. Brain Res., vol. 159, pp. 211–222, 2006.
[18] J.-H. Jeong, K.-H. Shim, J.-H. Cho, and S.-W. Lee, "Trajectory decoding of arm reaching movement imageries for brain-controlled robot arm system," in Int. Conf. Proc. IEEE Eng. Med. Biol. Soc. (EMBC), 2019, pp. 23–27.
[19] S. R. Liyanage, C. Guan, H. Zhang, K. K. Ang, J. Xu, and T. H. Lee, "Dynamically weighted ensemble classification for non-stationary EEG processing," J. Neural Eng., vol. 10, pp. 036007, 2013.
[20] M.-H. Lee, S. Fazli, J. Mehnert, and S.-W. Lee, "Subject-dependent classification for robust idle state detection using multi-modal neuroimaging and data-fusion techniques in BCI," Pattern Recognit., vol. 48, pp. 2725–2737, 2015.
[21] H. H. Bülthoff, S.-W. Lee, T. A. Poggio, and C. Wallraven, "Biologically motivated computer vision," Springer-Verlag, 2003.
[22] M. Kim, G. Wu, Q. Wang, S.-W. Lee, and D. Shen, "Improved image registration by sparse patch-based deformation estimation," Neuroimage, vol. 105, pp. 257–268, 2015.
[23] K. K. Ang, Z. Y. Chin, H. Zhang, and C. Guan, "Filter bank common spatial pattern (FBCSP) in brain-computer interface," in Proc. IEEE Int. Jt. Conf. Neural Netw., 2008, pp. 2390–2397.
[24] J.-H. Cho, J.-H. Jeong, K.-H. Shim, D.-J. Kim, and S.-W. Lee, "Classification of hand motions within EEG signals for non-invasive BCI-based robot hand control," in Conf. Proc. IEEE Int. Conf. Syst. Man. Cybern. (SMC), 2018, pp. 515–518.
[25] N.-S. Kwak, K.-R. Müller, and S.-W. Lee, "A convolutional neural network for steady state visual evoked potential classification under ambulatory environment," PLoS One, vol. 12, pp. e0172578, 2017.
[26] R. T. Schirrmeister, J. T. Springenberg, L. D. J. Fiederer, M. Glasstetter, K. Eggensperger, M. Tangermann, F. Hutter, W. Burgard, and T. Ball, "Deep learning with convolutional neural networks for EEG decoding and visualization," Hum. Brain Mapp., vol. 38, pp. 5391–5420, 2017.
[27] V. J. Lawhern, A. J. Solon, N. R. Waytowich, S. M. Gordon, C. P. Hung, and B. J. Lance, "EEGNet: A compact convolutional neural network for EEG-based brain-computer interfaces," J. Neural Eng., vol. 15, pp. 056013, 2018.
[28] L. F. Nicolas-Alonso and J. Gomez-Gil, "Brain computer interfaces, a review," Sensors, vol. 12, pp. 1211–1279, 2012.
[29] C.-S. Wei, T. Koike-Akino, and Y. Wang, "Spatial component-wise convolutional network (SCCNet) for motor-imagery EEG classification," in 9th Int. IEEE EMBS Conf. Neural Eng., 2019, pp. 328–331.
[30] D.-A. Clevert, T. Unterthiner, and S. Hochreiter, "Fast and accurate deep network learning by exponential linear units (ELUs)," arXiv preprint arXiv:1511.07289, 2015.
[31] D. P. Kingma and J. Ba, "Adam: A method for stochastic optimization," arXiv preprint arXiv:1412.6980, 2014.
[32] Z. Zhang and M. Sabuncu, "Generalized cross entropy loss for training deep neural networks with noisy labels," in Adv. Neural Inf. Process. Syst. (NIPS), 2018, pp. 8778–8788.
