Word-level Deep Sign Language Recognition from Video: A New Large-scale Dataset and Methods Comparison

Vision-based sign language recognition aims to help deaf people communicate with others. However, most existing sign language datasets cover only a small number of words. Because of this limited vocabulary, models trained on those datasets cannot be applied in practice. In this paper, we introduce a new large-scale Word-Level American Sign Language (WLASL) video dataset containing more than 2,000 words performed by over 100 signers. This dataset will be made publicly available to the research community. To our knowledge, it is by far the largest public ASL dataset for word-level sign recognition research. Based on this new large-scale dataset, we experiment with several deep learning methods for word-level sign recognition and evaluate their performance in large-scale scenarios. Specifically, we implement and compare two different models, i.e., (i) a holistic visual appearance-based approach and (ii) a 2D human pose-based approach. Both models are valuable baselines that will benefit the community for method benchmarking. Moreover, we propose a novel pose-based temporal graph convolution network (Pose-TGCN) that models spatial and temporal dependencies in human pose trajectories simultaneously, which further boosts the performance of the pose-based method. Our results show that pose-based and appearance-based models achieve comparable performance, up to 66% top-10 accuracy on 2,000 words/glosses, demonstrating the validity and challenges of our dataset. Our dataset and baseline deep models are available at https://dxli94.github.io/WLASL/.

💡 Research Summary

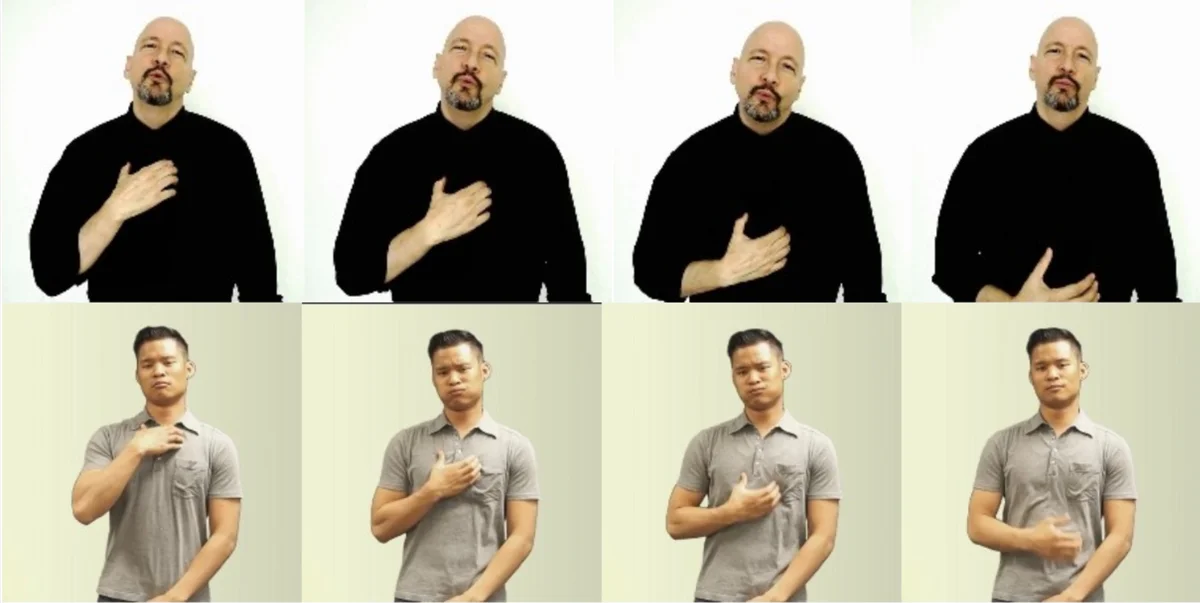

The paper introduces WLASL, a new large‑scale word‑level American Sign Language (ASL) video dataset, and provides a systematic comparison of several deep learning approaches for isolated sign recognition. Existing ASL corpora contain only a few dozen to a few hundred glosses, limiting the scalability of models trained on them. WLASL addresses this gap by collecting 21,083 videos covering 2,000 distinct glosses, performed by 119 different signers. The videos were sourced from educational ASL websites (e.g., ASLU, ASL‑LEX) and YouTube tutorials, filtered to keep only frontal‑view recordings with a single sign per clip. Each gloss has at least three signers and an average of 10.5 examples, providing sufficient inter‑signer variation for robust training.

In addition to the raw video, the authors annotate temporal boundaries, person bounding boxes (via YOLOv3), signer IDs (using FaceNet embeddings), and dialect variations. This rich metadata helps prevent leakage between training and test splits and enables analysis of dialectal effects. The dataset is partitioned into four subsets—WLASL100, WLASL300, WLASL1000, and WLASL2000—allowing evaluation of scalability as the vocabulary grows.
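One way signer IDs could be used to guard against leakage is to split by signer, so that no person appears in both training and test data. The sketch below is only an illustration of that idea: the function name, the `(video_id, signer_id)` tuple layout, and the 20 % test fraction are assumptions, and the summary does not state that the authors split the data this way.

```python
import random


def signer_disjoint_split(samples, test_fraction=0.2, seed=0):
    """Split (video_id, signer_id) pairs so that no signer is in both parts.

    Hypothetical helper for illustration; not the WLASL split protocol.
    """
    signers = sorted({signer for _, signer in samples})
    rng = random.Random(seed)
    rng.shuffle(signers)
    # Hold out at least one signer for testing.
    n_test = max(1, round(len(signers) * test_fraction))
    test_signers = set(signers[:n_test])
    train = [s for s in samples if s[1] not in test_signers]
    test = [s for s in samples if s[1] in test_signers]
    return train, test


# Toy data: 10 made-up videos spread over 5 made-up signer IDs.
samples = [(f"video_{i}", f"signer_{i % 5}") for i in range(10)]
train, test = signer_disjoint_split(samples)
train_signers = {signer for _, signer in train}
test_signers = {signer for _, signer in test}
print(len(train), len(test), train_signers & test_signers)
```

Because the split is made over signers rather than over individual clips, the two parts cannot share a person, at the cost of less control over how many clips each gloss receives in each part.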

The experimental section evaluates two major modalities: (i) holistic visual appearance and (ii) 2‑D human pose. For appearance‑based baselines, a VGG‑GRU pipeline and a fine‑tuned I3D network are implemented; I3D outperforms the VGG‑GRU, confirming the benefit of spatio‑temporal convolution. For pose‑based baselines, 2‑D joint coordinates extracted with a pose estimator are fed into a GRU, which captures temporal dynamics but ignores spatial relationships among joints. To overcome this limitation, the authors propose Pose‑TGCN, a temporal graph convolutional network that treats each frame’s skeleton as a graph (joints as nodes, anatomical connections as edges) and applies graph convolutions across time. Pose‑TGCN simultaneously models spatial configuration and temporal evolution, leading to a consistent 2–3 % gain over the GRU baseline in Top‑10 accuracy.
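As a rough illustration of the graph-convolution idea described above, the following pure-Python sketch applies one layer to a toy skeleton. Everything in it is an assumption made for illustration: the three-joint skeleton, the edge list, the weight values, and the choice to use each joint's flattened (x, y) trajectory over two frames as its feature vector. It is not the authors' Pose-TGCN implementation.

```python
def normalized_adjacency(num_joints, edges):
    """Return D^{-1/2} (A + I) D^{-1/2} for an undirected skeleton graph."""
    a = [[0.0] * num_joints for _ in range(num_joints)]
    for i in range(num_joints):
        a[i][i] = 1.0  # self-loop keeps each joint's own features
    for i, j in edges:
        a[i][j] = 1.0
        a[j][i] = 1.0
    deg = [sum(row) for row in a]
    return [
        [a[i][j] / (deg[i] ** 0.5 * deg[j] ** 0.5) for j in range(num_joints)]
        for i in range(num_joints)
    ]


def matmul(x, y):
    """Plain nested-list matrix product."""
    return [
        [sum(x[i][k] * y[k][j] for k in range(len(y))) for j in range(len(y[0]))]
        for i in range(len(x))
    ]


def graph_conv(features, adj, weight):
    """One graph-convolution layer: ReLU(adj @ features @ weight)."""
    out = matmul(matmul(adj, features), weight)
    return [[max(0.0, v) for v in row] for row in out]


# Toy skeleton (assumed): shoulder(0) - elbow(1) - wrist(2).
edges = [(0, 1), (1, 2)]
adj = normalized_adjacency(3, edges)

# Each row is one joint's trajectory over two frames: [x0, y0, x1, y1].
features = [
    [0.0, 0.0, 0.0, 0.1],
    [0.5, 0.2, 0.6, 0.3],
    [1.0, 0.4, 1.2, 0.7],
]
# Made-up 4x2 weight matrix; in a real model these values are learned.
weight = [
    [0.5, -0.5],
    [0.5, 0.5],
    [-0.5, 0.5],
    [0.5, 0.5],
]
out = graph_conv(features, adj, weight)
print(out)
```

Multiplying by the normalized adjacency mixes information between connected joints (the spatial part), while the weight matrix mixes coordinates taken from different frames of a joint's trajectory (the temporal part).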

Results on the full WLASL2000 subset show that the two modalities perform comparably in Top‑10 accuracy: the paper reports 66 % for the appearance‑based I3D and 62.63 % for the pose‑based Pose‑TGCN. These figures indicate that, despite the challenging 2,000‑class problem, the dataset is learnable and that pose information alone can come close to raw pixel‑based methods.
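For readers unfamiliar with the metric, Top‑K accuracy counts a prediction as correct when the true gloss is among the K highest-scoring classes. The sketch below shows the computation on made-up scores for a three-class toy problem; the function name and the data are illustrative assumptions, not taken from the paper's code.

```python
def top_k_accuracy(scores, labels, k):
    """Fraction of samples whose true label is among the k top-scoring classes."""
    if len(scores) != len(labels):
        raise ValueError("scores and labels must have the same length")
    hits = 0
    for row, label in zip(scores, labels):
        # Class indices sorted from highest to lowest score.
        ranked = sorted(range(len(row)), key=lambda c: row[c], reverse=True)
        if label in ranked[:k]:
            hits += 1
    return hits / len(labels)


# Made-up class scores for four samples over three classes.
scores = [
    [0.1, 0.7, 0.2],  # true class 1: ranked first
    [0.5, 0.1, 0.4],  # true class 2: ranked second
    [0.6, 0.3, 0.1],  # true class 2: ranked third
    [0.2, 0.3, 0.5],  # true class 2: ranked first
]
labels = [1, 2, 2, 2]

top1 = top_k_accuracy(scores, labels, 1)
top2 = top_k_accuracy(scores, labels, 2)
print(top1, top2)  # 0.5 0.75
```

Top‑K accuracy can only stay the same or increase as K grows, which is why Top‑10 figures on a 2,000-class problem are considerably more forgiving than Top‑1.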

The authors discuss several limitations: with an average of only about ten samples per gloss, class imbalance and over‑fitting remain concerns; the experiments cover CNN‑RNN, 3D‑CNN, and graph‑based models, leaving newer architectures such as Vision Transformers and multimodal fusion unexplored; and robustness to real‑world conditions such as extreme lighting or background clutter remains to be validated.

In summary, the paper makes two major contributions: (1) the release of WLASL, the largest publicly available word‑level ASL dataset to date, complete with extensive annotations that facilitate rigorous benchmarking; and (2) a thorough baseline study showing that both appearance‑based and pose‑based deep models can achieve meaningful performance on large‑vocabulary sign recognition, with the proposed Pose‑TGCN offering a principled way to exploit skeletal structure. The work lays a solid foundation for future research aiming at practical, scalable sign language recognition systems.
