Emotion Recognition Using Wearables: A Systematic Literature Review – Work in Progress

Wearables like smartwatches or wrist bands equipped with pervasive sensors enable us to monitor our physiological signals. In this study, we address the question whether they can help us to recognize our emotions in our everyday life for ubiquitous c…

Authors: Stanisław Saganowski, Anna Dutkowiak, Adam Dziadek

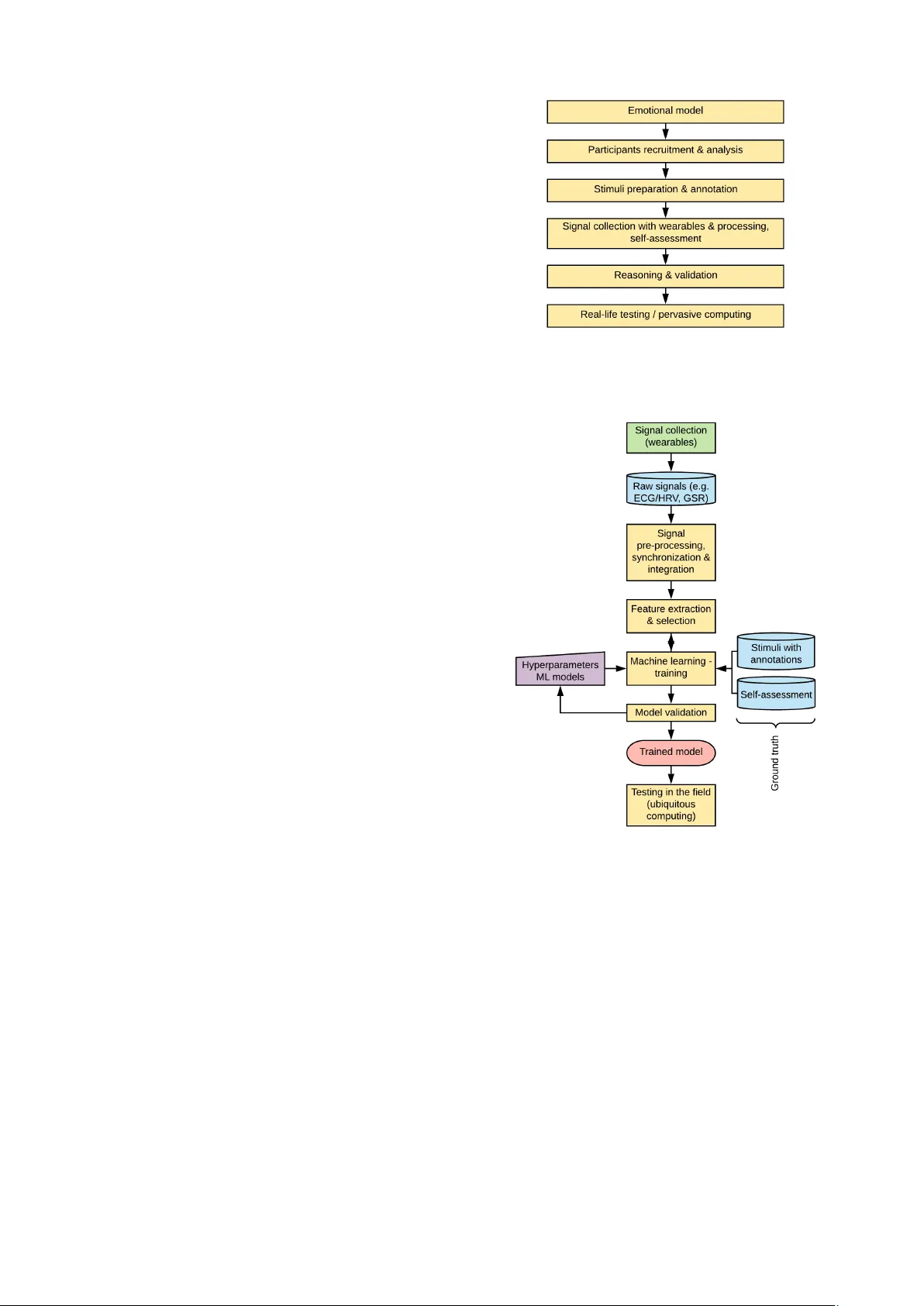

Emotion Recognition Using Wearables: A Systematic Literature Review – Work-in-progress

Stanisław Saganowski 1,2,*, Anna Dutkowiak 1, Adam Dziadek 3, Maciej Dzieżyc 1,2, Joanna Komoszyńska 1, Weronika Michalska 1, Adam Polak 4, Michał Ujma 3, Przemysław Kazienko 1,2

1 Department of Computational Intelligence, Wrocław University of Science and Technology, Wrocław, Poland
2 Faculty of Computer Science and Management, Wrocław University of Science and Technology, Wrocław, Poland
3 Capgemini, Wrocław, Poland
4 Faculty of Electronics, Wrocław University of Science and Technology, Wrocław, Poland
* stanislaw.saganowski@pwr.edu.pl

Abstract: Wearables like smartwatches or wrist bands equipped with pervasive sensors enable us to monitor our physiological signals. In this study, we address the question of whether they can help us to recognize our emotions in our everyday life for ubiquitous computing. Using a systematic literature review, we identified crucial research steps and discussed the main limitations and problems in the domain.

Index Terms: emotion, emotion recognition, affective computing, wearable, smartwatch, systematic literature review, survey, review, smart device, smart band, personal device

I. INTRODUCTION

Emotions drive most of our decisions [1], not only intuitive ones [2], so they directly affect our everyday life. Most of the research on emotion recognition conducted so far has focused on participant (subject) reactions evoked by prepared stimuli in a controlled environment (laboratory setup). Therefore, complex emotion identification in the real-life environment, especially for pervasive computing, remains a significant challenge for this relatively new but promising field of study.

It is quite difficult to provide a commonly agreed definition of emotion. We should rather consider a set of features that distinguish an emotion from a non-emotion [3].
Overall, affect is seen as a neurophysiological state that is consciously accessible but not directed at any specific entity. Mood is a lasting and not very intense sensation. Finally, a short, intense, and directed feeling is described as emotion [4]. Further in this review, we refer to emotions interchangeably with affect. Besides, it is clear that two affective computing topics, stress recognition and emotion recognition, have recently become separate research lines [5].

Emotions can be identified and evaluated from different but complementary points of view:

1) Subjective perception of the participant (self-assessment of the subject),
2) Reaction of the participant's organism (physiological signals), which is objective but may be contaminated by the individual's body condition and functioning (influenced by drugs or illnesses),
3) Behavioral signals like facial expressions, voice, specific body movements or even keystroke patterns [6],
4) External evaluation made by the subject's peers, e.g. an adult recognizing the state of the child [7].

This work was partially supported by the National Science Centre, Poland, project no. 2016/21/B/ST6/01463; European Union's Horizon 2020 research and innovation program under the Marie Skłodowska-Curie grant agreement No. 691152 (RENOIR); the Polish Ministry of Science and Higher Education fund for supporting internationally co-financed projects in 2016–2019 no. 3628/H2020/2016/2; and the statutory funds of the Department of Computational Intelligence, Wrocław University of Science and Technology.

In this paper, we focus on the second perspective: physiological signals that can be gathered using pervasive sensors built into wearable devices like smartwatches, wrist bands, smart rings or headbands. These kinds of devices possess an undoubted advantage.
Due to their unobtrusiveness and convenience, they facilitate pervasive computing and context-aware systems by monitoring human affective states in the real-life environment, a.k.a. field studies.

Recently, survey studies on emotion recognition have changed their primary focus from EEG-based solutions [8], [9], through facial and speech analysis [10], [11], to physiology-oriented approaches [4], [12]. In [12], all essential aspects of emotion identification (emotion models, stimuli, features, classifiers, etc.) are described, but it does not consider whether the research was conducted in the lab or field setup. Schmidt et al. respect the environment of the surveyed studies and conclude that emotion recognition outside the controlled lab is much more difficult [4]. The authors mention about 50 stress and emotion recognition papers (15 of which were field or constrained field studies). We go a step further and consider only studies that are (or could be) placed in the field, i.e. they use devices and techniques that allow us to conduct the study in everyday situations. Additionally, by following the systematic literature review (SLR) procedure, we cover all relevant research published to date. We expect the SLR to be completed by the date of the conference, so the extended results will be presented during the workshop.

II. SYSTEMATIC LITERATURE REVIEW

A well-established method to summarize existing knowledge in a given domain is the Systematic Literature Review (SLR). It involves identifying, evaluating and interpreting available research relevant to a certain research question [13]. In our SLR, we pose the following research question: Can wearables be used to identify emotions in everyday life supporting pervasive computing? To refine the number of studies considered in our SLR, we support our question with a set of criteria.

Inclusion criteria:
• Various emotions identified.
• Personal device/wearable used.
• At least one physiological signal monitored.

Exclusion criteria:
• Study performed on a population smaller than five subjects.
• Only a single emotion or its levels are considered.
• Device is not personal/wearable/portable.
• Device or its modules are connected with a cable.

We used three databases to find articles relevant to our research question: Scopus, Web of Science, and Google Scholar via Publish or Perish. Our search was narrowed down by the following terms: emotion, affective, wearable, smartwatch, smart device, smart band, personal device, iot, ambient intelligence. In total, 2,424 papers were found. At the current stage, 1,104 (45%) articles have been carefully reviewed. Only 27 of them (2.5%) satisfied our research criteria. We have prioritized papers including the phrase "emotion recognition" in the title or abstract; therefore, it is likely that the majority of the relevant papers are already identified at this stage.

III. GENERAL STUDY DESIGN FOR EMOTION DETECTION

We can distinguish six stages in research design for emotion recognition for pervasive computing, Fig. 1. The decision about the emotional model directly impacts the reasoning output, Sec. IV-A. The second stage covers recruitment, training, profiling and selection of study participants. Some of them need to be excluded due to diseases, e.g. heart problems can significantly interfere with physiological ECG or PPG signals. Then, the appropriate study setup has to be planned differently for a laboratory and a field environment (pervasive computing). For laboratory studies, stimuli with annotations may be prepared, whereas ecological momentary assessment (EMA) questionnaires are more suitable for field studies. Next, physiological signals from wearables are collected (Sec. IV-C) together with self-assessment forms. Raw signals are pre-processed, sampled, and synchronized, and descriptive features are derived, Sec. IV-F, Fig. 2.
The selected features combined with the ground truth emotional labels are used to train the reasoning model, Sec. IV-G. To adjust the model, hyper-parameter optimization should be considered in order to make it ready for ubiquitous computing.

Fig. 1. Research stages for emotion recognition in the real-life environment.

Fig. 2. Emotion recognition process using biological signals from wearables.

IV. CRUCIAL RESEARCH COMPONENTS

A. Emotional Models

Since human beings are complex, their emotions can also be modelled using various concepts. There is no single, commonly agreed emotion model. In general, categorical and multidimensional approaches are utilized. The former distinguishes several discrete emotional categories like the six types of emotions proposed by Ekman and Friesen [14] used in [15]; Plutchik's 'wheel of emotions' [16] with 8 primary emotions utilized as 8 × 3 = 24 emojis [17] (only four + neutral were actually analyzed more in-depth) or limited to only 6 basic emotions [18]. Many authors decided to apply their own emotion categories, usually as a subset or small modification of the classical ones, e.g. happy-sad-anger + neutral state [19], extended in [20] with fear; happy-sad-anger-pain [21]; joy-sadness-anger-pleasure [22]; anger-sadness-fear-disgust-joy/amusement + neutral [23], also extended with a seventh one, affection [24]; anger-sadness-fear-surprise-frustration-amusement [25], extended in [26] with disgust and other (these two were not used for reasoning); and excited-bored-stressed-relaxed-happy [27]. The number of reported categorical emotions can be even greater, as many as eight + neutral in [28]. In [29], happy-sad-flow categories reflecting flow theory were used. Sometimes, authors treated each emotion as an independent but potentially co-occurring state, each having its level/scale [26], [30]. This effectively turns the categorical model into a multi-dimensional approach.
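The difference between a purely categorical label and co-occurring emotions with individual levels can be sketched as follows. This is only an illustration: the label set, the function names, and the 0 to 5 level scale are assumptions and are not taken from any of the reviewed studies.

```python
# Hypothetical label set for illustration; each reviewed study defines its own.
EMOTIONS = ("anger", "sadness", "fear", "amusement")


def categorical_label(emotion):
    """One-hot vector: exactly one category is active per sample."""
    if emotion not in EMOTIONS:
        raise ValueError(f"unknown emotion: {emotion}")
    return [1 if name == emotion else 0 for name in EMOTIONS]


def intensity_vector(levels, max_level=5):
    """Per-emotion intensity scaled to [0, 1]; several entries may be non-zero."""
    for name, level in levels.items():
        if name not in EMOTIONS or not 0 <= level <= max_level:
            raise ValueError(f"invalid entry: {name}={level}")
    return [levels.get(name, 0) / max_level for name in EMOTIONS]


single = categorical_label("fear")                  # one active category
mixed = intensity_vector({"anger": 5, "fear": 2})   # two co-occurring emotions
```

In the second representation every emotion becomes its own axis, which is why such self-reports behave like a multi-dimensional model rather than a set of mutually exclusive classes.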
A multi-dimensional emotion model assumes multiple dimensions and separate values assigned to each. The most popular is Russell's 2-dimensional arousal-valence model. Arousal denotes affective intensity, and valence the emotion type (between sad and happy). Typically, dimension values are discrete, e.g. binary low-high states [28], [31] (forming four quadrants in the 2-dimensional space) or 3-valued low-medium/neutral-high each [32], [33]. They can be transferred into discrete classes, e.g. joy-sad-stress-calm [31], [34], happy-bored-neutral [7]. Sometimes, a third dimension is also used: dominance [35], liking [36] or relaxed [6]. If only valence is applied, we receive a simple binary model like negative/fear/sad against positive/relax/happy [37], sometimes extended with neutral [38]. Single valence may have multiple levels [38], [39]. There are also categorical approaches, which combine stress with some other states like neutral-stress-amusement [5] or with more other states [27].

B. Participants

There are significant differences in experiencing emotions between individuals. Several studies revealed that age [40], gender [41], and personality [42] influence emotions. In many papers the health of the participants is considered, especially treatment history and current medications [5], [6], [17], [24], [30], [31], [35], information about vision correction [24], [33], and pregnancy [5]. Some experiments are preceded by a questionnaire about depression [22], [24], excessive sweating [17], or the use of cigarettes/tobacco, alcohol or coffee [5], [31], [33], [35]. The total number of participants enrolled varies from two up to nearly two hundred. Some authors disclose whether the study participants volunteered [24] or received a gratification [33]. Surprisingly, only a few studies mention that they were approved by an ethical committee [17], [22], [24], [38], [39].

C. Signals and Sensors

Detecting emotions from the physiological state requires monitoring body condition by tracking parameters such as heart rate (HR), galvanic skin response (GSR), and body temperature (BT). The SLR revealed that the following signals (Tab. I) are used for detecting affect for pervasive computing. Electrocardiography (ECG) measures the electrical activity of the heart, photoplethysmography (PPG) registers changes in blood volume of the monitored vessel, and GSR quantifies variation in the skin conductance. Electroencephalography (EEG) records the electrical activity of the brain, and respiration (RSP) is the breathing rate. An additional set of parameters (Tab. II) is derived from the above-mentioned signals. HR is the number of heartbeats per minute, while heart rate variability (HRV) describes the variation between interbeat intervals (IBI).

TABLE I. PHYSIOLOGICAL SIGNALS USED FOR EMOTION RECOGNITION

| Signal | Used by |
| --- | --- |
| ECG | [18], [20], [22], [23], [30], [36] |
| PPG | [24], [27] |
| GSR, EDA, EDR, SC | [5], [7], [15], [17], [21], [24]–[27], [29], [31]–[34], [36], [39] |
| EEG | [19], [28], [35]–[38] |
| RSP | [22] |
| BT | [5], [15], [21], [25]–[27], [29], [31], [39] |

TABLE II. PARAMETERS DERIVED FROM PHYSIOLOGICAL SIGNALS

| Parameter | Used by |
| --- | --- |
| HR | [6], [17], [21], [25], [26], [29], [33], [39] |
| HRV | [29], [30], [34] |
| IBI | [6], [17] |
| BVP | [5], [15], [28], [31], [32] |

We noted several commercially available wearable devices that seem to be comfortable and useful for collecting physiological signals throughout the day, see Tab. III. In addition, some articles propose self-made devices [18], [20], [22], [23].
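The relation between IBI, HR and HRV mentioned above can be made concrete with a short sketch. It assumes interbeat intervals are given in milliseconds, and it uses SDNN and RMSSD as two widely used HRV summaries; the reviewed papers do not necessarily use these particular indices, and the function names are illustrative.

```python
import math


def mean_heart_rate(ibi_ms):
    """Mean heart rate in beats per minute from interbeat intervals (ms)."""
    return 60000.0 / (sum(ibi_ms) / len(ibi_ms))


def sdnn(ibi_ms):
    """Sample standard deviation of the interbeat intervals (ms)."""
    mean = sum(ibi_ms) / len(ibi_ms)
    variance = sum((x - mean) ** 2 for x in ibi_ms) / (len(ibi_ms) - 1)
    return math.sqrt(variance)


def rmssd(ibi_ms):
    """Root mean square of successive interval differences (ms)."""
    diffs = [b - a for a, b in zip(ibi_ms, ibi_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


# Made-up intervals for illustration only, not measured data.
ibi = [800, 810, 790, 800]
hr = mean_heart_rate(ibi)
hrv_sdnn = sdnn(ibi)
hrv_rmssd = rmssd(ibi)
```

HR summarizes the average interval length, whereas both HRV indices describe how much consecutive intervals differ, which is why the two parameters can carry different information even when derived from the same PPG or ECG recording.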
TABLE III. WEARABLES FOR COLLECTING PHYSIOLOGICAL SIGNALS

| Device | Sensors | Used by |
| --- | --- | --- |
| Empatica E4 | PPG, GSR, BT | [5], [15], [17], [21], [27]–[29], [31]–[33] |
| Microsoft Band 2 | PPG, GSR, BT | [32], [34], [39] |
| Samsung Gear S | HR | [6] |
| BodyMedia SenseWear Armband | HR, GSR, BT | [25], [26] |
| Neurosky MindWave | EEG | [19], [36]–[38] |
| XYZlife Bio-Clothing | ECG | [30] |

D. Emotion Self-assessment

A big challenge for studies on human emotions is to obtain ground truth. Authors most commonly request subjects to self-report their emotional state. However, seven studies provide no information or not enough details about self-assessment [7], [20], [22], [24], [32], [34], [37]. Authors usually ask about the intensity of the experienced emotions on a Likert scale (five papers) [6], [30], [31], [36], [38], or the subjects select their emotions from a closed list (three cases) [15], [27], [28]. In [19], open-ended questions are used, and in two other papers, a combination of open-ended and closed-ended questions is applied [25], [26]. Some researchers use well-established questionnaires or their modified versions. In six studies, the Self-Assessment Manikin (SAM) is applied [5], [15], [21], [33], [35], [39]. Much less frequently used are the IAPS questionnaire [18], [23], AniAvatare [17], Photographic Affect Meter (PAM) [15], State-Trait Anxiety Inventory (STAI) [15], Game Experience Questionnaire (GEQ) [29], Positive and Negative Affect Schedule (PANAS) [5], and Short Stress State Questionnaire [5]. In [7], adult experts assess the emotional state based on children's facial expressions.

E. Stimuli

There are many procedures to elicit emotions. The most often used are affective videos [5], [17], [21], [22], [24], [25], [28], [30], [31], [38], images [18], [23], [32], [33], [37], and music/sounds [19], [22], [32], [36], [43]. They are easy to explain, simple to apply and available in many data sets: IAPS [44], NAPS [45], CAPS [46], IADS [47], MAHNOB [48].
On the other hand, many studies use stimuli that can be experienced in real life, like playing a game [29], [32], solving a math problem [25], learning [27], walking around the city [39], and others [6], [7], [15], [49]. However, most of the experiments were performed in the lab, where obtaining high-quality measurements is relatively easy, and only a few studies were conducted in an uncontrolled environment [6], [15], [17], [39]. Usually, the exposure to stimuli lasts 5-10 seconds per image, 15-30 seconds per audio clip, 2-5 minutes per video, and 2-60 minutes in the case of everyday activities.

F. Signal Processing

Most of the papers follow the classical approach to signal processing in the field of machine learning, consisting of three explicit stages: pre-processing, feature extraction and selection, Fig. 2. The role of pre-processing is to remove from signals information that is not related to emotional patterns and can negatively impact the results. It also helps to extract the discriminative features more efficiently. The most frequently used pre-processing methods are gathered in Table IV.

TABLE IV. THE MOST POPULAR METHODS USED FOR PRE-PROCESSING

| Pre-processing method | Used by |
| --- | --- |
| Filtration (lowpass, bandpass, notch, median, drift removal) | [5], [7], [15], [20], [24], [27], [28], [30], [31], [35] |
| Normalization (to ±1 or [0,1] range) and standardization (dividing by SD) | [25]–[27], [29], [34], [37] |
| Winsorization (removing outliers and dubious or corrupted fragments, interpolation of removed samples) | [7], [22] |

The stage of feature extraction is used to reduce the dimensionality of the problem while maintaining the relevant information. It results in the feature vector representing the original signal or its segment. Usually, features are calculated in the time domain or another domain (Tab. V), i.e. an original variable is transformed or decomposed into other signals. Then, different metrics are computed on them.
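A minimal sketch of the pre-processing and feature extraction stages described above is given below. It is not the pipeline of any reviewed study: the median filter, the min-max normalization to [0, 1], and the set of statistical indices are chosen only as simple representatives of the method families named in the text, and all function names and the sample values are illustrative assumptions.

```python
import statistics


def moving_median(signal, window=3):
    """Median filter (odd window); edges fall back to a shorter window."""
    half = window // 2
    return [
        statistics.median(signal[max(0, i - half): i + half + 1])
        for i in range(len(signal))
    ]


def min_max_normalize(signal):
    """Rescale a signal to the [0, 1] range; a flat signal maps to zeros."""
    low, high = min(signal), max(signal)
    if high == low:
        return [0.0] * len(signal)
    return [(x - low) / (high - low) for x in signal]


def statistical_features(segment):
    """Feature vector of basic statistical indices for one signal segment."""
    mean = statistics.fmean(segment)
    sd = statistics.pstdev(segment)  # population SD of the segment
    if sd == 0:
        skewness = kurtosis = 0.0
    else:
        n = len(segment)
        skewness = sum(((x - mean) / sd) ** 3 for x in segment) / n
        kurtosis = sum(((x - mean) / sd) ** 4 for x in segment) / n - 3.0
    return {
        "mean": mean,
        "median": statistics.median(segment),
        "sd": sd,
        "skewness": skewness,
        "kurtosis": kurtosis,  # excess kurtosis
    }


# Made-up samples with one spike, for illustration only.
raw = [1, 9, 2, 3, 4]
filtered = moving_median(raw)
features = statistical_features(min_max_normalize(filtered))
```

Each segment of a signal is thereby reduced to a handful of numbers, which is the dimensionality reduction the feature extraction stage aims for.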
The last stage, the selection of a subset of features, focuses on the further reduction of dimensionality, taking into account the redundancy of previously extracted features or their inability to distinguish between the considered classes. Carrying it out increases the efficiency of classification algorithms. Despite this, feature selection is not mentioned or performed in most of the reviewed papers. Only in [22] is a genetic algorithm used, and in [31] Sequential Forward Floating Selection is applied. Two more works perform Principal Component Analysis (PCA) [7], [31].

TABLE V. THE MOST POPULAR METHODS USED FOR FEATURE EXTRACTION

| Domain | Feature extraction method | Used by |
| --- | --- | --- |
| Time domain | Signal morphology (amplitude, extrema, intervals, etc.) | [5], [6], [20]–[26], [29], [32], [35] |
| Time domain | Rate of specific events | [5], [6], [22], [23], [30], [33] |
| Time domain | RMS | [18], [24], [32] |
| Frequency domain | PSD | [5], [28], [31], [33], [35], [36] |
| Frequency domain | Frequency spectrum | [28], [30], [31], [36] |
| Time-frequency or time-scale domain | STFT, WT (CWT, DWT, FWT) | [7], [18], [35], [38] |
| Statistical indices | Mean, median, SD, skewness, kurtosis, etc. | [5]–[7], [18], [22]–[26], [28], [30]–[36] |
| Nonlinear measures | Measures of chaos, complexity, entropy | [28], [31], [35] |

G. Reasoning Models

Only four studies apply a deep learning algorithm such as convolutional neural networks [29], [39], long short-term memory neural networks [28], [39], or neural networks with backpropagation [25]. All other works use simple supervised learning models, among which the Support Vector Machine is the most often used.

It is really surprising that only two papers mention imbalance in collected samples [29], [36]. When dealing with multiple emotions (or even just two), it is very unlikely that the distribution will be equal, i.e. that each emotion will occur a similar number of times.
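To illustrate why imbalance matters for evaluation, the sketch below compares plain accuracy with balanced accuracy (the mean of per-class recall) for a trivial predictor that always outputs the majority class. Balanced accuracy is used here only as one possible imbalance-aware measure; it is not a measure reported by the reviewed papers, and the labels are made up for the example.

```python
from collections import Counter


def accuracy(y_true, y_pred):
    """Fraction of samples whose predicted label equals the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def balanced_accuracy(y_true, y_pred):
    """Mean per-class recall, so every class contributes equally."""
    support = Counter(y_true)
    hits = Counter(t for t, p in zip(y_true, y_pred) if t == p)
    return sum(hits[label] / count for label, count in support.items()) / len(support)


# Hypothetical, heavily skewed ground truth and a majority-class predictor.
y_true = ["neutral"] * 8 + ["happy", "sad"]
y_pred = ["neutral"] * 10

plain = accuracy(y_true, y_pred)
balanced = balanced_accuracy(y_true, y_pred)
```

With these made-up labels the predictor never recognizes "happy" or "sad", yet plain accuracy is 0.8, while balanced accuracy drops to one third, which matches chance level for three classes.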
At the same time, the majority of works use accuracy as the classification quality measure, which is not appropriate for imbalanced data sets (unless calculated for the correctly classified cases only). Furthermore, most papers do not provide information on model validation, in particular, the evaluated hyper-parameters. Just three compare diverse setups [7], [22], [28], two examine different feature sets [23], [31], and a few test various algorithms [6], [23], [25], [26], [31], [32], [35]. Only [39] addresses the vital question of whether to train a general model for all subjects or to build multiple personalized classifiers adjusted to each individual. The most common validation techniques are k-fold and leave-one-out cross-validation. Labels (output and ground truth for models) are usually obtained from self-assessments or annotated stimuli data. A less popular approach is to employ experts (psychologists) [7], [36], [38].

V. APPLICATIONS TO PERVASIVE COMPUTING AND CONTEXT-AWARE SYSTEMS

Affect recognition presents a wide range of possible applications in pervasive computing. With progressive advancement in physiological sensor signal quality and miniaturization, use cases including emotion detection are within reach. During the SLR, we noticed two main application areas emerging: human-computer interaction (HCI) and healthcare.

HCI can be greatly improved once the computer understands human affect [34]. An affective loop can be established to learn and foster user experience, and virtual assistants may respond better [32]. Recommendations for search engine results [36], user interface and content [25], [26] may be enhanced with the user's emotional context. Affect-aware robots might provide better user experience [18], [23], and television content suggestions could be more accurate [38].
Emotions play an important role in computer games, supporting the game-world design process based on a player's affective model [32]. The player's experience can be tailored to emotional feedback [35], making it more realistic.

Healthcare can benefit from the fact that the physiological system and the ability to feel are intertwined. Ubiquitous emotion detection can help to monitor our well-being [20], [24]. Furthermore, emotion recognition could help with stress control, e.g. in order to reduce the probability of cardiovascular diseases [5]. Mental health could be monitored by means of affect detection [6], [30], [39]; emotional self-awareness can improve mental health [17]. In [31], the authors warn that negative emotional states can have a degrading impact on our health. Therapeutic contexts are proposed in [7], [21], where children with autism could be taught to understand their emotions better. Affect recognition is also proposed as a comprehensive tool for helping people with emotion-based disorders [22].

Other applications include detecting driver drowsiness and establishing cognitive load [28], monitoring classroom attitude [27], lesson content difficulty adjustment [29], and creating an alternative emotional channel via music [19].

VI. DISCUSSION

Simple emotional models like low arousal-high arousal or no stress-low stress-high stress were extensively explored. This mainly resulted from the strong correlation between arousal or stress and some biological signals, namely GSR or BVP [4], [50]. However, emotions are much more complex [51], and their multidimensional nature remains a great challenge for future work. This also appears to be the main reason why, in only a few studies, the authors monitor and recognize emotions in a non-controlled (field study) [6], [15], [39] or semi-controlled environment, e.g. emotion monitoring while watching a live football game on TV [17].

The emotion model directly impacts the detection model.
Various emotion models make it almost impossible to compare results with each other, e.g. does fear from [20] correspond to pain in [21]? Multi-dimensional models should straightforwardly lead to a multi-label classification problem, which in turn requires many more cases to train the classifier. None of the papers approach reasoning in this way. Moreover, correct multi-label models can recognize combinations that do not occur in the training set.

There is actually no research that could be seen as comprehensive with respect to all research design stages, Fig. 1. For example, only two out of all 27 papers consider and properly solve the imbalanced data problem, three optimize machine learning model parameters, five claim to have an ethical committee approval, another five recruited more than 50 participants, and sampling rates of the sensor signals are commonly not considered at all. Finally, only four identify complex emotions in real-life conditions.

We focused on pervasive wireless wearables that can monitor physiological signals. However, some other signals and data may be complementary, e.g. data gathered by our smartphone about our activity [52], voice [53], or a smartwatch built-in camera monitoring our face [54].

The low variety of hardware tested might stem from the fact that most off-the-shelf wearables do not provide access to raw physiological signals.

VII. CONCLUSIONS

In our systematic literature review (SLR), we analyzed methods and solutions that enable us to effectively recognize human emotions in everyday life and utilize such information in pervasive computing. Half-way through, based on the literature, we believe that such a solution is achievable but still requires further investigation.

There are still many challenges and problems to solve in order to ensure high quality of emotion detection in context-aware applications.
We found great potential, especially in the improvement of signal data processing, model learning, and tailoring a suitable deep machine learning architecture. We have shown that emotion recognition from personal devices is already a mature but very promising direction for further research.

REFERENCES

[1] J. S. Lerner, Y. Li, P. Valdesolo, and K. S. Kassam, "Emotion and decision making," Annual review of psychology, vol. 66, pp. 799–823, 2015.
[2] D. Kahneman, Thinking, fast and slow. Macmillan, 2011.
[3] C. A. Smith, R. S. Lazarus et al., "Emotion and adaptation," Handbook of personality: Theory and research, pp. 609–637, 1990.
[4] P. Schmidt, A. Reiss, R. Dürichen, and K. V. Laerhoven, "Wearable-based affect recognition - a review," Sensors, vol. 19, no. 19, p. 4079, 2019.
[5] P. Schmidt, A. Reiss, R. Duerichen, C. Marberger, and K. Van Laerhoven, "Introducing wesad, a multimodal dataset for wearable stress and affect detection," in Proceedings of the 2018 on International Conference on Multimodal Interaction. ACM, 2018, pp. 400–408.
[6] M. R. Kamdar and M. J. Wu, "Prism: a data-driven platform for monitoring mental health," in Biocomputing 2016: Proceedings of the Pacific Symposium. World Scientific, 2016, pp. 333–344.
[7] H. Feng, H. M. Golshan, and M. H. Mahoor, "A wavelet-based approach to emotion classification using eda signals," Expert Systems with Applications, vol. 112, pp. 77–86, 2018.
[8] M. Z. Soroush, K. Maghooli, S. K. Setarehdan, and A. M. Nasrabadi, "A review on eeg signals based emotion recognition," International Clinical Neuroscience Journal, vol. 4, no. 4, p. 118, 2017.
[9] T. Xu, Y. Zhou, Z. Wang, and Y. Peng, "Learning emotions eeg-based recognition and brain activity: A survey study on bci for intelligent tutoring system," Procedia computer science, vol. 130, pp. 376–382, 2018.
[10] C. Marechal, D. Mikołajewski, K. Tyburek, P. Prokopowicz, L. Bougueroua, C. Ancourt, and K. Węgrzyn-Wolska, "Survey on ai-based multimodal methods for emotion detection," in High-Performance Modelling and Simulation for Big Data Applications. Springer, 2019, pp. 307–324.
[11] E. Maria, L. Matthias, and H. Sten, "Emotion recognition from physiological signal analysis: A review," Electronic Notes in Theoretical Computer Science, vol. 343, pp. 35–55, 2019.
[12] L. Shu, J. Xie, M. Yang, Z. Li, Z. Li, D. Liao, X. Xu, and X. Yang, "A review of emotion recognition using physiological signals," Sensors, vol. 18, no. 7, p. 2074, 2018.
[13] B. Kitchenham, "Procedures for undertaking systematic reviews: Joint technical report," Computer Science Department, Keele University (TR/SE-0401) and National ICT Australia Ltd. (0400011T.1), 2004.
[14] P. Ekman and W. V. Friesen, "Facial Action Coding System: A technique for the measurement of facial movement, Consulting Psychologists Press," Palo Alto, CA, 1978.
[15] P. Schmidt, A. Reiss, R. Dürichen, and K. Van Laerhoven, "Labelling affective states in the wild: Practical guidelines and lessons learned," in Proceedings of the 2018 ACM International Joint Conference and 2018 International Symposium on Pervasive and Ubiquitous Computing and Wearable Computers. ACM, 2018, pp. 654–659.
[16] R. Plutchik, Emotions and life: Perspectives from psychology, biology, and evolution. American Psychological Association, 2003.
[17] A. Albraikan, B. Hafidh, and A. El Saddik, "iaware: A real-time emotional biofeedback system based on physiological signals," IEEE Access, vol. 6, pp. 78 780–78 789, 2018.
[18] K. Rattanyu, M. Ohkura, and M. Mizukawa, "Emotion monitoring from physiological signals for service robots in the living space," in ICCAS 2010. IEEE, 2010, pp. 580–583.
[19] X. Lu, X. Liu, and E. Stolterman Bergqvist, "It sounds like she is sad: Introducing a biosensing prototype that transforms emotions into real-time music and facilitates social interaction," in Extended Abstracts of the 2019 CHI Conference on Human Factors in Computing Systems. ACM, 2019, p. LBW2219.
[20] L. Hu, J. Yang, M. Chen, Y. Qian, and J. J. Rodrigues, "Scai-svsc: Smart clothing for effective interaction with a sustainable vital sign collection," Future Generation Computer Systems, vol. 86, pp. 329–338, 2018.
[21] D. Pollreisz and N. TaheriNejad, "A simple algorithm for emotion recognition, using physiological signals of a smart watch," in 2017 39th Annual International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC). IEEE, 2017, pp. 2353–2356.
[22] C. He, Y.-j. Yao, and X.-s. Ye, "An emotion recognition system based on physiological signals obtained by wearable sensors," in Wearable sensors and robots. Springer, 2017, pp. 15–25.
[23] K. Rattanyu and M. Mizukawa, "Emotion recognition using biological signal in intelligent space," in International Conference on Human-Computer Interaction. Springer, 2011, pp. 586–592.
[24] L. Fernández-Aguilar, A. Martínez-Rodrigo, J. Moncho-Bogani, A. Fernández-Caballero, and J. M. Latorre, "Emotion detection in aging adults through continuous monitoring of electro-dermal activity and heart-rate variability," in International Work-Conference on the Interplay Between Natural and Artificial Computation. Springer, 2019, pp. 252–261.
[25] C. L. Lisetti and F. Nasoz, "Categorizing autonomic nervous system (ans) emotional signals using bio-sensors for hri within the maui paradigm," in ROMAN 2006-The 15th IEEE International Symposium on Robot and Human Interactive Communication. IEEE, 2006, pp. 277–284.
[26] C. L. Lisetti and F. Nasoz, "Using noninvasive wearable computers to recognize human emotions from physiological signals," EURASIP journal on applied signal processing, vol. 2004, pp. 1672–1687, 2004.
[27] M. S. Dao, D. Nguyen, D. Tien, A. Kasem et al., "Healthyclassroom-a proof-of-concept study for discovering students' daily moods and classroom emotions to enhance a learning-teaching process using heterogeneous sensors," 2018.
[28] B. Nakisa, M. N. Rastgoo, A. Rakotonirainy, F. Maire, and V. Chandran, "Long short term memory hyperparameter optimization for a neural network based emotion recognition framework," IEEE Access, vol. 6, pp. 49 325–49 338, 2018.
[29] M. Maier, C. Marouane, and D. Elsner, "Deepflow: Detecting optimal user experience from physiological data using deep neural networks," in Proceedings of the 18th International Conference on Autonomous Agents and MultiAgent Systems. International Foundation for Autonomous Agents and Multiagent Systems, 2019, pp. 2108–2110.
[30] H. W. Guo, Y. S. Huang, J. C. Chien, and J. S. Shieh, "Short-term analysis of heart rate variability for emotion recognition via a wearable ecg device," in 2015 International Conference on Intelligent Informatics and Biomedical Sciences (ICIIBMS). IEEE, 2015, pp. 262–265.
[31] B. Zhao, Z. Wang, Z. Yu, and B. Guo, "Emotionsense: Emotion recognition based on wearable wristband," in 2018 IEEE SmartWorld, Ubiquitous Intelligence & Computing, Advanced & Trusted Computing, Scalable Computing & Communications, Cloud & Big Data Computing, Internet of People and Smart City Innovation (SmartWorld/SCALCOM/UIC/ATC/CBDCom/IOP/SCI). IEEE, 2018, pp. 346–355.
[32] G. J. Nalepa, K. Kutt, B. Giżycka, P. Jemioło, and S. Bobek, "Analysis and use of the emotional context with wearable devices for games and intelligent assistants," Sensors, vol. 19, no. 11, p. 2509, 2019.
[33] M. Ragot, N. Martin, S. Em, N. Pallamin, and J.-M. Diverrez, "Emotion recognition using physiological signals: laboratory vs. wearable sensors," in International Conference on Applied Human Factors and Ergonomics. Springer, 2017, pp. 15–22.
[34] F. Setiawan, S. A. Khowaja, A. G. Prabono, B. N. Yahya, and S.-L. Lee, "A framework for real time emotion recognition based on human ans using pervasive device," in 2018 IEEE 42nd Annual Computer Software and Applications Conference (COMPSAC), vol. 1. IEEE, 2018, pp. 805–806.
[35] T. Xu, R. Yin, L. Shu, and X. Xu, "Emotion recognition using frontal eeg in vr affective scenes," in 2019 IEEE MTT-S International Microwave Biomedical Conference (IMBioC), vol. 1. IEEE, 2019, pp. 1–4.
[36] R. Gupta, M. Khomami Abadi, J. A. Cárdenes Cabré, F. Morreale, T. H. Falk, and N. Sebe, "A quality adaptive multimodal affect recognition system for user-centric multimedia indexing," in Proceedings of the 2016 ACM on international conference on multimedia retrieval. ACM, 2016, pp. 317–320.
[37] Y. Nie, Y. Wu, Z. Yang, G. Sun, Y. Yang, and X. Hong, "Emotional evaluation based on svm," in 2017 2nd International Conference on Automation, Mechanical Control and Computational Engineering (AMCCE 2017). Atlantis Press, 2017.
[38] A. Jalilifard, A. G. da Silva, and M. K. Islam, "Brain-tv connection: Toward establishing emotional connection with smart tvs," in 2017 IEEE Region 10 Humanitarian Technology Conference (R10-HTC). IEEE, 2017, pp. 726–729.
[39] E. Kanjo, E. M. Younis, and C. S. Ang, "Deep learning analysis of mobile physiological, environmental and location sensor data for emotion detection," Information Fusion, vol. 49, pp. 46–56, 2019.
[40] L. Fernández-Aguilar, J. Ricarte, L. Ros, and J. M. Latorre, "Emotional differences in young and older adults: Films as mood induction procedure," Frontiers in psychology, vol. 9, p. 1110, 2018.
[41] Y. Deng, L. Chang, M. Yang, M. Huo, and R. Zhou, "Gender differences in emotional response: Inconsistency between experience and expressivity," PloS one, vol. 11, no. 6, p. e0158666, 2016.
[42] A. Costa, J. A. Rincon, C. Carrascosa, V. Julian, and P. Novais, "Emotions detection on an ambient intelligent system using wearable devices," Future Generation Computer Systems, vol. 92, pp. 479–489, 2019.
[43] M. Magno, M. Pritz, P. Mayer, and L. Benini, "Deepemote: Towards multi-layer neural networks in a low power wearable multi-sensors bracelet," in 2017 7th IEEE International Workshop on Advances in Sensors and Interfaces (IWASI). IEEE, 2017, pp. 32–37.
[44] P. J. Lang, "International affective picture system (iaps): Affective ratings of pictures and instruction manual," Technical report, 2005.
[45] A. Marchewka, Ł. Żurawski, K. Jednoróg, and A. Grabowska, "The nencki affective picture system (naps): Introduction to a novel, standardized, wide-range, high-quality, realistic picture database," Behavior research methods, vol. 46, no. 2, pp. 596–610, 2014.
[46] B. Lu, M. Hui, and H. Yu-Xia, "The development of native chinese affective picture system–a pretest in 46 college students," Chinese Mental Health Journal, 2005.
[47] M. M. Bradley and P. J. Lang, "The international affective digitized sounds (iads-2): Affective ratings of sounds and instruction manual," University of Florida, Gainesville, FL, Tech. Rep. B-3, 2007.
[48] M. Soleymani, J. Lichtenauer, T. Pun, and M. Pantic, "A multimodal database for affect recognition and implicit tagging," IEEE Transactions on Affective Computing, vol. 3, no. 1, pp. 42–55, 2011.
[49] M. V. Sokolova, A. Fernández-Caballero, M. T. López, A. Martínez-Rodrigo, R. Zangróniz, and J. M. Pastor, "A distributed architecture for multimodal emotion identification," in Trends in Practical Applications of Agents, Multi-Agent Systems and Sustainability. Springer, 2015, pp. 125–132.
[50] J. Choi, B. Ahmed, and R. Gutierrez-Osuna, "Development and evaluation of an ambulatory stress monitor based on wearable sensors," IEEE transactions on information technology in biomedicine, vol. 16, no. 2, pp. 279–286, 2011.
[51] A. S. Cowen and D.
Keltner , “Self-report captures 27 distinct categories of emotion bridged by continuous gradients, ” Pr oceedings of the Na- tional Academy of Sciences , vol. 114, no. 38, pp. E7900–E7909, 2017. [52] W . Sasaki, J. Nakazawa, and T . Okoshi, “Comparing esm timings for emotional estimation model with fine temporal granularity , ” in Pr oceedings of the 2018 ACM International Joint Confer ence and 2018 International Symposium on P ervasive and Ubiquitous Computing and W earable Computer s . ACM, 2018, pp. 722–725. [53] P . Denman, E. Lewis, S. Prasad, J. Healey , H. Syed, and L. Nach- man, “ Affsens: a mobile platform for capturing affect in context, ” in Pr oceedings of the 20th International Confer ence on Human-Computer Interaction with Mobile Devices and Services Adjunct . A CM, 2018, pp. 321–326. [54] J. A. Rincon, A. Costa, P . Novais, V . Julian, and C. Carrascosa, “Intelligent wristbands for the automatic detection of emotional states for the elderly , ” in International Confer ence on Intelligent Data Engineering and A utomated Learning . Springer , 2018, pp. 520–530.
