Poly-time universality and limitations of deep learning

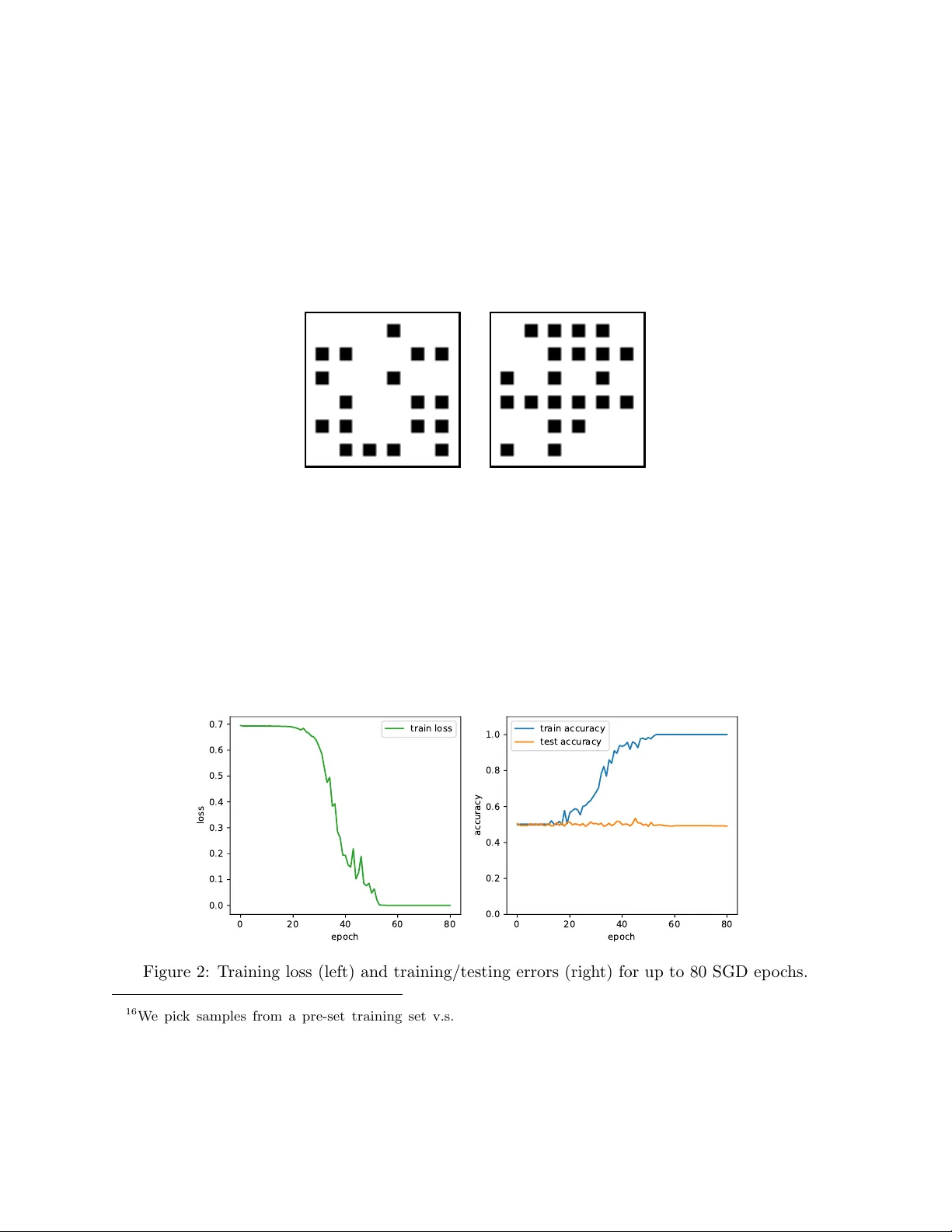

The goal of this paper is to characterize function distributions that deep learning can or cannot learn in poly-time. A universality result is proved for SGD-based deep learning and a non-universality result is proved for GD-based deep learning; this…

Authors: Emmanuel Abbe, Colin Sandon