YouTube UGC Dataset for Video Compression Research

Non-professional video, commonly known as User Generated Content (UGC) has become very popular in today's video sharing applications. However, traditional metrics used in compression and quality assessment, like BD-Rate and PSNR, are designed for pri…

Authors: Yilin Wang, Sasi Inguva, Balu Adsumilli

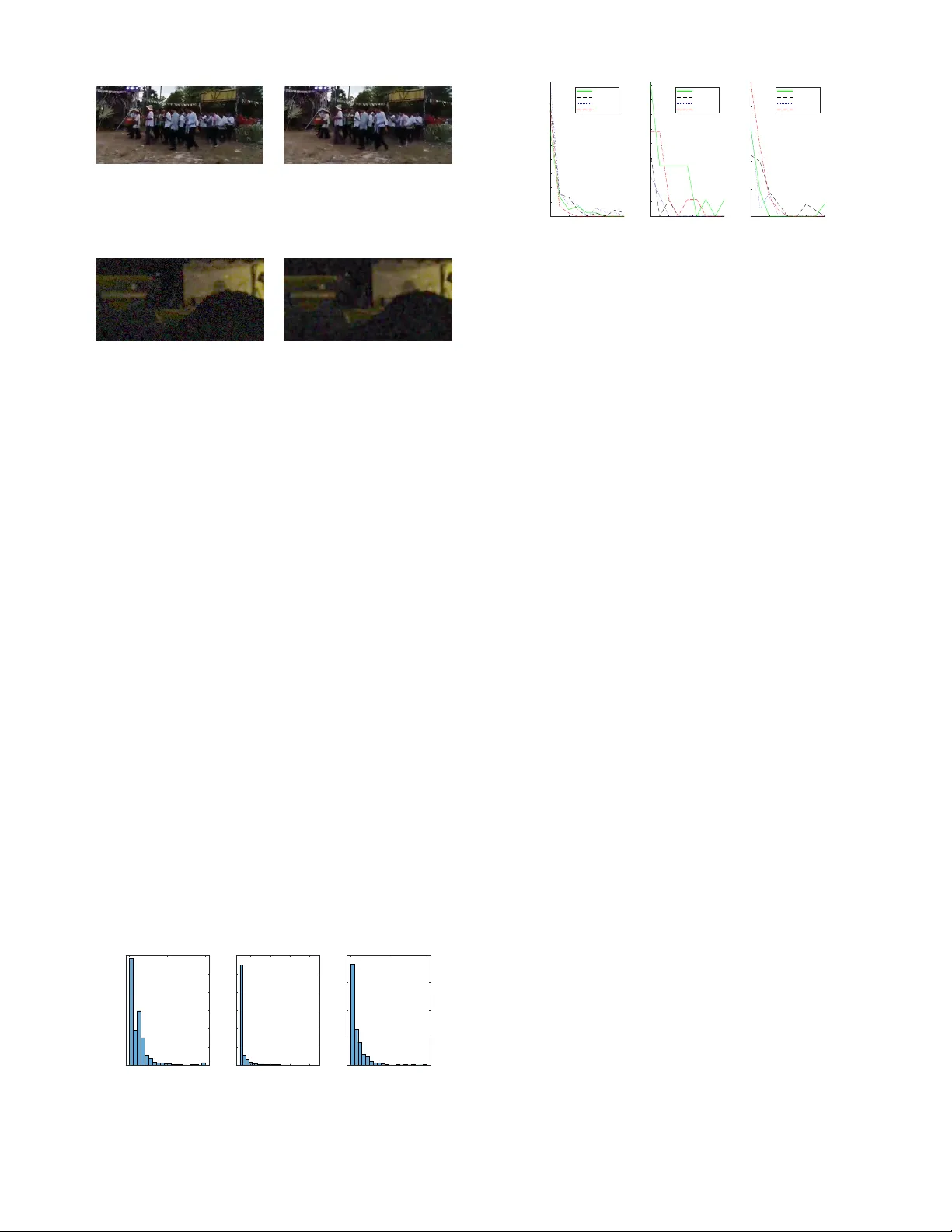

Y ouT ube UGC Dataset for V ideo Compression Research Y ilin W ang, Sasi Inguva, Balu Adsumilli Google Inc. Mountain V iew , CA, USA { yilin,isasi,badsumilli } @google.com Abstract —Non-professional video, commonly known as User Generated Content (UGC) has become very popular in todays video sharing applications. Howe ver , traditional metrics used in compression and quality assessment, like BD-Rate and PSNR, are designed for pristine originals. Thus, their accuracy drops significantly when being applied on non-pristine originals (the majority of UGC). Understanding difficulties for compression and quality assessment in the scenario of UGC is important, but there are few public UGC datasets available f or research. This paper introduces a large scale UGC dataset (1500 20 sec video clips) sampled from millions of Y ouT ube videos. The dataset covers popular categories like Gaming, Sports, and new features like High Dynamic Range (HDR). Besides a novel sampling method based on features extracted from encoding, challenges for UGC compression and quality evaluation are also discussed. Shortcomings of traditional reference-based metrics on UGC are addressed. W e demonstrate a promising way to ev aluate UGC quality by no-r eference objectiv e quality metrics, and e valuate the current dataset with three no-refer ence metrics (Noise, Banding, and SLEEQ). Index T erms —User Generated Content, V ideo Compression, V ideo Quality Assessment I . I N T R O D U C T I O N V ideo makes up the majority of todays Internet traffic. Consequently , this motiv ates video service companies (e.g. Y ouT ube) to spend substantial effort to control bandwidth usage [1]. The main remedy deployed is typically video bitrate reduction. Howe ver , aggressiv e bitrate reduction may hurt perceptual visual quality at the same time as both creators and vie wers hav e increasing expectations for streaming media quality . The ev olution of new codec technology (HEVC, VP9, A V1) continues to address this bitrate/quality tradeoff. T o measure the quality degradation, numerous quality met- rics hav e been proposed in the last fe w decades. Some reference-based metrics (like PSNR, SSIM [2] and VMAF [3]) hav e been widely used in the industry . A common assumption held by most video quality and compression research is that the original video is perfect, and any operation on the original (processing, compression etc.) makes it worse. Most research measures how good the resulting video is by comparing it to the original. Ho we ver , such an assumption does not hold for most of User Generated Content (UGC) due to the following reasons: • Non-pristine original When there are visual artifacts present in the original, it is not clear whether an encoder should be putting in efforts to accurately represent those artifacts. It is necessary to consider the effect that the encoding has on those undesired artifacts, but it is also necessary to consider the effect that the artifacts have on the ability to encode the video ef fectiv ely . • Mismatched absolute and reference quality Using the original as a reference does not alw ays make sense when the original isnt perfect. Quality improvement may be affected by pre/post processing before/after transcoding, but reference-based metrics (e.g. PSNR, SSIM) cannot fairly ev aluate the impact of these tools in a compression chain. W e created this large scale UGC dataset in order to en- courage and facilitate research that considers the practical and realistic needs of video compression and quality assessment in video processing infrastructure. A major contribution of this work is the analysis of the enormous content in Y ouT ube in a way that illustrates the breadth of visual quality in media worldwide. That analysis leads to the creation of a statistically representativ e set that is more amenable to academic research and computational resources. W e built a large scale UGC dataset(Section III), and propose (Section IV) a novel sampling scheme based on features extracted from encoding logs, which achie ves high cov erage over millions of Y ouT ube videos. Shortcomings of traditional reference-based metrics on UGC are discussed (Section V), and we e v aluate the dataset with three no- reference metrics: Noise [4], Banding [5], and Self-reference based LEarning-free Evaluator of Quality (SLEEQ) [6]. The dataset can be pre viewed and do wnloaded from https://media.withyoutube.com/ugc-dataset . I I . R E L A T E D W O R K Some large-scale datasets have already been released for UGC videos, like Y ouT ube-8M [7] and A V A [8]. Howe ver , they only provide extracted features instead of raw pixel data, which makes them less useful for compression research. Xiph.org V ideo T est Media [9] is a popular dataset for video compression and it contains around 120 individual video clips (including both pristine and UGC samples). These videos have various resolutions (e.g. SD, HD, and 4K) and multiple content categories (e.g. mo vie and gaming). LIVE datasets [10]–[12] are also quite popular . All of them contain less than 30 individual pristine clips, along with about 978-1-7281-1817-8/19/$31.00 © 2019 IEEE 150 distorted versions. The goal of the LIVE datasets is subjectiv e quality assessment. Each video clip in the dataset was assessed by 35 to 55 human subjects. V ideoSet [13] contains 220 5 sec clips extracted from 11 videos. The target here is also quality assessment, but instead of providing Mean Opinion Score (MOS) like the LIVE datasets, it provides the first three Just-Noticeable-Dif ference (JND) scores collected from more than 30 subjects. Crowdsourced V ideo Quality Dataset [14] contains 585 10 sec video clips, captured by 80 ine xpert videographers. The dataset has 18 different resolutions and a wide range of quality owing to the intrinsic nature of real-world distortions. Subjec- tiv e opinions were collected from thousands of participants using Amazon Mechanical T urk. K oNV iD-1k [15] is another large scale dataset which con- tains 1200 clips with corresponding subjective scores. All videos are in landscape layout and ha ve resolution higher than 960 × 540 . They started from a collection of 150K videos, grouping them by multiple attributes like blur , colorfulness etc. The final set was created by a “fair-sampling” strategy . The subjectiv e mean opinion scores were gathered through crowdsourcing. I I I . Y O U T U B E U G C D A TA S E T O V E RV I E W Our dataset is sampled from Y ouTube videos with the Creativ e Commons license. W e selected an initial set of 1.5 millions videos belonging to 15 categories annotated by Knowledge Graph [16] (as shown in Fig. 1): Animation, Cov er Song, Gaming, HDR, How T o, Lecture, Liv e Music, L yric V ideo, Music V ideo, News Clip, Sports, T elevision Clip, V ertical V ideo, Vlog, and VR. The video category is an important feature of our dataset, which allo ws users to easily explore characteristics of different kinds of videos. For example, Gaming videos usually contain lots of fast motion, while many L yric videos hav e still backgrounds. Compression algorithms can be optimized in different ways based on such category information. V ideos within each category are further di vided into sub- groups based on their resolutions. Resolution is an important feature re vealing the diversity of user preferences, as well as the different behaviors of various de vices and platforms. So it would be helpful to treat resolution as an independent dimension. In our dataset, we provided 360 P , 480 P , 720 P , 1080 P for all categories (except for HDR and VR) and 4 K for HDR, Gaming, Sports, V ertical V ideo, Vlog, and VR. The final dataset contains 1500 video clips, each of 20 sec duration. All clips are in Raw YUV 4:2:0 format with constant framerate. Details of the sampling scheme are discussed in the next section. I V . D A TA S ET S A M P L I N G Selecting representative samples from millions of videos is challenging. Not only is the sheer number of videos a challenge to process and generate features, but also the long duration of some videos (which can be in hours) makes it that much more dif ficult. Compared with another large Animation Gaming Vlog Lecture Cover Song How To News Clip Lyric Video Music Video Television Clip Live Music Vertical Video VR HDR Sports Fig. 1. All categories in the Y ouT ube UGC dataset. scale dataset, K onvid-1k, this set has videos sampled from a collection that is 10 times larger (1.5M vs. 150K). A video in our dataset can be sampled at any time offset from its original content, instead of taking only the first 30 sec of the original like K on vid-1k. This makes the search space for our dataset 200 times bigger (an average video being 600s long). Due to this huge search space, computing third party metrics (like Blur metric used in K on vid-1k) is resource heavy or e ven infeasible. Large scale video compression/transcoding pipelines typ- ically di vide long videos into chunks and encode them in parallel. Maintaining the quality consistency among chunk boundaries becomes an issue in practice. Thus, besides the three common attributes (spatial, temporal, and color) sug- gested in [17], we propose the variation of complexity across the video as the fourth attribute that reflects inter -chunk quality consistency . W e made the length of video clips in our dataset 20 sec, which is long enough to inv olve multiple complexity variations. These 20 sec clips could be tak en from any se gment of a video. For the 5 million hours of videos therefore, there were 1.8 billion putati ve 20 sec clips. W e used Google’ s Borg system [18] to encode each video in the initial collection with FFmpeg H.264 encoder with PSNR stats enabled. The detailed compression settings we used are constant QP 20, fixed GOP size 14 frames with no B frames. Other reasonable settings will also work and bring similar features. The encoder reports on statistics from processing on a per frame basis. That diagnostic output was used to collect the following features ov er 20 sec clip stepped by 1 sec throughout each video: • Spatial In general spatial detail in a frame is correlated with the bits used to encode that frame, when encoded as an Intra frame. Over a 20 sec chunk therefore, we calculate our spatial complexity feature as the av erage I frame bitrate normalized by the frame area. Fig. 2 (a) and Fig. 2 (b) are frames for lo w and high spatial complexity respectiv ely . • Color W e define color complexity metric as the ratio between the av erage of mean Sum of Squared Error (SSE) in U and V channels to the mean SSE in Y chan- nel (obtained from PSNR measurements). A high score means complex color v ariations in the frame (Fig. 2 (d)), and a lo w score usually means a gray image with only luminance changes (Fig. 2 (c)). (a) Spatial = 0.08 (b) Spatial = 4.17 (c) Color = 0.00 (d) Color = 1.07 Fig. 2. V arious complexity in spatial and color spaces. (a) T emporal = 0.07 (b) T emporal = 1.03 (c) Chunk V ariation = 0.05 (d) Chunk V ariation = 22.26 Fig. 3. V arious complexity in temporal and chunk variation spaces, where the i th column is the row sum of the i th frame. • T emporal The number of bits used to encode a P frame is proportional to the temporal complexity of a sequence. Howe ver visual material with high spatial complexity (large I frames) tends to have large P frames because small motion compensation errors lead to large residuals. T o decouple this effect, we normalize the P frame bits by taking a ratio with the I frame bits, as a fair indicator of temporal complexity . Fig. 3.(a) and Fig. 3.(b) show row sum map of videos with low and high temporal complexity , where the i th column is the row sum of the i th frame. Frequent column color changes in Fig. 3.(b) imply fast motion among frames, while small changes in temporal domain leads to homogeneous regions in Fig. 3.(a). • Chunk V ariation T o explore quality variation within the video, we measured the standard deviation of compressed bitrates among all 1 sec chunks (also normalized by the frame size). If the scene is static or has a smooth motion, the chunk v ariation score should be close to 0, and the row sum map has no sudden changes (Fig. 3 (c)). Mul- tiple scene changes within a video (common in Gaming videos) will lead to multiple different regions on the row sum map (Fig. 3 (d)). Each original video and time offset combination forms a candidate clip for the dataset. W e sample from this sparse 4- D feature space as described belo w: 1) Normalize the feature space F by subtracting min( F ) and dividing with (percentile( F, 99) − min( F )) . 2) For each feature, divide the normalized range [0 , 1] uniformly into N (= 3) bins, where the last bin can go 0 1 2 3 4 Spatial 0 0.05 0.1 0.15 0.2 Probability All Sampled 0 0.2 0.4 0.6 0.8 Temporal 0 0.05 0.1 0.15 Probability All Sampled 0 0.5 1 Color 0 0.05 0.1 0.15 Probability All Sampled 0 5 10 15 20 Chunk Variation 0 0.2 0.4 0.6 Probability All Sampled Fig. 4. Complexity distributions (normalized) for initial and sampled datasets. ov er 1 and e xtend up to the maximum v alue. 3) Permutate all non-empty bins uniformly at random. 4) For current bin, randomly select one candidate clip and add it to the dataset if and only if: • The Euclidean distance of feature scores, between this clip and each of the already selected clips is greater than a certain threshold (0.3). • The original video that this clip belongs to, doesn’t already have another clip in the dataset. 5) Move to the next bin and repeat step 4 until desired number of samples are added. W e manually remov e mislabeled clips from the 50 selected clips, leaving with 15 to 25 selected clips from each (category , resolution) subgroup. W e design the sampling scheme to comprehensiv ely sample along all four feature spaces. As the complexity distribution (normalized) shown in Fig. 4, the feature scores in our sampled set are less spik y than that of the initial set. The sample distribution in color space is left ske wed, because many videos with high complexity in other feature spaces are uncolorful. T o ev aluate the coverage of the sampled set, we divide each pairwise space into 10 × 10 grid. The percentage of grids covered by the sampled set is sho wn in T able I, where the a verage coverage rate is 89%. T ABLE I C OV E RA GE R A T E S F O R PAI RW I S E F E A T U R E S PAC ES . T emporal Color Chunk V ariation Spatial 93% 90% 88% T emporal 92% 88% Color 83% V . C H A L L E N G E S O N U G C Q UA L I T Y A S S E S S M E N T As mentioned in Section I, most UGC videos are non- pristine, which may confuse traditional reference-based quality metrics. Fig. 5 and Fig. 6 show two clips (id: Vlog 720P-343d and Vlog 720P-670d) from our UGC dataset, as well as their compressed versions (by H.264 with CRF 32). W e can see that the corresponding PSNR, SSIM, and VMAF scores are bad, howe ver the original and compressed versions are similar (a) Original (SLEEQ = 0.21) (b) Compressed (SLEEQ = 0.18) Fig. 5. Misleading reference quality scores, where PSNR, SSIM, and VMAF are 29.02, 0.86, and 58.15. (a) Original (noise = 0.32) (b) Compressed (noise = 0.11) Fig. 6. Misleading reference scores caused by noise artifacts, where PSNR, SSIM, and VMAF are 30.28, 0.63, and 31.43. visually . Both the original and compressed versions in Fig. 5 contain significant artifacts, while the compressed version in Fig. 6 actually has less noise artifacts than the original one. The low reference quality scores are mainly caused by not correctly accounting for artifacts in originals. Although no existing quality metric can perfectly ev aluate quality degradation between the original and compressed ver - sions, a possible way is to identify quality issues separately . For example, since we can tell the major quality issue for videos in Fig. 5 is distortions in natural scene, we can compute SLEEQ [6] scores on the original and compressed versions independently , and use their differences to ev aluate the quality degradation. In this case, SLEEQ scores for the original and compressed versions are 0.21 and 0.18, respectively , which implies that compression didn’t introduce noticeable quality degradation. W e can get the same conclusion for videos in Fig. 6 by applying a noise detector [4]. W e analyze perceptual quality of our UGC dataset based on existing no-reference metrics. Current ev aluation includes three no-reference metrics: Noise, Banding [5], and SLEEQ. The first two metrics are artif act-oriented, which can be interpreted as meaningful e xtents of specific compression issues. The third metric (SLEEQ) is designed to measure compression artifacts on natural scenes. All these metrics hav e good correlations with human ratings, and outperform other Noise 0 0.5 1 0 100 200 300 400 500 600 Banding 0.2 0.4 0.6 0.8 0 200 400 600 800 1000 1200 SLEEQ 0 0.5 1 0 200 400 600 800 Fig. 7. Distribution of no-reference quality metrics. 0.2 0.4 0.6 0.8 Noise 0 0.05 0.1 0.15 0.2 0.25 0.3 0.35 0.4 0.45 Normalized Sample Ratio Animation Cover LiveMusic UgcVert 0.4 0.6 0.8 Banding 0 0.01 0.02 0.03 0.04 0.05 0.06 0.07 0.08 0.09 Normalized Sample Ratio Animation Cover LiveMusic UgcVert 0.2 0.4 0.6 0.8 SLEEQ 0 0.05 0.1 0.15 0.2 Normalized Sample Ratio Animation Cover LiveMusic UgcVert Fig. 8. Quality comparison for various categories. related no-reference metrics. Fig. 7 shows the distribution for the three quality metric scores (normalized to [0 , 1] where lower score is better) on our UGC dataset. W e can see that heavy artifacts are not detected in most videos, which in some sense tells us that the overall quality of videos uploaded to Y ouT ube is good. Fig. 8 shows some quality issues within individual categories. For example, Animation videos seem to hav e more banding artifacts than others, and nature scenes in Vlog tend to contain more artifacts than other categories. V I . C O N C L U S I O N This paper introduced a large scale dataset for UGC videos, which highly represents the videos uploaded to Y ouT ube. A nov el sampling scheme was proposed to extract features from millions of video samples, and we in vestigated complexity distribution as well as quality distribution for videos across 15 categories. The difficulties of UGC quality assessment were cited. An important open question remains regarding how to ev aluate quality degradation caused by compression for UGC (non-pristine reference). W e hope this dataset can inspire and facilitate research on practical requirements of video compression and quality assessment. R E F E R E N C E S [1] Chao Chen, Y ao-Chung Lin, Stev e Benting, and Anil Kokaram, “Op- timized transcoding for large scale adaptive streaming using playback statistics, ” in 2018 IEEE International Conference on Image Processing , 2018. [2] Zhou W ang, Alan C. Bovik, Hamid R. Sheikh, and Eero P . Simoncelli, “Image quality assessment: From error visibility to structural similarity , ” IEEE T ransactions on Image Pr ocessing , vol. 13, no. 4, 2004. [3] Zhi Li, Anne Aaron, Ioannis Katsavounidis, Anush Moorthy , and Megha Manohara, “T ow ard a practical perceptual video quality metric, ” Blog, Netflix T echnolo gy , 2016. [4] Chao Chen, Mohammad Izadi, , and Anil Kokaram, “ A no-reference perceptual quality metric for videos distorted by spatially correlated noise, ” A CM Multimedia , 2016. [5] Y ilin W ang, Sang-Uok Kum, Chao Chen, and Anil Kokaram, “ A perceptual visibility metric for banding artifacts, ” IEEE International Confer ence on Image Pr ocessing , 2016. [6] Deepti Ghadiyaram, Chao Chen, Sasi Inguva, and Anil Kokaram, “ A no- reference video quality predictor for compression and scaling artifacts, ” IEEE International Conference on Image Pr ocessing , 2017. [7] Sami Abu-El-Haija, Nisarg Kothari, Joonseok Lee, Paul Natsev , George T oderici, Balakrishnan V aradarajan, and Sudheendra Vijayanarasimhan, “Y outube-8m: A large-scale video classification benchmark, ” arXiv pr eprint arXiv:1609.08675 , 2016. [8] Chunhui Gu, Chen Sun, David A. Ross, Carl V ondrick, Caroline Pantofaru, Y eqing Li, Sudheendra V ijayanarasimhan, George T oderici, Susanna Ricco, Rahul Sukthankar, Cordelia Schmid, and Jitendra Malik, “ A va: A video dataset of spatio-temporally localized atomic visual actions, ” Pr oceedings of the Confer ence on Computer V ision and P attern Recognition , 2018. [9] Xiph.org, “V ideo test media, ” https://media.xiph.org/video/derf/. [10] K. Seshadrinathan, R. Soundararajan, A. C. Bovik, and L. K. Cormack, “Study of subjectiv e and objectiv e quality assessment of video, ” IEEE T ransactions on Image Pr ocessing , vol. 19, no. 6, 2010. [11] C. G. Bampis, Z. Li, A. K. Moorthy , I. Katsavounidis, A. Aaron, and A. C. Bovik, “Live netflix video quality of experience database, ” http: //liv e.ece.utexas.edu/research/LIVE NFLXStudy/index.html, 2016. [12] D. Ghadiyaram, J. P an, and A.C. Bovik, “Li ve mobile stall video database-ii, ” http://live.ece.ute xas.edu/research/LIVEStallStudy/ index.html, 2017. [13] Haiqiang W ang, Ioannis Katsav ounidis, Xin Zhou, Jiwu Huang, Man- On Pun, Xin Jin, Ronggang W ang, Xu W ang, Y un Zhang, Jeonghoon Park, Jiantong Zhou, Shawmin Lei, Sam Kwong, and C.-C. Jay Kuo, “V ideoset, ” http://dx.doi.org/10.21227/H2H01C, 2016. [14] Zeina Sinno and Alan C. Bovik, “Lar ge scale subjectiv e video quality study , ” IEEE International Conference on Image Pr ocessing , 2018. [15] Vlad Hosu, Franz Hahn, Mohsen Jenadeleh, Hanhe Lin, Hui Men, T am ´ as Szir ´ anyi, Shujun Li, and Dietmar Saupe, “The konstanz natural video database, ” http://database.mmsp- kn.de, 2017. [16] Amit Singhal, “Introducing the knowledge graph: Things, not strings, ” 2016. [17] Stefan Winkler , “ Analysis of public image and video databases for quality assessment, ” IEEE JOURN AL OF SELECTED TOPICS IN SIGNAL PROCESSING , vol. 6, no. 6, 2012. [18] Abhishek V erma, Luis Pedrosa, Madhukar R. K orupolu, David Oppen- heimer , Eric T une, and John W ilkes, “Large-scale cluster management at Google with Borg, ” in Pr oceedings of the European Conference on Computer Systems (Eur oSys) , Bordeaux, France, 2015.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment