A deep learning-based method for prostate segmentation in T2-weighted magnetic resonance imaging

We propose a novel automatic method for accurate segmentation of the prostate in T2-weighted magnetic resonance imaging (MRI). Our method is based on convolutional neural networks (CNNs). Because of the large variability in the shape, size, and appea…

Authors: Davood Karimi, Golnoosh Samei, Yanan Shao, Septimiu Salcudean

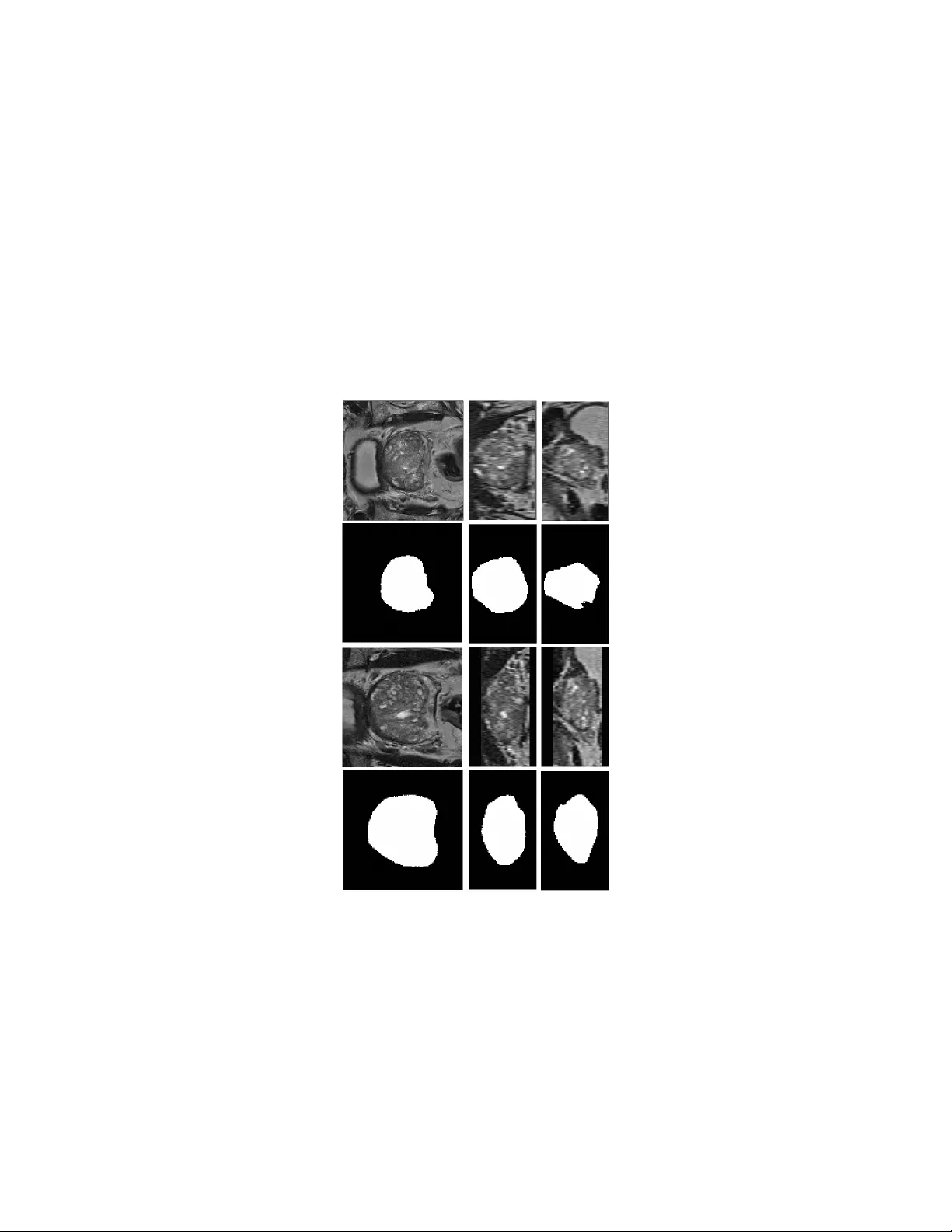

A novel deep learning-based method for prostate segmentation in T2-weighted magnetic resonance imaging

Davood Karimi, Golnoosh Samei, Yanan Shao, Septimiu Salcudean
Department of Electrical and Computer Engineering, The University of British Columbia, Vancouver, BC, Canada

Abstract

We propose a novel automatic method for accurate segmentation of the prostate in T2-weighted magnetic resonance imaging (MRI). Our method is based on convolutional neural networks (CNNs). Because of the large variability in the shape, size, and appearance of the prostate and the scarcity of annotated training data, we suggest training two separate CNNs. A global CNN will determine a prostate bounding box, which is then resampled and sent to a local CNN for accurate delineation of the prostate boundary. This way, the local CNN can effectively learn to segment the fine details that distinguish the prostate from the surrounding tissue using the small amount of available training data. To fully exploit the training data, we synthesize additional data by deforming the training images and segmentations using a learned shape model. We apply the proposed method on the PROMISE12 challenge dataset and achieve state-of-the-art results. Our proposed method generates accurate, smooth, and artifact-free segmentations. On the test images, we achieve an average Dice score of 90.6 with a small standard deviation of 2.2, which is superior to all previous methods. Our two-step segmentation approach and data augmentation strategy may be highly effective in segmentation of other organs from small amounts of annotated medical images.

1 Introduction

Segmentation of the prostate in T2-weighted magnetic resonance imaging (MRI) is an essential step for many tasks in treatment planning and intervention [22, 53]. Automatic segmentation methods are highly desirable because they can increase the speed and reproducibility of the segmentation.
In the past decades, many studies have proposed (semi-)automatic methods for prostate segmentation in T2-weighted MRI [51, 12, 24]. However, fully automatic prostate segmentation is very challenging because of the inter-patient variability in prostate size, shape, and appearance, variations in scanners and scanning protocols, and the similarity of the prostate to the surrounding tissue.

A large number of the methods proposed for prostate segmentation in T2-weighted MRI use atlases [19, 31]. In these methods, a number of MR images with known prostate segmentation are registered to the target image. Mutual information, cross-correlation, image feature correspondence, and the image gradient are among the image similarity metrics used for registration. The deformed prostate segmentation masks of the atlas images are then combined to infer the segmentation of the prostate in the target image. Therefore, atlas-based methods turn the segmentation problem into a registration problem. A critical choice in these methods is how to combine/fuse the registered segmentation masks. One can rank the segmentation masks based on some image similarity metric and choose the most similar segmentation, or use more elaborate methods such as majority voting, simultaneous truth and performance level estimation (STAPLE), or iterative label fusion [6, 19, 21]. In general, atlas-based methods are computationally expensive and can produce poor segmentations, especially if the target image is very different from the population of images in the atlas. To achieve acceptable results, some atlas-based methods rely on additional steps based on statistical shape models [31, 30, 10]. Moreover, most atlas-based methods follow a global registration strategy, which makes them unnecessarily sensitive to anatomical features that are far away from the prostate and increases the computational time.
To overcome these shortcomings, some studies have proposed two-step registration approaches in which a global registration is first performed to identify the location of the prostate in the image. In the second stage, a local registration is performed by focusing on the prostate region [50, 34].

Another class of methods includes those based on deformable models such as active shape models and level sets [52, 25, 18, 44, 47]. A great appeal of these methods is that they are based on sound theory from the physical sciences and mathematics. However, these methods can be very sensitive to initialization [45], and a good initialization may be hard to obtain. Moreover, the quality of segmentation can be poor, especially where the edge information is not strong. Therefore, some of these methods depend on manual initialization or rely on other prior information in the form of shape models to regularize or refine the generated segmentation [48, 5].

Some studies have proposed methods based on graph cuts [8, 27]. Although these methods are versatile, they have their own limitations. For example, they produce poor results at the locations of weak edges and typically need post-processing steps in order to obtain satisfactory results. Recently, some studies have shown that the performance of graph cut-based methods can be substantially improved by using active contours and by formulating the graph cut method in terms of super-voxels instead of raw voxel intensities [41, 42].

Because of the difficulties faced by the methods mentioned above, a large number of studies have tried to combine the advantages of two or more of these frameworks. Many of these methods also use some type of machine learning to achieve improved results. For example, several studies have combined probabilistic learning of the distribution of prostate texture or voxel intensities with shape models [43, 29, 1].
Supervised and unsupervised machine learning methods such as random forests and clustering methods have also been combined with deformable models and atlas-based methods for prostate segmentation in T2-weighted MRI [28, 11, 9]. One study has suggested using shape models in the framework of marginal space learning for prostate segmentation in T2-weighted MRI [2].

Despite the great efforts and numerous methods that have been proposed in recent years, automatic segmentation of the prostate in T2-weighted MRI still remains a challenge. Most of the proposed methods achieve much lower performance than manual segmentation. If the test images are very different from the images used for model development, e.g., due to inter-patient variability or different scanning protocols, the performance of these methods can deteriorate substantially.

In recent years, deep convolutional neural networks (CNNs) have achieved unprecedented results in segmentation of natural images [26, 3, 33]. Compared to the more traditional segmentation methods, the new CNN architectures that have been proposed for dense segmentation possess a number of highly desirable characteristics: 1) they have a very high capacity that enables them to effectively describe the large variations that exist in the training data, 2) they are able to capture local and global information at different resolutions simultaneously, 3) in many applications they can achieve quite satisfactory results without the need for additional post-processing steps to refine their segmentation, which also implies that they can be trained end-to-end as a single module, and 4) even though they have long training times, their inference is very fast. Consequently, many studies have recently employed CNNs for segmentation of medical images [23] and, in general, they have reported very promising results.
For segmentation of the prostate in T2-weighted MRI, in particular, deep CNNs with volumetric convolutional filters have been shown to achieve very good results [32]. One study resampled the ground-truth segmentation to generate prostate masks with different resolutions for more effective training of a deep CNN [49]. The trained CNN was applied on sub-volumes of the input image, and the probability maps estimated for all sub-volumes were averaged to obtain the final prostate segmentation. That method achieved state-of-the-art results, which was also attributed to the use of short and long residual connections in the network. Another study proposed a deep CNN with 2D and 3D residual connections and achieved state-of-the-art results [37]. Karimi et al. proposed to segment the prostate in MR images using a novel network architecture that predicted the location, orientation, and the coefficients of a statistical shape model [16].

In this paper, we propose a new CNN-based method for segmentation of the prostate in T2-weighted MR images. We argue that the difficulty in achieving human-level performance in this task is due to the large variability in the shape, size, and appearance of the prostate in these images. Based on the results achieved by CNNs in segmentation of natural images, we think that, in theory, they should be able to achieve human-level performance in prostate segmentation in T2-weighted MRI. However, this is not easy to achieve in practice because it is hard to effectively train large CNNs with small amounts of annotated data. To reduce this gap and effectively utilize the capacity of deep CNNs with limited training data, we suggest two strategies:

1. We suggest training two CNNs. The first, global, CNN will accept the entire image as its input and generate a soft prostate segmentation mask. This initial segmentation is then used to determine the location and the extent of the prostate in the image. A second, local, CNN will then work on a sub-volume of the image that is resampled from the input image in such a way that the prostate is approximately at the center of the volume and has an approximately fixed size. This will allow the local CNN to focus on learning features that are most relevant for accurate delineation of the prostate boundary, which is a major challenge due to similarity with the surrounding tissue, large variability, and scarcity of training data.

2. We use massive data augmentation for training of the two CNNs. Here, our argument is that even 50 training images are not sufficient to train large CNNs. Therefore, we synthesize additional realistic data by deforming the training images and their segmentation masks using displacement fields that are computed based on a prostate shape model. To further improve the training and avoid local minima, random displacements and noise are introduced during training. Moreover, using cross-validation we will identify the images that are most difficult to segment and will use this information in training our final model.

2 Materials and methods

A schematic representation of the steps involved in our fully automatic segmentation method is shown in Figure 1. Considering the great variability in the shape, size, texture, and appearance of the prostate and its surrounding organs and the limited availability of training data, we suggest learning two separate CNNs. After some pre-processing steps, the input image is passed to a global CNN, which generates an initial soft segmentation of the prostate. We will use this initial segmentation, along with a prostate shape model, to identify the location and the extent of the prostate in the image.
Using this information, we extract a volume of interest that contains the prostate and send it to a second, local, CNN that accurately delineates the prostate boundary. Basic thresholding and post-processing steps on the output probability map of the local CNN will generate the final prostate segmentation mask.

In the following sub-sections, we first provide a description of the steps involved in our proposed segmentation method. We will then provide more detailed information on various steps.

2.1 Steps of the proposed segmentation method

As shown in Figure 1, given a test image the following steps are performed to generate the prostate segmentation mask.

Pre-processing: First, a bias correction is applied to the image using the N4ITK algorithm [39, 46]. The image is resampled to an isotropic voxel size of 1 mm³. A volume of size 128 × 128 × 72 voxels is then created, using either centered cropping or zero-padding if the image is, respectively, larger or smaller than this desired size in each dimension. Finally, the image is normalized such that the voxels have a mean of zero and a standard deviation of one.

Determining the prostate location and extent: The pre-processed 128 × 128 × 72-voxel image is sent to the global CNN. The output of this network is a probability map where the value of each voxel shows the probability of that voxel belonging to the prostate. As we will show in the Results section, this probability map itself provides a good segmentation of the prostate. However, in our method we will treat it as an initial segmentation that is used only to identify the location and the extent of the prostate in the image. The center of mass of this segmentation can give us an accurate estimation of the location of the center of the prostate. However, in order to obtain an accurate estimation of the extent of the prostate, we need to remove the possible outlier voxels.
For this purpose, we fit a prostate shape model to the learned probability map using a particle swarm optimization algorithm.

Fine segmentation of the prostate: Given the information on the location and the extent of the prostate from the above step, the pre-processed MR image is resampled again to generate an input for the local CNN. Similar to the global CNN, the local CNN has an input size of 128 × 128 × 72 voxels. However, whereas the input to the global CNN has an isotropic voxel size of 1 mm³, the input to the local CNN is generated such that the prostate is at the center and has a pre-determined size. Specifically, in this stage we resample the image such that the prostate has a size of approximately 80 × 80 × 48 voxels. For example, if the prostate size estimated using the global CNN is 40 × 32 × 36 mm, the MR image is resampled to have a voxel size of 0.50 × 0.40 × 0.75 mm such that the prostate in the resampled image has, approximately, the desired size of 80 × 80 × 48 voxels. This resampled volume of interest is passed to the local CNN, which estimates a more accurate segmentation of the prostate.

Post-processing: The probability map produced by the local CNN, thresholded at 0.50, constitutes our penultimate prostate segmentation. Our post-processing consists only of applying an opening operation (erosion followed by dilation) [38] using a spherical structuring element with a radius of 2 mm. This operation will remove the occasional non-smooth artifacts on the surface of the prostate mask obtained by thresholding the probability map.

Figure 1: A schematic representation of the steps involved in the proposed segmentation method.

2.2 CNN architecture

The CNN architecture used in this study takes advantage of many effective CNN design practices. A schematic representation of this architecture is shown in Figure 2.
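Looking back at the pre-processing step of Section 2.1, the crop-or-pad and normalization operations can be sketched as follows. This is only a minimal NumPy sketch under stated assumptions, not the authors' implementation: the function names `crop_or_pad_centered` and `normalize` are hypothetical, bias correction and resampling to 1 mm³ are assumed to have been done beforehand, and normalizing after zero-padding (so that padded voxels enter the statistics) simply follows the order in which the text lists the operations.

```python
import numpy as np

TARGET_SHAPE = (128, 128, 72)  # network input size stated in the text


def crop_or_pad_centered(volume, target_shape=TARGET_SHAPE):
    """Center-crop axes that are too large and zero-pad axes that are too small."""
    out = np.zeros(target_shape, dtype=volume.dtype)
    src, dst = [], []
    for size, target in zip(volume.shape, target_shape):
        if size >= target:
            # Axis too large: keep the central `target` voxels.
            start = (size - target) // 2
            src.append(slice(start, start + target))
            dst.append(slice(0, target))
        else:
            # Axis too small: place the data in the middle of a zero volume.
            start = (target - size) // 2
            src.append(slice(0, size))
            dst.append(slice(start, start + size))
    out[tuple(dst)] = volume[tuple(src)]
    return out


def normalize(volume, eps=1e-8):
    """Shift and scale voxel intensities to zero mean and unit standard deviation."""
    volume = volume.astype(np.float64)
    # eps (an assumption) guards against division by zero for constant volumes.
    return (volume - volume.mean()) / (volume.std() + eps)
```

Calling `normalize(crop_or_pad_centered(image))` on an image that has already been resampled to 1 mm³ would then give a 128 × 128 × 72 array of the kind passed to the global CNN.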
The network has a contracting and an expanding path that enable it to learn features at fine and coarse resolutions [35, 4]. In the contracting path, the network computes convolutional feature maps with kernels of increasing size k ∈ {3, 5, ..., 2d + 1} and stride s ∈ {1, 2, ..., d}. The network shown in Figure 2 is for d = 4. These convolutional feature maps will capture different levels of detail directly in the source image. In each resolution level, a residual module with short and long skip connections is used to increase the expressiveness of these features and the network's capacity, and also to make the training faster [14, 7]. Each of the computed feature maps is passed forward to all lower levels by applying convolutional filters with the proper stride. This will promote feature reuse and make the training easier by reducing the number of parameters and improving the gradient flow [15]. Therefore, in the contracting path the number of feature maps increases geometrically while their size becomes smaller.

The computed features then go through a series of up-convolutions in order to build up the prostate segmentation mask. This process will start from the low-resolution feature maps of the contracting path, which mostly contain coarse information. In order to aid recovery of the fine detail, the multi-resolution information available in the feature maps of the contracting path is re-introduced into the expanding path by concatenating those feature maps to the feature maps of the expanding path. Residual modules, similar to those in the contracting path, are also applied here in the expanding path. All convolutional and up-convolutional layers, except for those applied directly on the input MR image, use kernels of size 3. Furthermore, all these layers, except for the last, are followed by rectified linear units [20].
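To make the kernel and stride sets of the contracting path concrete, the short sketch below enumerates them for a given depth d. It assumes that the i-th kernel size is paired with the i-th stride, which the text suggests but does not state explicitly; the helper name `contracting_path_kernels` is hypothetical.

```python
def contracting_path_kernels(d):
    """Return (kernel size, stride) pairs for resolution levels 1..d.

    Kernel sizes follow k = 3, 5, ..., 2d + 1 and strides s = 1, 2, ..., d.
    Pairing them level by level is an assumption made for illustration.
    """
    return [(2 * level + 1, level) for level in range(1, d + 1)]


# Depths mentioned in the text: 3 (local CNN), 4 (Figure 2), 5 (global CNN).
for depth in (3, 4, 5):
    print(depth, contracting_path_kernels(depth))
```

For d = 4 this lists kernel sizes 3, 5, 7, and 9 with strides 1, 2, 3, and 4, matching the sets given for the network in Figure 2.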
The final feature map, which has the same dimensions as the source image, is passed to a final convolutional layer followed by a soft-max operation to produce a pixel-wise probability map of the prostate.

Both CNNs follow the same architecture as shown in Figure 2, except that they have different depths. We found that for the global CNN the results improved as we increased the network depth to d = 5. We think this is because the low-resolution feature maps have a larger field of view that enables them to learn features that better represent the location and extent of the prostate in the image. For the local CNN, however, a shallower network with a depth of d = 3 led to better results. This is because the input to this network is a resampled volume of interest in which the prostate is approximately at the center and has approximately a fixed pre-determined size. Therefore, the local CNN does not have to learn to estimate the location or the extent of the prostate but only to delineate the prostate boundary. Consequently, for the local CNN increasing the depth unnecessarily increases the number of network parameters and introduces coarse features that do not contribute substantially to the delineation of the prostate boundary.

Figure 2: A schematic representation of the CNN architecture used in this study. The network shown in this figure has a depth of 4. The global and local networks used in this study had depths of 5 and 3, respectively.

2.3 Training and evaluation

We used the data from the PROMISE12 challenge [24]. This dataset consists of 50 training and 30 test images, which have been acquired at different centers and using different scanners and scanning protocols. The dataset is very challenging because of the large variation in the voxel size, field of view, and dynamic range of the images as well as in the appearance of the prostate. Approximately half of the images include an endorectal coil.
We used a five-fold cross-validation approach to determine the network size, number of features, and other important training parameters such as the learning rate schedule and the dropout rate. Each time, the two CNNs were trained on 40 of the images and evaluated on the remaining 10 images. Having found the optimal network size and learning parameters using cross-validation, the final global and local CNNs were trained on all 50 training images and applied on the 30 test images. The final global and local CNNs were both initialized at random using He's method [13] and trained using Adam [17] with a batch size of one to maximize the Dice score between the output probability map of the network and the ground-truth segmentation mask. Training was performed for 1000 epochs. The starting learning rate was 10⁻⁵, which was multiplied by 0.5 when the training cost function plateaued.

In order to cope with the limited training data, we use a data augmentation scheme based on a learned shape model. In this strategy, the training images and their labels are deformed using displacements suggested by a learned shape model. Moreover, we add white Gaussian noise with a standard deviation of 0.03 to each image at each training step. To further reduce the risk of overfitting, we use dropout [40] with a rate of 0.15 on all convolutional layers in the network. This dropout rate significantly reduced the overfitting such that the segmentation performance on the training and validation images was almost equal.

3 Results and discussion

Table 1 shows the average and standard deviation of the Dice score on the training, validation, and test images. In order to show the effect of the different steps in our segmentation pipeline, we report the Dice score after each step. Our proposed method achieves a high final Dice score of 90.6 with a low standard deviation of 2.2.
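The Dice score serves here both as the training objective and as the evaluation metric. The exact formulation used for training is not stated in the text, so the following is only a sketch of one common "soft" Dice definition that accepts a probability map as well as a binary mask; the function name `soft_dice` and the smoothing term `eps` are illustrative assumptions.

```python
import numpy as np


def soft_dice(prob, mask, eps=1e-7):
    """Dice overlap between a probability map (or binary mask) and a binary mask.

    Returns a value in [0, 1]; multiply by 100 to obtain the percentage
    scale on which the Dice scores are reported.
    """
    prob = np.asarray(prob, dtype=np.float64)
    mask = np.asarray(mask, dtype=np.float64)
    intersection = np.sum(prob * mask)
    # eps (an assumption) keeps the ratio defined when both inputs are empty.
    return (2.0 * intersection + eps) / (np.sum(prob) + np.sum(mask) + eps)
```

When training by gradient descent, maximizing this quantity is typically implemented as minimizing `1 - soft_dice(prob, mask)`. For evaluation, the probability map would first be thresholded (at 0.50 in this method) before computing the score.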
|            | Global CNN | Local CNN  | Post-processing |
|------------|------------|------------|-----------------|
| Training   | 85.0 ± 3.9 | 91.0 ± 2.3 | 91.2 ± 2.2      |
| Validation | 84.9 ± 4.1 | 90.4 ± 2.3 | 91.2 ± 2.0      |
| Test       | 85.4 ± 3.6 | 90.2 ± 2.4 | 90.6 ± 2.2      |

Table 1: Mean ± standard deviation of the Dice score for training, validation, and test images after each step in the segmentation pipeline.

A test image and its prostate segmentation masks produced by the global and local CNNs in axial, sagittal, and coronal directions are shown in Figure 3. The segmentation accuracy of the global CNN is not very high. The Dice score achieved by the global CNN is 85.4 ± 3.6, which is substantially lower than that achieved by the local CNN (90.2 ± 2.4). Nonetheless, as we mentioned above, our method uses the segmentation provided by the global CNN only to estimate the location and extent of the prostate in the image. Our results show that it is possible to accurately extract this information from the probability map produced by the global CNN. The errors in the estimation of the start and end of the prostate in the axial, sagittal, and coronal directions were, respectively, 0.8 ± 0.9, 1.4 ± 1.5, and 1.2 ± 1.0 millimeters, and the errors in estimating the size of the prostate in these three directions were 1.6 ± 1.4, 2.2 ± 2.0, and 2.1 ± 1.7 millimeters, respectively. These errors are well within the acceptable range for our intended goal.

Using the information on the size and location of the prostate in the image, a 128 × 128 × 72-voxel volume is resampled such that the prostate is approximately at the center and has a size of 80 × 80 × 48 voxels. As can be seen in the example image shown in Figure 3, depending on the size of the MR image and the size and location of the prostate, this may lead to stretching or shrinking of the image in different directions and may need cropping or zero-padding.
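The resampling for the local CNN amounts to choosing a per-axis voxel size so that the estimated prostate extent spans the target number of voxels. The sketch below illustrates this arithmetic; the function names are hypothetical, and the actual interpolation of the image onto the new grid is left out.

```python
TARGET_PROSTATE_VOXELS = (80, 80, 48)  # desired prostate size in the local input
LOCAL_INPUT_SHAPE = (128, 128, 72)     # input size of the local CNN


def local_voxel_size(prostate_extent_mm, target_voxels=TARGET_PROSTATE_VOXELS):
    """Voxel size (mm) per axis so the prostate spans `target_voxels` voxels."""
    return tuple(extent / n for extent, n in zip(prostate_extent_mm, target_voxels))


def local_field_of_view(voxel_size_mm, input_shape=LOCAL_INPUT_SHAPE):
    """Physical extent (mm) per axis covered by the resampled local volume."""
    return tuple(v * n for v, n in zip(voxel_size_mm, input_shape))


# Example from Section 2.1: a prostate estimated at 40 x 32 x 36 mm.
spacing = local_voxel_size((40.0, 32.0, 36.0))
print(spacing)  # (0.5, 0.4, 0.75)
print(local_field_of_view(spacing))
```

Because the voxel size generally differs between axes, the resampled volume is anisotropic, which is the stretching or shrinking mentioned above. Wherever the computed field of view extends beyond the original image, the volume has to be zero-padded.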
However, this resampling ensures that the prostate is at the center of the volume and has a pre-determined size, allowing the local CNN to achieve superior segmentation accuracy. As can be seen in Figure 3, the local CNN generates an accurate segmentation mask that usually does not need any post-processing. However, in our method we always perform a morphological opening operation that removes any small non-smooth features caused by hard-thresholding the output probability map of the local CNN. As shown in Table 1, this operation has a noticeable positive effect on the average Dice coefficient.

Figure 3: The prostate segmentation masks produced by the global and local CNNs. The first row shows the 128 × 128 × 72-voxel image sent to the global CNN. The second row shows the segmentation mask produced by the global CNN. The third row shows the volume resampled from the input image to be used by the local CNN. The last row shows the prostate segmentation mask produced by the local CNN.

More examples of the performance of the proposed segmentation algorithm on test images are shown in Figure 4. They further demonstrate that the proposed method is able to accurately segment the prostate. As the numbers in Table 1 suggest, one of the reasons for the success of the proposed method is the two-step segmentation approach that enables the local CNN to achieve superior results. As we argued above, the variability in the shape, size, and appearance of the prostate and its surrounding anatomy is very high. Although large CNNs have the capacity to capture such complexity, the amount of training data is not large enough to realize their full potential. Therefore, in the absence of very large datasets such as those available for natural images [36], it will be very difficult to achieve segmentation accuracies close to those of human experts.
Our strategy of training two separate CNNs eases the situation by introducing a division of labor in which the global CNN is only expected to produce a segmentation that is accurate enough to estimate the location and size of the prostate, while the local CNN is given a well-formatted input that makes it easy to accurately segment the prostate boundary.

Figure 4: The prostate segmentation masks produced by the global and local CNNs. The first row shows the 128 × 128 × 72-voxel image sent to the global CNN. The second row shows the segmentation mask produced by the global CNN. The third row shows the volume resampled from the input image to be used by the local CNN. The last row shows the prostate segmentation mask produced by the local CNN.

Another important factor that contributes to the performance of our proposed method is data augmentation. In order to examine the impact of data augmentation, we trained the CNNs without any data augmentation and achieved a final Dice score of 86.1 ± 6.6. Another widely used data augmentation strategy is to deform the image and its segmentation mask using a random deformation field. We found that this strategy was also very useful in improving the performance of our proposed method, but it achieved a slightly lower Dice score (89.3 ± 4.0) than our augmentation approach based on shape models.

Another important factor in our optimization is the careful choice of the dropout rate. Dropout is known to be essential in networks with large fully-connected layers, whereas its importance is usually assumed to be less significant in convolutional layers [40]. However, our experiments showed that, especially for achieving superior performance in the local CNN, it was necessary to choose a dropout rate of 10-20%.
This ensured that training and validation performance were very close and the network converged nicely, whereas without dropout our method achieved a good performance on the training set but […]

4 Conclusion

Deep learning models have been very successful for medical image segmentation. Given the small size of typical datasets, training of these models is challenging. In this work, we showed that for prostate segmentation in MRI, an effective approach is to perform the segmentation in two stages: in Stage One the prostate is located and roughly segmented; in Stage Two, the prostate is accurately delineated using the bounding box obtained from Stage One. A procedure similar to that proposed in this paper for prostate segmentation in MRI may be useful for other medical image segmentation applications.

References

[1] Philip D Allen, James Graham, David C Williamson, and Charles E Hutchinson. Differential segmentation of the prostate in MR images using combined 3D shape modelling and voxel classification. In Biomedical Imaging: Nano to Macro, 2006. 3rd IEEE International Symposium on, pages 410–413. IEEE, 2006.

[2] Neil Birkbeck, Jingdan Zhang, Martin Requardt, Berthold Kiefer, and Peter Gall. Region-specific hierarchical segmentation of MR prostate using discriminative learning.

[3] Liang-Chieh Chen, George Papandreou, Iasonas Kokkinos, Kevin Murphy, and Alan L. Yuille. DeepLab: Semantic image segmentation with deep convolutional nets, atrous convolution, and fully connected CRFs. CoRR, abs/1606.00915, 2016.

[4] Özgün Çiçek, Ahmed Abdulkadir, Soeren S Lienkamp, Thomas Brox, and Olaf Ronneberger. 3D U-Net: learning dense volumetric segmentation from sparse annotation. In International Conference on Medical Image Computing and Computer-Assisted Intervention, pages 424–432. Springer, 2016.

[5] Timothy F Cootes, Andrew Hill, Christopher J Taylor, and Jane Haslam. The use of active shape models for locating structures in medical images. In Biennial International Conference on Information Processing in Medical Imaging, pages 33–47. Springer, 1993.

[6] Jason A Dowling, Jurgen Fripp, Shekhar Chandra, Josien P W Pluim, Jonathan Lambert, Joel Parker, James Denham, Peter B Greer, and Olivier Salvado. Fast automatic multi-atlas segmentation of the prostate from 3D MR images. In International Workshop on Prostate Cancer Imaging, pages 10–21. Springer, 2011.

[7] Michal Drozdzal, Eugene Vorontsov, Gabriel Chartrand, Samuel Kadoury, and Chris Pal. The importance of skip connections in biomedical image segmentation. In International Workshop on Large-Scale Annotation of Biomedical Data and Expert Label Synthesis, pages 179–187. Springer, 2016.

[8] Jan Egger. PCG-Cut: graph driven segmentation of the prostate central gland. PloS one, 8(10):e76645, 2013.

[9] Qinquan Gao, Akshay Asthana, Tong Tong, Yipeng Hu, Daniel Rueckert, and Philip Edwards. Hybrid decision forests for prostate segmentation in multi-channel MR images. In Pattern Recognition (ICPR), 2014 22nd International Conference on, pages 3298–3303. IEEE, 2014.

[10] Y. Gao, R. Sandhu, G. Fichtinger, and A. R. Tannenbaum. A coupled global registration and segmentation framework with application to magnetic resonance prostate imagery. IEEE Transactions on Medical Imaging, 29(10):1781–1794, Oct 2010.

[11] Soumya Ghose, Jhimli Mitra, Arnau Oliver, R Martí, Xavier Lladó, Jordi Freixenet, Joan C Vilanova, Désiré Sidibé, and Fabrice Meriaudeau. A random forest based classification approach to prostate segmentation in MRI. MICCAI Grand Challenge: Prostate MR Image Segmentation, 2012, 2012.

[12] Soumya Ghose, Arnau Oliver, Robert Martí, Xavier Lladó, Joan C Vilanova, Jordi Freixenet, Jhimli Mitra, Désiré Sidibé, and Fabrice Meriaudeau. A survey of prostate segmentation methodologies in ultrasound, magnetic resonance and computed tomography images. Computer Methods and Programs in Biomedicine, 108(1):262–287, 2012.

[13] Kaiming He, Xiangyu Zhang, Shaoqing Ren, and Jian Sun. Delving deep into rectifiers: Surpassing human-level performance on ImageNet classification. In IEEE International Conference on Computer Vision (ICCV) 2015, 2015.

[14] Kaiming He, Xiangyu Zhang, Shaoqing Ren, and Jian Sun. Deep residual learning for image recognition. In Computer Vision and Pattern Recognition (CVPR), 2016 IEEE Conference on, 2016.

[15] Gao Huang, Zhuang Liu, Kilian Q Weinberger, and Laurens van der Maaten. Densely connected convolutional networks. arXiv preprint arXiv:1608.06993, 2016.

[16] Davood Karimi, Golnoosh Samei, Claudia Kesch, Guy Nir, and Septimiu E Salcudean. Prostate segmentation in MRI using a convolutional neural network architecture and training strategy based on statistical shape models. International Journal of Computer Assisted Radiology and Surgery, 13(8):1211–1219, 2018.

[17] Diederik P. Kingma and Jimmy Ba. Adam: A method for stochastic optimization. In Proceedings of the 3rd International Conference on Learning Representations (ICLR), 2014.

[18] Matthias Kirschner, Florian Jung, and Stefan Wesarg. Automatic prostate segmentation in MR images with a probabilistic active shape model. MICCAI Grand Challenge: Prostate MR Image Segmentation, 2012, 2012.

[19] Stefan Klein, Uulke A. van der Heide, Irene M. Lips, Marco van Vulpen, Marius Staring, and Josien P. W. Pluim. Automatic segmentation of the prostate in 3D MR images by atlas matching using localized mutual information. Medical Physics, 35(4):1407–1417, 2008.

[20] Alex Krizhevsky, Ilya Sutskever, and Geoffrey E Hinton. ImageNet classification with deep convolutional neural networks. In Advances in Neural Information Processing Systems, pages 1097–1105, 2012.

[21] Thomas Robin Langerak, Uulke A van der Heide, Alexis NTJ Kotte, Max A Viergever, Marco Van Vulpen, and Josien PW Pluim. Label fusion in atlas-based segmentation using a selective and iterative method for performance level estimation (SIMPLE). IEEE Transactions on Medical Imaging, 29(12):2000–2008, 2010.

[22] Scott Leslie, Alvin Goh, Pierre-Marie Lewandowski, Eric Yi-Hsiu Huang, Andre Luis de Castro Abreu, Andre K Berger, Hamed Ahmadi, Isuru Jayaratna, Sunao Shoji, Inderbir S Gill, et al. 2050 Contemporary image-guided targeted prostate biopsy better characterizes cancer volume, Gleason grade and its 3D location compared to systematic biopsy. The Journal of Urology, 187(4):e827, 2012.

[23] Geert Litjens, Thijs Kooi, Babak Ehteshami Bejnordi, Arnaud Arindra Adiyoso Setio, Francesco Ciompi, Mohsen Ghafoorian, Jeroen A.W.M. van der Laak, Bram van Ginneken, and Clara I. Sánchez. A survey on deep learning in medical image analysis. Medical Image Analysis, 42(Supplement C):60–88, 2017.

[24] Geert Litjens, Robert Toth, Wendy van de Ven, Caroline Hoeks, Sjoerd Kerkstra, Bram van Ginneken, Graham Vincent, Gwenael Guillard, Neil Birbeck, Jindang Zhang, et al. Evaluation of prostate segmentation algorithms for MRI: the PROMISE12 challenge. Medical Image Analysis, 18(2):359–373, 2014.

[25] Xin Liu, DL Langer, MA Haider, TH Van der Kwast, AJ Evans, MN Wernick, and IS Yetik. Unsupervised segmentation of the prostate using MR images based on level set with a shape prior. In Engineering in Medicine and Biology Society, 2009. EMBC 2009. Annual International Conference of the IEEE, pages 3613–3616. IEEE, 2009.

[26] Jonathan Long, Evan Shelhamer, and Trevor Darrell. Fully convolutional networks for semantic segmentation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pages 3431–3440, 2015.

[27] D. Mahapatra and J. M. Buhmann. Prostate MRI segmentation using learned semantic knowledge and graph cuts. IEEE Transactions on Biomedical Engineering, 61(3):756–764, March 2014.

[28] Nasr Makni, Nacim Betrouni, and Olivier Colot. Introducing spatial neighbourhood in evidential c-means for segmentation of multi-source images: Application to prostate multi-parametric MRI. Information Fusion, 19(Supplement C):61–72, 2014. Special Issue on Information Fusion in Medical Image Computing and Systems.

[29] Nasr Makni, P. Puech, R. Lopes, A. S. Dewalle, O. Colot, and N. Betrouni. Combining a deformable model and a probabilistic framework for an automatic 3D segmentation of prostate on MRI. International Journal of Computer Assisted Radiology and Surgery, 4(2):181, Dec 2008.

[30] Sébastien Martin, Vincent Daanen, and Jocelyne Troccaz. Atlas-based prostate segmentation using an hybrid registration. International Journal of Computer Assisted Radiology and Surgery, 3(6):485–492, 2008.

[31] Sébastien Martin, Jocelyne Troccaz, and Vincent Daanen. Automated segmentation of the prostate in 3D MR images using a probabilistic atlas and a spatially constrained deformable model. Medical Physics, 37(4):1579–1590, 2010.

[32] Fausto Milletari, Nassir Navab, and Seyed-Ahmad Ahmadi. V-Net: Fully convolutional neural networks for volumetric medical image segmentation. In 3D Vision (3DV), 2016 Fourth International Conference on, pages 565–571. IEEE, 2016.

[33] Hyeonwoo Noh, Seunghoon Hong, and Bohyung Han. Learning deconvolution network for semantic segmentation. In The IEEE International Conference on Computer Vision (ICCV), December 2015.

[34] Yangming Ou, Jimit Doshi, Guray Erus, and Christos Davatzikos.
Multi- atlas segmentation of the prostate: A zooming process with robust regis- tration and atlas selection. MICCAI Gr and Chal lenge: Pr ostate MR Image Se gmentation , 2012, 2012. 14 [35] Olaf Ronneb erger, Philipp Fisc her, and Thomas Brox. U-net: Conv olu- tional netw orks for biomedical image segmentation. In International Con- fer enc e on Me dic al Image Computing and Computer-Assiste d Intervention , pages 234–241. Springer In ternational Publishing, 2015. [36] Olga Russak ovsky , Jia Deng, Hao Su, Jonathan Krause, Sanjeev Satheesh, Sean Ma, Zhiheng Huang, Andrej Karpathy , Adity a Khosla, Michael Bern- stein, et al. Imagenet large scale visual recognition challenge. International Journal of Computer Vision , 115(3):211–252, 2015. [37] Bruno Sciolla, Matthieu Martin, and Philipp e Delac hartre. Multi-pass 3d con volutional neural netw ork segmentation of prostate mri images. 2017. [38] Jean Serra. Introduction to mathematical morphology . Computer vision, gr aphics, and image pr o c essing , 35(3):283–305, 1986. [39] John G Sled, Alex P Zijden b os, and Alan C Ev ans. A nonparametric metho d for automatic correction of intensit y non uniformity in mri data. IEEE tr ansactions on me dic al imaging , 17(1):87–97, 1998. [40] Nitish Sriv astav a, Geoffrey E Hinton, Alex Krizhevsky , Ilya Sutskev er, and Ruslan Salakhutdino v. Drop out: a simple wa y to preven t neural netw orks from ov erfitting. Journal of machine le arning r ese ar ch , 15(1):1929–1958, 2014. [41] Zhiqiang Tian, Lizhi Liu, Zhenfeng Zhang, and Bao wei F ei. Superpixel- based segmentation for 3d prostate mr images. IEEE tr ansactions on me d- ic al imaging , 35(3):791–801, 2016. [42] Zhiqiang Tian, LiZhi Liu, Zhenfeng Zhang, Jianru Xue, and Bao wei F ei. A supervo xel-based segmentation metho d for prostate mr images. Me dic al physics , 44(2):558–569, 2017. 
[43] Robert Toth, B Nicolas Bloch, Elizabeth M Genega, Neil M Rofsky, Robert E Lenkinski, Mark A Rosen, Arjun Kalyanpur, Sona Pungavkar, and Anant Madabhushi. Accurate prostate volume estimation using multifeature active shape models on t2-weighted mri. Academic radiology, 18(6):745–754, 2011.
[44] Robert Toth and Anant Madabhushi. Multifeature landmark-free active appearance models: application to prostate mri segmentation. IEEE Transactions on Medical Imaging, 31(8):1638–1650, 2012.
[45] Robert Toth, Pallavi Tiwari, Mark Rosen, Galen Reed, John Kurhanewicz, Arjun Kalyanpur, Sona Pungavkar, and Anant Madabhushi. A magnetic resonance spectroscopy driven initialization scheme for active shape model based prostate segmentation. Medical Image Analysis, 15(2):214–225, 2011.
[46] N. J. Tustison, B. B. Avants, P. A. Cook, Y. Zheng, A. Egan, P. A. Yushkevich, and J. C. Gee. N4itk: Improved n3 bias correction. IEEE Transactions on Medical Imaging, 29(6):1310–1320, June 2010.
[47] Graham Vincent, Gwenael Guillard, and Mike Bowes. Fully automatic segmentation of the prostate using active appearance models. MICCAI Grand Challenge: Prostate MR Image Segmentation, 2012, 2012.
[48] Xiong Yang, Shu Zhan, Dongdong Xie, Hong Zhao, and Toru Kurihara. Hierarchical prostate mri segmentation via level set clustering with shape prior. Neurocomputing, 257(Supplement C):154–163, 2017. Machine Learning and Signal Processing for Big Multimedia Analysis.
[49] Lequan Yu, Xin Yang, Hao Chen, Jing Qin, and Pheng-Ann Heng. Volumetric convnets with mixed residual connections for automated prostate segmentation from 3d mr images. 2017.
[50] Baowei Fei Zhiqiang Tian, LiZhi Liu. A fully automatic multi-atlas based segmentation method for prostate mr images, 2015.
[51] Yanong Zhu, Stuart Williams, and Reyer Zwiggelaar. Computer technology in detection and staging of prostate carcinoma: a review. Medical Image Analysis, 10(2):178–199, 2006.
[52] Yanong Zhu, Stuart Williams, and Reyer Zwiggelaar. A hybrid asm approach for sparse volumetric data segmentation. Pattern recognition and image analysis, 17(2):252–258, 2007.
[53] Chiara Zini, Elisabeth Hipp, Stephen Thomas, Alessandro Napoli, Carlo Catalano, and Aytekin Oto. Ultrasound-and mr-guided focused ultrasound surgery for prostate cancer. World journal of radiology, 4(6):247, 2012.
