Evolutionary Clustering via Message Passing

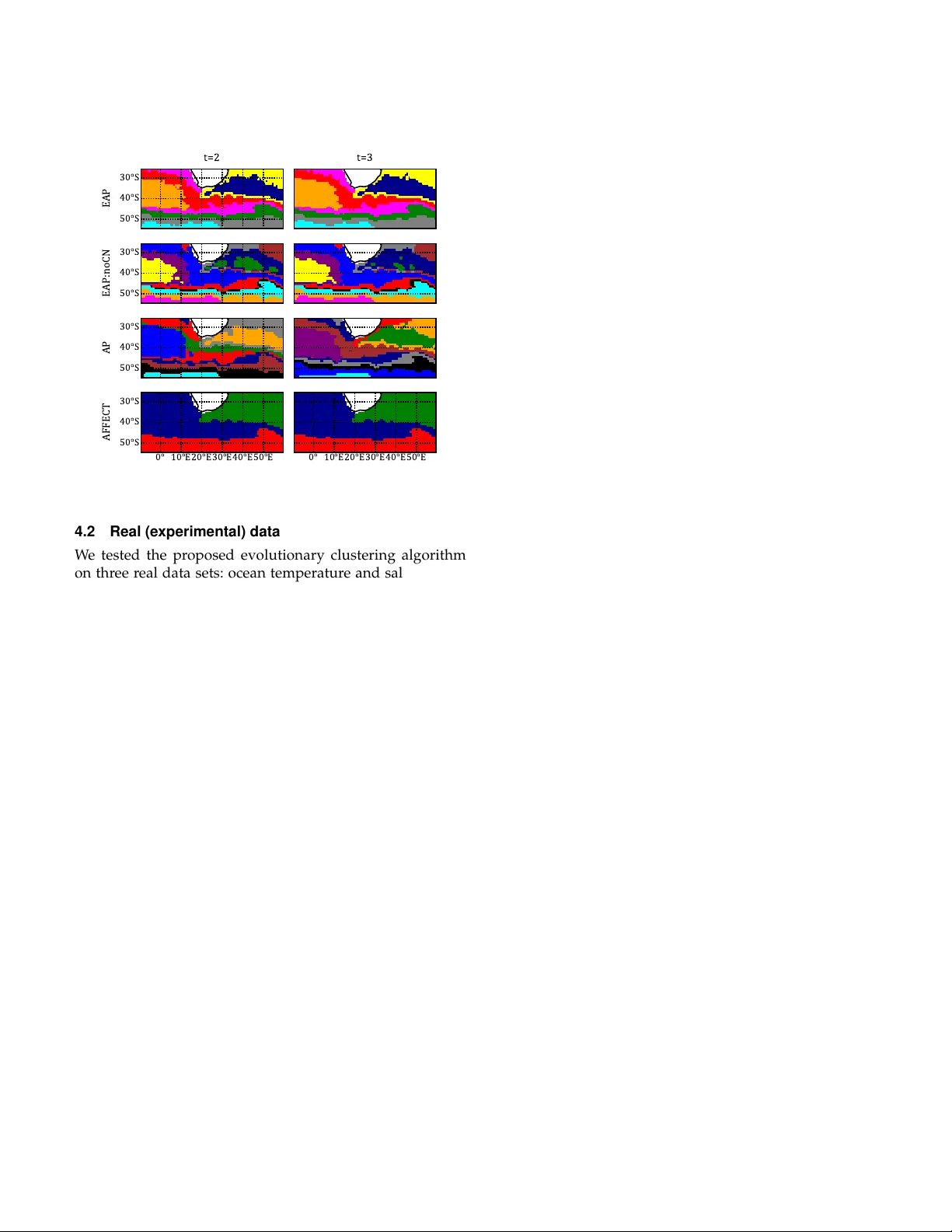

We are often interested in clustering objects that evolve over time and identifying solutions to the clustering problem for every time step. Evolutionary clustering provides insight into cluster evolution and temporal changes in cluster memberships w…

Authors: Natalia M. Arzeno, Haris Vikalo