Leveraging Topics and Audio Features with Multimodal Attention for Audio Visual Scene-Aware Dialog

With the recent advancements in Artificial Intelligence (AI), Intelligent Virtual Assistants (IVA) such as Alexa, Google Home, etc., have become a ubiquitous part of many homes. Currently, such IVAs are mostly audio-based, but going forward, we are w…

Authors: Shachi H Kumar, Eda Okur, Saurav Sahay

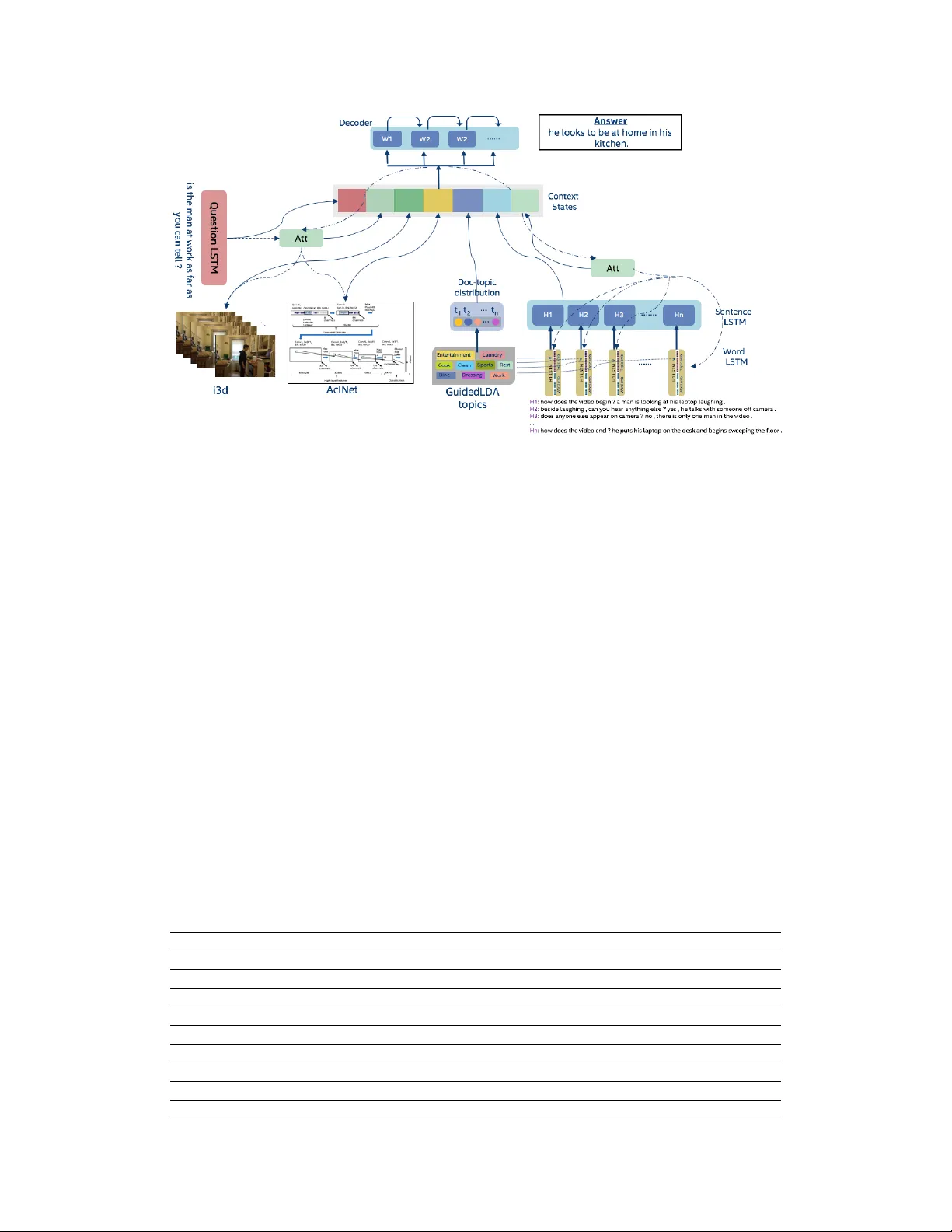

Le veraging T opics and A udio F eatur es with Multimodal Attention f or A udio V isual Scene-A war e Dialog Shachi H Kumar Intel Labs Anticipatory Computing Lab Santa Clara, CA 95054 shachi.h.kumar@intel.com Eda Okur Intel Labs Anticipatory Computing Lab Hillsboro, OR 97124 eda.okur@intel.com Saurav Sahay Intel Labs Anticipatory Computing Lab Santa Clara, CA 95054 saurav.sahay@intel.com Jonathan Huang Intel Labs Anticipatory Computing Lab Santa Clara, CA 95054 jonathan.huang@intel.com Lama Nachman Intel Labs Anticipatory Computing Lab Santa Clara, CA 95054 lama.nachman@intel.com Abstract W ith the recent advancements in Artificial Intelligence (AI), Intelligent V irtual Assistants (IV A) such as Alexa, Google Home, etc., hav e become a ubiquitous part of many homes. Currently , such IV As are mostly audio-based, but going forward, we are witnessing a confluence of vision, speech and dialog system technologies that are enabling the IV As to learn audio-visual groundings of utterances. This will enable agents to have con versations with users about the objects, activities and e vents surrounding them. In this work, we present three main architectural explorations for the Audio V isual Scene-A ware Dialog (A VSD): 1) inv estigating ‘topics’ of the dialog as an important contextual feature for the con versation, 2) exploring se veral multimodal attention mechanisms during response generation, 3) incorporating an end-to-end audio classification Con vNet, AclNet, into our architecture. W e discuss detailed analysis of the experimental results and sho w that our model v ariations outperform the baseline system presented for the A VSD task. 1 Introduction W e are witnessing a confluence of vision, speech and dialog system technologies that are enabling the IV As to learn audio-visual groundings of utterances and have con versations with users about the objects, acti vities and ev ents surrounding them. Recent progress in visual grounding techniques [ 3 , 6 ] and audio understanding [ 7 ] are enabling machines to understand shared semantic concepts and listen to the v arious sensory e vents in the en vironment. With audio and visual grounding methods [ 18 , 8 ], end-to-end multimodal Spoken Dialog Systems (SDS) [ 15 ] are no w being trained to meaningfully communicate in natural language about the real dynamic audio-visual sensory world around us. In this work, we explore the role of ‘topics’ of the dialog as the conte xt of the conv ersation along with multimodal attention into an end-to-end audio-visual scene-aware dialog system architecture. W e also incorporate an end-to-end audio classification Con vNet, AclNet, into our models. W e de velop and test our approaches on the Audio V isual Scene-A ware Dialog (A VSD) dataset [1, 2] released as part of the 7th Dialog System T echnology Challenges (DSTC7) task, sho wing that some of our model variations outperform the A VSD baseline model [9]. 33rd Conference on Neural Information Processing Systems (NeurIPS 2019), V ancouv er , Canada. Figure 1: Architecture of Our System 2 Model Description In this section, we describe the main architectural explorations of our work as sho wn in Figure 1. Adding T opics of Con versations : T opics form a very important source of conte xt in a dialog. Charades dataset [ 16 ] contains videos on common household activities such as w atching TV , eating, cleaning, using a laptop, sleeping, and so on. W e train Latent Dirichlet Allocation (LDA) [ 5 ] and Guided LD A [ 11 ] models on questions, answers, QA pairs, captions and dialog history . Since we are interested in identifying domain-specific topics such as entertainment, cooking, cleaning, resting, etc., we use Guided LD A to generate topics via seed words. A detailed list of sample seed words provided to Guided LD A for the 9-topics configuration is presented in T able 1. These seed words are constructed by identifying a set of most common nouns (objects), verbs, scenes, and actions from the Charades dataset analysis [ 16 ]. Generated topic distributions are incorporated as features into our models or used to learn topic embeddings. Attention Explorations : W e explore sev eral configurations of the attention-based model where at ev ery step, the decoder attends to the dialog history representations and audio/video (A V) features to selectiv ely focus on relev ant parts of the dialog history and A V . W e calculate the attention weights [ 4 , 17 ] corresponding to e very dialog history turn, multimodal features and the decoder representation, and apply the weights to the history and multimodal features to compute the rele v ant representations. These help create a combination of the dialog history and multimodal context that is richer than the single context vectors of the indi vidual modalities. W e append the input encoding along with the A V multimodal feature encodings and pass that to the decoder LSTM for learning the output encodings. T able 1: Sample of Seed W ords for 9 T opics T opic Seed W ords Entertainment/LivingRoom living, room, recreation, garage, basement, entryway , television, tv , phone, laptop, sofa, chair, couch, armchair , seat, picture, sit ... Cooking/Kitchen kitchen, pantry , food, water, dish, sink, refrigerator , fridge, stove, micro wav e, toaster , kettle, oven, ste wpot, saucepan, cook, wash ... Eating/Dining dining, room, table, chair, plate, fork, knife, spoon, bo wl, glass, cup, mug, coffee, tea, sandwich, meal, breakfast, lunch, dinner ... Cleaning/Bath bathroom, hallway , entryway , stairs, restroom, toilet, towel, broom, v acuum, floor , sink, water , mirror , cabinet, hairdryer, clean ... Dressing/Closet walk-in, closet, clothes, wardrobe, shoes, shirt, pants, trousers, skirt, jacket, t-shirt, underwear , sweatshirt, coat, rack, dress, wear ... Laundry laundry , room, basement, clothes, clothing, cloth, basket, bag, box, towel, shelf, dryer, w asher, w ashing, machine, do, wash, hold ... Rest/Bedroom bedroom, room, bed, pillow , blanket, mattress, bedstand, nightstand, commode, dresser, bedside, lamp, nightlight, night, light, lie ... W ork/Study home, office, den, workroom, garage, basement, laptop, computer , pc, screen, mouse, keyboard, phone, desk, chair, light, w ork, study ... Sports/Exercise recreation, room, garage, basement, hallway , stairs, gym, fitness, floor, bag, to wel, ball, treadmill, bike, rope, mat, run, walk, ex ercise ... 2 A udio Featur e Explorations : W e used an end-to-end audio classification Con vNet, called AclNet [ 10 ]. AclNet takes raw , amplitude-normalized 44.1 kHz audio samples as input, and produces classification output without the need to compute spectral features. AclNet is trained using the ESC- 50 [ 14 ] corpus, a dataset of 50 classes of environmental sounds or ganized in 5 semantic categories (animals, interior/domestic, exterior/urban, human, natural landscapes). 3 Dataset W e use the dialog dataset consisting of con versations between two parties about short videos (from Charades human action dataset [ 16 ]), which was released as part of the A VSD challenge track of DSTC7 [ 1 ]. The two parties in the con versation discuss about ev ents in the video, where one plays the role of a questioner and the other is the answerer [ 2 ]. For the results presented in this work, we use the official training and v alidation sets to train and optimize our models, which are ev aluated on the of ficial test set. T able 2 shows the distrib ution of DSTC7 A VSD data across different sets. Further details of our A VSD dataset analysis and previous results on prototype sets can be found in [12]. T able 2: Audio V isual Scene-A ware Dialog Dataset T raining V alidation T est # of Dialogs 7,659 1,787 1,710 # of T urns 153,180 35,740 13,490 # of W ords 1,450,754 339,006 110,252 4 Experiments and Results T opic Modeling Experiments : W e use separate topic models trained on questions (Q), answers (A), QA pairs, captions (C), history and history+captions to generate topics for samples from each category . The generated topic v ectors are incorporated as features for questions and dialog history . The question topics are added to the decoder state directly . In one variation, the dialog history topics (QA and C, or all topics) are copied to all the decoder states directly . In another variation, the dialog history topics are added as features to the history encoder LSTM (HLSTM). W e learn T able 3: T opic Modeling Experiments BLEU 1 BLEU 2 BLEU 3 BLEU 4 METEOR R OUGE L CIDEr Baseline 0.621 0.480 0.379 0.305 0.217 0.481 0.733 GuidedLD A (Q,QA,C) 0.614 0.475 0.374 0.299 0.215 0.474 0.695 GuidedLD A (Q,QA,C) + GloV e 0.629 0.491 0.390 0.315 0.219 0.484 0.731 StandardLD A (All topics) 0.621 0.480 0.380 0.306 0.221 0.483 0.753 GuidedLD A (All topics) 0.619 0.480 0.378 0.303 0.217 0.476 0.701 GuidedLD A (All topics) + GloV e 0.631 0.493 0.390 0.315 0.224 0.492 0.773 HLSTM with topics 0.627 0.489 0.387 0.311 0.218 0.480 0.723 T opic Embeddings 0.623 0.488 0.387 0.311 0.217 0.479 0.701 T opic Embeddings + GloV e 0.632 0.499 0.402 0.329 0.223 0.488 0.762 T able 4: T opic Model Performances on Binary/Non-binary Answers BLEU 1 BLEU 2 BLEU 3 BLEU 4 METEOR R OUGE L CIDEr Binary Baseline 0.626 0.479 0.371 0.294 0.214 0.474 0.676 GuidedLD A (Q,QA,C) + GloV e 0.616 0.476 0.374 0.301 0.215 0.474 0.673 GuidedLD A (All topics) + GloV e 0.629 0.486 0.381 0.306 0.223 0.488 0.728 HLSTM with topics 0.623 0.480 0.375 0.297 0.214 0.473 0.696 T opic Embeddings + GloV e 0.635 0.497 0.398 0.325 0.224 0.491 0.746 Non-binary Baseline 0.624 0.486 0.387 0.312 0.219 0.482 0.726 GuidedLD A (Q,QA,C) + GloV e 0.633 0.497 0.396 0.320 0.220 0.487 0.759 GuidedLD A (All topics) + GloV e 0.632 0.495 0.394 0.318 0.225 0.494 0.796 HLSTM with topics 0.629 0.492 0.392 0.316 0.220 0.483 0.740 T opic Embeddings + GloV e 0.630 0.499 0.403 0.330 0.223 0.487 0.776 3 topic embeddings from topics generated for the questions, QA pairs and captions as well. In addition, GloV e embeddings [13] (200-dim) are incorporated with fine-tuning for questions and history . T able 3 compares the baseline model [ 9 ] with the topic-based model variations. GuidedLD A (All topics) + GloV e performs better than the baseline in all metrics. Adding topics as part of the HLSTM also slightly improves performance compared to the baseline. Learning topic embeddings along with the word embeddings (+GloV e fine-tuning) achie ves the best performance in most of the metrics (BLEU-scores), whereas GuidedLDA (All topics) + GloV e succeeds in other metrics. W e also e valuated topic-based models on subsets having binary and non-binary answers. As shown in T able 4, for the non-binary subset, all topic-based models perform better than the baseli ne in all metrics, which shows that these models can generate better responses for the more comple x, non-binary answers. Attention Experiments : The baseline architecture [ 9 ] only lev erages the last hidden state information from the sentence LSTM in the dialog history encoder . In our experiments, we hav e modified the baseline architecture and added attention layer for the answer decoder to le verage information directly from the dialog history LSTMs and multimodal audio/video features, with 4 different configurations described belo w . T o ev aluate the performance of attention solely for questions that could benefit from dialog history , we isolate the questions containing coreferences. T able 5 sho ws the performance of our models on this coreference-subset. T o compare the results at a more semantic le vel, we further performed quantitati ve analysis on dialogs that contained binary answers. W e ev aluate our models on their ability to predict these binary answers correctly (using precision, recall and F1-scores) as presented in Figure 2. The results show that the configuration where decoder attends to all of the sentence-LSTM output states performs better than the baseline. T able 5: Decoder Attention over Dialog History and Multimodal Features on Coreference-subset BLEU 1 BLEU 2 BLEU 3 BLEU 4 METEOR R OUGE L CIDEr Baseline 0.611 0.475 0.374 0.297 0.210 0.467 0.704 W ord LSTM (all output states) 0.594 0.447 0.336 0.262 0.190 0.432 0.553 W ord LSTM (last hidden states) 0.627 0.485 0.379 0.297 0.208 0.468 0.701 Sentence LSTM (all output states) 0.619 0.484 0.384 0.307 0.213 0.472 0.749 Sentence LSTM (all outputs) + A V 0.598 0.464 0.360 0.284 0.209 0.458 0.685 Figure 2: Precision, Recall, F1-scores for Attention Experiments on Coreference-Binary-subset 1. Attention on Dialog History W or d LSTMs, all output states : In this configuration, we remove the sentence level dialog history LSTM and the decoder computes the attention scores directly between the decoder state and the word le vel output states for all dialog history . W e first padded the W ord LSTM outputs from Dialog History LSTMs (see W ord LSTM in History in Figure 1) to the maximum sentence length of all the sentences. W e summed up all the attention scores from each of the sentence context vectors with the query decoder state. Using this kind of attention, we had hoped that the system could remember answers that were already given (directly or indirectly) in the earlier turns of the dialog. Directly attending to the output states of the word LSTMs in the dialog history encoder did not perform well compared to the baseline. This attention mechanism possibly attended to way more information than needed. 2. Attention on Dialog History W or d LSTMs, last hidden states : This configuration is similar to the previous configuration with the difference that we only use the last hidden state output representations of the word LSTMs corresponding to the different turns in the dialog. 4 Simpler than the pre vious setup, we stack up the hidden states from the history sentences for attention computation. This configuration performed better that the baseline on the coreference-subset in most of the ev aluation metrics. 3. Attention on Sentence LSTM, all output states : The baseline architecture only lev erages the last hidden state information from the sentence LSTM in the dialog history encoder . Instead, we extract the output states from all timesteps of the LSTM corresponding to n turns of the dialog history . This v ariation helps the decoder consider all the dialog turn compressed sentence representations via the attention mechanism. This model performed better than the baseline in all metrics on both coreference-subset (T able 5) and binary answers (Figure 2). 4. Attention on Sentence LSTM, all output states and Multimodal Audio/V ideo F eatur es : This configuration is similar to the last one with the difference that we add multimodal audio/video features as additional state to the attention module. This mechanism allows the decoder to selectiv ely focus on the multimodal features along with the dialog history sentences. This configuration did not really help improv e the e v aluation metrics compared to the baseline. A udio Experiments : T able 6 shows the comparison of the baseline (B) model without audio features, B+VGGish (provided as a part of the A VSD task), and B+AclNet features. W e in vestigate the effects of audio features on the overall dataset as well as on the subset of audio-related questions. W e observe that B+AclNet sho ws improved performances as compared to the baseline and B+VGGish, both on the overall dataset and audio-related subset. T able 7 presents a qualitativ e analysis of the addition of the VGGish and AclNet features to the baseline model. For these audio-related examples (e.g., ’oscillating’, ’eating’, ’ sneeze’), baseline and B+VGGish models generate irrelev ant responses, whereas the answers generated by B+AclNet are in accordance with the ground truth. T able 6: Audio Feature Performances on Overall vs. Audio-related Questions BLEU 1 BLEU 2 BLEU 3 BLEU 4 METEOR R OUGE L CIDEr Overall Baseline (B) 0.621 0.480 0.379 0.305 0.217 0.481 0.733 B + VGGish 0.622 0.487 0.389 0.315 0.216 0.481 0.732 B + AclNet 0.625 0.491 0.391 0.316 0.218 0.484 0.736 A udio-related Baseline (B) 0.666 0.526 0.413 0.329 0.230 0.504 0.767 B + VGGish 0.657 0.519 0.408 0.324 0.230 0.500 0.754 B + AclNet 0.659 0.527 0.424 0.348 0.236 0.507 0.796 T able 7: Audio Examples (VGGish vs. AclNet) Question: is the fan oscillating ? is he eating something ? how many times does she sneeze ? Ground Truth the fan is on but is still . yes he appears to be eating something she sneezes a few times in the video . Baseline yes it is very well lit no he is not drinking anything can only see her face Baseline + VGGish no don ’t see any music no he is not drinking anything she laughs at the end of the video Baseline + AclNet no it is hard to tell yes he is eating sandwich she sneezes at the end of the video 5 Conclusion In this paper , we present our explorations to wards architectural e xtensions for contextual and mul- timodal end-to-end audio-visual scene-aware dialog system. W e incorporate context of the dialog in the form of topics, inv estigate various attention mechanisms to enable the decoder to focus on relev ant parts of the dialog history and audio/video features, and incorporate audio features from an end-to-end audio classification architecture, AclNet. W e validate our approaches on the A VSD dataset and show that some of the explored techniques yields in improved performances compared to the baseline system for A VSD task. References [1] H. AlAmri, V . Cartillier, R. G. Lopes, A. Das, J. W ang, I. Essa, D. Batra, D. Parikh, A. Cherian, T . K. Marks, and C. Hori. Audio visual scene-aware dialog (A VSD) challenge at DSTC7. CoRR , abs/1806.00525, 2018. URL . 5 [2] H. Alamri, V . Cartillier , A. Das, J. W ang, S. Lee, P . Anderson, I. Essa, D. Parikh, D. Batra, A. Cherian, T . K. Marks, and C. Hori. Audio-visual scene-aware dialog. In Pr oceedings of the IEEE Confer ence on Computer V ision and P attern Recognition , 2019. [3] S. Antol, A. Agrawal, J. Lu, M. Mitchell, D. Batra, C. L. Zitnick, and D. Parikh. Vqa: V isual question answering. In 2015 IEEE International Confer ence on Computer V ision (ICCV) , pages 2425–2433, Dec 2015. doi: 10.1109/ICCV .2015.279. [4] D. Bahdanau, K. Cho, and Y . Bengio. Neural machine translation by jointly learning to align and translate. CoRR , abs/1409.0473, 2014. URL . [5] D. M. Blei, A. Y . Ng, and M. I. Jordan. Latent dirichlet allocation. In T . G. Dietterich, S. Becker , and Z. Ghahramani, editors, Advances in Neural Information Processing Systems 14 [Neural Information Pr ocessing Systems: Natural and Synthetic, NIPS 2001] . MIT Press, 2001. URL http://papers.nips. cc/paper/2070- latent- dirichlet- allocation . [6] A. Das, S. Kottur , K. Gupta, A. Singh, D. Y ada v , J. M. F . Moura, D. Parikh, and D. Batra. V isual dialog. 2017 IEEE Conference on Computer V ision and P attern Recognition (CVPR) , Jul 2017. doi: 10.1109/cvpr .2017.121. URL http://dx.doi.org/10.1109/CVPR.2017.121 . [7] S. Hershey , S. Chaudhuri, D. P . Ellis, J. F . Gemmeke, A. Jansen, R. C. Moore, M. Plakal, D. Platt, R. A. Saurous, B. Seybold, et al. Cnn architectures for large-scale audio classification. In Acoustics, Speech and Signal Pr ocessing (ICASSP), 2017 IEEE International Conference . IEEE, 2017. [8] C. Hori, T . Hori, T .-Y . Lee, Z. Zhang, B. Harsham, J. R. Hershe y , T . K. Marks, and K. Sumi. Attention- based multimodal fusion for video description. 2017 IEEE International Confer ence on Computer V ision (ICCV) , Oct 2017. doi: 10.1109/iccv .2017.450. URL http://dx.doi.org/10.1109/ICCV.2017.450 . [9] C. Hori, H. AlAmri, J. W ang, G. Wichern, T . Hori, A. Cherian, T . K. Marks, V . Cartillier , R. G. Lopes, A. Das, I. Essa, D. Batra, and D. Parikh. End-to-end audio visual scene-aware dialog using multimodal attention-based video features. CoRR , abs/1806.08409, 2018. URL 08409 . [10] J. J. Huang and J. J. A. Leanos. Aclnet: Efficient end-to-end audio classification cnn. arXiv preprint arXiv:1811.06669 , 2018. [11] J. Jagarlamudi, H. D. III, and R. Udupa. Incorporating lexical priors into topic models. In W . Daelemans, M. Lapata, and L. Màrquez, editors, EACL 2012, 13th Conference of the Eur opean Chapter of the Association for Computational Linguistics . The Association for Computer Linguistics, 2012. ISBN 978-1-937284-19-0. URL http://aclweb.org/anthology/E/E12/E12- 1021.pdf . [12] S. H. Kumar , E. Okur, S. Sahay , J. J. A. Leanos, J. Huang, and L. Nachman. Context, attention and audio feature explorations for audio visual scene-aware dialog. In DSTC7 W orkshop at AAAI 2019, arXiv:1812.08407 , 2019. URL http://workshop.colips.org/dstc7/papers/32.pdf . [13] J. Pennington, R. Socher, and C. D. Manning. Glove: Global vectors for word representation. In Empirical Methods in Natural Language Pr ocessing (EMNLP) , pages 1532–1543, 2014. URL http: //www.aclweb.org/anthology/D14- 1162 . [14] K. J. Piczak. ESC: Dataset for En vironmental Sound Classification. In Pr oceedings of the 23rd Annual A CM Confer ence on Multimedia , pages 1015–1018. A CM Press, 2015. ISBN 978-1-4503-3459-4. doi: 10.1145/2733373.2806390. URL http://dl.acm.org/citation.cfm?doid=2733373.2806390 . [15] I. V . Serban, A. Sordoni, Y . Bengio, A. C. Courville, and J. Pineau. Building end-to-end dialogue systems using generativ e hierarchical neural network models. In AAAI , volume 16, pages 3776–3784, 2016. [16] G. A. Sigurdsson, G. V arol, X. W ang, A. Farhadi, I. Lapte v , and A. Gupta. Hollywood in homes: Cro wd- sourcing data collection for acti vity understanding. In B. Leibe, J. Matas, N. Sebe, and M. W elling, editors, Computer V ision - ECCV 2016 - 14th Eur opean Conference , Pr oceedings , 2016. ISBN 978-3-319-46447-3. doi: 10.1007/978- 3- 319- 46448- 0\_31. URL https://doi.org/10.1007/978- 3- 319- 46448- 0_31 . [17] A. V aswani, N. Shazeer, N. Parmar, J. Uszkoreit, L. Jones, A. N. Gomez, L. Kaiser , and I. Polosukhin. Attention is all you need. In I. Guyon, U. von Luxburg, S. Bengio, H. M. W allach, R. Fergus, S. V . N. V ishwanathan, and R. Garnett, editors, Advances in Neural Information Pr ocessing Systems 30: Annual Confer ence on Neural Information Processing Systems 2017 , 2017. URL http://papers.nips.cc/ paper/7181- attention- is- all- you- need . [18] H. Y u, J. W ang, Z. Huang, Y . Y ang, and W . Xu. V ideo paragraph captioning using hierarchical recurrent neural networks. In Pr oceedings of the IEEE conference on computer vision and pattern reco gnition , pages 4584–4593, 2016. 6

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment