Reducing Storage in Large-Scale Photo Sharing Services using Recompression


Authors: Xing Xu, Zahaib Akhtar, Wyatt Lloyd

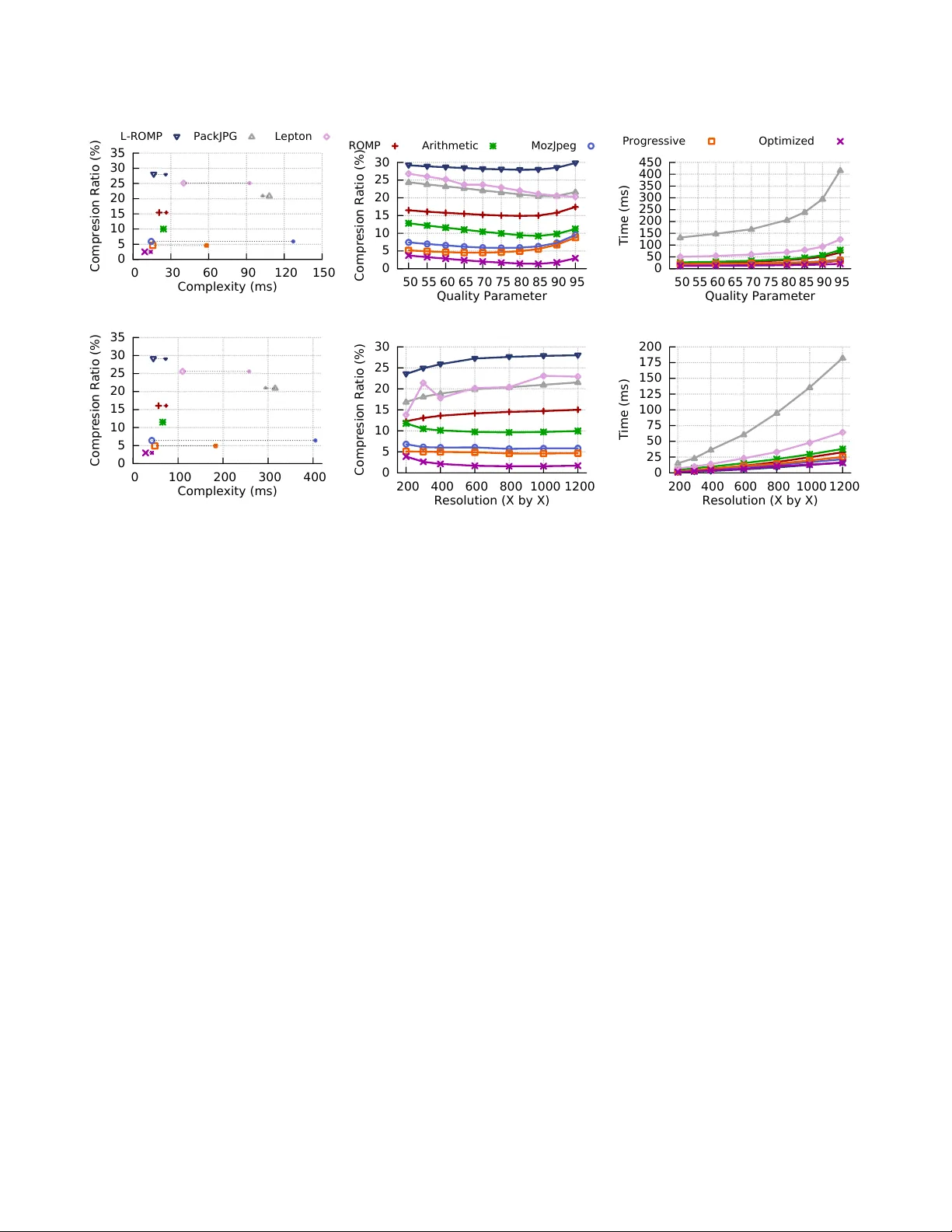

Xing Xu, Zahaib Akhtar, Wyatt Lloyd, Antonio Ortega, Ramesh Govindan

Abstract—The popularity of photo sharing services has increased dramatically in recent years. Increases in users, quantity of photos, and quality/resolution of photos, combined with the user expectation that photos are reliably stored indefinitely, create a growing burden on the storage back end of these services. We identify a new opportunity for storage savings with application-specific compression for photo sharing services: photo recompression. We explore new photo storage management techniques that are fast, so they do not adversely affect photo download latency; are complementary to existing distributed erasure coding techniques; can efficiently be converted to the standard JPEG that user devices expect; and significantly increase compression. We implement our photo recompression techniques in two novel codecs, ROMP and L-ROMP. ROMP is a lossless JPEG recompression codec that compresses typical photos 15% over standard JPEG. L-ROMP is a lossy JPEG recompression codec that distorts photos in a perceptually unnoticeable way and typically achieves 28% compression over standard JPEG. We estimate the benefits of our approach on Facebook’s photo stack and find that our approaches can reduce the photo storage by 0.3–0.9× the logical size of the stored photos, and offer additional, collateral benefits to the photo caching stack, including 5–11% fewer requests to the backend storage, a 15–31% reduction in wide-area bandwidth, and a 16% reduction in external bandwidth.

Index Terms—JPEG, image compression, photo-sharing service, photo storage.

I. INTRODUCTION

In recent years, there has been a dramatic growth in the popularity of large-scale photo sharing services such as Facebook, Flickr, and Instagram. As of 2013, Facebook alone had 350 million photo uploads per day [1].
The storage footprint of these services is already significant; Facebook stored over 250 billion photos according to the same 2013 report [1]. Furthermore, the commoditization of high-quality digital cameras in mobile devices has created three trends that each increase the footprint of these services: people are taking more photos, at higher resolution, and at higher quality. These trends, combined with the user expectation that photos are stored indefinitely, result in an ever-growing storage footprint.

Today, photos uploaded to photo sharing services predominantly use the JPEG [2] standard, which already compresses images by leveraging properties empirically observed in natural images. Despite this compression, as photo sharing services scale, additional tools for managing photo storage become necessary.

One technique, already used in Facebook’s f4 system [3], is distributed erasure coding. This reduces the storage footprint of a service by replacing the redundant copies of data that were used for fault tolerance and load balancing requests with combined parity information for multiple sets of data. Another prominent technique explored by the storage systems community is deduplication (Section V). To our knowledge, this has not been applied to images, in part because it is not clear whether, after JPEG’s compression, duplicate elimination is likely to provide significant benefit. For a similar reason, generic object compression techniques (e.g., gzip, bzip2) are unlikely to produce additional savings in storage.

Two other tools are available to large-scale photo sharing services: image resizing and reducing JPEG’s quality parameter (a lossy transformation that re-quantizes information to enable better compression). As cameras and displays move towards higher resolution, users will likely want to view larger images, so the benefit of image resizing is limited.
Moreover, as we show later, reducing JPEG’s quality parameter by re-quantization can introduce significant error (uploaded images have already been quantized once when the JPEG was generated at the source).

In this paper, we focus on the problem of image recompression: taking uploaded compressed images and recompressing them by taking advantage of the special characteristics of large-scale photo sharing systems. Recompressing images reduces the logical size of the stored corpus and thus is complementary to more generic techniques like distributed erasure coding.

There are three primary challenges for recompression schemes. The first is finding opportunities for additional compression given that images are already compressed. The second is introducing minimal error if lossy recompression is used, a property that reducing JPEG’s quality parameter does not have. The third stems from ensuring compatibility with client devices. Maintaining compatibility requires that clients receive images in the JPEG format their devices understand, and this in turn requires decompression from the storage format back into JPEG on the download path. This means that decompression should be fast (or, equivalently, have low complexity), i.e., it should take < 0.1 s and ideally < 50 ms, because it adds to the user-perceived download latency of a photo sharing service (Section II).

Contributions. Our key insight that enables this recompression is that large-scale photo sharing services represent a different domain than those for which image formats were designed. The large-scale aspect enables further compression of already compressed photos. At a high level, our approach decouples the size of the codec tables used for encoding and decoding from the individual images they encode. This decoupling enables much richer and larger codec tables than are practical for individual files, i.e., the size of these large tables can be amortized over the large-scale storage and thus becomes negligible. In addition, in contrast to the traditional compression setting, which considers individual images sent between a distinct sender and receiver, recompression for photo sharing services involves many photos that are compressed and decompressed by the same entity.

These insights lead to our first recompression scheme, Recompression Of Many Photos (ROMP, Section III). ROMP achieves high recompression rates by replacing the small coding tables that are stored with each image (or used by encoders/decoders as defaults) in traditional schemes with a single large coding table that is not stored with the images. The co-location of compression and decompression allows ROMP to avoid storing the coding table with each image. Instead, it stores the table in the memory of machines on the download path. This in turn allows ROMP to use a much larger coding table than would be practical for individual images. Such a significant increase in coding table size can be amortized over the billions of photos in large-scale storage. ROMP achieves low complexity by keeping the coding table in memory on the download path of a photo sharing service and making only a single pass over an image. ROMP provides high compression and low complexity for recompressing many photos, and is lossless, i.e., recompressing a JPEG into ROMP and back produces a bit-wise identical image.

For further compression gains, one could consider using other existing image compression algorithms (e.g., JPEG 2000 [4]). Note, however, that JPEG is the de facto standard for “raw” image representation, that is, most images are first stored as high quality JPEG files on a digital camera.
Then, applying other compression schemes would require decoding JPEG images (at upload) and generating JPEG images again (at download), as many potential clients only support JPEG, which would incur a significant complexity penalty. As a result, the standard method for recompressing images is decreasing JPEG’s quality factor, but this increases distortion due to double rounding of image coefficients. Our Lossy-Recompression Of Many Photos (L-ROMP) is designed to reduce the bit-rate of JPEG images and avoids this double-rounding problem. L-ROMP amplifies the compression gain of ROMP, adds no additional complexity to decompression, and is perceptually nearly lossless.

ROMP and L-ROMP have been published in [5]. This paper includes more evaluation results for ROMP and L-ROMP.¹ More importantly, this paper focuses on leveraging ROMP and L-ROMP in photo sharing services, rather than on the codecs themselves, and presents a system-level evaluation.

In addition to a storage backend, photo sharing services typically deploy a photo-caching stack [6]. The stack is a set of caching layers that are progressively smaller and closer to clients. The goals of the stack are three-fold: to reduce the load on the storage backend, to reduce the amount of external bandwidth needed to deliver photos, and to decrease user-perceived download latency. The most straightforward deployment of ROMP would place the decompression step between the storage backend and the caching stack. We find, however, that doing the decompression inside the caching stack would improve each of the three goals. We term these “collateral benefits” because they are in addition to the reduction in storage cost.

Our evaluation explores the compression and complexity of ROMP and L-ROMP across a variety of image qualities and resolutions.
We find that ROMP and L-ROMP robustly provide high compression (approximately 15% and 28% over JPEG Standard, respectively) and low complexity (less than 60 ms decompression time). This translates to 13% and 26% compression over JPEG Optimized.² These storage savings multiply when integrated into photo sharing services. Because photos are replicated or erasure coded in the backend for fault tolerance, ROMP and L-ROMP can reduce the storage footprint by 0.5×–0.9× the logical size of stored photos using the lossless and lossy codecs, respectively. We also estimate the collateral benefits of deploying our schemes in Facebook’s photo caching stack. These would, for example, include 5%–11% fewer requests to the backend storage, a 15%–31% reduction in wide-area network bandwidth, and a 500 ms decrease in 99th percentile photo download latency.

II. INTEGRATING RECOMPRESSION INTO PHOTO SHARING SERVICES

In this section, we discuss the benefits, for large-scale photo sharing services, of recompressing uploaded images and how to integrate the functionality provided by ROMP or L-ROMP into these photo sharing services. Our discussion is especially informed by the descriptions of Facebook’s photo sharing stack [7], [3], [6], and our measurements of it.

From a systems point of view, ROMP and L-ROMP each consist of two conceptual modules: a compression module that recompresses an uploaded image, and a decompression module that decompresses images before download.

¹ [5] presents the compression ratio results of Figures 7a and 7b (but not complexity results), and complexity results of Figure 9a for quality parameter 75 only. These are the only overlap in results presented in the two papers.
² JPEG Optimized is a lossless compression technique for the JPEG Standard format, which provides additional compression with negligible overhead. It is the more popular JPEG format.

[Figure 1 diagram: Web Server, Transcoder, and Storage, with upload steps 1–6]
Fig. 1: A typical upload path (1–6) for a large-scale photo sharing service. Photos are sent synchronously from users to backend storage via front-end Web servers and a transcoding tier.

Compressing on Upload. A typical upload path for a large-scale photo sharing service is shown in Figure 1. Users upload photos to the service synchronously, i.e., the user waits until the photo is safely stored in the backend storage. The user’s device initially sends the photo to a front-end Web server that handles incoming requests from clients (1). That Web server then sends the photo to a transcoder machine in the transcoding tier (2). The transcoder then sends the photo to the backend storage tier (3), and, once the photo is safely stored, acknowledgements flow in the reverse direction (4–6).

The transcoder sits on the upload path and canonicalizes photos before storing them. This canonicalization typically involves resizing photos and/or reducing the JPEG image quality. The JPEG standard permits the reduction of image quality using a scalar quality parameter. This quality reduction reduces the storage required for images but can introduce undesirable perceptual artifacts. Resizing photos gives them standard sizes and ensures that photo storage growth is consistent and predictable. For instance, this prevents the release of a popular new phone model with a higher resolution camera from increasing the required storage per photo. Reducing image quality to a fixed factor also keeps storage growth consistent and predictable for similar reasons. Both of these transformations are lossy, a topic we explore in more depth in Section III. Given that the transcoding tier is already doing image processing on uploaded photos, it is a natural place to also do recompression.
Doing recompression here requires that compression have low complexity (time taken to recompress the images). In particular, the complexity of recompression must be comparable to, or less than, the complexity of resizing or reducing image quality.

An alternative place to integrate recompression of photos would be off the upload path. This would require storing the photos in their initial format and then recompressing them during off-peak periods. This might be feasible but adds complexity to the entire path: these recompression operations may need to be carefully scheduled, and the download path (described below) needs to be able to retrieve images that have not yet been recompressed (since users expect images to be available immediately). For these reasons, we do not consider this alternative in this paper.

[Figure 2 diagram: Edge Cache, Origin Cache, Transcoder, and Storage, with download steps 1–8]
Fig. 2: A typical download path (1, 1-2-8, 1-2-3-7-8, or 1–8) for a large-scale photo sharing service. A photo is returned from the first cache in the path that has it. If the photo is not present in any of the caches, it is fetched from the backend storage via the transcoding tier (4–6).

Decompressing on Download. A typical download path for a large-scale photo sharing service is shown in Figure 2. Users download photos from the first on-path cache they encounter that holds the photo: the device cache (1), the edge cache that handles their request (1-2-8), or the origin cache (1-2-3-7-8). If the photo is not in one of those caches, it is fetched from the backend storage system via a transcoder (1–8). The transcoder converts the photo from the format and size as stored in the backend to what will be delivered to a user device. For instance, Facebook transcodes stored JPEGs into the WebP format before sending them to Android devices [8]. The transcoder tier also resizes photos depending on their destination [6].
For instance, a desktop user with a large open window may receive a larger version of the photo than a different desktop user with a smaller open window.

The transcoding tier is again a natural place to do decompression on the download path because it is already manipulating photos. This placement of decompression on the download path leads to a requirement that it have low complexity, to ensure both low latency for user requests for photos and a small impact on the required size of the transcoding tier.

Complexity/Storage Tradeoff. ROMP trades off additional complexity for greater savings in storage. The storage saving matters more because storage increases linearly with time, but the additional complexity does not: recent measurements of photo sharing services suggest that each image is viewed many times soon after it is uploaded, and very rarely thereafter [3]. This means that the complexity cost does not grow in proportion to time.

Benefits of Low Complexity Decompression. Even though the storage saving becomes more significant over time, it is still important to have low complexity, especially for decompression. The reason is that low complexity in the download path ensures low access latency. Large-scale content delivery services optimize latency aggressively, and moderate increases in latency can negatively impact user experience.

The latency introduced by decompression may not affect all downloads, because of caching. For example, if an image is decompressed and transcoded once, it can be cached either at the origin cache or the edge cache for subsequent accesses (unless the subsequent accesses are from devices of a different type or a different resolution, in which case decompression must happen again). However, even if only cache misses require decompression (about 29% [6]), it is still important to have low latency decompression.
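As a first-order illustration of why caching only partly hides decompression cost, the sketch below computes the mean extra latency per request under two placements: decompressing only on cache misses (using the roughly 29% miss fraction cited above) and decompressing on every access, as happens when decompression moves closer to clients. The decoder times are hypothetical placeholders (50 ms echoes the target stated in the introduction), and a mean hides the tail effects reported below, which depend on the full latency distribution.

```python
MISS_FRACTION = 0.29  # share of requests that miss every cache, as cited from [6]


def mean_added_latency_ms(decode_ms, fraction_decoded):
    """Average extra latency per request when `fraction_decoded` of requests
    must pay `decode_ms` of decompression."""
    if not 0.0 <= fraction_decoded <= 1.0:
        raise ValueError("fraction_decoded must be in [0, 1]")
    return decode_ms * fraction_decoded


# Hypothetical per-photo decompression times in ms; these are not measurements.
for name, decode_ms in [("fast decoder", 50.0), ("slow decoder", 500.0)]:
    misses_only = mean_added_latency_ms(decode_ms, MISS_FRACTION)
    every_access = mean_added_latency_ms(decode_ms, 1.0)
    print(f"{name}: +{misses_only:.1f} ms (misses only), "
          f"+{every_access:.1f} ms (every access)")
```

Moving decompression toward clients raises the decoded fraction from the miss rate to 1.0, so any per-photo decode cost is paid in full on every request.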
The most well-known efficient JPEG recompression scheme, PackJPG [9], can provide about 20% additional compression in photo sizes, but has significant decompression complexity.³ For downloading a 2048 × 1536 image from the backend, PackJPG’s recompression inflates the latency of the fastest 40% of downloads by more than 50%. Even though the decompression latency is incurred only for cache misses, we still see a significant impact on the overall latency distribution (from all the layers of the cache stack): ~80% of the distribution incurs more than 150 ms of additional latency.⁴ For commercial photo sharing services, these increases in latency may not be acceptable.

These photo services can also reap other benefits of recompression. For example, moving the decompression and transcoding close to the clients can have two important benefits. First, caches would become more storage efficient, because they would store recompressed versions of the photos. This would result in fewer accesses to the backend storage and caches. Second, the bandwidth required between the caches and the backend storage would be reduced because only recompressed images would need to be transferred. However, to reap these benefits, the additional decompression latency would affect all accesses. In this case, low complexity decompression is even more important: using the state-of-the-art PackJPG, with its high decompression complexity, can increase latency by more than half a second. For this reason, we make low complexity decompression a primary design requirement for ROMP and L-ROMP.

III. LOW-COMPLEXITY RECOMPRESSION FOR PHOTO SHARING SERVICES

In this section, we discuss the need for a new recompression strategy, and then describe the design of ROMP and L-ROMP, which recompress JPEG images to significantly reduce the storage requirements of large-scale photo sharing services with low complexity overhead.
³ A more recent recompression scheme, Lepton [10], does achieve lower latency than PackJPG. We evaluate Lepton in Section IV.
⁴ The methodology for this experiment is explained in Section IV-D.

A. Why a New Recompression Strategy?

Limitations of Traditional Approaches. While photo storage services such as Flickr maintain a copy of the original uploaded images, large-scale photo sharing services modify the uploaded image in order to manage the scale of their storage infrastructure. Specifically, Facebook (a) resizes images and (b) recompresses them using a smaller quality parameter.

Resizing, while useful in managing storage, has its limits. Camera resolution has been increasing steadily over the last five years, as have display resolutions, even on mobile devices. As a result, photo sharing services will likely face increasing pressure to serve high-resolution images in the near future, so they will need additional tools to manage photo storage.

Recompressing an image by reducing JPEG’s quality parameter (requantizing) is a convenient knob for managing storage; for example, most cameras generate high-quality images at a quality parameter of 95, but Facebook reduces the quality parameter of an uploaded image to ~75. To achieve further storage savings, a second quality parameter reduction would have to be applied. As we demonstrate experimentally in Section IV, two consecutive quality parameter reductions lead to unacceptable quality degradation.

In summary, while resizing and quality adjustments are currently used, they are not a viable solution for producing additional storage savings. Thus, in this paper we explore a completely different approach to recompression, enabled by the unique setting of photo sharing services.

New Opportunities in Photo-Sharing Services.
As opposed to the traditional sender/receiver compression scheme, where the sender encodes the image and the receiver decodes it, large-scale photo sharing services represent a new compression setting, with some special properties that offer opportunities for better compression.⁵

Property 1: Collocated Encoder/Decoder. Instead of having two distinct sender/receiver entities where the encoder and the decoder are in physically separate locations, the recompression setting in a photo sharing service includes both compression and decompression within the service. In the traditional distinct encoder/decoder setting, data is typically encoded in one of two ways. One is using a default codec table (e.g., JPEG Standard) that is present at the sender and receiver of the data and does not need to be transmitted with it. The other is using a customized codec table (e.g., JPEG Optimized) that is customized at the sender, transmitted along with the data to the receiver, and then used to decode the data. A customized codec table can typically represent the data more compactly than a default table, but there is a tension between the size of the codec table and the compactness of its representations. Larger customized codec tables often lead to higher compression because they can model the data more effectively.

⁵ We use encode/decode and compress/decompress interchangeably.

[Figure 3 plot: normalized size per photo (y-axis) vs. number of photos, 10^1 to 10^5 (x-axis, log scale), for ROMP, JPEG Standard, and JPEG Optimized; data labels 3.26, 1.09, 0.87, 0.85, 0.85]
Fig. 3: Normalized storage size per photo of codec + image using JPEG Standard, JPEG Optimized, and ROMP for an increasing quantity of 2048 × 1536 photos. ROMP makes sense at the large scale it was designed for, where the size of its larger codec is negligible compared to the storage saving it offers.
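The amortization that Fig. 3 illustrates reduces to one expression: per-photo storage is the shared table size plus N recompressed photos, divided by N baseline photos. The sketch below evaluates it with the 16 MB table-memory upper bound from footnote 6, the roughly 15% ROMP saving reported in the text, and a hypothetical 500 KB photo; it illustrates the trend only and is not the procedure used to produce the figure.

```python
def normalized_size_per_photo(n_photos, table_bytes, baseline_photo_bytes, ratio):
    """(shared table + n recompressed photos) / (n baseline photos)."""
    if n_photos <= 0:
        raise ValueError("n_photos must be positive")
    total = table_bytes + n_photos * ratio * baseline_photo_bytes
    return total / (n_photos * baseline_photo_bytes)


TABLE_BYTES = 16e6    # upper bound on table memory quoted in footnote 6
PHOTO_BYTES = 500e3   # hypothetical size of one 2048 x 1536 JPEG
RATIO = 0.85          # about 15% saving over JPEG Standard, as reported for ROMP

for n in (10, 100, 1_000, 10_000, 100_000):
    print(n, round(normalized_size_per_photo(n, TABLE_BYTES, PHOTO_BYTES, RATIO), 3))
```

The table term shrinks as 1/N, so the normalized size approaches the per-photo ratio once the corpus is large; at small N the shared table dominates.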
Yet, the customized codec table must be kept small to ensure that the space savings obtained by the richer encoding are not negated by having to transmit the table along with the data. In the collocated setting, the encoder and decoder are at the same location, and thus the codec table used for encoding the data can be decoupled from the data itself. This decoupling removes the constraint on the size of codec tables: the codec tables can be much larger than what is practical for individual files because they are shared across many files and stored separately. As a result, this new design can potentially reduce the storage of a service beyond what standard approaches can achieve.

Property 2: Large-Scale Photo Storage. Despite the freedom to use large codec tables, the codec table constitutes storage overhead that must be carefully managed. However, the large-scale aspect of photo sharing services helps in this regard: the same codec overhead that would negate the additional storage reduction in a small-scale case becomes negligible in the large-scale case, because the codec tables are shared across many photos and their cost is amortized across the entire storage (Figure 3). At small scales, these large codec tables (Section III-B) can be much larger than the image itself. In contrast, at large scale, the size of the ROMP codec is negligible compared to the size of the stored images; e.g., in the ROMP design, 10,000 images must be stored to make the codec negligible. Our evaluation in Section IV shows that these larger shared tables can compress photos better than traditional customized tables, and have other benefits across the photo stack.

In summary, enabled by the collocation of encoder/decoder and the large-scale setting, large codec tables can be used that would not be practical in the traditional small-scale sender/receiver setting.

[Figure 4 plot: probability (%) (y-axis) vs. zigzag position 1–64 (x-axis)]
Fig. 4: Probabilities of 3-bit coefficients at different positions.

In the following two subsections we describe: (a) ROMP, a lossless coding technique that uses enhanced Huffman tables, and (b) L-ROMP, a perceptually near-lossless coder that does not suffer from the significant quality degradation inherent in re-quantization. At a high level, ROMP and L-ROMP make use of a large set of codec tables generated from a large corpus of images, where each table is optimized for a specific context and has a structure similar to that of a typical JPEG entropy coding table. Other than using the new codec tables, ROMP and L-ROMP proceed block by block and do not involve any transformations or re-orderings of DCT coefficients. This keeps the coding complexity very low (see Fig. 5): approximately equivalent to a JPEG entropy decoding followed by a JPEG entropy coding. Together, these techniques can reduce photo storage requirements by 25% or more, a significant gain for large photo sharing services that store billions of images.

B. ROMP: Lossless Coding using Enhanced Huffman Tables

Our design, ROMP, exploits the decreasing cost of storing and managing large tables by designing context-sensitive coding tables that result in lossless compression. Recall that JPEG’s Huffman tables are used to code symbols (information about run-lengths and quantized values), based on the expected frequency of the symbols’ occurrence. ROMP learns context-sensitive Huffman tables by learning the empirical probability of occurrence of these symbols from a large corpus of images. This learning leverages the availability of such corpora in a large-scale photo service. Concretely, ROMP uses the following two insights, derived from properties of natural images, to obtain context-sensitive Huffman tables.

Position-dependence. The empirical probability of occurrence of a symbol can depend on its position in the zig-zag scan.
That is, for a given symbol, its empirical probability of occurrence at position p_a along the zig-zag scan is likely to differ from its empirical probability at position p_b (Figure 4 illustrates this). For example, for natural images, it is known that non-zero coefficients are increasingly unlikely at higher frequencies [11]. Thus, if p_b > p_a, then a non-zero value will be less likely at p_b. For this reason, ROMP generates different tables for different positions: i.e., position is one aspect of the context used for encoding. Thus, the same symbol may be encoded using different bit patterns depending on the position where it occurs.

Fig. 5: ROMP Encoding Architecture

Energy-dependence. The second insight is based on the observation that, relative to the image sizes predominantly in use today, an 8 × 8 block represents a very small patch of the image. To see this, imagine capturing the same visual information (a photo of a person, say) with two cameras of different resolutions, and then using JPEG encoding for both images. Clearly, 8 × 8 blocks in the higher resolution image represent smaller regions of the field of view, and thus will tend to be smoother. This has two useful implications. First, information within a block will tend to be smooth, with additional smoothness expected for larger images; in smoother images, coefficients at higher frequencies tend to be smaller. Second, because the same region in the field of view comprises more blocks when larger images (higher resolutions) are generated, it becomes more likely that neighboring blocks will have similar characteristics. We exploit these two ideas by creating tables such that the probability of occurrence of a symbol at a given position can also depend upon the energy of other coefficients within the block (intra-block energy) and of neighboring blocks (inter-block energy).
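The position-dependence idea can be sketched with one Huffman code per zigzag position, built from training counts. This is a toy under stated assumptions: the symbols ("big", "small", "zero"), the training pairs, and all function names are hypothetical, the decoder is simply told each symbol's position (a real JPEG decoder derives it from the decoded runs), and ROMP's actual tables are keyed on the full ⟨p, i, e⟩ context and code JPEG runsize symbols. The round trip shows the lossless property: encoding and then decoding returns exactly the original sequence.

```python
import heapq
from collections import Counter, defaultdict
from itertools import count


def huffman_code(freqs):
    """Return a prefix code {symbol: bitstring} for a {symbol: count} mapping."""
    if len(freqs) == 1:  # a lone symbol still needs a one-bit codeword
        return {next(iter(freqs)): "0"}
    tiebreak = count()  # unique ints keep heap tuples comparable on weight ties
    heap = [(w, next(tiebreak), {s: ""}) for s, w in freqs.items()]
    heapq.heapify(heap)
    while len(heap) > 1:
        w1, _, low = heapq.heappop(heap)
        w2, _, high = heapq.heappop(heap)
        merged = {s: "0" + b for s, b in low.items()}
        merged.update({s: "1" + b for s, b in high.items()})
        heapq.heappush(heap, (w1 + w2, next(tiebreak), merged))
    return heap[0][2]


def train_tables(samples):
    """One Huffman code per zigzag position, from (position, symbol) training pairs."""
    counts = defaultdict(Counter)
    for pos, sym in samples:
        counts[pos][sym] += 1
    return {pos: huffman_code(c) for pos, c in counts.items()}


def encode(seq, tables):
    """Concatenate the position-specific codeword of each (position, symbol)."""
    return "".join(tables[pos][sym] for pos, sym in seq)


def decode(bits, positions, tables):
    """Invert encode(); each symbol's position is supplied (an assumption)."""
    inverse = {pos: {b: s for s, b in t.items()} for pos, t in tables.items()}
    out, i = [], 0
    for pos in positions:
        j = i + 1
        while bits[i:j] not in inverse[pos]:
            if j > len(bits):
                raise ValueError("truncated bitstream")
            j += 1
        out.append((pos, inverse[pos][bits[i:j]]))
        i = j
    return out


# Hypothetical training data: "big" values are common at low-frequency position 1,
# while zeros dominate at high-frequency position 40.
training = ([(1, "big")] * 6 + [(1, "small")] * 3 + [(1, "zero")]
            + [(40, "zero")] * 8 + [(40, "small")] * 2)
tables = train_tables(training)
seq = [(1, "big"), (40, "zero"), (1, "small"), (40, "small")]
bits = encode(seq, tables)
assert decode(bits, [p for p, _ in seq], tables) == seq  # lossless round trip
print(tables[1], tables[40])
```

With these counts, "small" receives a different codeword at position 1 than at position 40, which is exactly the behavior the text describes.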
For a given runsize that occurs at zigzag position p of the n-th block, we use the average of the observed coefficient sizes in the block as an estimate of intra-block energy:

$$\mathrm{intra}(n, p) = \frac{1}{p-1} \sum_{i=1}^{p-1} \frac{\mathrm{SIZE}(b_n(i))}{\max \mathrm{SIZE}(i)} \tag{1}$$

where b_n denotes the n-th block, b_n(i) denotes the coefficient at position i, SIZE(·) denotes the bits required to represent the amplitude of the coefficient, and max SIZE(i) is the observed maximum coefficient size for position i over the images in the training set. Similarly, the inter-block energy value is estimated based on the average sizes of coefficients at F nearby zigzag positions in the B adjacent prior blocks (ROMP uses F = 5 and B = 3):

$$\mathrm{inter}(n, p) = \frac{1}{B \cdot F} \sum_{i=n-B}^{n-1} \sum_{j=p}^{p+F-1} \frac{\mathrm{SIZE}(b_i(j))}{\max \mathrm{SIZE}(j)} \tag{2}$$

Putting it all together. Context in ROMP is defined by a triple ⟨p, i, e⟩: zigzag position p, intra-block energy i, and inter-block energy e. Note that the statistical dependencies captured by this context information are well known in image coding and exploited by state-of-the-art compression techniques, but to the best of our knowledge we are the first to take advantage of them for low latency JPEG transcoding (where complexity is minimized by using memory to store many context-dependent Huffman tables).

At a high level, ROMP works as follows (Figure 5). From a training set of images, ROMP learns a Huffman table for each unique context (i.e., for each unique combination of position, intra-block energy, and inter-block energy). For each runsize that occurs in any image in the training set, ROMP first determines its context triple and then groups it with the other runsizes belonging to the same context. After gathering all the runsizes for each context, ROMP generates a table for that context based on the number of occurrences of each runsize.
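Equations (1) and (2) translate almost directly into code. The sketch below is written under explicit assumptions that the text does not settle: each block is a list of 64 quantized coefficients in zigzag order (1-based position p maps to index p − 1); max_size is a per-position list of training maxima; SIZE is taken to be the JPEG magnitude category, i.e. the bit length of |coef|; the p = 1 case, missing prior blocks, and positions past 64 contribute zero; and the 20 energy levels come from uniform binning of [0, 1]. The example max_size values and blocks are hypothetical.

```python
F, B = 5, 3   # nearby positions and prior blocks, as stated in the text
LEVELS = 20   # energy levels per dimension, as stated in the text


def size(coef):
    """JPEG-style SIZE category: bits needed for |coef| (0 for a zero coefficient)."""
    return abs(coef).bit_length()


def _norm(coef, max_size):
    # Positions never nonzero in training would divide by zero; treat them as 0.
    return size(coef) / max_size if max_size > 0 else 0.0


def intra_energy(blocks, n, p, max_size):
    """Eq. (1) with 1-based position p; blocks[n] holds 64 zigzag-ordered coefficients."""
    if p == 1:
        return 0.0  # no earlier positions; handling of this case is an assumption
    return sum(_norm(blocks[n][i - 1], max_size[i - 1]) for i in range(1, p)) / (p - 1)


def inter_energy(blocks, n, p, max_size):
    """Eq. (2): positions p..p+F-1 of blocks n-B..n-1, normalized by B*F."""
    total = 0.0
    for i in range(n - B, n):
        if i < 0:
            continue  # blocks before the first one contribute 0 (assumption)
        for j in range(p, p + F):
            if j <= 64:  # positions past the block end are skipped (assumption)
                total += _norm(blocks[i][j - 1], max_size[j - 1])
    return total / (B * F)


def level(energy):
    """Uniformly bin an energy into LEVELS levels (the binning rule is assumed)."""
    return min(int(energy * LEVELS), LEVELS - 1)


def context(blocks, n, p, max_size):
    """The <p, i, e> triple that selects one Huffman table."""
    return (p,
            level(intra_energy(blocks, n, p, max_size)),
            level(inter_energy(blocks, n, p, max_size)))


max_size = [11] + [10] * 63                          # hypothetical training maxima
blocks = [[8, -3, 1] + [0] * 61 for _ in range(4)]   # hypothetical image blocks
print(context(blocks, 3, 2, max_size))
```

Because the context depends only on already-decoded coefficients (earlier positions in the same block and earlier blocks), a decoder can recompute it before reading each symbol, which is what lets the context-selected tables stay out of the stored file.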
ROMP predefines 20 different energy levels for both intra-block and inter-block energy, which leads to about 64 × 20 × 20 = 25,600 different contexts and Huffman tables to be learned.⁶ These tables differ substantially from one another, which allows ROMP to achieve better compression than standard JPEG. When an image is uploaded, ROMP decodes the JPEG image, computes the corresponding triple ⟨p, i, e⟩ for each symbol, and then uses the learned Huffman tables to re-code the image. Before delivering an image to a client, ROMP reverses its context-sensitive entropy code, then applies the default JPEG entropy code.

C. L-ROMP: A Gracefully Lossy Coder

ROMP’s entropy coder is lossless with respect to the uploaded JPEG. In this section, we describe L-ROMP, which introduces a controlled, perceptually insignificant amount of loss (or distortion) in uploaded images as a way of achieving further savings in photo storage.

As discussed previously, users upload high quality JPEG images, and many photo sharing services, e.g., Facebook, change the JPEG quality parameter to a lower level in order to ensure predictable storage usage, a step that introduces additional distortion. Figure 6 quantifies the dramatic increase in distortion caused by re-quantization, compared to quantizing the original raw image directly to the target quality parameter, showing rate-distortion performance with two different objective quality metrics: PSNR and MS-SSIM. The “Re-quantize (raw)” curve is obtained by reducing the quality parameter when compressing the original raw images, while the “Re-quantize (JPEG)” curve is obtained by re-quantizing a high-quality JPEG image derived from the same set of raw images. The former curve shows much more graceful degradation; re-quantizing the JPEG introduces much more distortion for the same size reduction. This happens because the latter suffers from cumulative errors due to two consecutive quantization steps.
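The cumulative-error effect can be reproduced on synthetic data. The sketch below assumes Laplacian-distributed values as stand-ins for DCT coefficients and uses single hypothetical step sizes (real JPEG quantization applies a per-frequency table scaled by the quality parameter). It quantizes each value once with a fine step q1 (the camera's JPEG) and again with a coarser step q2 (the service's re-quantization), then compares the error against quantizing the original value directly with q2. Direct quantization always picks the nearest multiple of q2, so the two-step path can never beat it on any individual coefficient; it can only tie or lose.

```python
import random


def quantize(x, q):
    """Uniform scalar quantization, reconstructing at the nearest multiple of q."""
    return q * round(x / q)


def mse(ref, approx):
    """Mean squared error between two equal-length sequences."""
    return sum((a - b) ** 2 for a, b in zip(ref, approx)) / len(ref)


rng = random.Random(0)
# Laplacian stand-ins for DCT coefficients: the difference of two i.i.d.
# exponentials with rate 0.1 is Laplace-distributed with scale 10.
coeffs = [rng.expovariate(0.1) - rng.expovariate(0.1) for _ in range(20_000)]

q1, q2 = 5.0, 8.0  # hypothetical camera (fine) and service (coarse) step sizes
direct = [quantize(x, q2) for x in coeffs]               # raw -> q2 once
twice = [quantize(quantize(x, q1), q2) for x in coeffs]  # raw -> q1 -> q2

print(f"direct to q2: MSE {mse(coeffs, direct):.2f}")
print(f"q1, then q2:  MSE {mse(coeffs, twice):.2f}")
```

Both outputs land on the same grid of multiples of q2, so the gap in error comes entirely from the intermediate rounding to q1.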
⁶ These tables take up less than 16 MB of memory, a negligible increase in memory usage for modern machines.

[Figure 6 plots: (a) PSNR (dB) vs. file size (KB); (b) MS-SSIM vs. file size (KB); curves for L-ROMP, Re-quantize (Raw), and Re-quantize (JPEG)]

Fig. 6: Rate/distortion performance of L-ROMP, compared to re-quantization from the raw image and from the JPEG image, using Tecnick images of 1200 × 1200. (a): Using PSNR as the quality metric, showing performance on JPEG images at four quality parameters (70, 80, 86, 90). (b): Using the MS-SSIM metric, focusing on JPEG images with quality parameter 75; "o" marks the perceptually lossless setting of L-ROMP.

L-ROMP avoids re-quantizing coefficients; instead, it introduces distortion by carefully setting some non-zero (quantized) coefficients to zero, a specific instance of a general idea called thresholding [12]. The intuition behind thresholding is that, by setting a well-chosen non-zero coefficient to zero, it is possible to increase the number of consecutive zero-valued coefficients along the zigzag scan. This generally reduces size, because it replaces two separate runs of zeros and the non-zero coefficient between them with a single run of zeros. Although optimization of coefficient thresholding has been studied from a rate-distortion perspective [13], [12], we are not aware of it being explored as an alternative to re-quantization in large photo sharing services. Here we use a simplified version in which only coefficients of size 1 (i.e., 1 or −1) can be removed. This means that removing any coefficient increases distortion by the same amount.⁷ Thus, we only need to decide, for a given coefficient, whether the bit-rate savings are sufficient to justify removing it.
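To make the run-merging intuition concrete, the following sketch run-length codes the 63 AC coefficients of one block into baseline-JPEG-style (run, size) symbols, using ZRL (15, 0) for each run of 16 zeros and EOB (0, 0) for trailing zeros, and shows how zeroing a ±1 coefficient merges two runs into one. It is an illustration rather than L-ROMP's code; the function names and the example block are hypothetical.

```python
def size_category(v: int) -> int:
    """JPEG SIZE category: bits needed for |v| (0 for a zero coefficient)."""
    return abs(v).bit_length()


def runsize_symbols(ac):
    """Run-length code one block's AC coefficients (zigzag order) into (run, size) pairs."""
    symbols, run = [], 0
    for v in ac:
        if v == 0:
            run += 1
            continue
        while run > 15:            # runs longer than 15 zeros need ZRL symbols
            symbols.append((15, 0))
            run -= 16
        symbols.append((run, size_category(v)))
        run = 0
    if run > 0:                    # trailing zeros collapse into a single EOB
        symbols.append((0, 0))
    return symbols


def drop_unit_coefficient(ac, index):
    """Return a copy of ac with the +/-1 coefficient at index set to zero."""
    if abs(ac[index]) != 1:
        raise ValueError("only size-1 coefficients (+1 or -1) may be removed")
    out = list(ac)
    out[index] = 0
    return out


ac = [5, 0, 0, 1, 0, 3] + [0] * 57                     # hypothetical block: 63 AC values
print(runsize_symbols(ac))                             # [(0, 3), (2, 1), (1, 2), (0, 0)]
print(runsize_symbols(drop_unit_coefficient(ac, 3)))   # [(0, 3), (4, 2), (0, 0)]
```

Removing the 1 at index 3 turns the runs of two and one zeros, plus the coefficient between them, into a single run of four, so the block needs one fewer symbol and one fewer amplitude bit.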
We make this decision by introducing a rate threshold and thresholding a coefficient only if the bits saved by doing so would exceed this threshold. However, setting too many coefficients to zero within a block can introduce local artifacts (e.g., blocking). Thus, L-ROMP uses a perceptual threshold $T_p$ that limits the fraction of non-zero coefficients in a block that can be set to zero. In this way, L-ROMP can guarantee that the block-wise SSIM with respect to the original JPEG is always higher than $1 - \frac{T_p}{2 - T_p}$. For example, if we use $T_p = 0.1$ (i.e., at most 10% of the non-zero coefficients can be thresholded), then the block-wise SSIM is guaranteed to be higher than 0.947. The proof of this bound is based on results from [14] and is omitted for brevity.

⁷ Note that the actual MSE will differ if two coefficients have the same value 1 but different frequency weights in the quantization matrix. However, by ignoring this difference we take into account the different perceptual weighting given to each frequency and obtain better perceptual quality.

In Figure 6(a), we observe that L-ROMP degrades PSNR more gracefully than simple re-quantization. With conservative thresholds, L-ROMP's curve is actually higher than the curve for re-quantizing from the raw image, illustrating how efficiently L-ROMP trades distortion for bit savings. L-ROMP performs equally well on the MS-SSIM metric (Figure 6(b)).

To achieve perceptually lossless compression, we also conducted a subjective evaluation by developing a comparison tool that lets a viewer choose thresholds and displays the image at the chosen setting along with the resulting size reduction. We find that a rate threshold of 2.0 and a perceptual threshold of 0.4 provide the maximum bit savings without noticeable quality distortion ("o" in Figure 6(b)). We use these parameters for L-ROMP.
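The rate threshold and the perceptual threshold can be combined into a per-block decision loop. The sketch below is one possible reading of the text, not L-ROMP's actual procedure: it visits ±1 coefficients in zigzag order (the visiting order is our assumption), zeroes one when the estimated saving exceeds `rate_threshold`, and stops once floor(`tp` × number of non-zero coefficients) have been removed. The cost estimator `estimate_bits` is a hypothetical stand-in for the coded size of a block under ROMP's context-dependent tables, and `toy_bits` is an invented cost model for the example. The block also evaluates the SSIM bound 1 − T_p / (2 − T_p) stated above.

```python
import math
from typing import Callable, List


def ssim_lower_bound(tp: float) -> float:
    """Block-wise SSIM bound 1 - T_p / (2 - T_p) stated in the text."""
    return 1 - tp / (2 - tp)


def threshold_block(ac: List[int],
                    estimate_bits: Callable[[List[int]], float],
                    rate_threshold: float = 2.0,
                    tp: float = 0.4) -> List[int]:
    """Zero +/-1 coefficients whose removal saves more than rate_threshold bits,
    removing at most floor(tp * number_of_nonzero_coefficients) of them."""
    budget = math.floor(tp * sum(1 for v in ac if v != 0))
    out = list(ac)
    removed = 0
    for i, v in enumerate(ac):     # zigzag visiting order is an assumption
        if removed >= budget:
            break
        if abs(v) != 1:
            continue
        trial = list(out)
        trial[i] = 0
        if estimate_bits(out) - estimate_bits(trial) > rate_threshold:
            out = trial
            removed += 1
    return out


def toy_bits(block: List[int]) -> float:
    """Hypothetical cost model: every non-zero coefficient costs 6 bits."""
    return 6.0 * sum(1 for v in block if v != 0)


ac = [5, 1, 0, -1, 1, 0, 3] + [0] * 56
print(threshold_block(ac, toy_bits)[:7])   # [5, 0, 0, 0, 1, 0, 3]
print(round(ssim_lower_bound(0.1), 3))     # 0.947
```

With five non-zero coefficients and `tp = 0.4`, the budget is two removals, so the third ±1 coefficient survives even though removing it would also save bits; the perceptual cap, not the rate threshold, is what stops the loop here.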
Finally, L-ROMP can easily be introduced into ROMP's pipeline: L-ROMP's thresholding is applied to each block before the context-sensitive entropy coding (Figure 5). No changes are required to ROMP's entropy coder.

D. Encoding/Decoding in Parallel to Reduce Latency

A recompression codec that achieves both high compression and low coding latency is ideal for photo sharing services. However, generally speaking, a codec with a higher compression ratio has higher complexity, and thus higher coding latency. Without reducing complexity, a parallelized codec can still reduce coding latency, and some codecs have explored this idea [10]. Parallelizing reduces latency in terms of wall-clock time, but because the codec still requires the same number of CPU cycles, it cannot improve coding throughput. This means that when CPU resources are the bottleneck, parallelized codecs offer no benefit.⁸ For this reason, the number of CPU cycles is the right metric for complexity. However, when CPU resources are not the bottleneck, the ability to exploit parallelism is an important feature that lets recompression codecs further reduce coding latency.

ROMP can easily be extended to a parallelized, multi-threaded version. ROMP just needs to break the original image into N sub-images to encode them in N threads. ROMP saves each encoded sub-image separately, together with the sub-image's offset in the original JPEG image, i.e., the position in the original JPEG of the first bit of this sub-image. At decoding time, ROMP runs N decoding threads, each of which decodes one encoded sub-image and writes the decoded bits to the location indicated by its offset. In this way, the N decoding threads collectively recover the original JPEG image.
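The offset mechanism can be sketched with Python threads. This is a simplified stand-in, not ROMP's codec: `zlib` replaces ROMP's context-sensitive entropy coder, the input is split at byte boundaries rather than at bit and block boundaries, and the function names are hypothetical. Each encoded piece is stored with the offset where it starts in the original data, and each decoding thread writes its output into a disjoint slice of a shared buffer at that offset.

```python
import zlib
from concurrent.futures import ThreadPoolExecutor
from typing import List, Tuple


def encode_parallel(data: bytes, n: int) -> List[Tuple[int, bytes]]:
    """Split data into n contiguous pieces and compress each in its own thread.

    Returns (offset, compressed_piece) pairs; the offset records where the
    piece starts in the original data. zlib stands in for ROMP's coder."""
    step = max(1, -(-len(data) // n))  # ceiling division
    pieces = [(off, data[off:off + step]) for off in range(0, len(data), step)]
    with ThreadPoolExecutor(max_workers=n) as pool:
        return list(pool.map(lambda p: (p[0], zlib.compress(p[1])), pieces))


def decode_parallel(encoded: List[Tuple[int, bytes]], total_len: int, n: int) -> bytes:
    """Decompress every piece in parallel, writing each at its stored offset."""
    out = bytearray(total_len)

    def work(item: Tuple[int, bytes]) -> None:
        off, comp = item
        raw = zlib.decompress(comp)
        out[off:off + len(raw)] = raw  # slices are disjoint, so threads never overlap

    with ThreadPoolExecutor(max_workers=n) as pool:
        list(pool.map(work, encoded))  # consume the iterator to surface exceptions
    return bytes(out)


data = bytes(range(256)) * 1000
enc = encode_parallel(data, 4)
print([off for off, _ in enc])                   # [0, 64000, 128000, 192000]
print(decode_parallel(enc, len(data), 4) == data)  # True
```

Because every piece carries its own offset, decoding threads need no coordination beyond the shared output buffer; the cost is one stored offset per piece.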
Enabling N threads can reduce the encoding/decoding latency to approximately 1/N of that of the original, single-thread ROMP.

⁸ In fact, a parallelized codec introduces additional CPU overhead to enable parallelism.

The next question is whether enabling parallelism negatively affects compression. Multi-threaded ROMP requires more bits to represent the original JPEG, for two reasons. First, multi-threaded ROMP needs to store the extra offset information. Second, to encode a block, ROMP uses the B adjacent prior blocks to predict the current block (inter-block energy dependence), but for the first B blocks of each sub-image this information is incomplete, which reduces predictability and therefore compressibility. Fortunately, both penalties are negligible for ROMP's compression. Offset information requires 64 bits per thread for an image no larger than 4 GB. This is clearly negligible: each thread usually handles hundreds of KB of data, so the penalty is less than 0.01%. The second penalty is also insignificant. Each thread encodes thousands of blocks (e.g., a 2048 × 1536 image contains about 50,000 blocks), so it makes little difference that only 3 of them (ROMP uses B = 3) lack complete information for prediction. For L-ROMP, on the other hand, enabling parallelism would not introduce any penalties. In our experiment, a 4-thread ROMP reduces the coding latency to less than 1/3 of the single-thread ROMP's latency, while introducing only 0.01% extra bits. We conclude that both ROMP and L-ROMP can easily be extended to multi-threaded versions.

IV. EVALUATION

We experimentally explore three key questions:
• How do ROMP and L-ROMP compare to the state of the art in terms of compression ratio and complexity under a variety of settings?
• What compression can ROMP and L-ROMP achieve, and what storage savings does that translate into?
• What collateral benefits do ROMP and L-ROMP enable?

A. Methodology

a) Implementation: Our evaluations use an implementation of ROMP that has two software components: a training script and a codec. The training script is implemented in Python; it takes a training set of JPEG images, decodes them, and generates Huffman tables. Training is done once and is off the path of photo uploads and downloads, so it is not included in complexity measurements. The codec is implemented on top of libjpeg-turbo [15]. The implementation of L-ROMP is an extension of ROMP that adds a block-modification stage to the image processing pipeline. ROMP and L-ROMP are publicly available [16].

b) Baselines: To quantify the compression ratios and complexity of ROMP and L-ROMP, we compare them with lossless JPEG codecs and alternative photo formats. The lossless JPEG codecs include all those we are aware of that have publicly available implementations: JPEG Standard, JPEG Optimized, JPEG Progressive, JPEG Arithmetic, MozJPEG [17], PackJPG [9], and Lepton [10]. The alternative photo formats are WebP and JPEG2000.

c) Image Sets: Our evaluation uses two sets of images:
• Tecnick [18] is a set of 100 images, each available in many resolutions up to 1200 × 1200. The images are in a raw format (PNG), which allows us to transcode them to JPEGs of different resolutions and quality parameters. We use Tecnick because it is commonly used in image processing benchmarks.
• FiveK [19] is an image set from MIT-Adobe that contains 5,000 publicly available raw images taken with different SLR cameras. We use this data set to evaluate images with higher resolution than the 1200 × 1200 maximum of the Tecnick image set.

d) Metrics: We evaluate our compression schemes and their baselines on two metrics: compression ratio and encoding/decoding time.
Compression ratio measures storage efficiency and is the ratio of saved storage to old storage. More precisely, let $s'$ be the size of an image generated by a scheme and $s$ be the size generated by JPEG Standard. Then the compression ratio is $\frac{s - s'}{s}$. The encoding time is the time to recompress from JPEG Standard, and the decoding time is the time to decompress back to JPEG Standard. To make a fair comparison of coding complexity among schemes, we measure the encoding/decoding time of the single-thread version of each scheme.⁹

⁹ The only scheme that supports parallelism is Lepton [10]; we use its single-thread version for evaluation.

e) Testing: The images used for our experiments are drawn from the image sets described above. For the small Tecnick image set, we use 10-fold cross validation: the 100 images are divided into 10 groups of 10 images; we test on one group of 10 images after training on the other 90 images, and repeat this procedure for every group so that every image in the set is tested. For the FiveK image set, we use 1,000 randomly chosen images for training and the rest for testing.

B. Compression & Complexity

ROMP and L-ROMP are designed to provide high compression ratios with low complexity. This subsection compares the complexity and compression ratios of ROMP and L-ROMP against the state of the art. We evaluate under a variety of settings, with an eye to a future in which photos will be larger and of higher resolution.

a) Compression/Complexity Tradeoff: Compression techniques represent different points in the complexity vs. compression tradeoff. In general, higher complexity (i.e., longer encoding/decoding time) makes higher compression ratios possible. Figures 7a and 7b present this tradeoff for the alternatives considered in this paper, for two different image resolutions and a quality parameter of 75 on the FiveK dataset. We exclude results for transcoding back and forth from other image formats, i.e., WebP and JPEG2000, because they are clear outliers: they require 700–2900 ms to decompress a 2048 × 1536 photo.

[Figure 7 plots: compression ratio (%) vs. complexity (ms); legend includes L-ROMP, PackJPG, and Lepton; (a) 1152 × 864, quality 75; (b) 2048 × 1536, quality 75]

Fig. 7: Compression/complexity tradeoff on the FiveK image set. The larger marker indicates decoding complexity, while the smaller marker shows encoding complexity.

[Figure 8 plots: compression ratio (%) vs. (a) quality parameter at 1200 × 1200 and (b) resolution (X by X) at quality 75; legend includes ROMP, Arithmetic, and MozJpeg]

Fig. 8: The compression ratio over the JPEG Standard baseline as (a) the quality parameter changes and (b) the resolution changes, for the Tecnick image set.

[Figure 9 plots: decoding time (ms) vs. (a) quality parameter at 1200 × 1200 and (b) resolution (X by X) at quality 75; legend includes Progressive and Optimized]

Fig. 9: The decoding complexity as (a) the quality parameter changes and (b) the resolution changes, for the Tecnick image set. (Y-axis ranges differ for readability.)

For encoding time, ROMP is comparable to JPEG Arithmetic and much faster than the other competitors. In particular, the high-compression schemes, PackJPG and Lepton, both require roughly 4× the encoding time. Compared to ROMP, L-ROMP's additional thresholding step does not add any extra complexity. Decoding time is the more relevant metric for ROMP because it affects user-perceived delay. ROMP's decoder is slightly faster than JPEG Arithmetic, comparable to JPEG Progressive and MozJPEG, 5× faster than PackJPG, and 2× faster than Lepton.
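The compression-ratio metric and the 10-fold protocol used for Tecnick fit in a few lines. This is a hedged sketch: the helper names are ours, the sizes in the example are invented, and it assumes the number of images divides evenly into the folds (true for the 100 Tecnick images). The text does not say how images were assigned to groups, so the consecutive grouping here is an assumption.

```python
from typing import List, Sequence, Tuple


def compression_ratio(s_standard: float, s_scheme: float) -> float:
    """(s - s') / s, where s is the JPEG Standard size and s' the scheme's size."""
    return (s_standard - s_scheme) / s_standard


def k_fold_splits(items: Sequence, k: int = 10) -> List[Tuple[list, list]]:
    """Split items into k consecutive groups (an assumption); each group is the
    test set exactly once. Assumes len(items) is divisible by k."""
    size = len(items) // k
    splits = []
    for g in range(k):
        test = list(items[g * size:(g + 1) * size])
        train = list(items[:g * size]) + list(items[(g + 1) * size:])
        splits.append((train, test))
    return splits


print(compression_ratio(100_000, 85_000))                  # 0.15
splits = k_fold_splits(range(100))
print(len(splits), len(splits[0][0]), len(splits[0][1]))   # 10 90 10
```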
L-ROMP's decoder is identical to ROMP's, but after thresholding the image is smaller, which makes L-ROMP's decoding ~20% faster than ROMP's. Interestingly, PackJPG is the only scheme whose decoding time is higher than its encoding time. Finally, ROMP has a higher compression ratio than almost every other alternative: only PackJPG and Lepton achieve higher compression ratios.

This experiment shows that ROMP and L-ROMP occupy a unique position in the tradeoff space, achieving both a high compression ratio (15–29%) and low complexity (60 ms encoding/decoding time for a 2048 × 1536 image). By contrast, the other high-compression schemes, PackJPG and Lepton, have a compression ratio of 20%, but this comes at a considerable complexity cost. Their encoding times exceed 250 ms and their decoding times exceed 110 ms (PackJPG's decoding takes more than 310 ms), which we consider unacceptable because it would shift the latency distribution significantly (Figure 12).

b) Compression Ratio as Quality and Resolution Increase: As camera technology continues to improve, we expect users to upload images with higher quality and resolution, so compression performance at higher quality parameters and resolutions is important. Figure 8a evaluates the compression ratio of ROMP, L-ROMP, and the baselines as a function of the quality parameter on the Tecnick dataset. These schemes are generally robust to changing quality parameters, with their lowest compression ratios around quality parameter 75. We believe this is because we are comparing against JPEG Standard, whose Huffman tables are optimized for the widely used quality parameter of 75. This experiment validates the robustness of ROMP's compression: we see compression ratios over 15% for all quality parameters.

Figure 8b explores the effect of the trend towards higher-resolution images.
The figure shows the compression ratio of ROMP and L-ROMP over JPEG Standard for increasing image resolutions. ROMP's compression ratio increases with image resolution, in contrast with all other low-complexity alternatives. ROMP's compression ratio is close to that of PackJPG and Lepton at the highest quality parameter. This is an important property given the trend towards larger images with higher quality parameters. One reason for this property is that ROMP can train and use different coding tables for different image parameters, while other schemes may perform poorly for certain image parameters. We have also verified this trend on the FiveK dataset (omitted for brevity).

c) Decoding Complexity as Quality and Resolution Increase: Figure 9a shows how decoding time scales with increasing image quality. We observe that schemes with low decoding complexity scale well; the decoding times of PackJPG and Lepton, however, scale poorly with image size and image quality. Figure 9b shows how decoding time scales with increasing image resolution. We see a trend similar to that for increasing quality: schemes with low decoding complexity scale well. This reaffirms our finding that ROMP and L-ROMP are better codecs. ROMP occupies a sweet spot in the complexity/compression tradeoff space: even though PackJPG and Lepton have higher compression ratios, they scale poorly with the trend towards larger, higher-quality images. L-ROMP is an even better choice if perceptually indistinguishable changes are acceptable.

C. Storage Reduction for Photo Backends

This section estimates the compression ratios ROMP and L-ROMP can achieve for a real photo-sharing service, namely Facebook's photo storage system. The experiments above report compression ratios over JPEG Standard; we need to translate these results into benefits over JPEG Optimized, which is more popular because it is a clear winner over JPEG Standard.
We estimate that ROMP and L-ROMP would achieve compression ratios of 13% and 26%, respectively, over JPEG Optimized (instead of 15% and 28% over JPEG Standard). We obtain this estimate by converting the images used in the experiments above to JPEG Optimized and re-running the experiments. Because compression ratios vary slightly with the quality parameter and resolution, we downloaded Facebook photos, computed their average JPEG quality parameter and resolution, and used those to pick these compression ratio values. We use these values below to calculate the storage reduction and collateral benefits of deploying ROMP and L-ROMP in the photo-sharing service.

a) Storage Reduction: The storage reduction from a compression scheme is greater than its compression ratio alone because photo sharing services replicate images for fault tolerance and load balancing. As a result, physical image storage is a multiple of the logical size of the stored images, i.e., the effective replication factor. Facebook's Haystack [7] has an effective replication factor of 3.6× and f4 [3] has an effective replication factor of 2.1×. Figure 10 shows how ROMP and L-ROMP would reduce the effective replication factors of Haystack and f4.

                 Current   ROMP   L-ROMP
  Compression      0%       13%     26%
  Haystack        3.6×     3.1×    2.7×
  f4              2.1×     1.8×    1.6×

Fig. 10: New effective replication factor if the compression schemes were deployed, based on their compression ratios over JPEG Optimized for large photos.

The difference between the current effective replication factor and the new factor is the storage reduction due to deploying ROMP and L-ROMP. For instance, if ROMP were deployed on Haystack, it would reduce the storage footprint by 0.5× the logical size of the images it stores, and if L-ROMP were used on Haystack, it would reduce the storage requirements by almost the size of one complete copy of the images (0.9×).
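The arithmetic behind Fig. 10 is simple: compressing every replica by the same ratio scales the effective replication factor by (1 − ratio), and the saving is the difference between the old and new factors. The sketch below uses helper names of our own and assumes, as the text does, that the 13% and 26% ratios over JPEG Optimized apply uniformly to all stored photos.

```python
def new_replication_factor(factor: float, ratio: float) -> float:
    """Effective replication factor after compressing every stored copy by ratio
    (assumes the ratio applies uniformly to all photos)."""
    return factor * (1 - ratio)


def storage_reduction(factor: float, ratio: float) -> float:
    """Saved storage, in multiples of the logical size of the stored photos."""
    return factor - new_replication_factor(factor, ratio)


for system, factor in [("Haystack", 3.6), ("f4", 2.1)]:
    for scheme, ratio in [("ROMP", 0.13), ("L-ROMP", 0.26)]:
        print(f"{system:8s} {scheme:6s} "
              f"new={new_replication_factor(factor, ratio):.1f}x "
              f"saved={storage_reduction(factor, ratio):.1f}x")
```

Rounded to one decimal, the loop reproduces the factors in Fig. 10 and savings between 0.3× (ROMP on f4) and 0.9× (L-ROMP on Haystack) of the logical size.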
If L-ROMP were used on f4, it would reduce the storage footprint by 0.5× the logical size of the images.

D. Collateral Benefits

This subsection uses a data-driven, model-based approach to quantify the collateral benefits of placing the decoder in the edge cache. These benefits include a larger effective cache size, increased cache hit rates, fewer backfill requests and bytes, and, when L-ROMP is used, a reduction in external bandwidth. L-ROMP can also affect download latency, which we quantify as well.

a) Methodology: Data-Driven Model-Based Estimation: We use a data-driven, model-based approach to estimate the collateral benefits of deploying ROMP on the photo stack of a large provider, described in Figure 2. At a high level, each box in this figure is associated with a distribution of processing latencies, and each link with a distribution of transfer latencies. In addition, caches have an associated hit rate, and we model cache hits by assuming that every photo has the same probability of a cache hit, given by the hit rate.

We combine multiple measurement results from the current photo stack to parameterize the model. We use measurements from a 2013 Facebook study [6] to obtain the cache hit rates for the model, and we combine latency measurements from [6] and our recent measurement study [20] to obtain its transfer latencies. We need to update the model in two ways: first, the processing latency of ROMP or L-ROMP decompression must be added to the edge caches; second, the cache hit rates must be recomputed, which changes the percentage of requests served by each cache layer and the distribution of download latency. We discuss these changes below.

Cache Hit Rates. In the absence of ROMP, we assume the cache hit rates of edge caches and origin caches follow the measurement results from [6].
ROMP or L-ROMP would reduce the size of each image stored in a cache, allowing each cache to hold more images. To compute this effective cache size increase resulting from compression, we use the following model: for a codec with a compression ratio of $x\%$, the cache size effectively increases by a factor of $\frac{1}{1 - x\%}$. For L-ROMP, for example, this yields a cache ~1.35× its original size. We use this as the new cache size to update the cache hit rates at edge caches and origin caches (denoted $H_e$ and $H_o$, respectively) based on Figure 10 of [6].

Fraction of Requests Served by Cache Layers. A change in the cache hit rate of any layer changes the percentage of requests served by the edge caches, the origin caches, and the backend (denoted $S_e$, $S_o$, and $S_b$, respectively):

$$S_e = H_e, \qquad S_o = (1 - H_e)\,H_o, \qquad S_b = (1 - H_e)(1 - H_o).$$

A different set of $S_*$ values¹⁰ corresponds to a redistribution of load across the cache layers, and we use these values to analyze load changes at the different cache layers, changes to internal and external bandwidth, and the change in the distribution of download latency.

Download Latency. We estimate the impact on download latency of changes to $S_*$ by obtaining the distributions of download latencies from the edge caches, origin caches, and backend (denoted $L_e$, $L_o$, and $L_b$, respectively) and combining them into one overall distribution using the new $S_*$. We obtain $L_e$ and $L_b$ from our previous work [20].¹¹ Separately, we obtain the origin-cache-to-backend latency distribution from the Facebook study (Figure 7 of [6]) and subtract it (in a distributional sense) from $L_b$ to obtain $L_o$. We then update $L_*$ to account for ROMP's or L-ROMP's decompression latency by shifting each distribution uniformly. With both the updated $S_*$ and $L_*$, we can then estimate the distribution of the overall download latency $L$.
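The pieces of this model compose directly. The sketch below (helper names are ours) computes the effective cache-size factor, the request fractions $S_e$, $S_o$, $S_b$, a uniform shift of a latency distribution, and the mixture $L(x) = S_e L_e(x) + S_o L_o(x) + S_b L_b(x)$, with each distribution represented as a discrete map from latency in ms to probability. The hit rates, latencies, and decode time in the example are invented placeholders, not measurements from [6] or [20]; mapping the larger effective cache size to new hit rates requires the measured hit-rate curve, which the sketch does not model.

```python
from typing import Dict, Sequence, Tuple

Dist = Dict[int, float]  # latency in ms -> probability


def effective_cache_factor(ratio: float) -> float:
    """A cache holds 1 / (1 - ratio) times as many images after compression."""
    return 1 / (1 - ratio)


def request_fractions(h_e: float, h_o: float) -> Tuple[float, float, float]:
    """Fractions served by edge, origin, and backend given hit rates H_e and H_o."""
    s_e = h_e
    s_o = (1 - h_e) * h_o
    s_b = (1 - h_e) * (1 - h_o)
    return s_e, s_o, s_b


def shift(dist: Dist, delta_ms: int) -> Dist:
    """Shift a latency distribution uniformly, e.g. by a decode latency."""
    return {x + delta_ms: p for x, p in dist.items()}


def mixture(fractions: Sequence[float], dists: Sequence[Dist]) -> Dist:
    """L(x) = S_e L_e(x) + S_o L_o(x) + S_b L_b(x)."""
    out: Dist = {}
    for s, dist in zip(fractions, dists):
        for x, p in dist.items():
            out[x] = out.get(x, 0.0) + s * p
    return out


print(round(effective_cache_factor(0.26), 2))   # 1.35
s = request_fractions(h_e=0.6, h_o=0.5)          # placeholder hit rates
decode_ms = 10                                   # placeholder decode latency
dists = [shift(d, decode_ms) for d in ({20: 1.0}, {80: 1.0}, {200: 1.0})]
print(mixture(s, dists))
```

Because the fractions always sum to one, the mixture remains a valid probability distribution; higher hit rates simply move probability mass toward the faster layers.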
Let $L_*(x)$ denote the probability of a latency of $x$ ms under distribution $L_*$. Then

$$L(x) = S_e L_e(x) + S_o L_o(x) + S_b L_b(x),$$

and we can enumerate over $x$ to obtain the entire distribution of $L$.

¹⁰ We use $S_*$ to collectively denote $S_e$, $S_o$, and $S_b$.

¹¹ This study measured the distribution of latencies for Facebook (and other photo providers) by downloading small images from several hundred PlanetLab sites and several thousand RIPE Atlas sites. We extend its results to what we would expect for larger images by using the reported time-to-first-byte latency and the transfer rate for the remaining bytes. This slightly overestimates the latency, because the transfer rate during TCP's slow-start phase is generally lower than during the congestion-avoidance phase. However, we expect this effect to be small and believe our estimates are representative.

                                        ROMP    L-ROMP
  Compression                            13%      26%
  Effective Cache Size                  1.15×    1.35×
  Hit Ratio Increase: Edge Cache         1.1%     2.5%
  Hit Ratio Increase: Origin Cache       1.6%     3.8%
  Reduction in Requests to Backend       4.9%    11.4%
  Reduction in Bytes Sent to Edge       15.2%    30.5%
  Reduction in External Bandwidth        0.0%    15.5%

Fig. 11: Estimated collateral benefits from deploying ROMP in the Facebook photo caching stack.

b) Results: We now present the benefits of deploying ROMP.

Cache Hit Rates. Figure 11 shows how deploying ROMP on the Facebook photo caching stack would change the effective cache sizes and how this would affect cache hit rates. For instance, using L-ROMP would result in a 2.5% increase in the hit rate at the edge caches and a nearly 4% increase in the hit rate at the origin caches. In turn, these increases in hit rate can (1) reduce the number of requests to the storage backend, (2) reduce the bandwidth used between the storage backend and users, and (3) decrease latency for user requests [6]. We examine the effect of our schemes on each of these goals in turn.

Fewer Requests To The Storage Backend.
Backend storage for images typically uses hard drives, which can serve only a small number of requests per second. For instance, a typical 4 TB disk holding a large number of images can sustain at most 80 Input/Output Operations Per Second (IOPS) while keeping per-request latency acceptably low [3]. As a result, one goal of an image caching stack is to reduce the number of requests to the backend storage system. Reducing requests also allows images to be moved sooner from hot storage, which has a high effective replication factor, to warm storage, which has a lower one, because their request rate drops sooner to a level the warm storage system can handle [3]. Figure 11 shows the reduction in requests to the backend. L-ROMP, for instance, would reduce requests to the backend by over 10%. The reduction comes from the hit rate increases in the caches.

Fewer Bytes To The Edge and Externally. One of the primary goals of Facebook's edge cache is to reduce the bandwidth required between it and the origin cache [6]. ROMP reduces the required bandwidth in two ways when deployed at the edge. First, our recompressed images are smaller than their JPEG Optimized counterparts, which reduces bandwidth in proportion to the compression ratio. Second, the increased cache hit ratio leads to fewer edge-cache misses that must be filled from the origin cache or the backend. The combination of these effects is shown in Figure 11. L-ROMP, for instance, would reduce the bandwidth between the edge and origin by 30.5%.

[Figure 12 plot: CDF vs. additional latency (ms), from −800 to 600 ms, for ROMP, L-ROMP, and PackJPG]

Fig. 12: Complementary cumulative distribution function (CCDF) of the latency difference from deploying ROMP, L-ROMP, and PackJPG.
An additional benefit of using L-ROMP arises from the fact that the image delivered to the user is often smaller than the uploaded image. Thus, it can reduce the data consumption of mobile devices as well as the amount of external bandwidth used by the service. This reduction is directly proportional to the lossy savings of those schemes, i.e., their compression ratio without the normal ROMP component (Figure 11).

Latency Effects. One of the goals of a photo caching stack is to decrease the latency for users to download photos. The expected latency effects of deploying ROMP in a photo caching stack are complex, and our objective was for them to leave download latency no worse, and ideally better. ROMP's decoding time adds latency to every request. However, ROMP would also reduce latency in two ways. First, the increased cache hit rates would result in more requests being served by caching layers closer to the user. Second, the smaller images produced by L-ROMP require less data to be transferred, reducing overall download times. Figure 12 shows the estimated latency of downloading a 2048 × 1536 image. We see that ROMP has a negligible effect on latency and that L-ROMP reduces latency above the 40th percentile. For the tail of the distribution above the 90th percentile, L-ROMP can reduce latency by more than 500 ms. This gain comes almost entirely from L-ROMP's reduction in image size; we also estimated the latency effect of L-ROMP's cache hit rates without the image size reduction and saw a curve very similar to ROMP's. The increased cache hit rates do not have a noticeable impact because the Facebook stack is well provisioned, i.e., adding extra capacity has a small effect [6].
Although we have focused on deploying ROMP at the edge cache, the latency effect of deploying ROMP at the transcoder tier can be inferred: the additional decoding latency would be added only to the requests served by the backend, so we would observe a similar latency effect above the 70th percentile (the tail 30%), but lower latency at other percentiles. Photo sharing services work hard to optimize tail latency, so low-complexity codecs are required as well [7]. Our analysis shows that the high-complexity PackJPG inflates the latency of the fastest 40% of downloads by more than 50%. Even though the decompression latency is incurred only for cache misses, we still see a significant impact on the overall latency distribution: ~80% of the distribution incurs more than 150 ms of additional latency.

We also estimated the collateral benefits of deploying ROMP for photo sharing services that are not as well provisioned as Facebook, e.g., services with limited cache sizes. For such services we see slightly larger improvements in all metrics, but we omit details due to space constraints.

V. RELATED WORK

This section explains how our approach relates to prior work in compressed storage systems, photo compression, and photo-sharing architectures.

Compression in Storage Systems. The use of compression to improve the efficiency of storage systems dates back several decades. Prior work includes techniques for achieving compression in file systems [21], [22], [23], [24], in databases [25], [26], or for unstructured inputs [27]. ROMP is inspired by this line of work, especially the idea of "online compression" [22], [21], [23], but focuses on a relatively new class of large-scale storage systems designed specifically for photos, whose requirements and workloads differ from those considered by prior work.
Unlike file or database compression systems, ROMP must compress already-compressed objects, leveraging the observation that large coding tables can provide compact storage across the entire storage system (which file and database compression systems could leverage but, to our knowledge, do not). In addition, our approach requires reasoning about performance impacts across a globally distributed photo stack.

Photo Compression. Compression methods for photo-sharing services need to provide high compression and low complexity. Generic file compression techniques like gzip and bzip2, which do not leverage specific properties of images, provide only negligible compression for photos beyond JPEG. The same is true of deduplication, which has received significant attention recently [28], [29], [30], [31], [32], [33]. We validated this experimentally on the FiveK image set and found ≤ 0.5% compression for all the generic schemes we tried: gzip, bzip2, xz, fixed-block deduplication, and variable-block deduplication.

Several papers have explored JPEG compression. Some focus on compressing JPEG losslessly [34], [35], [36], [37], [38]. However, as shown in Section IV, they either cannot achieve compression as high as ROMP's or have much higher complexity. Lossy JPEG compression methods include transcoding from JPEG to another format (e.g., WebP [39] or JPEG2000 [4]) and transcoding to JPEG with lower quality or resolution. The former introduces high complexity [39], [4] and thus is not a viable option. Transcoding to JPEG with lower quality or lower resolution often introduces significant quality degradation [40], whereas L-ROMP degrades more gracefully than these approaches.

Recently, there have been several proposals for compressing photo storage by analyzing higher-level structures (objects, landmarks) in similar images [41], [42], [43], [44].
Because they have to recognize such structural similarity, these techniques generally have much higher complexity; it is also not clear that their quality degradations are acceptable. ROMP outperforms other lossless JPEG compression schemes by occupying a unique point in the tradeoff between compression and complexity: high compression with low complexity. L-ROMP is inspired by prior work on thresholding [12], [45], [46], but differs from it in introducing only perceptually lossless changes and in treating low complexity as the primary design constraint.

Other Related Work. Researchers have also explored several complementary aspects of photo service stacks: Haystack [7] is used for image storage at Facebook and contains optimized metadata storage to reduce photo fetch latency; Huang et al. [6] present a measurement study of the efficacy of Facebook's distributed photo caching architecture, which resembles Figure 2; and f4 [3] is a storage system for photos and other binary objects that are infrequently accessed. ROMP is complementary to this body of work; it can be used on Haystack or f4, and can improve the effectiveness of Facebook's caches.

VI. CONCLUSION

Motivated by the need for additional tools for managing storage in large photo sharing services, this paper explores the problem of image recompression in these services and proposes two low-complexity recompression schemes, ROMP and L-ROMP, that produce perceptually lossless compression with gains of 15-28%. Compression gains of this magnitude can substantially reduce storage requirements at these services. In addition, they increase cache hit ratios, reduce requests to the backend, reduce download latency and download sizes, and reduce wide-area network traffic.

REFERENCES

[1] Facebook, Ericsson, and Qualcomm, “A Focus on Efficiency,” September 2013.
[2] G. K. Wallace, “The JPEG Still Picture Compression Standard,” in Commun. ACM, vol. 34. New York, NY, USA: ACM, Apr. 1991, pp. 30–44.
[3] S. Muralidhar, W. Lloyd, S. Roy, C. Hill, E. Lin, W. Liu, S. Pan, S. Shankar, V. Sivakumar, L. Tang, and S. Kumar, “f4: Facebook’s Warm BLOB Storage System,” in 11th USENIX Symposium on Operating Systems Design and Implementation (OSDI 14). Broomfield, CO: USENIX Association, Oct. 2014, pp. 383–398.
[4] D. Taubman and M. Marcellin, “JPEG2000: Standard for Interactive Imaging,” vol. 90, no. 8, Aug 2002, pp. 1336–1357.
[5] X. Xu, Z. Akhtar, R. Govindan, W. Lloyd, and A. Ortega, “Context-Adaptive Quantization and Entropy Coding for Very Low Latency JPEG Transcoding,” in 41st IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP 2016). IEEE Signal Processing Society, Mar. 2016.
[6] Q. Huang, K. Birman, R. van Renesse, W. Lloyd, S. Kumar, and H. C. Li, “An Analysis of Facebook Photo Caching,” in Proceedings of the Symposium on Operating Systems Principles (SOSP), Nov. 2013.
[7] D. Beaver, S. Kumar, H. C. Li, J. Sobel, and P. Vajgel, “Finding a Needle in Haystack: Facebook’s Photo Storage,” in Proceedings of the Symposium on Operating Systems Design and Implementation (OSDI), 2010.
[8] A. Sourov, Improving Facebook on Android, available at https://code.facebook.com/posts/485459238254631/improving-facebook-on-android/.
[9] H. Aalen, PackJPG, available at http://www.elektronik.htw-aalen.de/packjpg/.
[10] D. R. Horn, K. Elkabany, C. Lesniewski-Lass, and K. Winstein, “The design, implementation, and deployment of a system to transparently compress hundreds of petabytes of image files for a file-storage service,” in 14th USENIX Symposium on Networked Systems Design and Implementation (NSDI 17). Boston, MA: USENIX Association, 2017, pp. 1–15. [Online]. Available: https://www.usenix.org/conference/nsdi17/technical-sessions/presentation/horn
[11] E. Y. Lam and J. W. Goodman, “A Mathematical Analysis of the DCT Coefficient Distributions for Images,” vol. 9, no. 10. IEEE, 2000, pp. 1661–1666.
[12] K. Ramchandran and M. Vetterli, “Rate-Distortion Optimal Fast Thresholding with Complete JPEG/MPEG Decoder Compatibility,” in IEEE Transactions on Image Processing, vol. 3, no. 5, 1994, pp. 700–704.
[13] A. Ortega and K. Ramchandran, “Rate-Distortion Methods for Image and Video Compression,” in IEEE Signal Processing Magazine, vol. 15, no. 6. IEEE, 1998, pp. 23–50.
[14] S. S. Channappayya, A. C. Bovik, R. W. H. Jr., and C. Caramanis, “Rate Bounds on SSIM Index of Quantized Image DCT Coefficients,” in 2008 Data Compression Conference (DCC 2008), 25-27 March 2008, Snowbird, UT, USA, 2008, pp. 352–361.
[15] D. Commander, libjpeg-turbo, available at http://libjpeg-turbo.virtualgl.org/.
[16] X. Xu, Z. Akhtar, R. Govindan, W. Lloyd, and A. Ortega, ROMP, available at https://github.com/xingxux/ROMP.
[17] Mozilla, mozjpeg, available at https://blog.mozilla.org/research/2014/03/05/introducing-the-mozjpeg-project/.
[18] Tecnick.com LTD, Tecnick Testimages, available at http://www.Tecnick.com.
[19] V. Bychkovsky, S. Paris, E. Chan, and F. Durand, “Learning Photographic Global Tonal Adjustment with a Database of Input / Output Image Pairs,” in The Twenty-Fourth IEEE Conference on Computer Vision and Pattern Recognition, 2011.
[20] Z. Akhtar, A. Hussain, E. Katz-Bassett, and R. Govindan, “DBit: Assessing Statistically Significant Differences in CDN Performance,” in Traffic Monitoring and Analysis Workshop, 2016.
[21] M. Burrows, C. Jerian, B. Lampson, and T. Mann, “On-line Data Compression in a Log-structured File System,” in Proceedings of the Fifth International Conference on Architectural Support for Programming Languages and Operating Systems, ser. ASPLOS V. New York, NY, USA: ACM, 1992, pp. 2–9.
[22] Y. Klonatos, T. Makatos, M. Marazakis, M. D. Flouris, and A. Bilas, “Transparent Online Storage Compression at the Block-Level,” in Trans. Storage, vol. 8, no. 2. New York, NY, USA: ACM, May 2012, pp. 5:1–5:33.
[23] F. Douglis, “The Compression Cache: Using On-line Compression to Extend Physical Memory,” in Proceedings of 1993 Winter USENIX Conference, 1993, pp. 519–529.
[24] F. Douglis, “On the role of compression in distributed systems,” in SIGOPS Oper. Syst. Rev., vol. 27, no. 2. New York, NY, USA: ACM, Apr. 1993, pp. 88–93.
[25] W. K. Ng and C. V. Ravishankar, “Block-oriented Compression Techniques for Large Statistical Databases,” in IEEE Transactions on Knowledge and Data Engineering, vol. 9, 1997, pp. 314–328.
[26] G. V. Cormack, “Data Compression on a Database System,” in Commun. ACM, vol. 28, no. 12. New York, NY, USA: ACM, Dec. 1985, pp. 1336–1342.
[27] M. Ajtai, R. Burns, R. Fagin, D. D. E. Long, and L. Stockmeyer, “Compactly Encoding Unstructured Inputs with Differential Compression,” in Journal ACM, vol. 49, no. 3. New York, NY, USA: ACM, May 2002, pp. 318–367.
[28] L. Xu, A. Pavlo, S. Sengupta, J. Li, and G. R. Ganger, “Reducing Replication Bandwidth for Distributed Document Databases,” in Proceedings of the Sixth ACM Symposium on Cloud Computing, ser. SoCC ’15. New York, NY, USA: ACM, 2015, pp. 222–235.
[29] B. Zhu, K. Li, and H. Patterson, “Avoiding the Disk Bottleneck in the Data Domain Deduplication File System,” in Proceedings of the 6th USENIX Conference on File and Storage Technologies, ser. FAST’08. Berkeley, CA, USA: USENIX Association, 2008.
[30] G. Wallace, F. Douglis, H. Qian, P. Shilane, S. Smaldone, M. Chamness, and W. Hsu, “Characteristics of Backup Workloads in Production Systems,” in Proceedings of the 10th USENIX Conference on File and Storage Technologies, ser. FAST’12. Berkeley, CA, USA: USENIX Association, 2012.
[31] K. Srinivasan, T. Bisson, G. Goodson, and K. Voruganti, “iDedup: Latency-aware, Inline Data Deduplication for Primary Storage,” in Proceedings of the 10th USENIX Conference on File and Storage Technologies, ser. FAST’12. Berkeley, CA, USA: USENIX Association, 2012.
[32] D. T. Meyer and W. J. Bolosky, “A Study of Practical Deduplication,” in Proceedings of the 9th USENIX Conference on File and Storage Technologies, ser. FAST’11. Berkeley, CA, USA: USENIX Association, 2011.
[33] W. Dong, F. Douglis, K. Li, H. Patterson, S. Reddy, and P. Shilane, “Tradeoffs in Scalable Data Routing for Deduplication Clusters,” in Proceedings of the 9th USENIX Conference on File and Storage Technologies, ser. FAST’11. Berkeley, CA, USA: USENIX Association, 2011.
[34] N. Ponomarenko, K. Egiazarian, V. Lukin, and J. Astola, “Additional Lossless Compression of JPEG Images,” in Image and Signal Processing and Analysis, 2005. ISPA 2005. Proceedings of the 4th International Symposium on, Sept 2005, pp. 117–120.
[35] I. Bauermann and E. Steinbach, “Further Lossless Compression of JPEG Images,” in Proceedings of PCS 2004 - Picture Coding Symposium, CA, 2004.
[36] M. Hasan, K. M. Nur, and H. B. Shakur, “An Improved JPEG Image Compression Technique based on Selective Quantization,” in International Journal of Computer Applications, vol. 55, no. 3, October 2012, pp. 9–14.
[37] I. Matsuda, Y. Nomoto, K. Wakabayashi, and S. Itoh, “Lossless Re-encoding of JPEG Images Using Block-Adaptive Intra Prediction,” in 16th European Signal Processing Conference (EUSIPCO 2008), Lausanne, Switzerland, August 2008.
[38] M. Stirner and G. Seelmann, “Improved Redundancy Reduction for JPEG Files,” in Proc. of Picture Coding Symposium (PCS 2007), Lisbon, Portugal, November 7-9, 2007, 2007.
[39] Google, WebP: A New Image Format for the Web, available at https://developers.google.com/speed/webp/.
[40] S. Coulombe and S. Pigeon, “Low-Complexity Transcoding of JPEG Images with Near-Optimal Quality Using a Predictive Quality Factor and Scaling Parameters,” in IEEE Transactions on Image Processing, vol. 19, no. 3. IEEE, 2010, pp. 712–721.
[41] Z. Shi, X. Sun, and F. Wu, “Photo Album Compression for Cloud Storage Using Local Features,” in IEEE J. Emerg. Sel. Topics Circuits Syst., vol. 4, no. 1, 2014, pp. 17–28.
[42] H. Yue, X. Sun, J. Yang, and F. Wu, “Cloud-Based Image Coding for Mobile Devices - Toward Thousands to One Compression,” vol. 15, no. 4, 2013, pp. 845–857.
[43] R. Zou, O. Au, G. Zhou, W. Dai, W. Hu, and P. Wan, “Personal Photo Album Compression and Management,” in Circuits and Systems (ISCAS), 2013 IEEE International Symposium on, May 2013, pp. 1428–1431.
[44] D. Perra and J. Frahm, “Cloud-Scale Image Compression Through Content Deduplication,” in Proceedings of the British Machine Vision Conference. BMVA Press, 2014.
[45] F.-W. Tse and W.-K. Cham, “Image Compression Using DC Coefficient Restoration and Optimal AC Coefficient Thresholding,” in Circuits and Systems, 1997. ISCAS ’97., Proceedings of 1997 IEEE International Symposium on, vol. 2, Jun 1997, pp. 1241–1244.
[46] V. Ratnakar and M. Livny, “Extending RD-OPT with Global Thresholding for JPEG Optimization,” in Proceedings of the 6th Data Compression Conference (DCC ’96), Snowbird, Utah, March 31 - April 3, 1996, J. A. Storer and M. Cohn, Eds. IEEE Computer Society, 1996, pp. 379–386.