Destruction of Image Steganography using Generative Adversarial Networks

Digital image steganalysis, or the detection of image steganography, has been studied in depth for years and is driven by Advanced Persistent Threat (APT) groups', such as APT37 Reaper, utilization of steganographic techniques to transmit additional …

Authors: Isaac Corley, Jonathan Lwowski, Justin Hoffman

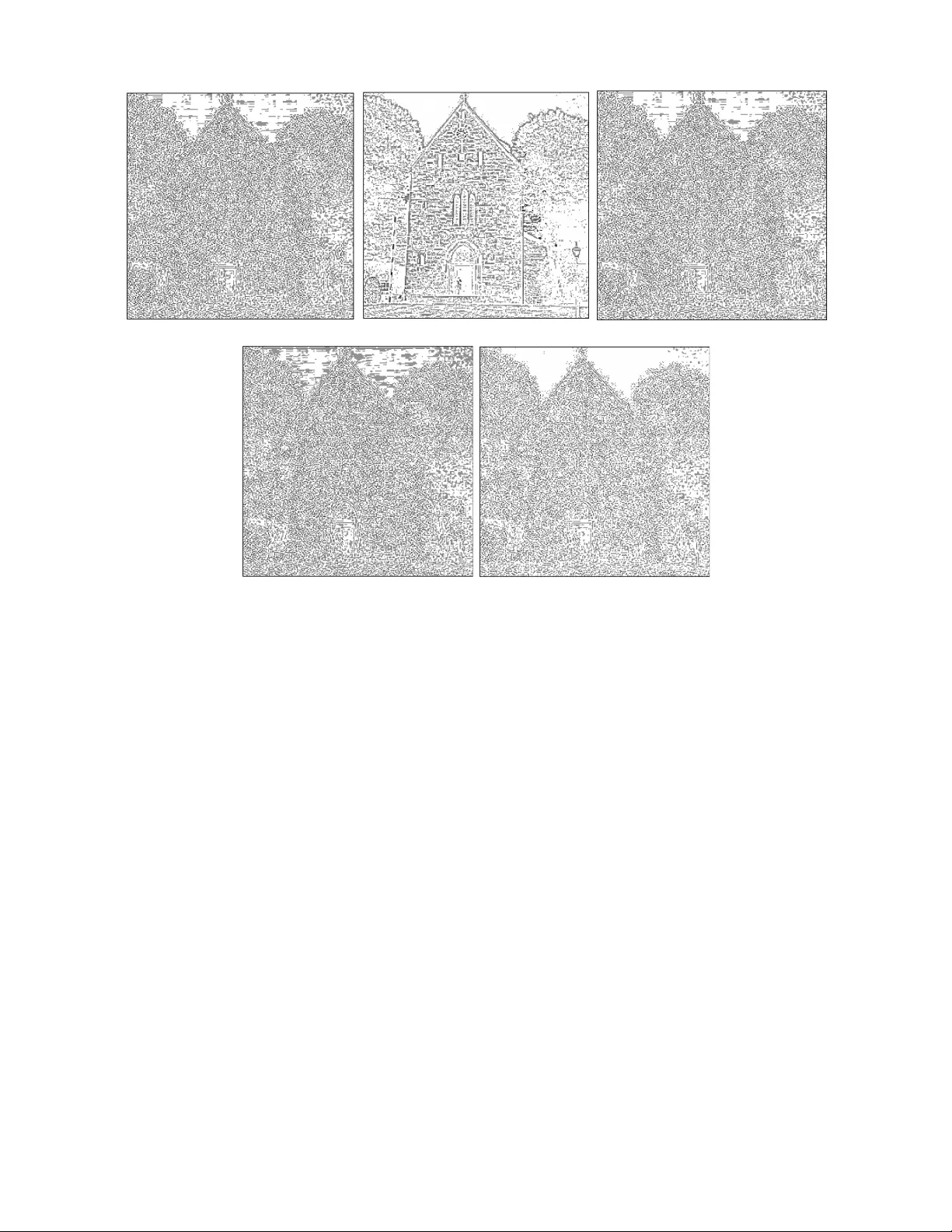

Destruction of Image Ste ganography using Generati v e Adv ersarial Netw orks Isaac Corley ∗ , Jonathan Lwo wski † , Justin Hof fman ‡ Booz Allen Hamilton San Antonio, T exas Email: ∗ corley isaac@bah.com, † lwo wski jonathan@bah.com, ‡ hoffman justin@bah.com Abstract —Digital image steganalysis, or the detection of image steganography , has been studied in depth f or years and is driven by Advanced Persistent Threat (APT) groups’, such as APT37 Reaper , utilization of stegano- graphic techniques to transmit additional malware to perform further post-exploitation activity on a compro- mised host. Howev er , many steganalysis algorithms ar e constrained to work with only a subset of all possible images in the wild or are kno wn to produce a high false positive rate. This results in blocking any suspected image being an unreasonable policy . A more feasible policy is to filter suspicious images prior to reception by the host machine. Ho wever , how does one optimally filter specifically to obfuscate or remo ve image steganography while av oiding degradation of visual image quality in the case that detection of the image was a false positive? W e propose the Deep Digital Steganography Purifier (DDSP), a Generative Adv ersarial Network (GAN) which is optimized to destroy steganographic content without compromising the perceptual quality of the original image. As verified by experimental results, our model is capable of pr oviding a high rate of destruction of steganographic image content while maintaining a high visual quality in comparison to other state-of-the-art filtering methods. Additionally , we test the transfer learning capability of generalizing to to obfuscate real malware payloads embedded into different image file formats and types using an unseen steganographic algorithm and prov e that our model can in fact be deployed to provide adequate results. Index T erms —Steganalysis, Deep Learning, Machine Learning, Neural Networks, Steganography , Image Ste- ganalysis, Inf ormation Hiding I . I N T R O D U C T I O N Steganography is the usage of an algorithm to embed hidden data into files such that during the transferral of the file only the sender and the intended recipient are aw are of the existence of the hidden payload [1]. In modern day applications, adversaries and Advanced Persistent Threat (APT) groups, such as APT37 Reaper [2], commonly utilize these algorithms to hide the trans- mission of shellcode or scripts to a compromised system. Once the file is recei ved, the adversary then extracts the malicious payload and e xecutes it to perform further post-exploitation activity on the target machine. Due to the highly undetectable nature of the current state-of- the-art steganography algorithms, adversaries are able Patent Applied for in the United States to ev ade defensive tools such as Intrusion Detection Systems (IDS) and/or Anti virus (A V) software which utilize heuristic and rule-based techniques for detection of malicious activity . Modern ste ganalysis methods, or techniques de vel- oped to specifically detect steganographic content, uti- lize analytic or statistical algorithms to detect traditional steganography algorithms, such as Least Significant Bit (LSB) ste ganography [3]. Ho wever , these methods strug- gle to detect advanced steg anography algorithms, which embed data using unique patterns based on the content of each indi vidual file of interest. This results in high false positi ve rates when detecting steganograph y and poor performance when deployed, ef fectiv ely making it unrealistic to perform prev entativ e measures such as blocking particular images or traffic from being trans- mitted within the network. Furthermore, image steganal- ysis techniques are typically only capable of detecting a small subset of all possible images, limiting them to only detect images of a specific size, color space, or file format. T o address these issues, the usage of steganography destruction techniques can be used to provide a more feasible policy to handling potential false alarms. Instead of obstructing the transmission of suspicious images, filtering said images to remo ve steganographic content effecti vely making it unusable by potential adversaries provides a simpler solution. Ho we ver , traditional and unintelligent steganographic filtering techniques result in the additional issue of degrading image quality . In this paper , we propose an intelligent image steganography destruction model which we term Deep Digital Steganography Purifier (DDSP), which utilizes a Generative Adversarial Network (GAN) [4] trained to remove steganographic content from images while maintaining high perceptual quality . T o the best of our knowledge, our DDSP model remo ves the greatest amount of steganographic content from images while maintaining the highest visual image quality in com- parison to other state-of-the-art image ste ganography destruction methods detailed in Section II-A. The rest of the paper is organized as follo ws. Section II will discuss the prior work in the image steganography purification domain, along with the background infor- mation on GANs. The dataset used for e valuating our image purification model will be presented in Section III, followed by a detailed description of DDSP in Section IV. The experimental results will be discussed and analyzed in Section V. Finally the conclusion and future works will be presented in Section VI. I I . B AC K G R O U N D A. Prior W ork Attacks on steganographic systems [5] hav e been an activ e topic of research with differing objectives of either completely removing hidden steganographic content or slightly obfuscating the steganographic data to be unus- able while av oiding significant degradation of file qual- ity . W ithin the same realm of machine learning based steganographic attacks, the PixelSte ganalysis [6] method utilizes an architecture based on PixelCNN++ [7] to build pix el and edge distributions for each image which are then manually remov ed from the suspected image. The PixelSte ganalysis method is experimented against three steganographic algorithms, Deep Steganography [8] a Deep Neural Network (DNN) based algorithm, In visible Steg anography GAN (ISGAN) [9] a GAN based algorithm, and the Least Significant Bit (LSB) algorithm. The majority of other steganographic destruc- tion techniques are based on non-machine learning based methods which utilize v arious digital filters or wa velet transforms [10 – 17]. While these approaches may appear simple to implement and do not require training on a dataset, they do not specifically remove artifacts and patterns left by ste ganographic algorithms. Instead these techniques look to filter out high frequency content, which can result in image quality degradation due to perceptual quality not being prioritized. B. Super Resolution GAN The application of GANs for removing stegano- graphic content while maintaining high visual quality draws inspiration from the application of GANs to the task of single image super resolution (SISR). Ledig et. al’ s research [18] utilized the GAN framew ork to optimize a ResNet [19] to increase the resolution of low resolution images to be as visually similar as possible to their high resolution counterparts. Their research additionally detailed that using the GAN framework as opposed solely to a pixel-wise distance loss function, e.g. mean squared error (MSE), results in additional high frequency texture detail to be recreated. They note that using the MSE loss function alone results in images that appear perceptually smooth due to the averaging nature of their objectiv e function. Instead, the authors optimize a multi-objecti ve loss function which is composed of a weighted sum of the pix el-wise MSE as a content loss and the adversarial loss produced by the GAN framew ork to reconstruct the lo w and high frequency detail, respectiv ely . I I I . D A TA T o train our proposed model, we utilized the BOSS- Base dataset [20] because it is used as a benchmark dataset for numerous steganalysis papers. The dataset contains 10,000 grayscale images of size 512x512 of the portable gray map (PGM) format. The images were preprocessed prior to training by con verting to the JPEG format with a quality factor of 95% and resizing to 256x256 dimensions. As seen in Figure 1, 4 different steganography algorithms are used to embed payloads into the 10,000 images. These algorithms (HUGO [21], HILL [22], S-UNIW ARD [23], and WO W [24]) are a few state-of-the-art steganograph y algorithms which are difficult to detect ev en by modern steganalysis al- gorithms. These algorithms are open source and made av ailable by the Digital Data Embedding Laboratory of Binghamton Uni versity [25]. For each of these steganog- raphy algorithms, 5 different embedding rates, 10%, 20%, 30%, 40%, and 50%, were used with 10% being the most difficult to detect, and 50% being the easi- est to detect. This process created a total of 210,000 images consisting of 200,000 steganographic images, and 10,000 cov er images. The BOSSBase dataset was then split into train and test sets using a train/test split criterion of 75%/25%. Fig. 1: Steganography dataset creation process I V . D E E P D I G I TA L S T E G A N O G R A P H Y P U R I FI C A T I O N Deep Digital Steganography Purifier (DDSP) consists of a similar architecture to SRGAN [18]. Howe ver in- stead of using a large ResNet, DDSP utilizes a pretrained autoencoder as the generator network in the GAN frame- work to remov e the ste ganographic content from images without sacrificing image quality . The DDSP model can be seen in Figure 2. The autoencoder is initially trained using the MSE loss function and then fine tuned using the GAN training frame work. This is necessary because Fig. 2: Deep Digital Steganography Purifier (DDSP) Architecture Fig. 3: Autoencoder Architecture Fig. 4: Encoder Network Architecture autoencoders are trained to optimize MSE which can cause images to hav e slightly lower quality than the original image due to the reasons discussed in Section II-B. More detailed descriptions of the architectures are discussed in the following sections. A. Autoencoder Ar chitectur e The residual autoencoder , seen in Figure 3, consists of encoder and decoder networks. The encoder learns to reduce the size of the image while maintaining as much information necessary . The decoder then learns how to optimally scale the image to its original size while having removed the ste ganographic content. 1) Encoder Arc hitectur e The encoder, seen in Figure 4, takes an image with steganography as its input. The input image is then normalized using Min-Max normalization, which scales pixel values to the range of [0 , 1] . After normalization, the image is fed into a 2-D con volutional layer with a kernel size of 9x9 and 64 filters, followed by a ReLU activ ation [26]. The output of the con volutional layer is then fed into a downsample block, seen in Figure 5. The downsample block consists of two 2-D con volutional layers with the first one having a stride of 2 which causes the image to be downsampled by a factor of Fig. 5: Downsample Block Architecture Fig. 6: Residual Block Architecture 2. The use of a con volutional layer to downsample the image is important because it allo ws the model to learn the near optimal approach to do wnsample the image while maintaining high image quality . The downsampled output is then passed into 16 serial residual blocks. The residual block architecture can be seen in Figure 6. Follo wing the residual blocks, a final 2-D conv olutional layer with batch normalization is added with the original output of the downsample block in a residual manner to form the encoded output. 2) Decoder Arc hitectur e The decoder, seen in Figure 9, takes the output of the encoder as its input. The input is then upsampled using nearest interpolation with a factor of 2. This will increase the shape of the encoder’ s output back to the size of the original input image. The upsampled image is then fed into a 2-D con volutional layer with a kernel size of 3x3 and 256 filters followed by a ReLU activ ation. This is then fed into another 2-D con volutional layer with a kernel size of 9x9, and 1 filter followed by a T anh activ ation. Since the output of the T anh activation function is in the range [ − 1 , 1] , the output is denormalized to scale the pixel values back to the range [0 , 255] . This output represents the purified image. 3) Autoencoder T raining T o train the autoencoder, steganographic images are used as the input to the encoder . The encoder creates the encoded image which is fed into the decoder which decodes the image back to its original size. The decoded image is then compared to its corresponding cover image counterpart using the MSE loss function. The Fig. 7: Discriminator Architecture for the DDSP Fig. 8: Discriminator Block Architecture Fig. 9: Decoder Network Architecture autoencoder was trained using early stopping and was optimized using the Adam optimizer [27] with a learning rate α = 10 − 3 , β 1 = 0 . 5 , and β 2 = 0 . 9 . B. GAN T raining Similar to the SRGAN training process, we use the pretrained model to initialize the generator network. As seen in Figure 7, the discriminator is similar to the SRGAN’ s discriminator with the exception of DDSP’ s discriminator blocks, seen in Figure 8, which contains the number of con volutional filters per layer in decreas- ing order to significantly reduce the number of model parameters which decreases training time. T o train the DDSP model, the GAN is trained by having the generator produce purified images. These purified images along with original cov er images are passed to the discriminator which is then optimized to distinguish between purified and cov er images. The GAN framework was trained for 5 epochs (enough epochs for the model to conv erge) to fine tune the gen- erator to produce purified images with high frequency detail of the original cov er image more accurately . V . R E S U LT S T o assess the performance of our proposed DDSP model in comparison to other steganography removal methods, image resizing and denoising wavelet filters, the testing dataset was used to analyze the image pu- rification quality of the DDSP model. Image quality metrics in combination with visual analysis of im- age differencing are used to provide further insight to how each method purifies the images. Finally , the DDSP model’ s generalization abilities are analyzed by testing the transfer learning performance of purifying steganography embedded using different ste ganography algorithms and file types. A. Image Purification Quality T o compare the quality of the resulting purified im- ages, the follo wing metrics were calculated between the purified images and their corresponding steganographic counterpart images: Mean Squared Error (MSE), Peak Signal-to-Noise Ratio (PSNR), Structural Similarity In- dex (SSIM) [28], and Universal Quality Index (UQI) [29]. The MSE and PSNR metrics are point-wise mea- surements of error while the SSIM and UQI metrics were dev eloped to specifically assess image quality . T o provide a quantitativ e measurement of the model’ s dis- tortion of the pixels to destroy steganographic content, we utilize the bit error ratio (BER) metric, which in our use case can be summarized as the number of bits in the image that hav e changed after purification, normalized by the total number of bits in the image. Our proposed DDSP is then baselined against se veral steganography remov al or obfuscation techniques. The first method simply employs bicubic interpolation to downsize an image by a scale factor of 2 and then resize the image back to its original size. As seen in Figure 10(b), the purified image using bicubic interpolation is blurry and does not perform well with respect to main- taining high perceptual image quality . The next baseline method consists of denoising filters using Daubechies 1 (db1) wav elets [30] and BayesShrink thresholding [31]. An example of the resulting denoised image can be seen in Figure 10(c). It is notable that the wa velet denoising method is more visually capable of maintaining adequate image quality in comparison to the bicubic resizing method. The final baseline method we compare our (a) Original Image (b) Bicubic Interpolation. (c) Denoising W av elet Filter (d) Autoencoder (e) DDSP Fig. 10: Examples of Scrubbed Images Using V arious Models DDSP model against is using the pretrained autoencoder prior to GAN fine tuning as the purifier . As seen in Figure 10(d), the autoencoder does a sufficient job in maintaining image quality while purifying the image. Fi- nally , the resulting purified image from the DDSP can be seen in Figure 10(e). The DDSP and the autoencoder’ s purified images have the best visual image quality , with the w av elet filtered image having a slightly lower image quality . Not only does our proposed DDSP maintain very high perceptual image quality , it is quantitati vely better image purifier based on image quality metrics. As seen in T able I, the images purified using DDSP resulted in the greatest performance with respect to the BER, MSE, PSNR, SSIM and UQI metrics in comparison to all baselined methods. Since our proposed DDSP model resulted in the highest BER at 82%, it changed the greatest amount of bits in the image, effecti vely obfuscating the most amount of ste ganographic content. Even though our proposed DDSP model changed the highest amount of bits within each image, it produces outputs with the highest quality as verified by the PSNR, SSIM, and UQI metrics, indicating it is the paramount method to use for steganography destruction. T ABLE I: T esting Results on the BossBase Dataset Model BER MSE PSNR SSIM UQI DDSP 0.82 5.27 40.91 0.99 0.99 Autoencoder 0.78 5.97 40.37 0.98 0.99 W av elet Filter 0.52 6942.51 9.72 0.19 0.50 Bicubic Inter . 0.53 6767.35 9.82 0.22 0.51 B. Image Differ encing T o provide additional analysis of the different image purification models, we subtract the original cov er image from their corresponding purified images allowing for the visualization of the effects caused by ste ganography and purification. As seen in Figure 11(a), when the cover image and the corresponding steganographic image are differenced, the resulting image contains a lot of noise. This is expected because the steganography algorithm injects payloads as high frequency noise into the images. The differenced bicubic interpolation purified image, seen in Figure 11(b), removes the majority of noise from the image. Howe ver , as discussed in the previous section, the bicubic interpolation method does not maintain good visual quality as it removes original content from the image. As seen in Figure 11(c) and Figure 11(d), both (a) Original Steganography (b) Bicubic Interpolation (c) Denoising W av elet Filter (d) Autoencoder (e) DDSP Fig. 11: Examples of Original Image Subtracted from Using Scrubbed Image from V arious Models the denoising wa velet filter and autoencoder purifier do not remov e the noise from the image. Instead, they both appear to inject additional noise into the image to obfuscate the steganographic content, making it un- usable. This is visually apparent in the noise located in the whitespace near the top building within the image. For both the wa velet filter and autoencoder , this noise is visually increased in comparison to the original steganographic image. Lastly , as seen in Figure 11(e), the DDSP model removes the noise from the image instead of injecting additional noise. This is again apparent in the whitespace near the top of the image. In the DDSP’ s purified image, almost all of the noise has been removed from these areas, effecti vely learning to optimally remov e the steganographic pattern, which we infer makes the DDSP hav e the highest image quality in comparison to other methods. C. T ransfer Learning T ransfer learning can be described as using a applying a model’ s knowledge gained while training on a certain task to a completely different task. T o understand the generalization capability of our model, we form exper - iments in v olving the purification of images embedded using an unseen steganography algorithm along with an unseen image format. Additionally we test the purifi- cation method of audio files embedded with an unseen steganography algorithm. 1) Application to LSB Stegano graphy T o test the generalization of the DDSP model across unseen image steganograph y algorithms, we recorded the purification performance of the BOSSBase dataset in its original PGM file format embedded with stegano- graphic payloads using LSB steganograph y [32]. The images were embedded with real malicious payloads generated using Metasploit’ s MSFvenom payload gen- erator [33], which is a commonly used exploitation tool. This was done to mimic the realism of an APT hiding malware using image steganography . Without retraining, the LSB steganography images were purified using the various methods. Similar to the results in Section V -A, the DDSP model removed the greatest amount of steganography while maintaining the highest image quality . These results can be verified quantitativ ely by looking at T able II. D. Application to Audio Ste ganography T o test the generalization of DDSP across dif ferent file formats, we additionally recorded performance metrics on audio files embedded with the same malicious pay- T ABLE II: Transfer Learning Results on the LSB Dataset Model BER MSE PSNR SSIM UQI DDSP 0.82 5.09 41.05 0.98 0.99 Autoencoder 0.78 5.63 40.62 0.98 0.99 W av elet Filter 0.53 6935.08 9.72 0.19 0.50 Bicubic Inter . 0.53 6763.73 9.83 0.22 0.51 loads detailed in Section V -C1, using the LSB algorithm. The audio files were from the V oxCeleb1 dataset [34], which contains ov er 1000 utterances from over 12000 speakers, ho wev er we only utilized their testing dataset. The testing dataset contains 40 speakers, and 4874 utterances. In order to use the DDSP model without re- training for purifying the audio files, the audio files were reshaped from vectors into matrices and then fed into the DDSP model. The output matrices from the DDSP model were then reshaped back to the original vector format to recreate the audio file. After vectorization, a butterw orth lowpass filter [35] and a hanning window filter [36] were applied to the audio file to remove the high frequency edge artifacts created when vectorizing the matrices. The models were baselined against a 1- D denoising wa velet filter as well as upsampling the temporal resolution of the audio signal using bicubic interpolation after downsampling by a scale factor of 2. As seen in T able III, the pretrained autoencoder , denoising wavelet filter , and DDSP are all capable of successfully obfuscating the steganography within the audio files without sacrificing the quality of the audio, with respect to the BER, MSE, and PSNR metrics. Howe ver , the upsampling using bicubic interpolation method provides worse MSE and PSNR in comparison to the other techniques. This shows that those models are generalized and remov e steganographic content in v ari- ous file types and ste ganography algorithms. Although the wavelet denoising filter has slightly better metrics than the DDSP and the pretrained autoencoder, we believ e that the DDSP model would greatly outperform wa velet filtering if trained to simultaneously remove image and audio steg anography and appropriately handle 1-D signals as input. T ABLE III: Transfer Learning Results on the V oxCeleb1 Dataset Model BER MSE PSNR DDSP 0.67 650.12 37.28 Autoencoder 0.67 650.14 37.28 W av elet Filter 0.67 643.94 37.65 Bicubic Inter . 0.64 1157.01 35.54 V I . C O N C L U S I O N In this paper , we developed a steganography purifica- tion model which we term Deep Digital Steganography Purifier (DDSP), which utilizes a Generati ve Adversarial Network (GAN) which, to the best of our knowledge, remov es the highest amount of steganographic content from images while maintaining the highest visual image quality in comparison to other state-of-the-art techniques as verified by visual and quantitative results. In the fu- ture, we plan to extend the DDSP model to purify inputs of various types, sizes, and color spaces. Additionally we plan to train the DDSP model on a larger dataset to make the model more robust, thus making it ready to be operationalized for a real steganography purification system. V I I . A C K N OW L E D G E M E N T S The authors would like to thank the members of our team for their assistance, guidance, and revie w of our research. R E F E R E N C E S [1] I. Cox, M. Miller, J. Bloom, J. Fridrich, and T . Kalker , Digital watermarking and ste ganography . Morgan kaufmann, 2007. [2] “ Apt37. ” [Online]. A vailable: https://attack.mitre.org/groups/ G0067/ [3] W . Bender , D. Gruhl, N. Morimoto, and A. Lu, “T echniques for data hiding, ” IBM systems journal , vol. 35, no. 3.4, pp. 313–336, 1996. [4] I. Goodfello w , J. Pouget-Abadie, M. Mirza, B. Xu, D. W arde- Farley , S. Ozair, A. Courville, and Y . Bengio, “Generative adversarial nets, ” in Advances in neural information pr ocessing systems , 2014, pp. 2672–2680. [5] A. W estfeld and A. Pfitzmann, “ Attacks on steganographic systems, ” in International workshop on information hiding . Springer , 1999, pp. 61–76. [6] D. Jung, H. Bae, H.-S. Choi, and S. Y oon, “Pixelsteg analysis: Destroying hidden information with a low degree of visual degradation, ” arXiv pr eprint arXiv:1902.11113 , 2019. [7] T . Salimans, A. Karpathy , X. Chen, and D. P . Kingma, “Pixelcnn++: Improving the pixelcnn with discretized logis- tic mixture likelihood and other modifications, ” arXiv pr eprint arXiv:1701.05517 , 2017. [8] S. Baluja, “Hiding images in plain sight: Deep steganography , ” in Advances in Neural Information Pr ocessing Systems , 2017, pp. 2069–2079. [9] R. Zhang, S. Dong, and J. Liu, “In visible steganography via generativ e adversarial networks, ” Multimedia T ools and Appli- cations , vol. 78, no. 7, pp. 8559–8575, 2019. [10] P . Amritha, M. Sethumadhavan, R. Krishnan, and S. K. Pal, “ Anti-forensic approach to remove stego content from images and videos, ” Journal of Cyber Security and Mobility , vol. 8, no. 3, pp. 295–320, 2019. [11] P . Amritha, M. Sethumadhavan, and R. Krishnan, “On the re- mov al of steganographic content from images, ” Defence Science Journal , vol. 66, no. 6, pp. 574–581, 2016. [12] S. Y . Ameen and M. R. Al-Badrany , “Optimal image steganogra- phy content destruction techniques, ” in International Conference on Systems, Contr ol, Signal Pr ocessing and Informatics , 2013, pp. 453–457. [13] A. Sharp, Q. Qi, Y . Y ang, D. Peng, and H. Sharif, “ A novel acti ve warden steganographic attack for next-generation steganogra- phy , ” in 2013 9th International W ireless Communications and Mobile Computing Conference (IWCMC) . IEEE, 2013, pp. 1138–1143. [14] F . Al-Naima, S. Y . Ameen, and A. F . Al-Saad, “Destroying steganography content in image files, ” in The 5th International Symposium on Communication Systems, Networks and DSP (CSNDSP06), Greece , 2006. [15] P . L. Shrestha, M. Hempel, T . Ma, D. Peng, and H. Sharif, “ A general attack method for steganography removal using pseudo-cfa re-interpolation, ” in 2011 International Conference for Internet T echnology and Secured T ransactions . IEEE, 2011, pp. 454–459. [16] P . Amritha, K. Induja, and K. Rajeev , “ Active warden attack on steganograph y using pre witt filter , ” in Pr oceedings of the International Conference on Soft Computing Systems . Springer, 2016, pp. 591–599. [17] C. B. Smith and S. S. Agaian, “Denoising and the acti ve warden, ” in 2007 IEEE International Confer ence on Systems, Man and Cybernetics . IEEE, 2007, pp. 3317–3322. [18] C. Ledig, L. Theis, F . Husz ´ ar , J. Caballero, A. Cunningham, A. Acosta, A. Aitken, A. T ejani, J. T otz, Z. W ang et al. , “Photo-realistic single image super-resolution using a generative adversarial network, ” in Proceedings of the IEEE conference on computer vision and pattern reco gnition , 2017, pp. 4681–4690. [19] K. He, X. Zhang, S. Ren, and J. Sun, “Deep residual learning for image recognition, ” in Pr oceedings of the IEEE confer ence on computer vision and pattern recognition , 2016, pp. 770–778. [20] P . Bas, T . Filler , and T . Pevn ` y, “”break our steganographic system”: the ins and outs of organizing boss, ” in International workshop on information hiding . Springer , 2011, pp. 59–70. [21] T . Pevn ` y, T . Filler , and P . Bas, “Using high-dimensional image models to perform highly undetectable steganograph y , ” in Inter - national W orkshop on Information Hiding . Springer , 2010, pp. 161–177. [22] B. Li, M. W ang, J. Huang, and X. Li, “ A new cost function for spatial image steganography , ” in 2014 IEEE International Confer ence on Image Pr ocessing (ICIP) . IEEE, 2014, pp. 4206– 4210. [23] V . Holub, J. Fridrich, and T . Denemark, “Univ ersal distortion function for steganography in an arbitrary domain, ” EURASIP Journal on Information Security , vol. 2014, no. 1, p. 1, 2014. [24] V . Holub and J. Fridrich, “Designing steganographic distortion using directional filters, ” in 2012 IEEE International workshop on information forensics and security (WIFS) . IEEE, 2012, pp. 234–239. [25] J. Fridrich, “Steganographic algorithms. ” [Online]. A vailable: http://dde.binghamton.edu/download/ste go algorithms/ [26] V . Nair and G. E. Hinton, “Rectified linear units improv e restricted boltzmann machines, ” in Pr oceedings of the 27th international confer ence on machine learning (ICML-10) , 2010, pp. 807–814. [27] D. P . Kingma and J. Ba, “ Adam: A method for stochastic optimization, ” arXiv pr eprint arXiv:1412.6980 , 2014. [28] Z. W ang, A. C. Bovik, H. R. Sheikh, E. P . Simoncelli et al. , “Image quality assessment: from error visibility to structural similarity , ” IEEE transactions on image processing , vol. 13, no. 4, pp. 600–612, 2004. [29] Z. W ang and A. C. Bovik, “ A uni versal image quality index, ” IEEE signal pr ocessing letters , vol. 9, no. 3, pp. 81–84, 2002. [30] I. Daubechies, T en lectures on wavelets . Siam, 1992, v ol. 61. [31] S. G. Chang, B. Y u, and M. V etterli, “ Adaptive wav elet thresh- olding for image denoising and compression, ” IEEE transactions on image processing , vol. 9, no. 9, pp. 1532–1546, 2000. [32] P . W ayner, Disappearing cryptography: information hiding: ste ganography and watermarking . Morgan Kaufmann, 2009. [33] “Msfvenom. ” [Online]. A v ailable: https://www . offensi ve- security .com/metasploit- unleashed/msfvenom/ [34] A. Nagrani, J. S. Chung, and A. Zisserman, “V oxceleb: a large- scale speaker identification dataset, ” in INTERSPEECH , 2017. [35] S. Butterworth et al. , “On the theory of filter amplifiers, ” W ir eless Engineer , vol. 7, no. 6, pp. 536–541, 1930. [36] O. M. Essenwanger , “Elements of statistical analysis, ” 1986.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment