CIS-Net: A Novel CNN Model for Spatial Image Steganalysis via Cover Image Suppression

Image steganalysis is a special binary classification problem that aims to classify natural cover images and suspected stego images which are the results of embedding very weak secret message signals into covers. How to effectively suppress cover ima…

Authors: Songtao Wu, Sheng-hua Zhong, Yan Liu

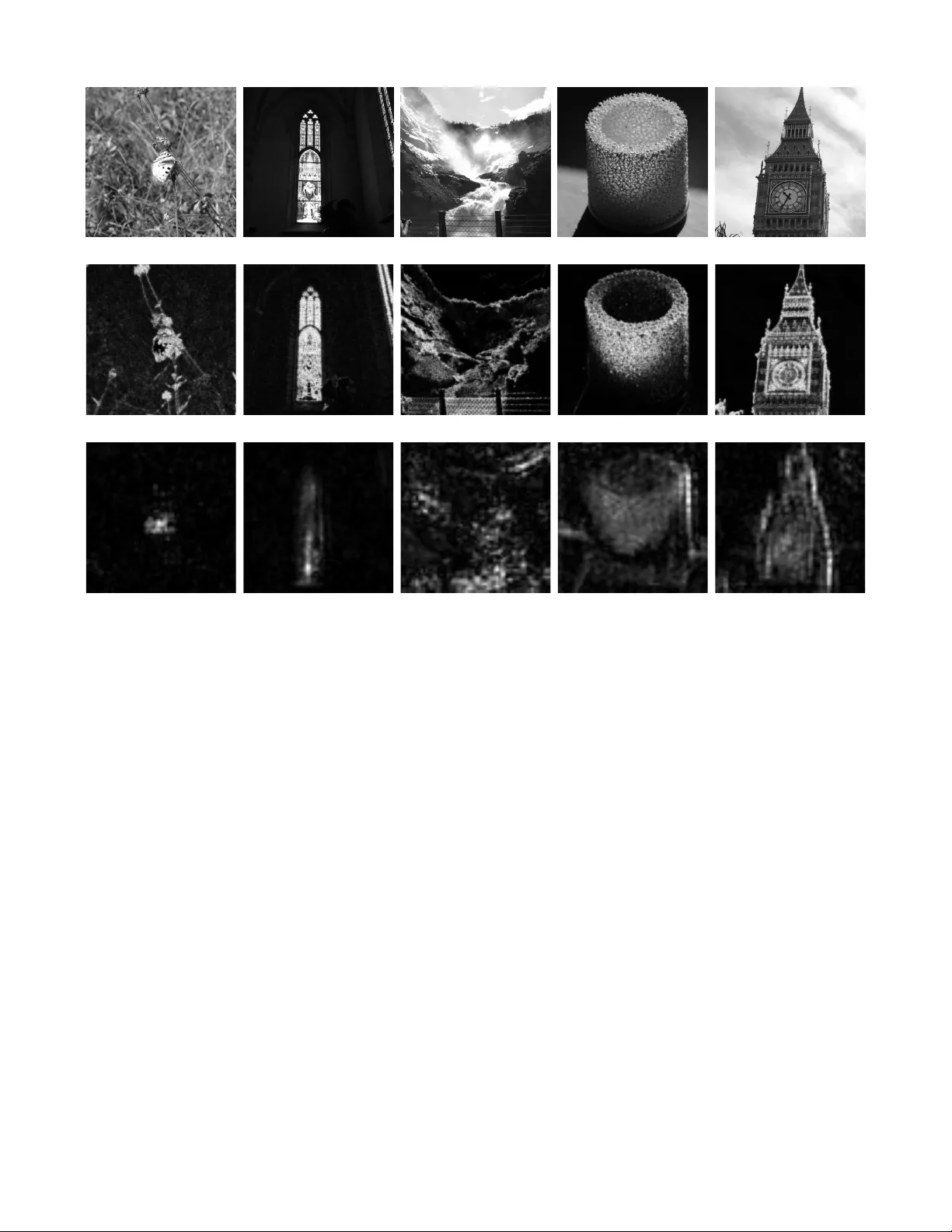

SUBMISSION TO IEEE TRANSA CTIONS ON XXX 1 CIS-Net: A No v el CNN Model for Spatial Image Ste ganalysis via Co v er Image Suppression Songtao W u, Sheng-hua Zhong*, Member , IEEE, Y an Liu, and Mengyuan Liu Abstract —Image steganalysis is a special binary classification problem that aims to classify natural cover images and suspected stego images which are the results of embedding very weak secret message signals into covers. How to effectively suppress cover im- age content and thus make the classification of cover images and stego images easier is the key of this task. Recent researches show that Con volutional Neural Networks (CNN) are very effective to detect steganography by learning discriminative features between cover images and their stegos. Several deep CNN models have been proposed via incor porating domain knowledge of image steganography/steganalysis into the design of the network and achieve state of the art performance on standard database. Follo wing such direction, we propose a novel model called Cover Image Suppr ession Network (CIS-Net), which impro ves the performance of spatial image steganalysis by suppressing cover image content as much as possible in model learning. T wo novel layers, the Single-value T runcation Layer (STL) and Sub-linear Pooling Layer (SPL), are proposed in this work. Specifically , STL truncates input values into a same threshold when they are out of a predefined interval. Theoretically , we ha ve prov ed that STL can reduce the variance of input feature map without deteriorating useful information. For SPL, it utilizes sub-linear power function to suppress large valued elements introduced by cover image contents and aggregates weak embedded signals via average pooling. Extensive experiments demonstrate the proposed network equipped with STL and SPL achieves better performance than rich model classifiers and existing CNN models on challenging steganographic algorithms. Index T erms —Steganalysis, steganography , conv olutional neu- ral network, cover image content suppression. I . I N T RO D U C T I O N As the dev elopment of social media, huge amount of digital images are uploaded to internet e very day . The proliferation of digital images on the internet provide intended users accessible media for criminal purpose easily . Image steganography , the science and art to hide secret messages into images by slightly modifying their pixels/coef ficients, is one of key methods for cov ert communication with digital images [1-8]. As a counterpart of image steganography , image steganalysis is the technique to reveal the presence of secret message in a digital image [9-14]. Because of its importance of information security , image steganalysis has been developed greatly in recent years [15-16]. Songtao W u and Sheng-hua Zhong are with the College of Computer Science and Software Engineering, Shenzhen University , Shenzhen, China. Email: {csstwu, csshzhong}@szu.edu.cn. Sheng-hua Zhong is the correspond- ing author of this paper . Y an Liu is with the Department of Computing, The Hong Kong Polytechnic Univ ersity , Hong K ong SAR, China. Email: csyliu@comp.polyu.edu.hk. Mengyuan Liu now is with the T encent Research, Shenzhen, China. Email: nkliuyifang@gmail.com. Steganalysis for natural images in spatial domain proves to be a difficult task. Modern steganographic algorithms [2- 8] embed secret messages into cover images by modifying each pixel of a cover image with a very small amplitude ( ± 1 ). Furthermore, the STC embedding scheme [17] enables steganographers to change pixels located in those complex, cluttered or noisy regions, which are difficult to be accurately modeled by statistical methods. Previous researches [11,18] indicated that it is difficult to learn discriminative features to classify cover images and their stegos when they are fed into a binary classifier directly . Consequently , designing ef fectiv e features and learning methods that both preserv e the embedded message and suppress the cover image content are essential for image steganalysis. In [11], the author proposed to model the differences between adjacent pixels rather than original val- ues for feature extraction. This operation actually suppresses the cov er image content by removing their low frequency components and thus obtain a great detection improvement to LSB matching ste ganography . Extending this approach, Fridrich in [12-13] proposed the Spatial Rich Model (SRM) method that uses thirty linear and nonlinear high-pass filters to extract noise residuals from input covers and stegos. W ith paired training method, i.e. cover images and their stegos are input to the classifier simultaneously , SRM learns a subset of features most sensitiv e to message embedding for steganalysis. Same idea is also used in Projection-SRM (PSRM) [14], which utilizes many different random vectors to project cover images and their ste gos into low dimensions, in order to highlight hidden message and suppress cover image content as much as possible. Although many efforts have been put to design features for image steganalysis, it is still hard to detect steganography accurately and mo ve them into real applications [19]. The development on deep con volutional neural network has opened a new gate for image steganalysis. Recent progresses of CNN on image related tasks [20-24] have demonstrated its po werful ability to describe the distribution of natural images. This ability , howe ver , can be used to model statistical differences between natural cov er images and unnatural stego images. Additionally , CNN deep architecture with con volution, pooling and nonlinear mapping provides steganalyzers larger space to e xtract more ef fectiv e features than hand-crafted ones [25]. For these reasons, many CNN models hav e been proposed for image steganalysis in recent years. T an in [26] firstly proposed a stacked auto-encoder network to detect steganography . Results in their paper showed that a CNN model suitable for image recognition may not be applicable to image steganalysis directly . In [27], Qian et al. proposed a SUBMISSION TO IEEE TRANSACTIONS ON XXX 2 new neural network equipped with fix ed KV high-pass filtering layer and Gaussian action function to detect steganograph y . This is the first deep learning based steganalyzer that uses domain kno wledge of steganalysis, i.e. design special layers to suppress cov er image content. Along this direction, Xu [28] proposed a new CNN model which contains absolute value layer, batch normalization layer , T anH layer and 1 × 1 con volutional layer . The purpose of these designs is to make the network specialized to the image steganalysis task and prev ent overfitting. F ollowing the Xu-network, Li in [29] extended the model with diverse activ ation modules and made the network achiev e much better performance. W u et al. in [30-31] proposed to use residual connections in a steganalytic network and obtained low detection error rates when cover images and their stegos are trained and tested in pairs. Different from pre vious models that only use one fixed kernel to suppress cover content, Y e et al. in [32] utilized all thirty SRM high-pass filters in first layer of their network. Additionally , the author proposed a linear truncation layer and a module to incorporate the selection channel information into the design, obtaining significant performance improvement to classic SRM ste ganalyzer . Recently , W ang in [33] and Boroumand in [34] proposed two clean end-to-end architec- tures to detect steganography with residual learning. W ithout any pre-calculated con volutional layers, both networks can automatically learn out high-pass filters to suppress cover image content. In parallel with steg analysis in spatial domain, deep learning based ste ganalyzer for JPEG images is also de veloped in recent years. Based on Xu-network, Chen in [35] and Zeng in [36] replaced the KV kernel with JPEG-phase-aware filters, such as DCT basis patterns and 2D Gabor filters, for suppressing JPEG image content. In [37], Xu proposed a deep residual network with fixed DCT preprocessing filters for JPEG image steganalysis. Use a same end-to-end model to the spatial domain case, Boroumand [34] trained the network with JPEG images and achieved the state of the art performances on the BOSS database. In summary , either the model with predefined or automatically learned kernels, how to effecti vely suppress cov er image content without destroying the existence of em- bedded message is central for spatial and compressed domain steganalysis with deep neural networks. Although many efforts hav e been put to incorporate the domain kno wledge of steganalysis into the design of CNN model, how to effecti vely suppress cov er image content is not fully explored. Along this research direction, we propose two nov el layers, Single-value T runcation Layer (STL) and Sublin- ear Pooling Layer (SPL), for cov er image content reduction. For STL, it truncates input data into a predefined interval using a same threshold, which is different from a general truncation linear layer that rounds off a large/small value into two different (positiv e/negati ve) thresholds. Intuitively , STL can reduce the variance introduced by out-interval elements whose values are truncated with two different thresholds. The assumption is supported by mathematical analysis based on the distribution of natural image pixels. For SPL, it uses the sublinear power function, a function whose power factor is smaller than 1, for cover image content suppression. T o av oid destroying the embedded message, SPL aggregates the feature map with av erage pooling before sublinear suppression. By unifying STL and SPL in a single model, a novel neural network called Cover Image Suppression Network (CIS-Net) is proposed in this paper . Experiments on some challenging steganographic algorithms hav e demonstrated the superiority of CIS-Net over classic SRMs and existing CNN based ste- ganalyzers. Based on proposed network, we also explore the possibility that a well-learned CNN model is able to roughly estimate embedding probability map of giv en steganographic algorithm. The rest of the paper is organized as follows. In section II, we introduce the proposed network for image steganalysis in details. The proposed STL and SPL are described and analyzed in this section. In section III, we conduct sev eral experiments on standard database to demonstrate the effecti ve- ness of proposed network over existing hand-crafted methods and deep learning based method. In the same section, we use the Classification Activ ation Map (CAM) [38] technique to draw attentional maps learned by CIS-Net for different steganographic algorithms and compare them with ground truth message embedding probability maps. The paper is finally closed with the conclusion in section IV . I I . P RO P O S E D N E T W O R K In this section, we introduce the proposed CIS-Net model for image steganalysis. Firstly , the overall architecture of CIS- Net is described in details. Then, we introduce the proposed single-value truncation and sublinear pooling, and explain their rationality for image steganalysis based on theoretical analysis and experiments. A. Overall Arc hitectur e As illustrated in Fig.1, the proposed network contains a preprocessing block, a feature fusion block, two T ype-1 blocks and two T ype-2 blocks. These building blocks are described in details as follows: Prepr ocessing block : The block contains several 5 × 5 High Pass Filters (HPF) and a STL to preprocess input images. It is noted that image steganalysis is to classify cov er images and stego images which are results of adding cov er images with very weak high frequency message signal, thus to preprocess input images to make the classification easy is necessary . Specifically , we follow Y e-network’ s [32] design that use sev eral SRM high pass filters to remov e low frequency components and a truncation layer to further filter out those lar ge elements in the co ver image. Howe ver , there are two main differences between the proposed network and Y e-network. For the first, we refine SRM filters and only select twenty of them for high frequency components extraction. Among all thirty SRM filters, we find the 4-th order HPFs are not beneficial for the effecti veness of our model and they are discarded in the design. The selected high pass filters are shown in Fig.2. For the second, rather than use a traditional truncation method, we propose a new single- valued truncation layer to filter out large elements of cover images. The main advantage of the single-valued truncation is SUBMISSION TO IEEE TRANSACTIONS ON XXX 3 Fig. 1: The proposed CIS-Net model for image steganalysis. The whole architecture consists of a preprocessing block, a feature fusion block, two T ype-1 blocks and two T ype-2 blocks.The preprocessing block suppresses cover image content by extracting high frequency components and using a single-valued truncation layer . The feature fusion block combines different preprocessed information for the following processing. T wo T ype-1 and T ype-2 blocks learn discriminativ e features for image steganalysis through the suppression of cover image content and the aggregation of embedded message signal. that it can reduce the dynamic range of cov er image content compared to traditional truncation method, without destroying preserved information. Details about the method can be found in part B of this section. Featur e fusion block : The block bridges image preprocess- ing block and the follo wing feature learning blocks. Sev eral 3 × 3 con volutional layers in the block fuse different high frequency components extracted from the preprocessed block together and augment features into higher dimensions. Instead of using popular ReLU activ ation layer, we use a Parametric ReLU (PReLU) after the con volutional layer since it allows information in the negati ve region pass through the layer , which avoids information loss caused by the ReLU layer . T ype-1 block : Each block uses a unit containing a conv o- lutional layer , a ReLU activ ation layer and an average pooling layer to extract discriminativ e feature for image steganalysis. The design is motiv ated by VGG-net [39] which recursiv ely use 3 × 3 con volutional kernels and pooling layers in the network. In the block, there are no batch normalization layers since they may make training be unstable when the mean and variance are not accurately estimated [25]. T ype-2 block : Each block consists of a 3 × 3 con volutional layer , a ReLU activ ation layer and a sublinear pooling layer . W ith the help of the proposed sublinear pooling layer (details are introduced in part C of this section), the T ype-2 block learns discriminati ve features from input feature maps by simultaneously aggre gating embedded message signal and sup- pressing cover image content. In order to summarize message information across the whole stego image, we force that the (a) Second order and third order high pass filters (b) KB, KV filters and their variations Fig. 2: T wenty high pass kernels selected from SRM filters as the HPF layers in the proposed CIS-Net. second T ype-2 block to uses a large kernel sublinear pooling (kernel size is 64 × 64 ). In addition, the dilated con volution [40] is utilized in this block in order to extract long range correlation from input features. T o summarize, the proposed CIS-Net uses a series of methods to suppress cov er image content and preserve em- bedded message in the network design. All the number of con volutional kernels in the network are optimized based on the task. B. Single-valued T runcation T runcating data into predefined interval prov es to be useful either for traditional hand-crafted feature based steg analysis [11-13] or deep learning based steganalysis [25,32]. Compared with cov er image content, signal introduced by embedded message is usually low-amplitude. Thus, truncation can filter out cover image content whose elements are usually large am- plitude without destroying secret message greatly . In addition, SUBMISSION TO IEEE TRANSACTIONS ON XXX 4 truncation can reduce the dynamic range of input feature map, making the modeling of data’ s distribution easier [12]. In this subsection, we propose an effecti ve data truncation method for image steganalysis. The method is featured that it preserves the signal of embedded message while reduces the variance of truncated elements. 1) Motivation: in [12,32], truncation is defined as the following equation: T runc ( x ) = − T , x < − T x, − T ≤ x ≤ T T , x > T (1) where T is a predefined positi ve threshold. The T runc ( · ) function preserves elements in the interv al [ − T , T ] while maps all other elements into two different v alues (we call it bi-valued truncation in this paper): T for those larger than the predefined positiv e threshold and − T for those smaller than the negati ve threshold. Generally , elements of high pass filtered images are symmetrically distributed across zero [41], thus the mean of feature map is zero. Based on such conclusion and Eq.(1), we can write the v ariance of feature map after bi-valued truncation into three parts: σ 2 b = Z − T −∞ ( − T ) 2 p ( x ) dx + Z T − T x 2 p ( x ) dx + Z + ∞ T T 2 p ( x ) dx (2) where σ 2 b is the element variance after bi-valued truncation, p ( x ) denotes the probability distribution of the element x after high pass filtering. In Eq.(2), the first term and third term are introduced by two truncated thresholds, while the second term is introduced by preserved elements in [ − T , T ] . Nev ertheless, two truncated values T and − T do not provide any useful information for the classification of cover images and stego images, b ut they increase the v ariance of feature map by adding the first term and third term in Eq.(2). T o reduce the influence of such artificially introduced terms, we propose a nov el truncation method called single-valued truncation which is defined as: S T L ( x ) = T , | x | > T x, − T ≤ x ≤ T (3) The main difference between single-valued truncation and bi- valued truncation is that all elements out of the predefined interval [ − T , T ] are mapped to a same threshold T . The variance of feature map element after single-value truncation can be written as: σ 2 s = Z − T −∞ ( T − µ s ) 2 p ( x ) dx + Z T − T ( x − µ s ) 2 p ( x ) dx + Z + ∞ T ( T − µ s ) 2 p ( x ) dx (4) where σ 2 s and µ s represent the element variance and mean after single-v alued truncation respecti vely . F or a symmetrically distributed function p ( x ) , it can easily validate that µ s is a positiv e value which is smaller than T . Intuitively , we can conclude that the first term and third term in Eq.(4) are decreased compared to two terms in Eq.(2). In the following part, we giv e a strict proof that σ 2 s is always smaller than σ 2 b for natural images processed by high-pass filters. 2−st order 3−nd order KB filter KV filter 0 1 2 3 3.5 4 High pass filter type Standard deviation Bi−valued truncation Single−value truncation (a) Standard deviations 0 10 20 30 40 50 60 70 80 0.2 0.3 0.4 0.5 0.6 0.7 Epoch number Loss Bi−valued truncation Single−valued truncation (b) Training loss curves Fig. 3: Standard deviation and training loss curves. The stan- dard deviation is calculated by 100 randomly selected cover image which are processed by two truncation methods. For training loss curves, we use the proposed architecture except for the different truncation layers to detect S-UNIW ARD steganography at 0.4 bpp. Both bi-value truncation and single- valued truncation use the same threshold, i.e. T = 5 . 2) Theoretic Analysis: In the formulation, we follow the conclusion of previous researches that each pixel in natural images processed by mean-0 highpass filters follows the “generalized Laplace distribution” [41-42]: p ( x ) = 1 Z e − | x s | α (5) where α and s are two parameters of the distribution, Z is the normalization constant to make the integral of p ( x ) be 1. For bi-valued truncation, its variance σ 2 b of Eq.(2) can be written as the following formula based on the above distribution: σ 2 b = 2 Z ∞ T T 2 Z e − | x s | α dx + Z T − T x 2 Z e − | x s | α dx (6) Based on the symmetrical property of Eq.(5), the mean value of feature map element µ s for single-v alued truncation can be obtained: µ s = Z − T −∞ T Z e − | x s | α dx + Z T − T x Z e − | x s | α dx + Z ∞ T T Z e − | x s | α dx = 2 Z ∞ T T Z e − | x s | α dx (7) and the variance of Eq.(4) is calculated as: σ 2 s = 2 Z ∞ T ( T − µ s ) 2 Z e − | x s | α dx + Z T − T ( x − µ s ) 2 Z e − | x s | α dx (8) After some mathematical operations which is illustrated in Appendix, the variance σ 2 s can be rewritten as: σ 2 s = Z T − T x 2 Z e − | x s | α dx + 2 Z ∞ T T 2 Z e − | x s | α dx − µ 2 s (9) Based on Eq.(6) and Eq.(9), the difference between σ 2 s and σ 2 b is: σ 2 s − σ 2 b = − µ 2 s < 0 (10) Eq.(10) indicates that, for any positive threshold ( T > 0 ), the variance of element in the feature map after the single-value truncation is al ways smaller than the v ariance after the bi-v alue SUBMISSION TO IEEE TRANSACTIONS ON XXX 5 truncation. The result demonstrates that the proposed single- valued truncation reduces the variance of traditional bi-valued truncation without deteriorating those preserved elements in the interval [ − T , T ] . Except for the theoretic analysis, the bi-valued truncation and single-valued truncation are compared on real images and steganographic algorithms. W e randomly select 100 cov er im- ages from BOSSbase 1.01 and use two truncation methods to process them. In addition, the proposed model with bi-valued truncation and single-v alued truncation are learned to detect Spatial UNIversal W A velet Relati ve Distortion (S-UNIW ARD) steganography [6] at payload 0.4 bpp. Fig.3(a) shows the single-valued truncation decreases standard deviations across all high pass filters, while Fig.3(b) demonstrate that the model with single-valued truncation con ver ges much faster than the model with bi-valued truncation. C. Sublinear P ooling For deep learning based image steganalysis, it is important to aggregate weak signal of embedded message. Previous re- searches [27-32] shows that av erage pooling is better than max pooling for image steganalysis since it can effecti vely merge embedded message signal in a local region and strengthen embedded message signal across the whole stego image. How- ev er , av erage pooling method only focuses on the aggregation of embedded message but do not take the suppression of cov er image content into account. T o ov ercome this limitation, we propose a novel pooling method called sublinear pooling which is depicted by Fig.4. The proposed sublinear pooling unifies both embedded message aggregation and cover con- tent suppression in one block. Mathematically , the sublinear pooling is defined as follows: S P L ( x ; γ 1 , γ 2 ) = f 2 ( av g _ pool ( f 1 ( x ; γ 1 )) ; γ 2 ) (11) where av g _ pool denote the average pooling, both f 1 and f 2 are element-wise sublinear power function f parameterized by a value γ : f ( x ; γ ) = | x | γ ◦ sg n ( x ) , 0 < γ ≤ 1 (12) where | · | , sg n ( · ) and ◦ represent the element-wise absolute value, sign function and multiplication. The element-wise function used in sublinear pooling is motiv ated by the “gen- eralized α -pooling” which is proposed in [43]. This design is easy to be implemented in code and optimized by back- propagation algorithm. Additionally , power function satisfies the following inequality: | x | γ ≤ | x | f or | x | ≥ 1 , 0 < γ ≤ 1 (13) This property can be used for cover image suppression when the parameter is set accordingly . The main difference between the proposed pooling and av er- age pooling is that our method adds sub-linear po wer functions before (pre-sublinear) and after (post-sublinear) an ordinary av erage pooling layer . This change brings two adv antages for image steganalysis: • Sublinear pooling suppress cover image content adap- tiv ely . In the proposed pooling method, sublinear power Fig. 4: Schematic illustration of proposed sublinear pooling layer . The layer adds the element-wise power function before and after an a verage pooling layer . T o make the po wer function to be sublinear , γ 1 and γ 2 in the layer should be positive and smaller than 1. T ABLE I: Detection error rates of proposed model with av eraging pooling and sublinear pooling for training, testing and their differences on S-UNIW ARD steganography at 0.4 bpp. SPL ’ s parameters, γ 1 and γ 2 , are set to 0.9 and 0.9 according to the experimental results. Pooling method Training T esting Difference A verage pooling 6.72% 15.11% 8.39% Proposed SPL 10.76% 14.82% 4.06% functions decrease values of elements with large ampli- tudes. The larger the element’ s amplitude is, the more the sublinear function reduces the element. Since large valued elements are mainly generated by cover image, sub-linear pooling decreases their amplitudes and thus suppress cover image content; • Sublinear pooling aggregates embedded message signal effecti vely . In the middle of two sublinear power func- tions, average pooling in the proposed method merges message signals from input feature maps that cov er image contents are firstly suppressed by pre-sublinear . Then, the post-sublinear further reduces the cov er image con- tent in features maps whose embedded message signals are already augmented by the av eraging pooling. Such “suppression-aggregation-suppression” is more effecti ve than a single average pooling for image steganalysis. T o validate the ef fectiv eness of proposed pooling method, we compare training and testing detection error rates of the proposed architecture on S-UNIW ARD steganograph y at 0.4 bpp in two different cases. In the first case, both T ype-1 and T ype-2 blocks use av eraging pooling method in the whole architecture. In the second case, T ype-2 blocks use sublinear pooling in the model as Fig.1 sho ws. Results in T ABLE I demonstrate that sublinear pooling not only decrease the detection error rate but also decrease the performance gap between training set and testing set, indicating our new pooling method improve the model’ s generalization ability to detect steganagraphic algorithms. I I I . E X P E R I M E N T S In this section, we conduct extensiv e experiments to demon- strate the effecti veness of proposed CIS-network for image steganalysis. At first, we introduce implementation details of the proposed model, including parameter setting, optimization method and model training strategy . Secondly , we v alidate the proposed model on challenging steganographic algorithms SUBMISSION TO IEEE TRANSACTIONS ON XXX 6 1 3 5 7 9 11 Infinity 0.1 0.15 0.2 0.25 0.3 0.35 0.4 Truncation threshold Detection error rate Single−valued truncation Bi−valued truncation (a) Detection error rates at different truncation values 1.0 0.9 0.8 0.7 0.6 1.0 0.9 0.8 0.7 0.6 0.14 0.15 0.16 0.17 0.18 γ 1 γ 2 Detection error rate (b) Detection error rates at different configuration of ( γ 1 , γ 2 ) Fig. 5: Ablation study to proposed CIS-Net. (a) Detection error rates of CIS-Net with STL and BTL at different truncation thresholds. (b) Detection error rates of CIS-Net at different configuration of ( γ 1 , γ 2 ) . and compare it with the state of the art steganalytic methods. Thirdly , we use Class Acti vation Mapping (CAM) [38] to demonstrate that the proposed CIS-Net can extract the selec- tion channel information effecti vely ev en no such information is provided in the model learning phase. Finally , we conduct experiments to validate the ef fectiveness of proposed network when the database and steganographic algorithms used in training mismatch that used in testing. A. Stegano graphic algorithms and Database W e use the BOSSbase 1.01 database [44] which contains 10,000 uncompressed natural images with the size of 512 × 512 in all follo wing experiments. For performance ev aluation, the detection error rate P E [11-13] is utilized to measure the detection ability of steganalytic algorithms: P E = min P F A 1 2 ( P M D + P F A ) (14) where P M D and P F A represents the miss detection probabil- ity and the false alert probability respectively . Since image steganalysis is a detection problem, we also ev aluate the per- formance of different steganalytic methods with R OC curves on selected payloads. Our experiments are conducted on states of the art steganographic schemes. Three representatives of adapti ve steganographic algorithms, including the W av elet Obtained W eights steganography (WO W) [5], S-UNIW ARD [6], and the HIghpass Low-pass Lo w-pass steganography (HILL) [7] are adopted for performance ev aluation. For all steganographic algorithms, we use the MA TLAB version rather than the C++ implementation to avoid the problem as [45] that all images are embedded with a same key . B. Implementations W e implement our model using Pytorch platform and the source code can be found at this link. In the implementation, all the weights in the model except the last fully connected layer are initialized by He’ s “improved Xavie” [46] method: W ij ∼ N 0 , 2 o n (15) where N ( · , · ) denotes the Gaussian distribution, o n represents the number of output channels of the con volutional layer . For the fully connected layer, we utilize zero-mean Gaussian random variable to initialize the weights but the variance is set to 0.01. This setting is to av oid that the fully connected layer has a large variance when the “impro ved Xavie” is used for initialization (the variance is 1.0), which may make the model training unstable and hard to con ver ge. The Adam optimizer [47] is used to update the model’ s parameters in the learning phase. The mini-batch size is set to 16, which contains 8 cover images and their 8 corresponding stego images. In our model, all conv olutional layers including fully con- nected layer contain bias. Unlike general methods that just initialize biases with random values, we calculate the bias of each conv olutional layer based on input cover -stego pairs in the initialization stage. For the n -th con volutional layer , its bias b n is set by the following formula: b n = − 1 |S | X i ∈S E v ec c xi n + v ec c y i n (16) where c xi n and c y i n denote the i -th feature map of the n -th con volutional layer for the cover image x and stego image y . v ec ( · ) represents the vectorization operator and E ( · ) is the expectation. S is the set of cover/stego images for initializing the bias of conv olutional/fully-connected layers. Actually , this is a mean-only version of shared normalization proposed in [25]. The adv antage of such is that it can conceal the non- zero mean introduced by the STL and make all feature map elements distributed across zero, leading to fast con vergence. In our experiment, the size of S is set to 100, which contains 50 randomly selected cover images and their corresponding stegos from training set. SUBMISSION TO IEEE TRANSACTIONS ON XXX 7 T ABLE II: Performance comparisons between proposed network and SRM, maxSRM on WO W , S-UNIW ARD and HILL steganography at fiv e different payloads. The BOSSbase 1.01 dataset is used for validation. Steganography Detection algorithm 0.1 bpp 0.2 bpp 0.3 bpp 0.4 bpp 0.5 bpp WO W SRM + ensemble 40.26% 32.10% 25.53% 20.60% 16.83% maxSRMd2 + ensemble 29.97% 23.39% 18.86% 15.43% 13.06% The proposed network 29.08% 21.03% 15.96% 12.13% 9.30% S-UNIW ARD SRM + ensemble 40.24% 31.99% 25.71% 20.37% 16.40% maxSRMd2 + ensemble 36.60% 28.86% 23.60% 19.08% 15.51% The proposed network 35.28% 26.21% 19.64% 14.62% 10.73% HILL SRM + ensemble 43.64% 36.11% 29.96% 24.82% 20.55% maxSRMd2 + ensemble 37.71% 30.91% 25.73% 21.84% 18.14% The proposed network 36.82% 28.83% 22.67% 18.10% 14.78% 0.1 0.2 0.3 0.4 0.5 0.05 0.1 0.2 0.3 0.4 0.45 Payload: bpp Detection error rate SRM maxSRMd2 Proposed CIS−Net (a) WO W steganography 0.1 0.2 0.3 0.4 0.5 0.05 0.1 0.2 0.3 0.4 0.45 Payload: bpp Detection error rate SRM maxSRMd2 Proposed CIS−Net (b) S-UNIW ARD steganography 0.1 0.2 0.3 0.4 0.5 0.1 0.2 0.3 0.4 0.45 0.5 Payload: bpp Detection error rate SRM maxSRMd2 Proposed CIS−Net (c) HILL steganography Fig. 6: Detection error rates for SRM, maxSRM and the proposed network for three different steganographic algorithms at five different payloads. 0 0.2 0.4 0.6 0.8 1 0 0.2 0.4 0.6 0.8 1 False positive rate True positive rate Random guess Proposed network at payload 0.4 bpp Proposed network at payload 0.2 bpp SRM at payload 0.4 bpp SRM at payload 0.2 bpp maxSRM at payload 0.4 bpp maxSRM at payload 0.2 bpp (a) WO W steganography 0 0.2 0.4 0.6 0.8 1 0 0.2 0.4 0.6 0.8 1 False positive rate True positive rate Random guess Proposed network at payload 0.4 bpp Proposed network at payload 0.2 bpp SRM at payload 0.4 bpp SRM at payload 0.2 bpp maxSRM at payload 0.4 bpp maxSRM at payload 0.2 bpp (b) S-UNIW ARD steganography 0 0.2 0.4 0.6 0.8 1 0 0.2 0.4 0.6 0.8 1 False positive rate True positive rate Random guess Proposed network at payload 0.4 bpp Proposed network at payload 0.2 bpp SRM at payload 0.4 bpp SRM at payload 0.2 bpp maxSRM at payload 0.4 bpp maxSRM at payload 0.2 bpp (c) HILL steganography Fig. 7: R OC curves of SRM, maxSRM and the proposed network for three different steganographic algorithms at payloads 0.4 bpp and 0.2 bpp. Instead of using a same learning rate for all layers in the network, we utilize layer-wise learning rates in the training phase. Specifically , learning rates for the con volutional layer in feature fusion block, two T ype-1 blocks, two T ype-2 blocks and the fully-connected layer are set to 0.01, 0.001, 0.0001 and 0.0001 respecti vely . As the network is optimized for image steganalysis, feature maps of cover images and their stegos are more discrimnative in later layers than they are in initial layers. Therefore, the layer-wise learning rate strategy can make dif ferent layers in netw ork be optimized in a same speed. T o make the HPFs adapti ve to the network, we also let them be updated in the learning stage and set its learning rate to be a small value, i.e. 5 × 10 − 6 . During the training, all learning rates of our model decay with an exponential factor , 0.985: α i ( t ) = α i · 0 . 985 t (17) where t represents the epoch number, α i denotes the learning rate for con volutional/fully-connected layers, i.e. α i ∈ { 5 × 10 − 6 , 0 . 01 , 0 . 001 , 0 . 0001 , 0 . 0001 } . It is usually hard for deep learning based methods to directly learn discriminative features between cov er images and stego images when the payload is lo w [25,32]. Compared with steganography at high payloads, modifications introduced by steganographic embedding at low payloads are too weak and SUBMISSION TO IEEE TRANSACTIONS ON XXX 8 T ABLE III: Performance comparisons between proposed network and sev eral state of the arts CNN models on S-UNIW ARD and HILL at five dif ferent payloads. The BOSSbase 1.01 dataset is used for validation. Steganography CNN model 0.1 bpp 0.2 bpp 0.3 bpp 0.4 bpp 0.5 bpp S-UNIW ARD Xu-network 40.57% 33.33% 26.32% 19.88% 16.46% Y e-network 40.29% 33.51% 25.62% 22.64% 17.64% SN-network 35.21% 26.82% 20.71% 16.53% 12.71% ReST -Net (SRM) 35.85% 31.27% 23.56% 15.72% 13.83% The proposed network 35.28% 26.21% 19.64% 14.62% 10.73% HILL Xu-network 41.07% 33.25% 26.86% 21.31% 18.18% Y e-network 43.55% 34.65% 27.98% 23.08% 21.14% SN-network 36.86% 29.63% 23.60% 19.87% 16.29% ReST -Net (SRM) 38.77% 30.87% 24.84% 19.75% 16.53% The proposed network 36.82% 28.83% 22.67% 18.10% 14.78% almost all of them are located in regions with highly varied in- tensities. In this case, deep models are difficult to discriminate cov er images and their stegos since high frequency compo- nents of cover images may swamp the existence of embedded message, making image steganalysis more challenging. T o handle the difficulty , we use curriculum learning [48] to detect steganopgraphic algorithms at low payloads. Specifically , the CIS-Net trained for a lower payload steganalysis, e.g. 0.4 bpp, are refined on the network trained at a higher payload, e.g. 0.5 bpp. The advantage of curriculum learning is that attentional features learned by higher payloads can guide/regularize the search of locations modified by steganographic algorithms at low payloads, which make the task much easier . T o avoid training samples are reused for testing at different payloads, we force that cov er images and their stegos used for network training/testing at a lower payload are same to those used for network training/testing at a higher payload. C. Ablation Study This section conducts ablation study to the proposed net- work. W e examine the behavior of proposed CIS-Net at different configuration of STL and SPL. The S-UNIW ARD steganography at 0.4 bpp is used for performance v alidation in following experiments. T runcation threshold T in STL . In this subsection, we ev aluate the performance of our CIS-Net at dif ferent truncation thresholds T . T o demonstrate the effecti veness of proposed STL, the network equipped with Bi-valued Truncation Layer (BTL) is also compared in the experiment. Fig.5(a) shows detection error rates of the network with STL and BTL at seven different thresholds, i.e. T ∈ [1 , 3 , 5 , 7 , 11 , + ∞ ] , where + ∞ means that no truncation is applied in the model. Results in the figure indicate that the model with STL is systematically better than the model with BTL at different T , which demonstrates the effecti veness of proposed data truncation method for image steganalysis. For small T v alue, e.g. T = 1 , the detection error rate of the CIS-Net is very high because excessiv e truncation to feature map elements makes discriminative features between cover images and stego images lost. For large T values, e.g. T > 5 , the network’ s performance degrades due to negati ve influence caused by large elements in cover image content. Therefore, to set an appropriate truncation threshold T is important for CNN based image steganalysis. P ower factor ( γ 1 , γ 2 ) in SPL . This subsection studies how the power of sublinear function in a SPL affects the performance of CIS-Net for detecting steganograph y . Both γ 1 and γ 2 in proposed SPL are selected from a giv en set [0 . 6 , 0 . 7 , 0 . 8 , 0 . 9 , 1 . 0] . When both γ 1 and γ 2 are equal to 1.0, the SPL becomes a normal av erage pooling. In the experiment, two T ype-2 blocks use same setting of γ 1 and γ 2 . Fig.5(b) shows detection error rates when different configurations of γ 1 and γ 2 are set to the CIS-Net. From the figure, we can observe that detection error rates are high when γ 1 and γ 2 are lo w v alues. The reason is that lo w po wer factors not only reduce cover image content but also remove the embedded message, making classification of cover images and stego images difficult. The best performance ( 14 . 62% detection error rate) is obtained when two power factors are around 1.0, i.e. ( γ 1 , γ 2 ) = (1 . 0 , 0 . 9) . The result indicates that a slight sublinear suppression to feature map after average pooling is beneficial for image steganalysis. In the following experiments, we use this setting to train the model for different steganographic algorithms at fiv e payloads. D. P erformance Comparisons with Prior Arts In this section, we conduct experiments to demonstrate the effecti veness of proposed CIS-Net for image steganalysis. T wo kinds of methods, i.e. hand-crafted feature based methods and deep learning based methods, are compared in the experi- ment. For hand-crafted feature based methods, we compare performances of the proposed CIS-Net with the classic SRM steganalysis [11] and its selection channel version, the maxS- RMd2 steganalysis [12]. For deep learning based methods, four state of arts CNN models including Xu-Net [28], Y e- Net [32], Share Normalization Network (SN-Net) [25] and ReST -Net [29] are selected for performance comparison. For ReST -Net, we both compare the model with SRM high pass filers. Three steganographic algorithms, WO W , S-UNIW ARD and HILL, at five different payloads [0.1, 0.2, 0.3, 0.4, 0.5] are ev aluated. In the experiment, 5,000 cover images and their corresponding stego images are randomly selected to train the model, the rest 5,000 cover images and their stegos are used for testing. T o make results reliable, all reported detection SUBMISSION TO IEEE TRANSACTIONS ON XXX 9 (a) Cover images selected from BOSSbase 1.01 (b) Ground truth of embedding probability map (c) Attentional maps extracted from proposed CIS-Net Fig. 8: Comparisons of attentional maps and probability embedding maps on different images. (a) Fiv e selected cov er images from BOSSbase 1.01. (b) Ground truth probability embedding maps of selected co ver images on on S-UNIW ARD steg anography at 0.4 bpp. (c) Attentional maps of selected cover images extracted from CIS-Net based on CAM. error rates of the proposed model are results of the av erage performance of 5 times running. T ABLE II and Fig.6 sho w detection error rates of the SRM, maxSRMd2 and the proposed CIS-Net on three ste ganographic algorithms at fiv e payloads. Additionally , R OC curves of three methods at payload 0.4 bpp and 0.2 bpp are provided in Fig.7. Compared with the SRM ste ganalysis, our model ob- tains significant performance gain on different steganographic algorithms. Furthermore, the proposed CIS-Net outperforms the maxSRMd2 steganalysis even no information of selection channel is provided. An interesting phenomenon in T ABLE II is that, compared to SRM steganalysis, CIS-Net’ s perfor- mance gain ov er maxSRMd2 steganalysis decreases as the payload decreases. There are two main reasons for such phenomenon. One is that secret messages are embedded at complex/cluttered regions in a cover image when the payload is low . After highpass filtering, those regions in cov er images are statistically similar to stego images. The other is that the maxSRMd2 steg analysis only extracts features at positions that secret messages are exactly embedded since it is provided with embedding probability map. Howe ver , CIS-Net only has a rough estimation to embedding positions provided by the network trained at high payloads. Despite the traditional steganalytic methods, we also com- pare the proposed method with four deep CNN models on S-UNIW ARD and HILL steganography at fiv e payloads. For Xu-network and ReST network, we report their performances according to Li’ s paper [29]. For Y e-network, it is originally a CNN model optimized for 256 × 256 input images. Li in [29] has implemented a version of Y e-net which can detect 512 × 512 spatial images. Here, we use such result for performance comparison. For SN-Network, the performances in [25] are used for reporting. Recently , several research papers [34][51] used both BOSS [44] and BO WS [52] as the training set and obtain promising performance. Howe ver , these networks are only optimized for do wnsampled images ( 256 × 256 ) and use more training data in model learning. Such setting is greatly different from our case. Therefore, these networks are not compared in our experiments. Results in T ABLE III sho w that the proposed CIS-Net outperforms Xu-network, Y e-network, SN-Network and ReST -Net (SRM) on all configurations. Li in [29] boosted the performance of ReST -Net via en- sembling three networks (ReST -Net ensemble), in which each network is equipped with three different highpass filters, i.e. SRM, Gabor filters and the max-min nonlinear filters, for feature extraction. In our experiment, we also compare the proposed network with ReST -Net (ensemble) on S-UNIW ARD SUBMISSION TO IEEE TRANSACTIONS ON XXX 10 (a) WO W embedding map at 0.4 bpp (b) S-UNIW ARD embedding map at 0.4 bpp (c) HILL embedding map at 0.4 bpp (d) Attentional map of WO W at 0.4 bpp (e) Attentional map of S-UNIW ARD at 0.4 bpp (f) Attentional map of HILL at 0.4 bpp Fig. 9: Comparisons of probability embedding maps and attentional maps for different steganographic embedding methods. (a) and (d) are probability embedding maps and attentional maps of WO W steganography at 0.4 bpp; (b) and (e) are probability embedding maps and attentional maps of S-UNIW ARD steganography at 0.4 bpp; (c) and (f) are probability embedding maps and attentional maps of HILL steganography at 0.4 bpp. T ABLE IV: Detection error rates of proposed CIS-Net and ReST -Net (ensemble) on S-UNIW ARD and HILL at five different payloads. S-UNIW ARD 0.1 bpp 0.2 bpp 0.3 bpp 0.4 bpp 0.5 bpp ReST -Net (ensemble) 34.33% 28.65% 21.22% 14.56% 12.07% Proposed CIS-Net 35.28% 26.21% 19.64% 14.62% 10.73% HILL 0.1 bpp 0.2 bpp 0.3 bpp 0.4 bpp 0.5 bpp ReST -Net (ensemble) 37.62% 29.36% 23.26% 18.34% 15.46% Proposed CIS-Net 36.82% 28.83% 22.67% 18.10% 14.78% T ABLE V: Detection error rates of proposed model with and without augmentation on three steganographic algorithms at payload 0.4 bpp. Method WOW S-UNIW ARD HILL No augmentation 12.13% 14.62% 18.10% Augmentation 11.56% 13.95% 17.62% and HILL. T able IV sho ws that our CIS-Net outperforms ReST -Net (ensemble) in most of configurations e ven though it is augmented by model ensemble. Recent researches in deep learning sho w that data aug- mentation is important for the performance improv ement of various CNN models [49-50]. In this experiment, we use data augmentation method to decrease the detection error rates of CIS-Net for steganographic algorithms. Same to the setting in [25], we randomly split 10,000 BOSSbase samples into 5,000 training images and 5,000 testing images. For training images, we rotate them with 90 degree, 180 degree, and 270 degree along counter clockwise direction, which generates a ne w training set with 20,000 samples. Then, three steganographic algorithms embed secret messages into the augmented training set and the test set. The proposed network is trained on this new training set with 20,000 covers/ste gos and finally validated on the test set with 5,000 covers/ste gos. T o make experiment simple, we only demonstrate the performance of proposed CIS-Net at payload 0.4 bpp. Detection error rates in T ABLE V indicate that data augmentation can improve the performance of proposed CIS-Net on different algorithms. E. Attentional Map Extraction for CIS-Net In [38], Zhou et al. showed that an image classification CNN exposes implicit attention of the model on an image. SUBMISSION TO IEEE TRANSACTIONS ON XXX 11 Such ability of CNN models can be used to localize most discriminativ e regions contributing to image classification. For steganalytic CNN models, similar idea was also reported in [32] that the network can defeat the selection-channel-aware maxSRMd2 steganalytic algorithm, demonstrating that they are able to implicitly learn the distribution of selection channel for a specific embedding scheme. In this experiment, we aim to draw attentional features learned by the proposed CIS-Net for giv en images. For image steganalysis, such attentional feature is actually the estimation to the embedding probability map of the steganographic algorithm. The motiv ation is to understand whether a well trained CNN can indeed extract the embed- ding probability map implicitly even no such information is provided. Additionally , we also want to analyze the difference between the estimated embedding probability map and true embedding probability map, which may rev eal limitations of CNN models for image steganalysis. The Class Activ ation Mapping (CAM) [38] is an effecti ve method to extract attentional maps learned by a CNN model. Specifically , it computes a weighted sum of CNN’ s last feature maps as follows: M c ( x, y ) = X k w c k f k ( x, y ) (18) where f k ( x, y ) represents the feature map of unit k in the last con volutional layer at spatial location ( x, y ) , and w c k is the learned weight in the fully connect layer corresponding to class c for unit k . CAM highlights discriminativ e visual patterns for class c represented by f k ( x, y ) using the weight matrix w c k . F or adapti ve steganography , the discrimination between cover images and stego images mainly comes from noisy/cluttered regions in which secret messages are mostly embedded. Therefore, attentional feature map extracted by CAM for image steganalysis is an estimation to the embedding probability map. Follo wing the idea of CAM method, we compute a weighted sum of CIS-Net’ s feature maps of the global av erage pooling in second SPL to obtain attentional maps. T o make the size of attentional maps comparable to input images, we simply resize them from 64 × 64 to 512 × 512 with “imresize” in Matlab . In the experiment, we randomly select sev eral cov er images from BOSSbase 1.01 and also provide their ground truth embedding probability maps for S-UNIW ARD steganography at 0.4 bpp. Fig.(8) shows fiv e cover images, their ground truth embedding probability maps and the attentional maps calculated by CAM respectiv ely . From the figure, we can easily observed that attentional maps extracted by CAM are visually similar to ground truth embedding probability maps. The observation indicates that our proposed CIS-Net can implicitly estimate positions of embedded messages in case that no selected chan- nel information is provided. In addition, we also compare the differences between CAM attentional maps of three stegano- graphic algorithms in Fig.9. Compare to the S-UNIW ARD and HILL steganography , the attentional map of WO W is almost equal to zero at the region without message embedding. This demonstrates that CIS-Net optimized for WO W only extract discriminati ve features at message embedding regions, thus the detection error rate should be low . Howe ver , for the attentional map of HILL, it is still activ ated at no message em- bedding regions. These noisy acti vations are very harmful for discriminativ e features extraction between cover images and their stegos, thus make image steganalysis difficult. Therefore, the detection error rate should be high. Such analysis from extracted attentional map of three steganographic algorithms is consistent with results reported in T ABLE II, indicating that the quality of CAM attentional map is consistent with the performance of CNN model for steganographic algorithms. The reason why attentional maps of different steganographic algorithms demonstrate different visual qualities is that the embedding method of WO W and S-UNIW ARD make all secret messages be crowded in complex regions, while HILL use “spreading strategy” to make messages be distributed around complex regions. This strategy not only decreases the embedding intensity in a local region but also spreads secret messages into high frequency component of cover images. In this case, a CNN model is hard to classify cover images and their stegos since the embedding message signals and high frequency cov er image components are mixed together . I V . C O N C L U S I O N In this paper , we propose a novel CNN model called CIS- Net to detect adaptive steganography in spatial domain. T wo new layers, i.e. single-valued truncation layer and sublinear pooling layer , are designed to suppress cover image content. The single-valued truncation layer uses a same truncation threshold to reduce the variance introduced by the truncated data, while the sublinear pooling layer adaptively suppresses large elements of cover image content and aggregate weak embedded message signal with av erage pooling. Compared with pre vious data truncation and feature pooling, the pro- posed two layers can accelerate the learning and improve the generalization ability of the CNN model. Additionally , we use class activ ation map method to demonstrate that the proposed CIS-Net can learn the embedding probability map of steganographic algorithms when no selection channel information is provided. The result sho ws that CNN models hav e the ability to estimate the message embedding positions implicitly . In future works, we would extend our methods to compressed domain images. V . A P P E N D I X In this appendix, we prove that the proposed STL can reduce the variance of traditional data truncation method. For Eq.(4), we expand it as following equations: σ 2 s = 2 Z ∞ T ( T − µ s ) 2 Z e − | x s | α dx + Z T − T ( x − µ s ) 2 Z e − | x s | α dx = 2 Z ∞ T T 2 Z e − | x s | α dx + Z T − T x 2 Z e − | x s | α dx + Z T − T µ 2 s Z e − | x s | α dx + 2 Z ∞ T µ 2 s Z e − | x s | α dx − 2 µ s Z T − T x Z e − | x s | α dx − 4 µ s Z ∞ T T Z e − | x s | α dx (19) SUBMISSION TO IEEE TRANSACTIONS ON XXX 12 for the third line of Eq.(19), it can be written as: Z T − T µ 2 s Z e − | x s | α dx + 2 Z ∞ T µ 2 s Z e − | x s | α dx = Z − T −∞ µ 2 s Z e − | x s | α dx + Z T − T µ 2 s Z e − | x s | α dx + Z ∞ T µ 2 s Z e − | x s | α dx = µ 2 s Z ∞ −∞ 1 Z e − | x s | α dx = µ 2 s (20) since p ( x ) is a symmetric function, the following integral is equal to zero: 2 µ s Z T − T x Z e − | x s | α dx = 0 (21) based on Eq.(7), we obtain: 4 µ s Z ∞ T T Z e − | x s | α dx = 2 µ s · 2 Z ∞ T T Z e − | x s | α dx = 2 µ 2 s (22) Combining Eq.(20), Eq.(21) and Eq.(22), σ 2 s can be written as Eq.(9). R E F E R E N C E S [1] J. Mielikainen, “LSB matching revisited, ” IEEE Signal Pr ocessing Let- ters , 13(5):285-287, 2006. [2] X. Zhang and S. W ang, “Efficient steganographic embedding by exploit- ing modification direction, ”, IEEE Communications Letters , 10(11):781- 783, 2006. [3] W . Luo, F . Huang, and Jiwu Huang, “Edge adaptive image steganography based on LSB matching revisited, ” IEEE T ransactions on Information F or ensics and Security , 5(2):201-214, 2010. [4] T . Filler and J. Fridrich, “Gibbs construction in steganography , ” IEEE T ransactions on Information F orensics and Security , 5(4):705-720,2010. [5] V . Holub and J. Fridrich, “Designing steganographic distortion using directional filters, ” IEEE W orkshop on Information F orensic and Security , 2012. [6] V . Holub, J. Fridrich, and T . Denemark, “Universal distortion function for steganography in an arbitrary domain, ” EURASIP Journal on Information Security , 1(1):1-13, 2014. [7] B. Li, M. W ang, J. Huang, and X. Li, “ A new cost function for spatial image steganography , ” IEEE International Conference on Image Pr ocessing , pp.4206-4210, 2014. [8] T . Denemark and J. Fridrich, “Improving steganographic security by synchronizing the selection channel, ” Proceedings of 3rd ACM W orkshop on Information Hiding and Multimedia Security , 2015. [9] S. L yu and H. Farid, “Detecting hidden messages using higher-order statistics and support vector machines, ” International W orkshop on In- formation Hiding , 2002. [10] J. Fridrich, ”Feature-based steganalysis for JPEG images and its im- plications for future design of steganographic schemes, ” International W orkshop on Information Hiding , pp.67-81, 2004. [11] T . Pevny , P . Bas, and J. Fridrich, ”Steganalysis by subtractiv e pixel adjacency matrix, ” IEEE T ransactions on Information F or ensics and Security , 5(2):215-224, 2010. [12] J. Fridrich and J. K odovsky , “Rich models for steganalysis of digital images, ” IEEE T ransactions on Information F or ensics and Security , 7(3):868-882, 2012. [13] T . Denemark, V . Sedighi, V . Holub, R. Cogranne, and J. Fridrich, “Selection-channel-aware rich model for steganalysis of digital images, ” IEEE W orkshop on Information F or ensic and Security , 2014. [14] V . Holub and J. Fridrich, “Random projections of residuals for digital image steganalysis, ” IEEE Tr ansactions on Information F orensics and Security , 8(12):1996-2006, 2013. [15] H. Y in, W . Hui, H. Li, C. Lin, and W . Zhu, “ A novel large-scale digital forensics service platform for internet videos, ” IEEE T ransactions on Multimedia , 14(1):178-186, 2012. [16] H. Zhou, K. Chen, W . Zhang, C. Qin, and N. Y u, “Feature-preserving tensor voting model for mesh steganalysis, ” IEEE T ransactions on V i- sualization and Computer Graphics , DOI:10.1109/TVCG.2019.2929041, 2019. [17] T . Filler , J. Judas, and J. Fridrich, “Minimizing additiv e distortion in steganography using syndrome-trellis codes, ” IEEE T ransactions on Information F or ensics and Security , 6(3):920-935, 2011. [18] T . Pevny and J. Fridrich, “Merging Markov and DCT features for multi- class JPEG steganalysis, ”, Proceedings of SPIE Electr onic Imaging , 2007. [19] A. D. Ker , P . Bas, R. Bohme, R. Cogranne, S. Craver , T . Filler , J. Fridrich, and T . Pevny , “Moving steganography and steganalysis from the laboratory into the real world, ” Pr oceedings of the first ACM workshop on Information Hiding and Multimedia Security , pp.45-58, 2013. [20] K. He, X. Zhang, S. Ren, and J. Sun, “Deep residual learning for image recognition, ” IEEE Conference on Computer V ision and P attern Recognition , 2016. [21] I, Goodfellow , J. Pouget-Abadie, M. Mirza, B. Xu, D. W arde-Farley , S. Ozair , A. Courville, and Y . Bengio, “Generati ve adversarial nets, ” Advances in Neural Pr ocessing Systems , pp.2672-2680, 2014. [22] C. Dong, C. Loy , K. He, and X. T ang, “Image super-resolution using deep con volutional networks, ” IEEE T ransactions on P attern Analysis and Machine Intelligence , 38(2):295-307, 2015. [23] V . Badrinarayanan, A. Kendall, and R. Cipolla, “SegNet: A deep con- volutional encoder-decoder architecture for image segmentation, ” IEEE T ransactions on P attern Analysis and Machine Intelligence , 39(12):2481- 2495, 2017. [24] K. Zhang, W . Zuo, Y . Chen, D. Meng, and L. Zhang, “Be yond a gaussian denoiser: Residual learning of deep cnn for image denoising, ” IEEE T ransactions on Image Pr ocessing , 26(7):3142-3155, 2017. [25] S. Wu, S. Zhong, and Y . Liu, “ A novel con volutional neural network for image steganalysis with shared normalization, ” IEEE T ransactions on Multimedia , DOI: 10.1109/TMM.2019.2920605, 2019. [26] S. T an and B. Li, “Stacked conv olutional auto-encoders for steganalysis of digital images, ” Asia-P acific Signal and Information Pr ocessing Asso- ciation, 2014 Annual Summit and Conference (APSIP A) , 2014, pp.1-4. [27] Y . Qian, J. Dong, W . W ang, and T . T an, “Deep learning for steganalysis via conv olutional neural networks, ” SPIE Media W atermarking, Security , and F or ensics , vol. 9409, 2015. [28] G. Xu, H. Z. Wu, and Y . Q. Shi, “Structural design of conv olutional neu- ral networks for ste ganalysis, ” IEEE Signal Pr ocessing Letters , 23(5):708- 712, 2016. [29] B. Li, W . W ei, A. Ferreira, and S. T an, “ReST -Net: Diverse activation modules and parallel subnets-based CNN for spatial image steganalysis, ” IEEE Signal Pr ocessing Letters , 25(5):650-654, 2018. [30] S. W u, S. Zhong, and Y . Liu, “Deep residual learning for image steganalysis, ” Multimedia T ools and Applications , pp. 1-17, 2017. [31] S. W u, S. Zhong, and Y . Liu,“Residual conv olution network based steganalysis with adaptiv e content suppression, ” IEEE International Con- fer ence on Multimedia and Expo (ICME) , 2017. [32] J. Y e, J. Ni, and Y . Y i, “Deep learning hierarchical representations for image steganalysis, ” IEEE Tr ansactions on Information F orensics and Security , 12(11):2545-2557, 2017. [33] W . W ang, J. Dong, Y . Qian, and T . T an, “Deep steganalysis: End- to-end learning with supervisory information be yond class labels, ” arXiv:1806.10443v1 , 2018. [34] M. Boroumand, M. Chen, and J. Fridrich, “Deep residual network for steganalysis of digital images, ” IEEE T ransactions on Information F or ensics and Security , 14(5):1181-1193, 2018. [35] M. Chen, V . Sedighi, M. Boroumand, and J. Fridrich, “JPEG-phase- aware con volutional neural network for steganalysis of JPEG images, ” Pr oceedings of the 5th ACM W orkshop on Information Hiding and Multimedia Security , pp.75-84, 2017. [36] J. Zeng, S. T an, B. Li, and J. Huang, “Large-scale JPEG image steganalysis using hybrid deep-learning framework, ” IEEE Tr ansactions on Information F or ensics and Security , 13(5):1200-1214, 2017. [37] G. Xu, “Deep conv olutional neural network to detect J-UNIW ARD, ” Pr oceedings of the 5th ACM W orkshop on Information Hiding and Multimedia Security , pp.67-73, 2017. [38] B. Zhou, A. Khosla, A. Lapedriza, A. Oliv a, and A. T orralba, “Learn- ing deep features for discriminative localization, ” IEEE Conference on Computer V ision and P attern Recognition , 2016. [39] K. Simonyan and A. Zisserman, “V ery deep conv olutional networks for large-scale image recognition, ” International Conference on Learning Repr esentation , 2015. [40] F . Y u and V . Koltun, “Multi-scale context aggregation by dilated con- volutions,“ International Conference on Learning Repr esentation , 2016. [41] J. Huang, “Statistics of natural images and models, ” PhD Thesis, Brown University , 2000. [42] A. Srivasta va, A. B. Lee, E. P . Simoncelli, and S-C. Zhu, “On adv ances in statistical modeling of natural images, ” Journal of Mathematical Imaging and V ision , 18(1):17-33, 2003. SUBMISSION TO IEEE TRANSACTIONS ON XXX 13 [43] M. Simon, Y . Gao, T . Darrell, J. Denzler, and E. Rodner, “Generalized orderless pooling performs implicit salient matching, ” IEEE International Confer ence on Computer V ision , 2017. [44] P . Bas, T . Filler , and T . Pevn y , “Break our steganographic system: the ins and outs of organizing BOSS, ” International W orkshop on Information Hiding , pp.59-70, 2011. [45] L. Pibre, J. Pasquet, J. Pasquet, D. Ienco, D. Ienco, and M. Chaumont, “Deep learning is a good steganalysis tool when embedding key is reused for different images, even if there is a cover sourcemismatch, ” Media W atermarking, Security , and F or ensics, P art of IS&T International Symposium on Electr onic Imaging , 2016. [46] K. He, X. Zhang, S. Ren, and J. Sun, “Delving deep into rectifiers: surpassing human-level performance on imageNet classification, ” IEEE International Confer ence on Computer V ision , 2015. [47] D. P . Kingma and J. L. Ba, “ Adam: A method for stochastic optimiza- tion, ” International Conference on Learning Repr esentation , 2015. [48] Y . Bengio, J. Louradour, R. Collobert, and J. W eston, “Curriculum learning, ” International Conference on Machine Learning , 2009. [49] L. Perez and J. W ang, “The effectiv eness of data augmentation in image classification using deep learning, ” , 2017. [50] Y . Xu, R. Jia, L. Mou, G. Li, Y . Chen, Y . Lu, and Z. Jin, “Improved relation classification by deep recurrent neural networks with data aug- mentation, ” , 2016. [51] R. Zhang, F . Zhu, J. Liu, G. Liu, “Depth-wise separable con volutions and multi-level pooling for an efficient spatial CNN-based steganaly- sis, ” IEEE Tr ansactions on Information F orensics and Security , DOI: 10.1109/TIFS.2019.2936913, 2019. [52] P . Bas and T . Furon. “Breaking Our W atermarking System (BO WS)”. A vailable: http://bows2.gipsa-lab .inpg.fr. 2007.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment