Scalable Bayesian Preference Learning for Crowds

We propose a scalable Bayesian preference learning method for jointly predicting the preferences of individuals as well as the consensus of a crowd from pairwise labels. People's opinions often differ greatly, making it difficult to predict their pre…

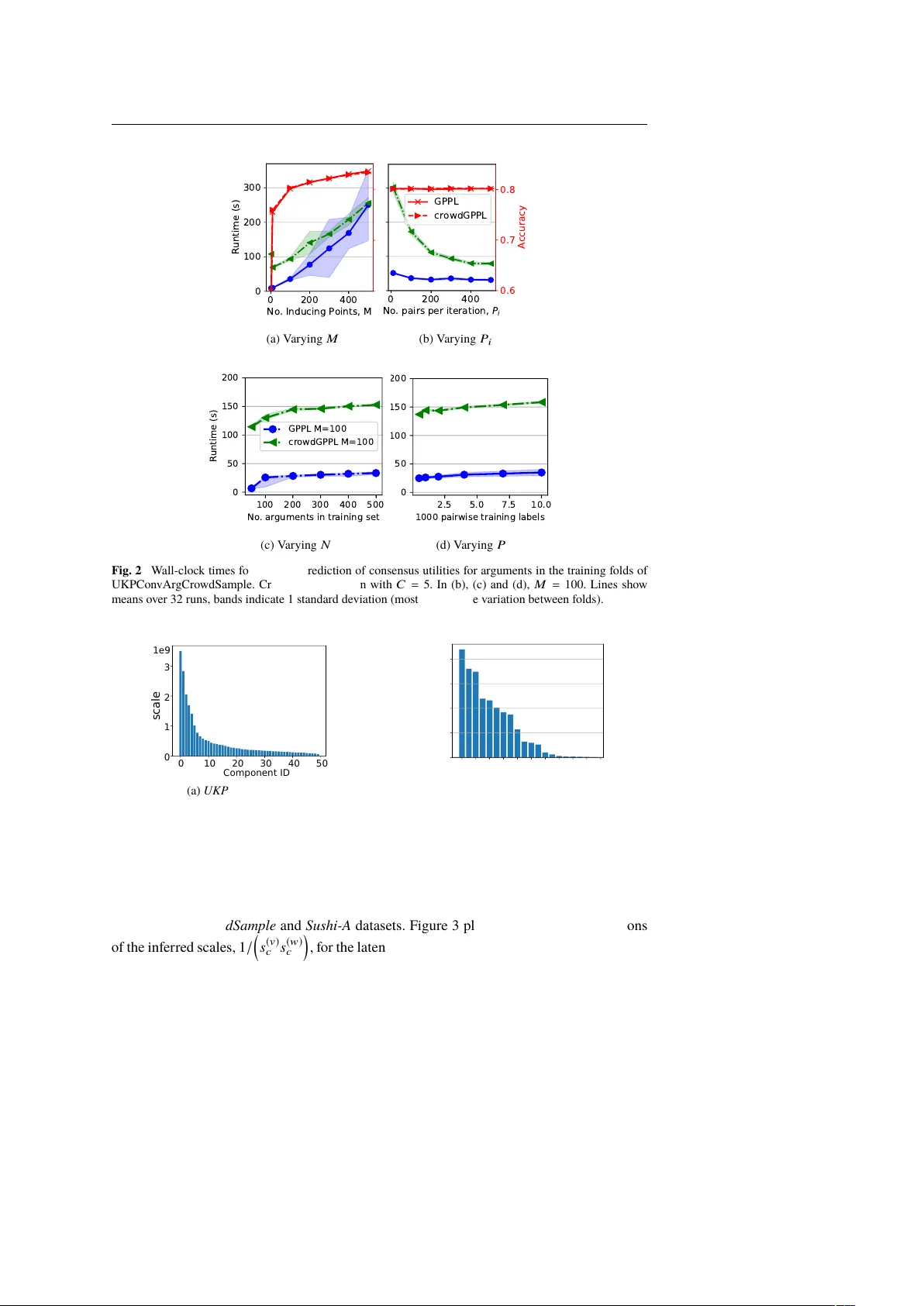

Authors: Edwin Simpson, Iryna Gurevych