Delay-Performance Tradeoffs in Causal Microphone Array Processing

In real-time listening enhancement applications, such as hearing aid signal processing, sounds must be processed with no more than a few milliseconds of delay to sound natural to the listener. Listening devices can achieve better performance with low…

Authors: Ryan M. Corey, Naoki Tsuda, Andrew C. Singer

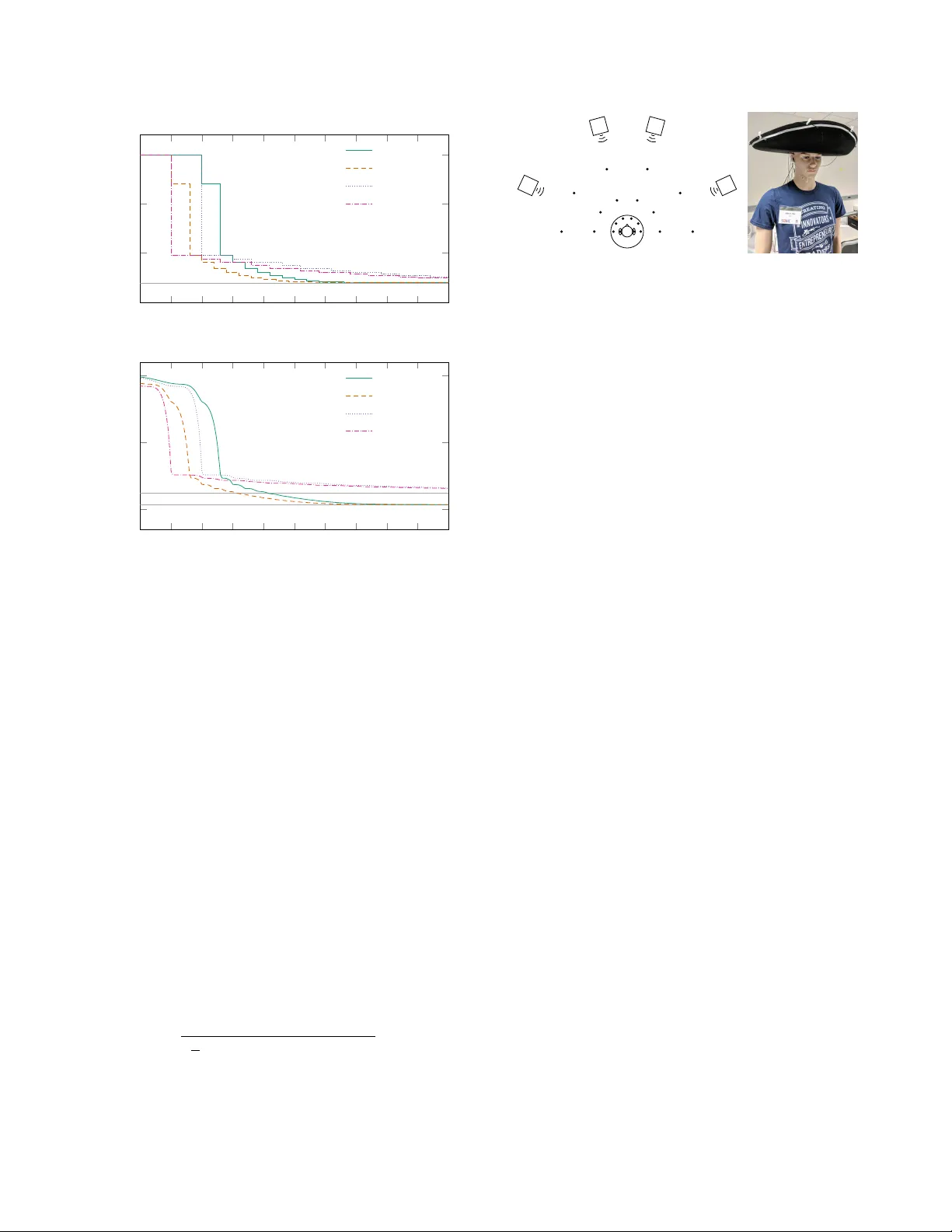

DELA Y -PERFORMANCE TRADEOFFS IN CA USAL MICR OPHONE ARRA Y PROCESSING Ryan M. Cor e y , Naoki Tsuda, and Andr ew C. Singer Uni versity of Illinois at Urbana-Champaign ABSTRA CT In real-time listening enhancement applications, such as hearing aid signal processing, sounds must be processed with no more than a few milliseconds of delay to sound natural to the listener . Listening devices can achiev e better performance with lower delay by using microphone arrays to filter acoustic signals in both space and time. Here, we analyze the tradeoff between delay and squared-error per- formance of causal multichannel W iener filters for microphone ar- ray noise reduction. W e compute exact expressions for the delay- error curves in two special cases and present experimental results from real-world microphone array recordings. W e find that delay- performance characteristics are determined by both the spatial and temporal correlation structures of the signals. Index T erms — Microphone arrays, audio enhancement, audio source separation, hearing aids, noise reduction, beamforming 1. INTR ODUCTION Listening enhancement applications, such as hearing aid process- ing [1] and audio augmented reality [2], differ from other audio en- hancement applications, like teleconferencing and speech recogni- tion, in part because of their strict delay constraints. Since users hear both li ve and processed signals simultaneously , these systems must process sound with no more than a few milliseconds of delay . Discerning listeners can notice delays as low as 3 ms and are dis- turbed by delays greater than 10 ms [3]. Listeners with hearing loss can tolerate greater delay , around 20 ms for closed-fitting hearing aids [4] and 6 ms for open-fitting hearing aids [5]. Delays longer than about 30 ms can impair the user’ s ability to speak [6]. This delay requirement limits the performance of audio enhance- ment systems. In single-channel systems, the frequency resolution of a frequency-selecti ve filter generally improves with longer delay . Modern single-microphone audio enhancement algorithms [7], such as those employing time-frequency masks [8] and non-negativ e ma- trix factorization [9], often process speech using short-time Fourier transform (STFT) frames of 60 ms or longer to maximize time- frequency sparsity [8]. These algorithms are ef fectiv e in many ap- plications, but their delay is too lar ge for listening enhancement. Multichannel audio enhancement systems use microphone ar- rays to spatially separate signals [10–12]. Many multichannel meth- ods are also applied in the STFT domain to more easily model re ver- beration [12, 13]. In principle, howe ver , spatial processing should require minimal delay: for example, a linear array can enhance a source at broadside with zero delay by simply summing its inputs. Whereas the frequency resolution of a temporal filter depends on its duration, the spatial resolution of an array is determined by its spa- tial extent. Multichannel listening systems can use both spatial and This material is based upon work supported by the National Science Foundation Graduate Research Fellowship Program under Grant Number DGE-1144245. spectral diversity to separate signals. It is natural to ask, therefore, whether devices with large arrays can enhance audio with lower de- lay than those with small arrays. That is, can we use arr ay pr ocess- ing to trade space for time? There is a large body of literature on array processing for lis- tening devices, e.g. [14, 15], and causal multichannel filters have been studied in the contexts of derev erberation [16–19] and noise and echo control [20]. In [21], the authors considered the minimum filter delay required to cov er the full aperture of an array . There ha ve also been several proposed low-delay single-microphone filtering and source separation techniques [22–24]. Ho wever , to the best of our kno wledge, there has been no prior study of delay-performance tradeoffs in array processing. Here we approach audio enhancement as a stationary linear es- timation problem: given an observed signal from the infinite past to time t , what is the linear minimum mean square error (MSE) esti- mate of a desired signal at time t − α ? Positi ve values of α corre- spond to delay and ne gative v alues to prediction. Such problems are well understood in the scalar case: for certain signals, we can use spectral factorization to compute exact expressions for the MSE as a function of α [25–27]. F or example, Figure 1 sho ws delay-error curves for separating sev eral spectrally distinct speechlike sounds, which will be described in Section 3. As α increases, the MSE decreases from the variance of the target signal to the MSE of a noncausal W iener filter . W e can apply similar theoretical tools in the multiv ariate case [28, 29] to analyze delay-performance tradeoffs for causal multichannel Wiener filters (CMWF) in terms of the spa- tial and temporal correlation structures of the source signals. In this work, we will deriv e a general expression for the MSE performance of a CMWF as a function of α , find exact expressions for idealized mixing models, and present experimental results from wearable and distributed microphone arrays in a real room. 2. DELA Y -CONSTRAINED MUL TICHANNEL FIL TERING Consider a mixture of N sources captured by M microphones. Let the sources s ( t ) = [ s 1 ( t ) , . . . , s N ( t )] T and additive noise z ( t ) = [ z 1 ( t ) , . . . , z M ( t )] T be wide-sense stationary continuous- time random processes that are uncorrelated with each other . Let a m,n ( t ) , m = 1 , . . . , M , n = 1 , . . . , N be known causal im- pulse responses and let w T α ( t ) = [ w α, 1 ( t ) , . . . , w α,M ( t )] be fil- ter impulse responses. Denote the observed signals by x ( t ) = [ x 1 ( t ) , . . . , x M ( t )] T and the system output by y α ( t ) , where x m ( t ) = N X n =1 ( a m,n ∗ s n )( t ) + z m ( t ) , m = 1 , . . . , M , and (1) y α ( t ) = M X m =1 ( w α,m ∗ x m ) ( t ) , (2) 978-1-5386-8151-0/18/$31.00 c 2018 IEEE − 30 − 20 − 10 0 10 20 30 − 20 − 15 − 10 − 5 0 ← Prediction Dela y → Delay α (ms) Relative error E rel ( α ) (dB) N = 1 N = 2 N = 4 Fig. 1 . Relativ e MSE as a function of delay for isolating one source from a mixture of N synthetic speechlike sounds (see Section 3) and uncorrelated noise using single-channel W iener filters. and ∗ denotes linear con volution. W e define the desired output signal d α ( t ) to be the first source as captured by the first microphone—for example, a target talker reproduced at the microphone nearest the listener’ s ear—and delayed by time α : d α ( t ) = ( a 11 ∗ s 1 ) ( t − α ) . (3) T o understand fundamental tradeoffs in performance, we re- strict our attention to the best-case scenario in which all signals are stationary in both space and time and hav e known statis- tics. Let A ( ω ) be the M × N frequency response matrix cor- responding to the a m,n ( t ) ’ s. Let r s ( t ) , r z ( t ) , r d ( t ) , and r x ( t ) be the autocorrelation sequences of the corresponding random variables and let R s ( ω ) , R z ( ω ) , R d ( ω ) = | A 1 , 1 ( ω ) | 2 R s 1 ( ω ) , and R x ( ω ) = A ( ω ) R s ( ω ) A H ( ω ) + R z ( ω ) be their respectiv e Fourier transforms. T o ensure that the CMWF is well defined, we assume that R x ( ω ) is positive definite for all ω of interest. Let r xd ( t ) be the cross-correlation of x ( t ) with d 0 ( t ) and let R xd ( ω ) = A 1 ( ω ) R s 1 ( ω ) A ∗ 1 , 1 ( ω ) be its Fourier transform, where A 1 ( ω ) is the column of A ( ω ) corresponding to the target source. Let W T α ( ω ) be the Fourier transform of w T α ( t ) . 2.1. Causal filter perf ormance The CMWF w T α ( t ) must satisfy the W iener-Hopf equation [25], r T xd ( t − α ) = Z ∞ 0 w T α ( u ) r x ( t − u ) d u, 0 < t < ∞ . (4) The MSE between y α ( t ) and d α ( t ) is E ( α ) = r d (0) − Z ∞ −∞ w T α ( t ) r xd ( t − α ) d t. (5) The noncausal ( α − → ∞ ) solution to (4) and its error power are readily expressed in the frequenc y domain: W T nc ( ω ) = R H xd ( ω ) R − 1 x ( ω ) (6) E nc = Z ∞ −∞ h R d ( ω ) − R H xd ( ω ) R − 1 x ( ω ) R xd ( ω ) i d ω 2 π . (7) For finite α , we can solve (4) by first decomposing R x ( ω ) into its spectral factors [28], R x ( ω ) = G ( ω ) G H ( ω ) , (8) where G ( ω ) and its in verse are both causal. W e proceed by decorre- lating x ( t ) using G − 1 ( ω ) and then solving (4) for the decorrelated signals [29] to find the causal filter W T α ( ω ) = h e − j ωα R H xd ( ω )( G H ( ω )) − 1 i + G − 1 ( ω ) , (9) where [ · ] + denotes the causal part of the argument, that is, time- domain truncation from t = 0 . Let ˜ R T ( ω ) = R H xd ( ω )( G H ( ω )) − 1 . For the listening enhancement application, this vector can be written ˜ R T ( ω ) = A 1 , 1 ( ω ) R s 1 ( ω ) A H 1 ( ω )( G H ( ω )) − 1 . (10) Let ˜ r T ( t ) be the in verse Fourier transform of ˜ R T ( ω ) . Substi- tuting w T α from (9) into (5), using the spectral factorization (8) and Parse val’ s identity , and rearranging terms [27], we can sho w that E ( α ) = E nc + Z − α −∞ ˜ r T ( t ) ˜ r ( t ) d t. (11) Thus, the err or penalty due to causality is the ener gy in ˜ r ( t ) for t < − α . Our goal is to understand ho w E ( α ) depends on the spatial and spectral characteristics of the source signals. While multiv ariate spectral factorizations are often difficult to compute in practice [30], we can find exact expressions for certain special cases that provide insight about the delay-constrained array processing problem. 2.2. Unif orm linear array First, consider a plane wave incident upon a uniform linear array of M sensors with the reference at one end. Let τ be the time dif ference of arriv al (TDOA) between adjacent microphones, let R s ( ω ) = 1 and let R z ( ω ) = σ 2 I , so that R H xd = 1 e + j ωτ · · · e + j ω ( M − 1) τ and (12) R x ( ω ) = σ 2 + 1 e + j ωτ · · · e + j ω ( M − 1) τ e − j ωτ σ 2 + 1 e + j ω ( M − 2) τ . . . . . . . . . e − j ω ( M − 1) τ e − j ω ( M − 2) τ · · · σ 2 + 1 . (13) A con venient spectral factor is the lo wer triangular matrix G ( ω ) = b 1 ( σ 2 + 1) 0 · · · 0 b 1 e − j ωτ b 2 ( σ 2 + 2) 0 . . . . . . . . . b 1 e − j ω ( M − 1) τ b 2 e − j ω ( M − 2) τ · · · b M ( σ 2 + M ) (14) where b m = p σ 2 / (( σ 2 + m )( σ 2 + m − 1)) . Applying (10) and tak- ing the in verse Fourier transform, we ha ve ˜ r T ( t ) = b 1 b 2 δ ( t + τ ) · · · b M δ ( t + ( M − 1) τ ) . (15) Finally , from (11), the MSE is E ( α ) = σ 2 σ 2 + M + M − 1 X m =0 b 2 m +1 u ( mτ − α ) (16) = σ 2 σ 2 + P M − 1 m =0 ¯ u ( α − mτ ) , (17) where u ( t ) = 1 if t > 0 and ¯ u ( t ) = 1 if t ≥ 0 . Thus, the error is reduced for each microphone that the source reaches within time α of reaching the reference. The delay-error curve is a piecewise constant function with steps of width | τ | and decreasing heights that depend on σ 2 . 2 − 2 − 1 0 1 2 3 4 5 6 7 8 − 15 − 10 − 5 0 E nc Delay α (ms) Error pow er E ( α ) (dB) (a) Uncorrelated sources Near/near F ar/far Near/far F ar/near − 2 − 1 0 1 2 3 4 5 6 7 8 − 20 − 10 0 Delay α (ms) Error pow er E ( α ) (dB) (b) Sp eec h-shap ed sources Near/near F ar/far Near/far F ar/near Fig. 2 . Delay-error curves for two plane wave sources and two sen- sors with | τ 1 | = 1 ms, | τ 2 | = 0.6 ms, and σ 2 = − 20 dB. The legend indicates the placement of the target/interference sources with re- spect to the reference. 2.3. T wo-source, two-micr ophone separation W e can follo w a similar procedure with multiple sources. Consider a scenario with two plane wa ve sources and tw o microphones. Let τ 1 and τ 2 6 = τ 1 be the TDOAs of the sources, let R s ( ω ) = I and let R z ( ω ) = σ 2 I with σ 2 > 0 , so that R H xd ( ω ) = 1 e + j ωτ 1 , and (18) R x ( ω ) = 2 + σ 2 e + j ωτ 1 + e + j ωτ 2 e − j ωτ 1 + e − j ωτ 2 2 + σ 2 . (19) The determinant of R x ( ω ) can be written det R x ( ω ) = γ − 1 1 − γ e − j ω ( τ 1 − τ 2 ) 2 , (20) where γ is a scalar that depends only on σ 2 . The spectral factor- ization of R x ( ω ) takes different forms depending on the signs of τ 1 and τ 2 , but ˜ R T ( ω ) always includes a term of the form (1 − γ e + j ω | τ 1 − τ 2 | ) − 1 , which results in an infinite-duration ˜ r T ( t ) . Ap- plying (11), we find that E ( α ) = ( E nc + u ( t 0 − α )+ c 2 1 γ u ( t 1 − | τ 1 − τ 2 |− α )+ c 2 2 f ( t 1 ) σ 2 +2 , if τ 1 τ 2 > 0 E nc + √ γ u ( t 0 − α ) + f ( t 0 − | τ 1 | ) + γ f ( t 1 ) , if τ 1 τ 2 ≤ 0 (21) where t 0 = min(0 , τ 1 ) , t 1 = max(0 , τ 1 , τ 2 , τ 1 − τ 2 ) , f ( t ) = γ 1+2 max(0 , b ( α − t ) / | τ 2 − τ 1 |c +1) / (1 − γ 2 ) , and (22) ? 60cm 120cm T arget source Fig. 3 . Left: Recording setup. Circles are microphones and squares are loudspeakers. Right: Hat-mounted microphone array . ( c 1 , c 2 ) = (0 , 0) , if | τ 1 | = | τ 2 | σ 2 + 1 , γ + γ σ 2 − 1 , if | τ 1 | < | τ 2 | 1 , σ 2 + 1 − γ , if | τ 1 | > | τ 2 | . (23) This delay-error curve is also piecewise constant, but has a geomet- ric “tail” that decays with a rate of roughly γ 2 / | τ 2 − τ 1 | . The height of the steps is determined by σ 2 and the width is determined by | τ 2 − τ 1 | , which depends on the distance between the sources. For large positi ve α , E ( α ) approaches E nc . Figure 2(a) shows E ( α ) for four combinations of source place- ment. The causality penalty takes a different form depending on the relativ e placement of sources and microphones. For example, if both the target and interference source are closer to microphone 1 than microphone 2 (near/near), then the second microphone does not contribute any information at α = 0 . If the sources are on opposite sides, then the dif ference in TDOAs, | τ 1 − τ 2 | is lar ger, and therefore E ( α ) decays more slowly . 2.4. T emporally correlated signals The expressions above were deri ved for uncorrelated source and noise processes. In many applications, howe ver , the signals of in- terest are correlated and can therefore be separated spectrally as well as spatially . It is difficult in general to predict the effects of signal correlation on the delay-error curv e. Ho wever , if the entries of R x ( ω ) share a common spectral factor—for example, if the sources are identically distributed and are recorded by identical micro- phones—then we can write R x ( ω ) = H ( ω ) ˆ G ( ω ) ˆ G H ( ω ) H ∗ ( ω ) and R H xd ( ω ) = H ( ω ) ˆ R H xd ( ω ) H ∗ ( ω ) , where H ( ω ) H ∗ ( ω ) is the scalar spectral factorization of the common factor . Then we hav e R H xd ( ω )( G H ( ω )) − 1 = H ( ω ) ˆ R H xd ( ω )( ˆ G H ( ω )) − 1 (24) ˜ r T ( t ) = ( h ∗ ˆ ˜ r T )( t ) . (25) Since h ( t ) is causal, it spreads the energy of ˜ r ( t ) forward in time. Figure 2(b) shows the same scenario as in the pre vious section, but with identically distributed speech-shaped sources. The error is lo wer overall, the steps are smoother , and the filter can begin to separate the signals ev en before they reach either microphone. 3. EXPERIMENTS T o ev aluate delay-performance tradeof fs in realistic conditions, we recorded audio mixtures using a wearable microphone array in a cocktail party scenario at the Augmented Listening Laboratory at the Univ ersity of Illinois at Urbana-Champaign, which has a rev erbera- tion time of around T 60 = 300 ms. The recording setup, shown in Figure 3, consisted of twenty omnidirectional lavalier microphones: 3 − 30 − 20 − 10 0 10 20 30 − 20 − 15 − 10 − 5 0 Delay α (ms) Relative error E rel ( α ) (dB) (a) Human sp eech Two ears Ears & hat (30 cm) Ears & stands (60 cm) Ears & stands (120 cm) − 30 − 20 − 10 0 10 20 30 − 20 − 15 − 10 − 5 0 Delay α (ms) Relative error E rel ( α ) (dB) (b) Synthetic speechlik e sounds Fig. 4 . Experimental delay-error results for isolating a single target source from a mixture of four sources. two at the left and right ears of a mannequin “listener , ” six along the perimiter of a hat with radius 30 cm, and twelve mounted on stands at 60 cm and 120 cm distances from the listener . The refer- ence microphone is that in the left ear . Source signals were produced by loudspeakers two meters away from the listener . The acoustic impulse responses between the loudspeakers and microphones were measured using linear sweeps. All data was sampled at 16 kHz. The signals were separated using the discrete-time, finite-length version of the CMWF . Let ¯ x [ k ] = x T [ k ] , . . . , x T [ k − L + 1] T and ¯ w T α = w T α [0] , . . . , w T α [ L − 1] be stacked vectors of the sam- pled multichannel signals and the finite impulse response filter co- efficients, respectively . Let y α [ k ] = ¯ w T α ¯ x [ k ] be the filter output sequence and let d α [ k ] be the desired output sequence. Let ¯ r x = E ¯ x [ n ] ¯ x T [ n ] and ¯ r xd ( α ) = E [ ¯ x [ n ] d α [ n ]] , where E [ · ] is expecta- tion. The linear minimum MSE filter coefficients are [10] ¯ w T α = ¯ r T xd ( α ) ¯ r − 1 x . (26) In our experiments, ¯ r x was computed using truncated impulse response measurements. W e applied diagonal loading compa- rable to the source power to account for modeling errors and ambient noise. W e used discrete-time filters with length L = 2048 samples (128 ms). For each experiment we report the sam- ple MSE relative to the source po wer , computed as E rel ( α ) = 10 log 10 P k ( y α [ k ] − d α [ k ]) 2 / P k d 2 α [ k ] . Figure 4(a) shows delay-error curves for four simultaneous talk- ers using arrays of up to eight microphones at varying distances. The speech signals were twenty-second clips taken from the VCTK dataset [31] and the filters were designed using a single approxi- mate long-term average speech autocorrelation. Because we model the signals as identically distributed, the filters must rely on spatial rather than spectral diversity to separate the sources. As the radius of the array increases, the curves mov e downward and to the left, indicating that the larger -aperture arrays can achieve similar perfor- mance with lower delay compared to the smaller-aperture arrays. In fact, since the source signals reach the microphone stands several milliseconds before the y reach the listener , the system could operate with negati ve delay . The two-channel filter performs poorly in this experiment be- cause it does not take advantage of the time-frequency sparsity of speech signals, which many speech enhancement algorithms exploit. T o account for the benefits of sparsity within the stationary esti- mation framew ork of this paper , we repeated the experiment with four stationary speechlike sounds generated using the V ocaloid mu- sic synthesis software. Each ten-second source signal represents a different v owel sung in a different ke y . Although the signals are de- terministic and periodic, the filters were designed based on 50 ms von Hann-windo wed autocorrelation sequences. Figure 1 shows the delay-error curves for single-channel mixtures of these sources and Figure 4(b) compares the separation performance of multichannel filters with different array sizes. Because the sources are approxi- mately disjoint in the frequency domain, a one- or two-channel filter can separate them effecti vely , but requires a delay to do so. The larger microphone arrays also benefit from longer delay , b ut perform better for small α . For example, the performance of the hat-mounted array with zero delay matches that of the binaural microphones with about 10 ms delay , which would be perceptible to man y listeners. 4. CONCLUSIONS The theoretical and experimental results presented here suggest that larger arrays can separate sound sources with lower delay and that the delay-performance tradeof f depends on both the spatial and tem- poral correlation structure of the observed signals. When micro- phones are located between the listener and sound sources, those sensors receiv e the signals before the listening device, shifting the delay-performance curve to the left. Arrays also provide spatial gain, which improves ov erall performance regardless of delay . When signals are spectrally distinct, a single-channel filter could separate them ef fectively given a long enough delay , b ut an array can achieve the same performance with little or no delay . Much remains to be understood about delay-constrained array processing. For example, equations (10) and (11) tell us little in general about the effects of rev erberation and signal spectra on de- lay . Furthermore, because many signals of interest are nonstationary , we must also consider time-varying causal array processing. Finally , to realize the benefits of spatial div ersity in delay-constrained listen- ing enhancement, listening devices must use larger microphone ar- rays than they do today . Lar ge wearable and distributed arrays could allow us to apply stronger noise reduction while meeting the strict delay constraints of real-time listening applications. 5. REFERENCES [1] J. M. Kates, Digital Hearing Aids . Plural Publishing, 2008. [2] V . V alimaki, A. Franck, J. Ramo, H. Gamper, and L. Savioja, “ Assisted listening using a headset: Enhancing audio percep- tion in real, augmented, and virtual en vironments, ” IEEE Sig- nal Pr ocessing Magazine , vol. 32, no. 2, pp. 92–99, 2015. [3] J. Agne w and J. M. Thornton, “Just noticeable and objection- 4 able group delays in digital hearing aids, ” J ournal of the Amer- ican Academy of Audiology , v ol. 11, no. 6, pp. 330–336, 2000. [4] M. A. Stone and B. C. Moore, “T olerable hearing aid delays. I. Estimation of limits imposed by the auditory path alone us- ing simulated hearing losses, ” Ear and Hearing , vol. 20, no. 3, pp. 182–192, 1999. [5] M. A. Stone, B. C. Moore, K. Meisenbacher, and R. P . Derleth, “T olerable hearing aid delays. V . Estimation of limits for open canal fittings, ” Ear and Hearing , vol. 29, no. 4, pp. 601–617, 2008. [6] M. A. Stone and B. C. Moore, “T olerable hearing aid delays. II. Estimation of limits imposed during speech production, ” Ear and Hearing , vol. 23, no. 4, pp. 325–338, 2002. [7] S. Makino, ed., A udio Source Separation . Springer, 2018. [8] O. Y ilmaz and S. Rickard, “Blind separation of speech mix- tures via time-frequency masking, ” IEEE T ransactions on Sig- nal Pr ocessing , vol. 52, no. 7, pp. 1830–1847, 2004. [9] A. Ozerov and C. F ´ evotte, “Multichannel nonnegativ e matrix factorization in con volutiv e mixtures for audio source sepa- ration, ” IEEE T ransactions on Audio, Speech, and Language Pr ocessing , vol. 18, no. 3, pp. 550–563, 2010. [10] J. Benesty , J. Chen, and Y . Huang, Micr ophone Array Signal Pr ocessing , vol. 1. Springer, 2008. [11] M. Brandstein and D. W ard, Micr ophone Arrays: Signal Pro- cessing T echniques and Applications . Springer, 2013. [12] S. Gannot, E. V incent, S. Marko vich-Golan, and A. Ozerov , “ A consolidated perspecti ve on multimicrophone speech enhance- ment and source separation, ” IEEE/A CM Tr ansactions on A u- dio, Speech, and Language Pr ocessing , v ol. 25, no. 4, pp. 692– 730, 2017. [13] M. S. Pedersen, J. Larsen, U. Kjems, and L. C. Parra, “Con vo- lutiv e blind source separation methods, ” in Springer Handbook of Speech Pr ocessing , pp. 1065–1094, Springer, 2008. [14] S. Doclo, W . Kellermann, S. Makino, and S. E. Nordholm, “Multichannel signal enhancement algorithms for assisted lis- tening devices, ” IEEE Signal Pr ocessing Magazine , vol. 32, no. 2, pp. 18–30, 2015. [15] S. Doclo, S. Gannot, M. Moonen, and A. Spriet, “ Acoustic beamforming for hearing aid applications, ” in Handbook on Array Pr ocessing and Sensor Networks (S. Haykin and K. R. Liu, eds.), pp. 269–302, W iley , 2008. [16] P . Naylor and N. D. Gaubitch, Speech Der everberation . Springer , 2010. [17] T . Nakatani, T . Y oshioka, K. Kinoshita, M. Miyoshi, and B.-H. Juang, “Blind speech derev erberation with multi-channel lin- ear prediction based on short time Fourier transform represen- tation, ” in IEEE International Confer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) , pp. 85–88, 2008. [18] B. Schwartz, S. Gannot, and E. Habets, “Online speech derev erberation using Kalman filter and EM algorithm, ” IEEE/A CM T ransactions on Audio, Speech and Language Pro- cessing , vol. 23, no. 2, pp. 394–406, 2015. [19] J. Benesty , J. Chen, Y . Huang, and J. Dmochowski, “On microphone-array beamforming from a MIMO acoustic signal processing perspectiv e, ” IEEE T ransactions on Audio, Speech, and Language Pr ocessing , vol. 15, no. 3, pp. 1053–1065, 2007. [20] E. H ¨ ansler and G. Schmidt, Acoustic Echo and Noise Control: A Practical Appr oach . W iley , 2005. [21] J. Chen, J. Benesty , and Y . Huang, “ A minimum distortion noise reduction algorithm with multiple microphones, ” IEEE T ransactions on Audio, Speech, and Language Processing , vol. 16, no. 3, pp. 481–493, 2008. [22] J. M. Kates and K. H. Arehart, “Multichannel dynamic-range compression using digital frequency warping, ” EURASIP Jour - nal on Applied Signal Pr ocessing , vol. 2005, pp. 3003–3014, 2005. [23] H. W . L ¨ ollmann and P . V ary , “Low delay noise reduction and derev erberation for hearing aids, ” EURASIP Journal on Ad- vances in Signal Pr ocessing , vol. 2009, no. 1, p. 437807, 2009. [24] T . Barker , T . V irtanen, and N. H. Pontoppidan, “Low-latenc y sound-source-separation using non-negati ve matrix factorisa- tion with coupled analysis and synthesis dictionaries, ” in IEEE International Confer ence on Acoustics, Speech and Signal Pr o- cessing (ICASSP) , pp. 241–245, 2015. [25] N. Wiener , Extrapolation, Interpolation, and Smoothing of Sta- tionary T ime Series . Wiley , 1949. [26] H. W . Bode and C. E. Shannon, “ A simplified deri vation of lin- ear least square smoothing and prediction theory , ” Pr oceedings of the IRE , vol. 38, no. 4, pp. 417–425, 1950. [27] H. L. V an Trees, Detection, Estimation, and Modulation The- ory , P art I . Wile y , 2004. [28] N. W iener and P . Masani, “The prediction theory of multiv ari- ate stochastic processes, II, ” Acta Mathematica , vol. 99, no. 1, pp. 93–137, 1958. [29] E. W ong and J. Thomas, “On the multidimensional prediction and filtering problem and the factorization of spectral matri- ces, ” Journal of the F ranklin Institute , vol. 272, no. 2, pp. 87– 99, 1961. [30] V . Kucera, “F actorization of rational spectral matrices: a sur - ve y of methods, ” in International Confer ence on Control , pp. 1074–1078, 1991. [31] C. V eaux, J. Y amagishi, and K. MacDonald, “CSTR VCTK corpus: English multi-speaker corpus for CSTR voice cloning toolkit, ” 2017. 5

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment