Deep Learning-Based Automatic Downbeat Tracking: A Brief Review

As an important form of multimedia, music is part of almost everyone's life. Automatically analyzing music is a significant step toward meeting people's need for effortless music retrieval and music recommendation. Among these tasks, downbeat tracking ha…

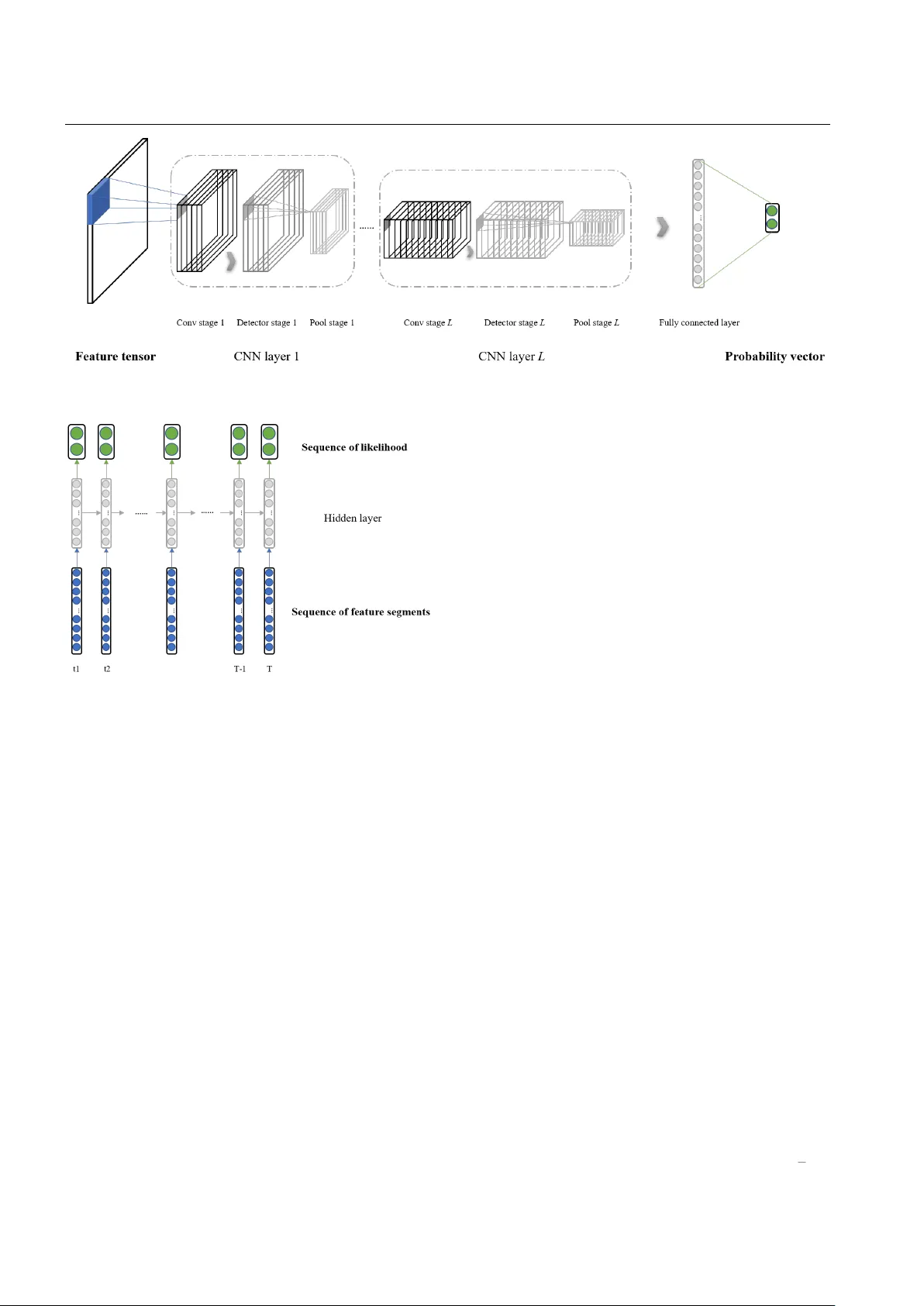

Authors: Bijue Jia, Jiancheng Lv, Dayiheng Liu