DCASE 2018 Challenge Surrey Cross-Task convolutional neural network baseline

The Detection and Classification of Acoustic Scenes and Events (DCASE) consists of five audio classification and sound event detection tasks: 1) Acoustic scene classification, 2) General-purpose audio tagging of Freesound, 3) Bird audio detection, 4)…

Authors: Qiuqiang Kong, Turab Iqbal, Yong Xu, Wenwu Wang, Mark D. Plumbley

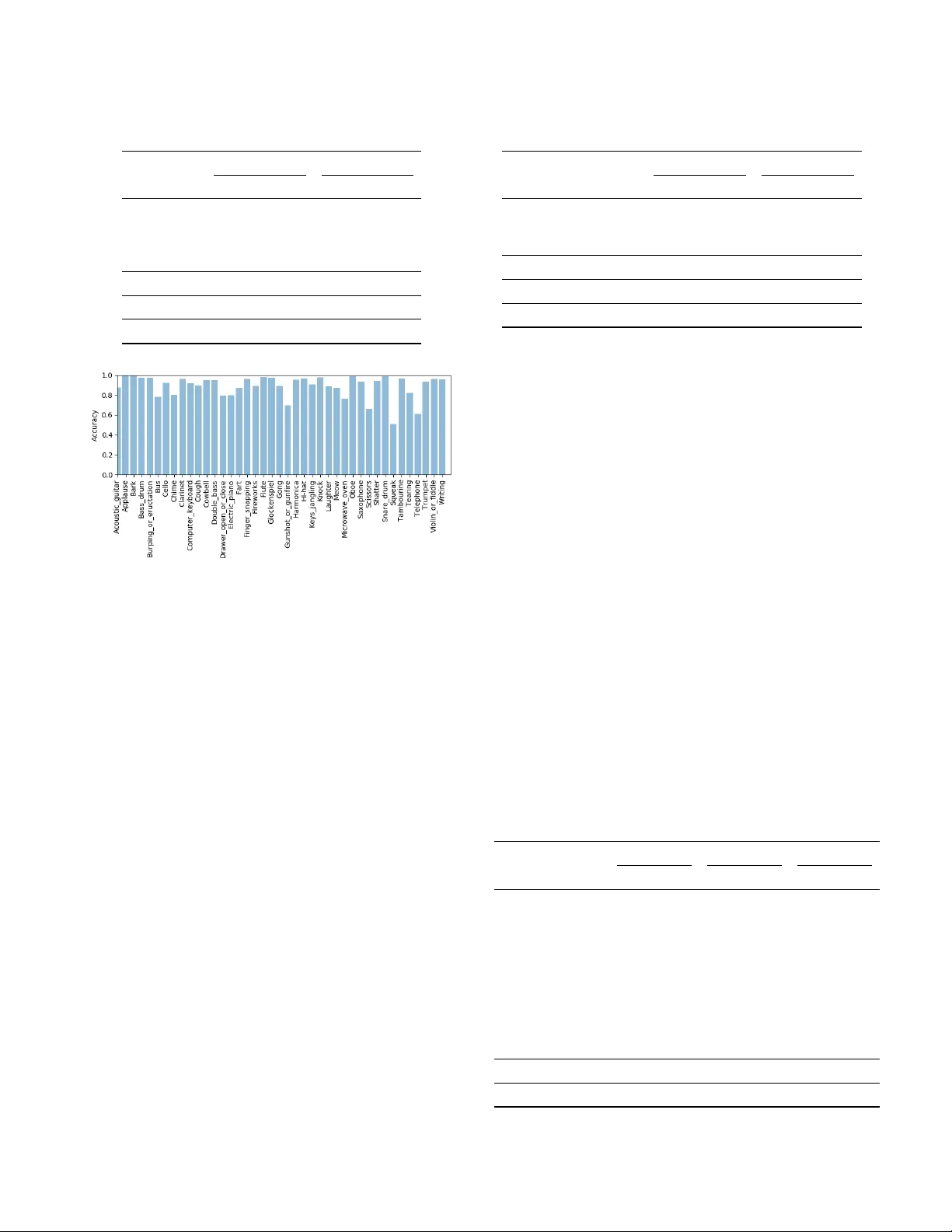

Detection and Classification of Acoustic Scenes and Events 2018, 19-20 November 2018, Surrey, UK

DCASE 2018 CHALLENGE SURREY CROSS-TASK CONVOLUTIONAL NEURAL NETWORK BASELINE

Qiuqiang Kong (1), Turab Iqbal (1), Yong Xu (2), Wenwu Wang (1), Mark D. Plumbley (1)

(1) Centre for Vision, Speech and Signal Processing (CVSSP), University of Surrey, {q.kong, t.iqbal, w.wang, m.plumbley}@surrey.ac.uk
(2) yong.xu.ustc@gmail.com

ABSTRACT

The Detection and Classification of Acoustic Scenes and Events (DCASE) 2018 challenge consists of five audio classification and sound event detection tasks: 1) Acoustic scene classification, 2) General-purpose audio tagging of Freesound, 3) Bird audio detection, 4) Weakly-labeled semi-supervised sound event detection and 5) Multi-channel audio classification. In this paper, we create a cross-task baseline system for all five tasks based on a convolutional neural network (CNN): a "CNN Baseline" system. We implemented CNNs with 4 layers and 8 layers, derived from AlexNet and VGG from computer vision. We investigated how the performance varies from task to task with the same configuration of neural networks. Experiments show that the deeper CNN with 8 layers performs better than the CNN with 4 layers on all tasks except Task 1. Using the CNN with 8 layers, we achieve an accuracy of 0.680 on Task 1, an accuracy of 0.895 and a mean average precision (MAP) of 0.928 on Task 2, an accuracy of 0.766 and an area under the curve (AUC) of 0.854 on Task 3, a sound event detection F1 score of 20.8% on Task 4, and an F1 score of 87.75% on Task 5. We released the Python source code of the baseline systems under the MIT license for further research.

Index Terms: DCASE 2018 challenge, convolutional neural networks, open source.

1. INTRODUCTION

The Detection and Classification of Acoustic Scenes and Events (DCASE) 2018 challenge (see footnote 1) is a well-known IEEE challenge consisting of several audio classification and sound event detection tasks.
The DCASE 2018 challenge consists of five tasks. In Task 1, acoustic scene classification (ASC) [1], the task is to recognize the scene in which a sound was recorded, such as "street" or "park". ASC has applications in enhancing speech recognition systems and sound event detection [2]. Task 1 includes a matching device ASC subtask and a mismatching device ASC subtask. In Task 2, general-purpose audio tagging of Freesound [3], the task is to classify an audio clip into a pre-defined class, such as "flute" or "applause". Task 2 has applications in recognizing a wide range of real-world sound events and is useful for information retrieval. In Task 3, bird audio detection [4], the task is to detect the presence or absence of birds in an audio clip. This could be used for automatic wildlife monitoring and audio library management. An important goal of Task 3 is to design a classification system that generalizes to new conditions. In Task 4, weakly labeled semi-supervised sound event detection (SED) [5], the task is to detect the onset and offset times of sound events when only weakly labeled audio and unlabeled audio are available for training. Task 4 can be used for monitoring public security and for abnormal sound detection. In Task 5, multi-channel audio classification [6], the task is to use multi-channel recordings to identify human activities at home.

Footnote 1: http://dcase.community/

The first DCASE challenge was the DCASE 2013 challenge [7], with only an audio classification task and a sound event detection task. The DCASE 2016 challenge [8] consisted of four tasks: 1) ASC, 2) SED in synthetic audio, 3) SED in real audio and 4) domestic audio tagging. The DCASE 2017 challenge [9] updated the domestic audio tagging task to a large-scale weakly labeled audio tagging task. The DCASE challenge series provides public datasets for investigating audio-related tasks.
One recent dataset used in DCASE challenges is AudioSet [10]. The Task 4 datasets of both the DCASE 2017 and 2018 challenges are subsets of AudioSet.

Convolutional neural networks (CNNs) have achieved state-of-the-art performance in image classification [11, 12]. In this paper, we investigate how different CNNs, namely a CNN with 4 layers derived from AlexNet [11] and a CNN with 8 layers derived from VGG [12], perform on Tasks 1 to 5 of DCASE 2018. We apply the same CNN configurations across all five tasks to compare the relative performance across tasks fairly. With the same CNN model, the performance on Tasks 1 to 5 varies, which indicates that the difficulty of the tasks varies. The experiments show that Task 4 (sound event detection) is more difficult than Task 1 (acoustic scene classification), which is more difficult than Task 3 (bird audio detection), which in turn is more difficult than Task 2 (general-purpose audio tagging of Freesound) and Task 5 (domestic multi-channel audio tagging).

We open source the Python code for all of Tasks 1 to 5 of the DCASE 2018 challenge under the MIT license. The source code contains the implementation of CNNs with 4 layers and 8 layers. Complementing the source code published by the organizers [1], we found that CNNs with more layers perform better on Tasks 2 to 5 of the DCASE 2018 challenge, but not on Task 1.

This paper is organized as follows. Section 2 introduces related work. Section 3 introduces CNNs. Section 4 shows experimental results. Section 5 concludes and outlines future work.

2. RELATED WORKS

Manually selected features such as mel-frequency cepstral coefficients (MFCCs) [13], the constant Q transform (CQT) [14], and I-vectors [15] have been used as audio features. Recently, mel spectrograms [16] have been widely used as features when neural networks serve as classifiers. Gaussian mixture models (GMMs) [17] and hidden Markov models (HMMs) [18] have been used to model audio scenes and sound events.
Non-negative matrix factorization (NMF) [19] learns a set of bases to represent the audio. Recently, deep neural networks have been introduced to audio classification and sound event detection. For example, fully-connected neural networks have been applied to the DCASE 2016 challenge [20] and the DCASE 2017 challenge [21]. CNNs have achieved state-of-the-art performance in audio classification and sound event detection [22, 16, 23]. Convolutional recurrent neural networks (CRNNs) [24, 25] have been used to model the temporal information of sound events. Attention neural networks have been proposed to focus on sound events [26] in weakly-labelled data [27]. Generative adversarial networks (GANs) have been applied to improve the robustness of audio classifiers [28].

Table 1: Configurations of CNN4 and CNN8.

| Feature map size | CNN4 | CNN8 |
|---|---|---|
| T × 64 | log mel spectrogram | log mel spectrogram |
| T/2 × 32 | 5 × 5, 64; 2 × 2 max pooling | (3 × 3, BN) × 2, 64; 2 × 2 max pooling |
| T/4 × 16 | 5 × 5, 128; 2 × 2 max pooling | (3 × 3, BN) × 2, 128; 2 × 2 max pooling |
| T/8 × 8 | 5 × 5, 256; 2 × 2 max pooling | (3 × 3, BN) × 2, 256; 2 × 2 max pooling |
| T/16 × 4 | 5 × 5, 512; 2 × 2 max pooling | (3 × 3, BN) × 2, 512; 2 × 2 max pooling |
| 1 × 1 | Global max pooling | Global max pooling |
| Number of classes | fc, sigmoid or softmax | fc, sigmoid or softmax |
| Parameters | 4,309,450 | 4,691,274 |

3. CONVOLUTIONAL NEURAL NETWORKS

CNNs such as AlexNet [11] and VGG [12] have achieved state-of-the-art performance in image classification [11, 12]. A CNN consists of several convolutional layers followed by fully-connected layers. Each convolutional layer consists of filters that are convolved with the output of the previous convolutional layer. The filters can capture local patterns in feature maps, such as edges in lower layers and complex profiles in higher layers [12].
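
To make the idea of a filter capturing a local pattern concrete, here is a minimal pure-Python sketch, added for illustration only. It slides a hand-picked 3 × 3 vertical-edge kernel over a tiny feature map. The helper name `convolve2d_valid` and the toy values are hypothetical, and, as is common in deep learning libraries, the operation is written as cross-correlation without kernel flipping.

```python
def convolve2d_valid(feature_map, kernel):
    """Slide `kernel` over `feature_map` with no padding (cross-correlation)."""
    kernel_h, kernel_w = len(kernel), len(kernel[0])
    out_h = len(feature_map) - kernel_h + 1
    out_w = len(feature_map[0]) - kernel_w + 1
    output = []
    for row in range(out_h):
        output_row = []
        for col in range(out_w):
            # Weighted sum of the window whose top-left corner is (row, col).
            response = sum(
                feature_map[row + i][col + j] * kernel[i][j]
                for i in range(kernel_h)
                for j in range(kernel_w)
            )
            output_row.append(response)
        output.append(output_row)
    return output


# Toy 4 x 6 feature map: low values on the left, high values on the right.
feature_map = [
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
]

# Hand-picked kernel that responds to a left-to-right increase in value.
vertical_edge_kernel = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

response_map = convolve2d_valid(feature_map, vertical_edge_kernel)
for row in response_map:
    print(row)
```

The response is non-zero only where the window straddles the edge. In a trained CNN the kernel values are learned from data rather than hand-picked.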
In this work, we adopt an AlexNet-style network with 4 layers and a VGG-style network with 8 layers as models, which we call CNN4 and CNN8. CNN4 consists of 4 convolutional layers, each with a filter size of 5 × 5 [11]. CNN8 consists of 8 convolutional layers, each with a filter size of 3 × 3 [12]. We apply batch normalization (BN) after each convolutional layer to stabilize training [29], followed by a rectifier (ReLU) nonlinearity. We then apply a global max pooling (GMP) operation to the feature maps of the last convolutional layer [16] to summarize the feature maps into a vector. GMP takes the maximum over the time and frequency axes, so the summary is invariant to time or frequency shifts of a sound event in the spectrogram. Finally, a fully-connected layer is applied to the summarized vector, followed by a sigmoid or softmax nonlinearity, to output the probabilities of the audio classes. The configurations of CNN4 and CNN8 are summarized in Table 1.

Table 2: Task 1 acoustic scene classification class-wise accuracy of subtasks A and B on the development dataset.

| Scene label | Subtask A: CNN [1] | Subtask A: CNN4 | Subtask A: CNN8 | Subtask B: CNN [1] | Subtask B: CNN4 | Subtask B: CNN8 |
|---|---|---|---|---|---|---|
| Airport | 0.729 | 0.743 | 0.709 | 0.725 | 0.612 | 0.667 |
| Bus | 0.629 | 0.607 | 0.649 | 0.783 | 0.695 | 0.723 |
| Metro | 0.512 | 0.690 | 0.686 | 0.206 | 0.500 | 0.417 |
| Metro station | 0.554 | 0.687 | 0.741 | 0.328 | 0.472 | 0.584 |
| Park | 0.791 | 0.855 | 0.839 | 0.592 | 0.834 | 0.861 |
| Public square | 0.404 | 0.486 | 0.472 | 0.247 | 0.361 | 0.389 |
| Shopping mall | 0.496 | 0.642 | 0.631 | 0.611 | 0.778 | 0.778 |
| Street, pedestrian | 0.500 | 0.583 | 0.567 | 0.208 | 0.333 | 0.361 |
| Street, traffic | 0.805 | 0.874 | 0.886 | 0.664 | 0.750 | 0.778 |
| Tram | 0.551 | 0.590 | 0.621 | 0.197 | 0.417 | 0.389 |
| Average | 0.597 | 0.676 | 0.680 | 0.456 | 0.575 | 0.572 |
| Public LB | - | 0.693 | 0.707 | - | 0.578 | 0.568 |
| Private LB | - | 0.628 | 0.630 | - | 0.615 | 0.672 |
| Evaluation | - | 0.697 | 0.704 | - | 0.588 | 0.596 |

4. EXPERIMENTS

We open source the Python code of the CNN baseline systems for Tasks 1 to 5 of the DCASE 2018 challenge (see footnotes 2 to 6).
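
As a quick sanity check on the parameter totals in Table 1, the short Python sketch below counts the weights of both networks. It is not taken from the released code. It assumes (our assumptions, not stated in the text) that the convolutions have no bias term, that each BN layer contributes one scale and one shift per channel, that the input has a single channel, and that the final fully-connected layer has a bias and 10 output classes as in Task 1. The function name `count_parameters` is hypothetical. Under those assumptions the counts agree with the table.

```python
def count_parameters(kernel_size, channels_per_layer, num_classes=10, in_channels=1):
    """Count trainable parameters of a plain CNN followed by one fully-connected layer.

    Assumptions (ours): bias-free convolutions, batch normalization with one
    scale and one shift per channel, and a fully-connected layer with bias.
    """
    total = 0
    previous = in_channels
    for out_channels in channels_per_layer:
        total += kernel_size * kernel_size * previous * out_channels  # convolution weights
        total += 2 * out_channels  # batch normalization scale and shift
        previous = out_channels
    total += previous * num_classes + num_classes  # fully-connected weights and bias
    return total


# CNN4: one 5 x 5 convolution per block; CNN8: two 3 x 3 convolutions per block.
cnn4_params = count_parameters(5, [64, 128, 256, 512])
cnn8_params = count_parameters(3, [64, 64, 128, 128, 256, 256, 512, 512])

print(f"CNN4: {cnn4_params:,}")  # CNN4: 4,309,450
print(f"CNN8: {cnn8_params:,}")  # CNN8: 4,691,274
```

Under these assumptions CNN8 has roughly 9% more parameters than CNN4 despite having twice as many convolutional layers, since a 3 × 3 kernel holds 9 weights per channel pair against 25 for a 5 × 5 kernel.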

Footnote 2: https://github.com/qiuqiangkong/dcase2018_task1
Footnote 3: https://github.com/qiuqiangkong/dcase2018_task2
Footnote 4: https://github.com/qiuqiangkong/dcase2018_task3
Footnote 5: https://github.com/qiuqiangkong/dcase2018_task4
Footnote 6: https://github.com/qiuqiangkong/dcase2018_task5

We convert all stereo audio to mono for Tasks 1 to 5 when building the baseline system. We extract spectrograms and apply mel filter banks to them, followed by a logarithm operation. We use 64 mel filter banks because 64 is a power of two and can be halved repeatedly by the max pooling layers. The mel filter bank has a cut-off frequency of 50 Hz. The log mel spectrograms are standardized by subtracting the mean and dividing by the standard deviation along the mel frequency bins.

The same configurations of CNN4 and CNN8 are applied to Tasks 1 to 5. We use the Adam optimizer [30] with a learning rate of 0.001, and the learning rate is multiplied by 0.9 after every 200 training iterations. A batch size of 128 is used for Tasks 1, 2, 3 and 5, and a batch size of 32 is used for Task 4, to make full use of a GPU with 12 GB of memory during training. We trained the model for 5000 iterations on each of the five tasks. Training takes 60 ms and 200 ms per iteration on a Titan X GPU for CNN4 and CNN8, respectively. The results for Tasks 1 to 5 are shown in the following subsections.

4.1. Task 1: Acoustic scene classification

Task 1, acoustic scene classification [1], is the task of classifying an audio recording into a predefined class that characterizes the environment in which it was recorded. The 10 predefined classes are listed in Table 2. There are 10080 10-second audio clips in the development dataset, including 8640, 720 and 720 audio clips recorded with devices A, B and C, respectively. Task 1 has three subtasks. Subtask A is matching device classification. Subtask B is mismatching device classification. Subtask C is matching device classification with external data and has the same evaluation data as subtask A.

Table 2 shows the accuracy on subtask A and subtask B. In [1], a two-layer CNN with a densely connected layer is used as a baseline model. On the development dataset of subtask A, CNN4 and CNN8 achieve similar accuracies of 0.676 and 0.680, respectively, outperforming the two-layer CNN at 0.597 [1]. On subtask B, CNN4 and CNN8 achieve similar accuracies of 0.575 and 0.572, respectively, outperforming the two-layer CNN at 0.456 [1]. Accuracy on subtask B (mismatching devices) is around 10% lower in absolute terms than on subtask A (matching devices). Table 2 also shows the public leaderboard (LB), private LB and final evaluation results. We did not explore subtask C with external data.

4.2. Task 2: General-purpose audio tagging of Freesound content with AudioSet labels

Task 2, audio tagging [3], is the task of classifying an audio clip into one of 41 predefined classes such as "oboe" and "applause". The duration of the audio samples ranges from 300 ms to 30 s due to the diversity of the sound categories. The development dataset contains 9473 audio clips. We pad or split the log mel spectrograms of the audio clips into 2 s log mel spectrograms as the input to a CNN. We split the development dataset into four validation folds and only use the 3710 manually verified audio clips for validation. Table 3 shows the accuracy and the mean average precision (MAP) [3] on the four folds and their averages. On the development dataset, CNN8 achieves an average accuracy of 0.895 and a MAP@3 of 0.928, outperforming CNN4 at 0.851 and 0.894, respectively.

Table 3: Task 2 audio tagging accuracy and MAP@3.

| | Accuracy: CNN4 | Accuracy: CNN8 | MAP@3: CNN4 | MAP@3: CNN8 |
|---|---|---|---|---|
| Fold 1 | 0.858 | 0.897 | 0.900 | 0.930 |
| Fold 2 | 0.824 | 0.875 | 0.870 | 0.912 |
| Fold 3 | 0.862 | 0.903 | 0.901 | 0.934 |
| Fold 4 | 0.861 | 0.904 | 0.904 | 0.935 |
| Average | 0.851 | 0.895 | 0.894 | 0.928 |
| Public LB | - | - | 0.885 | 0.920 |
| Private LB | - | - | 0.862 | 0.903 |

Figure 1: Task 2 audio tagging class-wise accuracy.

Figure 1 shows the class-wise accuracy of Task 2 averaged over the 4 folds. Sound classes such as "applause" and "bark" have 100% classification accuracy, but some classes such as "squeak" and "telephone" have an accuracy of only 50% to 60%. Table 3 shows that the MAP@3 on the private leaderboard is approximately 2% lower than on the development dataset and the public leaderboard.

4.3. Task 3: Bird audio detection

Task 3, bird audio detection [4], is the task of predicting the presence or absence of birds in a 10-second audio clip. One challenge of this task is to design a system that generalizes to new conditions. That is, a system trained on one dataset should generalize well to another dataset. The development dataset consists of freefield1010 with 7690 audio clips, warblrb10k with 8000 audio clips and BirdVox-DCASE-20K with 20000 audio clips. We train on two datasets and evaluate on the remaining held-out dataset. Table 4 shows the accuracy and the area under the curve (AUC) [4] of CNN4 and CNN8. On the development dataset, CNN8 achieves an accuracy of 0.766 and an AUC of 0.854, outperforming CNN4 at 0.640 and 0.751, respectively. The results in Table 4 show that classification on the freefield1010 dataset is more difficult than on the warblrb10k and BirdVox-DCASE-20K datasets. Furthermore, an AUC of 0.809 is achieved on the evaluation dataset using CNN8.

Table 4: Task 3 bird audio detection accuracy and AUC.

| Validation dataset | Accuracy: CNN4 | Accuracy: CNN8 | AUC: CNN4 | AUC: CNN8 |
|---|---|---|---|---|
| freefield1010 | 0.551 | 0.630 | 0.645 | 0.799 |
| warblrb10k | 0.692 | 0.867 | 0.799 | 0.882 |
| BirdVox-DCASE-20K | 0.678 | 0.801 | 0.808 | 0.882 |
| Average | 0.640 | 0.766 | 0.751 | 0.854 |
| Leaderboard | - | - | 0.850 | 0.847 |
| Evaluation | - | - | 0.748 | 0.809 |

4.4. Task 4: Large-scale weakly labeled semi-supervised sound event detection in domestic environments

Task 4 is a weakly labeled semi-supervised sound event detection task [5] in which both the onset and offset of sound events must be predicted. There are 10 audio classes in Task 4, for example "speech" and "dog". An audio clip can be assigned one or more labels. The development dataset consists of 1578 weakly labeled audio clips, 14412 unlabeled in-domain audio clips and 39999 unlabeled out-of-domain audio clips. Each audio clip has a duration of 10 seconds. We only use the 1578 weakly labeled audio clips for training our systems. Unlike in Tasks 1, 2, 3 and 5, to preserve the time resolution of the feature maps, max pooling operations are applied only along the frequency axis and not along the time axis. In training, we average over the time axis and apply a fully-connected layer to predict the clip-wise labels. In inference, we skip the time averaging to obtain frame-wise predictions.

Table 5 shows that CNN8 achieves an AUC of 0.948 in audio tagging, outperforming CNN4 at 0.930. For sound event detection, system SED1 uses the audio tagging result as the sound event detection result: the onset and offset times are set to 0 s and 10 s. System SED2 applies thresholds to the frame-wise predictions to detect sound events. The high threshold and the low threshold are set to 0.8 and 0.2, respectively. Sound events such as "Frying" and "Blender" have a higher F1 score with SED1. Sound events such as "Speech", "Dog" and "Cat" have a higher F1 score with SED2. On the development dataset, SED1 and SED2 achieve average F1 scores of 20.8% and 9.8%, respectively. In evaluation, an F1 score of 18.6% is achieved using CNN8 and system SED1.

Table 5: Task 4 audio tagging (AT) AUC and sound event detection F1 score.

| Class | AT (AUC): CNN4 | AT (AUC): CNN8 | SED1 (F1): CNN4 | SED1 (F1): CNN8 | SED2 (F1): CNN4 | SED2 (F1): CNN8 |
|---|---|---|---|---|---|---|
| Speech | 0.889 | 0.936 | 0.0% | 0.0% | 16.9% | 22.5% |
| Dog | 1.000 | 1.000 | 2.6% | 2.5% | 8.3% | 14.3% |
| Cat | 0.980 | 0.991 | 3.4% | 3.5% | 10.3% | 7.2% |
| Alarm/bell | 0.964 | 0.975 | 4.2% | 4.0% | 12.5% | 20.7% |
| Dishes | 0.835 | 0.898 | 0.0% | 0.0% | 0.0% | 3.6% |
| Frying | 0.945 | 0.939 | 45.5% | 54.5% | 2.1% | 0.0% |
| Blender | 0.839 | 0.883 | 18.9% | 27.1% | 8.3% | 7.3% |
| Running water | 0.930 | 0.943 | 11.8% | 11.9% | 7.9% | 3.1% |
| Vacuum cleaner | 0.972 | 0.956 | 57.6% | 61.3% | 9.4% | 2.6% |
| Electronic shaver | 0.944 | 0.957 | 45.0% | 43.5% | 18.9% | 16.3% |
| Average | 0.930 | 0.948 | 18.9% | 20.8% | 9.5% | 9.8% |
| Evaluation | - | - | 16.7% | 18.6% | - | - |

4.5. Task 5: Monitoring of domestic activities based on multi-channel acoustics

Task 5, multi-channel audio tagging [6], is the task of classifying domestic activities from multi-channel acoustic recordings.

Table 6: Task 5 multi-channel audio tagging F1 score.

| Scene label | Baseline | CNN4 Fold 1 | CNN4 Fold 2 | CNN4 Fold 3 | CNN4 Fold 4 | CNN4 Average | CNN8 Fold 1 | CNN8 Fold 2 | CNN8 Fold 3 | CNN8 Fold 4 | CNN8 Average |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Absence | 85.4% | 86.4% | 90.5% | 78.5% | 89.9% | 86.3% | 90.5% | 92.2% | 80.5% | 89.9% | 88.3% |
| Cooking | 95.1% | 96.2% | 94.7% | 93.0% | 96.6% | 95.1% | 98.0% | 96.3% | 93.8% | 96.3% | 96.1% |
| Dishwashing | 76.7% | 77.8% | 68.6% | 75.8% | 80.2% | 75.6% | 83.3% | 71.2% | 76.0% | 85.8% | 79.1% |
| Eating | 83.6% | 79.7% | 75.7% | 85.4% | 91.2% | 82.3% | 85.2% | 85.1% | 88.5% | 94.5% | 88.3% |
| Other | 44.8% | 43.3% | 55.2% | 56.9% | 60.2% | 53.9% | 54.3% | 54.5% | 51.4% | 62.2% | 55.6% |
| Social activity | 93.9% | 95.5% | 88.1% | 90.2% | 98.5% | 93.1% | 98.4% | 90.1% | 93.7% | 99.3% | 95.4% |
| Vacuum cleaner | 99.3% | 100.0% | 100.0% | 100.0% | 100.0% | 100.0% | 100.0% | 99.4% | 100.0% | 100.0% | 99.9% |
| Watching TV | 99.6% | 99.6% | 99.7% | 97.5% | 100.0% | 99.2% | 99.8% | 99.9% | 99.0% | 99.9% | 99.7% |
| Working | 82.0% | 85.3% | 86.3% | 79.4% | 90.5% | 85.4% | 88.7% | 89.3% | 81.4% | 90.2% | 87.4% |
| Average | 84.5% | 84.9% | 84.3% | 84.1% | 89.7% | 85.7% | 88.7% | 86.5% | 84.9% | 90.9% | 87.8% |
| Eval. unknown mic. | 83.1% | - | - | - | - | 82.4% | - | - | - | - | 83.2% |
| Eval. dev. mic. | 85.0% | - | - | - | - | 86.2% | - | - | - | - | 87.6% |

The aim of Task 5 is to investigate how multi-channel information can help the audio tagging task. The development dataset of Task 5 consists of 72984 10-second audio clips. The audio classes include, for example, "Cooking" and "Eating". The multi-channel audio clips are converted to single-channel audio clips to build the baseline system. Table 6 shows that on the development dataset CNN8 achieves an F1 score of 87.75%, outperforming CNN4 at 85.73%. On evaluation data recorded with unknown microphones, an F1 score of 83.2% is achieved using the CNN8 model. On evaluation data recorded with the development microphones, an F1 score of 87.6% is achieved.

5. CONCLUSION

In this paper, we investigated the performance of convolutional neural networks (CNNs) with 4 layers and 8 layers on Tasks 1 to 5 of DCASE 2018. We show that the difficulty of the tasks varies. Task 4 (sound event detection) is more difficult than Task 1 (acoustic scene classification), which is more difficult than Task 3 (bird audio detection), which in turn is more difficult than Task 2 (general-purpose audio tagging of Freesound) and Task 5 (domestic multi-channel audio tagging). We show that the CNN with 8 layers performs better than the CNN with 4 layers on Tasks 2 to 5. On Task 1, the CNNs with 8 layers and 4 layers perform similarly. With CNN8, we achieve an accuracy of 0.680 on Task 1, a mean average precision (MAP) of 0.928 on Task 2, an area under the curve (AUC) of 0.854 on Task 3, a sound event detection F1 score of 20.8% on Task 4 and an F1 score of 87.75% on Task 5. In future, we will explore more CNN structures on Tasks 1 to 5 of the DCASE 2018 challenge. We released the Python source code of the baseline systems under the MIT license for further research.

6. ACKNOWLEDGEMENT

This research was supported by EPSRC grant EP/N014111/1 "Making Sense of Sounds" and a Research Scholarship from the China Scholarship Council (CSC) No. 201406150082.

7. REFERENCES

[1] A. Mesaros, T. Heittola, and T. Virtanen, "A multi-device dataset for urban acoustic scene classification," arXiv preprint arXiv:1807.09840, 2018.
[2] D. Barchiesi, D. Giannoulis, D. Stowell, and M. D. Plumbley, "Acoustic scene classification," arXiv preprint, 2014.
[3] E. Fonseca, M. Plakal, F. Font, D. P. W. Ellis, X. Favory, J. Pons, and X. Serra, "General-purpose tagging of Freesound audio with AudioSet labels: Task description, dataset, and baseline," arXiv preprint arXiv:1807.09902, 2018.
[4] D. Stowell, Y. Stylianou, M. Wood, H. Pamuła, and H. Glotin, "Automatic acoustic detection of birds through deep learning: the first bird audio detection challenge," arXiv preprint arXiv:1807.05812, 2018.
[5] R. Serizel, T. Nicolas, H. Eghbal-Zadeh, and A. P. Shah, "Large-scale weakly labeled semi-supervised sound event detection in domestic environments," https://hal.inria.fr/hal-01850270, 2018.
[6] G. Dekkers, S. Lauwereins, B. Thoen, M. W. Adhana, H. Brouckxon, T. van Waterschoot, B. Vanrumste, M. Verhelst, and P. Karsmakers, "The SINS database for detection of daily activities in a home environment using an acoustic sensor network," in Proceedings of the Detection and Classification of Acoustic Scenes and Events 2017 Workshop (DCASE2017), Munich, Germany, November 2017, pp. 32-36.
[7] D. Giannoulis, E. Benetos, D. Stowell, M. Rossignol, M. Lagrange, and M. D. Plumbley, "Detection and classification of acoustic scenes and events: An IEEE AASP challenge," in IEEE Workshop on Applications of Signal Processing to Audio and Acoustics (WASPAA). IEEE, 2013, pp. 1-4.
[8] A. Mesaros, T. Heittola, and T. Virtanen, "TUT database for acoustic scene classification and sound event detection," in Signal Processing Conference (EUSIPCO). IEEE, 2016, pp. 1128-1132.
[9] A. Mesaros, T. Heittola, A. Diment, B. Elizalde, A. Shah, E. Vincent, B. Raj, and T. Virtanen, "DCASE 2017 challenge setup: Tasks, datasets and baseline system," in DCASE 2017 - Workshop on Detection and Classification of Acoustic Scenes and Events, 2017.
[10] J. F. Gemmeke, D. P. W. Ellis, D. Freedman, A. Jansen, W. Lawrence, R. C. Moore, M. Plakal, and M. Ritter, "Audio Set: An ontology and human-labeled dataset for audio events," in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2017, pp. 776-780.
[11] A. Krizhevsky, I. Sutskever, and G. E. Hinton, "ImageNet classification with deep convolutional neural networks," in Advances in Neural Information Processing Systems (NIPS), 2012, pp. 1097-1105.
[12] K. Simonyan and A. Zisserman, "Very deep convolutional networks for large-scale image recognition," arXiv preprint arXiv:1409.1556, 2014.
[13] D. Li, I. K. Sethi, N. Dimitrova, and T. McGee, "Classification of general audio data for content-based retrieval," Pattern Recognition Letters, vol. 22, no. 5, pp. 533-544, 2001.
[14] V. Bisot, R. Serizel, S. Essid, and G. Richard, "Supervised nonnegative matrix factorization for acoustic scene classification," IEEE AASP Challenge on Detection and Classification of Acoustic Scenes and Events (DCASE), 2016.
[15] H. Eghbal-Zadeh, B. Lehner, M. Dorfer, and G. Widmer, "CP-JKU submissions for DCASE-2016: A hybrid approach using binaural i-vectors and deep convolutional neural networks," IEEE AASP Challenge on Detection and Classification of Acoustic Scenes and Events (DCASE), 2016.
[16] K. Choi, G. Fazekas, and M. Sandler, "Automatic tagging using deep convolutional neural networks," arXiv preprint arXiv:1606.00298, 2016.
[17] D. Stowell, D. Giannoulis, E. Benetos, M. Lagrange, and M. D. Plumbley, "Detection and classification of acoustic scenes and events," IEEE Transactions on Multimedia, vol. 17, no. 10, pp. 1733-1746, 2015.
[18] A. Mesaros, T. Heittola, A. Eronen, and T. Virtanen, "Acoustic event detection in real life recordings," in Signal Processing Conference. IEEE, 2010, pp. 1267-1271.
[19] A. Mesaros, T. Heittola, O. Dikmen, and T. Virtanen, "Sound event detection in real life recordings using coupled matrix factorization of spectral representations and class activity annotations," in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2015, pp. 151-155.
[20] Q. Kong, I. Sobieraj, W. Wang, and M. D. Plumbley, "Deep neural network baseline for DCASE challenge 2016," Proceedings of DCASE 2016, 2016.
[21] J. Li, W. Dai, F. Metze, S. Qu, and S. Das, "A comparison of deep learning methods for environmental sound," arXiv preprint arXiv:1703.06902, 2017.
[22] S. Hershey, S. Chaudhuri, D. P. Ellis, J. F. Gemmeke, A. Jansen, R. C. Moore, M. Plakal, D. Platt, R. A. Saurous, B. Seybold et al., "CNN architectures for large-scale audio classification," in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2017, pp. 131-135.
[23] Y. Aytar, C. Vondrick, and A. Torralba, "SoundNet: Learning sound representations from unlabeled video," in Advances in Neural Information Processing Systems (NIPS), 2016, pp. 892-900.
[24] E. Cakir, G. Parascandolo, T. Heittola, H. Huttunen, and T. Virtanen, "Convolutional recurrent neural networks for polyphonic sound event detection," IEEE/ACM Transactions on Audio, Speech and Language Processing (TASLP), vol. 25, no. 6, pp. 1291-1303, 2017.
[25] H. Lim, J. Park, K. Lee, and Y. Han, "Rare sound event detection using 1D convolutional recurrent neural networks," DCASE2017 Challenge, Tech. Rep., 2017.
[26] Y. Xu, Q. Kong, W. Wang, and M. D. Plumbley, "Large-scale weakly supervised audio classification using gated convolutional neural network," arXiv preprint arXiv:1710.00343, 2017.
[27] A. Kumar and B. Raj, "Audio event detection using weakly labeled data," in Proceedings of the 2016 ACM on Multimedia Conference. ACM, 2016, pp. 1038-1047.
[28] S. Mun, S. Park, D. K. Han, and H. Ko, "Generative adversarial network based acoustic scene training set augmentation and selection using SVM hyper-plane," Proc. DCASE, pp. 93-97, 2017.
[29] S. Ioffe and C. Szegedy, "Batch normalization: Accelerating deep network training by reducing internal covariate shift," arXiv preprint arXiv:1502.03167, 2015.
[30] D. P. Kingma and J. Ba, "Adam: A method for stochastic optimization," arXiv preprint arXiv:1412.6980, 2014.
