Importance Nested Sampling and the MultiNest Algorithm

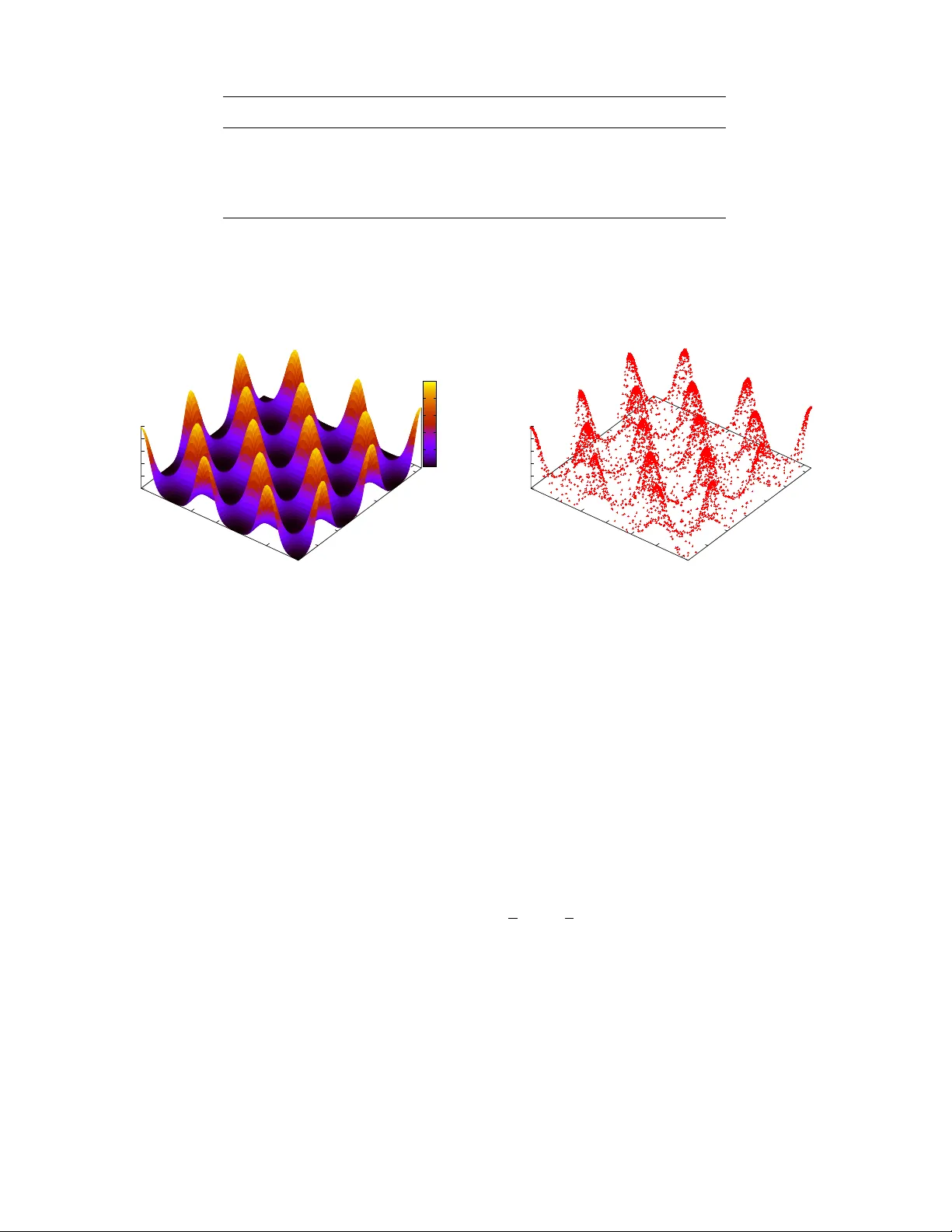

Bayesian inference involves two main computational challenges. First, when estimating the parameters of a model for the data, the posterior distribution may be highly multi-modal: a regime in which the convergence to stationarity of traditional…

Authors: F. Feroz, M.P. Hobson, E. Cameron