Survey on Deep Neural Networks in Speech and Vision Systems

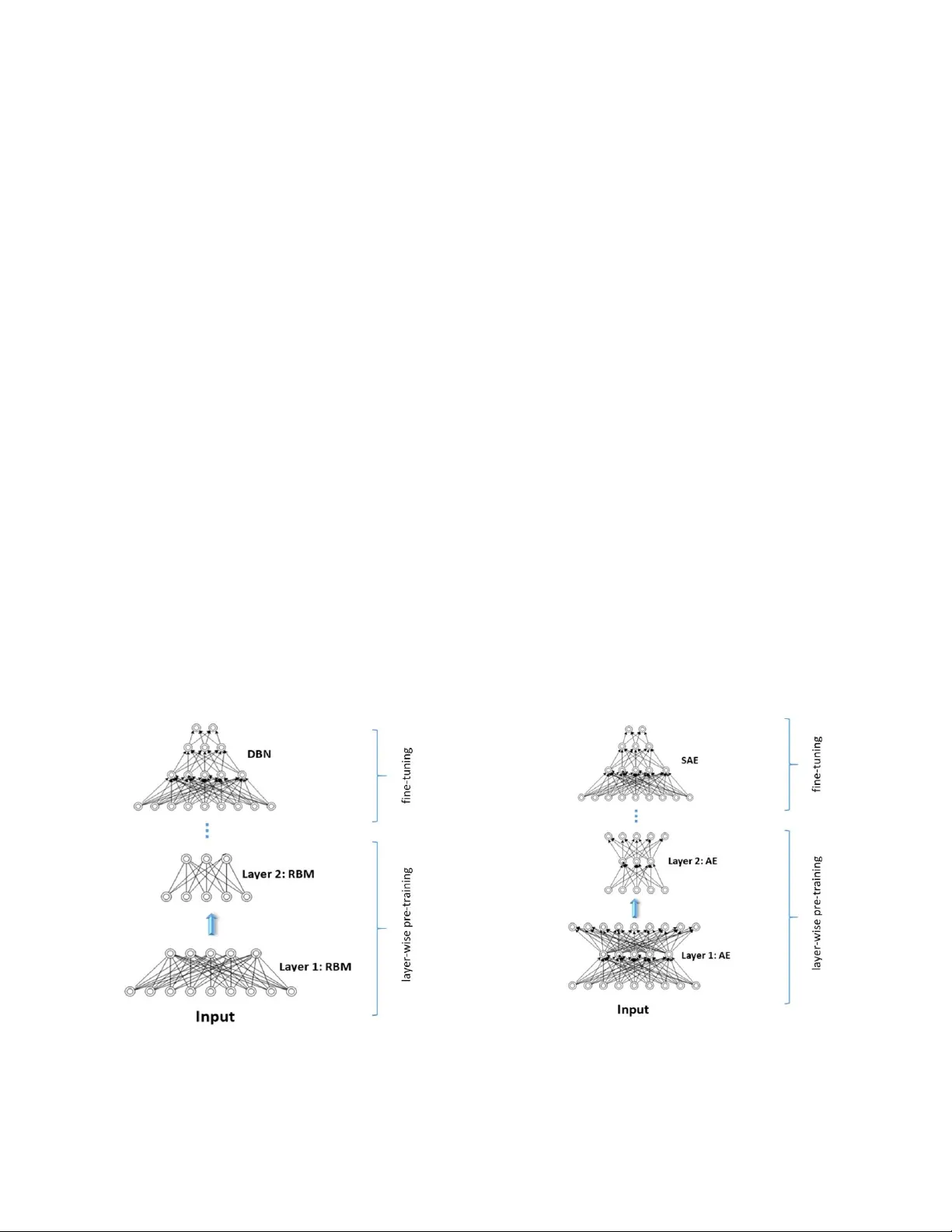

This survey reviews state-of-the-art deep neural network architectures, algorithms, and systems in vision and speech applications. Recent advances in deep artificial neural network algorithms and architectures have spurred rapid innovati…

Authors: Mahbubul Alam, Manar D. Samad, Lasitha Vidyaratne