Continuous Dropout

Dropout has been proven to be an effective algorithm for training robust deep networks because of its ability to prevent overfitting by avoiding the co-adaptation of feature detectors. Current explanations of dropout include bagging, naive Bayes, reg…

Authors: Xu Shen, Xinmei Tian, Tongliang Liu

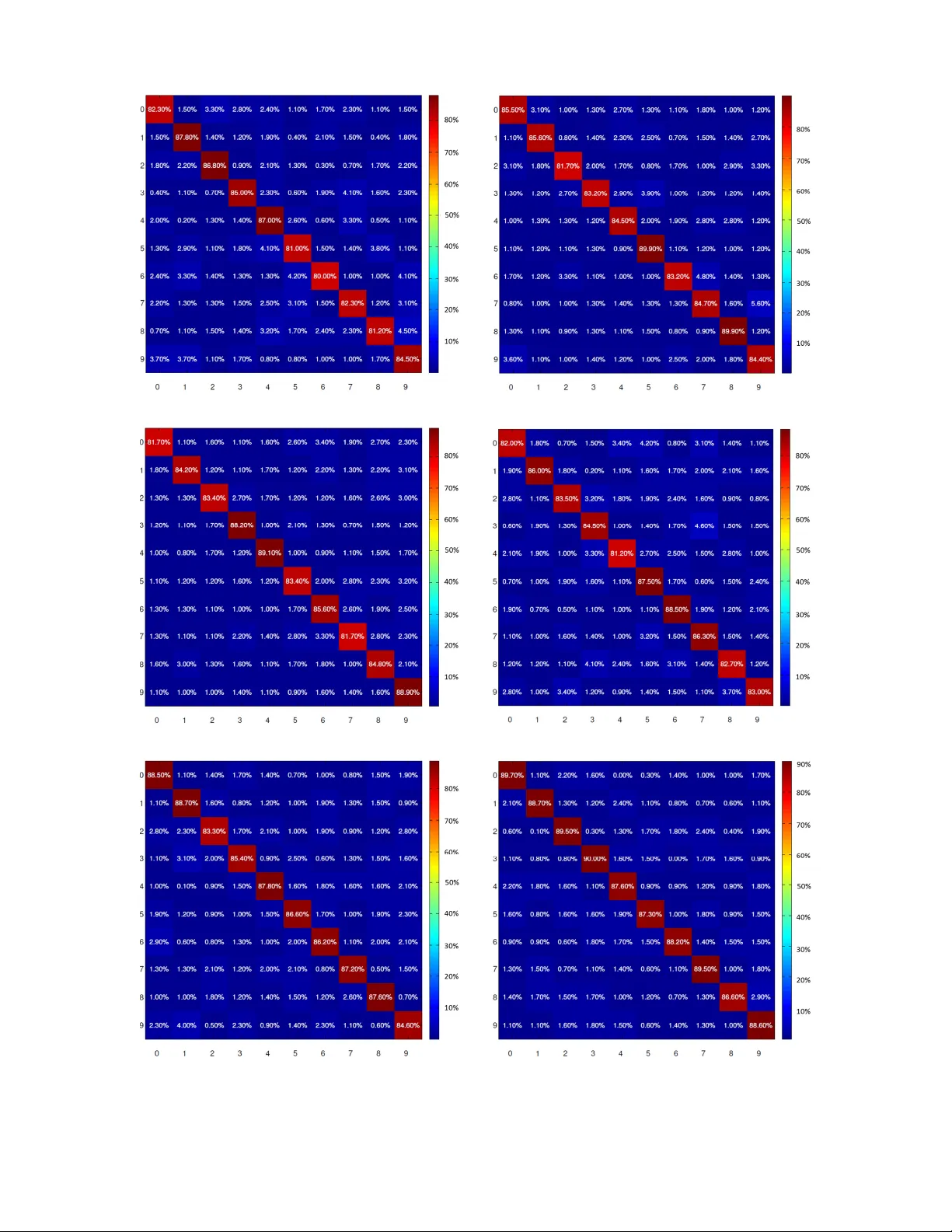

Xu Shen, Xinmei Tian, Tongliang Liu, Fang Xu, and Dacheng Tao

Abstract: Dropout has been proven to be an effective algorithm for training robust deep networks because of its ability to prevent overfitting by avoiding the co-adaptation of feature detectors. Current explanations of dropout include bagging, naive Bayes, regularization, and sex in evolution. According to the activation patterns of neurons in the human brain, when faced with different situations, the firing rates of neurons are random and continuous, not binary as in current dropout. Inspired by this phenomenon, we extend the traditional binary dropout to continuous dropout. On the one hand, continuous dropout is considerably closer to the activation characteristics of neurons in the human brain than traditional binary dropout. On the other hand, we demonstrate that continuous dropout has the property of avoiding the co-adaptation of feature detectors, which suggests that we can extract more independent feature detectors for model averaging in the test stage. We introduce the proposed continuous dropout to a feedforward neural network and comprehensively compare it with binary dropout, adaptive dropout, and DropConnect on MNIST, CIFAR-10, SVHN, NORB, and ILSVRC-12. Thorough experiments demonstrate that our method performs better in preventing the co-adaptation of feature detectors and improves test performance. The code is available at: https://github.com/jasonustc/caffe-multigpu/tree/dropout.

Index Terms: Deep learning, dropout, overfitting, regularization, co-adaptation.

I. INTRODUCTION

Dropout is an efficient algorithm introduced by Hinton et al. for training robust neural networks [1] and has been applied to many vision tasks [2, 3, 4]. During the training stage, hidden units of the neural networks are randomly omitted at a rate of 50% [1][5].
Thus, the presentation of each training sample can be viewed as providing updates of parameters for a randomly chosen subnetwork. The weights of this subnetwork are trained by backpropagation [6]. Weights are shared for the hidden units that are present among different subnetworks at each iteration. During the test stage, predictions are made by the entire network, which contains all the hidden units with their weights halved.

The motivation and intuition behind dropout is to prevent overfitting by avoiding co-adaptations of the feature detectors [1]. Deep networks can achieve better representation than shallow networks, but overfitting is a serious problem when training a large feedforward neural network on a small training set [1][7]. Randomly dropping the units from the neural network can greatly reduce this overfitting problem. Encouraged by the success of dropout, several related works have been presented, including fast dropout [8], adaptive dropout [9], and DropConnect [10]. To accelerate dropout training, Wang and Manning suggested sampling the output from an approximated distribution rather than sampling binary mask variables for the inputs [8]. In [9], Ba and Frey proposed adaptively learning the dropout probability $p$ from the inputs and weights of the network. Wan et al. generalized dropout by randomly dropping the weights rather than the units [10].

X. Shen and X. Tian are with the Department of Electronic Engineering and Information Science, University of Science and Technology of China (email: shenxu@mail.ustc.edu.cn; xinmei@ustc.edu.cn). F. Xu is with the Department of Biophysics and Neurobiology, School of Life Science, University of Science and Technology of China (email: xufan@mail.ustc.edu.cn). T. Liu and D. Tao are with the Centre for Quantum Computation & Intelligent Systems and the Faculty of Engineering and Information Technology, University of Technology, Sydney, 81 Broadway Street, Ultimo, NSW 2007, Australia (email: tongliang.liu@uts.edu.au; dacheng.tao@uts.edu.au).

To interpret the success of dropout, several explanations from both theoretical and biological perspectives have been proposed. Based on theoretical explanations, dropout is viewed as an extreme form of bagging [1], as a generalization of naive Bayes [1], or as adaptive regularization [11][12], which is proven to be a very useful approach for neural network training [13]. From the biological perspective, Hinton et al. explain that there is an intriguing similarity between dropout and the theory of the role of sex in evolution [1]. However, no understanding from the perspective of the brain's neural network, the origin of deep neural networks, has been proposed. In fact, by analyzing the firing patterns of neural networks in the human brain [14][15][16], we find that there is a strong analogy between dropout and the firing pattern of brain neurons. That is, a small minority of strong synapses and neurons provide a substantial portion of the activity in all brain states and situations [14]. This phenomenon explains why we need to randomly delete hidden units from the network and train different subnetworks for different samples (situations). However, the remainder of the brain is not silent. The remaining neuronal activity in any given time window is supplied by very large numbers of weak synapses and cells. The amplitudes of oscillations of neurons obey a random continuous pattern [15][16]. In other words, the division between "strong" and "weak" neurons is not absolute. They obey a continuous, rather than bimodal, distribution [15]. Consequently, we should assign a continuous random mask to each neuron in the dropout network for the divisions of "strong" and "weak" rather than use a binary mask to choose "activated" and "silent" neurons.

Inspired by this phenomenon, we propose a continuous dropout algorithm in this paper, i.e., the dropout variables are subject to a continuous distribution rather than the discrete (Bernoulli) distribution in [1]. Specifically, in our continuous dropout, the units in the network are randomly multiplied by continuous dropout masks sampled from $\mu \sim U(0, 1)$ or $g \sim N(0.5, \sigma^2)$, termed uniform dropout or Gaussian dropout, respectively. Although multiplicative Gaussian noise has been mentioned in [17], no theoretical analysis or generalized continuous dropout form is presented. We investigate two specific continuous distributions, i.e., uniform and Gaussian, which are commonly used and also are similar to the process of neuron activation in the brain. We conduct extensive theoretical analyses, including both static and dynamic property analyses of our continuous dropout, and demonstrate that continuous dropout prevents the co-adaptation of feature detectors in deep neural networks. In the static analysis, we find that continuous dropout achieves a good balance between the diversity and independence of subnetworks. In the dynamic analysis, we find that continuous dropout training is equivalent to a regularization of covariance between weights, inputs, and hidden units, which successfully prevents the co-adaptation of feature detectors in deep neural networks.

We evaluate our continuous dropout through extensive experiments on several datasets, including MNIST, CIFAR-10, SVHN, NORB, and ILSVRC-12. We compare it with Bernoulli dropout, adaptive dropout, and DropConnect. The experimental results demonstrate that our continuous dropout performs better in preventing the co-adaptation of feature detectors and improves test performance.

II. CONTINUOUS DROPOUT

In [1], Hinton et al. interpret dropout from the biological perspective, i.e., it has an intriguing similarity to the theory of the role of sex in evolution [18].
Sexual reproduction involves taking half the genes of each parent and combining them to produce offspring. This corresponds to the result where dropout training works the best when $p = 0.5$; more extreme probabilities produce worse results [1]. The criteria for natural selection may not be individual fitness but rather the mixability of genes to combine [1]. The ability of genes to work well with another random set of genes makes them more robust. The mixability theory described in [19] is that sex breaks up sets of co-adapted genes, and this means that achieving a function by using a large set of co-adapted genes is not nearly as robust as achieving the same function, perhaps less than optimally, in multiple alternative ways, each of which only uses a small number of co-adapted genes.

Following this train of thought, we can infer that randomly dropping units tends to produce more alternative networks, which are able to achieve better performance. For example, when we use one hidden layer with $n$ units for dropout training, i.e., the value of the dropout variable is randomly set to 0 or 1, $2^n$ alternative networks will be produced during training and will make up the entire network for testing. From this perspective, it is more reasonable to take the continuous dropout distribution into account because, for continuous dropout variables, a hidden layer with $n$ units can produce an infinite number of alternative networks, which are expected to work better than the Bernoulli dropout proposed in [1]. The experimental results in Section IV demonstrate the superiority of continuous dropout over Bernoulli dropout.

III. CO-ADAPTATION REGULARIZATION IN CONTINUOUS DROPOUT

In this section, we derive the static and dynamic properties of our continuous dropout.
Static properties refer to the properties of the network with a fixed set of weights, that is, given an input, how dropout affects the output of the network. Dynamic properties refer to the properties of the weight updates of the network, i.e., how continuous dropout changes the learning process of the network [12]. Because Bernoulli dropout with $p = 0.5$ achieves the best performance in most situations [1][20], we set $p = 0.5$ for Bernoulli dropout. For our continuous dropout, we apply $\mu \sim U(0, 1)$ and $g \sim N(0.5, \sigma^2)$ for uniform dropout and Gaussian dropout to ensure that all three dropout algorithms have the same expected mask value (0.5) and therefore the same expected output.

A. Static Properties of Continuous Dropout

In this section, we focus on the static properties of continuous dropout, i.e., properties of dropout for a fixed set of weights. We start from the single layer of linear units, and then we extend it to multiple layers of linear and nonlinear units.

1) Continuous dropout for a single layer of linear units: We consider a single fully connected linear layer with input $I = [I_1, I_2, \ldots, I_n]^T$, weighting matrix $W = [w_{ij}]_{k \times n}$, and output $S = [S_1, S_2, \ldots, S_k]^T$. The $i$th output is $S_i = \sum_{j=1}^{n} w_{ij} I_j$. In Bernoulli dropout, each input unit $I_j$ is multiplied by a binary mask $p_j \sim \mathrm{Bernoulli}(0.5)$, i.e., it is kept with probability 0.5. The $i$th output and its expectation are

$$S_i^B = \sum_{j=1}^{n} w_{ij} I_j p_j \quad \text{and} \quad E[S_i^B] = \frac{1}{2} \sum_{j=1}^{n} w_{ij} I_j.$$

In our uniform dropout, $I_j$ is multiplied by a mask $u_j \sim U(0, 1)$. The output becomes

$$S_i^U = \sum_{j=1}^{n} w_{ij} I_j u_j \quad \text{and} \quad E[S_i^U] = \frac{1}{2} \sum_{j=1}^{n} w_{ij} I_j.$$

When Gaussian dropout is applied, $I_j$ is multiplied by a mask $g_j \sim N(0.5, \sigma^2)$:

$$S_i^G = \sum_{j=1}^{n} w_{ij} I_j g_j \quad \text{and} \quad E[S_i^G] = \frac{1}{2} \sum_{j=1}^{n} w_{ij} I_j.$$

Therefore, the three dropout methods achieve the same expected output.
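The equality of the three expectations above can be checked numerically. The following is a minimal sketch, not the authors' implementation: the weights `W`, the input `I`, the Gaussian standard deviation `SIGMA`, and the helper names `draw_mask`, `layer_output`, and `mean_output` are all illustrative assumptions. It averages many stochastic forward passes of a single linear layer and compares the result with $\frac{1}{2}\sum_j w_{ij} I_j$.

```python
import random

random.seed(0)

# Illustrative layer; these values are assumptions, not taken from the paper.
W = [[0.5, -1.0, 0.8, 0.3],
     [1.2, 0.4, -0.6, 0.9]]   # k x n weight matrix [w_ij]
I = [1.0, 0.5, -2.0, 1.5]     # input vector
SIGMA = 0.4                   # assumed standard deviation of the Gaussian mask


def draw_mask(kind):
    """Return one dropout mask value for the requested scheme (mean 0.5 in all cases)."""
    if kind == "bernoulli":
        return 1.0 if random.random() < 0.5 else 0.0
    if kind == "uniform":
        return random.uniform(0.0, 1.0)
    if kind == "gaussian":
        return random.gauss(0.5, SIGMA)
    raise ValueError(f"unknown dropout kind: {kind}")


def layer_output(kind):
    """One stochastic forward pass: S_i = sum_j w_ij * I_j * m_j."""
    masks = [draw_mask(kind) for _ in I]  # one mask per input unit, shared by all outputs
    return [sum(w * x * m for w, x, m in zip(row, I, masks)) for row in W]


def mean_output(kind, num_samples=50_000):
    """Monte Carlo estimate of E[S_i] for every output unit i."""
    totals = [0.0] * len(W)
    for _ in range(num_samples):
        for i, s in enumerate(layer_output(kind)):
            totals[i] += s
    return [t / num_samples for t in totals]


# Closed-form expectation shared by all three schemes: 0.5 * sum_j w_ij * I_j.
expected = [0.5 * sum(w * x for w, x in zip(row, I)) for row in W]
estimates = {kind: mean_output(kind) for kind in ("bernoulli", "uniform", "gaussian")}

for kind, values in estimates.items():
    print(kind, [round(v, 3) for v in values], "closed form:", expected)
```

Because only the mean of the mask enters the expectation, any mask distribution with mean 0.5 would pass this check; the three schemes differ in their second-order statistics.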
Because dropout is applied to the input units independently, the variance and covariance of the output units are:

$$Var(S_i^U) = \sum_{j=1}^{n} w_{ij}^2 I_j^2 \, Var(u_j) = \frac{1}{12} \sum_{j=1}^{n} w_{ij}^2 I_j^2, \qquad Cov(S_i^U, S_l^U) = \frac{1}{12} \sum_{j=1}^{n} w_{ij} w_{lj} I_j^2,$$

$$Var(S_i^G) = \sum_{j=1}^{n} w_{ij}^2 I_j^2 \, Var(g_j) = \sigma^2 \sum_{j=1}^{n} w_{ij}^2 I_j^2, \qquad Cov(S_i^G, S_l^G) = \sigma^2 \sum_{j=1}^{n} w_{ij} w_{lj} I_j^2,$$

$$Var(S_i^B) = \sum_{j=1}^{n} w_{ij}^2 I_j^2 \, p_j q_j = \frac{1}{4} \sum_{j=1}^{n} w_{ij}^2 I_j^2, \qquad Cov(S_i^B, S_l^B) = \sum_{j=1}^{n} w_{ij} w_{lj} I_j^2 \, p_j q_j = \frac{1}{4} \sum_{j=1}^{n} w_{ij} w_{lj} I_j^2,$$

where, in the Bernoulli formulas, $p_j = 0.5$ denotes the keep probability and $q_j = 1 - p_j$.

The aim of dropout is to avoid the co-adaptation of feature detectors, reflected by the covariance between output units. Generally, networks with lower covariance between feature detectors tend to generate more independent subnetworks and therefore tend to work better during the test stage. Comparing the covariance of the output units of the three dropout algorithms, we can see that uniform dropout has a lower covariance than Bernoulli dropout. The covariance of Gaussian dropout is controlled by the parameter $\sigma^2$. Through extensive experiments, we find that Gaussian dropout with $\sigma^2 \in [\frac{1}{5}, \frac{1}{3}]$ works the best among the three dropout algorithms. This phenomenon implies that there is a balance between the diversity of subnetworks (larger variance of the output of hidden units) and their independence (lower covariance between units in the same layer). Bernoulli dropout achieves the highest variance but its covariance is also the highest. In contrast, uniform dropout achieves the lowest covariance, but its variance is also the lowest. Gaussian dropout with a suitable $\sigma^2$ achieves the best balance between variance and covariance, ensuring a good generalization capability.
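The variance and covariance formulas above all have the form $Var(\text{mask}) \cdot \sum_j w_{ij} w_{lj} I_j^2$, so they can be compared against a Monte Carlo estimate. The sketch below is illustrative only: the layer values, the choice `SIGMA2 = 0.2`, and the helper names `analytic_cov` and `empirical_cov` are assumptions rather than anything specified in the paper.

```python
import random

random.seed(1)

# Illustrative layer; these values are assumptions, not taken from the paper.
W = [[0.5, -1.0, 0.8, 0.3],
     [1.2, 0.4, -0.6, 0.9]]   # k x n weight matrix [w_ij]
I = [1.0, 0.5, -2.0, 1.5]     # input vector
SIGMA2 = 0.2                  # assumed variance of the Gaussian mask

# Variance of a single mask value under each scheme.
MASK_VARIANCE = {"bernoulli": 0.25, "uniform": 1.0 / 12.0, "gaussian": SIGMA2}

SAMPLERS = {
    "bernoulli": lambda: 1.0 if random.random() < 0.5 else 0.0,
    "uniform": lambda: random.uniform(0.0, 1.0),
    "gaussian": lambda: random.gauss(0.5, SIGMA2 ** 0.5),
}


def analytic_cov(kind, i, l):
    """Cov(S_i, S_l) = Var(mask) * sum_j w_ij * w_lj * I_j^2; i == l gives Var(S_i)."""
    return MASK_VARIANCE[kind] * sum(
        W[i][j] * W[l][j] * I[j] ** 2 for j in range(len(I))
    )


def empirical_cov(kind, i, l, num_samples=50_000):
    """Monte Carlo estimate of Cov(S_i, S_l) with masks shared across output units."""
    draw = SAMPLERS[kind]
    sum_i = sum_l = sum_il = 0.0
    for _ in range(num_samples):
        masks = [draw() for _ in I]
        s_i = sum(w * x * m for w, x, m in zip(W[i], I, masks))
        s_l = sum(w * x * m for w, x, m in zip(W[l], I, masks))
        sum_i += s_i
        sum_l += s_l
        sum_il += s_i * s_l
    return sum_il / num_samples - (sum_i / num_samples) * (sum_l / num_samples)


results = {
    kind: {
        "var_analytic": analytic_cov(kind, 0, 0),
        "var_empirical": empirical_cov(kind, 0, 0),
        "cov_analytic": analytic_cov(kind, 0, 1),
        "cov_empirical": empirical_cov(kind, 0, 1),
    }
    for kind in MASK_VARIANCE
}

for kind, row in results.items():
    print(kind, {name: round(value, 3) for name, value in row.items()})
```

For a fixed layer, the three schemes differ only through the scalar mask variance, so the ordering of their output variances and covariance magnitudes follows directly from comparing $\frac{1}{4}$, $\frac{1}{12}$, and $\sigma^2$.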
2) Continuous dropout approximation for non-linear unit: For the non-linear unit, we consider the case that the output of a single unit with total linear input $S$ is given by the logistic sigmoidal function

$$O = \mathrm{sigmoid}(S) = \frac{1}{1 + c e^{-\lambda S}}. \quad (1)$$

For uniform dropout, $S = \sum_{i=1}^{n} w_i I_i u_i$, $u_i \sim U(0, 1)$. We have $S = \sum_{i=1}^{n} U_i$, $U_i \sim U(0, w_i I_i)$, $E[U_i] = \frac{1}{2} w_i I_i$, and $Var(U_i) = \frac{1}{12} w_i^2 I_i^2$. Because $U_i \le \max_i (w_i I_i)$, $s_n^2 = \sum_{i=1}^{n} Var(U_i) \to \infty$. According to Corollary 2.7.1 of Lyapunov's central limit theorem [18], $S$ tends to a normal distribution as $n \to \infty$. It yields that

$$S \sim N(\mu_U, \sigma_U^2), \quad \mu_U = \sum_{i=1}^{n} E[U_i] = \sum_{i=1}^{n} \frac{1}{2} w_i I_i, \quad \sigma_U^2 = \sum_{i=1}^{n} Var(U_i) = \sum_{i=1}^{n} \frac{1}{12} w_i^2 I_i^2. \quad (2)$$

For Gaussian dropout, $S = \sum_{i=1}^{n} w_i I_i g_i$, where the $g_i \sim N(\mu, \sigma^2)$ are i.i.d. We can easily infer that $S \sim N(\mu_S, \sigma_S^2)$, where $\mu_S = \sum_{i=1}^{n} w_i I_i \mu$ and $\sigma_S^2 = \sum_{i=1}^{n} w_i^2 I_i^2 \sigma^2$. Thus, for both uniform dropout and Gaussian dropout, $S$ is subject to a normal distribution. In the following sections, we only derive the statistical property of Gaussian dropout because the derivation is the same for uniform dropout.

The expected output is

$$E(O) = E[\mathrm{sigmoid}(S)] = \int_{-\infty}^{\infty} \mathrm{sigmoid}(x) \, N(x \mid \mu_S, \sigma_S^2) \, dx \approx \mathrm{sigmoid}\!\left( \frac{\mu_S}{\sqrt{1 + \pi \sigma_S^2 / 8}} \right). \quad (3)$$

This means that for Gaussian dropout $g_i \sim N(\mu, \sigma^2)$, we have the recursion $E[S_i^h] = \sum_l \ldots$
