Understand Dynamic Regret with Switching Cost for Online Decision Making

As a metric to measure the performance of an online method, dynamic regret with switching cost has drawn much attention for online decision making problems. Although the sublinear regret has been provided in many previous researches, we still have li…

Authors: Yawei Zhao, Qian Zhao, Xingxing Zhang

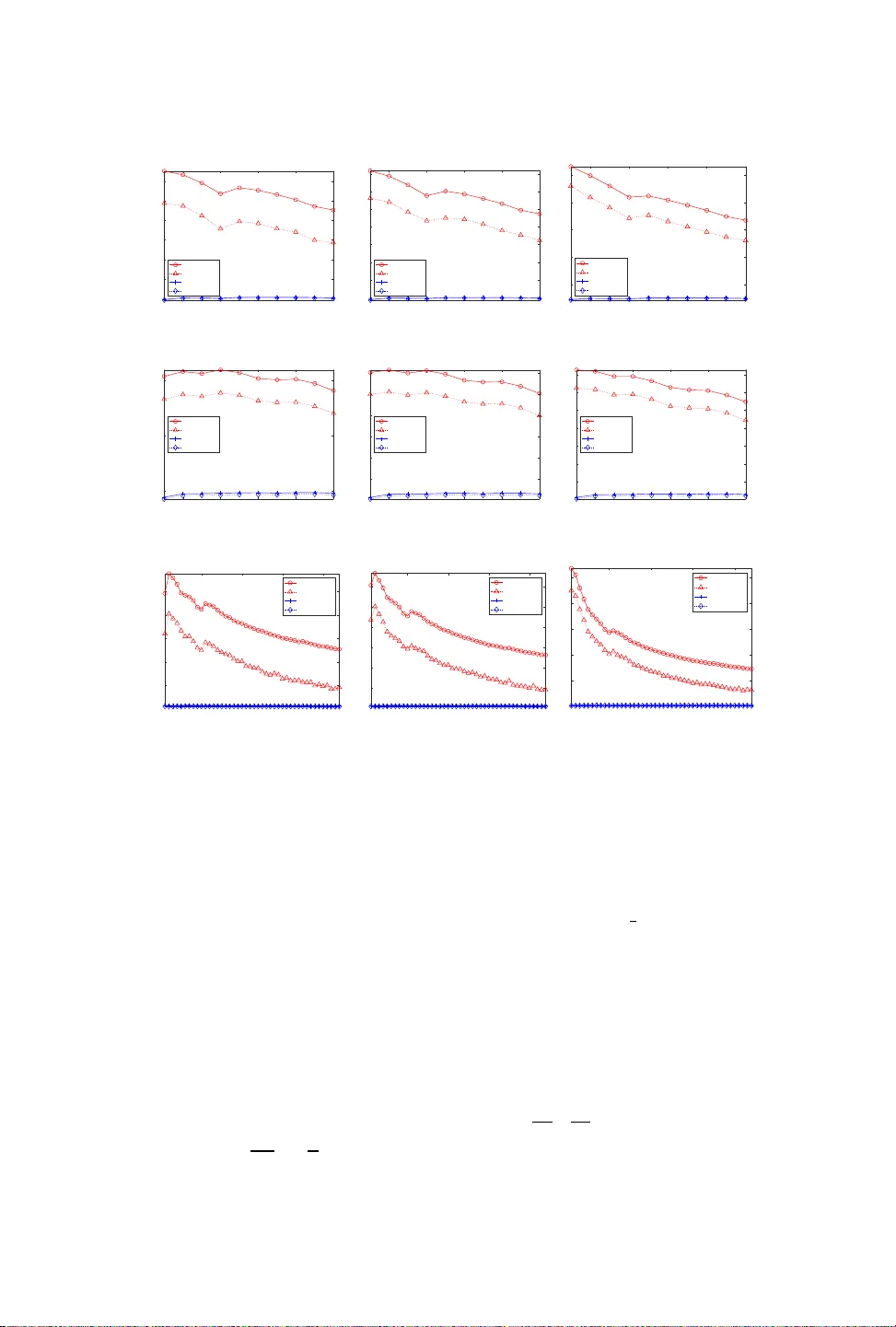

1 Understand Dynamic Regret with Switching Cost for Online Decision Making Y A WEI ZHA O, School of Computer , National University of Defense T e chnology QIAN ZHA O, College of Mathematics and System Science, Xinjiang University XINGXING ZHANG, Institute of Information Science, and Beijing Key Laboratory of Advanced Information Science and Network T echnology , Beijing Jiaotong University EN ZHU ∗ and XINW ANG LIU, School of Computer , National University of Defense T echnology JIANPING YIN, School of Computer , Dongguan University of T e chnology As a metric to measure the performance of an online method, dynamic regret with switching cost has drawn much aention for online decision making pr oblems. Although the sublinear regret has been provided in many pr evious r esearches, w e still have lile knowledge about the relation between the dynamic regret and the switching cost . In the pap er , we investigate the relation for two classic online seings: Online Algorithms (OA ) and Online Convex Optimization (OCO ). W e pro vide a new theoretical analysis frame work, which sho ws an interesting observation, that is, the relation between the switching cost and the dynamic regret is dierent for seings of O A and OCO. Specically , the switching cost has signicant impact on the dynamic r egret in the seing of OA. But, it does not have an impact on the dynamic regret in the seing of OCO . Furthermore, w e provide a lower bound of regr et for the seing of OCO , which is same with the lower b ound in the case of no switching cost. It shows that the switching cost does not change the diculty of online decision making problems in the seing of OCO . Additional K ey W ords and Phrases: Online decision making, dynamic regret, switching cost, online algorithms, online convex optimization, online mirror descent. A CM Reference format: Y awei Zhao, Qian Zhao, Xingxing Zhang, En Zhu, Xinwang Liu, and Jianping Yin. 2016. Understand Dynamic Regret with Switching Cost for Online Decision Making. 1, 1, Article 1 ( January 2016), 23 pages. DOI: 10.1145/nnnnnnn.nnnnnnn 1 INTRODUCTION Online Algorithms (O A) 1 [ 14 , 15 , 37 ] and Online Convex Optimization (OCO) [ 9 , 23 , 38 ] are two important seings of online decision making. Methods in both OA and OCO ∗ represents corresponding author . 1 Some literatures denote O A by ‘smoothed online convex optimization’ . Permission to make digital or hard copies of all or part of this work for personal or classroom use is granted without fee provided that copies are not made or distributed for prot or commercial advantage and that copies bear this notice and the full citation on the rst page. Copyrights for components of this work owned by others than ACM must be honored. Abstracting with credit is permied. T o copy other wise, or republish, to post on servers or to redistribute to lists, requires prior specic permission and /or a fee. Request permissions from permissions@acm.org. © 2016 ACM. XXXX-XXXX/2016/1- ART1 $ 15.00 DOI: 10.1145/nnnnnnn.nnnnnnn , V ol. 1, No. 1, Article 1. Publication date: Januar y 2016. seings are designed to make a decision at every round, and then use the decision as a response to the environment. eir major dierence is outlined as follows. • For e very round, methods in the seing of OA are able to know a loss function rst, and then play a decision as the response to the environment. • Howev er , for every r ound, methods in the seing of OCO have to play a decision b efore knowing the loss function. us, the environment may be adversarial to decisions of those methods. Both of them have a large number of practical scenarios. For example, both the k -server problem [ 4 , 26 ] and the Metrical T ask Systems (MTS) problem [ 1 , 4 , 10 ] are usually studied in the seing of O A. Other problems include online learning [ 29 , 39 , 42 , 43 ], online recommendation [ 41 ], online classication [ 6 , 18 ], online portfolio selection [28], and model predictive control [36] are usually studied in the seing of OCO . Many r ecent researches b egin to investigate performance of online metho ds in b oth O A and OCO seings by using dynamic regret with switching cost [ 15 , 30 ]. It measures the dierence between the cost yielded by real-time decisions and the cost yielded by the optimal decisions. Comparing with the classic static regret [ 9 ], it has two major dierences. • First, it allo ws optimal decisions to change within a threshold over time, which is necessary in the dynamic environment 2 . • Second, the cost yielded by a decision consists of two parts: the op erating cost and the switching cost , while the classic static regret only contains the operating cost. e switching cost measures the dierence between two successive decisions, which is needed in many practical scenarios such as service management in ele ctric power network [ 35 ], dynamic resource management in data centers [ 31 , 33 , 40 ]. However , we still have lile knowledge about the relation between the dynamic regret and the switching cost. In the paper , we ar e motivated by the following fundamental questions. • Does the switching cost impact the dynamic regret of an online method? • Does the problem of online decision making become more dicult due to the switching cost? T o answer those challenging questions, we investigate online mirror descent in seings of O A and OCO , and pro vide a ne w theoretical analysis framework. According to our analysis, we nd an interesting observation, that is, the switching cost does impact on the dynamic regret in the seing of OA. But, it has no impact on the dynamic regret in the seing of OCO . Specically , when the switching cost is measur ed by k x t + 1 − x t k σ with 1 ≤ σ ≤ 2, the dynamic regret for an OA method is O T 1 σ + 1 D σ σ + 1 where T is the maximal number of rounds, and D is the given budget of dynamics. But, the dynamic regret for an OCO method is O √ T D + √ T , which is same with the case of no switching cost [ 20 , 21 , 50 , 51 ]. Furthermore, we provide a lower bound of dynamic regret, namely Ω √ T D + √ T for the OCO seing. Since the lower bound is still same with the case of no switching cost [ 50 ], it implies that the switching cost does not change the diculty of the online decision making problem for the OCO 2 Generally , the dynamic environment means the distribution of the data stream may change over time. 2 seing. Comparing with previous results, our new analysis is more general than previous results. W e dene a new dynamic regret with a generalized switching cost, and provide new regret b ounds. It is novel to analyze and provide the tight regr et bound in the dynamic environment, since previous analysis cannot work directly for the generalized dynamic regert. In a nutshell, our main contributions are summarized as follows. • W e propose a new general formulation of the dynamic regret with switching cost, and then develop a new analysis frame work based on it. • W e pro vide O T 1 σ + 1 D σ σ + 1 regret with 1 ≤ σ ≤ 2 for the seing of O A and O √ T D + √ T regret for the seing of OCO by using the online mirror descent. • W e provide a lower b ound Ω √ T D + √ T regret for the seing of OCO, which matches with the upper bound. e paper is organized as follows. Se ction 2 revie ws related literatures. Section 3 presents the preliminaries. Section 4 presents our new formulation of the dynamic regret with switching cost. Se ction 5 presents a new analysis framework and main results. Section 6 presents extensive empirical studies. Se ction 7 conludes the paper , and presents the future work. 2 RELA TED WORK In the section, we review r elated literatures briey . 2.1 Competitive ratio and regret Although the competitive ratio is usually used to analyze OA metho ds, and the regret is used to analyze OCO methods, recent researches aim to developing unied frameworks to analyze the performance of an online method in both seings [ 1 – 3 , 8 , 11 – 13 ]. [ 8 ] provides an analysis framework, which is able to achie ve sublinear regret for O A metho ds and constant competitive ratio for OCO methods. [ 1 , 11 , 12 ] uses a general OCO method, namely online mirror descent in the OA seing, and improv es the existing comp etitive ratio analysis for k -server and MTS problems. Dierent from them, we extend the existing regret analysis framework to handle a general switching cost, and focus on investigating the relation between regret and switching cost. [ 3 ] provides a lower bound for the OCO problem in the competitive ratio analysis framework, but we pro vide the lower bound in the regret analysis framework. [ 2 , 13 ] study the regret with switching cost in the OA seing, but the relation between them is not studied. Comparing with [ 2 , 13 ], we extend their analysis, and pr esent a more generalized bound of dynamic regret (see eorem 1). 2.2 Dynamic regret and switching cost Regret is widely used as a metric to measure the p erformance of OCO metho ds. When the environment is static, e.g., the distribution of data stream does not change over time, online mirror descent yields O √ T regret for convex functions and O ( log T ) regret for strongly convex functions [ 9 , 23 , 38 ]. When the distribution of data str eam changes 3 over time, online mirror descent yields O √ T D + √ T regret for convex functions [ 20 ], where D is the given budget of dynamics. Additionally , [ 51 ] rst investigates online gradient descent in the dynamic environment, and obtains O √ T D + √ T regret (by seing η ∝ q D T ) for convex f t . Note that the dynamic regret used in [ 51 ] does not contain swtiching cost. [ 21 , 22 ] use similar but more general denitions of dynamic regret, and still achie ves O √ T D + √ T regret. Furthermore, [ 50 ] presents that the lower bound of the dynamic r egret is Ω √ T D + √ T . Many other previous researches investigate the regret under dierent denitions of dynamics such as parameter variation [ 19 , 34 , 44 , 47 ], functional variation [ 7 , 25 , 46 ], gradient variation [ 17 ], and the mixe d regularity [ 16 , 24 ]. Note that the dynamic regret in those pr evious studies does not contain switching cost, which is signicantly dierent from our work. Our new analysis shows that this bound is achieved and optimal when there is switching cost in the regret ( see eorems 2 and 3). e proposed analysis framework thus shows how the switching cost impacts the dynamic regret for seings of O A and OCO , which leads to new insights to understand online decision making problems. 3 PRELIMINARIES Algo. Make decision rst? Obser ve f t rst? Metric Has SC? O A no yes competitive ratio yes OCO yes no regret no T able 1. Summary of dierence between OA and OCO . ‘SC’ repr esents ‘switching cost’. In the section, we present the preliminaries of online algorithms and online convex optimization, and highlight their dierence . en, we pr esent the dynamic regret with switching cost, which is used to measure the performance of both O A methods and OCO methods. 3.1 Online algorithms and online convex optimization Comparing with the seing of OCO [ 9 , 23 , 38 ], O A has the following major dier ence. • O A assumes that the loss function, e.g., f t , is known before making the decision at ev ery round. But, OCO assumes that the loss function, e .g., f t , is giv en aer making the decision at every round. • e performance of an OA method is measured by using the competitive ratio [15], which is dened by Í T t = 1 ( f t ( x t ) + k x t − x t − 1 k ) Í T t = 1 f t ( x ∗ t ) + x ∗ t − x ∗ t − 1 . Here, { x ∗ t } T t = 1 is denoted by { x ∗ t } T t = 1 = argmin { z t } T t = 1 ∈ ˜ L T D T Õ t = 1 ( f t ( z t ) + k z t − z t − 1 k ) 4 where ˜ L T D : = { z t } T t = 1 : Í T t = 1 k z t − z t − 1 k ≤ D . D is the given budget of dynamics. It is the best oine strategy , which is yielded by knowing all the requests beforehand [ 15 ]. Note that x ∗ t − x ∗ t − 1 is the switching cost yielded by A at the t -th round. But, OCO is usually measured by the regret , which is dened by T Õ t = 1 f t ( x t ) − min { z t } T t = 1 ∈ L T D T Õ t = 1 f t ( z t ) , where L T D : = { z t } T t = 1 : Í T − 1 t = 1 k z t + 1 − z t k ≤ D . D is also the given budget of dynamics. Note that the regret in classic OCO algorithm does not contain the switching cost. T o make it clear , we use T able 1 to highlight their dierences. 3.2 Dynamic regret with switching cost Although the analysis framew ork of O A and OCO is dierent, the dynamic regr et with switching cost is a popular metric to measure the performance of both O A and OCO [ 15 , 30 ]. Formally , for an algorithm A , its dynamic regr et with switching cost e R A D is dened by e R A D : = T Õ t = 1 f t ( x t ) + T − 1 Õ t = 1 k x t + 1 − x t k − min { z t } T t = 1 ∈ L T D T Õ t = 1 f t ( z t ) + T − 1 Õ t = 1 k z t + 1 − z t k ! , (1) where L T D : = { z t } T t = 1 : Í T − 1 t = 1 k z t + 1 − z t k ≤ D . Here, k x t + 1 − x t k represents the switching cost at the t -th round. D is the given budget of dynamics in the dynamic environment. When D = 0, all optimal decisions ar e same. With the increase of D , the optimal decisions are allowed to change to follo w the dynamics in the environment. It is necessary when the distribution of data stream changes over time. 3.3 Notations and Assumptions. W e use the following notations in the paper . • e bold lower-case leers, e.g., x , represent vectors. e normal leers, e.g., µ , represent a scalar number . • k · k represents a general norm of a vector . • X T represents Cartesian product, namely , X × X × . . . × X | {z } T times . F T has the similar meaning. • Bregman divergence B Φ ( x , y ) is dened by B Φ ( x , y ) = Φ ( x )− Φ ( y )− h ∇ Φ ( y ) , x − y i . • A represents a set of all possible online methods, and A ∈ A represents some a specic online method. • . represents ‘less than equal up to a constant factor’ . • E represents the mathematical expectation operator . Our assumptions are presented as follows. ey are widely used in previous litera- tures [9, 15, 23, 30, 38]. Assumption 1. e following basic assumptions are used throughout the paper . • For any t ∈ [ T ] , we assume that f t is convex, and has L -Lipschitz gradient. 5 • e function Φ is µ -strongly convex, that is, for any x ∈ X and y ∈ X , B Φ ( x , y ) ≥ µ 2 k x − y k 2 . • For any x ∈ X and y ∈ X , there exists a positive constant R such that max B Φ ( x , y ) , k x − y k 2 ≤ R 2 . • For any x ∈ X , there exists a positive constant G such that max k ∇ f t ( x ) k 2 , k ∇ Φ ( x ) k 2 ≤ G 2 4 D YNAMIC REGRET WITH GENERALIZED SWITCHING COST In the section, we propose a new formulation of dynamic regret, which contains a generalized switching cost. en, we highlight the nov elty of this formulation, and present the online mirror decent method for seing of O A and OCO. 4.1 Formulation For an algorithm A ∈ A , it yields a cost at the end of ev er y round, which consists of two parts: op erating cost and switching cost . At the t -th round, the operating cost is incurred by f t ( x t ) , and the switching cost is incurred by k x t + 1 − x t k σ with 1 ≤ σ ≤ 2. e optimal decisions are denoted by { y ∗ t } T t = 1 , which is denoted by { y ∗ t } T t = 1 = argmin { y t } T t = 1 ∈ L T D T Õ t = 1 f t ( y t ) + T − 1 Õ t = 1 k y t + 1 − y t k σ . Here, L T D is denoted by L T D = ( { y t } T t = 1 : T − 1 Õ t = 1 k y t + 1 − y t k ≤ D ) . D is a given budget of dynamics, which measures how much the optimal decision, i.e., y ∗ t can change o ver t . With the increase of D , those optimal decisions can change o ver time to follow the dynamics in the environment eectively . Denote an optimal method A ∗ , which yields the optimal sequence of decisions { y ∗ t } T t = 1 . Its total cost is denote d by cost ( A ∗ ) = T Õ t = 1 f t ( y ∗ t ) + T − 1 Õ t = 1 y ∗ t + 1 − y ∗ t σ . Similarly , the total cost of an algorithm A ∈ A is denoted by cost ( A ) = T Õ t = 1 f t ( x t ) + T − 1 Õ t = 1 k x t + 1 − x t k σ . Denition 1. For any algorithm A ∈ A , its dynamic regret R A D with switching cost is dened by R A D : = cost ( A ) − cost ( A ∗ ) . (2) Our new formulation of the dynamic regret R A D makes a balance between the operating cost and the switching cost, which is dierent from the previous denition of the dynamic regret in [20, 21, 51]. 6 Note that the freedom of σ with 1 ≤ σ ≤ 2 allows our new dynamic r egret R A D to measure the performance of online methods for a large number of pr oblems. Some problems such as dynamic control of data centers [ 32 ], stock portfolio management [ 27 ], require to b e sensitive to the small change b etween successive decisions, and the switching cost in these problems is usually bounded by k x t + 1 − x t k . But, many problems such as dynamic placement of cloud service [ 49 ] need to bound the large change between successive decisions eectively , and the switching cost in these problems is usually bounded by k x t + 1 − x t k 2 . 4.2 Novelty of the new formulation Our new formulation of the dynamic regret is more general than previous formulations [15, 30], which are presented as follows. • Support mor e general switching cost. [ 15 ] denes the dynamic regret with switching cost by (1) . It is a special case of our new formulation (2) by seing σ = 1. e sequence of optimal decisions { y ∗ t } T t = 1 is dominated by { f t } T t = 1 and D , and does not change over { x t } T t = 1 . R A D is thus impacte d by { x t } T t = 1 for the given { f t } T t = 1 and D . Generally , k x t + 1 − x t k is more sensitive to measure the slight change between x t + 1 and x t than k x t + 1 − x t k 2 . But, for some problems such as the dynamic placement of cloud service [ 49 ], the switching cost at the t -th round is usually measured by k x t + 1 − x t k 2 , instead of k x t + 1 − x t k . e previous formulation in [ 15 ] is not suitable to bound the switching cost for those problems. Beneting from 1 ≤ σ ≤ 2, (2) supports more general switching cost than previous w ork. • Support more general convex f t . [ 30 ] denes the the dynamic regret with switching cost by T Õ t = 1 f t ( x t ) + T − 1 Õ t = 1 k x t + 1 − x t k 2 − min { z t } T t = 1 ∈ X T T Õ t = 1 f t ( z t ) + T − 1 Õ t = 1 k z t + 1 − z t k 2 ! , and they use Í T − 1 t = 1 x ∗ t + 1 − x ∗ t to bound the regret. Here, x ∗ t = argmin x ∈ X f t ( x ) . It implicitly assumes that the dierence between x ∗ t + 1 and x ∗ t are bounded. It is reasonable for a strongly convex function f t , but may not be guaranteed for a general convex function f t . A dditionally , [ 30 ] uses x ∗ t + 1 − x ∗ t 2 to bound the switching cost, which is more sensitive to the signicant change than x ∗ t + 1 − x ∗ t . But, it is less eective to bound the slight change b etween them, which is not suitable for many problems such as dynamic control of data centers [32]. 4.3 Algorithm W e use mirror descent [ 5 ] in the online seing, and present the algorithm MD-OA for the O A seing and the algorithm MD-OCO for the OCO seing, respectively . As illustrated in Algorithms 1 and 2, both MD-OA and MD-OCO are p erformed iteratively . For every round, MD-OA rst observes the loss function f t , and then makes the de cision x t at the t -th round. But, MD-OCO rst makes the decision x t , and then observe the loss function f t . erefore, MD-O A usually makes the decision 7 Algorithm 1 MD-OA: Online Mirror Descent for O A. Require: e learning rate γ , and the numb er of rounds T . 1: for t = 1 , 2 , . . ., T do 2: Observe the loss function f t . Obser ve f t rst. 3: ery a gradient ˆ g t ∈ ∇ f t ( x t − 1 ) . 4: x t = argmin x ∈ X h ˆ g t , x − x t − 1 i + 1 γ B Φ ( x , x t − 1 ) . Play a decision aer knowing f t . 5: return x T Algorithm 2 MD-OCO: Online Mirror Descent for OCO. Require: e learning rate η , the number of rounds T , and x 0 . 1: for t = 0 , 1 , . . ., T − 1 do 2: Play x t . P lay a de cision rst before knowing f t . 3: Receive a loss function f t . 4: ery a gradient ¯ g t ∈ ∇ f t ( x t ) . 5: x t + 1 = argmin x ∈ X h ¯ g t , x − x t i + 1 η B Φ ( x , x t ) . 6: return x T based on the observed f t for the curr ent round, but MD-OCO has to predict a decision for the next round based on the received f t . Note that both MD-OA and MD-OCO requires to solve a convex optimizaiton problem to update x . e complexity is dominated by the domain X and the distance function Φ . Besides, b oth of them lead to O ( d ) memory cost. ey lead to comparable cost of computation and memory . 5 THEORETICAL ANAL YSIS In this section, we present our main analysis results about the proposed dynamic regret for both MD-O A and MD-OCO , and discuss the dierence between them. 5.1 New bounds for dynamic regret with switching cost e upper bound of dynamic regret for MD-O A is presented as follows. eorem 1. Choose γ = min n µ L , T − 1 1 + σ D 1 1 + σ o in Algorithm 1. Under Assumption 1, we have sup { f t } T t = 1 ∈ F T R MD-OA D . T 1 σ + 1 D σ σ + 1 + T 1 σ + 1 D − 1 σ + 1 . at is, Algorithm 1 yields O T 1 σ + 1 D σ σ + 1 dynamic regret with switching cost. Remark 1. When σ = 1 , MD-OA yields O √ T D dynamic regret, which achieves the state-of-the-art result in [ 15 ]. When σ = 2 , MD-O A yields O T 1 3 D 2 3 dynamic regr et, which is a new result as far as we know . 8 Howev er , we nd dierent result for MD-OCO . e switching cost does not have an impact on the dynamic regret. eorem 2. Choose η = min µ 4 , q D + G T in Algorithm 2. Under Assumption 1, we have sup { f t } T t = 1 ∈ F T R MD-OCO D . √ T D + √ T . at is, Algorithm 2 yields O √ DT + √ T dynamic regret with switching cost. Remark 2. MD-OCO still yields O √ T D + √ T dynamic regret [ 20 ] when there is no switching cost. It shows that the switching cost does not have an impact on the dynamic regret. Before presenting the discussion, w e show that MD-OCO is the optimum for dy- namic regret because the lower bound of the problem matches with the upper bound yielded by MD-OCO. eorem 3. Under Assumption 1, the lower bound of the dynamic regr et for the OCO problem is inf A ∈ A sup { f t } T t = 1 ∈ F T R A D = Ω √ T D + √ T . Remark 3. When there is no switching cost, the lower bound of dynamic regret for OCO is O √ T D + √ T [ 50 ]. e orem 3 achieves it for the case of switching cost. It implies that the switching cost does not let the online decision making in the OCO seing become more dicult. 5.2 Insights Switching cost has a signicant impact on the dynamic regret for the setting of OA. According to eorem 1, the switching cost has a signicant impact on the dynamic r egret of MD-OA. Given a constant D , a small σ leads to a strong dependence on T , and a large σ leads to a weak dependence on T . e reason is that a large σ leads to a large learning rate , which is more eective to follo w the dynamics in the environment than a small learning rate. Switching cost does not have an impact on the dynamic regret for the set- ting of OCO . According to eorem 2 and e orem 3, the dynamic regret yielde d by MD-OCO is tight, and MD-OCO is the optimum for the problem. Although the switching cost exists, the dynamic regret yielded by MD-OCO does not have any dierence. As we can see, there is a signicant dierence between the OA seing and the OCO seing. e reasons are presented as follows. • MD-O A makes decisions aer observing the loss function. It has known the potential operating cost and switching cost for any decision. us, it can make decisions to achie ve a good tradeo between the op erating cost and switching cost. 9 • MD-OCO make decisions before observing the loss function. It only knows the historical information and the potential switching cost, and does not know the potential op erating cost for any decision at the current round. In the worst case, if the environment provides an adversary loss function to maximize the operating cost based on the decision played by MD-OCO, MD-OCO has to lead to O √ T D + √ T regret ev en for the case of no switching cost [ 20 ]. Although the potential switching cost is known, MD-OCO cannot make a beer decision to reduce the regret due to unknown operating cost. 6 EMPIRICAL ST UDIES In this section, we evaluate the total r egret and the regret caused by switching cost for seings of both OA and OCO by running online mirr or decent. Our experiments show the importance of knowing loss function before making a decision. 6.1 Experimental seings W e conduct binar y classication by using the logistic regression model. Given an instance a ∈ R d and its label y ∈ { 1 , − 1 } , the loss function is f ( x ) = log 1 + exp − y a > x . In experiments, we let Φ ( x ) = 1 2 k x k 2 . W e test four methods, including MD-O A, i.e., Algorithm 1, and MD-OCO, i.e., Algorithm 2, online balanced descent [ 15 ] denoted by BD-OA in the experiment, and multiple online gradient descent [ 48 ] denoted by MGD-OCO in the experiment. Both MD-O A and BD-OA are two variants of online algorithm, and similarily b oth MD-OCO and MGD-OCO ar e two variants of online convex optimization. W e test those methods on three real datasets: usenet1 3 , usenet2 4 , and spam 5 . e distributions of data streams change over time for those datasets, which is just the dynamic environment as we have discussed. More details about those datasets and its dynamics are presented at: hp://mlkd.csd.auth.gr/concept dri.html. W e use the average loss to test the regr et, because they have the same optimal reference points { y ∗ t } t l = 1 . For the t -th round, the average loss is dened by 1 t t Õ l = 1 log 1 + exp − y l A > l x l | {z } average loss caused by operating cost + 1 t t − 1 Õ l = 0 k x l + 1 − x l k | {z } average loss caused by switching cost , where A l is the instance at the l -th round, and y l is its label. Besides, we evaluate the average loss caused by operating cost separately , and denote it by OL. Similarly , SL represents the average loss caused by switching cost. In experiment, we set D = 10. Since G , µ , and L are usually not known in practical scenarios, the learning rate is set by the following heuristic rules. W e choose the learning rate γ t = η t = δ √ t for the t -th iteration, where δ is a given constants by the 3 hp://lpis.csd.auth.gr/mlkd/usenet1.rar 4 hp://lpis.csd.auth.gr/mlkd/usenet2.rar 5 hp://lpis.csd.auth.gr/mlkd/concept dri/spam data.rar 10 200 400 600 800 1000 Number of rounds 0.5 1 1.5 2 2.5 3 3.5 Average loss MD-OCO MGD-OCO MD-OA BD-OA (a) usenet1 , total loss, σ = 1 200 400 600 800 1000 Number of rounds 0.5 1 1.5 2 2.5 3 3.5 4 Average loss MD-OCO MGD-OCO MD-OA BD-OA (b) usenet1 , total loss, σ = 1 . 5 200 400 600 800 1000 Number of rounds 1 2 3 4 5 Average loss MD-OCO MGD-OCO MD-OA BD-OA (c) usenet1 , total loss, σ = 2 200 400 600 800 1000 Number of rounds 0.5 1 1.5 Average loss MD-OCO MGD-OCO MD-OA BD-OA (d) usenet2 , total loss, σ = 1 200 400 600 800 1000 Number of rounds 0.6 0.8 1 1.2 1.4 1.6 Average loss MD-OCO MGD-OCO MD-OA BD-OA (e) usenet2 , total loss, σ = 1 . 5 200 400 600 800 1000 Number of rounds 0.6 0.8 1 1.2 1.4 1.6 1.8 Average loss MD-OCO MGD-OCO MD-OA BD-OA (f ) usenet2 , total loss, σ = 2 1000 2000 3000 4000 Number of rounds 0.5 1 1.5 2 2.5 Average loss MD-OCO MGD-OCO MD-OA BD-OA (g) spam , total loss, σ = 1 1000 2000 3000 4000 Number of rounds 0.5 1 1.5 2 2.5 3 Average loss MD-OCO MGD-OCO MD-OA BD-OA (h) spam , total loss, σ = 1 . 5 1000 2000 3000 4000 Number of rounds 1 2 3 4 5 Average loss MD-OCO MGD-OCO MD-OA BD-OA (i) spam , total loss, σ = 2 Fig. 1. OCO methods leads to large average loss than O A methods. following rules. First, we set a large value δ = 10. en, we iterativ ely adjust the value of δ by δ ← δ / 2 when δ cannot let the av erage loss converge. If the rst appropriate δ can let the average loss converge, it is nally chosen as the optimal learning rate. W e use the similar heuristic method to determine other parameters, e.g., the number of inner iterations in MGD-OCO . Finally , the mirror map function is 1 2 k · k 2 for BD-O A. 6.2 Numerical results As shown in Figure 1, both MD-OA and BD-O A are much more ecetive than MD- OCO and MGD-OCO to decrease the av erage loss during a few r ounds of begining. ose O A methods yield much smaller average loss than OCO methods. e reason is that O A knows the loss function f t before making decision x t . But, OCO has to make decision before know the loss function. Beneting from knowing the loss function f t , OA reduces the average loss more efectively than OCO . It matches with our theoretical analysis. at is, Algorithm 1 leads to O T 1 1 + σ D σ 1 + σ regret, but Algorithm 2 leads to O √ T D + √ T regret. When σ ≥ 1, O A tends to lead to smaller regret 11 200 400 600 800 1000 Number of rounds 0.5 1 1.5 2 2.5 3 Average loss MD-OA(OL) MD-OA(SL) MD-OCO(OL) MD-OCO(SL) (a) usenet1 , separated loss, σ = 1 200 400 600 800 1000 Number of rounds 0.5 1 1.5 2 2.5 3 Average loss MD-OA(OL) MD-OA(SL) MD-OCO(OL) MD-OCO(SL) (b) usenet1 , separated loss, σ = 1 . 5 200 400 600 800 1000 Number of rounds 0.5 1 1.5 2 2.5 3 Average loss MD-OA(OL) MD-OA(SL) MD-OCO(OL) MD-OCO(SL) (c) usenet1 , separated loss, σ = 2 200 400 600 800 1000 Number of rounds 0.2 0.4 0.6 0.8 1 1.2 Average loss MD-OA(OL) MD-OA(SL) MD-OCO(OL) MD-OCO(SL) (d) usenet2 , separated loss, σ = 1 200 400 600 800 1000 Number of rounds 0.2 0.4 0.6 0.8 1 1.2 Average loss MD-OA(OL) MD-OA(SL) MD-OCO(OL) MD-OCO(SL) (e) usenet2 , separated loss, σ = 1 . 5 200 400 600 800 1000 Number of rounds 0.2 0.4 0.6 0.8 1 1.2 Average loss MD-OA(OL) MD-OA(SL) MD-OCO(OL) MD-OCO(SL) (f ) usenet2 , separated loss, σ = 2 1000 2000 3000 4000 Number of rounds 0.5 1 1.5 2 2.5 Average loss MD-OA(OL) MD-OA(SL) MD-OCO(OL) MD-OCO(SL) (g) spam , separated loss, σ = 1 1000 2000 3000 4000 Number of rounds 0.5 1 1.5 2 2.5 Average loss MD-OA(OL) MD-OA(SL) MD-OCO(OL) MD-OCO(SL) (h) spam , separated loss, σ = 1 . 5 1000 2000 3000 4000 Number of rounds 0.5 1 1.5 2 2.5 3 Average loss MD-OA(OL) MD-OA(SL) MD-OCO(OL) MD-OCO(SL) (i) spam , separated loss, σ = 2 Fig. 2. Comparing with MD-OCO , The superiority of MD-OA becomes significant for a large σ . Difference of switching cost Fig. 3. MD-OCO leads to mor e average loss caused by switching cost than MD-OA, esp ecially for a large σ . than OCO . e reason is that O A knows the potential loss before playing a decision for every round. But, OCO works in an adversary environment, and it has to play a decision before knowing the potential loss. us, O A is able to play a beer decision 12 than OCO to decrease the loss. Additionally , we observe that both MD-O A and BD-OA reduce much more average loss than MD-OCO and MGD-OCO for a large σ , which validates our theoretical results nicely . It means that O A is more ee ctive to reduce the switching cost than OCO for a large σ . Sp ecically , as shown in Figure 2, the average loss caused by switching cost of O A methods, i.e., MD-O A(SL), has unsignicant changes, but that of OCO methods, i.e., MD-OCO(SL), has remarkable incr ease for a large σ . When handling the whole dataset, the nal dier ence of switching cost between MD-O A and MD-OCO is shown in Figure 3. Here, the dierence of switching cost is measured by using average loss caused by switching cost of MD-OCO minus corre- sponding average loss caused by switching cost of MD-OA. As we can see, it highlights that OA is more eective to de crease the switching cost. e sup eriority becomes signicant for a large σ , which veries our theoretical results nicely again. 7 CONCLUSION AND F U T URE W ORK W e have proposed a new dynamic regret with switching cost and a new analysis framework for both online algorithms and online convex optimization. W e nd that the switching cost signicantly impacts on the regret yielde d by OA methods, but does not have an impact on the regret yielded by OCO methods. Empirical studies have validated our theoretical result. Moreover , the switching cost in the paper is measured by using the norm of the dierence between two successive decisions, that is, k x t + 1 − x t k . It is interest- ing to investigate whether the work can b e extended to a more general distance measure function such as Bregman divergence d B ( x t + 1 , x t ) or Mahalanobis distance d M ( x t + 1 , x t ) . Specically , if the Bregman divergence 6 is used, the switching cost is thus d B ( x t + 1 , x t ) = ψ ( x t + 1 ) − ψ ( x t ) − h ∇ ψ ( x t ) , x t + 1 − x t i , wher e ψ (·) is a dier entiable distance function. If the Mahalanobis distance 7 is used, the switching cost is thus d M ( x t + 1 , x t ) = p ( x t + 1 − x t ) > S ( x t + 1 − x t ) , where S is the giv en covariance matrix. W e leave the potential extension as the future work. Besides, our analysis provides regret bound for any given budget of dynamics D . It is a good direction to extend the work in the parameter-free seing, where analysis is adaptive to the dynamics D of environment. Some previous w ork such as [ 45 ] have proposed the adaptive online method and analysis framework. But, [ 45 ] works in the expert seing, not a general seing of online convex optimization. It is still unknown whether their method can be used to extend our analysis. 8 A CKNO WLEDGMENTS is work was supp orted by the National Key R & D Program of China 2018YFB1003203 and the National Natural Science Foundation of China (Grant No. 61672528, 61773392, and 61671463). 6 See details in hps://en.wikipedia.org/wiki/Bregman divergence. 7 See details in hps://en.wikipedia.org/wiki/Mahalanobis distance. 13 REFERENCES [1] Jacob Abernethy , Peter L. Bartle, Niv Buchbinder , and Isab elle Stanton. 2010. A Regularization Approach to Metrical T ask Systems. In Proceedings of the 21st International Conference on Algorithmic Learning eory ( ALT) . Springer- V erlag, Berlin, Heidelberg, 270–284. [2] Lachlan Andrew , Siddharth Barman, Katrina Lige, Minghong Lin, Adam Meyerson, Alan Ro ytman, and Adam Wierman. 2013. A T ale of Two Metrics: Simultaneous Bounds on Competitiveness and Regret. In Proceedings of the ACM International Conference on Measurement and Modeling of Computer Systems . 329–330. [3] Antonios Antoniadis, Kevin Schewior , and Rudolf F leischer . 2018. A Tight Lower Bound for On- line Convex Optimization with Switching Costs. In A pproximation and Online Algorithms . Springer International Publishing, Cham, 164–175. [4] Nikhil Bansal, Niv Buchbinder , and Joseph Naor . 2010. Metrical T ask Systems and the K-server Problem on HST s. In Proceedings of the 37th International Colloquium Conference on Automata, Languages and Programming . [5] Amir Beck and Marc T eboulle. 2003. Mirror descent and nonlinear projected subgradient methods for convex optimization. Operations Research Leers 31, 3 (2003), 167 – 175. [6] Andrey Bernstein, Shie Mannor , and Nahum Shimkin. 2010. Online Classication with Specicity Constraints. In Proceedings of Advances in Neural Information Processing Systems (NIPS) , J. D . Laerty , C. K. I. Williams, J. Shawe- Taylor , R. S. Zemel, and A. Culoa (Eds.). 190–198. [7] Omar Besbes, Y onatan Gur, and A ssaf J Zeevi. 2015. Non-Stationary Stochastic Optimization. Opera- tions Research 63, 5 (2015), 1227–1244. [8] A vrim Blum and Carl Burch. 2000. On-line Learning and the Metrical T ask System Problem. Machine Learning 39, 1 (Apr 2000), 35–58. [9] Sbastien Bubeck. 2011. Introduction to Online Optimization. [10] S ´ ebastien Bubeck, Michael B Cohen, James R Lee, and Yin T at Lee. 2019. Metrical task systems on trees via mirror descent and unfair gluing. In Proceedings of the ACM-SIAM Symposium on Discrete Algorithms (SODA ) . [11] S ´ ebastien Bubeck, Michael B. Cohen, Yin T at Lee, James R. Le e, and Aleksander M k adry . 2018. K-server via Multiscale Entropic Regularization. In Proceedings of the 50th A nnual ACM Symp osium on eor y of Computing (STOC) . ACM, New Y ork, NY , USA, 3–16. [12] Niv Buchbinder , Shahar Chen, Joshep (Se) Naor , and Ohad Shamir . 2012. Unie d Algorithms for Online Learning and Competitive Analysis. In Proceedings of the 25th Annual Conference on Learning eory (COLT) , Shie Mannor , Nathan Srebro, and Rob ert C. Williamson (Eds.), V ol. 23. Edinburgh, Scotland, 5.1–5.18. [13] Niangjun Chen, Anish Agarwal, Adam Wierman, Siddharth Barman, and Lachlan L.H. Andrew . 2015. Online Convex Optimization Using Predictions. In Proceedings of the ACM International Conference on Measurement and Modeling of Computer Systems . 191–204. [14] Niangjun Chen, Joshua Comden, Zhenhua Liu, Anshul Gandhi, and Adam Wierman. 2016. Using Predictions in Online Optimization: Looking Forward with an Eye on the Past. In Proceedings of the 2016 ACM SIGMETRICS International Conference on Measurement and Mo deling of Computer Science . 193–206. [15] Niangjun Chen, Gautam Goel, and Adam Wierman. 2018. Smoothed Online Convex Optimization in High Dimensions via Online Balanced Descent. In Proceedings of the 31st Conference On Learning eory (COLT) , V ol. 75. 1574–1594. [16] Tianyi Chen, Qing Ling, and Georgios B. Giannakis. 2017. An Online Convex Optimization Approach to Proactive Network Resource Allocation. IEEE Transactions on Signal Processing 65 (2017), 6350–6364. [17] Chao Kai Chiang, Tianbao Y ang, Chia Jung Lee, Mehrdad Mahdavi, Chi Jen Lu, Rong Jin, and Shenghuo Zhu. 2012. Online Optimization with Gradual V ariations. Journal of Machine Learning Research 23 (2012). [18] Koby Crammer , Jaz Kandola, and Y oram Singer . 2004. Online Classication on a Budget. In Pr ocee dings of Advances in Neural Information Processing Systems (NIPS) . 225–232. [19] Xiand Gao , Xiaobo Li, and Shuzhong Zhang. 2018. Online Learning with Non-Conv ex Losses and Non- Stationary Regret. In Proceedings of the T wenty-First International Conference on Articial Intelligence and Statistics (AIST A TS) , Amos Storkey and Fernando Perez-Cruz (Eds.), V ol. 84. 235–243. 14 [20] Andr ´ as Gy ¨ orgy and Csaba Szepesv ´ ari. 2016. Shiing Regret, Mirror Descent, and Matrices. In Proceedings of the 33rd International Conference on Machine Learning (ICML) . JMLR.org, 2943–2951. [21] Eric C Hall and Rebecca Wille. 2013. Dynamical Models and tracking regret in online convex programming.. In Proceedings of International Conference on International Conference on Machine Learning (ICML) . [22] Eric C Hall and Reb ecca M Wille. 2015. Online Conve x Optimization in Dynamic Environments. IEEE Journal of Sele cte d T opics in Signal Processing 9, 4 (2015), 647–662. [23] Elad Hazan. 2016. Introduction to Online Convex Optimization. Foundations and Tr ends in Optimization 2, 3-4 (2016), 157–325. [24] Ali Jadbabaie, Alexander Rakhlin, Shahin Shahrampour , and Karthik Sridharan. 2015. Online Op- timization : Competing with D ynamic Comparators. In Procee dings of International Conference on A rticial Intelligence and Statistics (AIST A TS) . 398–406. [25] Rodolphe Jenaon, Jim Huang, and Ce dric Archambeau. 2016. Adaptive Algorithms for Online Convex Optimization with Long-term Constraints. In Proceedings of e 33rd International Conference on Machine Learning (ICML) , V ol. 48. 402–411. [26] James R Lee. 2018. Fusible HST s and the randomized k-server conjecture.. In Proceedings of the IEEE 59th A nnual Symposium on Foundations of Computer Science . [27] Bin Li and Steven C. H. Hoi. 2014. Online Portfolio Selection: A Survey . Comput. Surveys 46, 3 (2014), 35:1–35:36. [28] Bin Li, Steven C. H. Hoi, Peilin Zhao, and Vivekanand Gopalkrishnan. 2013. Condence W eighted Mean Reversion Strategy for Online Portfolio Selection. ACM Transactions on Knowledge Discovery from Data (TKDD) 7, 1 (March 2013), 4:1–4:38. [29] C. Li, P. Zhou, L. Xiong, Q . W ang, and T . W ang. 2018. Dierentially Private Distributed Online Learning. IEEE T ransactions on Knowledge and Data Engineering (TKDE) 30, 8 (A ug 2018), 1440–1453. [30] Yingying Li, Guannan , and Na Li. 2018. Online Optimization with Predictions and Switching Costs: Fast Algorithms and the Fundamental Limit. arXiv .org (Jan. 2018). arXiv:math.OC/1801.07780v3 [31] M. Lin, A. Wierman, L. L. H. Andrew , and E. ereska. 2011. Dynamic right-sizing for power- proportional data centers. In Proceedings of IEEE International Conference on Computer Communications (INFOCOMM) . 1098–1106. [32] Minghong Lin, Adam Wierman, Alan Roytman, Adam Meyerson, and Lachlan L.H. Andrew . 2012. Online Optimization with Switching Cost. SIGMETRICS Performance Evaluation Review 40, 3 (2012), 98–100. [33] T . Lu, M. Chen, and L. L. H. Andrew . 2013. Simple and Eective Dynamic Provisioning for Pow er- Proportional Data Centers. IEEE Transactions on Parallel and Distributed Systems (TPDS) 24, 6 (June 2013), 1161–1171. [34] Aryan Mokhtari, Shahin Shahrampour , Ali Jadbabaie, and Alejandro Ribeiro. 2016. Online optimization in dynamic environments: Improved regret rates for strongly conv ex problems. In Proceedings of IEEE Conference on Decision and Contr ol (CDC) . IEEE, 7195–7201. [35] Reetabrata Mookherjee, Benjamin F. Hobbs, T erry Lee Friesz, and Mahew A. Rigdon. 2008. Dynamic oligopolistic competition on an electric power network with ramping costs and joint sales constraints. Journal of Industrial and Management Optimization 4, 3 (11 2008), 425–452. [36] Manfred Morari and Jay H. Lee. 1999. Model predictive control: past, present and future. Computers & Chemical Engineering 23, 4 (1999), 667 – 682. [37] Marc P . Renault and Adi Ros ´ en. 2012. On Online Algorithms with Advice for the k-Server Problem. In A pproximation and Online Algorithms , Roberto Solis-Oba and Giuseppe Persiano (Eds.). Springer Berlin Heidelberg, Berlin, Heidelberg, 198–210. [38] Shai Shalev-Shwartz. 2012. Online Learning and Online Convex Optimization. Foundations and Trends ® in Machine Learning 4, 2 (2012), 107–194. [39] Y . Sun, K. T ang, L. L. Minku, S. W ang, and X. Y ao. 2016. Online Ensemble Learning of Data Streams with Gradually Evolved Classes. IEEE Transactions on Knowledge and Data Engine ering (TKDE) 28, 6 (June 2016), 1532–1545. [40] Hao W ang, Jianwei Huang, Xiaojun Lin, and Hamed Mohsenian-Rad. 2014. Exploring Smart Grid and Data Center Interactions for Electric Power Load Balancing. SIGMETRICS Performance Evaluation Review 41, 3 (Jan. 2014), 89–94. 15 [41] Liang W ang, Kuang-chih Lee, and an Lu. 2016. Improving Advertisement Recommendation by Enriching User Browser Cookie Aributes. In Proceedings of the 25th ACM International on Conference on Information and Knowledge Management (CIKM) . 2401–2404. [42] M. W ang, C. Xu, X. Chen, H. Hao, L. Zhong, and S. Y u. 2019. Dierential Privacy Oriented Distributed Online Learning for Mobile Social Video Prefetching. IEEE T ransactions on Multimedia 21, 3 (March 2019), 636–651. [43] Haiqin Y ang, Michael R. Lyu, and Ir win King. 2013. Ecient Online Learning for Multitask Feature Selection. ACM Transactions on Knowledge Discovery from Data (TKDD) 7, 2 (A ug. 2013), 6:1–6:27. [44] Tianbao Y ang, Lijun Zhang, Rong Jin, and Jinfeng Yi. 2016. Tracking Slowly Moving Clairvoyant - Optimal Dynamic Regret of Online Learning with True and Noisy Gradient.. In Proceedings of the 34th International Conference on Machine Learning (ICML) . [45] Lijun Zhang, Shiyin Lu, and Zhi-Hua Zhou. 2018. Adaptive Online Learning in Dynamic Environ- ments. In Advances in Neural Information Processing Systems 31 , S. Bengio, H. W allach, H. Larochelle, K. Grauman, N. Cesa-Bianchi, and R. Garne (Eds.). 1323–1333. [46] Lijun Zhang, Tianbao Y ang, rong jin, and Zhi-Hua Zhou. 2018. Dynamic Regret of Strongly Adaptive Methods. In Proceedings of the 35th International Conference on Machine Learning (ICML) . 5882–5891. [47] Lijun Zhang, Tianbao Y ang, Jinfeng Yi, Rong Jin, and Zhi-Hua Zhou. 2017. Improved Dynamic Regret for Non-degenerate Functions. In Proceedings of Neural Information Processing Systems (NIPS) . [48] Lijun Zhang, Tianbao Y angt, Jinfeng Yi, Rong Jin, and Zhi-Hua Zhou. 2017. Improved Dynamic Regret for Non-degenerate Functions. In Proceedings of the 31st International Conference on Neural Information Processing Systems . 732–741. [49] Q. Zhang, Q . Zhu, M. F . Zhani, and R. Boutaba. 2012. Dynamic Service Placement in Ge ographically Distributed Clouds. In Procee dings of the IEEE 32nd International Conference on Distributed Computing Systems (ICDCS) . 526–535. [50] Y awei Zhao, Shuang Qiu, and Ji Liu. 2018. Proximal Online Gradient is Optimum for Dynamic Regret. CoRR cs.LG (2018). [51] Martin Zinkevich. 2003. Online Convex Programming and Generalized Innitesimal Gradient Ascent. In Proceedings of International Conference on Machine Learning (ICML) . 928–935. PROOFS Lemma 1. Given any vectors g , u t ∈ X , u ∗ ∈ X , and a constant scalar λ > 0 , if u t + 1 = argmin u ∈ X h g , u − u t i + 1 λ B Φ ( u , u t ) , we have h g , u t + 1 − u ∗ i ≤ 1 λ ( B Φ ( u ∗ , u t ) − B Φ ( u ∗ , u t + 1 ) − B Φ ( u t + 1 , u t ) ) . Proof. Denote h ( u ) = h g , u − u t i + 1 λ B Φ ( u , u t ) , and u τ = u t + 1 + τ ( u ∗ − u t + 1 ) . According to the optimality of x t , we have 0 ≤ h ( u τ ) − h ( u t + 1 ) = h g , u τ − u t + 1 i + 1 λ ( B Φ ( u τ , u t ) − B Φ ( u t + 1 , u t ) ) = h g , τ ( u ∗ − u t + 1 ) i + 1 λ ( Φ ( u τ ) − Φ ( u t + 1 ) + h ∇ Φ ( u t ) , τ ( u t + 1 − u ∗ ) i ) ≤ h g , τ ( u ∗ − u t + 1 ) i + 1 λ h ∇ Φ ( u t + 1 ) , τ ( u ∗ − u t + 1 ) i + 1 λ h ∇ Φ ( u t ) , τ ( u t + 1 − u ∗ ) i = h g , τ ( u ∗ − u t + 1 ) i + 1 λ h ∇ Φ ( u t ) − Φ ( u t + 1 ) , τ ( u t + 1 − u ∗ ) i . 16 us, we have h g , u t + 1 − u ∗ i ≤ 1 λ h ∇ Φ ( u t ) − Φ ( u t + 1 ) , u t + 1 − u ∗ i = 1 λ ( B Φ ( u ∗ , u t ) − B Φ ( u ∗ , u t + 1 ) − B Φ ( u t + 1 , u t ) ) . It completes the proof. Lemma 2. For any x ∈ X , we have B Φ ( y ∗ t + 1 , x ) − B Φ ( y ∗ t , x ) ≤ 2 G y ∗ t + 1 − y ∗ t . (1) Proof. According to the third-point identity of the Bregman div ergence, we have B Φ ( y ∗ t + 1 , x ) − B Φ ( y ∗ t , x ) = ∇ Φ ( y ∗ t + 1 ) − ∇ Φ ( x ) , y ∗ t + 1 − y ∗ t − B Φ ( y ∗ t , y ∗ t + 1 ) 1 ≤ ∇ Φ ( y ∗ t + 1 ) − ∇ Φ ( x ) , y ∗ t + 1 − y ∗ t ≤ ∇ Φ ( y ∗ t + 1 ) − ∇ Φ ( x ) y ∗ t + 1 − y ∗ t ≤ ∇ Φ ( y ∗ t + 1 ) + k ∇ Φ ( x ) k y ∗ t + 1 − y ∗ t ≤ 2 G y ∗ t + 1 − y ∗ t . (2) 1 holds because B Φ ( u , v ) ≥ 0 holds for any vectors u and v . It completes the proof. Lemma 3. Given x t − 1 ∈ X and ˆ g t , if x t = argmin x ∈ X h ˆ g t , x − x t − 1 i + 1 γ B Φ ( x , x t − 1 ) , we have k x t − x t − 1 k ≤ 2 G γ µ . Proof. h ˆ g t , x t − x t − 1 i + µ 2 γ k x t − x t − 1 k 2 1 ≤ h ˆ g t , x t − x t − 1 i + 1 γ B Φ ( x t , x t − 1 ) 2 ≤ 0 . 1 holds due to Φ is µ -strongly conve x, and 2 holds due to the optimality of x t . us, µ 2 γ k x t − x t − 1 k 2 ≤ h ˆ g t , − x t + x t − 1 i ≤ k ˆ g t k k − x t + x t − 1 k ≤ G k − x t + x t − 1 k . at is, k x t − x t − 1 k ≤ 2 G γ µ . It completes the proof. Proof to e orem 1: Proof. f t ( x t ) − f t ( y ∗ t ) = f t ( x t ) − f t ( x t − 1 ) + f t ( x t − 1 ) − f t ( y ∗ t ) ≤ f t ( x t ) − f t ( x t − 1 ) + ˆ g t , x t − 1 − y ∗ t = f t ( x t ) − f t ( x t − 1 ) − h ˆ g t , x t − x t − 1 i + ˆ g t , x t − y ∗ t 17 1 ≤ L 2 k x t − 1 − x t k 2 + ˆ g t , x t − y ∗ t 2 ≤ L 2 k x t − 1 − x t k 2 + 1 γ B Φ ( y ∗ t , x t − 1 ) − B Φ ( y ∗ t , x t ) − B Φ ( x t , x t − 1 ) 3 ≤ L γ − µ 2 γ k x t − 1 − x t k 2 + 1 γ B Φ ( y ∗ t , x t − 1 ) − B Φ ( y ∗ t , x t ) 4 ≤ 1 γ B Φ ( y ∗ t , x t − 1 ) − B Φ ( y ∗ t , x t ) . (3) 1 holds b ecause f t has L -Lipschitz gradient. 2 holds due to Lemma 1 by seing g = ˆ g t , u t = x t − 1 , u t + 1 = x t , u ∗ = y ∗ t , and λ = γ . 3 holds because that Φ is µ -strongly convex, that is, B Φ ( x t , x t − 1 ) ≥ µ 2 k x t − x t − 1 k 2 . 4 holds due to γ ≤ µ L . us, we have T Õ t = 1 f t ( x t ) − f t ( y ∗ t ) + k x t − x t − 1 k σ − T Õ t = 1 y ∗ t − y ∗ t − 1 σ ≤ T Õ t = 1 f t ( x t ) − f t ( y ∗ t ) + k x t − x t − 1 k σ 1 ≤ T Õ t = 1 k x t − x t − 1 k σ + 1 γ T Õ t = 1 B Φ ( y ∗ t , x t − 1 ) − B Φ ( y ∗ t , x t ) = T Õ t = 1 k x t − x t − 1 k σ + 1 γ B Φ ( y ∗ 1 , x 0 ) − B Φ ( y ∗ T , x T ) + 1 γ T − 1 Õ t = 1 B Φ ( y ∗ t + 1 , x t ) − B Φ ( y ∗ t , x t ) 2 ≤ T Õ t = 1 k x t − x t − 1 k σ + 2 G γ T − 1 Õ t = 1 y ∗ t + 1 − y ∗ t + 1 γ B Φ ( y ∗ 1 , x 0 ) − B Φ ( y ∗ T , x T ) ≤ T Õ t = 1 k x t − x t − 1 k σ + 2 G γ T − 1 Õ t = 1 y ∗ t + 1 − y ∗ t + 1 γ B Φ ( y ∗ 1 , x 0 ) ≤ T Õ t = 1 k x t − x t − 1 k σ + 2 G D γ + R 2 γ 3 ≤ 2 G µ σ γ σ T + 2 G D + R 2 γ . 1 holds due to (3). 2 holds due to B Φ ( y ∗ t + 1 , x t ) − B Φ ( y ∗ t , x t ) ≤ 2 G y ∗ t + 1 − y ∗ t according to Lemma 2. 3 holds due to Lemma 3. Choose γ = min n µ L , T − 1 1 + σ D 1 1 + σ o . W e have T Õ t = 1 f t ( x t ) − f t ( y ∗ t ) + k x t − x t − 1 k σ − T Õ t = 1 y ∗ t − y ∗ t − 1 σ ≤ 2 G µ σ T 1 σ + 1 D σ σ + 1 + max L ( 2 G D + R 2 ) µ , T 1 σ + 1 2 G D σ σ + 1 + R 2 D − 1 σ + 1 18 . T 1 σ + 1 D σ σ + 1 + T 1 σ + 1 D − 1 σ + 1 . Since it holds for any seqence { f t } T t = 1 ∈ F T , we nally obtain sup { f t } T t = 1 ∈ F T R MD-OA D . T 1 σ + 1 D σ σ + 1 + T 1 σ + 1 D − 1 σ + 1 . It completes the proof. Proof to e orem 2: Proof. f t ( x t ) − f t ( y ∗ t ) + k x t − x t + 1 k σ − y ∗ t − y ∗ t + 1 σ ≤ ¯ g t , x t − y ∗ t + k x t − x t + 1 k σ = h ¯ g t , x t − x t + 1 i + ¯ g t , x t + 1 − y ∗ t + k x t − x t + 1 k σ 1 ≤ h ¯ g t , x t − x t + 1 i + 1 η B Φ ( y ∗ t , x t ) − B Φ ( y ∗ t , x t + 1 ) − B Φ ( x t + 1 , x t ) + k x t − x t + 1 k σ 2 ≤ h ¯ g t , x t − x t + 1 i − µ 2 η k x t + 1 − x t k 2 + 1 η B Φ ( y ∗ t , x t ) − B Φ ( y ∗ t , x t + 1 ) + k x t − x t + 1 k σ 3 ≤ η µ k ¯ g t k 2 + − µ 4 η k x t + 1 − x t k 2 + k x t + 1 − x t k σ + 1 η B Φ ( y ∗ t , x t ) − B Φ ( y ∗ t , x t + 1 ) ≤ η G 2 µ + − σ 2 2 2 − σ 4 η µ σ 2 − σ + σ 2 σ 2 − σ 4 η µ σ 2 − σ ! + 1 η B Φ ( y ∗ t , x t ) − B Φ ( y ∗ t , x t + 1 ) ≤ η G 2 µ + σ 2 σ 2 − σ 4 η µ + 1 η B Φ ( y ∗ t , x t ) − B Φ ( y ∗ t , x t + 1 ) . 1 holds due to Lemma 1 by seing g = ¯ g t , u t = x t , u t + 1 = x t + 1 , u ∗ = y ∗ t , and λ = η . 2 holds due to Φ is µ -strongly convex. 3 holds because h u , v i ≤ a 2 k u k 2 + 1 2 a k v k 2 holds for any u , v , and a > 0. e last inequality holds due to η ≤ µ 4 and 1 ≤ σ ≤ 2. T elescoping it over t , we have T Õ t = 1 f t ( x t ) − f t ( y ∗ t ) + T − 1 Õ t = 1 k x t − x t + 1 k σ − y ∗ t − y ∗ t + 1 σ ≤ T η G 2 µ + 1 η T Õ t = 1 B Φ ( y ∗ t , x t ) − B Φ ( y ∗ t , x t + 1 ) + σ 2 σ 2 − σ 4 η µ = T η G 2 µ + 1 η T Õ t = 2 B Φ ( y ∗ t , x t ) − B Φ ( y ∗ t − 1 , x t ) ! + 1 η B Φ ( y ∗ 1 , x 1 ) − B Φ ( y ∗ T , x T + 1 ) + σ 2 σ 2 − σ 4 η µ ≤ T η G 2 µ + 1 η T Õ t = 2 B Φ ( y ∗ t , x t ) − B Φ ( y ∗ t − 1 , x t ) ! + 1 η B Φ ( y ∗ 1 , x 1 ) + σ 2 σ 2 − σ 4 η µ 19 1 ≤ T η G 2 µ + 2 G η T − 1 Õ t = 1 y ∗ t + 1 − y ∗ t + 1 η B Φ ( y ∗ 1 , x 1 ) + σ 2 σ 2 − σ 4 η µ ≤ T η G 2 µ + 2 G D η + R 2 η + σ 2 σ 2 − σ 4 η µ . √ T D + √ T . 1 holds due to B Φ ( y ∗ t + 1 , x t + 1 ) − B Φ ( y ∗ t , x t + 1 ) ≤ 2 G y ∗ t + 1 − y ∗ t according to Lemma 2. e last inequality holds by seing η = min q D + G T , µ 4 . Since it holds for any seqence of f t ∈ F , we nally obtain sup { f t } T t = 1 ∈ F T R MD-OCO D . √ T D + √ T . It completes the proof. Proof to e orem 3: Proof. is proof is inspired by [ 50 ], but our new analysis generalizes [ 50 ] to the case of switching cost. Construct the function f t ( x t ) = h v t , x t i for any t ∈ [ T ] . Here, v t ∈ { 1 , − 1 } d , and every element v t ( j ) with j ∈ [ d ] is a random variable, which is sampled from a Rademacher distribution independently . For any online method A ∈ A , its regret is bounded as follows. sup { f t } T t = 1 R A D ≥ R A D = E v 1: T T Õ t = 1 f t ( x t ) + T Õ t = 1 k x t − x t − 1 k σ − E v 1: T min { y t } T t = 1 ∈ L T D T Õ t = 1 f t ( y t ) + T Õ t = 1 k y t − y t − 1 k σ ! = E v 1: T T Õ t = 1 f t ( x t ) + T Õ t = 1 k x t − x t − 1 k σ ! + E v 1: T max { y t } T t = 1 ∈ L T D − T Õ t = 1 f t ( y t ) − T Õ t = 1 k y t − y t − 1 k σ ! = E v 1: T max { y t } T t = 1 ∈ L T D T Õ t = 1 ( f t ( x t ) − f t ( y t ) − k y t − y t − 1 k σ ) + E v 1: T T Õ t = 1 k x t − x t − 1 k σ = E v 1: T max { y t } T t = 1 ∈ L T D T Õ t = 1 ( h v t , x t − y t i − k y t − y t − 1 k σ ) + E v 1: T T Õ t = 1 k x t − x t − 1 k σ . (4) For any optimal sequence of { y ∗ t } T t = 1 , E v t v t , x t − 1 − y ∗ t − 1 = E v t v t , x t − 1 − y ∗ t − 1 = 0 , x t − 1 − y ∗ t − 1 = 0 . us, for any optimal sequence of { y ∗ t } T t = 1 , we have E v 1: T max { y t } T t = 1 ∈ L T D T Õ t = 1 ( h v t , x t − y t i − k y t − y t − 1 k σ ) 20 = E v 1: T T Õ t = 1 v t , x t − y ∗ t − T Õ t = 1 y ∗ t − y ∗ t − 1 σ ! = E v 1: T T Õ t = 1 v t , x t − x t − 1 + y ∗ t − 1 − y ∗ t − E v 1: T T Õ t = 1 y t − y ∗ t − 1 σ = E v 1: T T Õ t = 1 h v t , x t − x t − 1 i + E v 1: T T Õ t = 1 v t , y ∗ t − 1 − y ∗ t − T Õ t = 1 y t − y ∗ t − 1 σ ! = E v 1: T T Õ t = 1 h v t , x t − x t − 1 i + E v 1: T max { y t } T t = 1 ∈ L T D T Õ t = 1 h v t , y t − 1 − y t i − T Õ t = 1 k y t − y t − 1 k σ ! Substituting it into (4), we have sup { f t } T t = 1 R A D ≥ E v 1: T T Õ t = 1 h v t , x t − x t − 1 i + T Õ t = 1 k x t − x t − 1 k σ ! + E v 1: T max { y t } T t = 1 ∈ L T D T Õ t = 1 h v t , y t − 1 − y t i − T Õ t = 1 k y t − y t − 1 k σ ! 1 ≥ E v 1: T max { y t } T t = 1 ∈ L T D T Õ t = 1 h v t , y t − 1 − y t i − T Õ t = 1 k y t − y t − 1 k σ ! ≥ E v 1: T max { y t } T t = 1 ∈ L T D T Õ t = 1 h v t , y t − 1 − y t i − max { y t } T t = 1 ∈ L T D T Õ t = 1 k y t − y t − 1 k σ 2 ≥ E v 1: T max { y t } T t = 1 ∈ L T D T Õ t = 1 h v t , y t − 1 − y t i − D σ 3 = E v 1: T max { y t } T t = 1 ∈ L T D T Õ t = 1 h v t , − y t i − D σ 4 = E v 1: T max { y t } T t = 1 ∈ L T D T Õ t = 1 h v t , y t i − D σ . 1 holds due to E v t ( h v t , x t − x t − 1 i + k x t − x t − 1 k σ ) = E v t v t , x t − x t − 1 + k x t − x t − 1 k σ = k x t − x t − 1 k σ ≥ 0 . 2 holds be cause that, for any sequence { y t } T t = 1 , Í T t = 1 k y t − y t − 1 k ≤ D . us, max { y t } T t = 1 ∈ L T D T Õ t = 1 k y t − y t − 1 k σ ≤ max { y t } T t = 1 ∈ L T D T Õ t = 1 k y t − y t − 1 k ! σ ≤ D σ . 21 3 holds be cause that, for any vector y t − 1 , E v t h v t , y t − 1 i = E v t v t , y t − 1 = h 0 , y t − 1 i = 0 . 4 holds be cause that the domain of v t is symmetric. Furthermore, w e construct a sequence { y t } T t = 1 as follows. (1) Evenly split { y t } T t = 1 into two subsets: { y t } T 1 t = 1 and { y T 1 + t } T 2 t = 1 . Here, T 1 = T 2 = T 2 . (2) Aer that, evenly split { y t } T 1 t = 1 into N : = min D R , T 1 subsets, that is, { y t } T 1 N t = 1 , { y t } 2 T 1 N t = T 1 N + 1 , { y t } 3 T 1 N t = 2 T 1 N + 1 , …, { y t } T 1 t = ( N − 1 ) T 1 N + 1 . (3) For the i -th subset of the sequence { y t } T 1 t = 1 , let the values in it be same, and denote it by u i with k u i k ≤ R 2 . For the whole sequence { y T 1 + t } T 2 t = 1 , let all the values be same, namely u N . (4) For the sequence of { y t } T 1 t = 1 , elements in dierent subsets ar e dierent such that k u i + 1 − u i k ≤ k u i + 1 k + k u i k ≤ R . us, T − 1 Õ t = 1 k y t + 1 − y t k = T 1 − 1 Õ t = 1 k y t + 1 − y t k + T Õ t = T 1 k y t + 1 − y t k = N − 1 Õ i = 1 k u i + 1 − u i k + 0 ≤ ( N − 1 ) R ≤ D . e last inequality holds due to ( N − 1 ) R ≤ D . It implies that { y t } T t = 1 under our construction is feasible. en, we have E v 1: T max { y t } T t = 1 ∈ L T D T Õ t = 1 h v t , y t i = E v 1: T max { y t } T t = 1 ∈ L T D T 1 Õ t = 1 h v t , y t i + T Õ t = T 1 + 1 h v t , y t i ! = E v 1: T N Õ i = 1 max k u i k ≤ R 2 * T i N Õ t = 1 + T ( i − 1 ) N v t , u i + + E v 1: T max k u N k ≤ R 2 * T Õ t = T 1 + 1 v t , u N + 1 = R 2 E v 1: T N Õ i = 1 T i N Õ t = 1 + T ( i − 1 ) N v t + R 2 E v 1: T T Õ t = T 1 + 1 v t 2 ≥ R 2 √ d E v 1: T N Õ i = 1 d Õ j = 1 T i N Õ t = 1 + T ( i − 1 ) N v t ( j ) + R 2 √ d E v 1: T d Õ j = 1 T Õ t = T 1 + 1 v t ( j ) 3 = √ d N R 2 · Ω r T N ! + R √ d 2 · Ω r T 2 ! 22 = Ω √ R √ T N R + √ T 4 = Ω √ T D + √ T . 1 holds because that the maximum is obtained at the boundar y of the domain. 2 holds because that, for any v ∈ R d , k v k 1 ≤ √ d k v k 2 . 3 holds due to a classic result [23], that is, E v 1: T T i N Õ t = 1 + T ( i − 1 ) N v t ( j ) = Ω r T N ! . 4 holds due to D − R ≤ N R ≤ D + R , which implies that N R . D holds for D > 0. erefore , we obtain sup { f t } T t = 1 R A D ≥ E v 1: T max { y t } T t = 1 ∈ X T T Õ t = 1 h v t , y t i − D σ = Ω √ T D + √ T . e last equality holds because D σ is a constant, and it does not increase over T . Since it holds for any online algorithm A ∈ A , we nally have inf A ∈ A sup { f t } T t = 1 ∈ F T = Ω √ T D + √ T . It completes the proof. 23

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment