FairPrep: Promoting Data to a First-Class Citizen in Studies on Fairness-Enhancing Interventions


Authors: Sebastian Schelter, Yuxuan He, Jatin Khilnani, Julia Stoyanovich

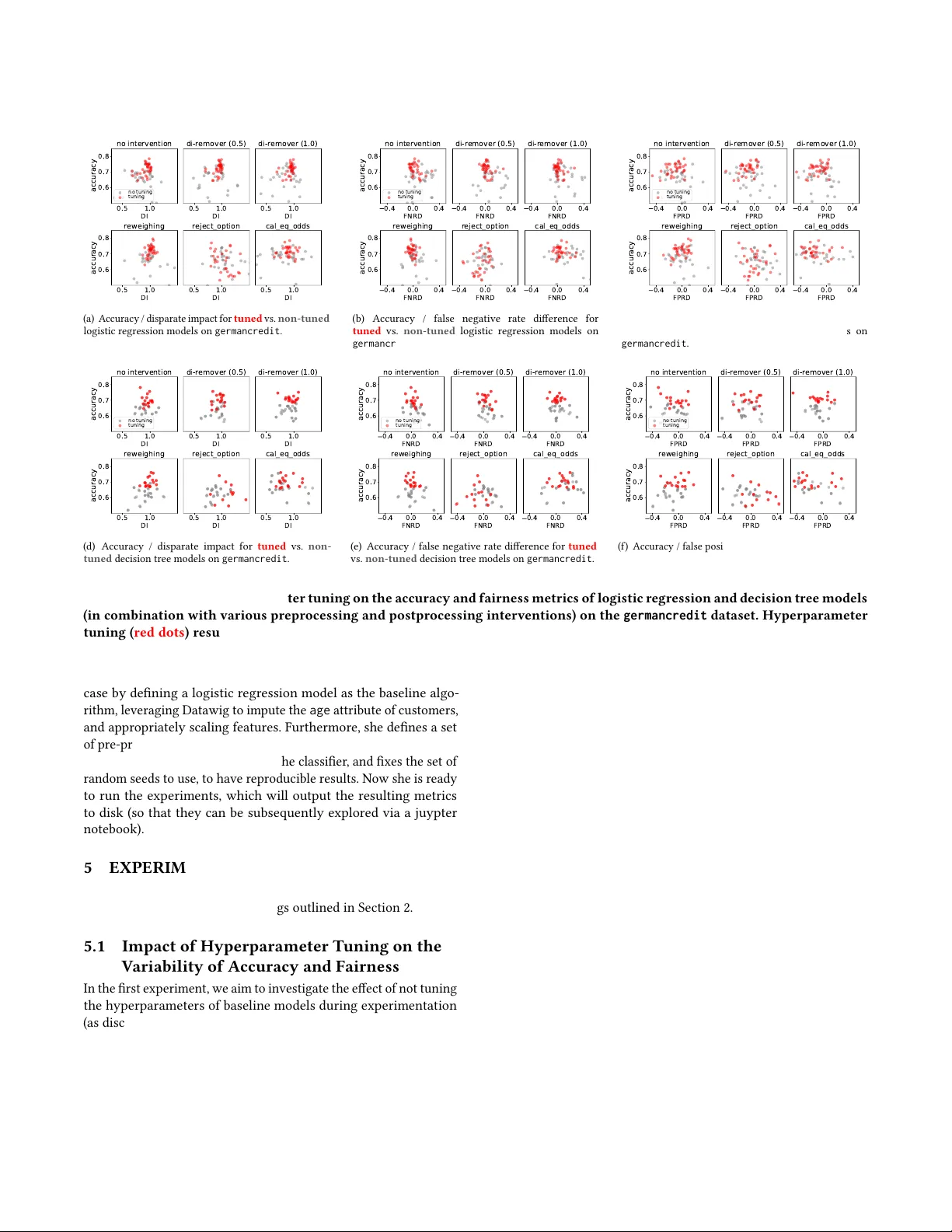

Sebastian Schelter, Yuxuan He, Jatin Khilnani, Julia Stoyanovich
New York University
[sebastian.schelter, yh2857, jatin.khilnani, stoyanovich]@nyu.edu

ABSTRACT
The importance of incorporating ethics and legal compliance into machine-assisted decision-making is broadly recognized. Further, several lines of recent work have argued that critical opportunities for improving data quality and representativeness, controlling for bias, and allowing humans to oversee and impact computational processes are missed if we do not consider the lifecycle stages upstream from model training and deployment. Yet, very little has been done to date to provide system-level support to data scientists who wish to develop and deploy responsible machine learning methods. We aim to fill this gap and present FairPrep, a design and evaluation framework for fairness-enhancing interventions. FairPrep is based on a developer-centered design, and helps data scientists follow best practices in software engineering and machine learning. As part of our contribution, we identify shortcomings in existing empirical studies for analyzing fairness-enhancing interventions. We then show how FairPrep can be used to measure the impact of sound best practices, such as hyperparameter tuning and feature scaling. In particular, our results suggest that the high variability of the outcomes of fairness-enhancing interventions observed in previous studies is often an artifact of a lack of hyperparameter tuning. Further, we show that the choice of a data cleaning method can impact the effectiveness of fairness-enhancing interventions.

ACM Reference Format:
Sebastian Schelter, Yuxuan He, Jatin Khilnani, Julia Stoyanovich. 2019. FairPrep: Promoting Data to a First-Class Citizen in Studies on Fairness-Enhancing Interventions. In Proceedings of ACM Conference (Conference’17).
ACM, New York, NY, USA, 11 pages. https://doi.org/10.1145/nnnnnnn.nnnnnnn

Permission to make digital or hard copies of all or part of this work for personal or classroom use is granted without fee provided that copies are not made or distributed for profit or commercial advantage and that copies bear this notice and the full citation on the first page. Copyrights for components of this work owned by others than ACM must be honored. Abstracting with credit is permitted. To copy otherwise, or republish, to post on servers or to redistribute to lists, requires prior specific permission and/or a fee. Request permissions from permissions@acm.org.
Conference’17, July 2017, Washington, DC, USA
© 2019 Association for Computing Machinery.
ACM ISBN 978-x-xxxx-xxxx-x/YY/MM...$15.00
https://doi.org/10.1145/nnnnnnn.nnnnnnn

1 INTRODUCTION
While the importance of incorporating responsibility (ethics and legal compliance) into machine-assisted decision-making is broadly recognized, much of current research in fairness, accountability, and transparency in machine learning focuses on the last mile of data analysis, namely on model training and deployment. Several lines of recent work argue that critical opportunities for improving data quality and representativeness, controlling for bias, and allowing humans to oversee and influence the process are missed if we do not consider earlier lifecycle stages [12, 18, 23, 35]. Yet, very little has been done to date to provide system-level support for data scientists who wish to develop, evaluate, and deploy responsible machine learning methods. In this paper we aim to fill this gap. We build on the efforts of Friedler et al. [9] and Bellamy et al. [3], and develop a generalizable framework for evaluating fairness-enhancing interventions called FairPrep.
Our framework currently focuses on data cleaning (including different methods for data imputation) and on model selection, tuning, and validation (including feature scaling and hyperparameter tuning), and can be extended to accommodate earlier lifecycle stages, such as data integration and curation. In designing FairPrep we pursued the following goals:

• Expose a developer-centered design throughout the lifecycle, which allows for low-effort customization and composition of the framework’s components. Here, by developers we refer to data scientists and software developers.
• Follow software engineering and machine learning best practices to reduce the technical debt of incorporating fairness-enhancing interventions into an already complex development and evaluation scenario [31, 34]. Figure 1 summarizes the architecture of FairPrep.
• Surface discrimination and due process concerns, including but not limited to disparate error rates, failure of a model to fit the data, and failure of a model to generalize [23].

In what follows, we further motivate the need for a comprehensive design and evaluation framework for fairness-enhancing interventions, and explain how FairPrep can meet this need.

1.1 FairPrep by Example
Consider Ann, a data scientist at an online retail company who wishes to develop a classifier for deciding which payment options to offer to customers. Based on her experience, Ann decides to include customers’ self-reported demographic data together with their purchase histories. Following her company’s best practices, Ann will start by splitting her dataset into training, validation, and test sets. Ann will then use pandas, scikit-learn, and the accompanying data transformers to explore the data and implement data preprocessing, model selection, tuning, and validation. To ensure proper isolation of held-out test data, Ann will work only with the training and validation datasets, not the test dataset, during these stages.
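Ann's first step, a disjoint training/validation/test split with a fixed seed, might look like the following scikit-learn sketch. The helper name `three_way_split` and the default 60/20/20 proportions are assumptions made for illustration; the fixed `seed` makes the split reproducible across runs.

```python
import pandas as pd
from sklearn.model_selection import train_test_split


def three_way_split(df: pd.DataFrame, seed: int, val_size: float = 0.2, test_size: float = 0.2):
    """Hypothetical helper: split df into disjoint train/validation/test sets."""
    # First carve off the held-out test set, which later steps must never touch.
    rest, test = train_test_split(df, test_size=test_size, random_state=seed)
    # val_size is a fraction of the full dataset, so rescale it for the remainder.
    train, val = train_test_split(rest, test_size=val_size / (1.0 - test_size), random_state=seed)
    return train, val, test
```

Reusing the same seed yields identical splits, which is the property Section 2.5 asks of every randomized component.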
As the first step of data preprocessing, Ann will compute value distributions and correlations for the features in her dataset, and identify missing values. She will fill these in using a default imputation method in scikit-learn, replacing missing values with the mean value for that feature. As another preprocessing step, Ann will perform feature scaling for the numerical attributes in her data. This step, also known as normalization, ensures that all features map to the same value range, which will help certain kinds of classifiers downstream fit the data correctly.

Finally, following the accepted best practices at her company, Ann implements model selection and tuning. She will identify several classifiers appropriate for her task, and will then tune the hyperparameters of each classifier using k-fold cross-validation. To do so, she will specify a hyperparameter grid for each classifier as appropriate, train the classifier for each point on the grid, and then use her company’s standard accuracy metrics to find a good setting of the hyperparameters on the validation dataset. As a result of this step, Ann will identify a classifier that shows acceptable accuracy while also exhibiting sufficiently low variance.

The reader will observe that no fairness issues were surfaced in Ann’s workflow up to this point. This changes when Ann considers the accuracy of her classifier more closely, and observes a disparity: the accuracy is lower for middle-aged women, and for female customers who did not specify their age as part of their self-reported demographic profile. Ann goes back to data analysis and observes that the value of the attribute age is missing far more frequently for female users than for male users. Further, she compares age distributions by gender, and notices differences starting from the mid-thirties.
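The disparity Ann finds comes from slicing ordinary metrics by group. A minimal pandas sketch of that slicing follows; the helper `disparity_report` and its column arguments are hypothetical and not part of FairPrep's API.

```python
import pandas as pd


def disparity_report(df: pd.DataFrame, group_col: str, label_col: str,
                     pred_col: str, feature_col: str) -> pd.DataFrame:
    """Hypothetical helper: per-group accuracy and missing-value rate of one feature."""
    correct = df[label_col] == df[pred_col]   # True where the prediction is right
    missing = df[feature_col].isna()          # True where the feature is absent
    return pd.DataFrame({
        "accuracy": correct.groupby(df[group_col]).mean(),
        "missing_rate": missing.groupby(df[group_col]).mean(),
    })
```

A report in which one group shows both lower accuracy and a higher missing rate is exactly the pattern that sends Ann back to the data cleaning step.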
Ann hypothesizes age to have been an important classification feature, revisits the data cleaning step, and selects a state-of-the-art data imputation method such as Datawig [4] to fill in age (and other missing values) in customer demographics. Having adjusted data preprocessing in an attempt to reduce error rate disparities, Ann is now faced with several related challenges:

• How should the data processing pipeline be extended to incorporate additional fairness-specific evaluation metrics? Hand-coding evaluation metrics on a case-by-case basis, and determining how these should be traded off against each other and against existing metrics, is both time-consuming and error-prone.
• How can the effects of fairness-enhancing interventions be quantified, and judiciously validated, to allow Ann to make an informed choice about which intervention to pick? These interventions may range from an improved data cleaning method that helps reduce variance for a demographic group, to a fairness-aware classifier, and they may be incorporated at different pipeline stages: during data preprocessing, immediately before or after a classifier is invoked, or as part of the classification itself.
• How does one continue to follow software engineering and ML best practices when incorporating fairness considerations into these pipelines? For example, how does Ann ensure an appropriate level of isolation of the test set? How does she go about tuning hyperparameters in light of additional objectives? How does she make her analysis reproducible, to support more effective debugging, and auditing for correctness and legal compliance?

To address these challenges, Ann will turn to existing development and evaluation frameworks, such as that by Friedler et al. [9] and IBM’s AIF360 [3]. While these frameworks are certainly a good starting point, they will unfortunately fall short of meeting Ann’s needs.
The main reason is that these frameworks are designed around a small number of academic datasets and use cases, do not allow users to integrate additional data preprocessing steps that are a crucial part of existing machine learning pipelines, and are not designed to enforce best practices.¹

¹ This conjecture was verified by Ann, who met with us for a drink after her failed attempts. Ann’s real name and bar location are suppressed for anonymity :)

1.2 Contributions and Roadmap
This paper makes the following contributions:

• We discuss shortcomings and a lack of best practices in existing empirical studies and software for analyzing fairness-enhancing interventions (Section 2).
• We propose FairPrep, a design and evaluation framework that makes data a first-class citizen in fairness-related studies. FairPrep implements a modular data lifecycle, allowing users to reuse existing implementations of fairness metrics and interventions, and to integrate custom feature transformations and data cleaning operations from real-world use cases (Sections 3 and 4). We implement FairPrep on top of scikit-learn [26] and AIF360 [3] (Section 4).
• We apply FairPrep to illustrate that enforcing best practices of machine learning evaluation, which are easy to get accidentally wrong with existing frameworks, and incorporating data cleaning methods can impact the effectiveness of fairness-enhancing interventions (Section 5). We present results of running FairPrep using some of the same benchmark datasets, classifiers, and fairness-enhancing interventions as Friedler et al. [9].

We present related work in Section 6 and conclude in Section 7.

2 SHORTCOMINGS OF PREVIOUS WORK
We inspected the code bases of existing studies [9] and evaluation frameworks [3], and thereby identified a set of shortcomings that motivated us to design a comprehensive, data-centric evaluation framework. In the following, we detail our findings.
2.1 Insufficient Isolation of Held-Out Test Data
A major requirement for the offline evaluation of ML algorithms is to simulate the real-world deployment scenario as closely as possible. In the real world, we train our model (and select its hyperparameters) on observed data from the past, and predict for target data later, which we have not yet seen and for which we typically do not know the ground truth. In offline evaluation, we typically evaluate a model on a test set that was randomly sampled from observed historical data. It is crucial that this test set be completely isolated from the process of model selection, which, in turn, is only allowed to use training data (the remaining, disjoint observed historical data). Importantly, data isolation must also be guaranteed for preprocessing operations such as feature scaling or missing value imputation. If these operations were allowed to look at the test set, this could result in target leakage.

Unfortunately, we encountered several violations of the test set isolation requirement in the existing benchmarking framework by Friedler et al. [9]. These violations, detailed below, call into question the reliability of reported study results. Further, we found that the architecture of the IBM AIF360 toolkit [3] does not support data isolation best practices for feature transformation.

Hyperparameter selection on the test set. The grid search for hyperparameters² of fairness-enhancing models and interventions in [9] computes metrics for all hyperparameter candidates on the test set and returns the candidate that gave the best performance.

² https://github.com/algofairness/fairness-comparison/blob/4e7341929ba9cc98743773169cd3284f4b0cf4bc/fairness/algorithms/ParamGridSearch.py#L41
This strongly violates the isolation requirement, as we would not know the ground truth labels for the data to predict on in the real world, and therefore could only use a hyperparameter setting that worked well on some previously observed data. An evaluation procedure should maintain an additional validation set, use it to select the best hyperparameters, and only evaluate the prediction quality of the resulting single best hyperparameter candidate on the test set, in order to measure how well the model generalizes to unseen data.

Lack of data isolation for missing value imputation. A common challenge in real-world ML scenarios is to handle examples with missing values. Often, this challenge is addressed by applying different missing value imputation techniques [33] to complete the data. Again, in order to simulate real-world scenarios as closely as possible, we should carefully isolate training data from the held-out test data for missing value imputation. If our missing value imputation model were allowed to access test data (and could thereby compute statistics of this data, which is unseen in practice), it would exhibit the potential for accidental target leakage. Unfortunately, this isolation is also not incorporated into the design of existing studies, which invoke the missing value handling logic before computing the train/test split of the data.³

Lack of data isolation for feature transformation. Analogously to the previously discussed case of missing value imputation, we also need to reliably isolate training data from the held-out test data during feature transformation. Many feature transformation techniques (such as scalers for numerical variables or embeddings of certain attributes) rely on the computation of aggregate statistics over the data. To simulate real-world scenarios, it is crucial to only compute these aggregate statistics (“fit the feature transformers”) on the training data.
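The isolation rules above can be sketched with scikit-learn. This is a minimal illustration, not the code of FairPrep or of [9]: the helper `select_and_evaluate`, the logistic regression model, and the grid over `C` are assumptions. The imputer and scaler sit inside the pipeline, so their statistics come from the training split only; the hyperparameter is chosen on the validation split, and the test split is scored once, for the selected candidate only.

```python
import numpy as np
from sklearn.impute import SimpleImputer
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler


def select_and_evaluate(X_train, y_train, X_val, y_val, X_test, y_test, Cs):
    """Hypothetical helper: choose C on validation data, touch test data once."""
    best_C, best_val_acc, best_model = None, -np.inf, None
    for C in Cs:
        # Imputation means and scaling statistics are fit on the training split only.
        model = make_pipeline(SimpleImputer(strategy="mean"), StandardScaler(),
                              LogisticRegression(C=C))
        model.fit(X_train, y_train)
        val_acc = model.score(X_val, y_val)  # validation accuracy drives selection
        if val_acc > best_val_acc:
            best_C, best_val_acc, best_model = C, val_acc, model
    # Only the single selected candidate is evaluated on the held-out test set.
    test_acc = best_model.score(X_test, y_test)
    return best_C, best_val_acc, test_acc, best_model
```

Because the fitted transformers are reused unchanged on validation and test data, `best_model.named_steps["simpleimputer"].statistics_` holds training-split means only.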
Computing aggregate statistics before conducting the train/validation/test splits can result in target leakage. We did not find such cases in the existing studies and frameworks, as their feature transformation mostly consists of format changes and the one-hot encoding of categorical variables, which are record-level operations that are independent of the data splits. Nevertheless, the design of these frameworks does not support isolated feature computations, as the featurization of the data is applied before data splitting.⁴ Therefore, a data scientist could accidentally introduce target leakage if she followed the existing software architecture.

³ https://github.com/algofairness/fairness-comparison/blob/4e7341929ba9cc98743773169cd3284f4b0cf4bc/fairness/preprocess.py#L37
⁴ https://github.com/IBM/AIF360/blob/master/aif360/datasets/standard_dataset.py#L84

2.2 Lack of Hyperparameter Tuning for Baseline Algorithms
We additionally found that the study by Friedler et al. [9] did not tune the hyperparameters of the baseline algorithms⁵ to which pre-processing and post-processing interventions are applied, even though they tuned the hyperparameters of the fairness interventions, investigating the resulting fairness/accuracy trade-off. This is problematic because there is in general no guarantee that the learning procedure for a baseline algorithm with the default parameters will converge to a solution that fits the training data and generalizes to unseen data. If such a model were deployed in practice, this failure to fit and to generalize would lead to a due process violation according to Lehr and Ohm (see [23], pp. 710–715).

⁵ https://github.com/algofairness/fairness-comparison/tree/35fb53f7cc7954668eeee28eac5fb20faf89b3d8/fairness/algorithms/baseline

Friedler et al. [9] found high variability of the fairness and accuracy outcomes with respect to different train/test splits.
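To make the tuning concern concrete, here is a sketch of tuning a baseline instead of relying on its defaults. It assumes an RBF-kernel SVM as the baseline and a small, arbitrary grid; the helper `tune_baseline` and the grid values are hypothetical. Keeping `StandardScaler` inside the pipeline makes `GridSearchCV` refit the scaler on each training fold, and the fold-level standard deviation gives a rough view of the variability that k-fold cross-validation exposes.

```python
from sklearn.model_selection import GridSearchCV, StratifiedKFold
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC


def tune_baseline(X, y, seed):
    """Hypothetical helper: grid-search a scaled RBF-SVM baseline with 5-fold CV."""
    pipe = make_pipeline(StandardScaler(), SVC(kernel="rbf"))
    grid = {"svc__C": [0.1, 1.0, 10.0], "svc__gamma": ["scale", 0.01, 0.1]}
    # A seeded, shuffled splitter keeps the fold assignment reproducible.
    cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=seed)
    search = GridSearchCV(pipe, grid, cv=cv)
    search.fit(X, y)  # the scaler is refit on every training fold
    i = search.best_index_
    # Mean and standard deviation of validation accuracy across the 5 folds.
    return (search.best_params_,
            search.cv_results_["mean_test_score"][i],
            search.cv_results_["std_test_score"][i])
```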
While we were unable to reproduce these results directly (see Section 2.5), we were able to observe a similar level of variability in our experiments with default parameter settings. ML textbooks [11] and research [21] suggest using more expensive evaluation techniques such as k-fold cross-validation, which have the advantage of quantifying the variability of the estimated prediction error for a given hyperparameter selection (and thus giving a principled method to navigate the bias-variance trade-off).

2.3 Lack of Feature Scaling
We observed that neither of the existing frameworks [3, 9] normalizes the numeric features of the input data; both keep them on their original scale. While some ML models, such as decision trees, are insensitive to features on different scales, many other algorithms implicitly rely on normalized and/or standardized features. Examples among the objective functions of popular models are the RBF kernel used in support vector machines, as well as the L1 and L2 regularizers of linear models.

2.4 Removal of Records with Missing Values
Another point of critique is that the study of Friedler et al. [9] ignored records with missing values in the data (by removing them before running experiments), which means that the study’s findings do not necessarily generalize to data with quality issues. Yet, real-world decision-making systems still have to make decisions for data with missing values. The framework from [9] has a handle for a dataset to treat missing data but, as far as we could determine, it is never implemented. In the default preprocessing routines, records with missing values are always removed from the data.⁶ Thereby, existing frameworks are unable to investigate the effects of fairness-enhancing interventions on records with missing values, which could be especially important for cases where a protected group has a higher likelihood of encountering missing values in their data.
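One way to check whether complete-case analysis affects some groups more than others is to compare per-group missing rates, and group proportions before and after dropping incomplete records. A minimal pandas sketch; the helper names and toy columns are hypothetical.

```python
import pandas as pd


def missingness_by_group(df: pd.DataFrame, group_col: str, attr: str) -> pd.Series:
    """Hypothetical helper: share of records per group whose `attr` is missing."""
    return df[attr].isna().groupby(df[group_col]).mean()


def group_share_after_dropping(df: pd.DataFrame, group_col: str) -> pd.DataFrame:
    """Hypothetical helper: group proportions before and after complete-case analysis."""
    before = df[group_col].value_counts(normalize=True)
    after = df.dropna()[group_col].value_counts(normalize=True)  # rows with any NaN removed
    # A group that disappears entirely after dropna gets a share of 0.
    return pd.DataFrame({"before": before, "after": after}).fillna(0.0)
```

If a protected group's share drops noticeably in the `after` column, results computed on the remaining records say little about how an intervention behaves for that group.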
It has been documented that survey data from ethnic minorities may be noisier than data collected from the majority ethnic group [17]. We also see evidence of this in the benchmark datasets: in the commonly used Adult Income dataset⁷, the native-country attribute is four times more likely to be missing for non-white than for white persons.

⁶ https://github.com/algofairness/fairness-comparison/blob/4e7341929ba9cc98743773169cd3284f4b0cf4bc/fairness/preprocess.py#L40
⁷ https://archive.ics.uci.edu/ml/datasets/adult

2.5 Lack of Reproducibility
An important objective of an evaluation framework should be to make its computations reproducible. A major factor for this is to fix the seeds for pseudo-random number generators throughout the evaluation run, and provide the fixed seed to all components (data splitters, learning algorithms, feature transformations) so that they can leverage it to conduct reproducible random number generation. We find that data splitting⁸ in [9] does not use fixed random seeds. This has been improved in AIF360 [3], where fixed random seeds are used for data splitting. However, other components, such as the methods to train models⁹, do not expose a common random seed.

[Figure 1 diagram, panels: (1) Model selection on training set and validation set, repeated for user-defined hyperparameter grid (resampler [optional], missing value handler, feature transform, pre-processor [optional], classifier, post-processor [optional], producing metrics for models 1 to n); (2) User-defined choice of best model, based on metrics on validation set; (3) Application of best model (and corresponding data transformations) on test set, computation of metrics on test set.]

Figure 1: Data life cycle in FairPrep, designed to enforce isolation of the test data, and to allow for customization through user-provided implementations of different components. An evaluation run consists of three different phases: (1) learn different models, and their corresponding data transformations, on the training set; (2) compute performance/accuracy-related metrics of the model on the validation set, and allow the user to select the ‘best’ model according to their setup; (3) compute predictions and metrics for the user-selected best model on the held-out test set.

3 FRAMEWORK DESIGN
Our goal for FairPrep is to provide an evaluation environment that closely mimics real-world use cases.

(i) Data isolation: in order to avoid target leakage, user code should only interact with the training set, and never be able to access the held-out test set. User code can train models or fit feature transformers on the training data, which will be applied by the framework to the test set later on. This is a form of inversion of control, a common pattern applied in middleware frameworks [14].
The framework should furthermore take special care of data with quality problems. For example, it should allow experimenters to isolate the effects of their code on records with missing values by computing metrics and statistics separately for them.

(ii) Componentization: different data transformations and learning operations should be implementable as single, exchangeable, standalone components; the framework should expose simple interfaces to users, allowing them to rapidly customize their experiments with low effort.

(iii) Explicit modeling of the data lifecycle: the framework defines an explicit, standardized data lifecycle that applies a sequence of data transformations and model training in a particular, predefined order. Users influence and define the lifecycle by configuring and implementing particular components.

⁸ https://github.com/algofairness/fairness-comparison/blob/35fb53f7cc7954668eeee28eac5fb20faf89b3d8/fairness/data/objects/ProcessedData.py#L22
⁹ https://github.com/IBM/AIF360/blob/ca48d6557edf61ddfd112d6199397d9e48ebb6e1/aif360/algorithms/transformer.py

At the same time, the framework should also support users as much as possible in applying best practices from machine learning and software engineering. Figure 1 illustrates the data lifecycle during the execution of a run of FairPrep, which we now describe in detail. The execution of an evaluation run occurs in three consecutive phases:

Phase 1: Model selection on training set and validation set. The purpose of this phase is to train different models (for different hyperparameter settings) on the training data, and compute their corresponding performance metrics on the validation set. FairPrep applies a fixed series of consecutive steps in this phase, some of which are optional, and all of which can be customized with dedicated component implementations by our users.
(1) In the first (optional) step, we allow users to resample the training data: to apply bootstrapping, to balance classes, or to generate additional synthetic examples.
(2) Next, the user has to decide how to treat records with missing values. FairPrep offers a set of predefined strategies such as ‘complete case analysis’ (removal of records with missing values) or different imputation algorithms, ranging from simple strategies that fill in the most frequent value of an attribute, to more sophisticated strategies that learn a model tailored to the data for imputation. Note that FairPrep enforces that imputation models are learned on the training data only.
(3) After imputation on the raw training data, FairPrep applies feature transformations to convert the data into a numeric format suitable for learning algorithms. By default, the framework scales numeric features with a user-chosen strategy, and one-hot encodes categorical values. If the feature transformers require aggregate statistics from the data, we again ensure that these are only computed on the training dataset. The ‘fitted’ feature transformers are stored in memory afterwards, in order to be applied to the validation set and test set in later phases.
(4) The next (optional) step is the application of a pre-processing intervention to enhance the fairness of the outcome (e.g., reweighing the training instances).
(5) Subsequently, FairPrep trains a classifier on the training data. This can be a baseline classifier (such as logistic regression) that will be combined with a pre-processing or post-processing fairness-enhancing intervention, or a specialized in-processing model for fairness enhancement.
(6) Next, FairPrep repeats the data transformations conducted so far on the validation set, and applies the trained model to compute predictions for the training and the validation datasets.
(7) In the final (optional) step, users have an opportunity to apply a post-processing intervention to adjust computed predictions in a use-case-specific manner.

Phase 2: User-defined choice of best model. In the second phase, FairPrep computes a large set of accuracy- and fairness-related metrics for each model based on its predictions for the validation set and training set. A user can then choose the ‘best’ model via a user-defined function, selecting the model with a suitable fairness/accuracy trade-off for their scenario.

Phase 3: Application of the ‘best’ model (and its corresponding data transformations) on test set. In the final phase, FairPrep will automatically apply the user-selected best model (and its corresponding data transformations) to the test set, and provide the user with a final set of metrics. Note that, due to data isolation concerns, the user never gets direct access to the test set.

4 IMPLEMENTATION
In the following, we detail implementation aspects of FairPrep. Our framework is based on AIF360 [3], from which it leverages the dataset abstraction, metrics, and fairness-enhancing interventions, as well as on scikit-learn [26], from which it uses several data transformations and models.

Experiments & Datasets. At the heart of FairPrep is an abstract class for experiments that defines the execution order and lifecycle shown in Figure 1. This class needs to be extended for each experimental dataset. For datasets, we build upon the BinaryLabelDataset abstraction from AIF360, and make its implementation more flexible by allowing operations like one-hot encoding on different versions of the data, adding feature dimensions for unseen categorical values.
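The text does not spell out how FairPrep encodes categorical values that appear in only some versions of a dataset. Purely as an illustration, the hypothetical class `OneHotWithUnseen` below learns the category vocabulary on training data only and reserves one extra indicator dimension for values never seen during fitting, so that training and test matrices always share the same columns without the encoder peeking at test data.

```python
import pandas as pd


class OneHotWithUnseen:
    """Hypothetical encoder: vocabulary from training data, plus an 'unseen' column."""

    def __init__(self, column: str):
        self.column = column
        self.categories = None

    def fit(self, train_df: pd.DataFrame) -> "OneHotWithUnseen":
        # The vocabulary is learned on the training split only.
        self.categories = sorted(train_df[self.column].dropna().unique())
        return self

    def transform(self, df: pd.DataFrame) -> pd.DataFrame:
        values = df[self.column]
        known = values.isin(self.categories)
        # A fixed categorical dtype yields one column per training category;
        # unknown values become NaN and therefore all-zero rows here.
        cat = pd.Categorical(values.where(known), categories=self.categories)
        dummies = pd.get_dummies(cat, prefix=self.column).astype(int)
        dummies.index = df.index
        # The extra dimension flags non-missing values absent from the vocabulary.
        dummies[f"{self.column}__unseen"] = (~known & values.notna()).astype(int)
        return dummies
```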
This enables FairPrep to view data in relational form (as a pandas dataframe) or in matrix form (e.g., features as a numpy matrix), and to access extensive dataset metadata (e.g., sensitive attribute information). FairPrep integrates several datasets commonly used in fairness-related studies:

adult – The Adult Income dataset¹⁰ contains information about individuals from the 1994 U.S. census, with sensitive attributes race and sex, as well as instances with missing values. The task is to predict if an individual earns more or less than $50,000 per year.
germancredit – The German Credit dataset¹¹ contains demographic and financial data about people, as well as the sensitive attribute sex. The task is to predict an individual’s credit risk.
propublica – The ProPublica dataset¹² includes data such as criminal history, jail and prison time, demographics, and COMPAS risk scores for defendants from Broward County, Florida. It includes the sensitive attributes race and sex. The prediction concerns a binary “recidivism” outcome, denoting whether a person was rearrested within two years after the charge given in the data.
ricci – The Ricci dataset contains promotion data about firefighters, used as part of a Supreme Court case (Ricci v. DeStefano) dealing with racial discrimination. The dataset contains the sensitive attribute race. The task is to predict the promotion decision. The original promotion decision (assignment to the positive class) was made by a threshold of achieving a score of at least 70 on the combined exam outcome.

Integrating a custom dataset with FairPrep only requires users to load the data as a pandas dataframe and configure several class variables that denote which attributes to use as numeric and categorical features, which attribute to use as the class label, and how to identify the protected groups in the dataset.

Data Preprocessing Steps.
We highlight some of the data preprocessing and feature transformation operations supported by FairPrep. We integrate common feature scaling techniques from scikit-learn, such as standardisation and min-max scaling. We additionally provide a component that does not scale numeric features (a potentially harmful choice) for studying the effect of this preprocessing step.

Additionally, we provide a MissingValueHandler interface to define different ways of treating records with missing values. FairPrep offers a set of predefined strategies, such as ‘complete case analysis’ (removal of records with missing values) or a simple imputation strategy based on scikit-learn that fills in the most frequent value of an attribute (scikit-learn provides this as SimpleImputer with strategy='most_frequent'). In addition, our abstraction also supports more sophisticated techniques that learn a model to impute missing values. We provide an example of such a strategy as part of FairPrep's code base that leverages Datawig [4], an imputation library that automatically featurizes data and learns a deep learning model tailored to the data for imputation. Its implementation focuses on imputing one column at a time for efficient modeling. We utilize this approach in the fit method to learn an imputation model for each feature, using the remaining features (but not the class label) in the training dataset as input. At imputation time (in the handle_missing method), each of the fitted models is applied to the target data to impute the missing attributes.

¹⁰ https://archive.ics.uci.edu/ml/datasets/adult
¹¹ https://archive.ics.uci.edu/ml/support/Statlog+(German+Credit+Data)
¹² https://github.com/propublica/compas-analysis

```python
import datawig

class DatawigImputer(MissingValueHandler):
    ...
    def fit(self, train_data):
        columns = train_data.feature_columns
        # Learn an imputation model for each column, using all other
        # feature columns (but not the class label) as inputs
        for target_column in self.target_columns:
            input_columns = [column for column in columns
                             if column != target_column]
            # datawig's SimpleImputer takes input_columns/output_column;
            # each model is stored in its own output directory
            imputer = datawig.SimpleImputer(
                input_columns=input_columns,
                output_column=target_column,
                output_path=f'imputer_model_{target_column}')
            imputer.fit(train_df=train_data)
            self.imputers[target_column] = imputer

    def handle_missing(self, target_data):
        completed_data = target_data.copy()
        # Impute each column; datawig writes predictions to '<column>_imputed'
        for column in self.target_columns:
            predictions = self.imputers[column].predict(target_data)
            # Only fill the cells that are actually missing
            completed_data[column] = completed_data[column].fillna(
                predictions[column + '_imputed'])
        return completed_data
```

Models. FairPrep exposes a simple interface for learning algorithms, to allow the integration of many different models with low effort. The fit_model method of a learner provides the implementation with access to the training data and the random seed used by the current run (to allow for reproducible training). We provide implementations for common ML models, such as logistic regression (SGDClassifier with a logistic loss function) and decision trees from scikit-learn. We now give two examples of integrating learners: a baseline model from scikit-learn and an in-processing intervention from AIF360.

Integrating a baseline model from scikit-learn. We implement a logistic regression learner with 5-fold cross-validation in our framework as follows. We grid search over common hyperparameters for logistic regression, such as the type of regularization (penalty) and the regularization strength alpha, which under scikit-learn's default ‘optimal’ learning-rate schedule also determines the learning rate. With the defined parameter choices, the grid contains 12 hyperparameter settings, and 5-fold cross-validation trains each of them five times, so the grid search automatically fits 60 models.
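As a quick check of this count, the sketch below uses scikit-learn's ParameterGrid on a grid that mirrors the one used by the logistic regression learner in this section (three penalties, four values of alpha, one loss). The variable names are ours, and we write the loss as 'log_loss', the name that replaced 'log' in scikit-learn 1.1.

```python
from sklearn.model_selection import ParameterGrid

# Same shape as the logistic regression grid: 1 loss x 3 penalties x 4 alphas
param_grid = {
    'learner__loss': ['log_loss'],
    'learner__penalty': ['l2', 'l1', 'elasticnet'],
    'learner__alpha': [0.00005, 0.0001, 0.005, 0.001],
}

n_combinations = len(ParameterGrid(param_grid))  # candidate settings
n_folds = 5
n_fits = n_combinations * n_folds                # models trained during the search
print(n_combinations, n_fits)  # 12 60
```

With GridSearchCV's default refit=True, the best setting is additionally retrained once on the full training data after the search.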
Note that we propagate the random seed to all components to ensure reproducible behavior.

```python
from sklearn.linear_model import SGDClassifier
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import Pipeline

class LogisticRegression(Learner):

    def fit_model(self, train_data, seed):
        # Hyperparameter grid ('log_loss' was named 'log' before scikit-learn 1.1)
        param_grid = {
            'learner__loss': ['log_loss'],
            'learner__penalty': ['l2', 'l1', 'elasticnet'],
            'learner__alpha': [0.00005, 0.0001, 0.005, 0.001],
        }
        # Pipeline for the classifier; the seed makes SGD reproducible
        pipe = Pipeline([('learner', SGDClassifier(random_state=seed))])
        # Set up 5-fold cross-validation (GridSearchCV takes no random_state;
        # its default stratified folds are not shuffled and hence deterministic)
        search = GridSearchCV(pipe, param_grid, cv=5)
        # Learn the model via cross-validation; instance weights are routed
        # to the SGDClassifier step as sample weights
        return search.fit(train_data.features, train_data.labels,
                          learner__sample_weight=train_data.instance_weights)
```

Integrating an in-processing intervention. Next, we show how to integrate an in-processing fairness-enhancing intervention from AIF360. Adversarial debiasing [40] learns a classifier that maximizes prediction accuracy while simultaneously reducing an adversary's ability to determine the protected attribute from the predictions. This model can be integrated into FairPrep with a few lines of code.

```python
from aif360.algorithms.inprocessing import AdversarialDebiasing as AdversarialDebiasingAIF360

class AdversarialDebiasing(Learner):
    ...
    def fit_model(self, train_data, seed):
        ad_model = AdversarialDebiasingAIF360(
            privileged_groups=self.privileged_groups,
            unprivileged_groups=self.unprivileged_groups,
            scope_name='adversarial_debiasing',  # required by AIF360
            sess=self.tf_session,
            seed=seed)
        # train_data is expected to be an AIF360 BinaryLabelDataset
        return ad_model.fit(train_data)
```

Fairness-Enhancing Interventions. Next, we focus on fairness-enhancing interventions. Note that in-processing methods, which learn a specialized model, can simply be implemented as learners in our framework. Therefore, we only need to additionally integrate the pre-processing and post-processing interventions, for which FairPrep provides dedicated abstractions. In the following, we detail how to integrate a preprocessing technique called ‘disparate impact removal’ [7], which edits feature values to increase group fairness while preserving the rank-ordering within groups. The repair level parameter controls the amount of repair, from 0.0 (no repair) to 1.0 (full repair). We leverage the implementation from AIF360. Note that FairPrep provides information about the protected and unprotected groups in the dataset to the preprocessing intervention. The integration of post-processing techniques works analogously.

```python
from aif360.algorithms.preprocessing import DisparateImpactRemover

class DIRemover(Preprocessor):

    def __init__(self, repair_level):
        # 0.0 means no repair, 1.0 means full repair
        self.repair_level = repair_level

    def pre_process(self, data, privileged_groups, unprivileged_groups, seed):
        di_remover = DisparateImpactRemover(repair_level=self.repair_level)
        return di_remover.fit_transform(data)
```

Metrics. We leverage the metrics implementations from AIF360¹³ and compute 25 different metrics for the overall train and test sets, as well as separately for the privileged and unprivileged groups. In addition, we compute 22 different global metrics that compare the privileged and the unprivileged groups. By default, every experiment writes an output file with these metrics.

Example.
We finally revisit our introductory example from Section 1.1, where our data scientist Ann wants to investigate the impact of different fairness-enhancing interventions on her classifier that decides on payment option offerings.

```python
# Fixed random seeds for reproducibility
seeds = [46947, 71735, 94246, ...]
# Interventions
interventions = [NoIntervention(), Reweighing(), DIRemover(0.5)]

for seed in seeds:
    for intervention in interventions:
        # Configure the experiment
        exp = PaymentOptionGenderExperiment(
            random_seed=seed,
            missing_value_handler=DatawigImputer('age'),
            numeric_attribute_scaler=StandardScaler(),
            learner=LogisticRegression(),
            pre_processor=intervention)
        # Run the experiment and write the metrics to disk
        exp.run()
```

Ann integrates her custom dataset with FairPrep via a PaymentOptionGenderExperiment class, which describes how to load the dataset and defines the attributes to use as features, as the label, and as sensitive attributes. Next, she configures the experiment to match her use case by defining a logistic regression model as the baseline algorithm, leveraging Datawig to impute the age attribute of customers, and appropriately scaling features. Furthermore, she defines a set of pre-processing interventions whose impact on the outcome of the classifier she would like to investigate, and fixes the set of random seeds to use, to obtain reproducible results. Now she is ready to run the experiments, which will output the resulting metrics to disk (so that they can subsequently be explored via a Jupyter notebook).

¹³ https://github.com/IBM/AIF360/tree/master/aif360/metrics

[Figure 2 plots omitted: one panel per intervention (no intervention, di-remover (0.5), di-remover (1.0), reweighing, reject_option, cal_eq_odds); y-axis accuracy; x-axis DI, FNRD, or FPRD; no tuning vs. tuning.]
(a) Accuracy / disparate impact for tuned vs. non-tuned logistic regression models on germancredit.
(b) Accuracy / false negative rate difference for tuned vs. non-tuned logistic regression models on germancredit.
(c) Accuracy / false positive rate difference for tuned vs. non-tuned logistic regression models on germancredit.
(d) Accuracy / disparate impact for tuned vs. non-tuned decision tree models on germancredit.
(e) Accuracy / false negative rate difference for tuned vs. non-tuned decision tree models on germancredit.
(f) Accuracy / false positive rate difference for tuned vs. non-tuned decision tree models on germancredit.
Figure 2: Impact of hyperparameter tuning on the accuracy and fairness metrics of logistic regression and decision tree models (in combination with various preprocessing and postprocessing interventions) on the germancredit dataset. Hyperparameter tuning (red dots) results in higher accuracy and reduced variance of the fairness outcome compared to no tuning (gray dots) in many cases.

5 EXPERIMENTAL EVALUATION

We now demonstrate how FairPrep can be used to showcase and overcome some of the shortcomings outlined in Section 2.

5.1 Impact of Hyperparameter Tuning on the Variability of Accuracy and Fairness

In the first experiment, we aim to investigate the effect of not tuning the hyperparameters of baseline models during experimentation (as discussed in Section 2.2).

Dataset. We leverage the germancredit dataset for this experiment, which contains 20 demographic and financial attributes of 1000 people, as well as the sensitive attribute sex. The task is to predict each individual's credit risk.

Setup. We configure FairPrep as follows: we randomly split the data into 70% train data, 10% validation data, and 20% test data, based on supplied fixed random seeds (for reproducibility). We apply a fixed set of data preprocessing steps: we do not resample the data, do not handle missing values (as the data is already complete), and standardize numeric features.
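The seeded 70/10/20 split described above can be sketched by applying scikit-learn's train_test_split twice: first carve off 20% for testing, then take 12.5% of the remaining 80% (i.e., 10% of all records) for validation. This is an illustration under our own assumptions, not FairPrep's splitting code; the helper name split_70_10_20 is hypothetical.

```python
import numpy as np
from sklearn.model_selection import train_test_split

def split_70_10_20(n_records, seed):
    """Return train/validation/test index arrays for a seeded 70/10/20 split."""
    indices = np.arange(n_records)
    # First hold out 20% of all records as the test set ...
    train_val, test = train_test_split(indices, test_size=0.2, random_state=seed)
    # ... then 12.5% of the remaining 80%, i.e. 10% overall, as validation set
    train, val = train_test_split(train_val, test_size=0.125, random_state=seed)
    return train, val, test

# germancredit has 1000 records; the seed is one of the example seeds
train, val, test = split_70_10_20(1000, seed=46947)
print(len(train), len(val), len(test))  # 700 100 200
```

Calling the helper again with the same seed returns identical index arrays, which is what makes each run reproducible.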
We leverage two baseline models (logistic regression and decision trees) in two different variants each: (i) without hyperparameter tuning, where we simply use the default hyperparameters of the baseline model; (ii) with hyperparameter tuning, where we apply grid search (over 3 regularizers and 4 regularization strengths alpha for logistic regression; over 2 split criteria, 3 values for the maximum depth, 4 for the minimum number of samples per leaf, and 3 for the minimum number of samples per split for the decision tree) and five-fold cross-validation on the training data. We apply three different fairness-enhancing interventions that preprocess the data: ‘disparate impact remover’ (‘di-remover’ in the plots) [7] with repair levels 0.5 and 1.0, as well as ‘reweighing’ [15]. Additionally, we experiment with two different fairness-enhancing interventions that post-process the predictions: ‘reject option classification’ [16] and ‘calibrated equalized odds’ [28]. We leverage 16 different random seeds for the experiment and execute 1,344 runs in total. We report metrics computed from the predictions on the held-out test set.

[Figure 3 plots omitted: panels for no intervention, reweighing, and di-remover; y-axis accuracy; x-axis disparate impact (DI); no scaling vs. scaling.]
(a) High sensitivity to feature scaling for logistic regression on the ricci dataset. Red dots represent experiments with feature scaling; gray dots represent experiments without scaling and contain many cases where no satisfactory classifier could be learned.
(b) Robustness of decision trees against a lack of feature scaling on the ricci dataset. Red dots represent experiments with feature scaling; gray dots represent experiments without feature scaling.
Figure 3: Comparison of the impact of a lack of numeric feature scaling on the ricci dataset. Decision trees are robust against this lack, while logistic regression often fails to learn a reasonable classifier.

Results. We plot the results of this experiment in Figure 2, where we show the resulting accuracy and several fairness-related measures¹⁴ between the privileged and unprivileged groups, including disparate impact (DI), the difference in false negative rates (FNRD), and the difference in false positive rates (FPRD). DI is the ratio of the positive-outcome rates of the unprivileged and privileged groups, so a value of 1 denotes parity, while FNRD and FPRD are differences between the groups' error rates, so a value of 0 denotes parity. The red dots denote the outcome when we apply hyperparameter tuning to the baseline model, while the gray dots denote the outcome using the default model parameters, without tuning. We observe a large number of cases where the tuned variant results in both a higher-accuracy model and a lower variance in the fairness outcome. Examples are (i) the accuracy and disparate impact for the ‘di-remover’ and ‘reweighing’ interventions for both logistic regression and decision trees in Figures 2(a) and 2(d); (ii) the accuracy and false negative rate difference for ‘di-remover’ with logistic regression, ‘di-remover’ with decision trees, and ‘reweighing’ with decision trees in Figures 2(b) and 2(e); and (iii) the accuracy and false positive rate difference for ‘di-remover’ with both models and ‘reweighing’ with the decision tree in Figures 2(c) and 2(f). These results strongly suggest that the high variability of the fairness and accuracy outcomes with respect to different train/test splits observed in previous studies [9] might be an artifact of the lack of hyperparameter tuning of the baseline models in these studies (as discussed in Section 2.2).

¹⁴ Note that we plot these measures regardless of whether the intervention optimizes for them or not.

5.2 Impact of Feature Scaling

In the next experiment, we show that the lack of feature scaling (Section 2.3) can lead to a failure to learn a well-performing model.

Dataset.
We leverage the ricci dataset, which has 118 entries and five attributes, including the sensitive attribute race.

Setup. We configure FairPrep as follows: we randomly split the data into 70% train data, 10% validation data, and 20% test data, based on supplied random seeds. We do not resample the data, and do not need to handle missing values, as the data is already complete. We leverage two baseline models (logistic regression and decision tree), with hyperparameter tuning analogous to the previous section, and two fairness-enhancing interventions that preprocess the data: ‘disparate impact remover’ and ‘reweighing’. We vary the treatment of numeric features, however: for one set of runs, we leave them on their original scale, and for the remaining runs we standardize them using our integration of scikit-learn's StandardScaler. We execute 216 runs in total and report metrics from predictions on the held-out test set.

Results. The results of our runs are illustrated in Figure 3, where Figure 3(a) shows the accuracy and disparate impact for the logistic regression model (under different interventions), and Figure 3(b) analogously shows the results for the decision tree. The lack of scaling did not impact the results for the decision tree: the red dots (with feature scaling) and the gray dots (without feature scaling) overlap. Logistic regression (trained with stochastic gradient descent in this setup), however, often fails to learn a valid model in the case of unscaled features, resulting in an accuracy under 50%, which is worse than assigning classification decisions at random. This contrast is expected: a decision tree splits on thresholds of individual features, which are unaffected by rescaling, whereas the gradient steps of SGD depend on the magnitudes of the feature values. This finding confirms our claim that a lack of feature scaling alone can lead to unsatisfactory results in an ML evaluation setup (independently of fairness-related issues).
5.3 Impact of Missing Value Imputation

In our third experiment, we showcase how FairPrep can be leveraged to investigate the effects of including records with missing values in a study (such records are commonly filtered out in other studies and toolkits, as discussed in Section 2.4).

Dataset. We leverage the adult dataset for this experiment, with 32,561 instances and 14 attributes, including the sensitive attributes race and sex, and 2,399 instances with missing values. The task is to predict whether an individual makes more or less than $50,000 per year. Fairness evaluation is conducted between the privileged group of white individuals (85% of records) and the unprivileged group of non-white individuals (15% of records). Among the 14 attributes, three have missing values: workclass, occupation, and native-country. Missing values do not seem to occur at random, as the records with missing values exhibit very different statistics from the complete records. For example, the positive class label (high income) occurs with 24% probability among the complete records, but only with 14% probability among the records with missing values. Additionally, married individuals are in the vast majority in the complete records, while the most frequent marital-status among the incomplete records is never-married.

Furthermore, the records with missing values from the privileged group are very different from the records with missing values from the unprivileged group. For example, the attribute native-country is missing four times more frequently for non-white individuals than for white individuals. Among the incomplete privileged records, there is a 15% chance of a high income, the second-largest age group consists of 60- to 70-year-olds, and the majority of the individuals are married. The incomplete records from the unprivileged group, however, have only a 10.6% chance of a high income, contain very few seniors, and the majority of the individuals are unmarried.

[Figure 4 plots omitted: panels for no intervention, reweighing, and di-remover; axes show accuracy under datawig imputation and under mode imputation; imputed vs. complete records.]
(a) Accuracy for different missing value imputation strategies and a logistic regression baseline model on the adult dataset. Imputed records are denoted by red dots, complete records by gray dots. No significant difference between mode and datawig imputation is observed.
(b) Accuracy for different missing value imputation strategies and a decision tree baseline model on the adult dataset. Imputed records are denoted by red dots, complete records by gray dots. No significant difference between mode and datawig imputation is observed.
Figure 4: Impact of missing value imputation on the prediction accuracy for different imputation strategies and interventions. We observe a higher accuracy for incomplete records (red dots), which we attribute to the fact that the data is not missing at random, and that incomplete records contain more easy-to-classify negative examples.

Setup. We configure FairPrep as follows: we randomly split the data into 70% train data, 10% validation data, and 20% test data, based on supplied random seeds. We do not resample the data, standardize numerical features, and leverage logistic regression and decision trees as baseline learners, with hyperparameter tuning analogous to the previous experiments.
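The comparison of complete and incomplete records above, together with the two simpler missing-value strategies compared in this experiment (complete case analysis and mode imputation), can be sketched in a few lines of pandas. This is an illustrative sketch rather than FairPrep's implementation: the helpers missingness_report, complete_case, and mode_impute and the toy records are hypothetical. Note that the mode is computed on the training data only and then applied to the target data.

```python
import pandas as pd

def missingness_report(df, group_col, label_col, positive_label):
    """Contrast records with and without missing values."""
    incomplete = df.isna().any(axis=1)
    return {
        # Share of incomplete records within each group
        'missing_rate_by_group': incomplete.groupby(df[group_col]).mean().to_dict(),
        # Positive-label rate among complete vs. incomplete records
        'pos_rate_complete': (df.loc[~incomplete, label_col] == positive_label).mean(),
        'pos_rate_incomplete': (df.loc[incomplete, label_col] == positive_label).mean(),
    }

def complete_case(df):
    """Complete case analysis: drop every record that has a missing value."""
    return df.dropna()

def mode_impute(train, target, columns):
    """Fill missing values in `target` with the most frequent value in `train`."""
    completed = target.copy()
    for column in columns:
        most_frequent = train[column].mode().iloc[0]  # mode() ignores missing values
        completed[column] = completed[column].fillna(most_frequent)
    return completed

# Hypothetical toy records (not taken from the adult dataset)
toy = pd.DataFrame({
    'race': ['white', 'white', 'white', 'non-white', 'non-white'],
    'workclass': ['Private', None, 'Private', None, 'Self-emp'],
    'income': ['>50K', '<=50K', '<=50K', '<=50K', '>50K'],
})
report = missingness_report(toy, 'race', 'income', '>50K')
complete = complete_case(toy)                   # keeps 3 of the 5 records
imputed = mode_impute(toy, toy, ['workclass'])  # both gaps become 'Private'
```

In this toy example, both incomplete records have the negative label, mirroring in miniature the pattern described for adult.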
We apply two different fairness-enhancing interventions that preprocess the data: ‘disparate impact remover’ and ‘reweighing’. We vary the strategy to treat missing values for this experiment: (i) we apply complete case analysis and remove incomplete records; (ii) we retain all records and impute missing values with ‘mode imputation’; (iii) we retain all records and apply model-based imputation with datawig [4]. We execute 530 runs in total, and again report metrics from predictions on the held-out test set.

[Figure 5 plots omitted: panels for no intervention, reweighing, and di-remover; y-axis accuracy; x-axis disparate impact (DI); complete case vs. datawig.]
(a) Impact of the inclusion of imputed records (red dots) on the accuracy and disparate impact of a logistic regression model and various interventions on adult.
(b) Impact of the inclusion of imputed records (red dots) on the accuracy and disparate impact of a decision tree model and various interventions on adult.
Figure 5: Comparison of accuracy and disparate impact on the adult dataset for complete case analysis (removal of incomplete records, gray dots) and inclusion of incomplete records (with imputation of missing values via datawig, red dots). Including imputed records does not significantly affect the disparate impact of the resulting models.

Results. Figure 4 shows the classification accuracy for complete (gray dots) and incomplete (red dots) records, under imputation with mode and datawig. First, we observe that records with imputed values achieve high accuracy. This is a significant result, since these records could not have been classified at all before imputation.
Interestingly, we observe higher accuracy for records with missing values compared to the complete records. Based on our understanding of the data, described earlier in this section, we attribute this to the higher fraction of (easier to classify) negative examples among the incomplete records. Further, we do not observe a significant difference in accuracy between mode imputation and datawig imputation. We attribute this to the highly skewed distribution of the attributes to impute, which is a favorable setting for mode imputation. Because datawig does no worse than mode imputation, and is expected to perform better in general [4], we only present results for datawig-based imputation in the next, and final, plot.

Figure 5 shows the accuracy and disparate impact of complete case analysis (i.e., the removal of incomplete records) versus the inclusion of incomplete records with datawig imputation. We observe a minimally higher accuracy when including incomplete records, but in general find no significant positive or negative impact on disparate impact. Taken together, the results in Figures 4 and 5 paint an encouraging picture: imputation allows us to classify records with missing values, and to do so accurately, and it does not degrade performance, either in terms of accuracy or in terms of fairness, for the complete records.

6 RELATED WORK

As the research on algorithmic fairness begins to mature, efforts are being made to standardize the requirements [20], systematize the measures and the algorithms [25, 41], and generalize the insights [5, 8, 19]. As we are preparing to translate the research advances made by this community into data science practice, it is essential that we develop methods for the judicious evaluation of our techniques, and for integrating them into real-world testing and deployment scenarios.
Our work on FairPrep is motivated by this need.

The importance of benchmarking. Other systems communities have benefited tremendously from benchmarking and standardisation efforts. For example, the investment of the National Institute of Standards and Technology (NIST) in the Text REtrieval Conference (TREC), which started in 1992, brought tremendous benefits both to the Information Retrieval community and to the global economy [36]. The Transaction Processing Performance Council (TPC), established in 1988 to develop transaction processing and database benchmarks, has had a similar impact on the widespread commercial adoption of relational data management technology [24]. While the algorithmic fairness community may not yet be ready for standardization, considering that our understanding of the fairness measures and their trade-offs is still evolving, we are certainly ready to move past the initial stage of wild exploration and toward developing and adhering to rigorous evaluation and software engineering best practices.

Design and evaluation frameworks for fairness. In our work on FairPrep, we build on the efforts of Friedler et al. [9] to develop a generalizable methodology for comparing the performance of fairness-enhancing interventions, and on the work of Bellamy et al. [3] to provide a standardized implementation framework for these methods. We are also inspired by Stoyanovich et al. [35], who advocate for systems-level support for responsibility properties throughout the data life cycle. Other relevant efforts include FairTest [37], a methodology and a framework for identifying “unwarranted associations” that may correspond to unfair, discriminatory, or offensive user treatment in data-driven applications. FairTest automatically discovers associations between outcomes and sensitive attributes, and provides debugging capabilities that let programmers rule out potential confounders for observed unfair effects.
The fairness-aware programming project [1] also shares motivation with our work, in that it develops a methodology for handling fairness as a systems requirement. Specifically, the authors develop a specification language that allows programmers to state fairness expectations natively in their code, and have a runtime system monitor decision-making and report violations of fairness. These statements are then translated to Python decorators, which wrap and modify function behavior. The main difference from our approach is that we do not assume a homogeneous programming environment, but rather incorporate fairness interventions into data-rich machine learning pipelines, while paying close attention to data preprocessing. Another relevant line of work is Themis [10], a software testing framework that automatically designs fairness tests for black-box systems.

General challenges in end-to-end machine learning. Software systems that learn from data using machine learning (ML) are being deployed in increasing numbers in the real world. The operation and monitoring of such systems introduce novel challenges, which are very different from the challenges encountered in traditional data processing systems [22, 34]. ML systems in the real world exhibit a much higher complexity than “textbook” ML scenarios (e.g., training a classifier on a standard benchmark dataset). Real-world systems not only have to learn a single model, but must define and execute a whole ML pipeline, which includes data preprocessing operations such as data cleaning, standardisation, and feature extraction in addition to learning the model, as well as methods for hyperparameter selection and model evaluation. Such ML pipelines are typically deployed in systems for end-to-end machine learning [2, 31], which require the integration and validation of raw input data from various sources, as well as infrastructure for deploying and serving the trained models.
These systems must also manage the lifecycle of data and models in such scenarios [29], as new (and potentially changing) input data has to be continuously processed, and the corresponding ML models have to be retrained and managed accordingly. Many of the challenges incurred by end-to-end ML have only recently begun to attract the attention of the academic community. These include enabling industry practitioners to improve fairness in real-world ML systems [1, 12], efficiently testing and debugging ML models [6, 27], and recording the metadata and lineage of ML experiments [32, 38, 39]. We contribute to this line of work by presenting a framework that brings insights from end-to-end machine learning to the fairness, accountability, and transparency community.

7 CONCLUSIONS & FUTURE WORK

We identified shortcomings in existing empirical studies and toolkits for analyzing fairness-enhancing interventions. Subsequently, we presented the design and implementation of our evaluation framework FairPrep. This framework empowers data scientists and software developers to configure and customise experiments on fairness-enhancing interventions with low effort, and enforces best practices in software engineering and machine learning at the same time. We demonstrated how FairPrep can be leveraged to measure the impact of sound best practices, such as hyperparameter tuning and feature scaling, on the fairness and accuracy of the resulting classifiers. Additionally, we showcased how FairPrep enables the inclusion of incomplete data in studies (through data cleaning methods such as missing value imputation), and helps to analyze the resulting effects.

Future work. We aim to extend FairPrep by integrating additional fairness-enhancing interventions [13, 30], datasets, preprocessing techniques (such as stratified sampling), and feature transformations (such as embeddings of the input data).
Additionally, we intend to extend its scope to scenarios beyond binary classification. Furthermore, we would like to strengthen the human-in-the-loop character of FairPrep by adding visualizations and allowing end-users to control experiments with low effort. While our current focus is on data scientists and software developers as end-users, we believe it is also crucial to empower less technical users to conduct fairness-related studies [23].

REFERENCES

[1] Aws Albarghouthi and Samuel Vinitsky. 2019. Fairness-Aware Programming. In Proceedings of the Conference on Fairness, Accountability, and Transparency, FAT* 2019, Atlanta, GA, USA, January 29-31, 2019. 211–219. https://doi.org/10.1145/3287560.3287588
[2] Denis Baylor, Eric Breck, Heng-Tze Cheng, Noah Fiedel, Chuan Yu Foo, Zakaria Haque, Salem Haykal, Mustafa Ispir, Vihan Jain, Levent Koc, et al. 2017. TFX: A TensorFlow-based production-scale machine learning platform. In KDD. 1387–1395.
[3] Rachel KE Bellamy, Kuntal Dey, Michael Hind, Samuel C Hoffman, Stephanie Houde, Kalapriya Kannan, Pranay Lohia, Jacquelyn Martino, Sameep Mehta, Aleksandra Mojsilovic, et al. 2019. AI fairness 360: An extensible toolkit for detecting, understanding, and mitigating unwanted algorithmic bias. FAT*ML (2019).
[4] Felix Biessmann, David Salinas, Sebastian Schelter, Philipp Schmidt, and Dustin Lange. 2018. Deep Learning for Missing Value Imputation in Tables with Non-Numerical Data. In Proceedings of the 27th ACM International Conference on Information and Knowledge Management. ACM, 2017–2025.
[5] Alexandra Chouldechova. 2017. Fair prediction with disparate impact: A study of bias in recidivism prediction instruments. CoRR abs/1703.00056 (2017). arXiv:1703.00056 http://arxiv.org/abs/1703.00056
[6] Yeounoh Chung, Tim Kraska, Neoklis Polyzotis, Ki Hyun Tae, and Steven Euijong Whang. 2019. Slice Finder: Automated data slicing for model validation. In ICDE. 1550–1553.
[7] Michael Feldman, Sorelle A. Friedler, John Moeller, Carlos Scheidegger, and Suresh Venkatasubramanian. 2015. Certifying and Removing Disparate Impact. In Proceedings of the 21th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, Sydney, NSW, Australia, August 10-13, 2015. 259–268. https://doi.org/10.1145/2783258.2783311
[8] Sorelle A. Friedler, Carlos Scheidegger, and Suresh Venkatasubramanian. 2016. On the (im)possibility of fairness. CoRR abs/1609.07236 (2016). http://arxiv.org/abs/1609.07236
[9] Sorelle A. Friedler, Carlos Scheidegger, Suresh Venkatasubramanian, Sonam Choudhary, Evan P. Hamilton, and Derek Roth. 2019. A comparative study of fairness-enhancing interventions in machine learning. In Proceedings of the Conference on Fairness, Accountability, and Transparency, FAT* 2019, Atlanta, GA, USA, January 29-31, 2019. 329–338. https://doi.org/10.1145/3287560.3287589
[10] Sainyam Galhotra, Yuriy Brun, and Alexandra Meliou. 2017. Fairness testing: testing software for discrimination. In Proceedings of the 2017 11th Joint Meeting on Foundations of Software Engineering, ESEC/FSE 2017, Paderborn, Germany, September 4-8, 2017. 498–510. https://doi.org/10.1145/3106237.3106277
[11] Trevor Hastie, Robert Tibshirani, Jerome Friedman, and James Franklin. 2005. The elements of statistical learning: data mining, inference and prediction. The Mathematical Intelligencer 27, 2 (2005), 83–85.
[12] Kenneth Holstein, Jennifer Wortman Vaughan, Hal Daumé III, Miro Dudík, and Hanna Wallach. 2019. Improving fairness in machine learning systems: What do industry practitioners need? CHI (2019).
[13] Lingxiao Huang and Nisheeth Vishnoi. 2019. Stable and Fair Classification. In ICML, Kamalika Chaudhuri and Ruslan Salakhutdinov (Eds.), Vol. 97. 2879–2890.
[14] Rod Johnson, Juergen Hoeller, Alef Arendsen, and R Thomas. 2009. Professional Java development with the Spring framework. John Wiley & Sons.
[15] Faisal Kamiran and Toon Calders. 2012. Data preprocessing techniques for classification without discrimination. Knowledge and Information Systems 33, 1 (2012), 1–33.
[16] Faisal Kamiran, Asim Karim, and Xiangliang Zhang. 2012. Decision theory for discrimination-aware classification. In 2012 IEEE 12th International Conference on Data Mining. IEEE, 924–929.
[17] Joost Kappelhof. 2017. Total Survey Error in Practice. Chapter Survey Research and the Quality of Survey Data Among Ethnic Minorities.
[18] Keith Kirkpatrick. 2017. It’s Not the Algorithm, It’s the Data. Commun. ACM 60, 2 (Jan. 2017), 21–23. https://doi.org/10.1145/3022181
[19] Jon M. Kleinberg. 2018. Inherent Trade-Offs in Algorithmic Fairness. In Abstracts of the 2018 ACM International Conference on Measurement and Modeling of Computer Systems, SIGMETRICS 2018, Irvine, CA, USA, June 18-22, 2018. 40. https://doi.org/10.1145/3219617.3219634
[20] Ansgar R. Koene, Liz Dowthwaite, and Suchana Seth. 2018. IEEE P7003™ standard for algorithmic bias considerations: work in progress paper. In Proceedings of the International Workshop on Software Fairness, FairWare@ICSE 2018, Gothenburg, Sweden, May 29, 2018. 38–41. https://doi.org/10.1145/3194770.3194773
[21] Ron Kohavi et al. 1995. A study of cross-validation and bootstrap for accuracy estimation and model selection. In IJCAI, Vol. 14. Montreal, Canada, 1137–1145.
[22] Arun Kumar, Robert McCann, Jeffrey Naughton, and Jignesh M Patel. 2016. Model selection management systems: The next frontier of advanced analytics. ACM SIGMOD Record 44, 4 (2016), 17–22.
[23] David Lehr and Paul Ohm. 2017. Playing with the Data: What Legal Scholars Should Learn about Machine Learning. UC Davis Law Review 51, 2 (2017), 653–717.
[24] Raghu Nambiar, Nicholas Wakou, Forrest Carman, and Michael Majdalany. 2010. Transaction Processing Performance Council (TPC): State of the Council 2010, Vol. 6417. 1–9. https://doi.org/10.1007/978-3-642-18206-8_1
[25] Arvind Narayanan. 2018. 21 fairness definitions and their politics. Conference on Fairness, Accountability, and Transparency (2018).
[26] Fabian Pedregosa, Gaël Varoquaux, Alexandre Gramfort, Vincent Michel, Bertrand Thirion, Olivier Grisel, Mathieu Blondel, Peter Prettenhofer, Ron Weiss, Vincent Dubourg, et al. 2011. Scikit-learn: Machine learning in Python. Journal of machine learning research 12, Oct (2011), 2825–2830.
[27] Kexin Pei, Yinzhi Cao, Junfeng Yang, and Suman Jana. 2017. DeepXplore: Automated whitebox testing of deep learning systems. In SOSP. 1–18.
[28] Geoff Pleiss, Manish Raghavan, Felix Wu, Jon Kleinberg, and Kilian Q Weinberger. 2017. On fairness and calibration. In Advances in Neural Information Processing Systems. 5680–5689.
[29] Neoklis Polyzotis, Sudip Roy, Steven Euijong Whang, and Martin Zinkevich. 2018. Data life cycle challenges in production machine learning: a survey. ACM SIGMOD Record 47, 2 (2018), 17–28.
[30] Babak Salimi, Luke Rodriguez, Bill Howe, and Dan Suciu. 2019. Interventional fairness: Causal database repair for algorithmic fairness. In SIGMOD. 793–810.
[31] Sebastian Schelter, Felix Biessmann, Tim Januschowski, David Salinas, Stephan Seufert, Gyuri Szarvas, Manasi Vartak, Samuel Madden, Hui Miao, Amol Deshpande, et al. 2018. On Challenges in Machine Learning Model Management. IEEE Data Eng. Bull. 41, 4 (2018), 5–15.
[32] Sebastian Schelter, Joos-Hendrik Boese, Johannes Kirschnick, Thoralf Klein, and Stephan Seufert. 2017. Automatically tracking metadata and provenance of machine learning experiments. Machine Learning Systems workshop at NeurIPS (2017).
[33] Peter Schmitt, Jonas Mandel, and Mickael Guedj. 2015. A comparison of six methods for missing data imputation. Journal of Biometrics & Biostatistics 6, 1 (2015), 1.
[34] David Sculley, Gary Holt, Daniel Golovin, Eugene Davydov, Todd Phillips, Dietmar Ebner, Vinay Chaudhary, Michael Young, Jean-Francois Crespo, and Dan Dennison. 2015. Hidden technical debt in machine learning systems. In Advances in neural information processing systems. 2503–2511.
[35] Julia Stoyanovich, Bill Howe, Serge Abiteboul, Gerome Miklau, Arnaud Sahuguet, and Gerhard Weikum. 2017. Fides: Towards a Platform for Responsible Data Science. In Proceedings of the 29th International Conference on Scientific and Statistical Database Management, Chicago, IL, USA, June 27-29, 2017. 26:1–26:6. https://doi.org/10.1145/3085504.3085530
[36] Gregory Tassey. 2010. Economic Impact Assessment of NIST’s Text REtrieval Conference (TREC) Program. https://trec.nist.gov/pubs/2010.economic.impact.pdf
[37] Florian Tramèr, Vaggelis Atlidakis, Roxana Geambasu, Daniel J. Hsu, Jean-Pierre Hubaux, Mathias Humbert, Ari Juels, and Huang Lin. 2017. FairTest: Discovering Unwarranted Associations in Data-Driven Applications. In 2017 IEEE European Symposium on Security and Privacy, EuroS&P 2017, Paris, France, April 26-28, 2017. 401–416. https://doi.org/10.1109/EuroSP.2017.29
[38] Joaquin Vanschoren, Jan N Van Rijn, Bernd Bischl, and Luis Torgo. 2014. OpenML: networked science in machine learning. ACM SIGKDD Explorations Newsletter 15, 2 (2014), 49–60.
[39] Manasi Vartak, Harihar Subramanyam, Wei-En Lee, Srinidhi Viswanathan, Saadiyah Husnoo, Samuel Madden, and Matei Zaharia. 2016. ModelDB: a system for machine learning model management. In Proceedings of the Workshop on Human-In-the-Loop Data Analytics. ACM, 14.
[40] Brian Hu Zhang, Blake Lemoine, and Margaret Mitchell. 2018. Mitigating unwanted biases with adversarial learning. In Proceedings of the 2018 AAAI/ACM Conference on AI, Ethics, and Society. ACM, 335–340.
[41] Indre Zliobaite. 2017. Measuring discrimination in algorithmic decision making. Data Min. Knowl. Discov. 31, 4 (2017), 1060–1089. https://doi.org/10.1007/s10618-017-0506-1