Adapting a Container Infrastructure for Autonomous Vehicle Development


Authors: Yujing Wang, Qinyang Bao

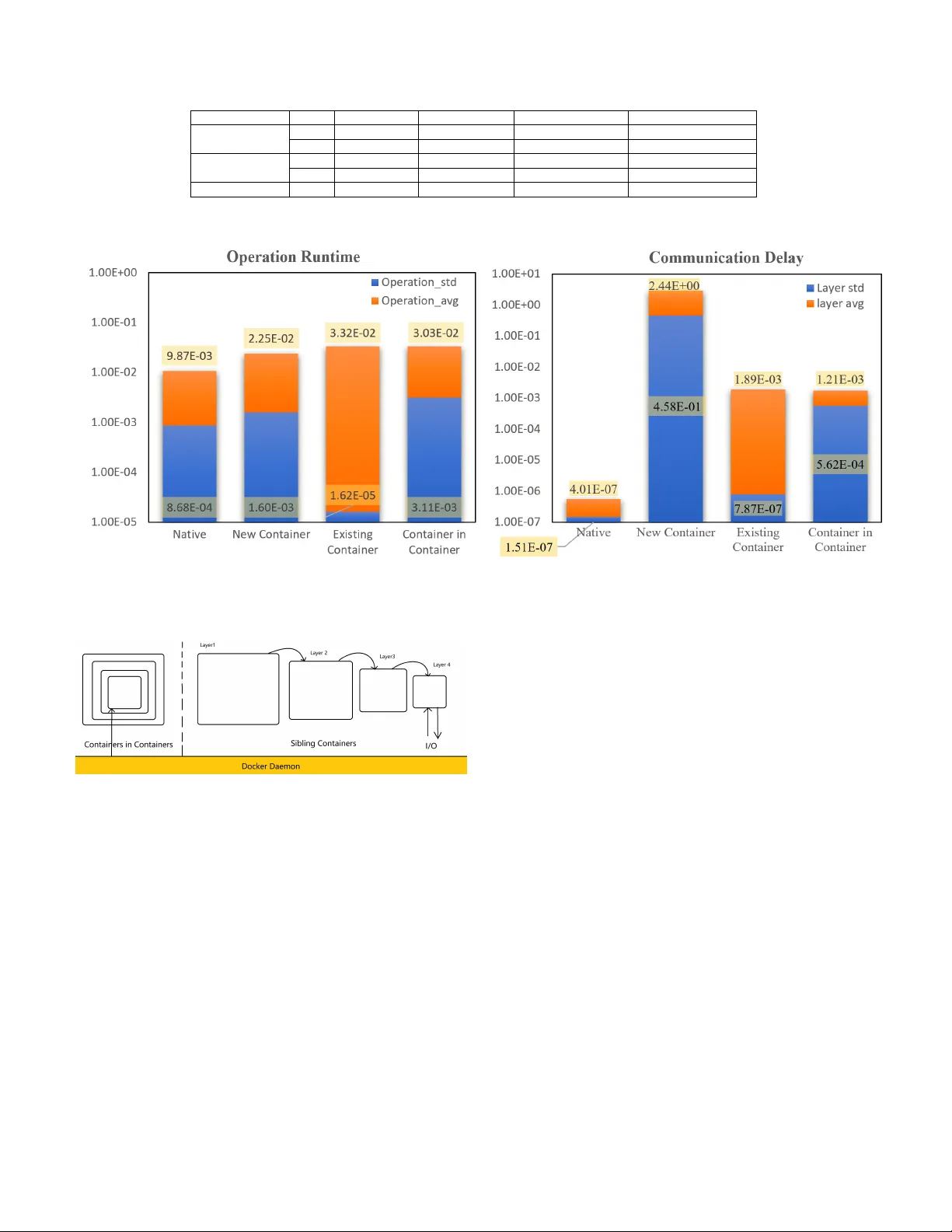

Yujing Wang, Department of Mechanical and Mechatronics Engineering, University of Waterloo, Waterloo, ON, Canada, yj9wang@edu.uwaterloo.ca
Qinyang Bao, Department of Mechanical and Mechatronics Engineering, University of Waterloo, Waterloo, ON, Canada, q7bao@edu.uwaterloo.ca

Abstract: In the field of Autonomous Vehicle (AV) development, a robust yet flexible infrastructure enables code to be continuously integrated and deployed, which in turn accelerates rapid prototyping. The platform-agnostic and scalable container infrastructure, widely used by developers in the cloud domain, is a viable solution to this need in AV development. Developers use tools such as Docker to build containers and Kubernetes to set up container networks. This paper presents a container infrastructure strategy for AV development, discusses the scenarios in which this strategy is useful, and analyzes container boundary overhead and its impact on a Mixed Critical System (MCS). An experiment was conducted to compare both the operation runtime and the communication delay of running a Gauss-Seidel algorithm with I/O in four different environments: native OS, new container, existing container, and nested container. The comparison reveals that running in containers does add delay to signal response time but behaves more deterministically, and that nesting containers does not stack up delays but makes the process less deterministic. With these findings in mind, developers can make more informed decisions when setting up a container infrastructure, taking full advantage of it while avoiding some common pitfalls.

Index Terms: Autonomous Vehicle, Container, Docker, Deterministic, Realtime, Continuous Integration, Kubernetes, Mixed Critical System

I. INTRODUCTION

Agile is an approach to software development that has been quickly winning favor with cloud developers over the traditional waterfall model. In agile practice, software developers write code, run it through CI/CD pipelines, integrate daily, and deploy as soon as a new feature needs to be tested [1]. This practice lets developers test newly developed prototypes in many more scenarios and much more frequently. As a result, more iterations can be performed and more bugs discovered along the way, improving both the quality of the software and the speed of its development.

Applying agile practice to AV development is more challenging, because the software on an AV spans a wide range of temporal criticality: from low-level safety-critical mechanical controls, to embedded realtime systems, to mid-level networking applications, to high-level perception models. Fearing that they will break the temporal separation in an MCS, developers are generally hesitant to devise a unified infrastructure strategy. Furthermore, the business practice of dividing teams into fine-grained functional units reinforces a status-quo mindset in which things are done the way they always have been. As a result, each team must repeat the same adaptation process for each vehicle during each iteration, which greatly slows down the development and testing feedback loop.

A well-designed container infrastructure removes these overheads and allows developers to build once and run on any platform. The accelerated build-and-test cycle makes continuous delivery of new features in response to ever-changing requirements possible [1]. The idea of a container originated with Linux namespaces, which make it possible to dedicate an exclusive set of resources to a task [2]. Docker later emerged to streamline this resource isolation process.
Docker packages all software dependencies and run instructions into an isolated environment called an "image". Each software dependency, or each step of the run instructions, is a layer in the image [3]. To update the image, Docker updates only the corresponding layer, without modifying the rest of the image. Docker then deploys the image as containers that are independent of other containers and of the host environment. The Docker daemon supplies the needed resources from the host machine to each container, which saves the developer from having to deploy an entire OS for each application, as a virtual machine infrastructure requires (Fig. 1).

Fig. 1: Containers vs Virtual Machine Infrastructure [4]

As the number of containers increases, establishing an efficient container network becomes crucial, especially for an AV, where storage and computing power are highly constrained. Kubernetes is an open-source container orchestration tool that lets the developer manage a network of containers [2]. Kubernetes reads declarative configurations from YAML files in which the developer specifies the desired state. Knowing both the current state and the desired state, Kubernetes works to bring the current state to the desired state [5]. Kubernetes automates the tedious process of spawning, updating, and healing any number of containers. Moreover, it lets developers provision system resources for each container.

Fig. 2 depicts the master-worker architecture of Kubernetes [6]. The master node accepts commands from the user, stores the configuration, schedules pods, and carries out actions by sending signals to the worker nodes. Worker nodes are connected to the master through Kube-proxy. Once a signal is received from the master node, the Kubelet on each worker node executes the action accordingly.

Fig. 2: Architecture of Kubernetes [6]

This paper presents the scenarios in which a container infrastructure benefits AV development and examines the resource and time overhead that each container layer adds.

II. ARCHITECTURE AND APPLICABLE SCENARIOS

A. Multiple Variation for one Scenario

In an AV, complex tasks such as lane changing, parking, and merging/yielding rely on a line of agents operating on data: data are collected and analyzed, and actions are executed based on the analysis. Most actions are performed by local agents; some are performed by remote agents connected to each vehicle via the internet. By using cloud computing, a vehicle can perform far more powerful data analysis than its limited local resources can support. This mix of local and remote agents makes up a Cyber Physical System (CPS) [7].

Fig. 3 shows the data processing line. Located at the very beginning of the pipeline are the data collectors: sensors such as lidars and cameras. On top of each sensor sits its respective driver. A vehicle may carry multiple sensors of the same type that are highly similar but not built on identical hardware. Take the on-vehicle cameras as an example: the front cam1, optimized for traffic observation, differs from the driver back cam, adapted for checking rear approaches. Managing different versions and variations of camera drivers is tedious, especially when one needs to perform A/B split testing to see which version performs better [1].

Fig. 3: Line of Data Agents on an AV

In a container infrastructure, the user packages each revision and version in a Docker image and tags it as `sensor_type:variation_version`. For example, the developer may name version 1.2 of the front-left camera driver `camera_driver:frontLeft_v1.2`.
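The tagging convention above can be sketched in a few lines of Python. This is a minimal illustration under our own assumptions, not the authors' tooling: the helper names (`driver_image`, `parse_image`), the sensor IDs, and the `SENSOR_IMAGES` dictionary are hypothetical, and the dictionary only stands in for a key-value file that ties physical sensors to driver images. Under this scheme, switching a sensor to another variation for A/B testing amounts to changing one tag string.

```python
# Hypothetical helpers illustrating a `sensor_type:variation_version` tag scheme.
from typing import Dict, Tuple


def driver_image(sensor_type: str, variation: str, version: str) -> str:
    """Build an image reference such as 'camera_driver:frontLeft_v1.2'."""
    return f"{sensor_type}:{variation}_v{version}"


def parse_image(image: str) -> Tuple[str, str, str]:
    """Split 'camera_driver:frontLeft_v1.2' into ('camera_driver', 'frontLeft', '1.2')."""
    sensor_type, tag = image.split(":", 1)
    # Split on the last '_v' so the variation name itself may contain underscores.
    variation, version = tag.rsplit("_v", 1)
    return sensor_type, variation, version


# Hypothetical key-value mapping from physical sensor IDs to driver images.
SENSOR_IMAGES: Dict[str, str] = {
    "cam_front_left": driver_image("camera_driver", "frontLeft", "1.2"),
    "cam_rear": driver_image("camera_driver", "rearApproach", "1.0"),
}

if __name__ == "__main__":
    for sensor_id, image in SENSOR_IMAGES.items():
        print(sensor_id, "->", image, parse_image(image))
```

Keeping the variation and the version in the tag, rather than in the image name, lets every variation of one driver share a single image repository.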
Using Kubernetes' replicable and self-healing Deployment object, the developer can write a Helm template manifest (rendered by the Helm templating engine) for managing the sensor driver containers [7], and then specify the correspondence between physical sensors and containers in a key-value file. Data processing agents further down the pipeline can be broken down in the same way: each agent stands alone in one container, receives input from upstream agents, processes it, and sends the results to downstream agents.

B. One Module used in Multiple Scenarios

The reverse is also true: a module can be packaged once and employed in different scenarios. This allows an application that handles one specific task to be redeployed on different devices when a change in environment affects only its upstream or downstream agents. For example, running simulations is a very common practice for training a vehicle's perception module. The same perception module is coupled with different agents in the following scenarios (Fig. 4) [8]:
1) Running the vehicle on roads
2) Running the vehicle in a lab-simulated environment
3) Running the perception core on a computer-simulated model

Although different setups are involved in different scenarios, the same perception module is used in all of them. To maintain consistency across all settings, developers deploy one perception module repeatedly in every scenario.

Fig. 4: One Module Multiple Scenarios

III. RESOURCE OVERHEAD AND CONTAINER BOUNDARY

A. Resource Overhead

Overhead is a pain point when using containers. Running an application in a container inevitably consumes extra system resources, and communication across the container boundary takes longer. In cloud computing, the limitation on memory and computation resources is close to negligible: developers can add more machines to the container network, scaling the cluster horizontally to accommodate increased usage. In AV development, however, the physical space on the vehicle for housing machines is limited. Fog architecture proposes a way to make the best use of cloud computing's power while accommodating the limited space on a vehicle: vehicles offload resource-intensive computation to edge devices [9]. Modules on these devices enable vehicles to navigate more complex situations, such as driving in a chaotic urban environment where pedestrians and vehicles may cross paths at irregular intervals and random locations. The vehicle itself, on the other hand, hosts a complete ecosystem of data processing agents so that it can navigate places where network connections are weak or non-existent and traffic is more predictable, such as a highway in the countryside.

The architecture of such an infrastructure is similar to "One Module used in Multiple Scenarios". Each vehicle joins the container network as a less powerful node, and each module is deployed repeatedly on every vehicle node and cloud node. A container flavor manager decides which image tag is deployed, given the specs of the target node. Though not the focus of this paper, a container infrastructure lets AVs tap into the computing power of cloud machines and thereby partially circumvent their physical space constraints.

B. Overhead Analysis and Realtime Scheduling Analysis

Even more limited than resources is response time in a time-critical system. A signal traversing a container network must first exit its originating container and then enter its destination container, so it incurs boundary-crossing delay at every container involved.
The more containers involved, the more layers the signal must cross and the more the delay stacks up. The accumulation worsens when containers are nested inside other containers or when signals traverse multiple intermediate containers. Compounding layers of boundary communication add significant delay to the signal relay process and, more importantly, greater uncertainty in communication time, making the realtime system less deterministic.

Masek et al. [10] analyzed operation runtime in four environments: vanilla Ubuntu native, vanilla Docker, RT native, and RT Docker. They found that runtime in Docker is approximately the same as running natively, and that the realtime-enabled Linux kernel performs more deterministically than the vanilla kernel, although the vanilla kernel is faster overall in both empty and loaded contexts. [10] did not study the effect of crossing the container boundary itself, which we investigate in the following experiment to understand how a container network should be orchestrated to provide the lowest average delay and the lowest uncertainty in the signal relay process.

C. Experimental Setup

To study how the container boundary affects communication time, we tested and compared four scenarios:
1) Running on a native machine
2) Running by spawning new containers
3) Running in an existing container
4) Running in a five-fold nested container

In this experiment, we use an algorithm that performs iterative Gauss-Seidel approximation [11]. Consider a matrix equation of the form

$$ A\mathbf{x} = \mathbf{b}, \tag{1} $$

where $\mathbf{x}$ is an unknown $n \times 1$ vector, $A$ is a known $n \times n$ matrix (Gauss-Seidel is guaranteed to converge when $A$ is strictly diagonally dominant), and $\mathbf{b}$ is a known $n \times 1$ vector.
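To make the benchmark workload concrete, the sketch below implements the standard component-wise Gauss-Seidel sweep, $x_i \leftarrow \big(b_i - \sum_{j<i} a_{ij}x_j - \sum_{j>i} a_{ij}x_j\big)/a_{ii}$, in which each component immediately reuses the values already updated in the current sweep. It is a plain-Python illustration under our own assumptions, not the authors' `gausse.py`; the function name `gauss_seidel` and its `sweeps` parameter are illustrative, and the matrix, vector, and exact solution are the ones listed in (5).

```python
# Plain-Python Gauss-Seidel sweep; an illustrative sketch, not the authors' gausse.py.
from fractions import Fraction
from typing import List


def gauss_seidel(A: List[List[float]], b: List[float], x0: List[float],
                 sweeps: int = 2500) -> List[float]:
    """Run a fixed number of Gauss-Seidel sweeps on A x = b, starting from x0."""
    n = len(b)
    x = [float(v) for v in x0]  # copy so the caller's initial guess is not modified
    for _ in range(sweeps):
        for i in range(n):
            # x[j] for j < i already holds this sweep's value (the L part);
            # x[j] for j > i still holds the previous sweep's value (the U part).
            # Each sweep touches every off-diagonal entry once: O(n^2) for dense A.
            s = sum(A[i][j] * x[j] for j in range(n) if j != i)
            x[i] = (b[i] - s) / A[i][i]
    return x


# Matrix A and vector b as listed in (5).
A = [[4, 1, 2, 1, 1],
     [3, 5, 1, 1, 1],
     [1, 1, 3, 1, 1],
     [1, 1, 1, 5, 1],
     [1, 1, 1, 1, 9]]
b = [4, 7, 3, 9, 2]
# Exact solution from (5), used only to check the iterate.
x_exact = [Fraction(39, 106), Fraction(46, 53), Fraction(11, 106),
           Fraction(329, 212), Fraction(-21, 212)]

if __name__ == "__main__":
    x = gauss_seidel(A, b, [0.0] * 5)
    err = max(abs(xi - float(ei)) for xi, ei in zip(x, x_exact))
    print(x, err)
```

Because the sweep count and the matrix size are fixed, every call performs the same amount of arithmetic regardless of the input guess. This makes the workload a convenient constant-cost payload when the quantity being timed is the container boundary rather than the solver.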
Decomposing $A$ into a lower triangular matrix $L_*$ (including the diagonal) and a strictly upper triangular matrix $U$ such that $L_* + U = A$, we get

$$ L_*\mathbf{x} = \mathbf{b} - U\mathbf{x}. \tag{2} $$

Solving for $\mathbf{x}$ on the left-hand side by forward substitution, with the right-hand side evaluated at the previous iterate, gives

$$ \mathbf{x}^{(k+1)} = L_*^{-1}\left(\mathbf{b} - U\mathbf{x}^{(k)}\right). \tag{3} $$

This yields an iterative algorithm that obtains the next guess of each element, $x_i^{(k+1)}$, from its previous guess $x_i^{(k)}$, starting from an "initial guess" $x_i^{(0)}$:

$$ x_i^{(k+1)} = \frac{1}{a_{ii}}\left(b_i - \sum_{j=1}^{i-1} a_{ij}x_j^{(k+1)} - \sum_{j=i+1}^{n} a_{ij}x_j^{(k)}\right), \quad i = 1, 2, \ldots, n. \tag{4} $$

Each iteration of (4) performs a fixed amount of work, $O(n^2)$ operations for a dense $n \times n$ matrix, and stores $O(n)$ values for the iterate, so with a fixed number of iterations the runtime does not depend on the input values. Knowing the size of the matrix, we have constant runtime and constant memory per call, which is convenient for measurement purposes.

Using an initial guess of [0, 0, 0, 0, 0], the program performs a total of 100 calls per experimental scenario and displays the generated logs when finished. For each call, it reads the "initial guess" and the signal-sent time as input, records the signal relay time, performs 2500 iterations of the algorithm, writes the results as logs to local persistent storage, and then sends the output back; this output is used as the initial guess for the next call. The values used for matrix $A$ and vector $\mathbf{b}$, and the exact solution $\mathbf{x}$, are

$$ A = \begin{bmatrix} 4 & 1 & 2 & 1 & 1 \\ 3 & 5 & 1 & 1 & 1 \\ 1 & 1 & 3 & 1 & 1 \\ 1 & 1 & 1 & 5 & 1 \\ 1 & 1 & 1 & 1 & 9 \end{bmatrix}, \quad \mathbf{b} = \begin{bmatrix} 4 \\ 7 \\ 3 \\ 9 \\ 2 \end{bmatrix}, \quad \mathbf{x} = \begin{bmatrix} 39/106 \\ 46/53 \\ 11/106 \\ 329/212 \\ -21/212 \end{bmatrix}. \tag{5} $$

Only output that falls within a 99.95% confidence interval of a t-test with 5 degrees of freedom is used in the overhead analysis. The t value is determined using (6), where $\bar{x}$ is the answer being sampled, $\mu$ is the ground truth given in (5), $n$ is the sample size, and $\hat{\sigma}$ is the standard deviation of the sample results [12].
$$ t = \frac{\bar{x} - \mu}{\hat{\sigma}/\sqrt{n}} \tag{6} $$

Note that since each call performs 2500 iterations, it is very unlikely for a result to fall outside the 99.95% confidence interval, and since the result of each call is fed into the next call, the accuracy is likely to increase over time.

In "running on native machine" and "running by spawning new containers", the function is exposed through "main.py". When "python gausse.py" is executed, an instance is started on the local machine or inside a new container. As soon as the results are returned, the instance is terminated and its allocated memory released. Comparing running code on the native machine with running code by spawning a new container each time lets us study the overhead of instantiating a new container.

In "running in an existing container" and "running in a five-fold nested container", the function is wrapped in Flask, a Python web framework that allows communication via HTTP calls. The application is started only once, at the beginning, so we can measure the time signals take to traverse the communication layer and compare how nesting containers affects the signal relay time.

To keep the environment as consistent as possible, we performed all four scenarios on one machine with the following specification (specs not related to this experiment are not listed):
• CPU: Intel(R) Core(TM) i7-6700 CPU @ 3.40GHz
• Memory: 16 GiB DDR3 at 1600MHz
• Storage: 2 TB HDD TOSHIBA DT01ACA200
• File System: ext4
• OS: Ubuntu 18.04.3 LTS
• Container: Docker v19.3
• Code base: Python v3.4

When the experiment is finished, the average and standard deviation of operation time and communication delay are determined using (7) and (8), where $n$ is the number of elements, $\mu$ is the average, and $\sigma$ is the standard deviation:

$$ \mu = \frac{1}{n}\sum_{i=1}^{n} x_i \tag{7} $$

$$ \sigma = \sqrt{\frac{1}{n}\sum_{i=1}^{n} (x_i - \mu)^2} \tag{8} $$

IV. RESULTS AND FINDING

The logs and calculated data are hosted in the GitHub repository kamagawa/containers infrastructure [13]; the average and standard deviation of operation and communication time are documented in Table I. From an accuracy perspective, all entries meet the accuracy requirement: for this matrix, the iteration converges well within 2500 iterations.

Fig. 5a shows that executing the algorithm on the native OS is much faster than any other option, followed by running in new containers, nested containers, and existing containers. The standard deviation of running in an existing container is the lowest by a large margin, making it the most deterministic option. Having been provisioned its own resource bundle, a process running inside Docker is isolated from the system noise that affects native processes. However, when containers are nested five-fold, the Docker daemon's scheduler can no longer provision the containers directly, which raises the standard deviation. Operation runtime in a five-fold nested container is approximately the same as in a one-layer container.

Fig. 5b shows that the communication delay of the native process is the lowest by a large margin: the delay between sending and receiving the signal is negligible (4.01E-07 on average in Table I). Running code by spawning a new container is the slowest and least deterministic option, since creating a new container and provisioning its resources takes a long time. Sending signals across the container boundary to an existing container or to nested containers takes a comparable amount of time. However, sending signals to one layer of container is much more deterministic than sending them through five layers of nested containers. For both operation time and communication time, a five-fold nested container does not take longer than a regular container.
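Since the discussion keeps returning to determinism, one way to put the four environments on a single scale is the coefficient of variation, $\sigma/\mu$, computed from the averages and standard deviations reported in Table I. The sketch below is our own illustration, not part of the original analysis: `COMMUNICATION` simply restates the Table I communication-duration values, `coefficient_of_variation` is a hypothetical helper, and `mean`/`pop_std` implement (7) and (8) for anyone working from the raw logs.

```python
# Relative spread (sigma / mu) of communication delay, using the Table I values.
import math
from typing import Dict, List, Tuple


def mean(xs: List[float]) -> float:
    """Average as in (7)."""
    return sum(xs) / len(xs)


def pop_std(xs: List[float]) -> float:
    """Standard deviation as in (8): divides by n, not n - 1."""
    mu = mean(xs)
    return math.sqrt(sum((x - mu) ** 2 for x in xs) / len(xs))


# (average, standard deviation) of communication duration, copied from Table I.
COMMUNICATION: Dict[str, Tuple[float, float]] = {
    "native": (4.01e-07, 1.51e-07),
    "new container": (2.440119, 0.45777),
    "existing container": (0.001893, 7.87e-07),
    "5x nested containers": (0.001209655, 0.000561769),
}


def coefficient_of_variation(mu: float, sigma: float) -> float:
    """Spread relative to the mean; smaller means more predictable timing."""
    return sigma / mu


if __name__ == "__main__":
    for env, (mu, sigma) in COMMUNICATION.items():
        print(f"{env:>22}: CV = {coefficient_of_variation(mu, sigma):.2e}")
```

On these numbers, the existing container's communication delay has a coefficient of variation of roughly 4e-4, versus roughly 0.46 for five-fold nested containers, which is consistent with the observation that one container layer is far more deterministic. The native process shows a relatively large relative spread but an absolute delay several orders of magnitude smaller, so relative and absolute spread are best read together.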
This finding is somewhat surprising, as one would expect crossing five layers of container boundary to take five times as long as crossing one. The phenomenon could be attributed to the architecture of container creation in Docker, as shown in Fig. 6. When a new container is created inside an existing container, the Docker daemon creates a sibling container that is linked to the current container, rather than spawning the new container directly inside the current one [14]. However, the sibling containers' resource sets are provisioned from the current container's resource set. This weak resource isolation among sibling containers makes nested containers behave less deterministically than standard ones.

TABLE I: Average ($\bar{t}$) and standard deviation ($\hat{\sigma}$) of operation and communication duration

| Measurement | Statistic | Native | New Container | Existing Container | 5x Nested Containers |
|---|---|---|---|---|---|
| Operation Duration | $\bar{t}$ | 0.009868 | 0.022465 | 0.033195 | 0.030283465 |
| Operation Duration | $\hat{\sigma}$ | 0.000867726 | 0.001595 | 1.62101E-05 | 0.003111 |
| Communication Duration | $\bar{t}$ | 4.01E-07 | 2.440119 | 0.001893 | 0.001209655 |
| Communication Duration | $\hat{\sigma}$ | 1.51E-07 | 0.45777 | 7.87E-07 | 0.000561769 |
| Accuracy | - | 100% | 100% | 100% | 100% |

Fig. 5: Runtime Average and Standard Deviation Comparison Graph of (a) Operation and (b) Layering

Fig. 6: Containers vs Virtual Machine Infrastructure [4]

Understanding the runtime behavior of containers in different situations is crucial to setting up a robust and flexible container infrastructure for autonomous vehicle development. For such a safety-critical system, knowing when a task will start and finish is more important than finishing it as quickly as possible. Before nesting a container, developers should ask themselves: is this task time-critical, and is it acceptable for it to share resources with its parent container? To achieve a higher level of temporal precision, industry partners often use a realtime-enabled kernel (rt-kernel) and run their own Network Time Protocol server as part of their container networks.

V. CONCLUSION AND FUTURE WORK

This paper presented a container infrastructure for autonomous vehicle development and the scenarios in which it outperforms native development, such as "Multiple Variation for one Scenario" and "One Module used in Multiple Scenarios". We presented an option to extend the container network with a fog network to tap into the power of cloud computing, so that more powerful computation can be performed in the cloud and less demanding computation locally. We then studied the runtime overhead of running in a container network compared to the native machine and found that signals crossing into and out of containers experience considerable delay compared to native execution but behave much more deterministically. Nested containers do not add extra overhead because, architecturally, they are linked as "sibling containers" rather than being placed inside one another. However, their provisioned resources are shared, making nested containers less deterministic than a single-layer container. Understanding operation runtime and communication delay is essential when designing a container network for a mixed critical system. To achieve a higher degree of temporal precision, companies often use an rt-kernel and implement customized time control logic.

During the experiment, we faced many obstacles that could inspire future work. One is streamlining image creation so that changing a non-critical line of code does not rebuild the entire layer. Building on top of the container architecture, we will study a pragmatic approach to using Kubernetes to orchestrate a robust network that runs simulations and allows for CI/CD of new features in AV development.

REFERENCES

[1] M. Soni, "End to End Automation on Cloud with Build Pipeline: The Case for DevOps in Insurance Industry, Continuous Integration, Continuous Testing, and Continuous Delivery," 2015 IEEE International Conference on Cloud Computing in Emerging Markets (CCEM), Bangalore, 2015, pp. 85-89. doi: 10.1109/CCEM.2015.29
[2] D. Bernstein, "Containers and Cloud: From LXC to Docker to Kubernetes," IEEE Cloud Computing, vol. 1, no. 3, pp. 81-84, Sept. 2014. doi: 10.1109/MCC.2014.51
[3] "About images, containers, and storage drivers," docker.io, 2019. [Online]. Available: https://docs.docker.com/v17.09/engine/userguide/storagedriver/imagesandcontainers/
[4] J. Fong, "Are Containers Replacing Virtual Machines?," docker.io, 2019. [Online]. Available: www.docker.com/blog/containers-replacing-virtual-machines/
[5] "Declarative Management of Kubernetes Objects Using Configuration Files," kubernetes.io, 2019. [Online]. Available: https://kubernetes.io/docs/tasks/manage-kubernetes-objects/declarative-config/
[6] A. Gerrard, "What Is Kubernetes? An Introduction to the Wildly Popular Container Orchestration Platform," blog.newrelic.com, 2019. [Online]. Available: https://blog.newrelic.com/engineering/what-is-kubernetes/
[7] W. Wolf, "Cyber-Physical Systems," Computer, vol. 42, no. 3, pp. 88-89, 2009. doi: 10.1109/MC.2009.81
[8] A. Kemeny and F. Panerai, "Evaluating perception in driving simulation experiments," Trends Cogn. Sci., vol. 7, no. 1, pp. 31-37, Jan. 2003. doi: 10.1016/S1364-6613(02)00011-6
[9] K. Katsaros and M. Dianati, "A Conceptual 5G Vehicular Networking Architecture," Cham, Switzerland: Springer, 2017, pp. 595-623. doi: 10.1007/978-3-319-34208-5_22
[10] P. Masek, M. Thulin, H. Andrade, C. Berger, and O. Benderius, "Systematic evaluation of sandboxed software deployment for realtime software on the example of a self-driving heavy vehicle," 2016 IEEE 19th International Conference on Intelligent Transportation Systems (ITSC), Rio de Janeiro, 2016, pp. 2398-2403. doi: 10.1109/ITSC.2016.7795942
[11] C. Gauss, "Werke," Göttingen: Königlichen Gesellschaft der Wissenschaften, 1903, p. 9.
[12] Student, "Probable Error of a Correlation Coefficient," Biometrika, vol. 6, no. 2-3, pp. 302-310, Sept. 1908. doi: 10.1093/biomet/6.2-3.302
[13] Y. Wang, "Container Infrastructure," GitHub, Oct. 2019. [Online]. doi: 10.5281/zenodo.3524680
[14] A. Colangelo, "Sibling Docker Container," Medium, Jul. 2019. [Online]. Available: https://medium.com/@andreacolangelo/sibling-docker-container-2e664858f87a
