Decentralized On-line Task Reallocation on Parallel Computing Architectures with Safety-Critical Applications

This work presents a decentralized allocation algorithm of safety-critical application on parallel computing architectures, where individual Computational Units can be affected by faults. The described method consists in representing the architectu…

Authors: Thanakorn Khamvilai, Louis Sutter, Eric Feron

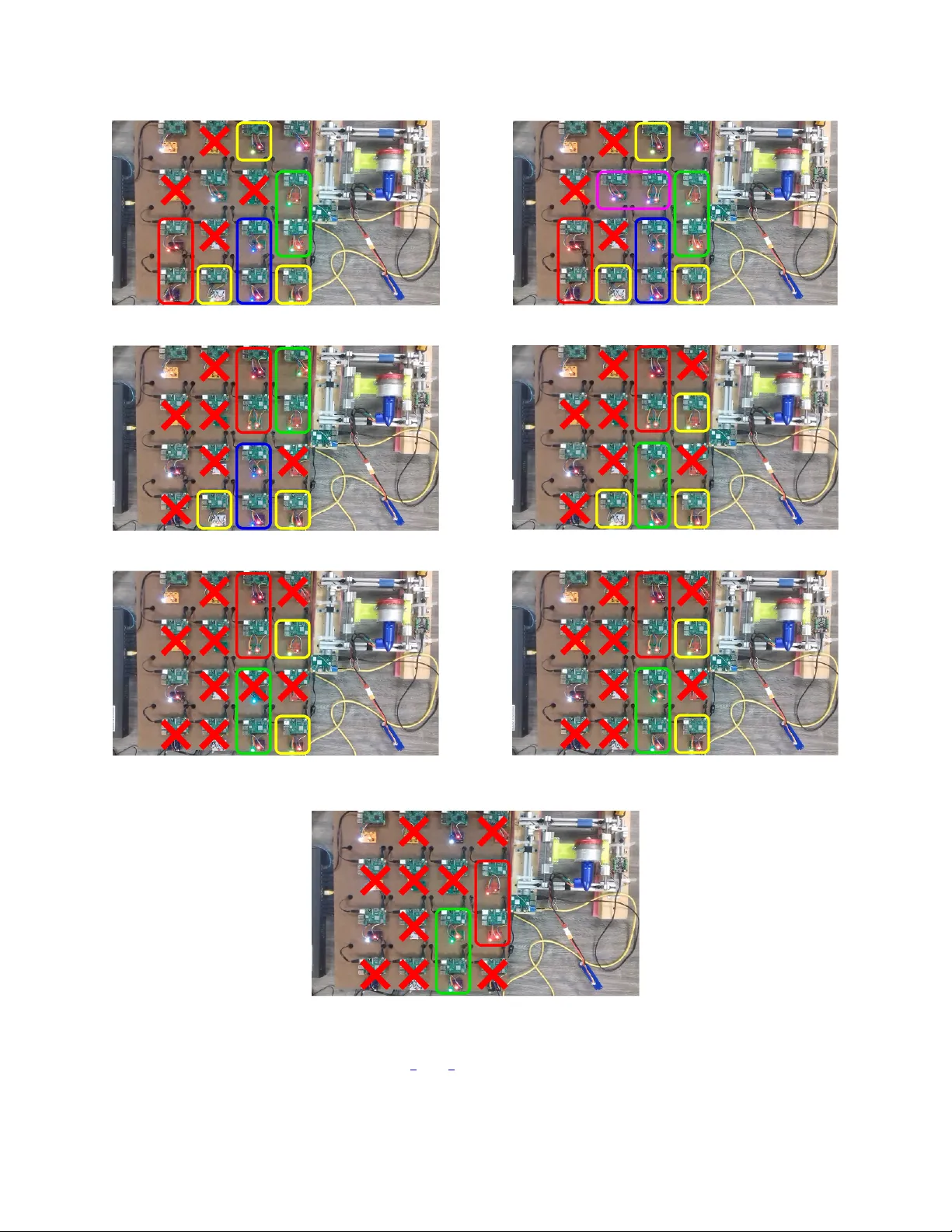

Decentralized On-line T ask Reallocation on P arallel Computing Architectures with Safety-Critical Applications Thanakorn Khamvilai School of Aer ospace Engineering Geor gia Institute of T echnology Atlanta, GA, USA tkhamvilai3@gatech.edu Philippe Baufreton Safran Electr onics & Defense Massy , France philippe.baufreton@safrangroup.com Louis Sutter School of Aer ospace Engineering Geor gia Institute of T echnology Atlanta, GA, USA lsutter6@gatech.edu Franc ¸ ois Neumann Safran Electr onics & Defense Massy , France francois.neumann@safrangroup.com Eric Feron School of Aer ospace Engineering Geor gia Institute of T echnology Atlanta, GA, USA eric.feron@aerospace.gatech.edu Abstract —This work presents a decentralized allocation algo- rithm of safety-critical application on parallel computing archi- tectures, where individual Computational Units can be affected by faults. The described method consists in representing the ar chitecture by an abstract graph where each node represents a Computa- tional Unit. Applications are also r epresented by the graph of Computational Units they require f or execution. The problem is then to decide how to allocate Computational Units to appli- cations to guarantee execution of the safety-critical application. The problem is formulated as an optimization problem, with the form of an Integer Linear Program. A state-of-the-art solver is then used to solve the problem. Decentralizing the allocation process is achie ved through redundancy of the allocator executed on the architecture. No centralized element decides on the allocation of the entire architectur e, thus improving the r eliability of the system. Experimental reproduction of a multi-core architecture is also presented. It is used to demonstrate the capabilities of the pro- posed allocation process to maintain the operation of a physical system in a decentralized way while individual component fails. Index T erms —parallel computing, multi-core, reconfigurable, safety-critical, fault tolerance, decentralized, integer linear pro- gramming I . I N T RO D U C T I O N A N D P R I O R A RT The onset of multi-core processors appeared as a golden opportunity for the embedded systems industry to improv e efficienc y of embedded computers. Multicore processors carry sev eral benefits over single core ones, bringing more computational power through parallelization without increasing chip’ s internal frequency , and without increased energy consumption or increased heating. They no w pervade cellular communication devices and embedded electronics for mass-market, for example, and many other industries are no w This ef fort has been funded in part by SAFRAN and by the National Science Foundation, Grants CNS 1544332 and 1446758. taking advantage of such processors, such as the automotiv e industry [1], the biotechnology industry [2] and the circuit industry [3]. Howe ver , as far as critical systems are concerned, these benefits come with great certification challenges [4] [5], since parallel applications on a multi-core processor may interfere. The aerospace industry is yet undertaking to take up this challenge [6]. A reconfigurable multi-core architecture that could host safety critical applications, e.g. [7], [8], [9], can become an example of a safe multi-core processor by taking advantage of the inherent redundancy of such processors that enables graceful degradation [10]: when some core fails, we can use the multiple remaining ones by reallocating affected applications to a healthy area of the chip. The inherent redundancy in such parallel architecture can also be seen as an opportunity to increase the reliability of computing systems, be it in safety critical embedded systems or for computing centers requiring guaranties of continuity of service. For example, se veral attempts hav e been made to increase the reliability of safety-critical systems using multi-core processors. In [11], an “hypervisor” is used to organize access to shared resources for applications, including safety-critical ones. Ho wev er , a failure of this hypervisor is not taken into account in this patent. Therefore, such technique just mov es the problem since the whole reliability is carried by the reallocation decision or gan, which constitutes a single point of failure: the most complex and efficient reallocator is pointless if the system it executes on fails. In [12], backup allocations are pre-calculated for each failure case and they are stored by indi vidual Computational Units (CUs). F or small architecture with only a few CUs, this solution is satisfactory and ensures a continuous fault tolerance of the system without requiring a centralized allocator . Howe ver , storing backup configuration can require a lot of memory when the architecture becomes bigger . Also, the proposed approach does not consider application that can themselves be parallelized and executed on sev eral CUss at the same time. Our approach differs from these tw o solutions by providing an on-line and decentralized reallocation algorithm for a general architecture that can be represented by a graph and for parallelized applications requiring se veral CUs to e xecute. Even though this w ork is motiv ated by a multi-core architecture, it presents a decentralized task allocation algorithm for an abstract parallel computing architecture made of a set of CUs connected together and forming a network. Such an architecture can represent for example a multi-core processor, with each CU standing for one core, a cluster of high-performance computers, or a team of mobile robots. The aim of the algorithm is to find the optimal allocation of an a priori defined set of tasks on the architecture while taking into account the faults af fecting the CUs. The faults are assumed to be detected by the algorithm when they occur either via a timeout mechanism or a voter , but this work does not provide details of those fault detection mechanisms. As described later , two types of fault will be considered, the first one completely stopping the operation of the CU, and a second one considered to modify the computed output of the CU. The second main feature of this work is the decentralized aspect of the allocation process. Decentralized means here that there is no central element deciding alone of the allocation for the rest of the architecture. Instead, we use redundant copies of the allocation algorithm executed on the architecture itself, meaning that the copies must reallocate themselves. This is achiev ed by using majority voting systems. This work also presents an e xperimental setup reproducing sev eral aspects of a parallel computing architecture and used to implement the proposed decentralized allocation algorithm. The setup uses a network of Raspberry Pi single board computers [18] to represent the CUs of the architecture. I I . T H E O R E T I C A L A S P E C T A. Mathematical description of the allocation pr oblem This section describes the mathematical formulation of the general allocation problem that is considered in this work. The idea is to use this mathematical formulation in an Integer Linear Program (ILP), whose solution is the best allocation of the tasks on the parallel computing platform (multi-core processors, network of computers in a computing center , etc), according to criteria described in Section III-C2, taking into account the number of applications running, their priority , the number of reallocated applications and the length of communication paths between allocators and other applications. The considered parallel computing platform is represented by a directed simple graph G = ( V , E ) , where V is the set of vertices and E ⊆ ( x, y ) ∈ V 2 | x 6 = y is the set of edges [13]. Each vertex of G represents a CU, for example one core in a multi-core processor or at a dif ferent scale, one computer in a massively parallel supercomputer , and each edge of G represents a physical communication link between two CUs. The communication links are considered bidirectional, and therefore the orientation of edges can be chosen arbitrarily: we choose them to be oriented only to write more con v eniently further constraints on the communication flo w . The graph G therefore represents the topology of the platform. F or example, the platform can hav e a simple square mesh topology , as represented in Fig. 1. Figure 1: Example of square mesh topology . Orientation of edges are arbitrary . From G , we define parameters that will be used later in this work. Definition 1. N CUs is defined as the number of CUs in the computing platform, that is the number of v ertices of G . Definition 2. N paths is defined as the number of Physical Communication Links, or physical paths, in the platform, that is the number of edges of G . Let N app ∈ N and A = { app k , k ∈ J 1 , N app K } be a set of applications to be ex ecuted on the parallel computing platform. The applications in A are ranked by priority , app 1 having the highest priority and app N app having the lowest one. The ranking is established a priori and represents the tolerated order in which we stop applications in case of computing resource failures. In the context of a commercial aircraft, an example of such applications with different priority would the engine controller , with the highest priority , and a health monitoring application, with a lower priority , which is in charge of analyzing data from the engine in order to estimate its wear and to predict when maintenance operations are required. In case of computing resource failures, it would be tolerated in this context to stop the health monitoring application in order to maintain the ex ecution of the engine controller . For k ∈ J 1 , N app K , we assume that the compiler for the considered architecture decomposes the application app k into a undirected simple graph G k = ( V k , E k ) , where each verte x, that we will call Application Node , represents a sub-task of app k that must be e xecuted by a CU, and each edge represents a required communication link between two Application Nodes, that we will call an Application Link . Fig. 2 gives an example of such application graphs. Figure 2: Example of application graphs. Each application node is identified with a unique index. app 1 has highest priority , app 3 has the lowest. From each graph G k for k ∈ J 1 , N app K , we define the following parameters. Definition 3. N k nodes is defined as the number of Application Nodes in application k and N k links is defined as the number of Application Links in application k Definition 4. N nodes : = P N apps k =1 N k nodes is the total number of Application Nodes, and N links : = P N apps k =1 N k links is the total number of Application Links. Each application node is giv en a global inde x j ∈ J 1 , N nodes K with the following procedure: the nodes of app 1 keep the same indices as in the local numbering of vertices in G 1 ; then the global indices for nodes of app 2 are obtained by increasing their local indices by N 1 nodes ; and so on for the nodes of app k , by increasing the local numbering by P k − 1 l =1 N l nodes . The result of the global numbering of the nodes can be seen on Fig. 2. An identical process is applied to obtain a global numbering of the edges of the application graphs. The problem that we tackle here is to assign applications to CUs of the architecture while faults affect some CUs, taking into account the priority of the applications and specific constraints of the architecture. A solution will look like Fig. 3. The approach that we take here to solve the problem is to formulate the allocation problem as Integer Linear Program (ILP) and use a state-of-the-art IP solver such as “GNU Linear Programming Kit” (GLPK) [14]. An additional aspect of the problem that we propose to solve is to make the allocation process decentralized, in the Figure 3: Example of a solution with a fault on CU 11. sense detailed in the introduction and in Section IV, with no central computing element allocating the tasks according to the solution of the ILP problem. The way this decentralized allocation is achieved is specifically described in Section IV: it in v olves sev eral copies of the task computing the allocation and being executed on the platform itself. The number of such copies is the last parameter of our problem. Definition 5. N realloc is defined as the number of copies of the Allocator Application . The next section details how the allocation problem is formulated as an ILP problem. I I I . I L P F O R M U L AT I O N O F T H E TA S K A L L O C A T I O N P R O B L E M A. Matrix repr esentation of graphs Definition 6. From the graph representation G = ( V , E ) of the parallel computing platform, the N CUs × N paths incidence matrix G associated with G is defined as: [ G ] ij : = − 1 if e j ∈ E leaves v i ∈ V 1 if e j ∈ E enters v i ∈ V 0 otherwise And the N CUs × N paths NoC unoriented incidence matrix ˆ G associated with G is defined as: [ ˆ G ] ij : = | [ G ] ij | Definition 7. From the graph G k = ( V k , E k ) representing the k -th application, the N k nodes × N k links application unoriented incidence matrix H k associated with G k is defined as: [ H ] k ij : = ( 1 if v k i ∈ V k and e k j ∈ E k are incident 0 otherwise Furthermore, the N nodes × N links overall application unoriented incidence diagonal block-matrix H is defined as H : = H 1 . . . H k B. Definition of the Decision V ariables Definition 8. The N CUs × N nodes decision matrix X CUs → nodes , mapping Application Nodes to CUs, is defined as: X CUs → nodes ij = 1 if the CU i is allocated to the Application Node j 0 otherwise Definition 9. The N paths × N links decision matrix X paths → links , mapping Application Links to Physical Links, is defined as: X paths → links ij = 1 if the Physical Link i is allo- cated to the Application Link j 0 otherwise Definition 10. The N apps × 1 decision vector r , representing which applications are executed, is defined as: r i = ( 1 if the application i is running 0 if it is dropped Definition 11. The N nodes × 1 decision vector M , representing which application nodes are reallocated, is defined as: M i = 1 if the Application Node i is moved from its pre viously allocated CU 0 otherwise Definition 12. For k ∈ J 1 , N app K , the N paths × N CUs decision matrix X Comm, k , representing communication paths between the k -th allocator application and ev ery CU of the platform, is defined as: X Comm, k ij = − 1 if the Physical Link i is used to communicate between the al- locator k and the CU j in the negati ve direction 1 if the Physical Link i is used to communicate between the al- locator k and the CU j in the positiv e direction 0 otherwise Positiv e (respecti vely neg ativ e) direction means that the com- munication takes place in the same (respecti vely opposite) direction as the edge of the directed graph G , as in Fig. 1. C. F ormulation of the optimization model This section giv es the detail of the formulation of the optimization problem that is solved each time a new fault is detected. This formulation includes the detail of the chosen objectiv e function and constraints. 1) General form of the optimization model: The allocation problem is formulated as an Integer Linear Program (ILP) of the form [15]: maximize f ( x ) = c T x subject to M 1 x ≤ b 1 M 2 x = b 2 and x is a vector of integers. (1) x is the global vector of decision variables deri ved from the vectorization and the aggregation of the decision matrices from Section III-B. W e define it formally as: x = v ec( X CUs → nodes ) v ec( X paths → links ) r M v ec( X Comm, 1 ) . . . v ec( X Comm, N realloc ) (2) where v ec is the common vectorization function for matrices: ∀ Q = ( q i,j ) 1 ≤ i ≤ m, 1 ≤ j ≤ n , v ec( Q ) = [ q 1 , 1 , . . . , q m, 1 , q 1 , 2 , . . . , q m, 2 , . . . , q 1 ,n , . . . , q m,n ] T . c is the coefficients of the objective function and M 1 , M 2 , b 1 and b 2 are parameters derived from the aggregation of the constraints of the problem that are described in the following sections. For example, for each scalar inequality constraint, after arranging the inequality with all decision variables on the left-hand side in the same order as in x and constant terms on the right-hand side, a row containing the coef ficients of the decision variables is added to M 1 and the constant term is added in the vector b 1 . The same is done for equality constraints to b uild M 2 and b 2 . 2) Objective function: Giv en the priority of the applications in an ascending order i.e. the first application has the highest priority and the N apps -th application has the lo west one, the objectiv e function is used in order to maximize the number of executed applications while minimizing the number of reallocations and the length of communication paths. The chosen objectiv e function, in terms of x as defined above in equation 2, is: max ( f ( x ) = N apps X k =1 α k · r k − ( β + 1) N nodes X j =1 M j − N realloc X k =1 N CUs X j =1 N paths X i =1 X Comm, k ij ) , (3) where β = N realloc × N CUs × N paths α N apps = ( β + 1) × N nodes + β + 1 and ∀ k < N apps : α k = N apps X l = k +1 α l + ( β + 1) × N nodes + β + 1 . (4) The coef ficients of the objecti ve function are chosen to prioritize the dif ferent aspects that are optimized in this function. 1) The first priority is to ex ecute each application, e ven if it means more reallocations and longer communication paths. 2) Then, minimizing the number of reallocations is more important than having shorter communication paths, since a reallocation temporarily interrupts the ex ecution of the allocation. 3) When running all applications is not feasible, the priorities of the applications are enforced and e xecuting any giv en application is more important than running any number of applications with a lower priority . Howe v er , if because of its geometry , a gi ven application cannot be ex ecuted anyway , nothing prev ents lower - priority applications from being executed. These requirements motivated the choice for the coefficients in the objecti ve function. The proof that these coefficients allow the objectiv e function to meet these requirements is giv en in Appendix. Note that the problem of minimizing or maximizing the absolute value of the X Comm, k ij variables, which is a nonlinear program, can be reformulated as a linear program by intro- ducing additional v ariables and constraints [15], that were not presented in the previous section for conciseness. For each entry X Comm, k ij of X Comm , an auxiliary variable ˆ X Comm, k ij is introduced to represent its absolute value, and two extra constraints are added: + X Comm, k ij ≤ ˆ X Comm, k ij , − X Comm, k ij ≤ ˆ X Comm, k ij . ˆ X Comm, k ij is then used instead of X Comm, k ij in the objective function. Because the objective function tends to maximize − X Comm, k ij , so to minimize ˆ X Comm, k ij , one of the two previous constraints will be binding, the stricter one, where the left-hand side is the greatest and equal to max(+ X Comm, k ij , − X Comm, k ij ) , which is exactly X Comm, k ij . The other constraint will be non-binding and therefore does not affect the optimal point. It thus ensures that ˆ X Comm, k ij is equal to X Comm, k ij . 3) Constraints: a) Domain of decision variables: The decision v ariables X CUs → nodes , X paths → links , r and M are binary i.e. the value of their entries must be either 0 or 1. The entries of X Comm, k for k ∈ J 1 , N real loc K must belong to {− 1 , 0 , 1 } . b) Resour ce allocation and partitioning: Sev eral equations express the constraints of allocating the resources of the CUs to applications while enforcing partitioning on the platform. • Each CU can be allocated to at most one application, as a way to enforce spatial partitioning of applications on the platform, i.e. ∀ i ∈ J 1 , N CUs K , N nodes X j =1 X CUs → nodes ij ≤ 1 . (5) • Each running Application Node must be assigned to exactly one CU, i.e. ∀ i ∈ J 1 , N nodes K , N CUs X j =1 X CUs → nodes j i = r N ( i ) . (6) N ( i ) is the application number corresponding to Application Node i . • A physical communication link of the platform can be allocated to at most one Application Link 1 , i.e. ∀ i ∈ J 1 , N paths K , N links X j =1 X paths → links ij ≤ 1 . (7) • Each running Application Link must be assigned to exactly one physical communication link of the platform, i.e. ∀ i ∈ J 1 , N links K , N paths X j =1 X paths → links j i = r L ( i ) . (8) L ( i ) is the application number corresponding to Application Link i . c) Compliance with the platform: An Application link, adjacent to an Application Node that has been mapped to a given CU, must be allocated to a Physical Link that is adjacent to that CU, i.e. X CUs → nodes H = ˆ G X paths → links . (9) 1 This does not mean that this communication link cannot be used for other communication purposes on the architecture, b ut only one of the Application Link computed by the compiler for the applications can be allocated to that physical communication link. This equation (9) is equi v alent to the scalar equations (III-C3c): ∀ i ∈ J 1 , N CUs K , ∀ j ∈ J 1 , N links K , N nodes X k =1 X CUs → nodes ik H kj = N paths X l =1 ˆ G il X paths → links lj . (10) The left-hand side is equal to one if and only if the CU i has been allocated to Application Node k and Application Node k is adjacent to Application Link j . The right-hand side is equal to one if and only if the CU i is adjacent to the Physical Link l and the Physical Link l is allocated to Application Link j , which prov es the correctness of the constraint. d) Reallocating several applications: A giv en Applica- tion Node can either remain affected to the same CU, either be moved or be dropped: ∀ i ∈ J 1 , N CUs K , ∀ j ∈ J 1 , N nodes K , s.t. X CUs → nodes old ij = 1 , 1 − r N ( j ) + M j + X CUs → nodes ij = X CUs → nodes old ij , (11) with X CUs → apps old be the parameter containing the mapping between CUs and Application Nodes computed during the previous allocation. This constraint (11) is ignored for the initial allocation. e) F aults: W e assume the parallel computing platform is equipped a fault detection system that can detect and inform the allocators when a CU fails. From this information, we can add constraints to take into accounts fault in the platform. W ithin a CU i : • If the CU is healthy , any Application Node can be mapped on the CU. • If the CU is faulty , then no Application Nodes can be mapped on the CU i : N nodes X k =1 X CU → apps ik = 0 . (12) The detection of this fault is either assumed for the model or detected by the voter using the majority rule described in V -A2. f) Communication constraints: Since the allocators are ex ecuted on the platform, we must ensure that they will be able to send the allocation they computed to the other CUs of the platform, giv en the communication links that allows each CU to send a message only through its neighbors. Therefore, we must make sure that there exists a path from each allocator to the other CUs. ∀ k ∈ J 1 , N realloc K , G X Comm, k = S k (13) where S k is the N CUs × N CUs sour ce-sink matrix S k , depending on X CUs → nodes and defined by: [ S ] k ij : = 0 if deg ( v i ) = 0 in G 0 if CU i is faulty − X CUs → nodes i node of alloc ( k ) + [ I N CUs ] ij otherwise . where the degree of a verte x deg( v i ) is the number of edges connected to it, and node of alloc ( k ) is the Application Node corresponding to allocator k . When the CU is neither faulty nor without any neighbor, in each path between an allocator and a given CU i , the allocator is the source (-1) and the CU i is the sink (+1). g) Constraints specific to the arc hitectur e: Additional constraints can be added to respect specific aspects of the considered architecture. For example, some multi-core architectures [7], where intra-application communication between CU can happen only in a specific way as illustrated in Fig. 4, orientation of the applications on the architecture matters because nodes that can communicate in a giv en orientation will not be able to do so if they are rotated on the architecture. Therefore the orientation as computed by the compiler must be enforced. T o ensure correct orientation of applications, another set of constraints is also needed. In order to enforce this, the numbering of the CUs on the platform is used. For example, as illustrated in Fig. 4, a CU has always a number difference of − 1 with its right neighbor and + N row with its top neighbor , where N row is the number of T iles per row of the NoC ( N row = 4 in our example). The difference between the numbers of the contiguous pairs of CUs allocated to an application must match the orientation computed by the compiler . Let j k be the index of the top-left node of the k -th application: ∀ i ∈ J 1 , N CUs K , ∀ k ∈ J 1 , N apps K , X CUs → nodes ij k = X CUs → nodes ( i +1)( j k +1) X CUs → nodes ij k = X CUs → nodes ( i + N row )( j k + N k row ) . (14) where N k row is the number of nodes per row of the k -th application. I V . D E C E N T R A L I Z A T I O N O F T H E A L L O C A T I O N S Y S T E M In this paper , we use the word decentralized to qualify a system where no single CU has control over all the other ones in the parallel architecture: there is no central CU whose failure jeopardizes the operation of the whole parallel architecture. In safety words, this means that no CU constitutes a single point of failur e . Figure 4: By equating the difference between two CUs’ indices allocated to an application to a specific number, the spatial orientation of the application can be enforced. W e focus here on CUs, b ut there are other elements that may be a single point of failure and that we do not take into account in this work. For example, electrical power may be provided by one unique and central power supply unit, which is an obvious single point of failure if not designed carefully . T o mitigate the ef fect of other single point failures, methods for safety assessment process may be conducted [16]. A. N-modular redundancy and majority voting system T o de velop a decentralized allocation system for the considered parallel computing platform, we chose to use the concept of N-modular redundancy with a majority voting system [17]. In this approach, N realloc is an odd number greater or equal to 3 , and N realloc copies of the same sub-tasks are executed in parallel. N realloc is taken odd to av oid the case where equal number of copies agree on two different results. The copies are fed with the same inputs and their outputs are then sent to a majority voting system. As illustrated in Fig. 5, the voting system compares the outputs of the redundant copies and filters them: only the result that has been computed by the majority of the redundant copies will be transmitted, i.e. the result computed by at least N realloc +1 2 redundant copies. The voting system is also used to report the failure of the redundant copies that do not match the majority result. B. Decentralized implementation The proposed idea to decentralize the allocation system is to ex ecute N realloc modular redundant copies of the allocator application on the architecture itself, with a voting system implemented on each CU. For further examples, N realloc will be taken equal to 3 . In normal conditions, the N realloc copies of the allocator compute the same allocation, since they solve an identical ILP problem, with same inputs and constraints, and because Figure 5: Illustration of the voting process with 3 redundant copies. GLPK is a deterministic solver . This allocation is then broadcast to e very CU, including the ones e xecuting the allocators. If a CU not running an allocator fails, all 3 allocators compute the same new allocation, in which the af fected application is assigned to a new CU, according the algorithm described previously in Section III. This new allocation is then broadcast and recei ved by all CUs. Since the 3 signals that the CUs receive are coherent, they all comply with it and therefore, the af fected application is reallocated. On the other hand, as illustrated in Fig. 6, if a CU that was running a copy of the allocator is affected by a fault, the 2 other ones will compute the same ne w allocation where the affected copy is assigned to a new healthy CU. Regardless of what the faulty allocator computes, only the two coherent allocation sent by the two healthy allocators will be taken into account by the CUs, and the faulty allocator will be reallocated. V . P R AC T I C A L E X A M P L E This section describes the experimental setup that is used as a representation of the parallel computing platform as well as the result of reallocating safety-critical applications using the previously-described optimization problem. A. Repr esentation of a parallel computing platform 1) Har dwar e components: T o illustrate and demonstrate the capabilities of the new formulation of the allocation algorithm in operational conditions, we choose to implement it on a cluster of single-board computer , Raspberry Pi [18], in order to control and maintain operation of a physical system despite the presence of faults. a) platform description: In this setup, a cluster of parallel CUs of 4 × 4 units is replicated with a network of 16 Raspberry Pi computers. All of them are connected to a common routing switch in a local area network (LAN). Although the use of this common routing switch is a single point failure, it serves a purpose of visualizing that these Raspberry Pi computers are grouped as a single parallel CU. Also, for simplicity of visualization, the network is (a) Layout of the allocators on the computing architecture. (b) Information flow between allocators and CUs. The correct allocation includes the instruction for some node i to run allocator 3. Figure 6: Fault affecting a CU running an allocator . considered to be a square mesh, instead of a toroidal mesh. One alternati ve of using a wired LAN network is to use a routing protocol for multi-hop mobile ad hoc network such as [19]. This ad-hoc network is implemented on a data link layer , which allo ws the data transportation protocol operate in a wireless and decentralized fashion as if there is a common routing switch. The goal of this parallel computing platform is to show the possibility to decentralize the allocation process; therefore, there is no central computing unit outside the network and three copies of the allocator are executed on the network, as described in Section III. b) F aults: T wo types of faults are considered in this e xperiment. The first type is computational fault, which randomly affect the computations performed by the Raspberry Pi. W e detect this kind of fault by using redundant copies of the considered application combined with a voting system that is described below in section V -A2. The second type of fault is assumed to stop the operation of the computing unit it affects. W e also assume that this fault can be detected by the network. In practice, each time one of these faults affects a Raspberry Pi, the status signal sent by this Raspberry Pi to the allocators is changed to a signal identifying it as faulty . Each of these two kinds of fault can be manually triggered or recovered thanks to a breadboard as seen in Fig. 7 connected to each Raspberry Pi. Figure 7: Hardware associated with each Raspberry Pi T ile. The RGB-LED (bottom-left corner) represents the LED application. The red LED (right side) indicates an healthy T ile when turned on. Each switch is used to trigger one type of fault. c) Contr olled system: The physical system we chose to control with this parallel computing platform is a propulsion system, made of an electric fan mounted on a thrust stand. The fan is commanded by using Pulse width modulation (PWM). The measure of the thrust is used by a simple proportional controller e xecuted as a safety-critical application on the platform in order to compute the value of the PWM command required to maintain the thrust at a constant value. Figure 8: Electric fan mounted on the thrust stand. The delivered thrust is measured thanks to a load cell on the stand, indicated by the orange circle. An extra Raspberry Pi is used as the micro-controller of the fan: it conv erts the value measured by the load cell, sends it to the Controller s where the appropriate control value is computed, and generates the corresponding PWM signal controlling the fan. It must therefore be noted that although the same hardware representation is used, this Raspberry Pi does not correspond to the same components as the ones used for the CUs of the platform. 2) Softwar e components: Even if a controller is reallocated to healthy Tiles when it is af fected by a fault, because of the time required to compute the new allocation and to actually reallocate the set of tasks, the operation of the fan may be temporarily altered during the reallocation process. T o av oid interruptions in the operation of the fan during reallocations, we also use a standard T riple Modular Redundancy (TMR) architecture [17]. Three copies of the controller are executed on the parallel computing platform. Each one separately computes the duty-cycle value of the PWM signal that should be sent to the fan, given the thrust value that they all receiv e from the sensor . The three values are sent to the Raspberry Pi representing the micro-controller of the fan, where a voting system decides which control output should be used. The vote outputs the result that has been computed by the majority of the controllers, in this case 2 out of 3. Signals are here considered equal if their difference is smaller than a giv en tolerance. In the case of a fault af fecting the output of one of the controllers, the two remaining healthy controllers ensure that the correct value is sent to the fan. The voting system also identifies which controller is not coherent with the two others and informs the allocators of the fault. The reallocation process that we implemented can then take place while pro viding continuity of service with the two healthy controllers. T o complicate the reallocation tasks, each copy of the controller has been arbitrarily attrib uted to 2 Application Nodes. Concretely , only one of them is responsible of actual computations. Three copies of the allocator execute the allocation algorithm itself. They have second rank priority immediately below the controllers, which represent the safety-critical application in this case. Giving the allocators only the second rank in the priority list can be justified when considering the case where only a controller or an allocator can be ex ecuted on the platform: the resource must be allocated to the safety-critical application, in this case the controller , that maintains the operation of the system, whereas the allocator is only a protection against further faults, but cannot alone ensure operation of the controlled system. In addition to these six applications, one dummy application is considered in this experiment: it occupies 2 Tiles of the Fabric, but does not perform actual computation except changing the voltage in the RGB LED to display its corresponding color . It has the lowest priority . Figure 9 sums up the list of considered applications for the experiment, their relative priority and the resources the y require in terms of number of CUs. The initial allocation of these applications on the model is giv en in Fig. 10. B. Results Starting from the initial allocation given in Fig. 11, faults are triggered on the model. After each fault, the allocators detect the faulty Raspberry Pi and compute a ne w allocation that is then broadcast on the network. They maintain the Figure 9: Considered applications for the experiment, their priority and the number of T iles they require. Figure 10: Initial allocation of the applications on the model. The orange circle identifies the extra Raspberry Pi for interactions with the stand. ex ecution of the safety-critical application as long as enough resources are a vailable for it. CUs surrounded by faulty neighbors are isolated from the rest of the platform and cannot communicate. As enforced by the communication constraints described in paragraph III-C3f, such a CU is not giv en any task to execute and is as good as faulty , as seen in Fig. 11a. When a CU recov ers from a fault, an application can be allocated back to it as seen in Fig. 11b. Applications are dropped according to their priority when more computing units become faulty . Howe ver , as illustrated in Fig. 11d, when no space is av ailable for all 1 st priority applications, lower priority ones are still allowed to be ex ecuted. The voting system implemented on the fan needs at least two functioning and coherent controllers to run the fan (Fig. 11d), as previously explained in section V -A2: in case the signals receiv ed from the controllers are incoherent, it decides not to trust any of them and the engine stops, as in Fig. 11e. Since the controllers have the highest priority , they are the last remaining applications to be executed in Fig. 11g. After this step, further faults will af fect the controllers b ut no reallocation can happen because no more allocator is executed. V I . C O N C L U S I O N This work presented a decentralized allocation algorithm for parallel computing architectures, where individual Computational Units can be affected by faults. The described method consisted in representing the architecture by an abstract graph and formulating the allocation problem as an optimization problem, with the form of a Inte ger Linear Program. Decentralizing the allocation process has been achiev ed through redundancy of the allocator executed on the architecture. That way , no centralized element decides of the allocation of the entire architecture. An experimental reproduction of a parallel computing architecture has also been built. It has been used to demonstrate the capabilities of the proposed allocation process to maintain operation of a physical system in a decentralized way while indi vidual component fail. The proposed work assumed that faults af fecting the Computational Units of the architecture were automatically detected by the allocation algorithm, so that it is able to compute a new allocation every time a fault affects a Computational Unit. This work can be improv ed by defining a more precise model of the considered faults and a method to detect them. One first approach to identify dead Computational Unit would be a simple heartbeat that each would send to the allocators. A CU not sending its heartbeat would be considered faulty . One challenge to tackle in this approach is the fact that the allocators do not hav e a fixed position in the architecture, and therefore, the heartbeat of each CU would ha ve to be broadcast through the entire architecture to be sure to reach all allocators. Another solution would be to include the position of the allocator in the allocation message that they broadcast, so that the CU know where to send back their heartbeat. In both cases, the amount communication packets transmitted through the architecture drastically increases. The second lead for impro vement is the w ay communication isolation are taken into account in the allocation problem. For now , only individual nodes with all of their neighbors being faulty were considered isolated. Howe ver , an entire area of the architecture, can be isolated from the allocator . The problem becomes quite tricky when the architecture is split in two halves that are isolated one from the other: a decision must be made to decide which area is isolated from the other . It seems that the area with the highest number of allocators should be privile ged, since they are the ones that will send the ne w allocation to other CU, and therefore the ones in the other isolated area will not be able to receiv e this new allocation. They should therefore be considered as lost CUs. Also, it should be considered that not only the Computational Units can fails, but also the communication links between them. The effect of such faults would be the same as isolating the Computational Units from their neighbors and would make more of them unavailable. It would also change the communication paths usable to connect the allocators to other applications and would affect their position since minimizing these paths is a part of the optimization problem. A P P E N D I X Coefficients of the objective function This appendix provides the proof that the coef ficients in the objective function from equation 3 allow to meet the requirements stated in Section III-C. For con venience, this objectiv e function is rewritten here: max ( f ( x ) = N apps X k =1 α k · r k − ( β + 1) N nodes X j =1 M j − N realloc X k =1 N CUs X j =1 N paths X i =1 X Comm, k ij ) , (3) where β = N realloc × N CUs × N paths α N apps = ( β + 1) × N nodes + β + 1 and ∀ k < N apps : α k = N apps X l = k +1 α l + ( β + 1) × N nodes + β + 1 . (4) The requirements of Section III-C are also re written belo w . 1) When solving the optimization problem, the objective function 3 privile ges executing any giv en application, ev en if it implies more reallocations and longer com- munication paths. 2) When solving the optimization problem, the objective function 3 privileges minimizing the number of reallo- cations, even if it implies longer communication paths. 3) When solving the optimization problem, the objective function 3 pri vileges executing a given application compared to running any number of applications with a lower priority . The following theorems pro ve that these requirements are met. Theorem 1. ∀ ˜ k ∈ J 1 , N apps K : α ˜ k > ( β + 1) N nodes X j =1 1 + N realloc X k =1 N CUs X j =1 N paths X i =1 1 , that is, the contribution to the value of the objective function for executing application app ˜ k is greater than the maximum contribution for r educing the number of r eallocations and the length of the communication paths. Pr oof. ∀ ˜ k ∈ J 1 , N apps K : α ˜ k ≥ ( β + 1) × N nodes + β , by definition of α ˜ k . Now , ( β + 1) N nodes X j =1 1 + N realloc X k =1 N CUs X j =1 N paths X i =1 1 = ( β + 1) × N nodes + β . So α ˜ k > ( β + 1) N nodes X j =1 1 + N realloc X k =1 N CUs X j =1 N paths X i =1 1 . Theorem 1 prov es that requirement 1 is met. Theorem 2. ( β + 1) × 1 > N realloc X k =1 N CUs X j =1 N paths X i =1 1 , that is, the contribution to the value of the objective function for not r eallocating one Application node is gr eater then the maximum contrib ution for reducing the length of communica- tion paths. Pr oof. β + 1 > β = N realloc × N CUs × N paths = N realloc X k =1 N CUs X j =1 N paths X i =1 1 . Theorem 2 prov es that requirement 2 is met. Theorem 3. ∀ ˜ k ∈ J 1 , N apps − 1 K : α ˜ k > N apps X l = ˜ k +1 α l that is, the contribution to the value of the objective function for executing application app ˜ k is gr eater than the contribution for e xecuting every applications with lower priority than app ˜ k , which ar e app ˜ k +1 to app N apps . Pr oof. ∀ ˜ k ∈ J 1 , N apps K : α ˜ k = N apps X l = ˜ k +1 α l + ( β + 1) × N nodes + β + 1 > N apps X l = ˜ k +1 α l since ( β + 1) × N nodes + β + 1 > 0 . Theorem 3 prov es that requirement 3 is met. R E F E R E N C E S [1] A. Monot, N. Nav et, B. Bav oux, and F . Simonot-Lion, “Multisource software on multicore automoti ve ECUs—combining runnable sequenc- ing with task scheduling, ” IEEE Tr ansactions on Industrial Electronics , vol. 59, no. 10, pp. 3934–3942, Oct 2012. [2] N. Neves, N. Sebasti ˜ ao, D. Matos, P . T om ´ as, P . Flores, and N. Roma, “Multicore SIMD ASIP for next-generation sequencing and alignment biochip platforms, ” IEEE T r ansactions on V ery Large Scale Inte gration (VLSI) Systems , vol. 23, no. 7, pp. 1287–1300, July 2015. [3] Y . Lu, H. Zhou, L. Shang, and X. Zeng, “Multicore parallelization of min-cost flo w for CAD applications, ” IEEE T ransactions on Computer- Aided Design of Inte grated Circuits and Systems , vol. 29, no. 10, pp. 1546–1557, Oct 2010. [4] J. Nowotsch and M. Paulitsch, “Leveraging multi-core computing archi- tectures in avionics, ” in Dependable Computing Conference (EDCC), 2012 Ninth Eur opean . IEEE, 2012, pp. 132–143. [5] F . Reichenbach and A. W old, “Multi-core technology–next ev olution step in safety critical systems for industrial applications?” in Digital System Design: Ar chitectur es, Methods and T ools (DSD), 2010 13th Eur omicr o Conference on . IEEE, 2010, pp. 339–346. [6] U. Durak and F . Bapp, “Introduction to special issue on multi-core architectures in avionics systems, ” 2019. [7] M. Alle, K. V aradarajan, A. Fell, C. R. Reddy , J. Nimmy , S. Das, P . Biswas, J. Chetia, A. Rao, S. K. Nandy , and R. Narayan, “REDE- FINE: runtime reconfigurable polymorphic ASIC, ” ACM T ransactions on Embedded Computing Systems , vol. 9, no. 2, 2009. [8] M. A. W atkins and D. H. Albonesi, “Remap: A reconfigurable het- erogeneous multicore architecture, ” in 2010 43rd Annual IEEE/ACM International Symposium on Microar chitecture , Dec 2010, pp. 497–508. [9] R. Gerard, “Network on chip (noc) for many-core system on chip in space applications, ” Dec 2017. [10] L. M. Kinnan, “Use of multicore processors in a vionics systems and its potential impact on implementation and certification, ” in Digital A vionics Systems Confer ence, 2009. D ASC’09. IEEE/AIAA 28th . IEEE, 2009, pp. 1–E. [11] “Symmetric multi-processor arrangement, safety critical system, and method therefor, ” Patent US 2015/0 254 123 A1, Sep. 10, 2015. [12] M. Oriol, T . Gamer, T . de Gooijer , M. W ahler, and E. Ferranti, “Fault- tolerant fault tolerance for component-based automation systems, ” in Pr oceedings of the 4th international ACM Sigsoft symposium on Ar chi- tecting critical systems , June 2013, pp. 49–58. [13] M. Mesbahi and M. Egerstedt, Graph theor etic methods in multiagent networks . Princeton Univ ersity Press, 2010, vol. 33. [14] “GLPK reference manual, ” GNU Linear Pr ogramming Kit , 2012, https: //www .gnu.org/software/glpk/T OCdocumentation. [15] F . S. Hillier and G. J. Lieberman, Intr oduction to Operations Resear ch , 10th ed. New Y ork, NY , USA: McGraw-Hill, 2015. [16] A. SAE, “Guidelines and methods for conducting the safety assessment process on civil airborne systems and equipment, ” London-UK: SAE , 1996. [17] C. M. K. Israel K oren, F ault tolerant systems . Morgan Kaufmann Publishers, 2007. [18] R. P . Foundation, “Raspberry Pi 3 Model B+, ” https://www .raspberrypi. org/products/raspberry- pi- 3- model- b- plus/, Accessed June 2018. [19] A. Neumann, C. Aichele, M. Lindner , and S. Wunderlich, “Better approach to mobile ad-hoc networking (batman), ” IETF draft , pp. 1– 24, 2008. (a) After 4 faults, Application 3 has to be dropped. The isolated T ile cannot be used. (b) When a Tile recovers from a fault, an allocation can be reallocated to it. (c) After more faults, Application 3 and a controller have to be dropped by lack of resources. (d) One controller is dropped. The 3 controllers can still be executed, ev en if all 1st priority applications are not. (e) One of the two controllers is affected by a computational fault: the fan stops since the fan v oter does not trust any of the two incoherent controllers. (f) The computational faults disappear: the fan starts again. (g) All allocators have been dropped: no further reallocation is possible. Figure 11: Result of the task allocation algorithm. A full video of the demo is av ailable at the link below . https://gtvault- my .sharepoint.com/:v:/g/personal/lsutter6 gatech edu/EQbz60ttNU1KkzFo0l0tycIBDyboI9KU0SHs4ntq8lPwA w?e=glyKtj

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment