Active Learning for Black-Box Adversarial Attacks in EEG-Based Brain-Computer Interfaces

Deep learning has made significant breakthroughs in many fields, including electroencephalogram (EEG) based brain-computer interfaces (BCIs). However, deep learning models are vulnerable to adversarial attacks, in which deliberately designed small pe…

Authors: Xue Jiang, Xiao Zhang, Dongrui Wu

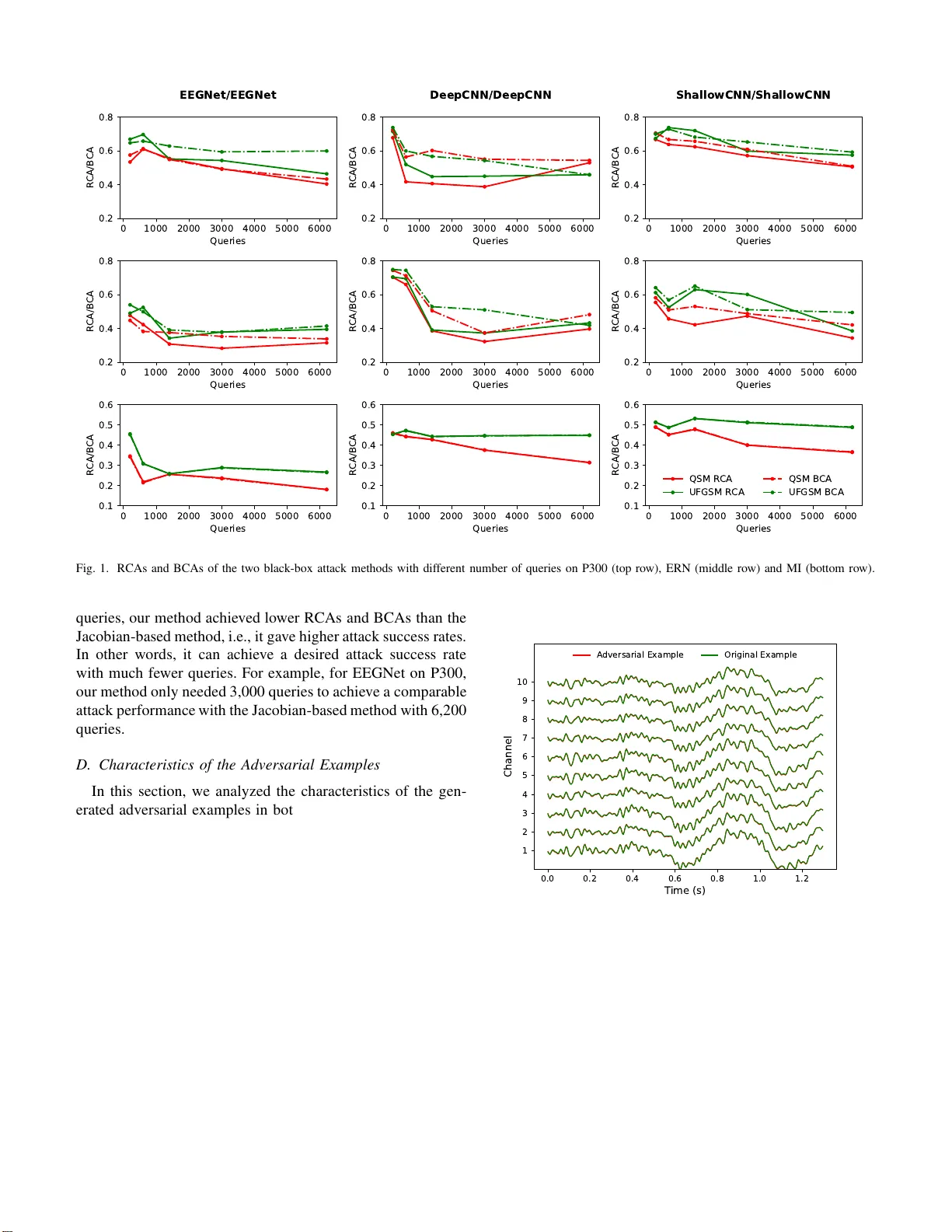

Xue Jiang, Xiao Zhang and Dongrui Wu
School of Artificial Intelligence and Automation, Huazhong University of Science and Technology, Wuhan, China
Email: xuejiang@hust.edu.cn, xiao_zhang@hust.edu.cn, drwu@hust.edu.cn

Abstract: Deep learning has made significant breakthroughs in many fields, including electroencephalogram (EEG) based brain-computer interfaces (BCIs). However, deep learning models are vulnerable to adversarial attacks, in which deliberately designed small perturbations are added to the benign input samples to fool the deep learning model and degrade its performance. This paper considers transferability-based black-box attacks, where the attacker trains a substitute model to approximate the target model, and then generates adversarial examples from the substitute model to attack the target model. Learning a good substitute model is critical to the success of these attacks, but it requires a large number of queries to the target model. We propose a novel framework which uses query synthesis based active learning to improve the query efficiency in training the substitute model. Experiments on three convolutional neural network (CNN) classifiers and three EEG datasets demonstrated that our method can improve the attack success rate with the same number of queries, or, in other words, our method requires fewer queries to achieve a desired attack performance. To our knowledge, this is the first work that integrates active learning and adversarial attacks for EEG-based BCIs.

Keywords: Brain-computer interfaces; adversarial examples; active learning; black-box attack

I. INTRODUCTION

A brain-computer interface (BCI) is a communication system that connects a human brain and a computer [1].
Direct dialogue between the brain and the computer can be achieved by the established information path. Electroencephalogram (EEG) is the most frequently used input signal in BCIs, due to its low cost and convenience [2]. Various paradigms are used in EEG-based BCIs, such as P300 evoked potentials [3]–[6], motor imagery (MI) [7], steady-state visual evoked potential (SSVEP) [8], etc.

Deep learning has achieved great success in numerous fields. Multiple convolutional neural network (CNN) classifiers have also been proposed for EEG-based BCIs. Lawhern et al. [9] proposed EEGNet, which can be applied to different BCI paradigms. Schirrmeister et al. [10] designed a deep CNN model (DeepCNN) and a shallow CNN model (ShallowCNN). In addition, some studies converted EEG signals into images and then classified them with deep learning models [11]–[13]. This paper considers only CNN models (i.e., EEGNet, DeepCNN, ShallowCNN) which take the raw EEG signals as the input.

Despite the state-of-the-art performance of deep learning models, recent studies have shown that they are vulnerable to adversarial examples, which are crafted by adding small imperceptible perturbations to benign examples to degrade the performance of a well-trained deep learning model. For example, in face recognition, an adversarial perturbation can be attached to the glasses, and the attacker who wears them can avoid being recognized, or be recognized as another person [14]. In image classification, adversarial examples can fool a deep learning model into giving incorrect image labels [15]–[18]. Many adversarial attacks have also been performed in speech recognition [19], malware classification [20], semantic segmentation [21], etc. Recently, Zhang and Wu [22] verified that adversarial examples exist in EEG-based BCIs and proposed several adversarial attack approaches.

Many effective algorithms for generating adversarial examples have been proposed, such as the fast gradient sign method (FGSM) [15], the C&W method [23], L-BFGS [16], the basic iterative method [17], DeepFool [24], etc. These methods mainly considered the white-box attack scenario, where the attacker has full access to the target model, including its architecture and parameters. Accordingly, the attacker can perform attacks by adding perturbations along the direction calculated by gradient-based strategies or optimization-based strategies. However, the white-box setting requires full information of the target model, making it impractical in many real-world applications.

In this paper, we focus on a more realistic and challenging black-box attack scenario, where the attacker can only observe the target model's responses to inputs but has no information about its architecture, parameters, and training data. The attacker needs to generate adversarial examples whose perturbations are constrained to a magnitude threshold with limited queries. Papernot et al. [25] proposed a black-box attack method which generated adversarial examples for a white-box substitute model and attacked the black-box target model based on the transferability. Zhang and Wu [22] proposed an unsupervised fast gradient sign method (UFGSM) to craft adversarial examples for black-box attacks in EEG-based BCIs. However, these transferability-based approaches suffer from low query efficiency: they usually require a large number of queries to build a substitute model that is sufficiently similar to the target model.

To address this issue, we introduce a query synthesis based active learning strategy to transferability-based black-box attacks of EEG-based BCIs.
Given a small number of initial training EEG epochs for the substitute model, we first randomly obtain a pair of opposite instances in each iteration, i.e., two instances of different classes. Starting from this initial opposite-pair, we use a binary search strategy to synthesize another opposite-pair close to the current classification boundary in the input space. After that, we synthesize queries along the perpendicular line of the previously found opposite-pair. This query synthesis based active learning strategy can directly synthesize queries which are close to the target model's decision boundary and well scattered. It improves the query efficiency by directly searching for informative examples in the input space for substitute model training, instead of taking a fixed step along the gradient direction. Experiments on three CNN classifiers and three BCI datasets demonstrated the effectiveness of our proposed method.

The remainder of this paper is organized as follows: Section II introduces related work on black-box adversarial attacks and active learning. Section III proposes our query synthesis based active learning approach for crafting adversarial examples for black-box attacks in EEG-based BCIs. Section IV evaluates the attack performance of our proposed approach. Finally, Section V draws conclusions.

II. RELATED WORK

In this section, we briefly review previous studies on black-box attacks and active learning.

A. Black-Box Attacks

Black-box attacks can be roughly divided into three categories: decision-based, score-based and transferability-based.

Decision-based attacks were first proposed by Brendel et al. [26]. Their main idea is to gradually reduce the magnitude of the adversarial perturbation while ensuring its effectiveness.
Score-based attacks rely on the model's output scores, e.g., class probabilities or logits, to estimate the gradients and then generate adversarial examples [27], [28]. Transferability-based attacks were first proposed by Papernot et al. [25] in image classification. The attacker trains a substitute model, which solves the same classification problem as the target model, to generate adversarial examples for the target model.

In transferability-based black-box attacks, the key step is to learn a substitute model whose decision boundary resembles the target model's. Papernot et al. [25] used Jacobian-based dataset augmentation to synthesize a substitute training set and labeled it by querying the target model. By alternately augmenting the training set and updating the substitute model, it can gradually approximate the target model. Recently, Zhang and Wu [22] extended this idea to EEG-based BCIs, but their approach was slightly different: they synthesized a new training set by using the loss computed from the inputs, instead of the labels from the target model, to calculate the Jacobian matrix.

Despite the outstanding attack performance of these methods, they always require a large number of queries to train a substitute model. Generally, the number of queries grows exponentially with the number of iterations. In order to improve the query efficiency, we propose an active learning based data augmentation approach to train the substitute model in transferability-based black-box attacks.

B. Active Learning

Active learning is an effective way to reduce the data labeling effort, by actively selecting the most useful instances to label. There are two main scenarios of active learning in the literature: query synthesis [29]–[32] and sampling. The latter can be further divided into stream-based sampling [33], [34] and pool-based sampling [35]–[38].
Sampling-based active learning selects real unlabeled instances from a pool or stream for labeling. In query synthesis based active learning, one can query any data instance in the input space, including synthesized instances.

Intuitively, query synthesis can be applied to the training process of the substitute model to improve the query efficiency of black-box attacks, because it can actively synthesize more informative EEG epochs than those generated in the Jacobian-based way. Furthermore, compared with sampling, it is more efficient to synthesize a query directly instead of evaluating every instance in an unlabeled data pool. Here we do not need to worry about the fact that some synthesized EEG epochs may be unrecognizable to humans [39], because the target model can label any instance in the input space. Our ultimate goal is to identify the decision boundary of the target model with a minimum number of queries.

III. METHODOLOGY

In this section, we first introduce the transferability-based black-box attack setting for CNN classifiers in EEG-based BCIs, and then describe the query-synthesis-based active learning strategy for training the substitute model, and adversarial example crafting for the target model.

A. Attack Setting

The attack framework in this paper is the same as in our previous work [22], where the attackers can add adversarial perturbations before the machine learning modules.

Let $x_i \in \mathbb{R}^{C \times T}$ be the $i$-th raw EEG epoch ($i = 1, ..., n$), where $C$ is the number of EEG channels and $T$ the number of time domain samples. Let $f_\theta(x_i) \to y_i$ denote the CNN model that predicts the label for an input EEG epoch. Given a target CNN model and a normal EEG epoch $x_i$, the task is to generate an adversarial example $x_i^*$ misclassified by the CNN model.
Formally, an adversarial example $x_i^*$ should satisfy the following constraints:

$$f_\theta(x_i^*) \neq y_i, \tag{1}$$

$$D(x_i, x_i^*) \leq \epsilon, \tag{2}$$

where $D(\cdot, \cdot)$ is a distance metric, and $\epsilon$ constrains the magnitude of the adversarial perturbation. (1) ensures the success of the attack, and (2) ensures that the perturbation is not larger than a predefined upper bound $\epsilon$.

Next we describe an algorithm to learn a substitute model for a given target CNN classifier by querying it for labels on query-synthesized inputs, and then introduce the UFGSM approach [22] to craft adversarial examples on the trained substitute model, which can also be transferred to the target model. We start with binary classification, and then extend it to multi-class tasks.

B. Query-synthesis-based Dataset Augmentation for Training the Substitute Model

In the black-box attack scenario, we have no access to the architecture, parameters and training data of the target model, but can input EEG trials to the target model and observe the corresponding outputs to probe the model. As in [25], we also adopt the transferability-based approach to implement the black-box attack. The difference is that we use a query-synthesis-based active learning strategy as the data augmentation technique in substitute model training rather than the Jacobian-based approach. The oracle in query synthesis active learning is the target model.

1) Binary search synthesis: Assume a small labeled training set $S_0$ has been obtained from querying the target model. An initial substitute model $f'_0$ can be trained on this set. Suppose $\{x_0^+, x_0^-\}$ is an opposite-pair in $S_0$. Then, we query their middle point on the substitute model to find another opposite-pair closer to the decision boundary, using Algorithm 1.

Algorithm 1: Binary search synthesis.
$\{x^+, x^-\} = BinarySearch(\{x_0^+, x_0^-\}, f', m)$
Input: $\{x_0^+, x_0^-\}$, initial opposite-pair of EEG epochs; $f'$, current substitute model; $m$, maximum number of binary search iterations.
Output: $\{x^+, x^-\}$, an opposite-pair of EEG epochs.
    $x^+ = x_0^+$; $x^- = x_0^-$;
    for $i = 1$ to $m$ do
        $x_b = (x^+ + x^-)/2$;
        Query $f'$ for $y_b$, the label of $x_b$;
        if $y_b$ is positive then $x^+ \leftarrow x_b$ else $x^- \leftarrow x_b$ end
    end
    return $\{x^+, x^-\}$

2) Mid-perpendicular synthesis: There is an obvious limitation in binary search synthesis: if we always use binary search to generate training epochs, they may concentrate in one area and lack diversity. Therefore, we synthesize the next query along the mid-perpendicular direction after we find an opposite-pair close enough to the decision boundary. Specifically, we find a vector orthogonal to the opposite-pair by the Gram-Schmidt process [32], set the magnitude of the orthogonal vector to $q$, then move it to a more precise midpoint. The details are shown in Algorithm 2.

Algorithm 2: Mid-perpendicular synthesis.
$x_s = MidPerp(\{x_b^+, x_b^-\}, f', m, q)$
Input: $\{x_b^+, x_b^-\}$, an opposite-pair of EEG epochs; $f'$, current substitute model; $m$, maximum number of binary search iterations; $q$, magnitude of the orthogonal vector.
Output: $x_s$, a synthesized EEG epoch.
    $x_1 = x_b^+ - x_b^-$;
    Generate an EEG epoch $x_2$ randomly;
    Find the orthogonal direction by the Gram-Schmidt process: $x_2 = q \cdot (x_2 - \langle x_1, x_2 \rangle / \langle x_1, x_1 \rangle \times x_1)$;
    $\{x^+, x^-\} = BinarySearch(\{x_b^+, x_b^-\}, f', m)$;
    $x_s = x_2 + (x^+ + x^-)/2$;
    return $x_s$

With this query-synthesis-based active learning strategy, the entire substitute model training process for binary classification is shown in Algorithm 3.

Algorithm 3: Query-synthesis-based substitute model training strategy.
Input: $f$, the target model; $S_0$, a set of unlabeled EEG epochs; $N_{max}$, maximum number of training epochs; $n_{max}$, maximum number of synthesized EEG epochs in one iteration; $m$, maximum number of binary search iterations; $q$, magnitude of the orthogonal vector.
Output: $f'$, a trained substitute model.
    Label $S_0$ by querying $f$ to obtain an initial training set $D$;
    Initialize $f'$ and pre-train $f'$ on $D$;
    $\Delta D = \emptyset$;
    for $N = 1$ to $N_{max}$ do
        $\Delta S = \emptyset$;
        for $n = 1$ to $n_{max}$ do
            Select an opposite-pair $x_0^+$ and $x_0^-$ randomly from $D$;
            $\{x_b^+, x_b^-\} = BinarySearch(\{x_0^+, x_0^-\}, f', m)$;
            $x_s = MidPerp(\{x_b^+, x_b^-\}, f', m, q)$;
            $\Delta S \leftarrow \Delta S \cup \{x_s\}$;
        end
        $\Delta D = \{(x_i, f(x_i))\}_{x_i \in \Delta S}$;
        $D \leftarrow D \cup \Delta D$;
        Train $f'$ on $D$;
    end
    return $f'$

We then extend the query-synthesis-based augmentation method to multi-class classification by the simple one-vs-one approach, which decomposes a multi-class task into multiple binary classification tasks. More specifically, if there are $k_1$ classes, then we can solve $k_2 = k_1(k_1 - 1)/2$ binary classifications instead, and use our active learning strategy to synthesize $n_{max}/k_2$ EEG epochs (where $n_{max}$ is the maximum number of synthesized EEG epochs in one iteration) for each binary task.

C. Adversarial Example Crafting for the Target Model

After training the substitute model, we can generate adversarial examples from it for the target model. Goodfellow et al. [15] proposed to construct adversarial perturbations in the following way:

$$\delta = \varepsilon \cdot \mathrm{sign}(\nabla_{x_i} J(\theta, x_i, y_i)), \tag{3}$$

where $\theta$ are the parameters of the target model $f$, and $J$ the loss function. The main idea is to find an optimal max-norm perturbation $\delta$ constrained by $\varepsilon$ to maximize $J$. The requirement in (2) holds if $\varepsilon \leq \epsilon$ and the $l_\infty$-norm is used as the distance metric. Let $\varepsilon = \epsilon$ so that we can perturb $x_i$ to the maximum extent.
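
The building blocks introduced so far in this section, namely Algorithms 1 and 2 and the FGSM step in (3), can be sketched on a toy problem. The following is only a minimal illustration under stated assumptions, not the implementation used in the experiments: EEG epochs are flattened into plain Python lists, a hypothetical linear (logistic) classifier stands in for the CNN substitute model $f'$, and the orthogonal vector is explicitly rescaled to length $q$ (the text says its magnitude is set to $q$). All function names are illustrative.

```python
import math
import random


def dot(a, b):
    """Inner product of two flattened epochs (lists of floats)."""
    return sum(x * y for x, y in zip(a, b))


def make_linear_model(w, b):
    """Hypothetical stand-in for the substitute f': label 1 if w.x + b > 0, else 0."""
    return lambda x: 1 if dot(w, x) + b > 0 else 0


def binary_search(x_pos, x_neg, model, m):
    """Algorithm 1: halve the opposite-pair m times, keeping one epoch per class."""
    for _ in range(m):
        x_mid = [(p + n) / 2 for p, n in zip(x_pos, x_neg)]
        if model(x_mid) == 1:
            x_pos = x_mid
        else:
            x_neg = x_mid
    return x_pos, x_neg


def mid_perp(x_pos, x_neg, model, m, q, rng):
    """Algorithm 2: offset orthogonal to the pair, added to its refined midpoint."""
    x1 = [p - n for p, n in zip(x_pos, x_neg)]
    x2 = [rng.gauss(0.0, 1.0) for _ in x1]        # random direction
    coef = dot(x1, x2) / dot(x1, x1)              # Gram-Schmidt projection coefficient
    orth = [b - coef * a for a, b in zip(x1, x2)]
    norm = math.sqrt(dot(orth, orth))
    orth = [q * v / norm for v in orth]           # assumption: rescale to length exactly q
    x_pos, x_neg = binary_search(x_pos, x_neg, model, m)
    return [o + (p + n) / 2 for o, p, n in zip(orth, x_pos, x_neg)]


def fgsm_linear(x, y, w, b, eps):
    """Eq. (3) for a logistic model, whose input gradient is (sigmoid(z) - y) * w."""
    z = dot(w, x) + b
    p = 1.0 / (1.0 + math.exp(-z))
    grad = [(p - y) * wi for wi in w]
    sign = lambda g: (g > 0) - (g < 0)
    return [xi + eps * sign(g) for xi, g in zip(x, grad)]


rng = random.Random(0)
w, b = [1.0, -2.0, 0.5, 0.0], 0.1
f_sub = make_linear_model(w, b)
x_pos0 = [3.0, 0.0, 1.0, 2.0]     # labeled 1 by f_sub
x_neg0 = [-1.0, 2.0, 0.0, -1.0]   # labeled 0 by f_sub

x_pos, x_neg = binary_search(x_pos0, x_neg0, f_sub, m=10)
x_s = mid_perp(x_pos, x_neg, f_sub, m=10, q=0.8, rng=rng)
x_adv = fgsm_linear(x_pos0, 1, w, b, eps=0.1)
```

In the full procedure of Algorithm 3, each synthesized epoch such as `x_s` would be sent to the target model $f$ for a label and added to the substitute training set; only that labeling step consumes a query.
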
Then, the adversarial example $x_i^*$ can be re-expressed as:

$$x_i^* = x_i + \epsilon \cdot \mathrm{sign}(\nabla_{x_i} J(\theta, x_i, y_i)). \tag{4}$$

UFGSM [22] is an unsupervised extension of FGSM, which replaces the label $y_i$ in (4) by $y'_i = f(x_i)$. Then, $x_i^*$ in UFGSM can be written as:

$$x_i^* = x_i + \epsilon \cdot \mathrm{sign}(\nabla_{x_i} J(\theta, x_i, y'_i)). \tag{5}$$

UFGSM was used in this paper to construct the adversarial examples.

IV. EXPERIMENTS

This section presents the experimental results to demonstrate the effectiveness of the query-synthesis-based active learning strategy in transferability-based black-box attacks. We evaluate the vulnerability of three CNN classifiers in EEG-based BCIs.

A. Experimental Setup

The three BCI datasets, P300 evoked potentials (P300) [40], feedback error-related negativity (ERN)¹ [41], and motor imagery (MI)² [42], used in our recent study [22] were used again in this study. The data pre-processing steps were also identical.

Three CNN classifiers, EEGNet [9], DeepCNN [10], and ShallowCNN [10], were used as the target models in our experiments, as in [22]. The Adam optimizer [43], cross-entropy loss function, and early stopping were used in training. Moreover, we applied weights to different classes to address the class imbalance problem in P300 and ERN.

Raw classification accuracy (RCA) and balanced classification accuracy (BCA) were used to evaluate the attack performance, where RCA is the unweighted overall classification accuracy on the test set, and BCA is the average of the individual RCAs of different classes.
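
The two metrics defined above can be made concrete with a short sketch. It is an illustration only, with hypothetical function names and made-up labels; it assumes labels are given as plain Python lists and that every class appears at least once in the ground truth.

```python
def rca(y_true, y_pred):
    """Raw classification accuracy: fraction of all test epochs labeled correctly."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)


def bca(y_true, y_pred):
    """Balanced classification accuracy: mean of the per-class accuracies."""
    per_class = []
    for c in sorted(set(y_true)):
        idx = [i for i, t in enumerate(y_true) if t == c]
        per_class.append(sum(y_pred[i] == c for i in idx) / len(idx))
    return sum(per_class) / len(per_class)


# Imbalanced toy example: 8 epochs of class 0, 2 epochs of class 1,
# and a classifier that always predicts class 0.
y_true = [0] * 8 + [1] * 2
y_pred = [0] * 10
toy_rca = rca(y_true, y_pred)
toy_bca = bca(y_true, y_pred)
```

Here the RCA is 0.8 while the BCA is only 0.5, which is why both metrics are worth reporting on class-imbalanced datasets such as P300 and ERN.
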

¹ https://www.kaggle.com/c/inria-bci-challenge
² http://www.bbci.de/competition/iv/

In order to simulate the black-box scenario, we partitioned the three datasets into two groups, as described in [22]: the larger group A of 7/14/7 subjects in P300/ERN/MI was used to simulate the unknown data from the target model, where 80% of the epochs were for training the target model and the remaining 20% for testing. The other smaller group B of 1/2/2 subjects was used to initialize set $S_0$ for training the substitute model. In this way, the 8/16/9 subjects in the three datasets can be partitioned in 8/120/36 different ways. We performed both one-division and multi-division black-box attacks. In the one-division experiment, the attacks were repeated 5 times to reduce randomness. In the multi-division experiment, we repeated each division 5 times on P300 and only one time on ERN and MI; so, in total we had 40/120/36 evaluations on the three datasets, respectively.

B. Baseline

We first evaluated the baseline performance of the three CNN target models on the unperturbed EEG data, as shown in the first part of Table I. Because the MI dataset had 4 classes whereas P300 and ERN had only two, its RCAs and BCAs were much lower.

We then constructed a random perturbation $\delta'$:

$$\delta' = \epsilon \cdot \mathrm{sign}(\mathcal{N}(0, 1)), \tag{6}$$

which has the same maximum amplitude $\epsilon$ as the adversarial perturbations, to verify the necessity of deliberately constructing the adversarial examples. The results are shown in the second part of Table I. It is obvious that the target models were robust to random noise, i.e., random noise cannot effectively perform adversarial attacks.

C. Attack Performance Comparison

We next compared our query-synthesis-based approach with the Jacobian-based method [22] in black-box attacks. $\epsilon = 0.1/0.1/0.05$ on P300/ERN/MI were used to construct the adversarial examples after the substitute models were trained.

In one-division experiments, we randomly downsampled the data for each class according to the labels that the target model predicted at the first time to balance the classes in the initial dataset. We set the downsampled number in each class as 200 in P300 and ERN, and 100 in MI, to ensure that the size of the initial substitute model training set $S_0$ was 400. The initial substitute model was then trained on $S_0$ using both the Jacobian-based method and ours. $\lambda = 0.5$ and $N = 1$ were used in the Jacobian-based method, where $N$ corresponds to $N_{max}$ in our method. $n_{max} = 200$ and $m = 10$ were used in our method, so $N_{max} = 2$ should be used to get the same number of queries as in the Jacobian-based method. $q = 1.0/0.8/0.8$ on P300/ERN/MI were used in the experiments. They were determined such that the generated EEG epochs had approximately the same magnitudes as those in the initial training set $S_0$.

In multi-division experiments, we did not limit the downsampled number to avoid wasting data, but always kept the same number of queries in the Jacobian-based method and ours, so $n_{max}$ = downsampling number on P300 and ERN, and $n_{max}$ = 2 × downsampling number on MI in our method. The other hyper-parameters were the same as those in one-division experiments.

The attack results are shown in Table I.

TABLE I
AVERAGE RCAs/BCAs (%) OF DIFFERENT TARGET CLASSIFIERS ON THE THREE DATASETS BEFORE AND AFTER BLACK-BOX ATTACKS.

| Experiment | Dataset | Target Model $f$ | Baseline: Original | Baseline: Noisy | Method | Substitute $f'$: EEGNet | Substitute $f'$: DeepCNN | Substitute $f'$: ShallowCNN |
|---|---|---|---|---|---|---|---|---|
| One-Division | P300 | EEGNet | 73.81/72.71 | 73.78/72.46 | Ours | 54.90/54.63 | 41.39/50.80 | 72.71/71.79 |
| | | | | | Jacobian-based | 62.36/61.64 | 54.58/55.55 | 72.07/72.02 |
| | | DeepCNN | 77.77/74.79 | 78.08/74.78 | Ours | 69.77/70.52 | 47.41/59.00 | 74.86/73.38 |
| | | | | | Jacobian-based | 66.40/66.82 | 53.11/60.13 | 76.99/74.20 |
| | | ShallowCNN | 72.56/72.87 | 72.64/72.87 | Ours | 63.43/65.17 | 60.84/62.18 | 66.54/67.91 |
| | | | | | Jacobian-based | 67.52/67.74 | 74.20/66.15 | 73.24/71.73 |
| | ERN | EEGNet | 76.26/74.19 | 75.47/73.34 | Ours | 36.55/38.23 | 36.55/41.10 | 73.56/71.76 |
| | | | | | Jacobian-based | 52.07/51.65 | 47.06/50.94 | 75.82/72.37 |
| | | DeepCNN | 77.21/76.85 | 75.05/76.38 | Ours | 46.22/46.57 | 52.07/61.59 | 75.14/73.99 |
| | | | | | Jacobian-based | 51.65/52.79 | 55.36/64.33 | 75.67/75.97 |
| | | ShallowCNN | 71.85/71.17 | 71.51/70.95 | Ours | 70.69/71.44 | 69.75/70.66 | 44.82/49.81 |
| | | | | | Jacobian-based | 72.16/71.50 | 70.73/71.22 | 52.10/53.57 |
| | MI | EEGNet | 54.93/54.62 | 52.93/52.78 | Ours | 31.77/31.70 | 35.96/36.07 | 40.27/40.28 |
| | | | | | Jacobian-based | 37.07/36.87 | 35.26/35.24 | 41.01/41.02 |
| | | DeepCNN | 49.38/49.18 | 49.73/49.56 | Ours | 41.91/41.78 | 39.24/39.08 | 38.14/38.05 |
| | | | | | Jacobian-based | 45.36/45.22 | 40.56/40.48 | 41.09/41.06 |
| | | ShallowCNN | 60.96/60.81 | 61.04/60.93 | Ours | 51.93/52.06 | 51.93/52.01 | 43.60/43.68 |
| | | | | | Jacobian-based | 55.05/55.18 | 48.28/48.44 | 48.97/49.00 |
| Multi-Division | P300 | EEGNet | 73.59/71.95 | 73.52/71.83 | Ours | 41.40/42.44 | 32.66/39.75 | 64.95/64.91 |
| | | | | | Jacobian-based | 47.02/48.49 | 39.79/41.99 | 65.16/64.16 |
| | | DeepCNN | 75.99/74.10 | 76.20/73.94 | Ours | 53.29/55.10 | 36.57/44.55 | 68.78/66.88 |
| | | | | | Jacobian-based | 59.18/60.01 | 44.54/49.66 | 70.05/66.98 |
| | | ShallowCNN | 72.23/71.90 | 72.24/71.85 | Ours | 59.66/60.17 | 51.76/55.15 | 53.80/49.60 |
| | | | | | Jacobian-based | 59.75/61.73 | 51.40/57.91 | 52.38/52.54 |
| | ERN | EEGNet | 73.89/72.94 | 73.23/72.72 | Ours | 45.71/47.29 | 46.78/48.94 | 71.27/71.14 |
| | | | | | Jacobian-based | 54.42/54.47 | 52.54/54.53 | 71.22/70.97 |
| | | DeepCNN | 74.24/72.69 | 73.86/72.38 | Ours | 56.78/55.07 | 53.85/53.33 | 72.44/70.89 |
| | | | | | Jacobian-based | 59.64/58.32 | 57.77/57.54 | 72.73/71.43 |
| | | ShallowCNN | 71.86/71.45 | 71.77/71.21 | Ours | 70.15/70.46 | 68.49/69.28 | 58.28/59.42 |
| | | | | | Jacobian-based | 70.44/70.30 | 70.31/70.18 | 63.51/63.80 |
| | MI | EEGNet | 60.85/60.71 | 59.48/59.39 | Ours | 35.10/35.27 | 46.47/46.45 | 43.60/43.67 |
| | | | | | Jacobian-based | 39.13/39.13 | 46.58/46.61 | 53.19/53.17 |
| | | DeepCNN | 55.83/55.57 | 55.65/55.39 | Ours | 47.06/46.90 | 45.32/45.31 | 42.54/42.46 |
| | | | | | Jacobian-based | 48.68/48.59 | 48.45/48.38 | 48.72/48.57 |
| | | ShallowCNN | 64.71/64.62 | 64.18/64.09 | Ours | 57.26/57.32 | 57.94/57.98 | 46.74/46.84 |
| | | | | | Jacobian-based | 59.44/59.49 | 59.04/59.07 | 54.56/54.56 |

We can observe that:

1) Generally, the RCAs and BCAs of the target models after attacks were lower than the corresponding baselines, indicating the effectiveness of the transferability-based black-box attack methods. However, ShallowCNN did not perform well in attacking EEGNet and DeepCNN on P300 and ERN.
2) The RCAs and BCAs after query-synthesis-based black-box attacks were generally lower than the corresponding accuracies after Jacobian-based black-box attacks. For example, in one-division experiments in which only 400 queries were used for both methods, when the target model and the substitute model were EEGNet and DeepCNN respectively on P300, our method achieved a 13% improvement over the Jacobian-based method (RCA of 41.39% versus 54.58%).
3) Our proposed method was often much better than the Jacobian-based method when the substitute model and the target model had the same structure, which may be due to the strong intra-transferability of the adversarial examples.
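
Several of the counts quoted in this section follow from simple arithmetic, which the sketch below re-derives. It is only a sanity check under the assumption that a division is determined by which subjects are placed in group B; the variable names are illustrative, and the two RCA values are read from the One-Division P300 rows of Table I (target EEGNet, substitute DeepCNN).

```python
from math import comb

subjects = {"P300": 8, "ERN": 16, "MI": 9}   # total subjects per dataset
group_b = {"P300": 1, "ERN": 2, "MI": 2}     # subjects held out for the substitute model
repeats = {"P300": 5, "ERN": 1, "MI": 1}     # repetitions per division (multi-division)

# Number of ways to choose group B, and the resulting number of evaluations.
partitions = {d: comb(subjects[d], group_b[d]) for d in subjects}
evaluations = {d: partitions[d] * repeats[d] for d in subjects}

# Synthesized queries in the one-division setting: n_max epochs per iteration, N_max iterations.
n_max, N_max = 200, 2
synthesized_queries = n_max * N_max

# RCA gap behind the "13% improvement" in observation 2.
rca_ours, rca_jacobian = 41.39, 54.58
gap = rca_jacobian - rca_ours

print(partitions, evaluations, synthesized_queries, round(gap, 2))
```
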

We compared the attack performance with different numbers of queries when the substitute model and the target model had the same architecture, i.e., both were EEGNet, DeepCNN or ShallowCNN. 200 EEG epochs were used for initializing the substitute model, and the number of queries varied from 200 to 6,200. The corresponding RCAs/BCAs are shown in Fig. 1. Generally, both RCAs and BCAs decreased as the number of queries increased, which is intuitive.

[Figure 1: nine panels; columns are EEGNet/EEGNet, DeepCNN/DeepCNN and ShallowCNN/ShallowCNN; x-axis: Queries; y-axis: RCA/BCA; legend: QSM RCA, UFGSM RCA, QSM BCA, UFGSM BCA.]
Fig. 1. RCAs and BCAs of the two black-box attack methods with different numbers of queries on P300 (top row), ERN (middle row) and MI (bottom row).

With the same number of queries, our method achieved lower RCAs and BCAs than the Jacobian-based method, i.e., it gave higher attack success rates. In other words, it can achieve a desired attack success rate with much fewer queries.
For example, for EEGNet on P300, our method only needed 3,000 queries to achieve attack performance comparable to that of the Jacobian-based method with 6,200 queries.

D. Characteristics of the Adversarial Examples

In this section, we analyze the characteristics of the generated adversarial examples in both the time domain and the spectral domain.

Consider one-division query-synthesis-based black-box attacks on ERN after 400 queries, when both the target model and the substitute model are DeepCNN. An example of the original EEG epoch (we only show the first 10 channels) and its corresponding adversarial epoch is shown in Fig. 2. They were almost completely overlapping in the time domain, which means the adversarial example is very difficult for a human or a computer to detect. We had similar observations on other datasets and from other classifiers.

[Figure 2: x-axis: Time (s); y-axis: Channel; legend: Adversarial Example, Original Example.]
Fig. 2. Example of an original EEG epoch and its adversarial epoch, generated by query-synthesis-based black-box attack on the ERN dataset. The first 10 channels are shown. $\epsilon = 0.1$.

Next, spectrogram analysis was used to further explore the characteristics of the adversarial examples. Fig. 3 shows the mean spectrogram of all original EEG epochs, of all adversarial examples whose predicted labels did not match the ground-truth labels of the original EEG epochs (successful attacks), and of the corresponding successful perturbations ($x^* - x$), by using wavelet decomposition. The adversarial examples were crafted by query-synthesis-based black-box attacks on MI. Both the target and the substitute models were DeepCNN. There was no significant difference in the spectrograms of the original EEG epochs and the adversarial examples. The amplitudes of the mean spectrogram of the adversarial perturbations were much smaller than those of the original and adversarial examples, suggesting that our generated adversarial examples are difficult to detect by spectrogram analysis. We had similar observations on other datasets and from other classifiers.

Fig. 3. Mean spectrogram of the original EEG epochs, of all successful adversarial examples, and of the corresponding perturbations, in one-division query-synthesis-based black-box attacks on the MI dataset. Channel Fz was used.

V. CONCLUSION

In this paper, we have proposed a query-synthesis-based active learning strategy for transferability-based black-box attacks. It improves the query efficiency by actively synthesizing EEG epochs scattered around the decision boundary of the target model, and thus the trained substitute model can better approximate the target model. We applied our method to attacking three state-of-the-art deep learning classifiers in EEG-based BCIs. Experiments demonstrated its effectiveness and efficiency. With the same number of queries, it can achieve better attack performance than the traditional Jacobian-based method; or, in other words, our approach needs a smaller number of queries to achieve a desired attack success rate.

REFERENCES

[1] B. Graimann, B. Allison, and G. Pfurtscheller, Brain-Computer Interfaces: A Gentle Introduction. Berlin, Heidelberg: Springer, 2009, pp. 1–27.
[2] L. F. Nicolas-Alonso and J. Gomez-Gil, "Brain computer interfaces, a review," Sensors, vol. 12, no. 2, pp. 1211–1279, 2012.
[3] S. Sutton, M. Braren, J. Zubin, and E. R. John, "Evoked-potential correlates of stimulus uncertainty," Science, vol. 150, no. 3700, pp. 1187–1188, 1965.
[4] L. Farwell and E. Donchin, "Talking off the top of your head: toward a mental prosthesis utilizing event-related brain potentials," Electroencephalography and Clinical Neurophysiology, vol. 70, no. 6, pp. 510–523, 1988.
[5] D. Wu, "Online and offline domain adaptation for reducing BCI calibration effort," IEEE Trans. on Human-Machine Systems, vol. 47, no. 4, pp. 550–563, 2017.
[6] D. Wu, V. J. Lawhern, W. D. Hairston, and B. J. Lance, "Switching EEG headsets made easy: reducing offline calibration effort using active weighted adaptation regularization," IEEE Trans. on Neural Systems and Rehabilitation Engineering, vol. 24, no. 11, pp. 1125–1137, 2016.
[7] G. Pfurtscheller and C. Neuper, "Motor imagery and direct brain-computer communication," Proceedings of the IEEE, vol. 89, no. 7, pp. 1123–1134, Jul 2001.
[8] D. Zhu, J. Bieger, G. Garcia Molina, and R. M. Aarts, "A survey of stimulation methods used in SSVEP-based BCIs," Computational Intelligence and Neuroscience, p. 702357, 2010.
[9] V. J. Lawhern, A. J. Solon, N. R. Waytowich, S. M. Gordon, C. P. Hung, and B. J. Lance, "EEGNet: a compact convolutional neural network for EEG-based brain-computer interfaces," Journal of Neural Engineering, vol. 15, no. 5, p. 056013, 2018.
[10] R. T. Schirrmeister, J. T. Springenberg, L. D. J. Fiederer, M. Glasstetter, K. Eggensperger, M. Tangermann, F. Hutter, W. Burgard, and T. Ball, "Deep learning with convolutional neural networks for EEG decoding and visualization," Human Brain Mapping, vol. 38, no. 11, pp. 5391–5420, 2017.
[11] P. Bashivan, I. Rish, M. Yeasin, and N. Codella, "Learning representations from EEG with deep recurrent-convolutional neural networks," in Proc. Int'l Conf. on Learning Representations, San Juan, Puerto Rico, May 2016.
[12] Y. R. Tabar and U. Halici, "A novel deep learning approach for classification of EEG motor imagery signals," Journal of Neural Engineering, vol. 14, no. 1, p. 016003, 2017.
[13] Z. Tayeb, J. Fedjaev, N. Ghaboosi, C. Richter, L. Everding, X. Qu, Y. Wu, G. Cheng, and J. Conradt, "Validating deep neural networks for online decoding of motor imagery movements from EEG signals," Sensors, vol. 19, no. 1, p. 210, Jan. 2019.
[14] M. Sharif, S. Bhagavatula, L. Bauer, and M. K. Reiter, "Accessorize to a crime: real and stealthy attacks on state-of-the-art face recognition," in Proc. ACM SIGSAC Conf. on Computer and Communications Security. Vienna, Austria: ACM, Oct. 2016, pp. 1528–1540.
[15] I. J. Goodfellow, J. Shlens, and C. Szegedy, "Explaining and harnessing adversarial examples," in Proc. Int'l Conf. on Learning Representations, San Diego, CA, May 2015.
[16] C. Szegedy, W. Zaremba, I. Sutskever, J. Bruna, D. Erhan, I. J. Goodfellow, and R. Fergus, "Intriguing properties of neural networks," in Proc. Int'l Conf. on Learning Representations, Banff, Canada, Apr. 2014.
[17] A. Kurakin, I. J. Goodfellow, and S. Bengio, "Adversarial examples in the physical world," in Proc. Int'l Conf. on Learning Representations, Toulon, France, Apr. 2017.
[18] A. Athalye, L. Engstrom, A. Ilyas, and K. Kwok, "Synthesizing robust adversarial examples," in Proc. 35th Int'l Conf. on Machine Learning, Stockholm, Sweden, Jul. 2018, pp. 284–293.
[19] N. Carlini and D. A. Wagner, "Audio adversarial examples: targeted attacks on speech-to-text," CoRR, vol. abs/1801.01944, 2018. [Online]. Available: http://arxiv.org/abs/1801.01944
[20] K. Grosse, N. Papernot, P. Manoharan, M. Backes, and P. McDaniel, "Adversarial perturbations against deep neural networks for malware classification," CoRR, vol. abs/1606.04435, 2016. [Online]. Available: https://arxiv.org/abs/1606.04435
[21] J. H. Metzen, M. C. Kumar, T. Brox, and V. Fischer, "Universal adversarial perturbations against semantic image segmentation," in Proc. Int'l Conf. on Computer Vision. Venice, Italy: IEEE, Oct. 2017, pp. 2774–2783.
[22] X. Zhang and D.
Wu , “On the vulnera bility of CNN classifier s in EEG-based BCIs, ” IEEE T rans. on Neural Systems and R ehabili tation Engineerin g , vol. 27, no. 5, pp. 814–825, 2019. [23] N. Carlini and D. W agne r , “T owards ev alu ating the robustness of neural netw orks, ” in Proc . IEEE Symposium on Secu rity and P rivacy . San Jose, CA: IEEE, May 2017, pp. 39–57. [24] S.-M. Moosavi-Dezfool i, A. Fawzi , and P . Frossard, “Deepfool: a simple and accurate m ethod to fool deep neural networks. ” in Pro c. IEEE Conf. on Computer V ision and P att ern Recognitio n . Las V egas, NV : IE EE, Jun. 2016, pp. 2574–2582 . [25] N. Papern ot, P . McDaniel, I. Goodfello w , S. Jha, Z. B. Celik, and A. Swami, “Practic al black-box attacks against machine learnin g, ” in Pr oc. ACM Asia Conf . on Computer and Communicat ions Security . Abu Dhabi, UAE: ACM, Apr . 2017, pp. 506–519. [26] W . Brende l, J. Raube r , and M. Beth ge, “Decision-base d adversari al attac ks: rel iable attacks a gainst black-box machine learning models, ” in P r oc. Int’l Conf. on Learning Represen tations , V ancouver , Canada, May 2018. [27] A. Ilyas, L. Engstrom, A. Athalye, and J. L in, “Black-box adversaria l attac ks with limited queries and informat ion, ” in Pr oc. Int’l Conf. on Mach ine Learning , Stockho lm, Sweden, Jul. 2018, pp. 2142–215 1. [28] A. N. Bhagoj i, W . He, B. Li, and D. Song, “Practic al black-bo x attac ks on deep neural netwo rks using efficie nt query mechanisms, ” in Proc. Eur opean Conf. on Computer V ision . Munich, German y: Springer , Sep. 2018, pp. 158–174. [29] D. Angluin, “Querie s and concept learning, ” Machine learning , vol. 2, no. 4, pp. 319–342, 1988. [30] R. D. King, J. Rowland , S. G. Oli ver , M. Y oung, W . Aubrey , E. Byrne, M. Liakat a, M. Markham, P . Pir , L. N. Soldato v a et al. , “The automati on of scien ce, ” Scienc e , vol. 324, no. 5923, pp. 85–89, 2009. [31] I. Alabdulmohsin, X. Gao, and X. 
Zhang, “Effici ent acti ve learning of halfspac es via query synthesis, ” in Proc. 29th AAA I Conf. on Artificial Intell igenc e . Austin, T exas: AAAI Press, Jan. 2015, pp. 2483–2489. [32] L. W ang, X. Hu, B. Y uan, and J. Lu, “ Acti ve learning via query synthesis and neare st neighbour search, ” Neur ocomputing , vol. 147, pp. 426–4 34, 2015. [33] L. Atlas, D. Cohn, R. Ladner , M. El-Sharka wi, R. Marks II, M. Aggoune, and D. Park, “Traini ng connectio nist netwo rks with queries and select iv e sampling, ” in Proc. Advances in Neural Information Pr ocessing Systems . Den v er , Colorado: MIT Press, Nov . 1989, pp. 566–573. [34] S. Dasgupta, D. J . Hs u, and C. Monteleo ni, “ A general agnostic acti ve learni ng algorithm, ” in P roc. A dvances in Neural Informati on Pr ocessing Systems , British Columbia, Canad a, Dec. 2008, pp. 353–360. [35] B. Settles and M. Crave n, “ A n ana lysis of acti ve le arning strategie s for sequence label ing tasks, ” in Pro c. Conf. on E mpirical Methods in Natural Languag e Pr ocessing . Honolul u, H awaii: Association for Computati onal Linguistics, Oct. 2008, pp. 1070–1079. [36] D. Wu, V . J. L awher n, S. Gordon, B. J. Lance, and C.-T . Lin, “Of fline EEG-based dri ver dro wsiness esti mation using enhanced batch- mode acti v e learnin g (E BMAL ) for regressio n, ” in Pr oc. IEEE Int’l Conf . on Systems, Man, and Cybernetic s (SMC) . Budapest , Hungary: IEEE, Oct. 2016, pp. 730–736. [37] D. Wu , C.-T . Lin, and J. Huang, “ Activ e learning for regression using greedy sampling, ” Information Sciences , vol. 474, pp. 90–105, 2019. [38] D. Wu and J. Huang, “ Affect estimation in 3D space using multi-task acti v e le arning for regression, ” IE EE T rans. on Affecti ve Compu ting , 2019. [39] E. B. Baum and K. Lang, “Que ry learning can work poorly when a human oracle is used, ” in Proc. IEEE Int’l Jo int Conf. on Neural Network s , vol. 8, Baltimore, Maryland, J un. 1992, p. 8. [40] U. Hoffmann, J.-M. V esin, T . 
Ebrahimi, and K. Diserens, “ An ef ficient P300-based brain-computer interface for disabled subjects, ” Journa l of Neur osci ence Methods , vol. 167, no. 1, pp. 115–125, 2008. [41] P . Margaux, M. Emmanuel, D. Sbasti en, B. Oli vier , and M. Jrmie, “Objec ti ve and subjecti v e e v aluatio n of online error correc tion during P300-based spelling, ” A dvances in Human-Computer Interact ion , vol. 2012, no. 578295, p. 13, 2012. [42] M. T angermann, K.-R. Mll er , A. Aertsen, N. Birbaumer , C. Braun, C. Brunner , R. Leeb, C. Mehring, K. Miller , G. Mueller -Putz, G. Nolte, G. Pfurtschel ler , H. Preissl, G. Schalk, A. Schlgl, C. V idaurre, S. W aldert, and B. Blank ertz, “Rev ie w of the BCI Competiti on IV, ” F r onti ers in Neur oscience , vol. 6, p. 55, 2012. [43] D. P . Kingma and J . Ba, “ Adam: a method for stochastic optimizati on, ” in P r oc. Int’l Conf. on Learning Represent ations , Banff, Canada, Apr . 2014.
