Learning to Fix Build Errors with Graph2Diff Neural Networks

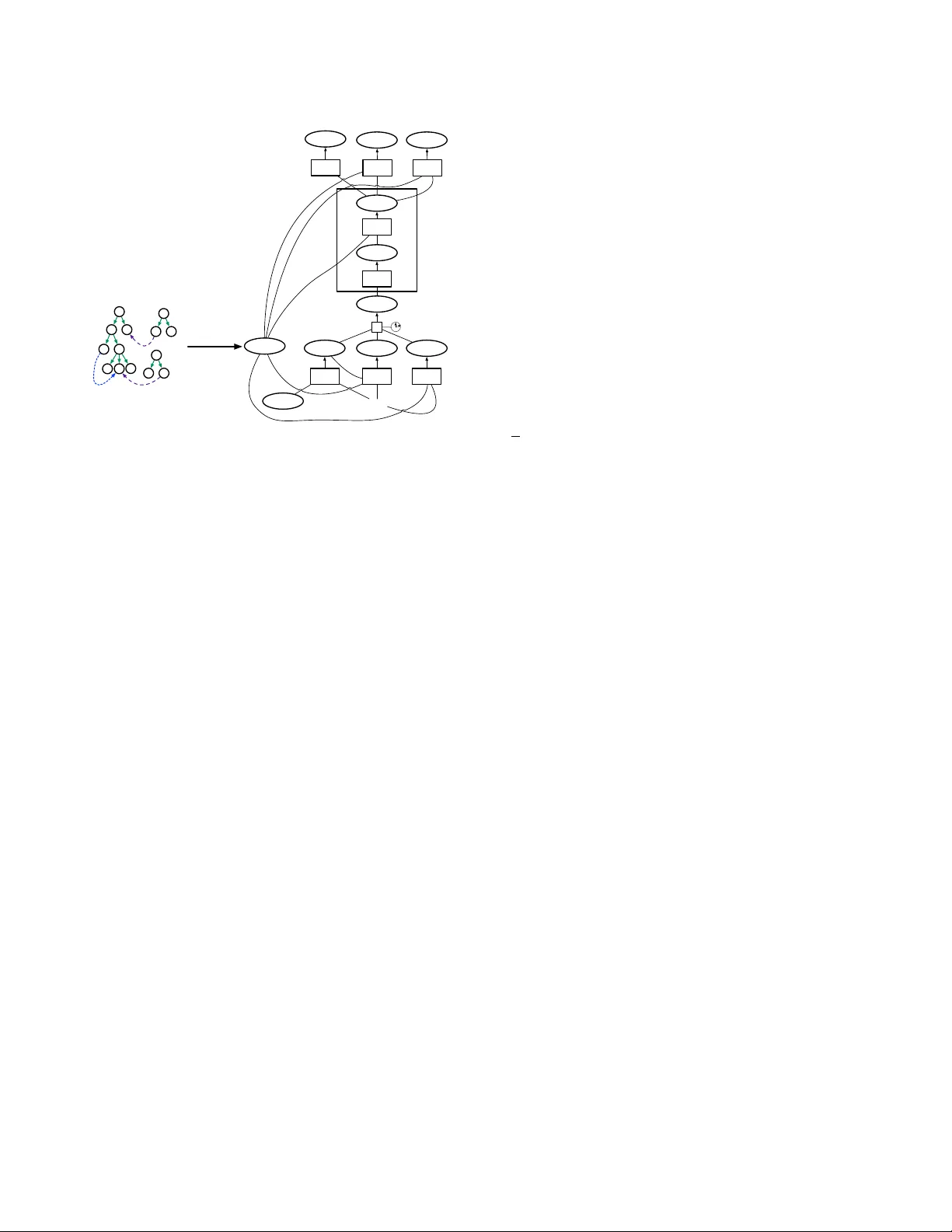

Professional software developers spend a significant amount of time fixing builds, but this has received little attention as a problem in automatic program repair. We present a new deep learning architecture, called Graph2Diff, for automatically loca…

Authors: Daniel Tarlow, Subhodeep Moitra, Andrew Rice