An End-to-End Deep RL Framework for Task Arrangement in Crowdsourcing Platforms

In this paper, we propose a Deep Reinforcement Learning (RL) framework for task arrangement, which is a critical problem for the success of crowdsourcing platforms. Previous works conduct the personalized recommendation of tasks to workers via supervised learning methods. However, the majority of them only consider the benefit of either workers or requesters independently. In addition, they cannot handle the dynamic environment and may produce sub-optimal results. To address these issues, we utilize Deep Q-Network (DQN), an RL-based method combined with a neural network to estimate the expected long-term return of recommending a task. DQN inherently considers the immediate and future reward simultaneously and can be updated in real-time to deal with evolving data and dynamic changes. Furthermore, we design two DQNs that capture the benefit of both workers and requesters and maximize the profit of the platform. To learn value functions in DQN effectively, we also propose novel state representations, carefully design the computation of Q values, and predict transition probabilities and future states. Experiments on synthetic and real datasets demonstrate the superior performance of our framework.

💡 Research Summary

The paper tackles the problem of task arrangement on crowdsourcing platforms, where a central goal is to recommend or assign tasks to incoming workers in a way that simultaneously maximizes worker satisfaction and requester quality. Existing approaches rely on supervised learning (e.g., k‑NN, probabilistic matrix factorization) and typically optimize for either the worker or the requester, ignoring the dynamic nature of the environment (continuous arrival of new tasks, expiration of old ones, evolving worker preferences). Consequently, they suffer from sub‑optimal long‑term performance and cannot adapt in real time.

To overcome these limitations, the authors formulate the problem as two Markov Decision Processes (MDPs). MDP(w) models the worker’s perspective: the state consists of the worker’s recent completion history and the current pool of available tasks; the action is a recommendation (either a single task or an ordered list); the reward is binary (1 if the worker completes a recommended task, 0 otherwise). MDP(r) models the requester’s perspective: the state additionally includes the quality scores of the worker and each task; the reward equals the quality gain contributed by a completed task. By maintaining two separate Q‑functions, Q_w for workers and Q_r for requesters, the framework can capture both short‑term incentives and long‑term platform profit.
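The two reward signals can be illustrated with a minimal sketch. The summary does not say how the quality gain is measured, so the code assumes it is the difference between a task's quality score after and before the completion; the function names and parameters are hypothetical.

```python
def worker_reward(completed: bool) -> float:
    """MDP(w): binary reward, 1 if the worker completed a recommended task."""
    return 1.0 if completed else 0.0


def requester_reward(completed: bool,
                     quality_before: float,
                     quality_after: float) -> float:
    """MDP(r): quality gain contributed by a completed task.

    Assumption: the gain is the change in the task's quality score.
    A skipped recommendation contributes no gain.
    """
    if not completed:
        return 0.0
    return quality_after - quality_before
```

Keeping the two functions separate mirrors the dual formulation: the same worker feedback produces one reward per MDP.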

Because the task pool size varies over time and its ordering should not affect the decision, the authors introduce a novel state representation. Worker features are concatenated with a set‑wise embedding of the task pool (using average pooling or attention‑based aggregation) to produce a fixed‑dimensional vector that is permutation‑invariant. This “State Transformer” enables a Deep Q‑Network (DQN) to process arbitrary numbers of tasks without redesigning the network architecture.
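A minimal sketch of the average-pooling variant follows, assuming each task is already described by a fixed-length feature vector. The names `mean_pool` and `build_state` are illustrative and not taken from the paper, and the attention-based variant is not shown.

```python
from typing import List, Sequence


def mean_pool(task_features: Sequence[Sequence[float]], dim: int) -> List[float]:
    """Average the task vectors element-wise; an empty pool maps to zeros."""
    if not task_features:
        return [0.0] * dim
    n = len(task_features)
    return [sum(task[i] for task in task_features) / n for i in range(dim)]


def build_state(worker_features: Sequence[float],
                task_features: Sequence[Sequence[float]],
                task_dim: int) -> List[float]:
    """Concatenate worker features with the pooled task-pool embedding.

    The output length is len(worker_features) + task_dim regardless of how
    many tasks are in the pool, and it does not depend on their order.
    """
    return list(worker_features) + mean_pool(task_features, task_dim)


pool = [[1.0, 2.0], [3.0, 4.0]]
state = build_state([1.0], pool, task_dim=2)
shuffled = build_state([1.0], list(reversed(pool)), task_dim=2)
```

Here `state` and `shuffled` are the same vector, because averaging discards the ordering of the pool. That is the property that lets a fixed network consume a pool of any size.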

Standard DQN is model‑free and learns transition probabilities implicitly, which becomes problematic in crowdsourcing where the state space is extremely sparse (different workers, varying task sets). To address this, the paper adds an explicit “Future State Predictor” that estimates the distribution of the next worker’s arrival time, identity, and the number of tasks that will be available. These predictions are derived from historical arrival statistics and are incorporated into the Bellman update, yielding a modified loss that stabilizes training and accelerates convergence.
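The summary does not state the modified loss itself. One common way to use an explicit transition model is to replace the single sampled next state in the Bellman target with an expectation over predicted next states. The sketch below assumes that form; the function name and input layout are hypothetical.

```python
from typing import List, Sequence, Tuple


def expected_target(reward: float,
                    gamma: float,
                    next_states: Sequence[Tuple[float, List[float]]]) -> float:
    """Bellman target with an explicit expectation over next states.

    next_states holds (probability, q_values) pairs, where q_values are the
    Q estimates for every action available in that predicted next state.
    Target: r + gamma * sum_s' P(s') * max_a' Q(s', a').
    """
    expected_future = sum(
        prob * max(q_values)
        for prob, q_values in next_states
        if q_values  # a predicted state with no available tasks adds nothing
    )
    return reward + gamma * expected_future


# Two equally likely next states, with best Q values 4.0 and 0.0.
target = expected_target(1.0, 0.5, [(0.5, [2.0, 4.0]), (0.5, [0.0])])
```

With these numbers the target is 1.0 + 0.5 * (0.5 * 4.0 + 0.5 * 0.0) = 2.0. Averaging over predicted next states, rather than relying on one observed transition, is a plausible reason for the reported gain in stability when individual states are rarely revisited.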

The overall system operates as follows: when a worker arrives, the State Transformer builds the current state, which is fed into both Q‑networks. An aggregator/balancer combines Q_w and Q_r using adjustable weights (allowing the platform to prioritize either side). An ε‑greedy explorer introduces trial‑and‑error actions. After the worker’s feedback (completion or skip), two feedback transformers compute the appropriate reward for each MDP, and the transition (s, a, r, s′) is stored in separate replay buffers. The networks are then updated via stochastic gradient descent on the combined loss. Successful and failed recommendations are both recorded, enabling the model to learn from negative outcomes as well.
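The aggregation and exploration steps can be sketched as below. The summary only says the weights are adjustable, so the convex combination used here is an assumption, and the names `combine_q` and `select_action` are illustrative.

```python
import random
from typing import List, Sequence


def combine_q(q_w: Sequence[float], q_r: Sequence[float],
              weight_w: float) -> List[float]:
    """Blend per-task Q values; weight_w in [0, 1] favors the worker side."""
    return [weight_w * a + (1.0 - weight_w) * b for a, b in zip(q_w, q_r)]


def select_action(q_w: Sequence[float], q_r: Sequence[float],
                  weight_w: float, epsilon: float,
                  rng: random.Random) -> int:
    """Epsilon-greedy choice of a task index over the combined Q values."""
    if rng.random() < epsilon:
        # Explore: recommend a uniformly random task.
        return rng.randrange(len(q_w))
    combined = combine_q(q_w, q_r, weight_w)
    # Exploit: recommend the task with the highest combined value.
    return max(range(len(combined)), key=combined.__getitem__)


rng = random.Random(0)
balanced = select_action([1.0, 0.0], [0.0, 3.0], 0.5, 0.0, rng)
worker_only = select_action([1.0, 0.0], [0.0, 3.0], 1.0, 0.0, rng)
```

With exploration disabled, the balanced setting picks task 1 (combined values 0.5 and 1.5), while weighting only the worker side picks task 0. This shows how the weight shifts the recommendation between the two objectives.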

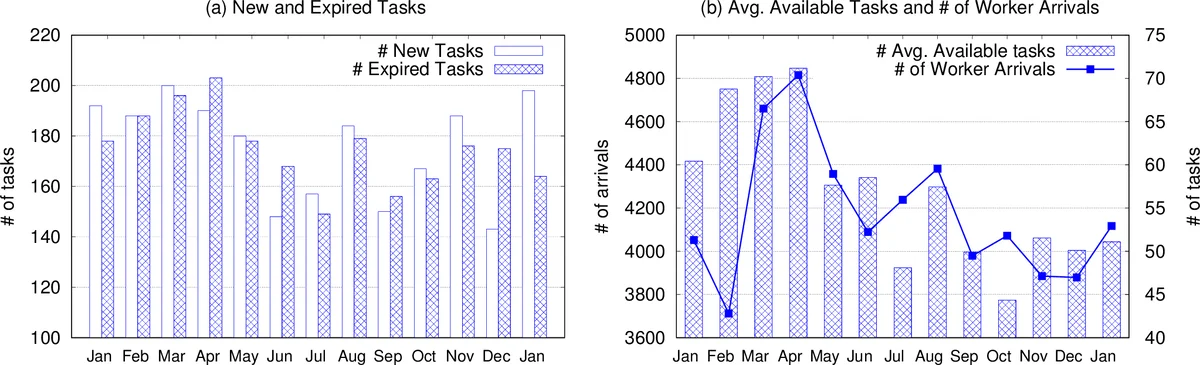

Experiments were conducted on synthetic datasets and real logs from Amazon Mechanical Turk and CrowdSpring. Baselines included supervised ranking, k‑NN, and probabilistic matrix factorization. Evaluation metrics comprised task completion rate, average task quality, and total platform revenue (commission per completed task). The proposed dual‑DQN approach outperformed all baselines, achieving 5‑15% absolute gains across metrics. Notably, under dynamic conditions (such as sudden spikes in task creation or drops in worker arrival), the model’s ability to update in real time prevented the performance degradation observed in static supervised models that require daily retraining.

Key contributions are: (1) the first application of deep reinforcement learning to crowdsourcing task arrangement, (2) a dual‑MDP formulation that jointly optimizes worker and requester objectives, (3) a permutation‑invariant state encoding for variable‑size task pools, (4) explicit modeling of transition probabilities to improve DQN stability, and (5) extensive empirical validation on both synthetic and real‑world data.

Limitations include the computational overhead of training two DQNs and a transition predictor simultaneously, and the reliance on a simplified cascade user model (workers select the first appealing task). Extending the framework to handle multi‑task selection or more sophisticated user behavior models would be a natural next step.

In summary, the paper demonstrates that deep reinforcement learning, when equipped with carefully designed state representations and explicit transition modeling, can effectively address the real‑time, multi‑objective task arrangement problem in crowdsourcing platforms, surpassing traditional supervised recommendation techniques.
